Recognition: unknown

Huí Sù: Co-constructing a Dual Feedback Apparatus

Pith reviewed 2026-05-07 14:30 UTC · model grok-4.3

The pith

A performance uses dual recursive feedback loops to show music emerging from entangled human-AI interactions rather than fixed mappings.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The performance establishes a duet between Sù, which operates in audio space through latent feedback embedded in a RAVE model that reuses its internal states to influence ongoing sound, and Agentier, which operates in control space through recurrent feedback that shapes evolving control signals from a Roland S-1 and QuNeo interface. By contrasting these domains, the systems and performers produce musical phenomena through entangled states of interaction, shared agency, resistance, and negotiation instead of through any pre-existing system configuration or fixed mappings.

What carries the argument

The dual feedback apparatus, with one loop operating inside the latent representation of a RAVE synthesis model for audio history and the other using a recurrent neural network to evolve control gestures over time.
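The audio-space half of this apparatus can be illustrated with a toy loop. The sketch below is not the authors' system and does not use RAVE: `encode`, `decode`, and `feedback_gain` are hypothetical stand-ins, chosen only to show how mixing the previous latent state into the current one lets past sound colour present output.

```python
import math

def encode(frame):
    # Hypothetical stand-in for a neural encoder: two summary features.
    mean = sum(frame) / len(frame)
    energy = math.sqrt(sum(x * x for x in frame) / len(frame))
    return [mean, energy]

def decode(latent, size):
    # Hypothetical stand-in for a neural decoder: a bounded constant frame.
    value = math.tanh(latent[0] + latent[1])
    return [value] * size

def latent_feedback_step(frame, prev_latent, feedback_gain):
    # Mix the previous latent state into the freshly encoded one.
    fresh = encode(frame)
    mixed = [(1.0 - feedback_gain) * f + feedback_gain * p
             for f, p in zip(fresh, prev_latent)]
    return decode(mixed, len(frame)), mixed

def run(frames, feedback_gain):
    latent = [0.0, 0.0]
    outputs = []
    for frame in frames:
        out, latent = latent_feedback_step(frame, latent, feedback_gain)
        outputs.append(out[0])
    return outputs

# One loud frame followed by two silent frames.
frames = [[1.0] * 4, [0.0] * 4, [0.0] * 4]
no_feedback = run(frames, 0.0)    # silent input gives silent output
with_feedback = run(frames, 0.5)  # silent input still carries a decaying trace
```

With the gain at zero the output depends only on the current frame; with a non-zero gain the silent frames still produce sound, which is the "reuse of internal states" the review attributes to Sù, reduced to its simplest form.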

If this is right

- The instruments actively reuse their own recent states to shape future audio and control behavior.

- Human performers encounter ongoing resistance and must negotiate rather than direct the systems.

- Musical phenomena arise dynamically from the interaction process itself.

- Contrasting audio-domain and control-domain feedback reveals different forms of entanglement between human and machine actions.

Where Pith is reading between the lines

- The same recursive principle could be tested in non-musical domains such as visual or movement-based interaction to check whether co-production appears outside sound.

- Varying the depth or type of the feedback loops might change whether the systems feel intentional or merely noisy, offering a way to measure the boundary between complex behavior and shared agency.

- This setup implies that design effort should shift from defining fixed input-output rules to tuning how systems remember and reuse their own recent activity.

Load-bearing premise

Embedding feedback loops in the latent space of a RAVE model and in a recurrent control network will produce meaningful shared agency, resistance, and negotiation rather than merely complex but non-intentional behavior.

What would settle it

A recording or analysis of the performance in which the instruments' outputs remain fully predictable from performer inputs with no detectable adaptation, resistance, or influence from their own recent history.
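One way such an analysis might start is sketched below, under stated assumptions: a log of (performer input, instrument output) pairs exists, both are scalar, and inputs repeat during the performance. The function name and the toy logs are hypothetical. If a fixed mapping fully explained the instrument, identical inputs would always yield identical outputs, so the spread within each group of repeated inputs would be zero.

```python
from collections import defaultdict

def max_spread_for_repeated_inputs(log, decimals=3):
    # log: list of (performer_input, instrument_output) pairs.
    groups = defaultdict(list)
    for performer_input, instrument_output in log:
        groups[round(performer_input, decimals)].append(instrument_output)
    # Only inputs seen more than once can reveal history dependence.
    spreads = [max(v) - min(v) for v in groups.values() if len(v) > 1]
    return max(spreads) if spreads else 0.0

inputs = (0.1, 0.5, 0.1, 0.5)

# Toy memoryless instrument: output is a fixed function of the input.
fixed_mapping_log = [(x, 2.0 * x) for x in inputs]

# Toy history-dependent instrument: output carries half of its previous state.
state = 0.0
history_log = []
for x in inputs:
    state = 0.5 * state + x
    history_log.append((x, state))

fixed_spread = max_spread_for_repeated_inputs(fixed_mapping_log)
history_spread = max_spread_for_repeated_inputs(history_log)
```

A non-zero spread rules out a purely fixed mapping but does not by itself separate history dependence from noise, and a zero spread is uninformative if inputs never repeat, so this check is a first filter rather than a verdict on shared agency.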

Figures

read the original abstract

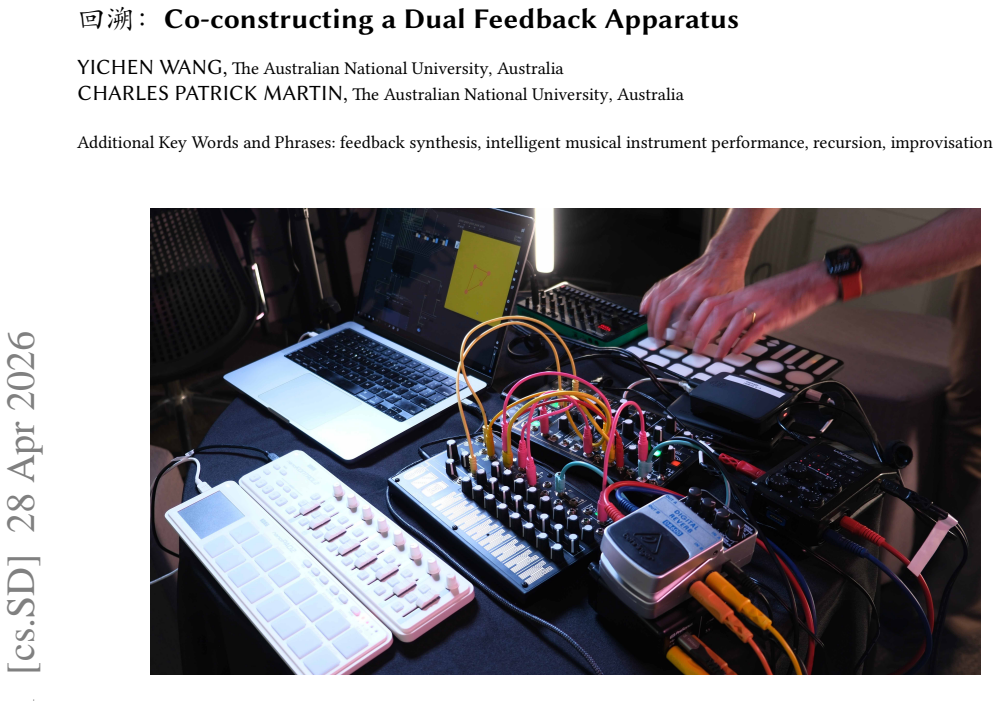

This performance presents a duet between two intelligent musical instruments, Sù (to trace back; to go upstream) and Agentier (playing on agentic clavier), and their human performers, connected through feedback loops. Rather than treating AI as a tool that responds predictably to input, both systems operate recursively, where past actions continuously influence future behaviour. The Sù operates in the audio space through latent representation. Its performer uses Make Noise 0-series synthesisers and MIDI controllers to work with a neural feedback synthesis system based on a RAVE model, with a latent feedback loop embedded within the model's internal structure. This allows the instrument to remember and reuse its own internal states, influencing ongoing sound generation through its recent sonic history. The Agentier functions in the control space. Its performer interacts with the system using a Roland S-1 synthesiser and Keith McMillen QuNeo touchpad, where control gestures are routed into a recurrent neural network that feeds back into the synthesis process. Through this feedback loop, the system actively shapes the evolution of control signals over time. Contrasting feedback in the audio and control domains, the performance explores shared agency, resistance, and negotiation between humans and intelligent musical systems. Musical phenomena are co-produced through the entangled states of interaction, rather than through pre-existing system configuration or fixed mappings.
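The control-space loop described in the abstract can be pictured with a minimal recurrent cell. This is a sketch under assumptions, not the Agentier implementation: the weights `w_in`, `w_hidden`, and `w_feedback` are hypothetical fixed values where a trained network would have learned parameters, and gestures are reduced to a single scalar.

```python
import math

def recurrent_control_step(gesture, hidden, prev_output):
    # Hypothetical fixed weights; a trained network would learn these.
    w_in, w_hidden, w_feedback = 0.8, 0.5, 0.4
    hidden = math.tanh(w_in * gesture
                       + w_hidden * hidden
                       + w_feedback * prev_output)
    # Map the hidden state from (-1, 1) to a (0, 1) control value.
    output = 0.5 * (hidden + 1.0)
    return hidden, output

def run_controls(gestures):
    hidden, output = 0.0, 0.0
    trace = []
    for gesture in gestures:
        # The previous output is fed back in alongside the new gesture.
        hidden, output = recurrent_control_step(gesture, hidden, output)
        trace.append(output)
    return trace

# The performer holds one gesture value for four steps.
held = run_controls([0.6, 0.6, 0.6, 0.6])
```

Even though the gesture is held constant, the control value sent onward keeps changing from step to step, because each step also depends on the cell's hidden state and its own previous output. That drift under a static gesture is the simplest picture of a system that "shapes the evolution of control signals over time".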

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript describes a musical performance titled 'Huí Sù' as a duet between two intelligent instruments and their human performers: Sù, which uses a RAVE neural audio model with a latent feedback loop embedded in audio space, and Agentier, which uses a recurrent neural network to evolve control signals. The systems incorporate recursive feedback so that past actions influence future behavior, with the goal of exploring shared agency, resistance, and negotiation; the central interpretive claim is that musical phenomena are co-produced through entangled interaction states rather than fixed mappings or pre-existing configurations.

Significance. If the described dual-feedback setups do enable the claimed forms of co-construction and non-deterministic interaction, the work offers a concrete artistic example of contrasting audio-domain and control-domain recursion in live AI music performance. This framing could usefully complement technical literature on intelligent musical instruments by emphasizing history-dependent agency and negotiation, potentially informing designs that move beyond one-way mapping paradigms.

major comments (1)

- Abstract: the central claim that 'Musical phenomena are co-produced through the entangled states of interaction, rather than through pre-existing system configuration or fixed mappings' is presented as the performance's outcome, yet the manuscript supplies no description of observed sonic results, performer reports, or specific manifestations of resistance/negotiation that would allow evaluation of whether the feedback loops produced the claimed co-production rather than merely complex but non-intentional behavior.

minor comments (2)

- The manuscript would benefit from a short methods or implementation subsection detailing the precise placement of the latent feedback within the RAVE architecture and the architecture of the recurrent control network, as these are the enabling mechanisms for the described recursion.

- Title and abstract use non-standard diacritics ('Hu´i S`u'); consistent rendering and an English gloss would improve accessibility for an international readership.

Simulated Author's Rebuttal

We thank the referee for the constructive comment and positive overall assessment. We address the point below and will incorporate revisions to clarify the framing of our interpretive claim.


- Referee: Abstract: the central claim that 'Musical phenomena are co-produced through the entangled states of interaction, rather than through pre-existing system configuration or fixed mappings' is presented as the performance's outcome, yet the manuscript supplies no description of observed sonic results, performer reports, or specific manifestations of resistance/negotiation that would allow evaluation of whether the feedback loops produced the claimed co-production rather than merely complex but non-intentional behavior.

  Authors: We agree that the abstract presents the claim in a way that could be misread as an empirical outcome rather than an interpretive framing of the artistic work. The manuscript is a practice-based description of a live performance exploring shared agency via contrasting audio- and control-domain recursion; the claim articulates the conceptual intent and design rationale rather than asserting measured results. To address the concern, we will revise the abstract to qualify the claim as emerging from the performance's entangled interaction states and add a short section to the body text with concrete examples of observed sonic behaviors, resistance, and negotiation drawn from rehearsal and performance notes. This will allow readers to evaluate the systems' history-dependent dynamics without overstating the paper's scope.

  Revision: yes

Circularity Check

No significant circularity; purely descriptive performance paper with no derivations


The paper contains no equations, derivations, fitted parameters, predictions, or load-bearing self-citations. It is an artistic performance description of two feedback-based instruments (Sù using RAVE latent feedback and Agentier using recurrent control networks). The central interpretive claim—that musical phenomena are co-produced through entangled interaction states—is presented as a framing of the setup rather than a result derived from any chain of reasoning that could reduce to its inputs. No self-definitional, fitted-input, or uniqueness-imported patterns appear. The work is self-contained as a descriptive account of a performance apparatus.


Reference graph

Works this paper leans on

- [1] acids-ircam. 2026. RAVE Models Download: Pretrained Models for the RAVE Neural Audio Synthesizer. https://acids-ircam.github.io/rave_models_download. ACIDS-IRCAM GitHub Pages website, accessed 10 Feb 2026.

- [2] Antoine Caillon and Philippe Esling. 2021. RAVE: A variational autoencoder for fast and high-quality neural audio synthesis. arXiv:2111.05011 (2021). https://doi.org/10.48550/arXiv.2111.05011. arXiv preprint, accessed 10 Feb 2026.

- [3] Théo Jourdan and Baptiste Caramiaux. 2023. Culture and Politics of Machine Learning in NIME: A Preliminary Qualitative Inquiry. In Proceedings of the International Conference on New Interfaces for Musical Expression, Miguel Ortiz and Adnan Marquez-Borbon (Eds.). Mexico City, Mexico, Article 47, 7 pages. https://doi.org/10.5281/zenodo.11189202

- [4] Chris Kiefer, Alice Eldridge, and Dan Overholt. 2025. Future Research Challenges in Feedback Musicianship. https://doi.org/10.5281/zenodo.15005138

- [5] Charles Patrick Martin, Kyrre Glette, Tønnes Frostad Nygaard, and Jim Torresen. 2020. Understanding Musical Predictions With an Embodied Interface for Musical Machine Learning. Frontiers in Artificial Intelligence 3 (2020). https://doi.org/10.3389/frai.2020.00006

- [6] Charles Patrick Martin and Jim Torresen. 2019. An Interactive Musical Prediction System with Mixture Density Recurrent Neural Networks. In Proceedings of the International Conference on New Interfaces for Musical Expression. 260–265. https://doi.org/10.5281/zenodo.3672952

- [7] Landon Morrison and Andrew McPherson. 2024. Entangling Entanglement: A Diffractive Dialogue on HCI and Musical Interactions. In Proceedings of the 2024 CHI Conference on Human Factors in Computing Systems (Honolulu, HI, USA) (CHI ’24). Association for Computing Machinery, New York, NY, USA, Article 493, 17 pages. https://doi.org/10.1145/3613904.3642171

- [8] Tom Mudd. 2019. Material-Oriented Musical Interactions. Springer International Publishing, Cham, 123–133. https://doi.org/10.1007/978-3-319-92069-6_8

- [9] Tom Mudd. 2023. Playing with Feedback: Unpredictability, Immediacy, and Entangled Agency in the No-input Mixing Desk. In Proceedings of the 2023 CHI Conference on Human Factors in Computing Systems (Hamburg, Germany) (CHI ’23). Association for Computing Machinery, New York, NY, USA, Article 243, 11 pages. https://doi.org/10.1145/3544548.3580662

- [10] Sarah Nabi, Philippe Esling, Geoffroy Peeters, and Frédéric Bevilacqua. 2024. Embodied exploration of deep latent spaces in interactive dance-music performance. In Proceedings of the 9th International Conference on Movement and Computing (Utrecht, Netherlands) (MOCO ’24). Association for Computing Machinery, New York, NY, USA, Article 12, 9 pages. https:...

- [11] Nicola Privato and Thor Magnusson. 2024. Querying the Ghost: AI Hauntography in NIME. In Proceedings of the International Conference on New Interfaces for Musical Expression, S M Astrid Bin and Courtney N. Reed (Eds.). Utrecht, Netherlands, Article 63, 7 pages. https://doi.org/10.5281/zenodo.13904901

- [12] Nicola Privato, Victor Shepardson, Giacomo Lepri, and Thor Magnusson. 2024. Stacco: Exploring the Embodied Perception of Latent Representations in Neural Synthesis. In Proceedings of the International Conference on New Interfaces for Musical Expression, S M Astrid Bin and Courtney N. Reed (Eds.). Utrecht, Netherlands, Article 62, 8 pages. https://doi.o...

- [13] Courtney N. Reed, Adan L. Benito, Franco Caspe, and Andrew P. McPherson. 2024. Shifting Ambiguity, Collapsing Indeterminacy: Designing with Data as Baradian Apparatus. ACM Trans. Comput.-Hum. Interact. 31, 6, Article 73 (Dec. 2024), 41 pages. https://doi.org/10.1145/3689043

- [14] Nicholas Shaheed and Ge Wang. 2024. I Am Sitting in a (Latent) Room. In Proceedings of the International Conference on New Interfaces for Musical Expression, S M Astrid Bin and Courtney N. Reed (Eds.). Utrecht, Netherlands, Article 49, 6 pages. https://doi.org/10.5281/zenodo.13904872

- [15] Victor Shepardson, Halla Steinunn Stefánsdóttir, and Thor Magnusson. 2025. Evolving the Living Looper: Artistic Research, Online Learning, and Tentacle Pendula. In Proceedings of the International Conference on New Interfaces for Musical Expression, Doga Cavdir and Florent Berthaut (Eds.). Canberra, Australia, Article 36, 6 pages. https://doi.org/10.5281...

- [16] Halla Steinunn Stefánsdóttir and Thor Magnusson. 2025. Of altered instrumental relations: a practice-led inquiry into agency through musical performance with neural audio synthesis and violin. Frontiers in Computer Science 7 (2025). https://doi.org/10.3389/fcomp.2025.1578595

- [17] Koray Tahiroğlu, Miranda Kastemaa, and Oskar Koli. 2021. AI-terity 2.0: An Autonomous NIME Featuring GANSpaceSynth Deep Learning Model. In Proceedings of the International Conference on New Interfaces for Musical Expression. Shanghai, China, Article 80. https://doi.org/10.21428/92fbeb44.3d0e9e12

- [18] Paul Théberge. 2017. Musical Instruments as Assemblage. Springer Singapore, Singapore, 59–66. https://doi.org/10.1007/978-981-10-2951-6_5

- [19] Federico Visi. 2024. The Sophtar: a networkable feedback string instrument with embedded machine learning. In Proceedings of the International Conference on New Interfaces for Musical Expression, S M Astrid Bin and Courtney N. Reed (Eds.). Utrecht, Netherlands, Article 22, 7 pages. https://doi.org/10.5281/zenodo.13904810

Paper venue (from the running header): NIME ’26, June 23–26, 2026, London, UK
