
Qvine: Vine Structured Quantum Circuits for Loading High Dimensional Distributions

Pith reviewed 2026-05-07 13:58 UTC · model grok-4.3

The pith

Vine-structured quantum circuits load high-dimensional distributions with depth scaling linearly or quadratically in dimension.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

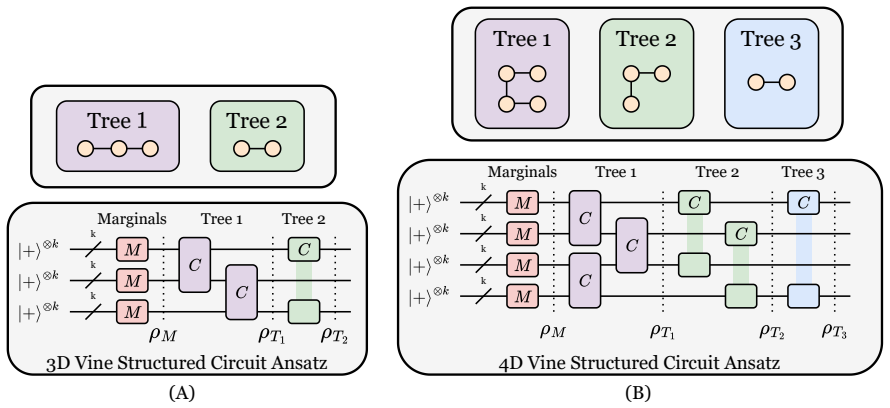

Qvine builds a parameterized quantum circuit by translating each pair-copula in a vine decomposition into a corresponding block of gates, so that the circuit depth is at most quadratic in dimension for regular vines and linear for D-vines and many practical regular vines. The same structure preserves the approximation quality already known from classical vine copulas and yields circuits that can be trained to high fidelity on low-dimensional test cases.
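The scaling contrast can be made concrete with a toy count. The sketch below is a simplified model, not the paper's construction. It assumes that each variable occupies one register, that each pair-copula becomes one fixed-depth block acting on its two conditioned registers, that blocks in the same tree run in parallel when they share no register, and that tree j+1 starts only after tree j has finished. The helper names (`dvine_trees`, `cvine_trees`, `block_depth`) are hypothetical, and a C-vine with star-shaped trees stands in for an unfavorable regular vine.

```python
from typing import List, Set, Tuple

Edge = Tuple[int, int]  # conditioned pair of variables (1-indexed)


def dvine_trees(d: int) -> List[List[Edge]]:
    """Conditioned pairs of a D-vine: tree j couples variables i and i + j."""
    return [[(i, i + j) for i in range(1, d - j + 1)] for j in range(1, d)]


def cvine_trees(d: int) -> List[List[Edge]]:
    """Conditioned pairs of a C-vine: tree j is a star rooted at variable j."""
    return [[(j, i) for i in range(j + 1, d + 1)] for j in range(1, d)]


def layers_for_tree(edges: List[Edge]) -> int:
    """Greedily pack blocks into layers so no register is used twice per layer."""
    layers: List[Set[int]] = []
    for a, b in edges:
        for used in layers:
            if a not in used and b not in used:
                used.update((a, b))
                break
        else:
            layers.append({a, b})
    return len(layers)


def block_depth(trees: List[List[Edge]]) -> int:
    """Total depth in block layers, with a barrier between consecutive trees."""
    return sum(layers_for_tree(tree) for tree in trees)


if __name__ == "__main__":
    for d in (4, 10):
        print(d, block_depth(dvine_trees(d)), block_depth(cvine_trees(d)))
```

Under these assumptions a D-vine needs at most two block layers per tree, so its depth is bounded by 2(d-1), while the star-shaped C-vine serializes every block and reaches d(d-1)/2. For d = 10 the toy count gives 13 block layers for the D-vine against 45 for the C-vine.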

What carries the argument

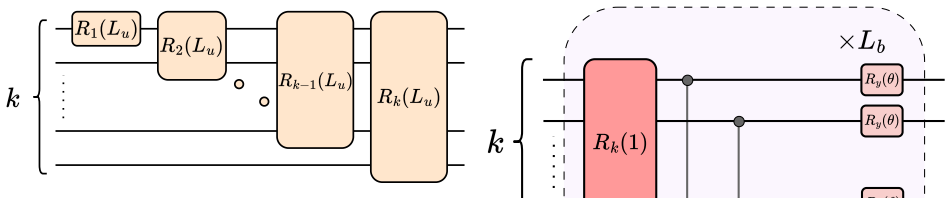

The Qvine ansatz: a quantum circuit whose gate layers follow the tree sequence of a vine copula decomposition, so that each successive tree encodes the next level of conditional dependence at controlled depth.

If this is right

- Distributions in moderate dimensions become encodable with circuit depths that remain practical on near-term hardware.

- The same vine structure supplies a natural inductive bias that supports gradient-based training without requiring exponential resources.

- Applications such as quantum generative modeling or risk-measure estimation in finance can use the loaded state directly.

- D-vine choices give the strongest scaling guarantee, which makes them a natural default for hardware with strict depth limits.

Where Pith is reading between the lines

- The linear-depth regime for D-vines suggests that hardware-aware vine selection could further reduce compilation overhead on specific qubit topologies.

- Once a distribution is loaded, the same structured circuit might be reused as a generative model by measuring in the computational basis.

- The vine layering pattern could be combined with other ansatz motifs such as hardware-efficient layers to balance expressivity and trainability.
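The generative reuse mentioned in the list above amounts to sampling bitstrings and decoding them into per-variable bins. The following is a minimal classical sketch of that decoding step, assuming the loaded state is available as a list of amplitudes, that each of the d variables uses k qubits, and that the first variable occupies the most significant bits. The function name `sample_bins` and the register ordering are assumptions made for illustration, not details taken from the paper.

```python
import random
from typing import List, Sequence, Tuple


def sample_bins(amplitudes: Sequence[complex], d: int, k: int,
                shots: int, seed: int = 0) -> List[Tuple[int, ...]]:
    """Simulate computational-basis measurement and decode each outcome
    into d bin indices of k bits each (first variable = most significant)."""
    if len(amplitudes) != 2 ** (d * k):
        raise ValueError("expected 2**(d*k) amplitudes")
    rng = random.Random(seed)
    probs = [abs(a) ** 2 for a in amplitudes]  # Born rule
    outcomes = rng.choices(range(len(probs)), weights=probs, k=shots)
    mask = (1 << k) - 1
    return [
        tuple((o >> (k * (d - 1 - j))) & mask for j in range(d))
        for o in outcomes
    ]


if __name__ == "__main__":
    # Two variables, one qubit each; all weight on basis state |01>.
    print(sample_bins([0, 1, 0, 0], d=2, k=1, shots=3))
```

On hardware the amplitudes are not accessible, and the sampled outcomes would come directly from measurement; only the bit-slicing step would carry over.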

Load-bearing premise

Directly embedding the classical vine decomposition into quantum gates will keep both the approximation accuracy and the trainability intact without new sources of overhead or loss of expressivity.

What would settle it

For a ten-dimensional test distribution, train a D-vine Qvine circuit whose depth is linear in dimension and measure whether its final fidelity falls below the fidelity achieved by an unstructured circuit of comparable total gate count, or whether gradients vanish during training.

Original abstract

Loading high dimensional distributions is an important task for utilizing quantum computers on applications ranging from machine learning to finance. The high dimensionality leads to a curse of dimensionality: representing a d-dimensional distribution with k resolution requires dk qubits, and an unstructured parameterized circuit would express a unitary in an operator space that is exponential in the number of qubits, leading to vanishing gradients and poor convergence guarantees even at high depth. Vine copula decompositions are widely used to represent high dimensional distributions classically, showing high quality approximation in many important applications, such as financial modeling. We present Qvine, a vine structured ansatz for quantum circuits that mirrors the vine decomposition to construct scalable quantum circuits with efficient trainability while achieving similarly high quality approximation for amplitude encoding distributions. For regular vines (R-vines), we show that the circuit depth is at most quadratic in the dimension of the distribution, while for D-vines, as well as many practical R-vines, the circuit depth is linear in the dimension. For 3-dimensional and 4-dimensional Gaussians and empirical joint stock price return distributions for selected stocks, our experiments show Qvines achieve high quality loading.
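To illustrate the qubit count in the abstract, the sketch below builds a target amplitude vector for a zero-mean Gaussian on a grid. It assumes that "k resolution" means k qubits (2^k bins) per dimension, that the grid covers [-3, 3] in every dimension, and that amplitude encoding stores the square root of each bin probability. The function `target_amplitudes` and its parameters are hypothetical and are not taken from the paper.

```python
import itertools
import math
from typing import List, Sequence


def target_amplitudes(precision: Sequence[Sequence[float]], k: int,
                      lo: float = -3.0, hi: float = 3.0) -> List[float]:
    """Discretize a zero-mean Gaussian with the given precision (inverse
    covariance) matrix onto 2**k bins per dimension and return sqrt(p)."""
    d = len(precision)
    n = 2 ** k
    width = (hi - lo) / n
    centers = [lo + (i + 0.5) * width for i in range(n)]
    weights = []
    for idx in itertools.product(range(n), repeat=d):
        x = [centers[i] for i in idx]
        quad = sum(x[a] * precision[a][b] * x[b]
                   for a in range(d) for b in range(d))
        weights.append(math.exp(-0.5 * quad))  # unnormalized density
    total = sum(weights)
    return [math.sqrt(w / total) for w in weights]


if __name__ == "__main__":
    identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    amps = target_amplitudes(identity, k=2)
    # d = 3 variables at k = 2 qubits each: d * k = 6 qubits, 2**6 amplitudes.
    print(len(amps))
```

The vector length 2^(dk) is what makes unstructured loading expensive: every extra dimension multiplies the number of amplitudes by 2^k.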

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces Qvine, a vine-structured parameterized quantum circuit ansatz for amplitude encoding of high-dimensional distributions. It claims that mirroring classical vine copula decompositions yields circuits whose depth scales at most quadratically in dimension d for regular vines (R-vines) and linearly for D-vines (and many practical R-vines), while preserving high approximation quality, as evidenced by experiments on 3D/4D Gaussians and empirical stock-return distributions.

Significance. If the scaling and quality claims hold, the work would offer a structured route to trainable quantum circuits for distribution loading that sidesteps the exponential parameter space of unstructured ansätze, with direct relevance to quantum machine learning and finance. The direct structural mapping from classical vines is a conceptual strength that could aid interpretability and trainability.

Major comments (3)

- [Abstract] The claim that 'our experiments show Qvines achieve high quality loading' is unsupported by any reported quantitative metrics (fidelity, KL divergence, total variation, etc.), baselines, error bars, or statistical analysis, which is load-bearing for the central approximation-quality assertion.

- [Abstract] The depth-scaling statements ('at most quadratic' for R-vines, 'linear' for D-vines) rest on a structural argument without an explicit gate-count derivation, theorem statement, or accounting for possible implementation overheads in the quantum circuit construction, undermining verification of the claimed scalability.

- [Abstract] No details are given on how the vine decomposition is mapped to specific gates or parameterized blocks, leaving open whether the mapping introduces new expressivity losses or trainability issues that could negate the depth advantage.
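The metrics named in the first comment are straightforward to compute once the target and loaded distributions are available as probability vectors. The following is a minimal sketch, assuming both vectors are normalized and that the states have real, non-negative amplitudes, so that state fidelity reduces to the squared Bhattacharyya coefficient. The function names are illustrative and the paper's actual evaluation code is not shown on this page.

```python
import math
from typing import Sequence


def fidelity(p: Sequence[float], q: Sequence[float]) -> float:
    """|<sqrt(p)|sqrt(q)>|^2 for states with non-negative real amplitudes."""
    return sum(math.sqrt(a * b) for a, b in zip(p, q)) ** 2


def kl_divergence(p: Sequence[float], q: Sequence[float],
                  eps: float = 1e-12) -> float:
    """KL(p || q) in nats; q is floored at eps to avoid division by zero."""
    return sum(a * math.log(a / max(b, eps)) for a, b in zip(p, q) if a > 0)


def total_variation(p: Sequence[float], q: Sequence[float]) -> float:
    """Half the L1 distance between the two distributions."""
    return 0.5 * sum(abs(a - b) for a, b in zip(p, q))


if __name__ == "__main__":
    target = [1.0, 0.0]
    loaded = [0.5, 0.5]
    print(fidelity(target, loaded),
          kl_divergence(target, loaded),
          total_variation(target, loaded))
```

Reporting all three is useful because they weight errors differently: fidelity and total variation are bounded, while KL divergence penalizes bins where the loaded distribution assigns almost no mass to outcomes the target considers likely.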

Simulated Author's Rebuttal

We thank the referee for the careful and constructive review. The comments highlight opportunities to strengthen the abstract's self-contained presentation of evidence, derivations, and construction details. We address each point below and will incorporate revisions to improve clarity and verifiability while preserving the manuscript's core contributions.

Point-by-point responses

- Referee: [Abstract] The claim that 'our experiments show Qvines achieve high quality loading' is unsupported by any reported quantitative metrics (fidelity, KL divergence, total variation, etc.), baselines, error bars, or statistical analysis, which is load-bearing for the central approximation-quality assertion.

  Authors: We agree that the abstract would be strengthened by explicit quantitative support. The experimental results in Section 4 report fidelity, KL divergence, and total variation values for the 3D/4D Gaussian and stock-return cases, along with comparisons to unstructured baselines. To address the concern directly, we will revise the abstract to reference these specific metrics and note that error bars and statistical analysis appear in the main text and figures.

  Revision: yes

- Referee: [Abstract] The depth-scaling statements ('at most quadratic' for R-vines, 'linear' for D-vines) rest on a structural argument without an explicit gate-count derivation, theorem statement, or accounting for possible implementation overheads in the quantum circuit construction, undermining verification of the claimed scalability.

  Authors: The scaling follows from enumerating the pair-copula terms in each vine tree and mapping each to a fixed-depth two-qubit block. A gate-count derivation and bound proof are given in Section 3. We will add an explicit theorem statement to the main text (and a concise reference in the abstract) that states the O(d^2) and O(d) bounds while explicitly discussing overheads such as SWAP gates for non-linear qubit layouts.

  Revision: yes

- Referee: [Abstract] No details are given on how the vine decomposition is mapped to specific gates or parameterized blocks, leaving open whether the mapping introduces new expressivity losses or trainability issues that could negate the depth advantage.

  Authors: Section 3 details the mapping: each vine edge corresponds to a parameterized two-qubit block (RY/RZ rotations plus CNOT) that encodes the conditional copula for amplitude encoding. We will expand this section with circuit diagrams of the basic blocks, explicit parameter counts, and a short analysis of expressivity and gradient behavior to confirm that the structure does not introduce prohibitive losses relative to the depth savings.

  Revision: yes
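The block described in the last response can be pictured with a small statevector sketch. Section 3 of the paper is not reproduced on this page, so the gate order here is an assumption: an RY then an RZ rotation on each of the two qubits, followed by one CNOT with the first qubit as control. The names `pair_block` and `apply_1q` are hypothetical, and a real Qvine block may act on multi-qubit registers rather than single qubits.

```python
import cmath
import math
from typing import List, Sequence

State = List[complex]  # 2-qubit state, index = 2 * q0 + q1


def ry(theta: float) -> List[List[complex]]:
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    return [[c, -s], [s, c]]


def rz(phi: float) -> List[List[complex]]:
    return [[cmath.exp(-0.5j * phi), 0], [0, cmath.exp(0.5j * phi)]]


def apply_1q(state: State, gate: List[List[complex]], qubit: int) -> State:
    """Apply a 2x2 gate to `qubit` (0 is the most significant bit)."""
    shift = 1 - qubit
    new: State = [0j] * 4
    for idx, amp in enumerate(state):
        bit = (idx >> shift) & 1
        base = idx & ~(1 << shift)
        for out in (0, 1):
            new[base | (out << shift)] += gate[out][bit] * amp
    return new


def cnot(state: State) -> State:
    """CNOT with qubit 0 as control and qubit 1 as target."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]


def pair_block(state: State, params: Sequence[float]) -> State:
    """Assumed block: RY and RZ on each qubit, then one entangling CNOT."""
    t0, p0, t1, p1 = params
    state = apply_1q(state, ry(t0), 0)
    state = apply_1q(state, rz(p0), 0)
    state = apply_1q(state, ry(t1), 1)
    state = apply_1q(state, rz(p1), 1)
    return cnot(state)


if __name__ == "__main__":
    out = pair_block([1, 0, 0, 0], [math.pi, 0.0, 0.0, 0.0])
    print([round(abs(a) ** 2, 6) for a in out])
```

With four parameters per block and d(d-1)/2 pair-copulas in a full vine, this assumed layout would give a parameter count quadratic in d, which is the kind of explicit count the referee asks for.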

Circularity Check

No significant circularity detected


The paper constructs the Qvine ansatz by explicit structural mirroring of classical vine copula decompositions into parameterized quantum gates for amplitude encoding. The depth scaling claims (at most quadratic for R-vines, linear for D-vines) are direct consequences of counting gates in that mirrored structure, using the known parameter count and conditional independence properties of vines from classical statistics; this is not a self-referential fit or prediction but a straightforward translation. Experimental results on 3D/4D Gaussians and stock returns are separate empirical checks on approximation quality and do not feed back into the scaling derivation. No step reduces by construction to its own inputs, no self-citation is load-bearing for the central claims, and the derivation remains self-contained against external vine literature and direct gate counting.

Axiom & Free-Parameter Ledger

Invented entities (1)

- Qvine ansatz: no independent evidence

