Evolutionary feature selection for spiking neural network pattern classifiers

Pith reviewed 2026-05-07 12:39 UTC · model grok-4.3

The pith

Extending evolutionary feature selection to JASTAP spiking networks permits smaller models that classify noisy data accurately.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The paper establishes that applying the evolutionary feature selection and training procedure to the JASTAP model results in smaller neural networks capable of classifying the IRIS data set with no loss in accuracy even when the data is noisier.

What carries the argument

The JASTAP neural network model together with an evolutionary algorithm for simultaneous feature selection and network parameter optimization.
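To make "simultaneous feature selection and network parameter optimization" concrete, here is a minimal sketch of the general idea. It is not the authors' procedure and does not implement JASTAP. It assumes a genome made of a binary feature mask plus the weights of a stand-in linear threshold unit, a fitness that trades accuracy against the number of selected features, and truncation selection with Gaussian mutation. The data are synthetic (not IRIS), and every name and parameter value (`make_toy_data`, `size_penalty`, `flip_rate`, and so on) is hypothetical.

```python
import random

def make_toy_data(rng, n_per_class=30):
    """Synthetic two-class data: 2 informative features + 2 pure-noise features."""
    data = []
    for label, centre in ((0, -1.0), (1, 1.0)):
        for _ in range(n_per_class):
            informative = [rng.gauss(centre, 0.5), rng.gauss(centre, 0.5)]
            distractors = [rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)]
            data.append((informative + distractors, label))
    return data

def predict(genome, x):
    """Stand-in classifier (NOT JASTAP): linear threshold unit on masked inputs."""
    mask, weights, bias = genome
    s = bias + sum(m * w * xi for m, w, xi in zip(mask, weights, x))
    return 1 if s > 0 else 0

def fitness(genome, data, size_penalty=0.01):
    """Accuracy minus a small penalty per selected feature (assumed trade-off)."""
    acc = sum(predict(genome, x) == y for x, y in data) / len(data)
    return acc - size_penalty * sum(genome[0])

def mutate(genome, rng, flip_rate=0.1, sigma=0.3):
    """Flip mask bits and perturb weights in one step, so both evolve together."""
    mask, weights, bias = genome
    new_mask = [1 - m if rng.random() < flip_rate else m for m in mask]
    new_weights = [w + rng.gauss(0.0, sigma) for w in weights]
    return (new_mask, new_weights, bias + rng.gauss(0.0, sigma))

def evolve(data, n_features, rng, pop_size=30, generations=40):
    pop = [([rng.randint(0, 1) for _ in range(n_features)],
            [rng.gauss(0.0, 1.0) for _ in range(n_features)],
            rng.gauss(0.0, 1.0)) for _ in range(pop_size)]
    history = []  # best fitness seen in each generation
    for _ in range(generations):
        pop.sort(key=lambda g: fitness(g, data), reverse=True)
        history.append(fitness(pop[0], data))
        parents = pop[:pop_size // 2]  # truncation selection; parents survive unchanged
        children = [mutate(rng.choice(parents), rng)
                    for _ in range(pop_size - len(parents))]
        pop = parents + children
    return max(pop, key=lambda g: fitness(g, data)), history

rng = random.Random(0)
data = make_toy_data(rng)
best, history = evolve(data, 4, rng)
mask = best[0]
accuracy = sum(predict(best, x) == y for x, y in data) / len(data)
print("selected features:", mask, "training accuracy:", round(accuracy, 3))
```

Because the mask and the weights sit in the same genome, a feature is dropped only when the network can still classify without it, which is the sense in which selection and training are solved together. The size penalty is one assumed way to push toward smaller models; the paper's actual fitness function is not described in the abstract.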

If this is right

- Smaller networks achieve equivalent accuracy on the IRIS classification task.

- The networks tolerate higher noise levels in input data.

- The method integrates feature selection directly into the evolutionary training process.

- JASTAP serves as a practical alternative to multi-layer perceptrons for pattern classification.

Where Pith is reading between the lines

- This approach could extend to other spiking neural network variants for improved scalability.

- Validation on diverse datasets would test whether the noise tolerance generalizes beyond the IRIS benchmark.

- If the smaller networks prove reliable, embedded classification systems could benefit from reduced training time or energy use.

Load-bearing premise

The evolutionary procedure developed for multi-layer perceptrons transfers directly to the JASTAP spiking model without modification and delivers performance benefits on noisy data.

What would settle it

Observing degraded accuracy when using the smaller JASTAP networks on the IRIS dataset with added noise or on other standard classification benchmarks would disprove the central claim.

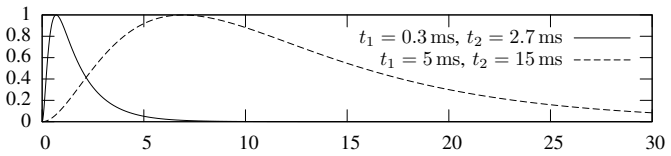

Figures

Original abstract

This paper presents an application of the biologically realistic JASTAP neural network model to classification tasks. The JASTAP neural network model is presented as an alternative to the basic multi-layer perceptron model. An evolutionary procedure previously applied to the simultaneous solution of feature selection and neural network training on standard multi-layer perceptrons is extended with the JASTAP model. Preliminary results on the standard IRIS data set give evidence that this extension allows the use of smaller neural networks that can handle noisier data without any degradation in classification accuracy.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript extends a previously developed evolutionary procedure for simultaneous feature selection and training of multi-layer perceptrons to the JASTAP biologically realistic spiking neural network model. It applies this to classification tasks and reports preliminary results on the standard IRIS dataset as evidence that the extension permits smaller networks that maintain classification accuracy while handling noisier data.

Significance. If the central claims can be substantiated through detailed methods, baselines, and explicit noise experiments, the work would offer a modest but useful contribution to evolutionary optimization of spiking networks for pattern classification. It could support development of more compact, robust classifiers suitable for neuromorphic hardware. The preliminary nature and lack of verification details currently limit broader impact.

Major comments (2)

- Abstract: The claim that the JASTAP extension 'allows the use of smaller neural networks that can handle noisier data without any degradation in classification accuracy' is load-bearing but unsupported. Only results on the clean standard IRIS dataset are referenced; no noise model, corruption procedure, or comparative accuracy results under added noise are described, creating an evidentiary gap between the reported experiments and the noise-robustness conclusion.

- Abstract and methods description: No details are supplied on the evolutionary algorithm parameters, JASTAP network architectures used, performance metrics with error bars, baseline comparisons against standard MLPs or other classifiers, or data exclusion rules. These omissions prevent independent verification of the reported smaller networks and accuracy maintenance, which are central to the paper's contribution.

Minor comments (1)

- The abstract would benefit from quantifying the network size reduction achieved and stating the exact classification accuracy values obtained.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback. We agree that the abstract claims require stronger substantiation and that methodological details must be expanded for reproducibility. We will perform a major revision to address both points.

Point-by-point responses

- Referee: Abstract: The claim that the JASTAP extension 'allows the use of smaller neural networks that can handle noisier data without any degradation in classification accuracy' is load-bearing but unsupported. Only results on the clean standard IRIS dataset are referenced; no noise model, corruption procedure, or comparative accuracy results under added noise are described, creating an evidentiary gap between the reported experiments and the noise-robustness conclusion.

  Authors: We acknowledge the evidentiary gap. The current experiments use only the standard clean IRIS dataset, and the abstract claim about noisier data is an extrapolation from the biologically realistic properties of JASTAP rather than direct evidence. In the revision we will add explicit noise-robustness experiments (e.g., additive Gaussian noise at varying levels to input features, with a defined corruption procedure) and report comparative classification accuracies (with error bars) for the evolved smaller networks versus baseline networks. The abstract will be revised to reflect these new results.

  Revision: yes

- Referee: Abstract and methods description: No details are supplied on the evolutionary algorithm parameters, JASTAP network architectures used, performance metrics with error bars, baseline comparisons against standard MLPs or other classifiers, or data exclusion rules. These omissions prevent independent verification of the reported smaller networks and accuracy maintenance, which are central to the paper's contribution.

  Authors: We agree that these omissions limit verifiability. The revised manuscript will expand the methods section to specify: evolutionary algorithm parameters (population size, generations, mutation/crossover rates, fitness function); JASTAP network architectures (neuron counts per layer, spike-timing parameters, connectivity); performance metrics reported as means with standard deviations or error bars across repeated runs; baseline comparisons against standard MLPs (and optionally SVM or other classifiers) using identical feature-selection and training protocols; and any IRIS data preprocessing or exclusion rules. The abstract will be updated for precision and to reference the added experiments.

  Revision: yes
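The kind of noise-robustness protocol promised in the responses above can be sketched as follows. This is an illustrative outline only, under stated assumptions: the corruption procedure is independent zero-mean Gaussian noise added to every test feature, the classifier is a nearest-centroid stand-in (neither JASTAP nor an MLP), the data are synthetic rather than IRIS, and results are reported as mean and sample standard deviation over repeated seeded runs. All function names and parameter values are hypothetical.

```python
import random
import statistics

def make_toy_data(rng, n_per_class=40):
    """Synthetic two-class, two-feature data (a placeholder for IRIS)."""
    data = []
    for label, centre in ((0, -1.0), (1, 1.0)):
        for _ in range(n_per_class):
            data.append(([rng.gauss(centre, 0.4), rng.gauss(centre, 0.4)], label))
    return data

def add_gaussian_noise(data, sigma, rng):
    """Assumed corruption procedure: zero-mean Gaussian noise on each feature."""
    return [([xi + rng.gauss(0.0, sigma) for xi in x], y) for x, y in data]

def fit_centroids(data):
    """Stand-in classifier: one mean vector per class."""
    sums, counts = {}, {}
    for x, y in data:
        acc = sums.setdefault(y, [0.0] * len(x))
        for i, xi in enumerate(x):
            acc[i] += xi
        counts[y] = counts.get(y, 0) + 1
    return {y: [s / counts[y] for s in sums[y]] for y in sums}

def predict(centroids, x):
    """Return the label of the closest centroid (squared Euclidean distance)."""
    return min(centroids,
               key=lambda y: sum((a - b) ** 2 for a, b in zip(x, centroids[y])))

def accuracy(centroids, data):
    return sum(predict(centroids, x) == y for x, y in data) / len(data)

def noise_sweep(sigmas, n_runs=10, seed=0):
    """Mean and sample standard deviation of test accuracy per noise level."""
    results = {}
    for sigma in sigmas:
        scores = []
        for run in range(n_runs):
            # Same seed per run at every sigma, so the clean train/test sets are
            # identical across noise levels and only the corruption differs.
            rng = random.Random(seed + run)
            train = make_toy_data(rng)
            test = add_gaussian_noise(make_toy_data(rng), sigma, rng)
            scores.append(accuracy(fit_centroids(train), test))
        results[sigma] = (statistics.mean(scores), statistics.stdev(scores))
    return results

results = noise_sweep([0.0, 0.5, 1.0, 2.0])
for sigma, (mean, std) in results.items():
    print(f"sigma={sigma:.1f}  accuracy={mean:.3f} +/- {std:.3f}")
```

Running the same sweep for the evolved smaller network and for each baseline, with identical seeds, would give the paired comparison with error bars that the referee asks for. Whether noise should also be applied to the training data is a design choice the abstract does not settle.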

Circularity Check

No circularity detected in derivation or claims

The paper extends a previously developed evolutionary procedure for feature selection and training from multi-layer perceptrons to the JASTAP spiking model, then reports preliminary empirical results on the standard IRIS dataset. No derivation chain, equations, or first-principles predictions are presented that reduce by construction to the inputs themselves. The central claim rests on experimental outcomes rather than self-definition, fitted parameters renamed as predictions, or load-bearing self-citations whose validity depends on the current work. Self-citation of prior procedure is normal and does not bear the load here, as the new results are independent observations.
