Recognition: unknown

Revealing NVIDIA Closed-Source Driver Command Streams for CPU-GPU Runtime Behavior Insight

Pith reviewed 2026-05-07 11:52 UTC · model grok-4.3

The pith

A hardware watchpoint on the GPU doorbell register recovers the complete command streams from NVIDIA's closed-source userspace driver.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

We recover the hardware command streams emitted by NVIDIA's closed-source userspace driver with full integrity by leveraging the recently open-sourced kernel driver, instrumenting the memory-mapping path, and installing a hardware watchpoint on the userspace mapping of the GPU doorbell register. This lets us capture complete command submissions at the moment they are committed. Using this methodology, we present two case studies. For CUDA data movement, we identify the DMA submission modes selected by the driver and characterize their raw hardware performance independently of driver overhead through CUDA-bypassing controlled command issuance. For CUDA Graphs, we show that the reduced launch

What carries the argument

Instrumentation of the memory-mapping path in the open kernel driver together with a hardware watchpoint on the doorbell register to intercept command streams at submission.

If this is right

- DMA submission modes for CUDA data movement can be identified and their raw hardware performance characterized without driver overhead.

- Reduced launch overhead in newer CUDA releases for graphs is associated with smaller command footprints and more efficient submission patterns.

- Command-level visibility provides a practical basis for understanding GPU middleware behavior and improving performance interpretation.

- The approach can inform hardware-software co-design for CUDA and related accelerator stacks.

Where Pith is reading between the lines

- The same instrumentation pattern could be tested on other partially open accelerator drivers to recover their command streams.

- Observed command sequences could be replayed in simulators to predict performance for new hardware designs.

- This visibility offers a concrete reference for evaluating whether open-source command generators match proprietary efficiency.

Load-bearing premise

The added instrumentation and hardware watchpoint do not change the timing, ordering, or content of command submissions from the closed-source driver.

What would settle it

A direct comparison that shows the captured streams miss submissions, differ in content, or alter timing compared to the driver's actual behavior, verified by an independent capture method.

Figures

read the original abstract

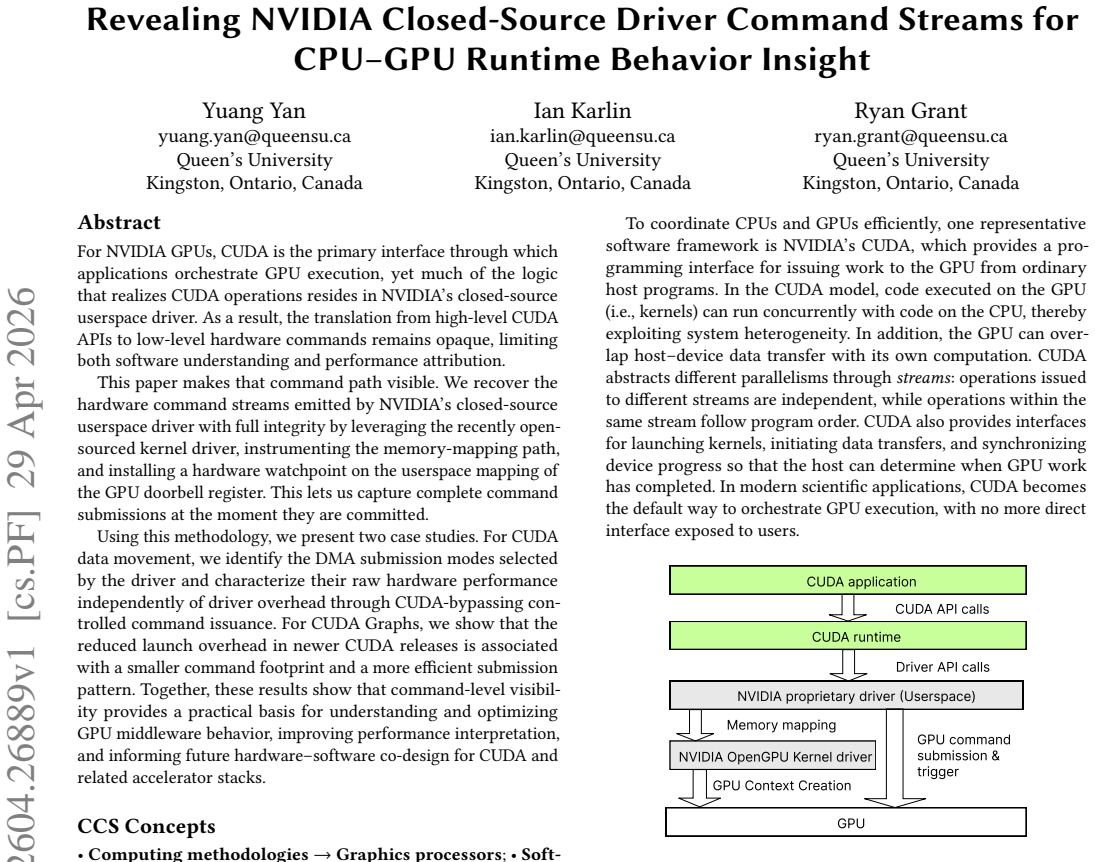

For NVIDIA GPUs, CUDA is the primary interface through which applications orchestrate GPU execution, yet much of the logic that realizes CUDA operations resides in NVIDIA's closed-source userspace driver. As a result, the translation from high-level CUDA APIs to low-level hardware commands remains opaque, limiting both software understanding and performance attribution. This paper makes that command path visible. We recover the hardware command streams emitted by NVIDIA's closed-source userspace driver with full integrity by leveraging the recently open-sourced kernel driver, instrumenting the memory-mapping path, and installing a hardware watchpoint on the userspace mapping of the GPU doorbell register. This lets us capture complete command submissions at the moment they are committed. Using this methodology, we present two case studies. For CUDA data movement, we identify the DMA submission modes selected by the driver and characterize their raw hardware performance independently of driver overhead through CUDA-bypassing controlled command issuance. For CUDA Graphs, we show that the reduced launch overhead in newer CUDA releases is associated with a smaller command footprint and a more efficient submission pattern. Together, these results show that command-level visibility provides a practical basis for understanding and optimizing GPU middleware behavior, improving performance interpretation, and informing future hardware--software co-design for CUDA and related accelerator stacks.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper presents a method to recover the hardware command streams emitted by NVIDIA's closed-source userspace driver with claimed full integrity. It leverages the recently open-sourced kernel driver by instrumenting the memory-mapping path and installing a hardware watchpoint on the userspace mapping of the GPU doorbell register to capture complete command submissions at commit time. Two case studies are provided: one characterizing DMA submission modes and raw hardware performance for CUDA data movement via CUDA-bypassing command issuance, and another showing that reduced launch overhead in newer CUDA releases correlates with smaller command footprints and more efficient submission patterns in CUDA Graphs.

Significance. If the capture method is non-intrusive and the streams are unmodified, the work offers a practical foundation for low-level visibility into proprietary GPU middleware, enabling improved performance interpretation, optimization of CUDA and related stacks, and informed hardware-software co-design. The case studies illustrate concrete benefits in attributing data-movement costs and understanding launch-efficiency changes across CUDA versions.

major comments (1)

- [capture methodology (abstract and §3)] The central claim of recovering streams 'with full integrity' rests on the instrumentation of the memory-mapping path and the hardware watchpoint being transparent to the closed-source driver. No direct comparison to an unmodified submission path, no timing measurements of added latency, and no argument ruling out reordering or content changes are provided, leaving the weakest assumption unverified despite the closed-source nature preventing independent checks.

minor comments (1)

- [abstract] The abstract and introduction could more explicitly state the scope limitations imposed by the closed-source driver (e.g., inability to compare against ground truth).

Simulated Author's Rebuttal

We thank the referee for the careful review and for identifying the need for stronger verification of the capture method's transparency. We address the major comment on the capture methodology below and will revise the manuscript accordingly.

read point-by-point responses

-

Referee: [capture methodology (abstract and §3)] The central claim of recovering streams 'with full integrity' rests on the instrumentation of the memory-mapping path and the hardware watchpoint being transparent to the closed-source driver. No direct comparison to an unmodified submission path, no timing measurements of added latency, and no argument ruling out reordering or content changes are provided, leaving the weakest assumption unverified despite the closed-source nature preventing independent checks.

Authors: We agree that the manuscript would benefit from a more explicit defense of the integrity claim. The kernel instrumentation augments only the mmap path in the recently open-sourced kernel driver to record the virtual address of the doorbell mapping; it performs no modification to the mapping itself, no interception of userspace driver calls, and no alteration of command buffers. The hardware watchpoint is installed solely to trigger on the write to the doorbell register, at which point the command buffer is captured. This occurs after the closed-source userspace driver has completed all command preparation and before the GPU begins execution, so the captured stream matches the driver's emitted commands. Reordering or content changes are precluded because our mechanism does not participate in the write or the preparation phase. We acknowledge the absence of side-by-side unmodified comparisons and explicit latency numbers. In revision we will expand §3 with (i) a dedicated paragraph formalizing why the design rules out interference, (ii) available overhead measurements from our testbed, and (iii) discussion of why a fully unmodified comparison is not feasible without driver source access. These changes will directly address the verification concern. revision: yes

Circularity Check

No circularity: empirical instrumentation method with external dependencies

full rationale

The paper describes a systems instrumentation technique that recovers command streams by leveraging the open-sourced NVIDIA kernel driver, memory-mapping instrumentation, and a hardware watchpoint on the doorbell register. No equations, fitted parameters, or first-principles derivations appear in the provided text. The central claim of 'full integrity' recovery rests on an external assumption about non-interference from added instrumentation rather than any self-definition, self-citation chain, or renaming of known results. The two case studies (DMA modes and CUDA Graphs) are observational characterizations enabled by the method, not predictions that reduce to the inputs by construction. This is a standard non-circular empirical systems paper.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption The recently open-sourced kernel driver accurately exposes the memory-mapping path used by the closed-source userspace driver.

Reference graph

Works this paper leans on

-

[1]

Tyler Allen, Bennett Cooper, and Rong Ge. 2024. Fine-grain Quantitative Analysis of Demand Paging in Unified Virtual Memory.ACM Trans. Archit. Code Optim. 21, 1, Article 14 (Jan. 2024), 24 pages. doi:10.1145/3632953

-

[2]

Rob Armstrong, Kevin Mittman, and Fred Oh. 2024. NVIDIA Transi- tions Fully Towards Open-Source GPU Kernel Modules. NVIDIA Technical Blog. https://developer.nvidia.com/blog/nvidia-transitions-fully-towards-open- source-gpu-kernel-modules/

2024

-

[3]

Joshua Bakita and James H. Anderson. 2024. Demystifying NVIDIA GPU Internals to Enable Reliable GPU Management. In2024 IEEE 30th Real-Time and Embedded Technology and Applications Symposium (RTAS). 294–305. doi:10.1109/RTAS61025. 2024.00031

-

[4]

Bowen, Andrew Case, Ibrahim Baggili, and Golden G

Christopher J. Bowen, Andrew Case, Ibrahim Baggili, and Golden G. Richard

-

[5]

Forensic Science International: Digital Investigation49 (2024), 301760

A step in a new direction: NVIDIA GPU kernel driver memory forensics. Forensic Science International: Digital Investigation49 (2024), 301760. doi:10.1016/ j.fsidi.2024.301760 DFRWS USA 2024 - Selected Papers from the 24th Annual Digital Forensics Research Conference USA

-

[6]

Shulin Fan, Zhichao Hua, Yubin Xia, and Haibo Chen. 2025. XpuTEE: A High- Performance and Practical Heterogeneous Trusted Execution Environment for GPUs.ACM Trans. Comput. Syst.43, 1–2, Article 2 (April 2025), 27 pages. doi:10. 1145/3719653

2025

-

[7]

Zhongshu Gu, Enriquillo Valdez, Salman Ahmed, Julian James Stephen, Michael Le, Hani Jamjoom, Shixuan Zhao, and Zhiqiang Lin. 2025. NVIDIA GPU Confi- dential Computing Demystified. arXiv:2507.02770 [cs.CR] https://arxiv.org/abs/ 2507.02770

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[8]

Houston Hoffman and Fred Oh. 2024. Constant Time Launch for Straight- Line CUDA Graphs and Other Performance Enhancements. NVIDIA Technical Blog. https://developer.nvidia.com/blog/constant-time-launch-for-straight-line- cuda-graphs-and-other-performance-enhancements

2024

-

[9]

John Hubbard, Gonzalo Brito, Chirayu Garg, Nikolay Sakharnykh, and Fred Oh. 2023. Simplifying GPU Application Development with Heterogeneous Memory Management. NVIDIA Technical Blog. https://developer.nvidia.com/blog/simplifying-gpu-application-development- with-heterogeneous-memory-management/

2023

-

[10]

Ao Li, Bojian Zheng, Gennady Pekhimenko, and Fan Long. 2022. Automatic hor- izontal fusion for GPU kernels. InProceedings of the 20th IEEE/ACM International Symposium on Code Generation and Optimization (CGO ’22). IEEE Press, 14–27. doi:10.1109/CGO53902.2022.9741270

-

[11]

2024.User-mode Work Submission

Microsoft. 2024.User-mode Work Submission. https://learn.microsoft.com/en- us/windows-hardware/drivers/display/user-mode-work-submission

2024

-

[12]

NVIDIA. [n. d.].open-gpu-doc: Documentation of NVIDIA chip/hardware interfaces. https://github.com/NVIDIA/open-gpu-doc

-

[13]

NVIDIA. 2025. Open GPU Kernel Modules. GitHub repository. Version 580.105.08

2025

-

[14]

2026.CUDA C++ Programming Guide: Asynchronous Execution

NVIDIA Corporation. 2026.CUDA C++ Programming Guide: Asynchronous Execution. https://docs.nvidia.com/cuda/cuda-programming-guide/02-basics/ asynchronous-execution.html

2026

-

[15]

2026.NVIDIA Nsight Systems User Guide

NVIDIA Corporation. 2026.NVIDIA Nsight Systems User Guide. https://docs. nvidia.com/nsight-systems/UserGuide/index.html

2026

-

[16]

2026.Heterogeneous Memory Management (HMM)

The Linux Kernel community. 2026.Heterogeneous Memory Management (HMM). https://www.kernel.org/doc/html/latest/mm/hmm.html

2026

-

[17]

Shulai Zhang, Ao Xu, Quan Chen, Han Zhao, Weihao Cui, Zhen Wang, Yan Li, Limin Xiao, and Minyi Guo. 2025. Efficient performance-aware GPU sharing with compatibility and isolation through kernel space interception. InProceedings of the 2025 USENIX Conference on Usenix Annual Technical Conference (USENIX ATC ’25). USENIX Association, USA, Article 59, 17 pages

2025

-

[18]

Zhenkai Zhang, Tyler Allen, Fan Yao, Xing Gao, and Rong Ge. 2023. TunneLs for Bootlegging: Fully Reverse-Engineering GPU TLBs for Challenging Isolation Guarantees of NVIDIA MIG. InProceedings of the 2023 ACM SIGSAC Conference Revealing NVIDIA Closed-Source Driver Command Streams for CPU–GPU Runtime Behavior Insight xxx, 2026, xxx on Computer and Communica...

-

[19]

Shixuan Zhao, Zhongshu Gu, Salman Ahmed, Enriquillo Valdez, Hani Jamjoom, and Zhiqiang Lin. 2025. GPU Travelling: Efficient Confidential Collaborative Training with TEE-Enabled GPUs. InProceedings of the 2025 ACM SIGSAC Confer- ence on Computer and Communications Security (CCS ’25). Association for Com- puting Machinery, New York, NY, USA, 2653–2667. doi:...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.