Recognition: unknown

Tailwind: A Practical Framework for Query Accelerators

Pith reviewed 2026-05-07 05:37 UTC · model grok-4.3

The pith

Tailwind lets any relational database use custom accelerators without modifying its engine or optimizer.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Tailwind is an external query planner that sits atop any RDBMS supporting data import and export. Users define accelerators using abstract logical plans (ALPs), a new mostly-declarative abstraction over relational operators built on regular tree expressions. These ALPs let Tailwind automatically construct customized neural network models that estimate when a particular accelerator is beneficial. At runtime the planner transparently rewrites queries to execute across one or more accelerators when the models predict a net gain, otherwise falling back to the underlying RDBMS.

What carries the argument

Abstract logical plans (ALPs): mostly-declarative tree expressions over relational operators that enable automated training of per-accelerator neural network models for benefit prediction and transparent query rewriting.

If this is right

- Accelerators can be added, tested, and removed without any changes to the database engine or its optimizer.

- The same accelerator library works across different RDBMSes such as Redshift and DuckDB as long as they support data import and export.

- Execution can mix accelerated and non-accelerated paths within a single query when multiple accelerators are available.

- Engineering cost for specialized optimizations drops because the work of integration and correctness is handled outside the core system.

Where Pith is reading between the lines

- Database administrators could maintain private accelerator libraries tuned to their own data patterns without waiting for vendor support.

- If ALPs prove expressive enough, the approach could later support accelerators for more complex operations such as multi-way joins or window functions.

- Cloud environments where data movement between storage and compute is already cheap would be natural places to deploy this style of transparent acceleration.

Load-bearing premise

Users can write effective accelerators as abstract logical plans and the resulting neural network models can predict when an accelerator helps without adding unacceptable planning cost or prediction errors.

What would settle it

A workload of queries in which the neural models mispredict accelerator value often enough, or planning overhead exceeds gains often enough, that overall query times become slower than the unmodified RDBMS baseline.

Figures

read the original abstract

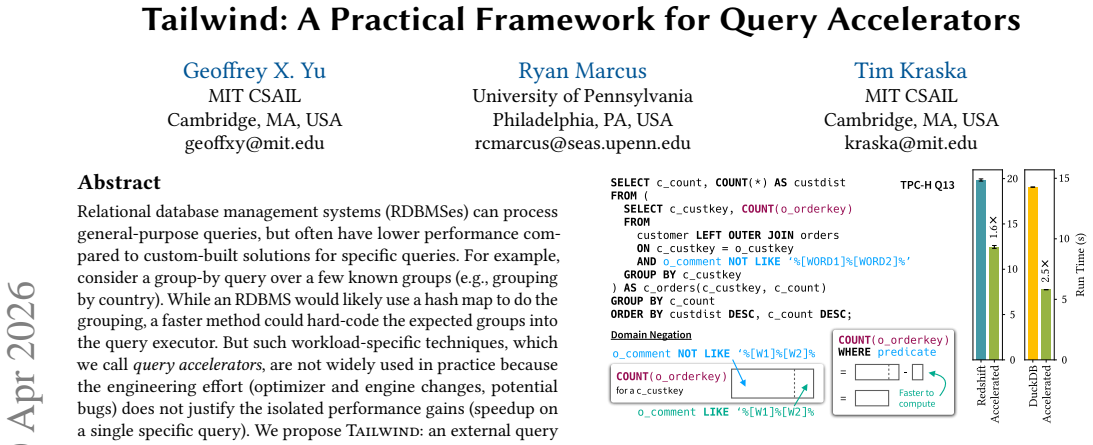

Relational database management systems (RDBMSes) can process general-purpose queries, but often have lower performance compared to custom-built solutions for specific queries. For example, consider a group-by query over a few known groups (e.g., grouping by country). While an RDBMS would likely use a hash map to do the grouping, a faster method could hard-code the expected groups into the query executor. But such workload-specific techniques, which we call query accelerators, are not widely used in practice because the engineering effort (optimizer and engine changes, potential bugs) does not justify the isolated performance gains (speedup on a single specific query). We propose Tailwind: an external query planner that brings accelerators into any RDBMS that supports data import/export. Users define their accelerators using abstract logical plans (ALPs): a new mostly-declarative abstraction over relational operators built on regular tree expressions. ALPs allow Tailwind to automatically build customized neural network models to estimate when using a particular accelerator is beneficial. At runtime, Tailwind sits atop an RDBMS and transparently rewrites queries to run across one or more accelerators when predicted to be beneficial, falling back to the underlying RDBMS when not. On Redshift and DuckDB with a library of four diverse accelerators, Tailwind accelerates TPC-H queries by 1.38x on average (up to 29x).

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper presents Tailwind, an external query planner that integrates workload-specific query accelerators into general-purpose RDBMSes (e.g., Redshift, DuckDB) supporting data import/export. Users define accelerators via Abstract Logical Plans (ALPs), a declarative abstraction over relational operators using regular tree expressions. Tailwind automatically generates neural-network models to predict accelerator benefit at runtime, transparently rewrites queries to use one or more accelerators when beneficial, and falls back to the RDBMS otherwise. With a library of four diverse accelerators, it reports 1.38x average (up to 29x) speedup on TPC-H queries.

Significance. If the empirical claims hold under rigorous controls, Tailwind would meaningfully reduce the engineering cost of deploying specialized accelerators by automating both the definition interface and the runtime decision logic. The ALP abstraction and automated NN construction are practical contributions that could influence optimizer design in production systems. The evaluation on real engines and a standard benchmark is a positive aspect, though the absence of detailed methodology, predictor metrics, and overhead measurements in the abstract limits assessment of whether the net speedups are robust.

major comments (3)

- [Abstract] Abstract: the central empirical claim of 1.38x average TPC-H speedup (and 29x peak) is presented without any description of experimental methodology, baselines, number of runs, variance, or controls for confounds. This information is load-bearing for verifying the reported gains and must be supplied with concrete numbers and tables.

- [Evaluation] Evaluation section: no numbers are given for the neural-network predictors' precision, recall, training-set size, false-positive rate, or isolated inference latency. Without these, it is impossible to confirm that mispredictions or predictor overhead do not erode or reverse the claimed speedups, directly addressing the stress-test concern.

- [§4] §4 (ALP and model construction): the claim that ALPs allow automatic, effective NN model building for arbitrary accelerators lacks concrete examples of ALP definitions, the resulting model architectures, or validation that the models generalize beyond the training workload. This is central to the 'practical framework' contribution.

minor comments (3)

- [§3] Clarify the exact interface and overhead of the transparent rewrite layer when multiple accelerators are available; a small diagram or pseudocode would help.

- [Abstract] The abstract states 'four diverse accelerators' but does not list them or their target query patterns; add this enumeration early in the paper.

- [Evaluation] Ensure all figures in the evaluation section include error bars or explicit variance reporting to support the average speedup numbers.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback, which identifies opportunities to strengthen the verifiability and concreteness of our presentation. We respond to each major comment below and will revise the manuscript accordingly to address the concerns while preserving the core contributions.

read point-by-point responses

-

Referee: [Abstract] Abstract: the central empirical claim of 1.38x average TPC-H speedup (and 29x peak) is presented without any description of experimental methodology, baselines, number of runs, variance, or controls for confounds. This information is load-bearing for verifying the reported gains and must be supplied with concrete numbers and tables.

Authors: We agree that the abstract would be improved by including a concise reference to the experimental methodology to support the reported speedups. The body of the manuscript (Section 5) already details the TPC-H benchmark, Redshift and DuckDB baselines, multiple runs per query to compute averages and variance, and controls such as isolating accelerator execution from data import/export overhead. We will revise the abstract to briefly note the setup (TPC-H on two engines, native RDBMS baselines, averaged results) and will ensure the evaluation section expands any tables with explicit variance and confound controls. revision: yes

-

Referee: [Evaluation] Evaluation section: no numbers are given for the neural-network predictors' precision, recall, training-set size, false-positive rate, or isolated inference latency. Without these, it is impossible to confirm that mispredictions or predictor overhead do not erode or reverse the claimed speedups, directly addressing the stress-test concern.

Authors: We acknowledge that explicit predictor metrics would directly address potential concerns about misprediction impact and overhead. While the manuscript describes the NN construction process and reports end-to-end gains, it does not isolate these metrics. In revision we will add a dedicated subsection to the Evaluation section reporting training-set sizes (generated from TPC-H query templates), precision/recall/false-positive rates on held-out queries, and measured inference latency (sub-millisecond and negligible relative to query execution). This will confirm that predictor overhead does not reverse the net speedups. revision: yes

-

Referee: [§4] §4 (ALP and model construction): the claim that ALPs allow automatic, effective NN model building for arbitrary accelerators lacks concrete examples of ALP definitions, the resulting model architectures, or validation that the models generalize beyond the training workload. This is central to the 'practical framework' contribution.

Authors: We agree that concrete examples would better substantiate the practicality of ALPs for automated NN construction. The current §4 presents the general ALP syntax and model-building pipeline but omits specific instances. We will revise §4 to include the exact ALP definitions (as regular tree expressions) for each of the four accelerators, the derived NN architectures (feature vectors from ALP nodes and layer configurations), and additional generalization results (e.g., accuracy on queries withheld from training and across scale factors). These additions will illustrate how ALPs enable effective, workload-agnostic model building. revision: yes

Circularity Check

No significant circularity in derivation or claims

full rationale

The paper is a system description of Tailwind, an external query planner using ALPs for accelerator definition and automatically trained neural networks for runtime benefit prediction. The primary result (1.38x average TPC-H speedup on Redshift/DuckDB) is an empirical measurement from benchmark runs, not a first-principles derivation or equation that reduces to its inputs by construction. No self-definitional steps, fitted parameters renamed as predictions, or load-bearing self-citations appear in the load-bearing claims. The NN training and prediction mechanism is a standard supervised learning setup whose accuracy is evaluated externally via the reported speedups rather than being tautological. This is a normal non-circular empirical systems paper.

Axiom & Free-Parameter Ledger

free parameters (1)

- Neural network weights and hyperparameters

axioms (1)

- domain assumption ALPs can capture performance-relevant structure of accelerators

invented entities (2)

-

Abstract Logical Plan (ALP)

no independent evidence

-

Neural network models for accelerator benefit estimation

no independent evidence

Reference graph

Works this paper leans on

-

[1]

Narasayya

Sanjay Agrawal, Surajit Chaudhuri, and Vivek R. Narasayya. 2000. Automated Selection of Materialized Views and Indexes in SQL Databases. In Proceedings of the 26th International Conference on Very Large Data Bases (VLDB ’00) . San Francisco, CA, USA, 496–505

2000

-

[2]

Alexander Aiken and Brian R. Murphy. 1991. Implementing Regular Tree Expres- sions. In Proceedings of the 5th ACM Conference on Functional Programming Lan- guages and Computer Architecture. Springer-Verlag, Berlin, Heidelberg, 427–447

1991

-

[3]

Amazon Web Services. 2025. Amazon EC2. https://aws.amazon.com/ec2/. Retrieved November 2025

2025

-

[4]

Amazon Web Services. 2025. Amazon Redshift. https://aws.amazon.com/ redshift/. Retrieved November 2025

2025

-

[5]

Amazon Web Services. 2025. Amazon S3. https://aws.amazon.com/s3/. Retrieved November 2025

2025

-

[6]

Amazon Web Services. 2026. SQL Commands - Amazon Redshift. https://docs. aws.amazon.com/redshift/latest/dg/c_SQL_commands.html

2026

-

[7]

Apache Software Foundation. 2026. Apache Arrow. https://arrow.apache.org

2026

-

[8]

Mowry, Jignesh M

Samuel Arch, Yuchen Liu, Todd C. Mowry, Jignesh M. Patel, and Andrew Pavlo

-

[9]

Proceedings of the VLDB Endowment 18, 1 (Sept

The Key to Effective UDF Optimization: Before Inlining, First Perform Outlining. Proceedings of the VLDB Endowment 18, 1 (Sept. 2024), 1–13. doi:10. 14778/3696435.3696436

-

[10]

Edmon Begoli, Jesús Camacho-Rodríguez, Julian Hyde, Michael J. Mior, and Daniel Lemire. 2018. Apache Calcite: A Foundational Framework for Optimized Query Processing Over Heterogeneous Data Sources. In Proceedings of the 2018 International Conference on Management of Data (Houston, TX, USA) (SIGMOD ’18). Association for Computing Machinery, New York, NY, ...

-

[11]

Ahlem Belabbaci, Hadda Cherroun, Loek Cleophas, and Djelloul Ziadi. 2018. Tree Pattern Matching From Regular Tree Expressions. Kybernetika 54, 2 (2018), 221–242

2018

-

[12]

Graham Bent, Patrick Dantressangle, David Vyvyan, Abbe Mowshowitz, and Valia Mitsou. 2008. A Dynamic Distributed Federated Database. In Proceedings of the 2nd Annual Conference on International Technology Alliance (ACITA ’08)

2008

-

[13]

Peter Boncz, Thomas Neumann, and Orri Erling. 2013. TPC-H analyzed: Hidden messages and lessons learned from an influential benchmark. In Technology Conference on Performance Evaluation and Benchmarking . Springer, 61–76

2013

-

[14]

Yuri Breitbart, Hector Garcia-Molina, and Abraham Silberschatz. 1992. Overview of Multidatabase Transaction Management. VLDB Journal 1 (10 1992), 181–239. doi:10.1145/1925805.1925811

-

[15]

Yuri Breitbart and Avi Silberschatz. 1988. Multidatabase Update Issues. In Pro- ceedings of the 1988 ACM SIGMOD International Conference on Management of Data (SIGMOD ’88). 135–142. doi:10.1145/50202.50217

-

[16]

Mihai Budiu, Tej Chajed, Frank McSherry, Leonid Ryzhyk, and Val Tannen. 2023. DBSP: Automatic Incremental View Maintenance for Rich Query Languages. Proc. VLDB Endow. 16, 7 (March 2023), 1601–1614. doi:10.14778/3587136.3587137

-

[17]

Chaudhuri, Mayur Datar, and Vivek Narasayya

Surajit. Chaudhuri, Mayur Datar, and Vivek Narasayya. 2004. Index Selection for Databases: A Hardness Study and A Principled Heuristic Solution. IEEE Transactions on Knowledge and Data Engineering 16, 11 (2004), 1313–1323. doi:10. 1109/TKDE.2004.75

2004

- [18]

-

[19]

Surajit Chaudhuri and Vivek Narasayya. 1998. AutoAdmin “what-if” index analysis utility. SIGMOD Rec. 27, 2 (June 1998), 367–378. doi:10.1145/276305. 276337

-

[20]

Douglas Comer. 1978. The Difficulty of Optimum Index Selection. ACM Trans. Database Syst. 3, 4 (Dec. 1978), 440–445. doi:10.1145/320289.320296

-

[21]

Hubert Comon, Max Dauchet, Rémi Gilleron, Florent Jacquemard, Denis Lugiez, Christof Löding, Sophie Tison, and Marc Tommasi. 2008. Tree Automata Tech- niques and Applications

2008

-

[22]

Bailu Ding, Sudipto Das, Ryan Marcus, Wentao Wu, Surajit Chaudhuri, and Vivek R. Narasayya. 2019. AI Meets AI: Leveraging Query Executions to Improve Index Recommendations. In 38th ACM Special Interest Group in Data Management (SIGMOD ’19). doi:10.1145/3299869.3324957

-

[23]

Jialin Ding, Ryan Marcus, Andreas Kipf, Vikram Nathan, Aniruddha Nrusimha, Kapil Vaidya, Alexander van Renen, and Tim Kraska. 2022. SageDB: An Instance- Optimized Data Analytics System. Proc. VLDB Endow. 15, 13 (Sept. 2022), 4062–4078. doi:10.14778/3565838.3565857

-

[24]

John Doner. 1970. Tree acceptors and some of their applications. Journal of Computer and System Sciences 4, 5 (1970), 406–451

1970

-

[25]

DuckDB. 2025. DuckDB Extensions. https://duckdb.org/docs/extensions/ working_with_extensions.html

2025

-

[26]

DuckDB Labs. 2025. DuckDB: An in-process SQL OLAP database management system. https://duckdb.org/. Retrieved November 2025

2025

-

[27]

Jennie Duggan, Ugur Cetintemel, Olga Papaemmanouil, and Eli Upfal. 2011. Performance Prediction for Concurrent Database Workloads. In Proceedings of the 2011 ACM SIGMOD International Conference on Management of Data (SIGMOD ’11). ACM, Athens, Greece, 337–348. doi:10.1145/1989323.1989359

-

[28]

John Feser, Sam Madden, Nan Tang, and Armando Solar-Lezama. 2020. Deductive Optimization of Relational Data Storage. Proc. ACM Program. Lang. 4, OOPSLA, Article 170 (Nov. 2020), 30 pages. doi:10.1145/3428238 13 Geoffrey X. Yu, Ryan Marcus, and Tim Kraska

-

[29]

Yannis Foufoulas, Alkis Simitsis, Lefteris Stamatogiannakis, and Yannis Ioan- nidis. 2022. YeSQL: "You extend SQL" with Rich and Highly Performant User- Defined Functions in Relational Databases. Proc. VLDB Endow. 15, 10 (June 2022), 2270–2283. doi:10.14778/3547305.3547328

-

[30]

Haralampos Gavriilidis, Kaustubh Beedkar, Jorge-Arnulfo Quiané-Ruiz, and Volker Markl. 2023. In-Situ Cross-Database Query Processing. In 2023 IEEE 39th International Conference on Data Engineering (ICDE ’23). IEEE, 2794–2807. doi:10.1109/ICDE55515.2023.00214

-

[31]

Dimitrios Georgakopoulos, Marek Rusinkiewicz, and Amit P. Sheth. 1991. On Serializability of Multidatabase Transactions Through Forced Local Conflicts. In Proceedings of the Seventh International Conference on Data Engineering (ICDE ’91). 314–323

1991

-

[32]

Jonathan Goldstein and Per-Åke Larson. 2001. Optimizing Queries Using Ma- terialized Views: A Practical, Scalable Solution. SIGMOD Rec. 30, 2 (May 2001), 331–342. doi:10.1145/376284.375706

-

[33]

Ian Goodfellow, Yoshua Bengio, and Aaron Courville. 2016. Deep Learning. MIT Press. http://www.deeplearningbook.org

2016

-

[34]

Google. 2026. Data definition language (DDL) statements in Goog- leSQL. https://docs.cloud.google.com/bigquery/docs/reference/standard-sql/ data-definition-language

2026

-

[35]

Goetz Graefe. 1995. The Cascades Framework for Query Optimization. IEEE Data Eng. Bull. 18, 3 (1995), 19–29

1995

-

[36]

Tim Gubner and Peter Boncz. 2022. Excalibur: A Virtual Machine for Adaptive Fine-grained JIT-Compiled Query Execution based on VOILA.Proc. VLDB Endow. 16, 4 (Dec. 2022), 829–841. doi:10.14778/3574245.3574266

-

[37]

Alon Y Halevy. 2001. Answering Queries Using Views: A Survey. The VLDB Journal 10 (2001), 270–294

2001

-

[38]

Benjamin Hilprecht and Carsten Binnig. 2022. Zero-Shot Cost Models for Out- of-the-box Learned Cost Prediction. Proceedings of the VLDB Endowment 15, 11 (2022), 2361–2374. https://www.vldb.org/pvldb/vol15/p2361-hilprecht.pdf

2022

-

[39]

Tin Kam Ho. 1995. Random Decision Forests. In Proceedings of 3rd International Conference on Document Analysis and Recognition , Vol. 1. IEEE, 278–282

1995

-

[40]

S.-Y. Hwang, E.-P. Lim, H.-R. Yang, S. Musukula, K. Mediratta, M. Ganesh, D. Clements, J. Stenoien, and J. Srivastava. 1994. The MYRIAD Federated Database Prototype. In Proceedings of the 1994 ACM SIGMOD International Conference on Management of Data (SIGMOD ’94). doi:10.1145/191839.191986

-

[41]

Vanja Josifovski, Peter Schwarz, Laura Haas, and Eileen Lin. 2002. Garlic: A New Flavor of Federated Query Processing for DB2. In Proceedings of the 2002 ACM SIGMOD International Conference on Management of Data (SIGMOD ’02) . 524–532

2002

-

[42]

Timo Kersten, Viktor Leis, Alfons Kemper, Thomas Neumann, Andrew Pavlo, and Peter Boncz. 2018. Everything you always wanted to know about compiled and vectorized queries but were afraid to ask. Proc. VLDB Endow. 11, 13 (Sept. 2018), 2209–2222. doi:10.14778/3275366.3284966

-

[43]

Abigale Kim, Marco Slot, David G. Andersen, and Andrew Pavlo. 2025. Anarchy in the Database: A Survey and Evaluation of Database Management System Extensibility. Proc. VLDB Endow. 18, 6 (Feb. 2025), 1962–1976. doi:10.14778/ 3725688.3725719

- [44]

- [45]

-

[46]

Ryan Marcus, Parimarjan Negi, Hongzi Mao, Nesime Tatbul, Mohammad Al- izadeh, and Tim Kraska. 2022. Bao: Making Learned Query Optimization Practi- cal. In Proceedings of the International Conference on Management of Data (SIG- MOD ’22)

2022

-

[47]

Ryan Marcus, Parimarjan Negi, Hongzi Mao, Chi Zhang, Mohammad Alizadeh, Tim Kraska, Olga Papaemmanouil, and Nesime Tatbul. 2019. Neo: A Learned Query Optimizer. Proceedings of the VLDB Endowment 12, 11 (2019)

2019

-

[48]

Ryan Marcus and Olga Papaemmanouil. 2019. Plan-Structured Deep Neural Network Models for Query Performance Prediction. Proceedings of the VLDB Endowment 12, 11 (2019), 1733–1746. doi:10.14778/3342263.3342646

-

[49]

Mowry, and Andrew Pavlo

Prashanth Menon, Amadou Ngom, Lin Ma, Todd C. Mowry, and Andrew Pavlo

- [50]

-

[51]

Mert Akdere and Ugur Cetintemel. 2012. Learning-based query performance modeling and prediction. In 2012 IEEE 28th International Conference on Data Engineering (ICDE ’12). IEEE, 390–401

2012

-

[52]

Microsoft Corporation. 2026. Microsoft Fabric Documentation. https://learn. microsoft.com/en-us/fabric/

2026

-

[53]

Sudarshan, and Krithi Ramamritham

Hoshi Mistry, Prasan Roy, S. Sudarshan, and Krithi Ramamritham. 2001. Ma- terialized view selection and maintenance using multi-query optimization. In Proceedings of the 2001 ACM SIGMOD International Conference on Management of Data (Santa Barbara, California, USA) (SIGMOD ’01). Association for Computing Machinery, New York, NY, USA, 307–318. doi:10.1145/...

-

[54]

Guido Moerkotte, Thomas Neumann, and Gabriele Steidl. 2009. Preventing bad plans by bounding the impact of cardinality estimation errors.Proc. VLDB Endow. 2, 1 (Aug. 2009), 982–993. doi:10.14778/1687627.1687738

-

[55]

Charles Gregory Nelson. 1980. Techniques for Program Verification. Ph. D. Dis- sertation. Stanford University

1980

-

[56]

Thomas Neumann. 2011. Efficiently Compiling Efficient Query Plans for Modern Hardware. Proceedings of the VLDB Endowment 4, 9 (2011), 539–550

2011

-

[57]

Robert Nieuwenhuis and Albert Oliveras. 2005. Proof-Producing Congruence Closure. In Term Rewriting and Applications, Jürgen Giesl (Ed.). Springer Berlin Heidelberg, Berlin, Heidelberg, 453–468

2005

-

[58]

Gregory Piatetsky-Shapiro. 1983. The optimal selection of secondary indices is NP-complete. SIGMOD Rec. 13, 2 (Jan. 1983), 72–75. doi:10.1145/984523.984530

-

[59]

PostgreSQL Global Development Group. 2025. PostgreSQL Extensions. https: //www.postgresql.org/docs/current/external-extensions.html

2025

-

[60]

Calton Pu. 1988. Superdatabases for Composition of Heterogeneous Databases. In Proceedings of the Fourth International Conference on Data Engineering (ICDE ’88) . 548–555

1988

-

[61]

Maximilian Rieger and Thomas Neumann. 2025. T3: Accurate and Fast Perfor- mance Prediction for Relational Database Systems With Compiled Decision Trees. Proc. ACM Manag. Data3, 3, Article 227 (June 2025), 27 pages. doi:10.1145/3725364

-

[62]

Bogdan Răducanu, Peter Boncz, and Marcin Zukowski. 2013. Micro Adaptivity in Vectorwise. In Proceedings of the 2013 ACM SIGMOD International Conference on Management of Data (SIGMOD ’13). Association for Computing Machinery, New York, NY, USA, 1231–1242. doi:10.1145/2463676.2465292

-

[63]

Tobias Schmidt, Andreas Kipf, Dominik Horn, Gaurav Saxena, and Tim Kraska

-

[64]

In Companion of the 2024 International Conference on Management of Data (SIGMOD ’24)

Predicate Caching: Query-Driven Secondary Indexing for Cloud Data Warehouses. In Companion of the 2024 International Conference on Management of Data (SIGMOD ’24). Association for Computing Machinery, New York, NY, USA, 347–359. doi:10.1145/3626246.3653395

-

[65]

Amazon Web Services. 2026. User-defined functions in Amazon Redshift. https: //docs.aws.amazon.com/redshift/latest/dg/user-defined-functions.html

2026

-

[66]

Amit P Sheth and James A Larson. 1990. Federated Database Systems for Manag- ing Distributed, Heterogeneous, and Autonomous Databases. ACM Computing Surveys (CSUR) 22, 3 (1990), 183–236

1990

-

[67]

Naughton

Amit Shukla, Prasad Deshpande, and Jeffrey F. Naughton. 1998. Materialized View Selection for Multidimensional Datasets. In Proceedings of the 24rd International Conference on Very Large Data Bases (VLDB ’98). Morgan Kaufmann Publishers Inc., San Francisco, CA, USA, 488–499

1998

-

[69]

Moritz Sichert and Thomas Neumann. 2022. User-defined operators: efficiently integrating custom algorithms into modern databases. Proc. VLDB Endow. 15, 5 (Jan. 2022), 1119–1131. doi:10.14778/3510397.3510408

-

[70]

Michael Sipser. 2021. Introduction to the Theory of Computation . Cengage

2021

-

[71]

Leonhard Spiegelberg, Rahul Yesantharao, Malte Schwarzkopf, and Tim Kraska

-

[72]

Tuplex: Data Science in Python at Native Code Speed. In Proceedings of the 2021 International Conference on Management of Data (Virtual Event, China) (SIG- MOD ’21). Association for Computing Machinery, New York, NY, USA, 1718–1731. doi:10.1145/3448016.3457244

- [73]

-

[74]

Sivaprasad Sudhir, Michael Cafarella, and Samuel Madden. 2021. Replicated layout for in-memory database systems. Proc. VLDB Endow. 15, 4 (Dec. 2021), 984–997. doi:10.14778/3503585.3503606

-

[75]

Ji Sun and Guoliang Li. 2019. An End-to-End Learning-based Cost Estimator. Proceedings of the VLDB Endowment 13, 3 (2019), 307–319. doi:10.14778/3368289. 3368296

-

[76]

Kanat Tangwongsan, Martin Hirzel, Scott Schneider, and Kun-Lung Wu. 2015. General Incremental Sliding-Window Aggregation. Proceedings of the VLDB Endowment 8, 7 (2015), 702–713

2015

-

[77]

Ross Tate, Michael Stepp, Zachary Tatlock, and Sorin Lerner. 2009. Equality Saturation: A New Approach to Optimization. SIGPLAN Not. 44, 1 (Jan. 2009), 264–276. doi:10.1145/1594834.1480915

-

[78]

J. W. Thatcher and J. B. Wright. 1968. Generalized finite automata theory with an application to a decision problem of second-order logic. Mathematical systems theory 2, 1 (1968), 57–81. doi:10.1007/BF01691346

-

[79]

Transaction Processing Performance Council. 2025. TPC-H Benchmark. https: //www.tpc.org/TPC_Documents_Current_Versions/pdf/TPC-H_v3.0.1.pdf

2025

-

[80]

Immanuel Trummer. 2023. Demonstrating GPT-DB: Generating Query-Specific and Customizable Code for SQL Processing with GPT-4. Proc. VLDB Endow. 16, 12 (Aug. 2023), 4098–4101. doi:10.14778/3611540.3611630

-

[81]

Alexander van Renen, Dominik Horn, Pascal Pfeil, Kapil Vaidya, Wenjian Dong, Murali Narayanaswamy, Zhengchun Liu, Gaurav Saxena, Andreas Kipf, and Tim Kraska. 2024. Why TPC is Not Enough: An Analysis of the Amazon Redshift Fleet. Proc. VLDB Endow. 17, 11 (July 2024), 3694–3706. doi:10.14778/3681954.3682031 14 Tailwind: A Practical Framework for Query Accelerators

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.