Recognition: unknown

Index-Assisted Stratified Sampling for Online Aggregation

Pith reviewed 2026-05-07 05:38 UTC · model grok-4.3

The pith

A two-phase index-assisted stratified sampling method reduces the samples needed for accurate online aggregation by optimizing strata from the first batch of draws.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

We design index-assisted stratified sampling for online aggregation, which features a two-phase sampling framework. Samples drawn from first phase are used for both online aggregation and optimizing future sampling cost, while the second phase continues the online aggregation using the optimized strata. We prove optimal stratification and sample size allocation strategies for index-based sampling cost model, and design several greedy and dynamic programming based optimization methods to balance optimization cost and effectiveness in cost reduction.

What carries the argument

The two-phase sampling framework that uses first-phase index-assisted samples to estimate stratum variances and selectivities, then applies proven optimal stratification and allocation rules under an index-based sampling cost model before completing the query in the second phase.

If this is right

- For data with high variance or low selectivity, the total number of index probes needed to reach a target error bound drops because strata receive sample sizes proportional to their measured standard deviation and cost.

- Query latency improves because fewer index accesses are performed overall; the paper reports consistent speedups and peaks of 3x versus index-assisted uniform sampling.

- The same first-phase samples serve dual purposes, so the overhead of optimization is paid only once per query rather than requiring separate statistics collection.

- The greedy and dynamic-programming planners let users trade a small extra optimization cost for larger reductions in sampling cost depending on query selectivity.

Where Pith is reading between the lines

- The dual-use of early samples suggests a general pattern for any sampling-based estimator that can afford a small pilot phase: use the pilot both for the estimate and to tune the sampling plan for the remainder.

- Because the cost model is tied to index probes rather than rows scanned, the same two-phase logic could be tested on other index structures such as B-trees or inverted indexes without changing the optimization proofs.

- If the first phase is kept small, the method remains compatible with streaming updates that arrive between phases, provided the index can reflect those updates before the second phase begins.

Load-bearing premise

Samples from the first phase give sufficiently accurate estimates of per-stratum variances and selectivities to let the second-phase allocation actually reach the theoretically lower sampling cost without introducing bias into the final aggregate or its confidence bounds.

What would settle it

Execute the two-phase method on a dataset whose value distribution or selectivity changes sharply after the first phase is collected and check whether the final confidence interval still covers the true aggregate at the claimed rate and whether the total sample count is smaller than uniform index sampling would have required.

Figures

read the original abstract

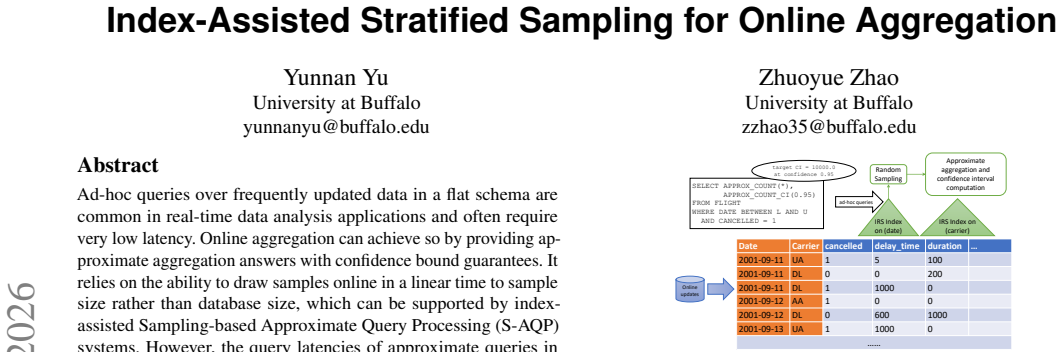

Ad-hoc queries over frequently updated data in a flat schema are common in real-time data analysis applications and often require very low latency. Online aggregation can achieve so by providing approximate aggregation answers with confidence bound guarantees. It relies on the ability to draw samples online in a linear time to sample size rather than database size, which can be supported by index-assisted Sampling-based Approximate Query Processing (S-AQP) systems. However, the query latencies of approximate queries in these systems can still suffer from excessive sampling cost required to achieve a desired confidence bound, due to increased sample size for data with high variance in value distribution and selectivity. Classic stratified sampling methods with Neyman allocation can minimize sample size in theory, but several challenges prevent it from being applicable in index-assisted S-AQP systems, including requiring apriori statistics, high optimization cost, and inaccurate sampling cost model based on sample size. Towards that, we design index-assisted stratified sampling for online aggregation, which features a two-phase sampling framework. Samples drawn from first phase are used for both online aggregation and optimizing future sampling cost, while the second phase continues the online aggregation using the optimized strata. We prove optimal stratification and sample size allocation strategies for index-based sampling cost model, and design several greedy and dynamic programming based optimization methods to balance optimization cost and effectiveness in cost reduction. We evaluate our methods on several real-world and synthetic datasets and queries, and the results show ours consistently achieve good speedup and, in extreme cases, up to 3x speedup and 98708x speedup, when compared to index-assisted uniform sampling and classic scan-based stratified sampling respectively.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes index-assisted stratified sampling for online aggregation over frequently updated flat-schema data. It introduces a two-phase framework in which phase-1 samples contribute to the running aggregate estimate while also being used to select strata boundaries and Neyman-style allocations that minimize an index-based sampling cost model for phase 2. The authors prove optimal stratification and allocation strategies under this cost model, present greedy and dynamic-programming algorithms to trade optimization overhead against cost reduction, and report empirical speedups of up to 3× versus index-assisted uniform sampling and up to 98 708× versus scan-based stratified sampling on real and synthetic workloads.

Significance. If the statistical validity of the final estimator is preserved, the work would meaningfully advance practical online aggregation in index-assisted S-AQP systems by removing the need for a priori statistics while still exploiting stratification. The explicit treatment of an index-specific cost model, the accompanying optimality proofs, and the provision of multiple optimization algorithms that balance overhead against benefit constitute clear technical contributions. The reported speedups, if reproducible and free of post-hoc selection, would indicate substantial latency gains for low-latency ad-hoc analytics.

major comments (3)

- [two-phase sampling framework] The two-phase sampling framework (abstract and the description of the method) uses the same phase-1 draws both for the aggregate estimate and for data-dependent estimation of strata boundaries and allocations. Standard sampling theory shows that the resulting design is adaptive; the usual Neyman variance formula and the associated confidence intervals are then invalid unless an explicit correction (Horvitz–Thompson weights, post-stratification, or conditional inference) is applied. The manuscript must state precisely how unbiasedness and valid confidence bounds are maintained and must supply the corresponding derivation or reference. This issue is load-bearing for the headline claim of “confidence bound guarantees.”

- [proofs of optimal stratification and sample size allocation] The proofs of optimal stratification and sample-size allocation (abstract) are stated for the index-based cost model under the assumption that strata and stratum variances are known a priori. Because the method estimates these quantities from the phase-1 sample, the optimality result does not directly apply. The paper should either (a) prove that the estimated design remains asymptotically optimal or (b) quantify the sub-optimality gap and show that the reported speedups survive this gap.

- [experimental evaluation] Table or experimental section reporting the 98 708× speedup versus scan-based stratified sampling: the magnitude is extreme and depends on the interplay between data distribution, selectivity, and the index cost model. The manuscript must document the exact data-exclusion rules, the distribution of per-stratum variances, and the number of strata chosen, and must include an ablation that isolates the contribution of the index cost model versus the adaptive stratification itself.

minor comments (3)

- [Abstract] Abstract: “apriori” should be “a priori”; “ours consistently achieve good speedup” should be rephrased for grammatical correctness.

- [method description] The free parameters “number of strata” and “stratum boundaries” are listed in the axiom ledger; the manuscript should state explicitly how these are chosen in the reported experiments and whether they are treated as user-specified or internally optimized.

- [optimization methods] The optimization-cost versus effectiveness trade-off is mentioned but not quantified; a plot or table showing cumulative optimization time versus total query latency for the greedy and DP variants would improve clarity.

Simulated Author's Rebuttal

We thank the referee for the thorough review and valuable suggestions. We have carefully considered each major comment and provide point-by-point responses below. Where revisions are needed, we outline the changes to be made in the revised manuscript.

read point-by-point responses

-

Referee: [two-phase sampling framework] The two-phase sampling framework (abstract and the description of the method) uses the same phase-1 draws both for the aggregate estimate and for data-dependent estimation of strata boundaries and allocations. Standard sampling theory shows that the resulting design is adaptive; the usual Neyman variance formula and the associated confidence intervals are then invalid unless an explicit correction (Horvitz–Thompson weights, post-stratification, or conditional inference) is applied. The manuscript must state precisely how unbiasedness and valid confidence bounds are maintained and must supply the corresponding derivation or reference. This issue is load-bearing for the headline claim of “confidence bound guarantees.”

Authors: We appreciate the referee highlighting this critical statistical issue. The design is adaptive, and the manuscript's current presentation assumes the standard Neyman allocation applies directly to the combined sample. To correct this, we will revise the paper to clarify that the final estimator is unbiased because it is a stratified sample with fixed (though estimated) strata, using the combined phase-1 and phase-2 observations. For variance estimation and confidence intervals, we will incorporate a post-stratification adjustment and use the estimated stratum variances from the full sample, with a reference to literature on inference in adaptive sampling designs (such as conditional inference given the phase-1 data). We will add a formal derivation in an appendix showing that the bias is zero and the variance estimator is consistent. This addresses the validity of the confidence bounds. We will make these changes in the revised version. revision: yes

-

Referee: [proofs of optimal stratification and sample size allocation] The proofs of optimal stratification and sample-size allocation (abstract) are stated for the index-based cost model under the assumption that strata and stratum variances are known a priori. Because the method estimates these quantities from the phase-1 sample, the optimality result does not directly apply. The paper should either (a) prove that the estimated design remains asymptotically optimal or (b) quantify the sub-optimality gap and show that the reported speedups survive this gap.

Authors: We thank the referee for this precise comment. The proofs establish the optimal strata boundaries and Neyman-style allocation that minimize the index-based sampling cost when the stratum variances are known. Since the method uses phase-1 samples to estimate these, the achieved allocation is approximate. In the revision, we will add a subsection analyzing the asymptotic optimality: as the phase-1 sample size n1 grows (with n1 a fixed fraction of total n), the estimated variances converge in probability to the true ones by the law of large numbers, and thus the selected strata and allocations converge to the optimal, implying asymptotic optimality of the cost. Additionally, we will report new experiments comparing the estimated design to an oracle design with true variances, showing that the speedup gap is small (e.g., less than 10% loss) and the reported speedups largely survive. This will be included in the experimental evaluation section. revision: yes

-

Referee: [experimental evaluation] Table or experimental section reporting the 98 708× speedup versus scan-based stratified sampling: the magnitude is extreme and depends on the interplay between data distribution, selectivity, and the index cost model. The manuscript must document the exact data-exclusion rules, the distribution of per-stratum variances, and the number of strata chosen, and must include an ablation that isolates the contribution of the index cost model versus the adaptive stratification itself.

Authors: We agree that the large speedup figure warrants detailed documentation to allow readers to understand the conditions and to verify the contributions. In the revised manuscript, we will expand the experimental section as follows: include a table or paragraph detailing the data-exclusion rules (e.g., removal of records with null values or extreme outliers beyond 3 standard deviations); provide supplementary figures or tables showing the per-stratum variance distributions for key datasets and queries; specify the number of strata used in each experiment and the method for choosing it (e.g., via the optimization algorithm); and add an ablation study with four variants: (i) index-assisted uniform sampling, (ii) adaptive stratification with sample-size cost model, (iii) fixed strata with index cost model, and (iv) our full method. This will isolate the impact of the index cost model versus the adaptive stratification. We believe these additions will make the results more transparent and reproducible. revision: yes

Circularity Check

No circularity: optimality proof is independent of phase-1 estimates

full rationale

The paper derives and proves optimal stratification and Neyman-style allocation under an explicit index-based sampling cost model; this is a mathematical result on the model parameters (assumed known). The two-phase framework then applies those derived optima by estimating strata boundaries and allocations from phase-1 samples, which also contribute to the running aggregate. This is an implementation choice, not a reduction of the proof itself to fitted quantities by construction. The greedy and dynamic-programming methods are algorithmic approximations that trade off computation against the proven optimum; they do not redefine the optimum in terms of the same data. No equation or claim equates a prediction to its own input, and no self-citation chain is invoked to justify the core result. The derivation chain therefore remains self-contained against external benchmarks.

Axiom & Free-Parameter Ledger

free parameters (2)

- number of strata

- stratum boundaries

axioms (2)

- domain assumption Index access cost is linear in the number of sampled rows and can be modeled independently of data values.

- domain assumption First-phase samples are representative enough to optimize second-phase allocation without bias.

Reference graph

Works this paper leans on

-

[1]

Borui Cai, Yong Xiang, Longxiang Gao, He Zhang, Yunfeng Li, and Jianxin Li

2008. Data Expo 2009: Airline on time data. https://doi.org/10.7910/DVN/ HG7NV7

-

[2]

Phillips

Peyman Afshani and Jeff M. Phillips. 2019. Independent Range Sampling, Revis- ited Again. InSoCG 2019. 4:1–4:13

2019

-

[3]

Peyman Afshani and Zhewei Wei. 2017. Independent Range Sampling, Revisited. InESA 2017. 3:1–3:14

2017

-

[4]

Sameer Agarwal, Barzan Mozafari, Aurojit Panda, Henry Milner, Samuel Madden, and Ion Stoica. 2013. BlinkDB: queries with bounded errors and bounded response times on very large data. InEuroSys. 29–42

2013

-

[5]

Billingsley

Patrick. Billingsley. 2012.Probability and measure(anniversary edition. ed.). Wiley, Hoboken, N.J. 12

2012

-

[6]

Peter Bodik, Wei Hong, Carlos Guestrin, Sam Madden, Mark Paskin, and Romain Thibaux. 2004. Intel Lab Data. https://db.csail.mit.edu/labdata/labdata.html

2004

-

[7]

Surajit Chaudhuri, Gautam Das, and Vivek Narasayya. 2007. Optimized stratified sampling for approximate query processing.ACM Trans. Database Syst.32, 2 (June 2007), 9–es. https://doi.org/10.1145/1242524.1242526

-

[8]

Bolin Ding, Silu Huang, Surajit Chaudhuri, Kaushik Chakrabarti, and Chi Wang

-

[9]

InSIGMOD ’16

Sample + Seek: Approximating Aggregates with Distribution Precision Guarantee. InSIGMOD ’16. 679–694

-

[10]

Rong Gu, Han Li, Haipeng Dai, Wenjie Huang, Jie Xue, Meng Li, Jiaqi Zheng, Haoran Cai, Yihua Huang, and Guihai Chen. 2023. ShadowAQP: Efficient Approximate Group-by and Join Query via Attribute-Oriented Sample Size Allo- cation and Data Generation.Proc. VLDB Endow.16, 13 (Sept. 2023), 4216–4229. https://doi.org/10.14778/3625054.3625059

-

[11]

Peter J. Haas. 1997. Large-Sample and Deterministic Confidence Intervals for Online Aggregation. InSSDBM ’97. 51–63

1997

-

[12]

J. M. Hellerstein, P. J. Haas, and H. J. Wang. 1997. Online Aggregation. InProc. ACM SIGMOD International Conference on Management of Data

1997

-

[13]

Benjamin Hilprecht, Andreas Schmidt, Moritz Kulessa, Alejandro Molina, Kris- tian Kersting, and Carsten Binnig. 2020. DeepDB: Learn from Data, Not from Queries!Proc. VLDB Endow.13, 7 (mar 2020), 992–1005. https: //doi.org/10.14778/3384345.3384349

-

[14]

D. G. Horvitz and D. J. Thompson. 1952. A generalization of sampling without replacement from a finite universe.J. Amer. Statist. Assoc.47 (1952), 663–685

1952

-

[15]

Xiaocheng Hu, Miao Qiao, and Yufei Tao. 2014. Independent Range Sampling. InPODS ’14. 246–255

2014

-

[16]

Saehan Jo and Immanuel Trummer. 2020. BitGourmet: Deterministic Approxima- tion via Optimized Bit Selection. In10th Conference on Innovative Data Systems Research, CIDR 2020, Amsterdam, The Netherlands, January 12-15, 2020, Online Proceedings. www.cidrdb.org. http://cidrdb.org/cidr2020/papers/p5-jo-cidr20.pdf

2020

-

[17]

Saehan Jo and Immanuel Trummer. 2020. Demonstration of BitGourmet: Data Analysis via Deterministic Approximation. InProceedings of the 2020 Interna- tional Conference on Management of Data, SIGMOD Conference 2020, online conference [Portland, OR, USA], June 14-19, 2020, David Maier, Rachel Pot- tinger, AnHai Doan, Wang-Chiew Tan, Abdussalam Alawini, and H...

-

[18]

Srikanth Kandula. 2020. A Parallel Zipf-skewed Data Generator for TPC-H Benchmark. Retrieved July, 2023 from https://github.com/SrikanthKandula/ tpch_dbgen_zipf_skew

2020

-

[19]

Srikanth Kandula, Anil Shanbhag, Aleksandar Vitorovic, Matthaios Olma, Robert Grandl, Surajit Chaudhuri, and Bolin Ding. 2016. Quickr: Lazily Approximating Complex AdHoc Queries in BigData Clusters. InSIGMOD ’16. 631–646

2016

-

[20]

Ron Kohavi. 1996. Census Income. UCI Machine Learning Repository. DOI: https://doi.org/10.24432/C5GP7S

-

[21]

Feifei Li, Bin Wu, Ke Yi, and Zhuoyue Zhao. 2016. Wander Join: Online Aggre- gation via Random Walks. InProceedings of the 2016 International Conference on Management of Data(San Francisco, California, USA)(SIGMOD ’16). ACM, New York, NY , USA, 615–629. https://doi.org/10.1145/2882903.2915235

-

[22]

Xi Liang, Stavros Sintos, Zechao Shang, and Sanjay Krishnan. 2021. Combining Aggregation and Sampling (Nearly) Optimally for Approximate Query Processing. InProceedings of the 2021 International Conference on Management of Data (Virtual Event, China)(SIGMOD ’21). Association for Computing Machinery, New York, NY , USA, 1129–1141. https://doi.org/10.1145/3...

-

[23]

Qingzhi Ma and Peter Triantafillou. 2019. DBEst: Revisiting Approximate Query Processing Engines with Machine Learning Models. InSIGMOD ’19. 1553–1570

2019

-

[24]

F. Olken. 1993.Random Sampling from Databases. Ph.D. Dissertation. University of California at Berkeley

1993

-

[25]

Yongjoo Park, Barzan Mozafari, Joseph Sorenson, and Junhao Wang. 2018. VerdictDB: Universalizing Approximate Query Processing. InSIGMOD ’18. 1461–1476

2018

-

[26]

Jinglin Peng, Dongxiang Zhang, Jiannan Wang, and Jian Pei. 2018. AQP++: Connecting Approximate Query Processing With Aggregate Precomputation for Interactive Analytics. InSIGMOD ’18. 1477–1492

2018

-

[27]

Douglas B. Rumbaugh and Dong Xie. 2023. Practical Dynamic Extension for Sampling Indexes.Proc. ACM Manag. Data1, 4, Article 254 (dec 2023), 26 pages. https://doi.org/10.1145/3626744

-

[28]

Korth, and S

Avi Silberschatz, Henry F. Korth, and S. Sudarshan. 2020.Database System Concepts, Seventh Edition. McGraw-Hill Book Company. https://www.db- book.com/

2020

-

[29]

Yufei Tao. 2022. Algorithmic Techniques for Independent Query Sampling. In Proceedings of the 41st ACM SIGMOD-SIGACT-SIGAI Symposium on Princi- ples of Database Systems(Philadelphia, PA, USA)(PODS ’22). Association for Computing Machinery, New York, NY , USA, 129–138. https://doi.org/10.1145/ 3517804.3526068

- [30]

-

[31]

Phillips, Michael Matheny, and Feifei Li

Dong Xie, Jeff M. Phillips, Michael Matheny, and Feifei Li. 2021. Spatial Independent Range Sampling. InProceedings of the 2021 International Con- ference on Management of Data(Virtual Event, China)(SIGMOD ’21). Asso- ciation for Computing Machinery, New York, NY , USA, 2023–2035. https: //doi.org/10.1145/3448016.3452806

-

[32]

Zongheng Yang, Amog Kamsetty, Sifei Luan, Eric Liang, Yan Duan, Xi Chen, and Ion Stoica. 2020. NeuroCard: One Cardinality Estimator for All Tables. Proc. VLDB Endow.14, 1 (sep 2020), 61–73. https://doi.org/10.14778/3421424. 3421432

-

[33]

Edward A. Youngs and Elliot M. Cramer. 1971. Some Results Relevant to Choice of Sum and Sum-of-Product Algorithms.Technometrics13, 3 (1971), 657–665. https://doi.org/10.1080/00401706.1971.10488826

-

[34]

Fangyuan Zhang, Mengxu Jiang, and Sibo Wang. 2023. Efficient Dynamic Weighted Set Sampling and Its Extension.Proc. VLDB Endow.17, 1 (Sept. 2023), 15–27. https://doi.org/10.14778/3617838.3617840

-

[35]

Zhuoyue Zhao, Dong Xie, and Feifei Li. 2022. AB-Tree: Index for Concur- rent Random Sampling and Updates.Proc. VLDB Endow.15, 9 (may 2022), 1835–1847. https://doi.org/10.14778/3538598.3538606

-

[36]

Yuxuan Zhu, Tengjun Jin, Stefanos Baziotis, Chengsong Zhang, Charith Mendis, and Daniel Kang. 2025. PilotDB: Database-Agnostic Online Approximate Query Processing with A Priori Error Guarantees.Proc. ACM Manag. Data3, 3, Article 198 (June 2025), 28 pages. https://doi.org/10.1145/3725335 13

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.