Recognition: unknown

Autoformalizing Memory Specifications with Agents

Pith reviewed 2026-05-09 20:39 UTC · model grok-4.3

The pith

AI agents translate natural language DRAM specifications into formal DRAMPyML for verification tasks.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Our method automatically formalizes natural language memory chip specifications, for industry relevant Dynamic Random Access Memory (DRAM) standards, into a formal representation called DRAMPyML that can be used for downstream DV tasks like the generation of SystemVerilog assertions, stimulus, and functional coverage. We also release our benchmarking dataset, DRAMBench, which can be used to evaluate the evolution of model capabilities at hardware autoformalization.

What carries the argument

Multi-agent AI pipelines that parse natural language DRAM documents to generate DRAMPyML, a formal executable model of memory behavior and timing rules.

If this is right

- DRAMPyML models allow direct, automated generation of SystemVerilog assertions that check design compliance.

- Test stimulus and coverage points can be derived mechanically from the same formal representation.

- DRAMBench provides a reusable test set for comparing different autoformalization approaches over time.

- The overall process reduces the manual translation step between specification documents and verification environments.

Where Pith is reading between the lines

- The same agent approach could be tested on specifications for other hardware components such as processors or interconnects.

- Maintaining DRAMPyML as standards evolve might reduce the cost of re-verifying updated designs.

- If the method scales, it could surface ambiguities in natural language specs earlier in the design cycle.

- Integration of DRAMPyML with existing simulation and formal tools would create more complete automated flows.

Load-bearing premise

AI agents can reliably capture the full complexity and all edge cases of real DRAM specifications without introducing semantic errors or requiring extensive human correction.

What would settle it

Expert manual comparison of agent-produced DRAMPyML against the original specification documents for a standard like DDR5, checking whether timing parameters, command sequences, and state transitions are represented completely and accurately.

Figures

read the original abstract

The primary goal of Design Verification (DV) is to ensure that a proposed chip design implementation (either in code, or physical form) exactly matches its specification and is free of functional errors in order to avoid costly re-designs. Achieving this often demands extensive manual interpretation, translating the specification document into a formal, testable representation. While AI has made progress in DV, current approaches typically focus on narrow, isolated tasks rather than full end-to-end specification compliance of modern chip designs, failing to capture the complexity of real-world verification. Our method automatically formalizes natural language memory chip specifications, for industry relevant Dynamic Random Access Memory (DRAM) standards, into a formal representation called DRAMPyML that can be used for downstream DV tasks like the generation of SystemVerilog assertions, stimulus, and functional coverage. We also release our benchmarking dataset, DRAMBench, which can be used to evaluate the evolution of model capabilities (and new approaches) at hardware autoformalization.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper describes an agent-based pipeline that translates natural-language DRAM memory specifications (e.g., JEDEC standards) into a formal intermediate representation called DRAMPyML; this representation is then used to generate SystemVerilog assertions, stimulus, and functional coverage. The authors also release the DRAMBench dataset to benchmark future autoformalization methods.

Significance. If the generated DRAMPyML representations are shown to be semantically faithful to the source specifications and to capture the full range of timing, protocol, and edge-case constraints, the approach would address a major manual bottleneck in hardware design verification. The public DRAMBench benchmark would further enable reproducible progress measurement in AI-assisted formalization of industrial hardware specs.

major comments (3)

- The central claim that DRAMPyML outputs are directly usable for industry DV tasks rests on unverified semantic equivalence to the original JEDEC-style documents. No formal semantics for DRAMPyML, no automated equivalence checker, and no expert review of real multi-page constraints (e.g., tREFI scheduling or command ordering) are described; downstream SystemVerilog generation therefore inherits any undetected translation errors.

- Evaluation details are insufficient to support the claim of reliable autoformalization. The manuscript reports only high-level pass/fail outcomes on DRAMBench prompts rather than quantitative metrics (precision/recall against human-authored ground truth, coverage of edge cases, or inter-annotator agreement with verification engineers).

- The weakest assumption—that LLM agents can capture the full complexity of DRAM timing tables and multi-page protocol rules without human correction—is not stress-tested. No ablation on prompt engineering, agent architecture, or failure modes for ambiguous natural-language phrasing is provided.

minor comments (2)

- Clarify the exact syntax and semantics of DRAMPyML early in the paper so readers can assess its expressiveness before seeing the generation results.

- Add a table comparing DRAMBench examples to the corresponding JEDEC sections they derive from, including any manual corrections applied.

Simulated Author's Rebuttal

We thank the referee for the thoughtful and detailed review of our manuscript. We address each major comment below with clarifications on the current scope of the work and indicate where revisions will strengthen the presentation.

read point-by-point responses

-

Referee: The central claim that DRAMPyML outputs are directly usable for industry DV tasks rests on unverified semantic equivalence to the original JEDEC-style documents. No formal semantics for DRAMPyML, no automated equivalence checker, and no expert review of real multi-page constraints (e.g., tREFI scheduling or command ordering) are described; downstream SystemVerilog generation therefore inherits any undetected translation errors.

Authors: We agree that establishing semantic equivalence is essential for strong claims of direct industrial usability. The manuscript demonstrates that DRAMPyML representations enable downstream generation of SystemVerilog assertions, stimulus, and coverage that align with the source specifications, as evaluated on DRAMBench. However, we do not provide formal semantics for DRAMPyML, an automated equivalence checker, or expert reviews of complex multi-page constraints such as tREFI scheduling. This is a genuine limitation of the present work, whose primary focus is the agent pipeline and benchmark release rather than exhaustive semantic validation. In the revised manuscript we will add a dedicated discussion subsection that (i) explicitly acknowledges the absence of formal semantics and equivalence checking, (ii) provides concrete translation examples for timing and protocol constraints including tREFI and command ordering, and (iii) states that human verification remains advisable for production DV use. We will also outline planned future work on formal semantics. revision: partial

-

Referee: Evaluation details are insufficient to support the claim of reliable autoformalization. The manuscript reports only high-level pass/fail outcomes on DRAMBench prompts rather than quantitative metrics (precision/recall against human-authored ground truth, coverage of edge cases, or inter-annotator agreement with verification engineers).

Authors: We accept that the current evaluation section reports primarily aggregate pass/fail rates. DRAMBench does contain human-authored ground truth for a subset of prompts. In the revision we will expand the evaluation to report precision and recall against this ground truth, quantitative edge-case coverage statistics, and inter-annotator agreement figures from the dataset construction process. These additions will provide a more granular and reproducible assessment of autoformalization quality. revision: yes

-

Referee: The weakest assumption—that LLM agents can capture the full complexity of DRAM timing tables and multi-page protocol rules without human correction—is not stress-tested. No ablation on prompt engineering, agent architecture, or failure modes for ambiguous natural-language phrasing is provided.

Authors: We concur that the robustness of the agent approach to complex DRAM specifications merits further empirical examination. The revised manuscript will include an ablation study covering (i) alternative prompt-engineering strategies, (ii) variations in agent architecture (single-agent versus multi-agent configurations and tool-use patterns), and (iii) a categorized error analysis of failure modes, with particular attention to ambiguous natural-language phrasing and multi-page timing tables. These results will be presented alongside the existing DRAMBench outcomes. revision: yes

Circularity Check

No circularity: applied pipeline description with no derivations or self-referential predictions

full rationale

The paper presents an agent pipeline for translating natural-language DRAM specs into DRAMPyML and releasing DRAMBench; it contains no equations, fitted parameters, predictions of derived quantities, or load-bearing self-citations. The central claim is an empirical description of an applied method whose validity rests on external evaluation of the generated artifacts rather than any internal reduction to its own inputs. No steps match the enumerated circularity patterns.

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

-

[1]

Scaling Learning Algorithms Towards

Bengio, Yoshua and LeCun, Yann , booktitle =. Scaling Learning Algorithms Towards

-

[2]

and Osindero, Simon and Teh, Yee Whye , journal =

Hinton, Geoffrey E. and Osindero, Simon and Teh, Yee Whye , journal =. A Fast Learning Algorithm for Deep Belief Nets , volume =

-

[3]

2016 , publisher=

Deep learning , author=. 2016 , publisher=

2016

-

[4]

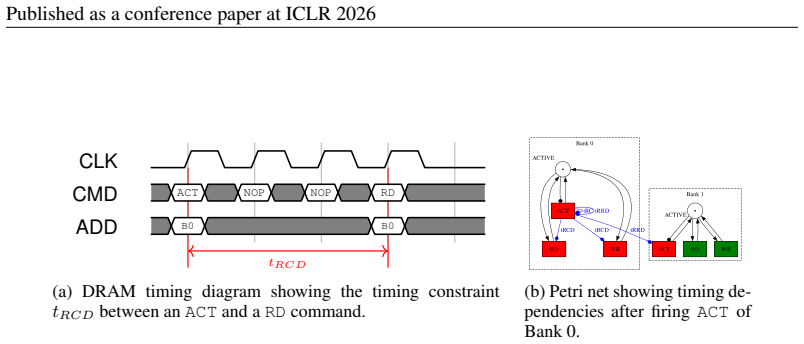

DRAMPyML: A Formal Description of DRAM Protocols with Timed Petri Nets , venue =

Christ, Derek and Zimmermann, Thomas and Barbie, Philippe and Saberi, Dmitri and Yin, Yao and Jung, Matthias , biburl =. DRAMPyML: A Formal Description of DRAM Protocols with Timed Petri Nets , venue =. Design and Verification Conference and Exhibition (DVCON) Europe 2025 , eventdate =

2025

-

[6]

2024 , eprint=

AssertLLM: Generating and Evaluating Hardware Verification Assertions from Design Specifications via Multi-LLMs , author=. 2024 , eprint=

2024

-

[7]

2010 , publisher =

The Power of Assertions in SystemVerilog , author =. 2010 , publisher =

2010

-

[8]

Flipping bits in memory without accessing them: an experimental study of dram disturbance errors,

Kim, Yoongu and Daly, Ross and Kim, Jeremie and Fallin, Chris and Lee, Ji Hye and Lee, Donghyuk and Wilkerson, Chris and Lai, Konrad and Mutlu, Onur , title =. SIGARCH Comput. Archit. News , month = jun, pages =. 2014 , issue_date =. doi:10.1145/2678373.2665726 , abstract =

-

[9]

Proceeding of the 41st Annual International Symposium on Computer Architecuture , pages =

Kim, Yoongu and Daly, Ross and Kim, Jeremie and Fallin, Chris and Lee, Ji Hye and Lee, Donghyuk and Wilkerson, Chris and Lai, Konrad and Mutlu, Onur , title =. Proceeding of the 41st Annual International Symposium on Computer Architecuture , pages =. 2014 , isbn =

2014

-

[11]

, booktitle=

Foster, Harry D. , booktitle=. Trends in functional verification: A 2014 industry study , year=

2014

-

[12]

and Ren, Haoxing , booktitle=

Kang, Minwoo and Liu, Mingjie and Hamad, Ghaith Bany and Suhaib, Syed M. and Ren, Haoxing , booktitle=. FVEval: Understanding Language Model Capabilities in Formal Verification of Digital Hardware , year=

-

[16]

ICLR Workshop on AI and Formal Methods , year =

An Evaluation Benchmark for Autoformalization in Lean4 , author =. ICLR Workshop on AI and Formal Methods , year =

-

[17]

2025 , eprint=

FLAG: Formal and LLM-assisted SVA Generation for Formal Specifications of On-Chip Communication Protocols , author=. 2025 , eprint=

2025

-

[18]

2025 , eprint=

DeepSeek-V3.2: Pushing the Frontier of Open Large Language Models , author=. 2025 , eprint=

2025

-

[22]

2012 , publisher =

SystemVerilog for Verification , author =. 2012 , publisher =

2012

-

[24]

Quantitative Deadlock Analysis in Petri Nets using Inconsistency Measures , year=

Unruh, Elina and Delfmann, Patrick and Thimm, Matthias , booktitle=. Quantitative Deadlock Analysis in Petri Nets using Inconsistency Measures , year=

-

[25]

Yunsheng Bai , Ghaith Bany Hamad , Syed Suhaib , and Haoxing Ren . AssertionForge: Enhancing Formal Verification Assertion Generation with Structured Representation of Specifications and RTL . arXiv e-prints, art. arXiv:2503.19174, March 2025. doi:10.48550/arXiv.2503.19174

-

[26]

The Power of Assertions in SystemVerilog

Eduard Cerny, Surrendra Dudani, John Havlicek, and Dmitry Korchemny. The Power of Assertions in SystemVerilog. Springer, 2010. doi:10.1007/978-1-4419-6600-1

-

[27]

Drampyml: A formal description of dram protocols with timed petri nets

Derek Christ, Thomas Zimmermann, Philippe Barbie, Dmitri Saberi, Yao Yin, and Matthias Jung. Drampyml: A formal description of dram protocols with timed petri nets. In Design and Verification Conference and Exhibition (DVCON) Europe 2025, 2025

2025

-

[28]

DeepSeek-V3.2: Pushing the Frontier of Open Large Language Models

DeepSeek-AI. Deepseek-v3.2: Pushing the frontier of open large language models, 2025. URL https://arxiv.org/abs/2512.02556

work page internal anchor Pith review arXiv 2025

-

[29]

Wenji Fang, Mengming Li, Min Li, Zhiyuan Yan, Shang Liu, Zhiyao Xie, and Hongce Zhang. Assertllm: Generating and evaluating hardware verification assertions from design specifications via multi-llms, 2024. URL https://arxiv.org/abs/2402.00386

-

[30]

Harry D. Foster. Trends in functional verification: A 2014 industry study. In 2015 52nd ACM/EDAC/IEEE Design Automation Conference (DAC), pp.\ 1--6, 2015. doi:10.1145/2744769.2744921

-

[31]

An evaluation benchmark for autoformalization in lean4

Aryan Gulati, Devanshu Ladsaria, Shubhra Mishra, Jasdeep Sidhu, and Brando Miranda. An evaluation benchmark for autoformalization in lean4. In ICLR Workshop on AI and Formal Methods, 2024. Benchmark of LLM performance on translating informal mathematics into Lean4 formal code

2024

-

[32]

John L. Hennessy and David A. Patterson. A new golden age for computer architecture. Commun. ACM, 62 0 (2): 0 48–60, January 2019. ISSN 0001-0782. doi:10.1145/3282307. URL https://doi.org/10.1145/3282307

-

[33]

Rheinl \"a nder, Christian Weis, and Norbert Wehn

Matthias Jung, Carl C. Rheinl \"a nder, Christian Weis, and Norbert Wehn. Reverse Engineering of DRAMs : Row Hammer with Crosshair . In Proceedings of the Second International Symposium on Memory Systems , pp.\ 471--476, Alexandria VA USA, October 2016. ACM. ISBN 978-1-4503-4305-3. doi:10.1145/2989081.2989114

-

[34]

A New State Model for DRAMs Using Petri Nets

Matthias Jung, Kira Kraft, and Norbert Wehn. A New State Model for DRAMs Using Petri Nets . In 2017 International Conference on Embedded Computer Systems : Architectures , Modeling , and Simulation ( SAMOS ) , pp.\ 221--226, Pythagorion, Greece, July 2017. IEEE. ISBN 978-1-5386-3437-0. doi:10.1109/SAMOS.2017.8344631

-

[35]

Fast Validation of DRAM Protocols with Timed Petri Nets

Matthias Jung, Kira Kraft, Taha Soliman, Chirag Sudarshan, Christian Weis, and Norbert Wehn. Fast Validation of DRAM Protocols with Timed Petri Nets . In Proceedings of the International Symposium on Memory Systems , pp.\ 133--147, Washington District of Columbia USA, September 2019. ACM. ISBN 978-1-4503-7206-0. doi:10.1145/3357526.3357556

-

[36]

Flipping bits in memory without accessing them: an experimental study of dram disturbance errors

Yoongu Kim, Ross Daly, Jeremie Kim, Chris Fallin, Ji Hye Lee, Donghyuk Lee, Chris Wilkerson, Konrad Lai, and Onur Mutlu. Flipping bits in memory without accessing them: an experimental study of dram disturbance errors. In Proceeding of the 41st Annual International Symposium on Computer Architecuture, ISCA '14, pp.\ 361–372. IEEE Press, 2014. ISBN 9781479943944

2014

-

[37]

Yu-An Shih, Annie Lin, Aarti Gupta, and Sharad Malik. Flag: Formal and llm-assisted sva generation for formal specifications of on-chip communication protocols, 2025. URL https://arxiv.org/abs/2504.17226

-

[38]

SystemVerilog for Verification

Chris Spear and Greg Tumbush. SystemVerilog for Verification. Springer, 2012

2012

-

[40]

A Framework for Formal Verification of DRAM Controllers

Lukas Steiner, Chirag Sudarshan, Matthias Jung, Dominik Stoffel, and Norbert Wehn. A Framework for Formal Verification of DRAM Controllers . In Proceedings of the 2022 International Symposium on Memory Systems , pp.\ 1--7, Washington DC USA, October 2022 b . ACM. ISBN 978-1-4503-9800-8. doi:10.1145/3565053.3565059

-

[41]

AssertCoder: LLM-Based Assertion Generation via Multimodal Specification Extraction

Enyuan Tian , Yiwei Ci , Qiusong Yang , Yufeng Li , and Zhichao Lyu . AssertCoder: LLM-Based Assertion Generation via Multimodal Specification Extraction . arXiv e-prints, art. arXiv:2507.10338, July 2025. doi:10.48550/arXiv.2507.10338

-

[42]

Shift-Left Techniques in Electronic Design Automation: A Survey

Xinyue Wu , Zixuan Li , Fan Hu , Ting Lin , Xiaotian Zhao , Runxi Wang , and Xinfei Guo . Shift-Left Techniques in Electronic Design Automation: A Survey . arXiv e-prints, art. arXiv:2509.14551, September 2025. doi:10.48550/arXiv.2509.14551

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.