Recognition: unknown

Reconstructing conformal field theoretical compositions with Transformers

Pith reviewed 2026-05-09 18:30 UTC · model grok-4.3

The pith

Transformers recover the constituent RCFTs of tensor products from low-energy spectra with 98 percent accuracy.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Transformer models trained on the low-energy spectra of tensor products of Wess-Zumino-Witten RCFTs recover the central charges and affine Lie algebra labels of the constituent theories at 98 percent accuracy. The same models generalize to RCFTs with larger central charge and to new classes of RCFTs when the training set is supplemented with a small number of out-of-domain examples. These results establish that transformers can perform the combinatorial decomposition task and indicate their utility as a tool for bulk reconstruction in AdS/CFT.

What carries the argument

A transformer neural network that maps the low-energy spectrum of a tensor product RCFT to the central charges and affine Lie algebra labels of its constituent WZW models.

If this is right

- Composite RCFTs built from WZW models can be decomposed automatically at high accuracy without enumerating all possible combinations.

- The demonstrated generalization means the method can be applied to RCFTs with central charges beyond the initial training range.

- Machine learning decomposition offers a practical route to identifying candidate boundary CFTs that could correspond to given bulk geometries in AdS/CFT.

- The same training strategy may extend to other RCFT constructions once a modest number of labeled examples from the new class are supplied.

Where Pith is reading between the lines

- If low-energy spectra suffice for identification, then full modular invariance data may be redundant for many decomposition tasks.

- The approach could be tested on tensor products that include non-WZW RCFTs to check whether the same transformer architecture continues to succeed.

- In string theory applications the method might speed up scans for consistent compactifications by quickly filtering possible component theories from spectral data.

Load-bearing premise

The low-energy spectrum alone contains enough information to uniquely determine the constituent RCFTs of a tensor product.

What would settle it

A set of tensor product RCFTs drawn from central charges or classes outside the training distribution on which the transformer consistently returns incorrect constituents even after the addition of a few out-of-domain examples.

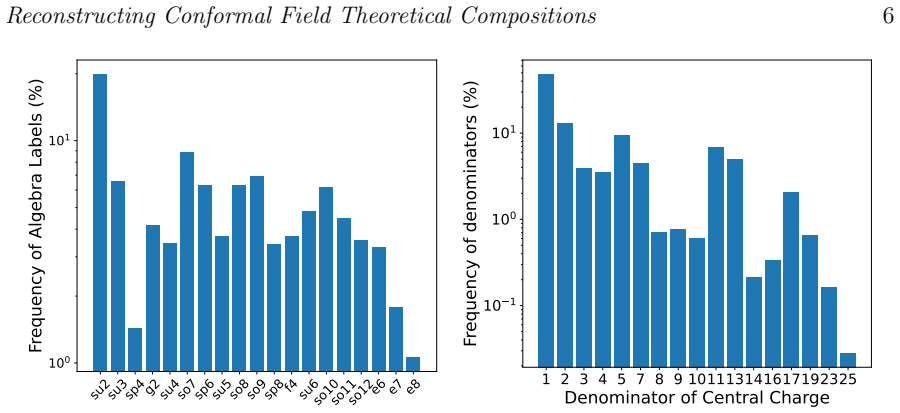

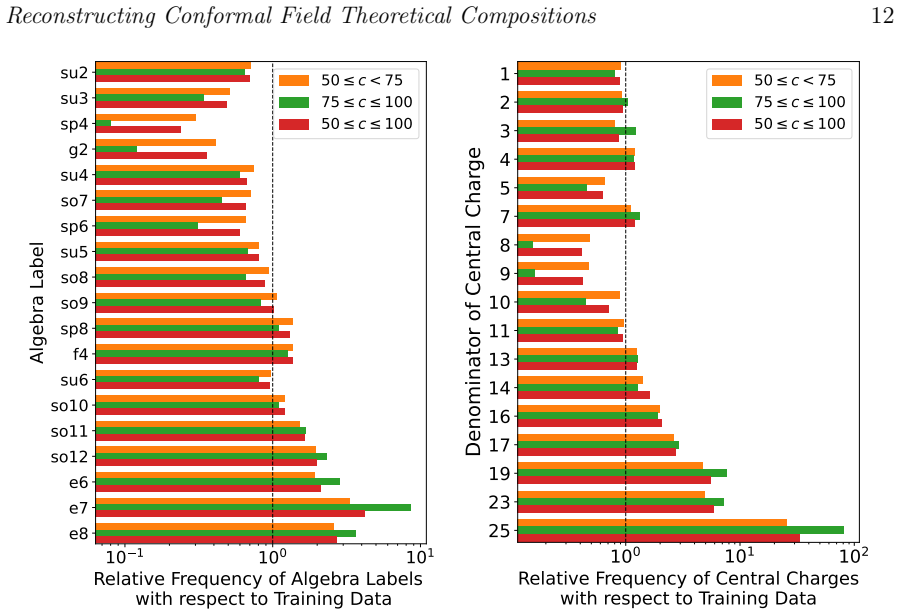

Figures

read the original abstract

We study the use of transformers to reconstruct the compositions of tensor products of two-dimensional rational conformal field theories (RCFTs) based on their low-energy spectra. The task is challenging due to its combinatorial nature. The constituent theories are characterized by their central charges and affine Lie algebra labels. We achieve 98% accuracy in recovering the constituents of tensor products theories constructed from Wess-Zumino-Witten models. We further demonstrate that our method generalizes to CFTs with larger central charge and unseen classes of RCFTs by adding a small number of out-of-domain examples. Our results show that transformers are effective at this task and point towards a new tool for bulk reconstruction in AdS/CFT.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper explores the application of Transformer neural networks to the problem of decomposing tensor products of rational conformal field theories (RCFTs) using only their low-energy spectra. Focusing on Wess-Zumino-Witten (WZW) models, the authors report achieving 98% accuracy in recovering the constituent theories. They also show that the model can generalize to CFTs with larger central charges and to previously unseen classes of RCFTs when provided with a small number of out-of-domain examples. The approach is positioned as a potential tool for bulk reconstruction in the AdS/CFT correspondence.

Significance. Should the reported accuracies prove robust upon provision of missing experimental details, this work would demonstrate that modern machine learning architectures can effectively tackle combinatorial reconstruction tasks in conformal field theory. The few-shot generalization to new central charges and RCFT classes is a notable strength, indicating that the model captures transferable features from the spectral data. This could represent a useful computational aid in the classification and identification of RCFTs, complementing traditional algebraic methods.

major comments (3)

- Abstract: The 98% accuracy is stated without accompanying details on dataset size, train-test split, error bars, or overfitting controls, which are necessary to substantiate the claim given the combinatorial nature of the task.

- Results on WZW models: The high accuracy may reflect the specific construction of the training dataset rather than a general solution to the decomposition problem; no analysis is provided on whether distinct pairs of WZW models can produce identical low-lying spectra due to overlaps in their conformal dimensions.

- Generalization experiments: The claim of generalization to larger central charges and unseen RCFT classes relies on adding a small number of out-of-domain examples, but the exact number, the choice of examples, and any ablation on this number are not specified, limiting the ability to assess the robustness of this few-shot capability.

minor comments (2)

- Introduction: The discussion of prior work on machine learning applications to CFTs could include more references to related efforts in using neural networks for spectrum analysis.

- Notation: Clarify how the low-energy spectrum is encoded as input to the Transformer, including the precise format for central charges and degeneracies.

Simulated Author's Rebuttal

We thank the referee for their thorough review and valuable suggestions. We have carefully considered each comment and made revisions to address the concerns regarding experimental details and robustness. Our point-by-point responses are provided below.

read point-by-point responses

-

Referee: Abstract: The 98% accuracy is stated without accompanying details on dataset size, train-test split, error bars, or overfitting controls, which are necessary to substantiate the claim given the combinatorial nature of the task.

Authors: We agree that additional details are needed to substantiate the reported accuracy. In the revised manuscript, we have updated the abstract to include the dataset size of approximately 10,000 tensor product spectra, an 80-20 train-test split, and mention that error bars are computed from 5 independent runs with standard deviation below 1%. We have also added a dedicated subsection in the Methods section detailing the overfitting controls, including the use of dropout, early stopping based on validation loss, and cross-validation. These additions ensure the claim is properly supported. revision: yes

-

Referee: Results on WZW models: The high accuracy may reflect the specific construction of the training dataset rather than a general solution to the decomposition problem; no analysis is provided on whether distinct pairs of WZW models can produce identical low-lying spectra due to overlaps in their conformal dimensions.

Authors: This is a valid concern. To address it, we have added an analysis in the revised Results section showing that in our dataset of WZW model tensor products, all low-lying spectra (considering the first 10 conformal dimensions and their multiplicities) are unique; no two different pairs produce identical spectra. This was verified by computing the spectra for all generated combinations and checking for duplicates. While a full theoretical proof for all possible WZW models is not provided (as it would require exhaustive enumeration), for the range of models considered (levels up to 10 for SU(2), etc.), no overlaps were found. This supports that the high accuracy reflects the model's ability to distinguish the constituents rather than dataset artifacts. revision: yes

-

Referee: Generalization experiments: The claim of generalization to larger central charges and unseen RCFT classes relies on adding a small number of out-of-domain examples, but the exact number, the choice of examples, and any ablation on this number are not specified, limiting the ability to assess the robustness of this few-shot capability.

Authors: We appreciate this observation. In the revised manuscript, we now specify that for generalization to larger central charges, we added 4 out-of-domain examples, and for unseen RCFT classes, 3 examples were included. We have performed and added an ablation study varying the number of out-of-domain examples from 0 to 10, showing that performance saturates after 3-4 examples with accuracy reaching over 90%. The examples were chosen as representative cases from the target domain (e.g., higher level WZW models and minimal models). This demonstrates the few-shot capability more robustly. revision: yes

Circularity Check

No significant circularity in the supervised learning pipeline

full rationale

The paper presents a standard supervised machine learning setup: synthetic spectra of tensor-product RCFTs are generated, a Transformer is trained to map low-energy spectra to constituent labels, and accuracy (98%) plus few-shot generalization are measured on held-out and out-of-domain test sets. No equations or claims reduce the reported performance to the training inputs by construction; the test-set metric is statistically independent. No self-citations are invoked as load-bearing uniqueness theorems, no ansatzes are smuggled, and no fitted parameters are relabeled as predictions. The derivation chain is therefore self-contained against external benchmarks.

Axiom & Free-Parameter Ledger

free parameters (2)

- number of out-of-domain examples

- transformer architecture hyperparameters

axioms (1)

- domain assumption Low-energy spectra of tensor-product RCFTs contain sufficient information to identify the constituent theories.

Reference graph

Works this paper leans on

-

[1]

P. Di Francesco, P. Mathieu, and D. Senechal.Conformal Field Theory. Graduate Texts in Contemporary Physics. New York: Springer-Verlag, 1997.isbn: 978-0- 387-94785-3, 978-1-4612-7475-9.doi:10.1007/978-1-4612-2256-9

- [2]

-

[3]

Patashinskii and V.L

A.Z. Patashinskii and V.L. Pokrovskii.Fluctuation Theory of Phase Transitions. International Series in Natural Philosophy. Oxford: Pergamon Press, 1979.url: https://books.google.com/books?id=G0IuywEACAAJ

1979

-

[4]

The Large N Limit of Superconformal Field Theories and Supergravity

Juan Martin Maldacena. “The LargeNlimit of superconformal field theories and supergravity”. In:Adv. Theor. Math. Phys.2 (1998), pp. 231–252.doi:10.4310/ ATMP.1998.v2.n2.a1. arXiv:hep-th/9711200

work page internal anchor Pith review arXiv 1998

-

[5]

Koji Hashimoto, Koshiro Matsuo, Masaki Murata, Gakuto Ogiwara, and Daichi Takeda. “Machine-learning emergent spacetime from linear response in future tabletop quantum gravity experiments”. In:Mach. Learn. Sci. Tech.6.1 (2025), p. 015030.doi:10.1088/2632-2153/adb09f. arXiv:2411.16052 [hep-th]

-

[6]

Tetsuya Akutagawa, Koji Hashimoto, and Takayuki Sumimoto. “Deep learning and AdS/QCD”. In:Physical Review D102.2 (July 2020).issn: 2470-0029.doi: 10.1103/physrevd.102.026020.url:http://dx.doi.org/10.1103/PhysRevD. 102.026020

work page doi:10.1103/physrevd.102.026020.url:http://dx.doi.org/10.1103/physrevd 2020

-

[7]

Polchinski.String theory

J. Polchinski.String theory. Vol. 2: Superstring theory and beyond. Cambridge Monographs on Mathematical Physics. Cambridge University Press, Dec. 2007. isbn: 978-0-511-25228-0, 978-0-521-63304-8, 978-0-521-67228-3.doi:10 . 1017 / CBO9780511618123

2007

-

[8]

Gravity dual of the Ising model

Alejandra Castro, Matthias R. Gaberdiel, Thomas Hartman, Alexander Maloney, and Roberto Volpato. “Gravity dual of the Ising model”. In:Physical Review D 85.2 (Jan. 2012).issn: 1550-2368.doi:10 . 1103 / physrevd . 85 . 024032.url: http://dx.doi.org/10.1103/PhysRevD.85.024032

-

[9]

Averaging over Narain moduli space

Alexander Maloney and Edward Witten. “Averaging over Narain moduli space”. In:Journal of High Energy Physics2020.10 (Oct. 2020).issn: 1029-8479.doi:10. 1007/jhep10(2020)187.url:http://dx.doi.org/10.1007/JHEP10(2020)187

-

[10]

Poincar´ e Series, 3d Gravity and Averages of Rational CFT

Viraj Meruliya, Sunil Mukhi, and Palash Singh. “Poincar´ e Series, 3d Gravity and Averages of Rational CFT”. In:JHEP04 (2021), p. 267.doi:10 . 1007 / JHEP04(2021)267. arXiv:2102.03136 [hep-th]

-

[11]

Anomalies and Fermion Zero Modes on Strings and Domain Walls,

A.A. Belavin, A.M. Polyakov, and A.B. Zamolodchikov. “Infinite conformal symmetry in two-dimensional quantum field theory”. In:Nuclear Physics B241.2 (1984), pp. 333–380.issn: 0550-3213.doi:https://doi.org/10.1016/0550- 3213(84)90052-X.url:https://www.sciencedirect.com/science/article/ pii/055032138490052X. REFERENCES19

-

[12]

Space-Time Supersymmetry in Compactified String Theory and Superconformal Models

Doron Gepner. “Space-Time Supersymmetry in Compactified String Theory and Superconformal Models”. In:Nucl. Phys. B296 (1988). Ed. by B. Schellekens, p. 757.doi:10.1016/0550-3213(88)90397-5

-

[13]

Exactly Solvable String Compactifications on Manifolds of SU(N) Holonomy

Doron Gepner. “Exactly Solvable String Compactifications on Manifolds of SU(N) Holonomy”. In:Phys. Lett. B199 (1987), pp. 380–388.doi:10 . 1016 / 0370 - 2693(87)90938-5

1987

-

[14]

The Standard Model from String Theory: What Have We Learned?

Fernando Marchesano, Gary Shiu, and Timo Weigand. “The Standard Model from String Theory: What Have We Learned?” In:Ann. Rev. Nucl. Part. Sci.74 (2024), pp. 113–140.doi:10.1146/annurev-nucl-102622-012235. arXiv:2401.01939 [hep-th]

-

[15]

Classification of Unitary RCFTs with Two Primaries and Central Charge Less Than 25

Sunil Mukhi and Brandon C. Rayhaun. “Classification of Unitary RCFTs with Two Primaries and Central Charge Less Than 25”. In:Communications in Mathematical Physics401.2 (Mar. 2023), pp. 1899–1949.issn: 1432-0916.doi: 10.1007/s00220-023-04681-1.url:http://dx.doi.org/10.1007/s00220- 023-04681-1

work page doi:10.1007/s00220-023-04681-1.url:http://dx.doi.org/10.1007/s00220- 2023

-

[16]

On the Classification of Rational Conformal Field Theories

Samir D. Mathur, Sunil Mukhi, and Ashoke Sen. “On the Classification of Rational Conformal Field Theories”. In:Phys. Lett. B213 (1988), pp. 303–308.doi:10. 1016/0370-2693(88)91765-0

1988

-

[17]

Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Lukasz Kaiser, and Illia Polosukhin.Attention Is All You Need. 2023. arXiv:1706.03762 [cs.CL].url:https://arxiv.org/abs/1706.03762

work page internal anchor Pith review Pith/arXiv arXiv 2023

-

[18]

Jacob Devlin, Ming-Wei Chang, Kenton Lee, and Kristina Toutanova.BERT: Pre- training of Deep Bidirectional Transformers for Language Understanding. 2019. arXiv:1810.04805 [cs.CL].url:https://arxiv.org/abs/1810.04805

work page internal anchor Pith review Pith/arXiv arXiv 2019

-

[19]

Alexey Dosovitskiy, Lucas Beyer, Alexander Kolesnikov, Dirk Weissenborn, Xiaohua Zhai, Thomas Unterthiner, Mostafa Dehghani, Matthias Minderer, Georg Heigold, Sylvain Gelly, Jakob Uszkoreit, and Neil Houlsby.An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale. 2021. arXiv:2010. 11929 [cs.CV].url:https://arxiv.org/abs/2010.11929

work page internal anchor Pith review Pith/arXiv arXiv 2021

- [20]

-

[21]

Ellenberg, Adam Zsolt Wagner, and Geordie Williamson

Fran¸ cois Charton, Jordan S. Ellenberg, Adam Zsolt Wagner, and Geordie Williamson.PatternBoost: Constructions in Mathematics with a Little Help from AI. 2024. arXiv:2411.00566 [math.CO].url:https://arxiv.org/abs/2411. 00566. REFERENCES20

-

[22]

Mathematical discoveries from program search with large language models

Bernardino Romera-Paredes, Mohammadamin Barekatain, Alexander Novikov, Matej Balog, M. Pawan Kumar, Emilien Dupont, Francisco J. R. Ruiz, Jordan S. Ellenberg, Pengming Wang, Omar Fawzi, Pushmeet Kohli, Alhussein Fawzi, Josh Grochow, Andrea Lodi, Jean-Baptiste Mouret, Talia Ringer, and Tao Yu. “Mathematical discoveries from program search with large langua...

2023

-

[23]

Graph neural networks in particle physics

Tianji Cai, Garrett W. Merz, Fran¸ cois Charton, Niklas Nolte, Matthias Wilhelm, Kyle Cranmer, and Lance J. Dixon. “Transforming the bootstrap: using transformers to compute scattering amplitudes in planarN= 4 super Yang–Mills theory”. In:Mach. Learn. Sci. Tech.5.3 (2024), p. 035073.doi:10.1088/2632- 2153/ad743e. arXiv:2405.06107 [cs.LG]

-

[24]

Learning the simplicity of scattering amplitudes

Clifford Cheung, Aur´ elien Dersy, and Matthew D. Schwartz. “Learning the simplicity of scattering amplitudes”. In:SciPost Phys.18.2 (2025), p. 040.doi: 10.21468/SciPostPhys.18.2.040. arXiv:2408.04720 [hep-th]

-

[25]

Transforming Calabi-Yau Constructions: Generating New Calabi-Yau Manifolds with Transformers

Jacky H. T. Yip, Charles Arnal, Fran¸ cois Charton, and Gary Shiu. “Transforming Calabi-Yau Constructions: Generating New Calabi-Yau Manifolds with Transformers”. In: (July 2025). arXiv:2507.03732 [hep-th]

-

[26]

Chanyong Park, Sejin Kim, and Jung Hun Lee. “Holography transformer”. In: Mod. Phys. Lett. A41.10 (2026), p. 2650046.doi:10.1142/S021773232650046X. arXiv:2311.01724 [hep-th]

-

[27]

Simplifying Polylogarithms with Machine Learning

Aur´ elien Dersy, Matthew D. Schwartz, and Xiaoyuan Zhang. “Simplifying Polylogarithms with Machine Learning”. In:Int. J. Data Sci. Math. Sci.1.2 (2024), pp. 135–179.doi:10 . 1142 / S2810939223500028. arXiv:2206 . 04115 [cs.LG]

2024

-

[28]

H., Jedamzik, K., & Pogosian, L

Sergei Gukov and Rak-Kyeong Seong. “Machine learning BPS spectra and the gap conjecture”. In:Phys. Rev. D110.4 (2024), p. 046016.doi:10.1103/PhysRevD. 110.046016. arXiv:2405.09993 [hep-th]

-

[29]

Decoding conformal field theories: from supervised to unsupervised learning

En-Jui Kuo, Alireza Seif, Rex Lundgren, Seth Whitsitt, and Mohammad Hafezi. Decoding conformal field theories: from supervised to unsupervised learning. 2021. arXiv:2106.13485 [cond-mat.str-el].url:https://arxiv.org/abs/2106. 13485

-

[30]

Heng-Yu Chen, Yang-Hui He, Shailesh Lal, and M. Zaid Zaz.Machine Learning Etudes in Conformal Field Theories. 2020. arXiv:2006.16114 [hep-th].url: https://arxiv.org/abs/2006.16114

-

[31]

Solving Conformal Field Theories with Artificial Intelligence

Gergely K´ antor, Constantinos Papageorgakis, and Vasilis Niarchos. “Solving Conformal Field Theories with Artificial Intelligence”. In:Physical Review Letters 128.4 (Jan. 2022).issn: 1079-7114.doi:10 . 1103 / physrevlett . 128 . 041601. url:http://dx.doi.org/10.1103/PhysRevLett.128.041601. REFERENCES21

-

[32]

Learning Conformal Field Theory with Symbolic Regression: Recovering the Symbolic Expressions for the Energy Spectrum

Haotian Cao, Garrett W Merz, Kyle Cranmer, and Gary Shiu. “Learning Conformal Field Theory with Symbolic Regression: Recovering the Symbolic Expressions for the Energy Spectrum”. In:NeurIPS 2024 Workshop on Machine Learning and the Physical Sciences (ML4PS). Workshop paper. 2024.url:https: //ml4physicalsciences.github.io/2024/files/NeurIPS_ML4PS_2024_190. pdf

2024

-

[33]

Miles Cranmer.Interpretable Machine Learning for Science with PySR and SymbolicRegression.jl. 2023. arXiv:2305 . 01582 [astro-ph.IM].url:https : //arxiv.org/abs/2305.01582

work page internal anchor Pith review arXiv 2023

-

[34]

Descending into the Modular Bootstrap

Nathan Benjamin, A. Liam Fitzpatrick, Wei Li, and Jesse Thaler.Descending into the Modular Bootstrap. 2026. arXiv:2604 . 01275 [hep-th].url:https : //arxiv.org/abs/2604.01275

work page internal anchor Pith review Pith/arXiv arXiv 2026

-

[35]

Cambridge studies in advanced mathematics 96

Roger W Carter.Lie algebras of finite and affine type. Cambridge studies in advanced mathematics 96. Cambridge University Press, 2005

2005

- [36]

-

[37]

Tom B. Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, Sandhini Agarwal, Ariel Herbert-Voss, Gretchen Krueger, Tom Henighan, Rewon Child, Aditya Ramesh, Daniel M. Ziegler, Jeffrey Wu, Clemens Winter, Christopher Hesse, Mark Chen, Eric Sigler, Mateusz Litwin...

work page internal anchor Pith review arXiv 2020

- [38]

-

[39]

Kingma and Jimmy Ba.Adam: A Method for Stochastic Optimization

Diederik P. Kingma and Jimmy Ba.Adam: A Method for Stochastic Optimization

-

[40]

arXiv:1412.6980 [cs.LG].url:https://arxiv.org/abs/1412.6980

work page internal anchor Pith review Pith/arXiv arXiv

-

[41]

2006.doi:10.21231/GNT1-HW21.url:https://chtc.cs.wisc.edu/

Center for High Throughput Computing.Center for High Throughput Computing. 2006.doi:10.21231/GNT1-HW21.url:https://chtc.cs.wisc.edu/

work page doi:10.21231/gnt1-hw21.url:https://chtc.cs.wisc.edu/ 2006

- [42]

-

[43]

arXiv preprint arXiv:1411.1792 , year=

Jason Yosinski, Jeff Clune, Yoshua Bengio, and Hod Lipson.How transferable are features in deep neural networks?2014. arXiv:1411.1792 [cs.LG].url:https: //arxiv.org/abs/1411.1792

-

[44]

String theory on Calabi-Yau manifolds

Brian R. Greene. “String theory on Calabi-Yau manifolds”. In:Theoretical Advanced Study Institute in Elementary Particle Physics (TASI 96): Fields, Strings, and Duality. June 1996, pp. 543–726. arXiv:hep-th/9702155. REFERENCES22

-

[45]

Non-Hamiltonian approach to conformal quantum field theory

A. M. Polyakov. “Non-Hamiltonian approach to conformal quantum field theory”. In:Zh. Eksp. Teor. Fiz.66.1 (1974), pp. 23–42

1974

-

[46]

Momentum space approach to crossing symmetric CFT correlators

Hiroshi Isono, Toshifumi Noumi, and Gary Shiu. “Momentum space approach to crossing symmetric CFT correlators”. In:JHEP07 (2018), p. 136.doi:10.1007/ JHEP07(2018)136. arXiv:1805.11107 [hep-th]

-

[47]

Momentum space approach to crossing symmetric CFT correlators. Part II. General spacetime dimension

Hiroshi Isono, Toshifumi Noumi, and Gary Shiu. “Momentum space approach to crossing symmetric CFT correlators. Part II. General spacetime dimension”. In: JHEP10 (2019), p. 183.doi:10.1007/JHEP10(2019)183. arXiv:1908.04572 [hep-th]

-

[48]

Conformaln-point functions in momentum space

Adam Bzowski, Paul McFadden, and Kostas Skenderis. “Conformaln-point functions in momentum space”. In:Phys. Rev. Lett.124.13 (2020), p. 131602. doi:10.1103/PhysRevLett.124.131602. arXiv:1910.10162 [hep-th]

-

[49]

Conformal correlators as simplex integrals in momentum space

Adam Bzowski, Paul McFadden, and Kostas Skenderis. “Conformal correlators as simplex integrals in momentum space”. In:JHEP01 (2021), p. 192.doi: 10.1007/JHEP01(2021)192. arXiv:2008.07543 [hep-th]

-

[50]

Conformal Bootstrap in Mellin Space

Rajesh Gopakumar, Apratim Kaviraj, Kallol Sen, and Aninda Sinha. “Conformal Bootstrap in Mellin Space”. In:Phys. Rev. Lett.118.8 (2017), p. 081601.doi: 10.1103/PhysRevLett.118.081601. arXiv:1609.00572 [hep-th]

-

[51]

A Mellin space approach to the conformal bootstrap

Rajesh Gopakumar, Apratim Kaviraj, Kallol Sen, and Aninda Sinha. “A Mellin space approach to the conformal bootstrap”. In:JHEP05 (2017), p. 027.doi: 10.1007/JHEP05(2017)027. arXiv:1611.08407 [hep-th]

-

[52]

Reconstructing Conformal Field Theoretical Composition with Transformers

Haotian Cao, Garrett W Merz, Kyle Cranmer, and Gary Shiu. “Reconstructing Conformal Field Theoretical Composition with Transformers”. In:NeurIPS 2025 Workshop on Machine Learning and the Physical Sciences (ML4PS). Workshop paper. 2025.url:https : / / ml4physicalsciences . github . io / 2025 / files / NeurIPS_ML4PS_2025_242.pdf. REFERENCES23 Appendix A. Su...

2025

-

[53]

For heterotic string, the left- and right-moving sector has different central charges

strings. For heterotic string, the left- and right-moving sector has different central charges. Theory Type Critical Dimension Spacetime Sector (c) Internal Sector (c int) Bosonic String 26c=d c int = 26−d Type II Superstring 10c=d+ 1 2 d= 3 2 d c int = 15− 3 2 d Heterotic String 26 (Left), 10 (Right)c L =d,c R = 3 2 d c int,L = 26−d,c int,R = 15− 3 2 d a...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.