Recognition: unknown

Machine Learning-Augmented Acceleration of Iterative Ptychographic Reconstruction

Pith reviewed 2026-05-09 19:20 UTC · model grok-4.3

The pith

A learned fast-forward operator accelerates iterative ptychographic reconstruction by more than twofold in wall-clock time while preserving reconstruction quality and physical consistency.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Following an initial warm-up phase of standard iterative updates, a trained fast-forward operator is inserted into the reconstruction loop to advance the object and probe estimates toward convergence; conventional iterations then continue to enforce physical consistency. The operator is learned from diverse ptychographic datasets and tested on experimental data acquired in a different year. On these data the hybrid scheme reaches comparable image quality to pure iterative reconstruction while reducing the Poisson negative log-likelihood faster, producing more than a twofold drop in wall-clock time. The approach has been embedded in an operational pipeline at a synchrotron beamline.

What carries the argument

The learned fast-forward operator, which takes the current reconstruction state after warm-up iterations and predicts an advanced state that conventional iterations would reach only after many more steps.

If this is right

- The hybrid pipeline integrates directly into existing ptychographic codes without changing their core physics model or flexibility.

- Convergence measured by Poisson negative log-likelihood occurs in fewer total iterations than standard solvers.

- Reconstruction quality remains comparable to full iterative runs on real synchrotron data.

- The method supports real-time operation once deployed at a beamline.

- Training on varied datasets enables generalization across different experimental sessions separated by time.

Where Pith is reading between the lines

- The same fast-forward idea could be tested on other iterative phase-retrieval problems such as tomography or holography to see whether similar speed-ups appear.

- If the operator can be fine-tuned on incoming data during an experiment, it might adapt to changing sample or beam conditions without retraining from scratch.

- Combining the operator with existing acceleration tricks like momentum or adaptive step sizes might produce further gains beyond the reported factor of two.

- Wider use would lower the barrier to high-resolution coherent imaging for users who currently avoid long reconstruction times.

Load-bearing premise

The learned operator will produce physically valid advances on unseen experimental data without creating artifacts that the subsequent iterative steps cannot correct.

What would settle it

Running the hybrid method on a new dataset and measuring that the final reconstruction error or negative log-likelihood is markedly worse than a full conventional run of the same length would falsify the claim of maintained quality at reduced cost.

Figures

read the original abstract

Iterative ptychographic reconstruction algorithms are widely used for coherent diffractive imaging but can exhibit slow convergence under realistic experimental conditions. We propose a machine learning-augmented approach that accelerates iterative ptychographic reconstruction by introducing a learned fast-forward operator applied during reconstruction. Following an initial warm-up using standard iterations, the fast-forward operator advances the reconstruction toward a more converged state, after which conventional iterative updates are resumed. This strategy preserves the physical consistency and flexibility of established ptychographic solvers while reducing the number of iterations required for convergence. The model is trained on diverse ptychographic datasets and evaluated on experimental data acquired in a different year, demonstrating robustness and temporal generalization. Compared with conventional iterative solvers, the machine learning-augmented method achieves comparable reconstruction quality while converging faster in terms of Poisson negative log-likelihood, yielding over a two-fold reduction in wall-clock time. The approach has been integrated into an existing reconstruction pipeline and deployed in production at a synchrotron beamline, demonstrating practicality for real-time experimental operation.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes a hybrid machine learning-augmented iterative ptychographic reconstruction algorithm. After a warm-up phase of standard iterations, a learned fast-forward operator is applied to advance the reconstruction state, after which conventional iterative updates resume. The neural network is trained on diverse ptychographic datasets and evaluated on experimental data collected in a different year. The central claims are that the method achieves comparable reconstruction quality to purely iterative solvers, converges faster as measured by Poisson negative log-likelihood, delivers more than a two-fold reduction in wall-clock time, preserves physical consistency, and has been successfully deployed in a production synchrotron beamline pipeline.

Significance. If the empirical claims on speedup and temporal generalization are substantiated with quantitative metrics, the work would be significant for coherent diffractive imaging. It offers a practical way to accelerate slow-converging iterative ptychography solvers without fully replacing them, thereby retaining flexibility and physical consistency. The reported integration into an existing reconstruction pipeline and real-world deployment at a beamline is a concrete strength that demonstrates translational value beyond simulation. This hybrid strategy could influence acceleration techniques in other iterative imaging modalities.

major comments (2)

- [Abstract / Evaluation] Abstract and evaluation on held-out temporal data: the claim of 'robustness and temporal generalization' to experimental data acquired in a different year is asserted without reported quantitative metrics. No final Poisson negative log-likelihood values, Fourier ring correlation (FRC) resolution numbers, or side-by-side quantitative artifact measures are provided for the temporally shifted test set. This is load-bearing for the headline result of 'comparable reconstruction quality' because the learned operator is not derived from the forward model and could produce states that reduce NLL yet violate consistency when standard iterations resume.

- [Abstract / Methods] Abstract and methods description: no details are supplied on neural-network architecture, training loss, number of warm-up iterations, or the exact insertion point of the fast-forward operator. These omissions make it impossible to assess reproducibility or to verify that the operator truly preserves the physical constraints enforced by the subsequent conventional updates.

minor comments (1)

- [Abstract] The abstract would be strengthened by including at least one concrete quantitative result (e.g., iteration count reduction or wall-clock speedup factor with error bars) to support the 'over a two-fold reduction' statement.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback and positive assessment of the work's significance for coherent diffractive imaging. We address the two major comments below with specific revisions to strengthen the manuscript.

read point-by-point responses

-

Referee: [Abstract / Evaluation] Abstract and evaluation on held-out temporal data: the claim of 'robustness and temporal generalization' to experimental data acquired in a different year is asserted without reported quantitative metrics. No final Poisson negative log-likelihood values, Fourier ring correlation (FRC) resolution numbers, or side-by-side quantitative artifact measures are provided for the temporally shifted test set. This is load-bearing for the headline result of 'comparable reconstruction quality' because the learned operator is not derived from the forward model and could produce states that reduce NLL yet violate consistency when standard iterations resume.

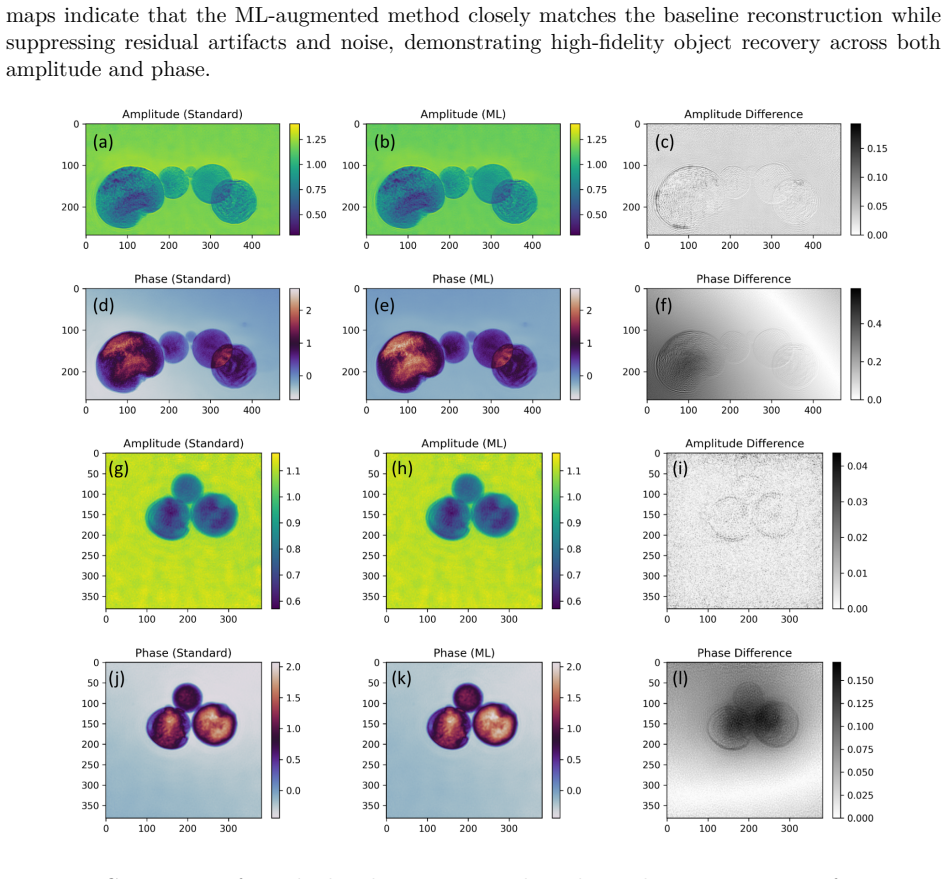

Authors: We agree that explicit final metrics are needed to fully substantiate temporal generalization and comparable quality. The manuscript already presents NLL convergence curves and FRC resolution estimates for the temporally held-out experimental data in Section 4.2 and Figure 5, showing the hybrid method reaches equivalent final NLL and FRC values to standard iterations while requiring fewer total steps. However, we did not tabulate the terminal numerical values or include them in the abstract. We will revise the abstract to report the final NLL (hybrid vs. baseline) and FRC numbers for the held-out set, and add a compact quantitative comparison table. On physical consistency, the paper demonstrates that NLL continues to decrease monotonically after the fast-forward step with no rise in artifacts upon resuming iterations, confirming the operator produces states compatible with the measurement model. revision: yes

-

Referee: [Abstract / Methods] Abstract and methods description: no details are supplied on neural-network architecture, training loss, number of warm-up iterations, or the exact insertion point of the fast-forward operator. These omissions make it impossible to assess reproducibility or to verify that the operator truly preserves the physical constraints enforced by the subsequent conventional updates.

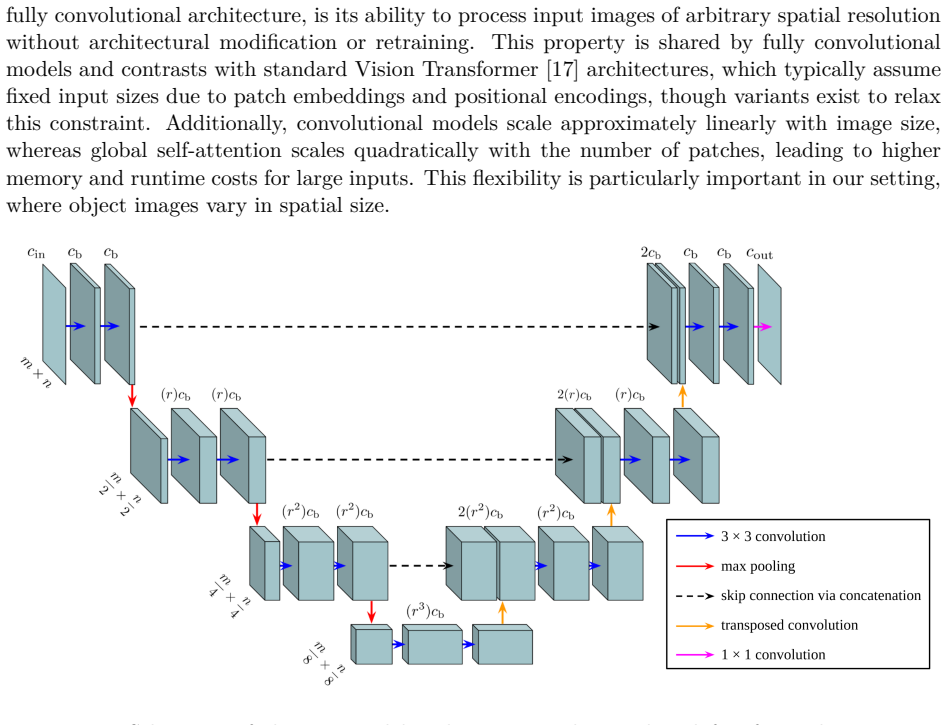

Authors: We acknowledge the abstract omits these parameters due to length constraints. The methods section (Section 3) specifies a U-Net architecture with four encoder-decoder levels, trained via a composite loss of pixel-wise L2 and gradient-based terms on paired intermediate/converged states from multiple ptychographic datasets. Warm-up consists of 50 standard iterations, after which the fast-forward operator is applied once before resuming conventional updates. We will expand the abstract with a concise clause listing the architecture, loss, warm-up count, and insertion point. This addition, together with the existing methods description, enables reproducibility and clarifies that physical constraints remain enforced by the resumed iterative steps. revision: partial

Circularity Check

No significant circularity in the ML-augmented ptychographic acceleration

full rationale

The paper describes an empirical procedure: a fast-forward operator is trained on diverse ptychographic datasets, inserted after a warm-up phase of standard iterations, and then evaluated for convergence speed and reconstruction quality on experimental data collected in a different year. No equations, uniqueness theorems, or ansatzes are presented that reduce the claimed speedup or quality metric to a fitted parameter or self-citation by construction. The central performance claims rest on direct empirical comparison of Poisson negative log-likelihood and wall-clock time on held-out data, which is independent of the training inputs and does not constitute a self-definitional or fitted-input-called-prediction loop.

Axiom & Free-Parameter Ledger

free parameters (1)

- Neural network weights and biases

axioms (1)

- domain assumption The learned fast-forward operator can be inserted into the iterative solver without violating physical consistency or introducing non-physical artifacts

Reference graph

Works this paper leans on

-

[1]

A phase retrieval algorithm for shifting illumi- nation.Applied Physics Letters, 85(20):4795–4797, 2004

John M Rodenburg and Helen ML Faulkner. A phase retrieval algorithm for shifting illumi- nation.Applied Physics Letters, 85(20):4795–4797, 2004

2004

-

[2]

Computational microscopy with coherent diffractive imaging and ptychography

Jianwei Miao. Computational microscopy with coherent diffractive imaging and ptychography. Nature, 637(8045):281–295, 2025

2025

-

[3]

An improved ptychographical phase retrieval algorithm for diffractive imaging.Ultramicroscopy, 109(10):1256–1262, 2009

Andrew M Maiden and John M Rodenburg. An improved ptychographical phase retrieval algorithm for diffractive imaging.Ultramicroscopy, 109(10):1256–1262, 2009

2009

-

[4]

Further improvements to the ptychographical iterative engine.Optica, 4(7):736–745, 2017

Andrew Maiden, Daniel Johnson, and Peng Li. Further improvements to the ptychographical iterative engine.Optica, 4(7):736–745, 2017

2017

-

[5]

High-resolution scanning x-ray diffraction microscopy.Science, 321(5887):379–382, 2008

Pierre Thibault, Martin Dierolf, Andreas Menzel, Oliver Bunk, Christian David, and Franz Pfeiffer. High-resolution scanning x-ray diffraction microscopy.Science, 321(5887):379–382, 2008

2008

-

[6]

Probe retrieval in ptychographic coherent diffractive imaging.Ultramicroscopy, 109(4):338–343, 2009

Pierre Thibault, Martin Dierolf, Oliver Bunk, Andreas Menzel, and Franz Pfeiffer. Probe retrieval in ptychographic coherent diffractive imaging.Ultramicroscopy, 109(4):338–343, 2009

2009

-

[7]

Maximum-likelihood refinement for coherent diffractive imaging.New Journal of Physics, 14(6):063004, 2012

Pierre Thibault and Manuel Guizar-Sicairos. Maximum-likelihood refinement for coherent diffractive imaging.New Journal of Physics, 14(6):063004, 2012. 15

2012

-

[8]

Ai-enabled high-resolution scanning coherent diffraction imaging

Mathew J Cherukara, Tao Zhou, Youssef Nashed, Pablo Enfedaque, Alex Hexemer, Ross J Harder, and Martin V Holt. Ai-enabled high-resolution scanning coherent diffraction imaging. Applied Physics Letters, 117(4), 2020

2020

-

[9]

Deep learning at the edge enables real-time streaming ptychographic imaging.Nature Communications, 14(1):7059, 2023

Anakha V Babu, Tao Zhou, Saugat Kandel, Tekin Bicer, Zhengchun Liu, William Judge, Daniel J Ching, Yi Jiang, Sinisa Veseli, Steven Henke, et al. Deep learning at the edge enables real-time streaming ptychographic imaging.Nature Communications, 14(1):7059, 2023

2023

-

[10]

Deep-learning real-time phase retrieval of imperfect diffraction patterns from x-ray free-electron lasers.npj Computational Materials, 11(1):68, 2025

Sung Yun Lee, Do Hyung Cho, Chulho Jung, Daeho Sung, Daewoong Nam, Sangsoo Kim, and Changyong Song. Deep-learning real-time phase retrieval of imperfect diffraction patterns from x-ray free-electron lasers.npj Computational Materials, 11(1):68, 2025

2025

-

[11]

Pid3net: a deep learning approach for single-shot coherent x-ray diffraction imaging of dynamic phenomena

Tien-Sinh Vu, Minh-Quyet Ha, Adam Mukharil Bachtiar, Duc-Anh Dao, Truyen Tran, Hiori Kino, Shuntaro Takazawa, Nozomu Ishiguro, Yuhei Sasaki, Masaki Abe, et al. Pid3net: a deep learning approach for single-shot coherent x-ray diffraction imaging of dynamic phenomena. npj Computational Materials, 11(1):66, 2025

2025

-

[12]

Deep-learning electron diffractive imaging.Physical review letters, 130(1):016101, 2023

Dillan J Chang, Colum M O’Leary, Cong Su, Daniel A Jacobs, Salman Kahn, Alex Zettl, Jim Ciston, Peter Ercius, and Jianwei Miao. Deep-learning electron diffractive imaging.Physical review letters, 130(1):016101, 2023

2023

-

[13]

Physics constrained unsupervised deep learning for rapid, high resolution scanning coherent diffraction reconstruction.Scientific Reports, 13(1):22789, 2023

Oliver Hoidn, Aashwin Ananda Mishra, and Apurva Mehta. Physics constrained unsupervised deep learning for rapid, high resolution scanning coherent diffraction reconstruction.Scientific Reports, 13(1):22789, 2023

2023

-

[14]

CDTools.https://github.com/ cdtools-developers/cdtools

Abraham Levitan, Madelyn Cain, and Anastasiia Kutakh. CDTools.https://github.com/ cdtools-developers/cdtools

-

[15]

U-net: Convolutional networks for biomedical image segmentation

Olaf Ronneberger, Philipp Fischer, and Thomas Brox. U-net: Convolutional networks for biomedical image segmentation. InInternational Conference on Medical image computing and computer-assisted intervention, pages 234–241. Springer, 2015

2015

-

[16]

Dlsia: deep learning for scientific image analysis.Applied Crystallography, 57(2):392–402, 2024

Eric J Roberts, Tanny Chavez, Alexander Hexemer, and Petrus H Zwart. Dlsia: deep learning for scientific image analysis.Applied Crystallography, 57(2):392–402, 2024

2024

-

[17]

An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale

Alexey Dosovitskiy, Lucas Beyer, Alexander Kolesnikov, Dirk Weissenborn, Xiaohua Zhai, Thomas Unterthiner, Mostafa Dehghani, Matthias Minderer, Georg Heigold, Sylvain Gelly, et al. An image is worth 16x16 words: Transformers for image recognition at scale.arXiv preprint arXiv:2010.11929, 2020

work page internal anchor Pith review Pith/arXiv arXiv 2010

-

[18]

Prefect, 2024

Prefect Technologies, Inc. Prefect, 2024. URLhttps://www.prefect.io/. Version X.X.X. 16

2024

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.