Optimizing Reservoir Computing for Reconstructing Ergodic Properties

Pith reviewed 2026-05-10 14:54 UTC · model grok-4.3

The pith

Choosing reservoir hyperparameters by minimizing the error in the reconstructed invariant distribution, rather than by maximizing prediction time, reproduces Lyapunov exponents accurately, even with partial observations.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Minimizing the error between the reservoir's reconstructed invariant distribution and the data distribution leads to accurate reproduction of ergodic properties such as Lyapunov exponents in model systems and biological time series, outperforming optimization based on prediction accuracy.

What carries the argument

Reservoir parameters are selected by minimizing the error in the reconstructed invariant distribution (or its low-dimensional projections), which targets the long-term statistics of the dynamics directly.
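As a minimal sketch of what a distribution error on low-dimensional projections could look like, the Python snippet below compares two sample clouds coordinate by coordinate using the two-sample Kolmogorov-Smirnov statistic. The function names `ks_distance` and `projection_error`, the choice of axis-aligned projections, and the averaging over coordinates are assumptions made for illustration, not the paper's stated procedure. The samples are synthetic Gaussians, not reservoir output.

```python
import numpy as np

def ks_distance(a, b):
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between empirical CDFs."""
    a, b = np.sort(a), np.sort(b)
    grid = np.concatenate([a, b])
    cdf_a = np.searchsorted(a, grid, side="right") / a.size
    cdf_b = np.searchsorted(b, grid, side="right") / b.size
    return float(np.max(np.abs(cdf_a - cdf_b)))

def projection_error(data, model):
    """Mean KS distance over the coordinate projections of two (n, d) sample arrays."""
    data, model = np.asarray(data), np.asarray(model)
    return float(np.mean([ks_distance(data[:, k], model[:, k])
                          for k in range(data.shape[1])]))

rng = np.random.default_rng(0)
reference = rng.normal(size=(5000, 2))           # stand-in for observed data
same_law = rng.normal(size=(5000, 2))            # model with the correct statistics
shifted = rng.normal(loc=0.5, size=(5000, 2))    # model with biased statistics

err_same = projection_error(reference, same_law)
err_shifted = projection_error(reference, shifted)
print(err_same, err_shifted)
```

The error is small when both clouds follow the same law and clearly larger for the shifted cloud, which is the property a hyperparameter search would exploit.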

Load-bearing premise

That achieving a close match in the invariant distribution is enough by itself to ensure the reservoir's dynamics correctly capture the original system's ergodic measures and exponents.
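A standard textbook example, not taken from the paper, shows why this premise cannot hold in general. The doubling map and the tripling map on the unit interval both preserve the uniform measure, yet their Lyapunov exponents differ:

```latex
f_2(x) = 2x \bmod 1, \qquad f_3(x) = 3x \bmod 1, \qquad x \in [0,1)
% Both maps preserve the uniform (Lebesgue) measure \mu on [0,1).
\lambda(f_k) = \int_0^1 \ln\lvert f_k'(x)\rvert \, d\mu(x) = \ln k
\quad\Longrightarrow\quad
\lambda(f_2) = \ln 2 \approx 0.693, \qquad \lambda(f_3) = \ln 3 \approx 1.099
```

A histogram of a long trajectory is flat for both maps, so a loss built only on the one-point distribution could not tell them apart.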

What would settle it

Finding a reservoir configuration where the invariant distribution matches the data closely but the computed Lyapunov exponents deviate substantially from the true values would falsify the claim.

Figures

read the original abstract

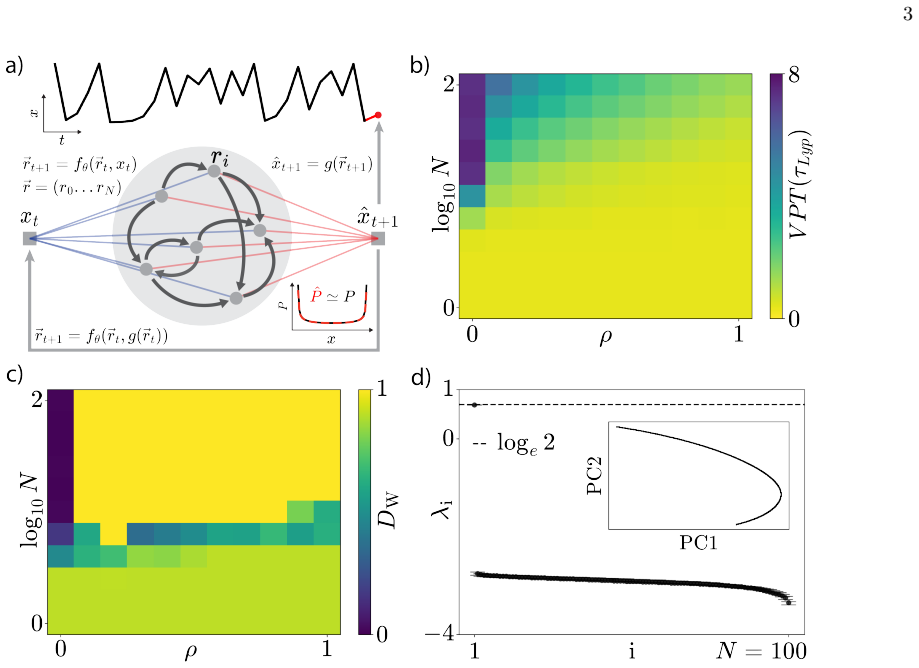

Reservoir computing is a powerful framework for modeling dynamical systems due to its universality and computational efficiency. However, a major challenge is achieving a forecast with accurate long-time statistics, or climate, which is essential for inferring ergodic properties such as Lyapunov exponents. A common approach is to optimize the reservoir's macroscopic parameters, such as the spectral radius, by maximizing prediction time. But here we show that even predictions accurate over multiple Lyapunov times do not guarantee the correct long-time statistics. Instead, we choose reservoir properties by minimizing the error in the reconstructed invariant distribution (or its projections), which is easily available from data. We demonstrate that this approach reproduces the Lyapunov exponents of model dynamical systems, including the logistic and standard maps, as well as the double pendulum, even with partial observations. We further show that recurrent connections, and resulting reservoir memory, are only required in the partially-observed case. We introduce a temporal scaling which reliably separates system and reservoir dynamics. In the posture time series of the nematode C. elegans we show that our approach quantitatively reproduces a chaotic behavioral attractor, but this requires a further constraint on the maximal conditional Lyapunov exponent to ensure the reservoir remains consistently synchronized to the complex biological input.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes optimizing reservoir computing hyperparameters (e.g., spectral radius) by minimizing the discrepancy between the reservoir's reconstructed invariant distribution (or its projections) and the empirical data distribution, rather than by maximizing short-term prediction accuracy. This is claimed to reproduce ergodic properties such as Lyapunov exponents for the logistic map, standard map, double pendulum (including partial observations), and C. elegans posture time series; a temporal scaling factor is introduced to separate timescales, and an additional constraint on the maximal conditional Lyapunov exponent is required for the biological data to maintain synchronization.
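The maximal conditional Lyapunov exponent mentioned above measures how quickly two copies of the driven reservoir converge onto the same state; a negative value indicates synchronization to the input. A minimal sketch of how it could be estimated for a generic tanh echo state network is given below. The update rule r[t+1] = tanh(W r[t] + W_in u[t]), the network size, the weight distributions, and the sine-wave input are all assumptions for illustration, since the paper's exact reservoir equations are not given in this text.

```python
import numpy as np

def max_conditional_lyapunov(W, W_in, inputs, n_transient=200):
    """Largest conditional Lyapunov exponent of r[t+1] = tanh(W r[t] + W_in u[t]),
    estimated by propagating one tangent vector along the driven trajectory."""
    rng = np.random.default_rng(0)
    r = np.zeros(W.shape[0])
    v = rng.normal(size=W.shape[0])
    v /= np.linalg.norm(v)
    log_growth, count = 0.0, 0
    for t, u in enumerate(inputs):
        r_next = np.tanh(W @ r + W_in * u)
        # Jacobian with respect to the reservoir state: diag(1 - r_next^2) W
        v = (1.0 - r_next**2) * (W @ v)
        norm = np.linalg.norm(v)
        v /= norm                       # renormalize to avoid underflow
        if t >= n_transient:            # skip the initial transient
            log_growth += np.log(norm)
            count += 1
        r = r_next
    return log_growth / count

rng = np.random.default_rng(42)
n = 50                                   # hypothetical reservoir size
W = rng.normal(size=(n, n))
W *= 0.3 / np.max(np.abs(np.linalg.eigvals(W)))   # rescale to spectral radius 0.3
W_in = rng.uniform(-1.0, 1.0, size=n)
inputs = np.sin(0.1 * np.arange(2000))   # placeholder drive signal

cle = max_conditional_lyapunov(W, W_in, inputs)
print(cle)
```

With a small spectral radius the tangent vector contracts at every step, so the estimate is negative. Larger spectral radii can push it toward zero or above, which is the regime a synchronization constraint would exclude.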

Significance. If the empirical results hold under scrutiny, the approach provides a practical, data-driven alternative for tuning reservoirs to capture correct long-term statistics and invariants in chaotic systems, where prediction-based optimization is shown to be insufficient. The demonstrations across multiple model systems and a real dataset, plus the finding that recurrent connections are needed only for partial observations, could be useful for applications in nonlinear dynamics and time-series modeling. However, the lack of theoretical grounding limits the broader significance.

major comments (3)

- [Abstract] Abstract and Methods (optimization description): the central claim that minimizing error between the reservoir's reconstructed invariant distribution and the data distribution suffices to reproduce Lyapunov exponents lacks any derivation or analysis showing why the loss constrains the tangent dynamics; distinct systems can share an invariant measure while having different Lyapunov exponents, which are determined by the average of log|Jacobian| along trajectories.

- [Results] Results (demonstrations on logistic map, standard map, double pendulum): no quantitative error bars, no details on the precise computation of the distribution error (e.g., binning method, distance metric, or minimization procedure), and no comparison tables against prediction-time optimization or other baselines are provided, making the strength of the reproduction claims difficult to assess.

- [Results] Results (C. elegans section): the additional constraint on the maximal conditional Lyapunov exponent is introduced to ensure consistent synchronization, but it is unclear how this is formally incorporated into the optimization objective or whether it undermines the claim that distribution matching alone is sufficient.
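The first major comment describes Lyapunov exponents as trajectory averages of log|Jacobian|. For a one-dimensional map this reduces to averaging log|f'(x)| along an orbit. The sketch below does this for the logistic map at r = 4, whose exponent is known to be ln 2; the function name, initial condition, and iteration counts are illustrative choices.

```python
import math

def logistic_lyapunov(r=4.0, x0=0.1, n_steps=200_000, n_transient=1_000):
    """Estimate the Lyapunov exponent of x -> r x (1 - x) as the mean of log|f'(x)|."""
    x = x0
    for _ in range(n_transient):          # discard the transient
        x = r * x * (1.0 - x)
    total = 0.0
    for _ in range(n_steps):
        # f'(x) = r (1 - 2x); the tiny offset guards against log(0) at x = 0.5
        total += math.log(abs(r * (1.0 - 2.0 * x)) + 1e-300)
        x = r * x * (1.0 - x)
    return total / n_steps

lyap = logistic_lyapunov()
print(lyap, math.log(2.0))
```

Note that the estimate uses the derivative of the map, which never enters a histogram-based loss. This is the gap the referee is pointing at.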

minor comments (1)

- [Abstract] The abstract states that 'a temporal scaling which reliably separates system and reservoir dynamics' is introduced, but the explicit functional form and implementation details of this scaling are not specified in the provided text.

Simulated Author's Rebuttal

We thank the referee for their careful reading and constructive comments. We respond point-by-point to the major comments below and indicate planned revisions to the manuscript.

Referee: [Abstract] Abstract and Methods (optimization description): the central claim that minimizing error between the reservoir's reconstructed invariant distribution and the data distribution suffices to reproduce Lyapunov exponents lacks any derivation or analysis showing why the loss constrains the tangent dynamics; distinct systems can share an invariant measure while having different Lyapunov exponents, which are determined by the average of log|Jacobian| along trajectories.

Authors: We agree that the manuscript provides no theoretical derivation connecting the distribution-matching objective to the tangent dynamics or Lyapunov exponents. As the referee correctly notes, distinct systems may share an invariant measure yet differ in their Lyapunov exponents. Our contribution is empirical: across the tested chaotic systems, hyperparameter optimization based on invariant distribution error produces reservoirs that more reliably recover the Lyapunov exponents than prediction-time optimization. We will revise the abstract and methods to state explicitly that the approach is empirical rather than theoretically guaranteed, and we will add a brief discussion acknowledging the general distinction between invariant measures and other ergodic invariants such as Lyapunov exponents. revision: partial

Referee: [Results] Results (demonstrations on logistic map, standard map, double pendulum): no quantitative error bars, no details on the precise computation of the distribution error (e.g., binning method, distance metric, or minimization procedure), and no comparison tables against prediction-time optimization or other baselines are provided, making the strength of the reproduction claims difficult to assess.

Authors: We accept that the current manuscript lacks quantitative error bars, precise methodological details, and direct comparison tables. In the revised version we will report error bars obtained from multiple independent reservoir realizations. We will specify the distribution error computation (histogram binning with a fixed number of bins and the L1 distance as the metric) and the minimization procedure (grid search over the relevant hyperparameter ranges). We will also add tables that directly compare the errors in recovered Lyapunov exponents and attractor statistics between the distribution-matching objective and the prediction-time baseline. revision: yes

Referee: [Results] Results (C. elegans section): the additional constraint on the maximal conditional Lyapunov exponent is introduced to ensure consistent synchronization, but it is unclear how this is formally incorporated into the optimization objective or whether it undermines the claim that distribution matching alone is sufficient.

Authors: The constraint is implemented as a hard filter applied before evaluating the distribution error: only hyperparameter configurations whose maximal conditional Lyapunov exponent lies below a chosen threshold (ensuring reliable synchronization) are retained for the subsequent distribution-matching optimization. We will revise the C. elegans section and the methods to describe this filtering step explicitly and to clarify its role in the overall procedure. We will also note that the synchronization constraint is a practical requirement for this partially observed biological dataset and does not replace the distribution-matching objective; the primary optimization remains the invariant distribution error. revision: yes
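The second and third responses describe a concrete recipe: a fixed-bin histogram compared with the L1 distance, a search over hyperparameter configurations, and a hard filter on the maximal conditional Lyapunov exponent applied before the search. A minimal sketch of that selection logic follows. The candidate records, the threshold value of 0, the bin count, and all function names are hypothetical placeholders, and the trajectories are synthetic samples rather than reservoir outputs.

```python
import numpy as np

def histogram_l1(data, model, bins=50, value_range=(0.0, 1.0)):
    """L1 distance between normalized fixed-bin histograms of two 1-D samples."""
    p, _ = np.histogram(data, bins=bins, range=value_range)
    q, _ = np.histogram(model, bins=bins, range=value_range)
    return float(np.abs(p / p.sum() - q / q.sum()).sum())

def select_candidate(data, candidates, cle_threshold=0.0):
    """Drop candidates whose maximal conditional Lyapunov exponent is too large,
    then return the survivor with the smallest distribution error."""
    admissible = [c for c in candidates if c["max_cle"] < cle_threshold]
    if not admissible:
        raise ValueError("no candidate passes the synchronization filter")
    return min(admissible, key=lambda c: histogram_l1(data, c["trajectory"]))

rng = np.random.default_rng(1)
# Arcsine law on [0, 1]: the invariant density of the logistic map at r = 4.
data = rng.beta(0.5, 0.5, size=20_000)
candidates = [
    {"name": "A", "max_cle": -0.3, "trajectory": rng.uniform(size=20_000)},
    {"name": "B", "max_cle": -0.1, "trajectory": rng.beta(0.5, 0.5, size=20_000)},
    {"name": "C", "max_cle": 0.2, "trajectory": data.copy()},  # best match, but filtered out
]
best = select_candidate(data, candidates)
print(best["name"])
```

Candidate C has zero distribution error but fails the filter, so the search returns B. This illustrates the referee's concern that the filter, not the distribution error alone, can decide the outcome.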

Circularity Check

No significant circularity; empirical validation independent of optimization target

The paper optimizes reservoir parameters by minimizing error between the reconstructed invariant distribution (or projections) and the observed data distribution, then separately computes and compares Lyapunov exponents from the resulting reservoir trajectories against known values for the logistic map, standard map, and double pendulum. This chain does not reduce by construction because the Lyapunov exponents depend on the tangent map averaged along trajectories and are not inputs to the distribution-matching loss; the paper presents only empirical reproduction on specific examples rather than a derivation claiming the match is forced. No self-citations, self-definitional steps, or fitted inputs renamed as predictions appear in the load-bearing claims. The additional constraint on maximal conditional Lyapunov exponent for C. elegans data is introduced explicitly as an extra requirement, further separating the steps.

Axiom & Free-Parameter Ledger

free parameters (2)

- reservoir hyperparameters (spectral radius, scaling, etc.)

- temporal scaling factor

axioms (2)

- domain assumption: The underlying dynamical system possesses a well-defined invariant probability distribution that can be estimated from finite data.

- domain assumption: Ergodicity holds so that time averages equal ensemble averages and Lyapunov exponents are well-defined.
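Stated in symbols, for a map f with ergodic invariant measure μ and an integrable observable g, the second assumption reads as follows (standard notation, not the paper's):

```latex
\lim_{T\to\infty} \frac{1}{T} \sum_{t=0}^{T-1} g\bigl(f^{t}(x_0)\bigr)
  = \int g(x)\, d\mu(x)
  \qquad \text{for } \mu\text{-almost every } x_0 .
```

Taking g to be the indicator function of a histogram bin shows why the histogram of one long trajectory estimates the invariant distribution named in the first assumption.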

