Recognition: unknown

SplAttN: Bridging 2D and 3D with Gaussian Soft Splatting and Attention for Point Cloud Completion

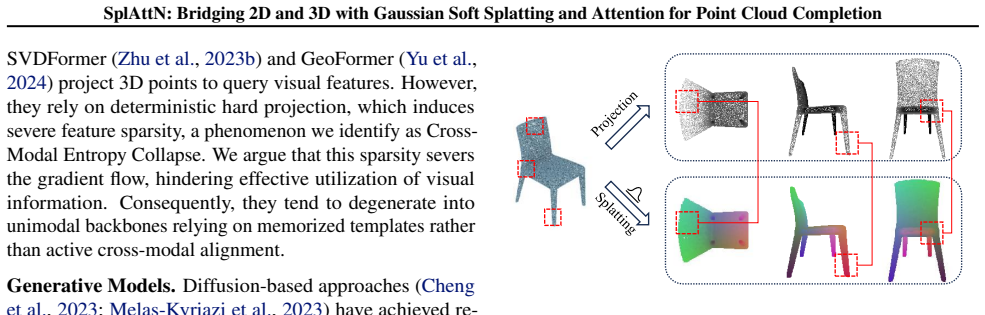

Pith reviewed 2026-05-09 14:53 UTC · model grok-4.3

The pith

Differentiable Gaussian splatting replaces hard projection to prevent cross-modal entropy collapse in point cloud completion.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

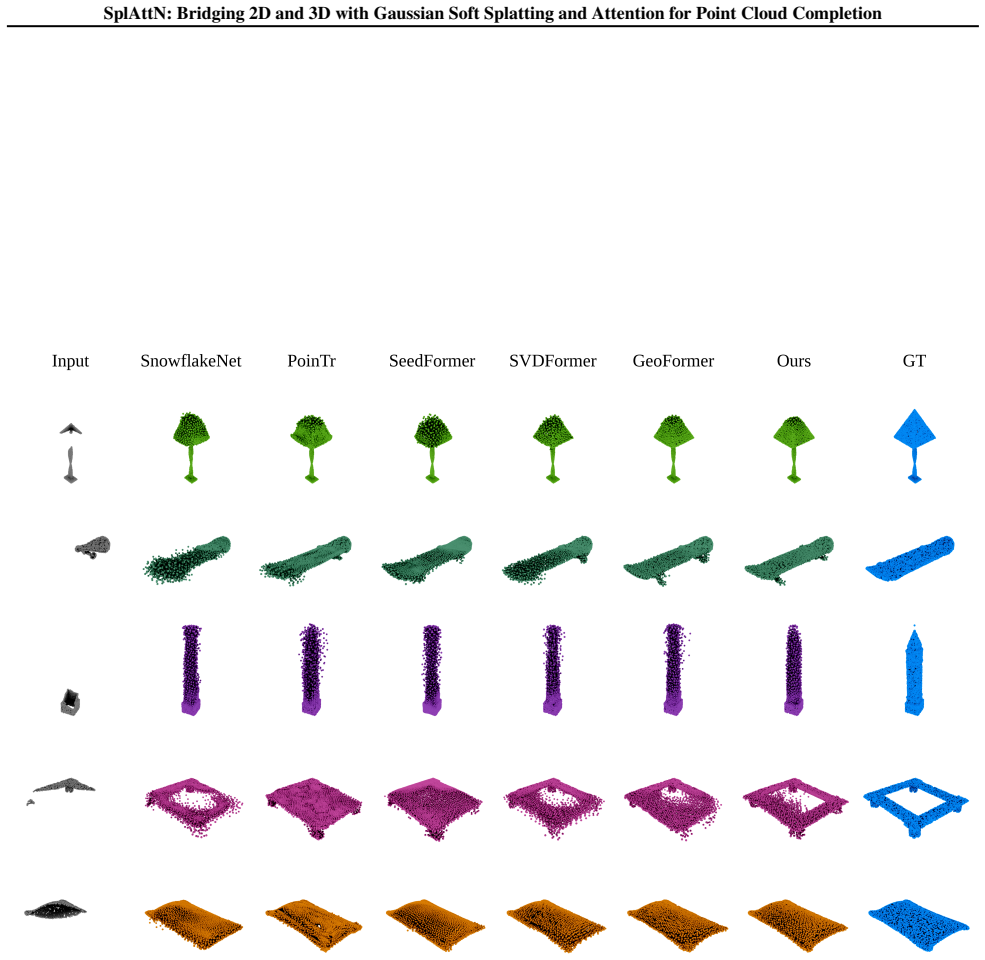

SplAttN replaces hard projection with Differentiable Gaussian Splatting to produce a dense, continuous image-plane representation. By reformulating projection as continuous density estimation, it avoids collapsed sparse support, facilitates gradient flow, and improves cross-modal connection learnability, achieving state-of-the-art performance on PCN and ShapeNet-55/34 while maintaining robust dependency on visual cues in counter-factual KITTI evaluation.

What carries the argument

Differentiable Gaussian Splatting, which reformulates the projection from sparse 3D points to the 2D image plane as continuous density estimation to create dense support instead of sparse points.

Load-bearing premise

The performance gains and robustness come specifically from using Gaussian splatting to avoid cross-modal entropy collapse, rather than from other parts of the architecture or training procedure.

What would settle it

Ablating the Gaussian splatting module and retraining the network to measure drops in benchmark scores and loss of visual dependency on the KITTI counter-factual test.

Figures

read the original abstract

Although multi-modal learning has advanced point cloud completion, the theoretical mechanisms remain unclear. Recent works attribute success to the connection between modalities, yet we identify that standard hard projection severs this connection: projecting a sparse point cloud onto the image plane yields an extremely sparse support, which hinders visual prior propagation, a failure mode we term Cross-Modal Entropy Collapse. To address this practical limitation, we propose SplAttN, which replaces hard projection with Differentiable Gaussian Splatting to produce a dense, continuous image-plane representation. By reformulating projection as continuous density estimation, SplAttN avoids collapsed sparse support, facilitates gradient flow, and improves cross-modal connection learnability. Extensive experiments show that SplAttN achieves state-of-the-art performance on PCN and ShapeNet-55/34. Crucially, we utilize the real-world KITTI benchmark as a stress test for multi-modal reliance. Counter-factual evaluation reveals that while baselines degenerate into unimodal template retrievers insensitive to visual removal, SplAttN maintains a robust dependency on visual cues, validating that our method establishes an effective cross-modal connection. Code is available at https://github.com/zay002/SplAttN.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes SplAttN for point cloud completion, which replaces hard projection with differentiable Gaussian soft splatting plus attention to bridge 2D image features and 3D points. It identifies a failure mode termed Cross-Modal Entropy Collapse arising from sparse support under hard projection, claims that continuous density estimation via splatting enables better gradient flow and cross-modal connection, reports SOTA results on PCN and ShapeNet-55/34, and uses a counter-factual KITTI evaluation to show that SplAttN retains visual-cue dependency while baselines collapse to unimodal template retrieval. Code is released.

Significance. If the performance and robustness gains are shown to stem specifically from the Gaussian splatting operator, the work would advance multi-modal 3D vision by offering a concrete architectural fix for maintaining cross-modal information flow in sparse-to-dense settings. The counter-factual KITTI stress test and public code are positive elements that support reproducibility and falsifiability of the multi-modal reliance claim.

major comments (2)

- [Section 4] Section 4 (Experiments): the ablation studies and main tables do not isolate the contribution of differentiable Gaussian splatting. No controlled swap of only the projection operator (hard vs. Gaussian) is reported while freezing the attention fusion module, encoder, decoder, loss, and training schedule; without this, the SOTA numbers on PCN/ShapeNet-55/34 and the differential KITTI sensitivity cannot be attributed to avoidance of cross-modal entropy collapse rather than capacity or optimization effects.

- [Section 3] Section 3 (Method): the claim that Gaussian splatting produces 'dense, continuous image-plane representation' and thereby prevents entropy collapse is presented at a high level; a quantitative comparison (e.g., support density or gradient magnitude before/after splatting) or equation showing the difference from hard projection would be needed to make the mechanism load-bearing for the central cross-modal claim.

minor comments (2)

- [Abstract] Abstract and Section 1: the term 'Cross-Modal Entropy Collapse' is introduced without a reference or short formal definition; adding a one-sentence characterization would improve readability for readers outside the immediate sub-area.

- [Tables 1-3] Tables 1-3: error bars or standard deviations across runs are not reported; including them would strengthen the SOTA claims.

Simulated Author's Rebuttal

We thank the referee for the constructive and insightful comments. We agree that isolating the precise contribution of the Gaussian splatting operator and providing more quantitative support for the cross-modal entropy collapse mechanism will strengthen the paper. We address each major comment below and will incorporate the suggested revisions in the next manuscript version.

read point-by-point responses

-

Referee: [Section 4] Section 4 (Experiments): the ablation studies and main tables do not isolate the contribution of differentiable Gaussian splatting. No controlled swap of only the projection operator (hard vs. Gaussian) is reported while freezing the attention fusion module, encoder, decoder, loss, and training schedule; without this, the SOTA numbers on PCN/ShapeNet-55/34 and the differential KITTI sensitivity cannot be attributed to avoidance of cross-modal entropy collapse rather than capacity or optimization effects.

Authors: We acknowledge that our existing ablations compare complete model variants but do not include the exact controlled swap requested. To directly attribute performance gains to the splatting operator, we will add a new ablation in the revised Section 4. In this experiment, we will replace only the differentiable Gaussian soft splatting with standard hard projection inside the SplAttN architecture while freezing the attention fusion module, encoder, decoder, loss function, and all training hyperparameters. We will report the resulting performance on PCN and ShapeNet-55/34, as well as the change in KITTI counter-factual sensitivity. This will allow readers to isolate the effect of continuous density estimation from other architectural or optimization factors. revision: yes

-

Referee: [Section 3] Section 3 (Method): the claim that Gaussian splatting produces 'dense, continuous image-plane representation' and thereby prevents entropy collapse is presented at a high level; a quantitative comparison (e.g., support density or gradient magnitude before/after splatting) or equation showing the difference from hard projection would be needed to make the mechanism load-bearing for the central cross-modal claim.

Authors: We agree that the mechanism description would benefit from greater formality and empirical grounding. In the revised Section 3, we will insert an explicit equation contrasting hard projection (a discrete, sparse mapping p_i → (u,v) with binary support) against the Gaussian splatting formulation, which computes a continuous density field as the sum of projected 2D Gaussians with learnable covariance. We will also add a quantitative analysis (new table or figure in Section 4 or an appendix) reporting average support density (fraction of non-zero pixels in the projected feature map) and mean gradient magnitude through the projection layer for both operators on PCN validation samples. These metrics will demonstrate that soft splatting maintains substantially denser support and improved gradient flow, directly supporting the claim that it mitigates cross-modal entropy collapse. revision: yes

Circularity Check

No significant circularity; architectural proposal stands as independent contribution

full rationale

The paper defines Cross-Modal Entropy Collapse as a consequence of hard projection's sparse support and proposes SplAttN's differentiable Gaussian splatting plus attention as a direct architectural remedy. No equations, derivations, or predictions are shown that reduce the claimed benefit to a fitted parameter, self-referential definition, or self-citation chain. The central mechanism is presented as an independent change to the projection operator, with experimental validation on PCN, ShapeNet, and KITTI serving as external evidence rather than tautological output. This matches the default expectation for non-circular ML architecture papers.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Differentiable Gaussian splatting produces a dense continuous image-plane representation from sparse point clouds that facilitates visual prior propagation

invented entities (1)

-

Cross-Modal Entropy Collapse

no independent evidence

Reference graph

Works this paper leans on

-

[1]

2018 international conference on 3D vision (3DV) , pages=

Pcn: Point completion network , author=. 2018 international conference on 3D vision (3DV) , pages=. 2018 , organization=

2018

-

[2]

Proceedings of the IEEE conference on computer vision and pattern recognition , pages=

Foldingnet: Point cloud auto-encoder via deep grid deformation , author=. Proceedings of the IEEE conference on computer vision and pattern recognition , pages=

-

[3]

Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

Topnet: Structural point cloud decoder , author=. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

-

[4]

European conference on computer vision , pages=

Grnet: Gridding residual network for dense point cloud completion , author=. European conference on computer vision , pages=. 2020 , organization=

2020

-

[5]

Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

Pmp-net: Point cloud completion by learning multi-step point moving paths , author=. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

-

[6]

Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

Lake-net: Topology-aware point cloud completion by localizing aligned keypoints , author=. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

-

[7]

Proceedings of the AAAI Conference on artificial intelligence , volume=

Pointattn: You only need attention for point cloud completion , author=. Proceedings of the AAAI Conference on artificial intelligence , volume=

-

[8]

Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

Cascaded refinement network for point cloud completion , author=. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

-

[9]

European conference on computer vision , pages=

Detail preserved point cloud completion via separated feature aggregation , author=. European conference on computer vision , pages=. 2020 , organization=

2020

-

[10]

Proceedings of the IEEE/CVF international conference on computer vision , pages=

Pointr: Diverse point cloud completion with geometry-aware transformers , author=. Proceedings of the IEEE/CVF international conference on computer vision , pages=

-

[11]

Proceedings of the IEEE/CVF international conference on computer vision , pages=

Snowflakenet: Point cloud completion by snowflake point deconvolution with skip-transformer , author=. Proceedings of the IEEE/CVF international conference on computer vision , pages=

-

[12]

IEEE Transactions on Pattern Analysis and Machine Intelligence , volume=

PMP-Net++: Point cloud completion by transformer-enhanced multi-step point moving paths , author=. IEEE Transactions on Pattern Analysis and Machine Intelligence , volume=. 2022 , publisher=

2022

-

[13]

Proceedings of the IEEE/CVF international conference on computer vision , pages=

Pointclip v2: Prompting clip and gpt for powerful 3d open-world learning , author=. Proceedings of the IEEE/CVF international conference on computer vision , pages=

-

[14]

European conference on computer vision , pages=

Seedformer: Patch seeds based point cloud completion with upsample transformer , author=. European conference on computer vision , pages=. 2022 , organization=

2022

-

[15]

Yu, Xumin and Rao, Yongming and Wang, Ziyi and Lu, Jiwen and Zhou, Jie , title =. IEEE Trans. Pattern Anal. Mach. Intell. , month = dec, pages =. 2023 , issue_date =. doi:10.1109/TPAMI.2023.3309253 , abstract =

-

[16]

Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

Anchorformer: Point cloud completion from discriminative nodes , author=. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

-

[17]

Proceedings of the IEEE/CVF international conference on computer vision , pages=

Hyperbolic chamfer distance for point cloud completion , author=. Proceedings of the IEEE/CVF international conference on computer vision , pages=

-

[18]

Proceedings of the IEEE/CVF International Conference on Computer Vision , pages=

Svdformer: Complementing point cloud via self-view augmentation and self-structure dual-generator , author=. Proceedings of the IEEE/CVF International Conference on Computer Vision , pages=

-

[19]

Proceedings of the AAAI Conference on Artificial Intelligence , volume=

SymmCompletion: High-Fidelity and High-Consistency Point Cloud Completion with Symmetry Guidance , author=. Proceedings of the AAAI Conference on Artificial Intelligence , volume=

-

[20]

Forty-second International Conference on Machine Learning , year=

Unpaired Point Cloud Completion via Unbalanced Optimal Transport , author=. Forty-second International Conference on Machine Learning , year=

-

[21]

Advances in Neural Information Processing Systems , volume=

Cross-modal learning for image-guided point cloud shape completion , author=. Advances in Neural Information Processing Systems , volume=

-

[22]

Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

Pulsar: Efficient sphere-based neural rendering , author=. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

-

[23]

An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale

An image is worth 16x16 words: Transformers for image recognition at scale , author=. arXiv preprint arXiv:2010.11929 , year=

work page internal anchor Pith review Pith/arXiv arXiv 2010

-

[24]

Proceedings of the IEEE/CVF international conference on computer vision , pages=

Swin transformer: Hierarchical vision transformer using shifted windows , author=. Proceedings of the IEEE/CVF international conference on computer vision , pages=

-

[25]

Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

Masked autoencoders are scalable vision learners , author=. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

-

[26]

Advances in neural information processing systems , volume=

Pointnet++: Deep hierarchical feature learning on point sets in a metric space , author=. Advances in neural information processing systems , volume=

-

[27]

International conference on machine learning , pages=

Mutual information neural estimation , author=. International conference on machine learning , pages=. 2018 , organization=

2018

-

[28]

Representation Learning with Contrastive Predictive Coding

Representation learning with contrastive predictive coding , author=. arXiv preprint arXiv:1807.03748 , year=

work page internal anchor Pith review Pith/arXiv arXiv

-

[29]

Proceedings of the IEEE conference on computer vision and pattern recognition , pages=

Deep residual learning for image recognition , author=. Proceedings of the IEEE conference on computer vision and pattern recognition , pages=

-

[30]

Proceedings of the AAAI Conference on Artificial Intelligence , volume=

DC-PCN: Point Cloud Completion Network with Dual-Codebook Guided Quantization , author=. Proceedings of the AAAI Conference on Artificial Intelligence , volume=

-

[31]

Differentiable surface splatting for point-based geometry processing , year =

Yifan, Wang and Serena, Felice and Wu, Shihao and \". Differentiable surface splatting for point-based geometry processing , year =. ACM Trans. Graph. , month = nov, articleno =. doi:10.1145/3355089.3356513 , abstract =

-

[32]

Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

Softmax splatting for video frame interpolation , author=. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

-

[33]

Proceedings of the 32nd ACM International Conference on Multimedia , pages=

Geoformer: Learning point cloud completion with tri-plane integrated transformer , author=. Proceedings of the 32nd ACM International Conference on Multimedia , pages=

-

[34]

and Yuille, Alan and Tan, Mingxing , title =

Li, Yingwei and Yu, Adams Wei and Meng, Tianjian and Caine, Ben and Ngiam, Jiquan and Peng, Daiyi and Shen, Junyang and Lu, Yifeng and Zhou, Denny and Le, Quoc V. and Yuille, Alan and Tan, Mingxing , title =. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) , month =. 2022 , pages =

2022

-

[35]

European conference on computer vision , pages=

Tinyvit: Fast pretraining distillation for small vision transformers , author=. European conference on computer vision , pages=. 2022 , organization=

2022

-

[36]

Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) , month =

Zhang, Xuancheng and Feng, Yutong and Li, Siqi and Zou, Changqing and Wan, Hai and Zhao, Xibin and Guo, Yandong and Gao, Yue , title =. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) , month =. 2021 , pages =

2021

-

[37]

Li, Yixuan and Ma, Lipeng and Yang, Weidong and Fei, Ben , title =. ACM Trans. Multimedia Comput. Commun. Appl. , month = nov, keywords =. 2025 , publisher =. doi:10.1145/3774887 , abstract =

-

[38]

The international journal of robotics research , volume=

Vision meets robotics: The kitti dataset , author=. The international journal of robotics research , volume=. 2013 , publisher=

2013

-

[39]

Decoupled Weight Decay Regularization

Decoupled weight decay regularization , author=. arXiv preprint arXiv:1711.05101 , year=

work page internal anchor Pith review Pith/arXiv arXiv

-

[40]

2017 , eprint=

Cyclical Learning Rates for Training Neural Networks , author=. 2017 , eprint=

2017

-

[41]

, author=

3D Gaussian splatting for real-time radiance field rendering. , author=. ACM Trans. Graph. , volume=

-

[42]

and Gui, Liang-Yan , title =

Cheng, Yen-Chi and Lee, Hsin-Ying and Tulyakov, Sergey and Schwing, Alexander G. and Gui, Liang-Yan , title =. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) , month =. 2023 , pages =

2023

-

[43]

Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) , month =

Melas-Kyriazi, Luke and Rupprecht, Christian and Vedaldi, Andrea , title =. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) , month =. 2023 , pages =

2023

-

[44]

Proceedings of the 29th ACM international conference on multimedia , pages=

Asfm-net: Asymmetrical siamese feature matching network for point completion , author=. Proceedings of the 29th ACM international conference on multimedia , pages=

-

[45]

Proceedings of the AAAI Conference on Artificial Intelligence , volume=

Cra-pcn: Point cloud completion with intra-and inter-level cross-resolution transformers , author=. Proceedings of the AAAI Conference on Artificial Intelligence , volume=

-

[46]

Computational visual media , volume=

Pct: Point cloud transformer , author=. Computational visual media , volume=. 2021 , publisher=

2021

-

[47]

ACM Transactions on Graphics (tog) , volume=

Dynamic graph cnn for learning on point clouds , author=. ACM Transactions on Graphics (tog) , volume=. 2019 , publisher=

2019

-

[48]

Advances in neural information processing systems , volume=

Attention is all you need , author=. Advances in neural information processing systems , volume=

-

[49]

Intelligence & Robotics , VOLUME =

Dingchen Yang and Bowen Cao and Sanqing Qu and Fan Lu and Shangding Gu and Guang Chen , TITLE =. Intelligence & Robotics , VOLUME =. 2025 , NUMBER =

2025

-

[50]

Intelligence & Robotics , VOLUME =

Zhengyi Lu and Yunhong Liao and Jia Li , TITLE =. Intelligence & Robotics , VOLUME =. 2025 , NUMBER =

2025

-

[51]

Advances in neural information processing systems , volume=

Learning representations by maximizing mutual information across views , author=. Advances in neural information processing systems , volume=

-

[52]

Advances in Neural Information Processing Systems , volume=

Point cloud completion with pretrained text-to-image diffusion models , author=. Advances in Neural Information Processing Systems , volume=

-

[53]

Advances in Neural Information Processing Systems , volume=

A theory of multimodal learning , author=. Advances in Neural Information Processing Systems , volume=

-

[54]

The Thirteenth International Conference on Learning Representations , year=

SplatFormer: Point Transformer for Robust 3D Gaussian Splatting , author=. The Thirteenth International Conference on Learning Representations , year=

-

[55]

ACM SIGGRAPH 2024 conference papers , pages=

2d gaussian splatting for geometrically accurate radiance fields , author=. ACM SIGGRAPH 2024 conference papers , pages=

2024

-

[56]

Proceedings of the 29th ACM International Conference on Multimedia , pages =

Xia, Yaqi and Xia, Yan and Li, Wei and Song, Rui and Cao, Kailang and Stilla, Uwe , title =. Proceedings of the 29th ACM International Conference on Multimedia , pages =. 2021 , isbn =. doi:10.1145/3474085.3475348 , abstract =

-

[57]

Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

View-guided point cloud completion , author=. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

-

[58]

Proceedings of the IEEE/CVF international conference on computer vision , pages=

Point transformer , author=. Proceedings of the IEEE/CVF international conference on computer vision , pages=

-

[59]

Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

Variational relational point completion network , author=. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

-

[60]

Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

Proxyformer: Proxy alignment assisted point cloud completion with missing part sensitive transformer , author=. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

-

[61]

European Conference on Computer Vision , pages=

Fbnet: Feedback network for point cloud completion , author=. European Conference on Computer Vision , pages=

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.