Unifying Deep Stochastic Processes for Image Enhancement

Pith reviewed 2026-05-09 14:05 UTC · model grok-4.3

The pith

Recent stochastic image enhancement methods arise from one shared stochastic differential equation and differ mainly in their drift and diffusion terms, terminal distributions, and boundary conditions.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Unconditional diffusion models, Ornstein-Uhlenbeck processes, and diffusion bridges used for image enhancement all emerge from a single SDE. They differ principally in their drift and diffusion terms, terminal distributions, and boundary conditions. Schedulers and samplers act as orthogonal design choices. When the same network architectures and training protocols are applied across these families, no process type consistently outperforms the others; instead, specific choices within the SDE control most performance variation.

What carries the argument

The common stochastic differential equation (SDE) that parametrizes the three process families through choices of drift function, diffusion function, terminal distribution, and boundary conditions.
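The review does not reproduce the paper's exact parametrization, so the following is only a sketch using textbook forms. The symbols $f$, $g$, $\beta$, $\theta$, $\sigma$, $T$, and $y$ (the degraded input) are assumptions made for illustration and may not match the paper's notation.

```latex
% Shared Ito SDE (generic form; symbols are illustrative)
\mathrm{d}x_t = f(x_t, t)\,\mathrm{d}t + g(t)\,\mathrm{d}W_t, \qquad t \in [0, T]

% Unconditional (variance-preserving) diffusion: terminal law close to N(0, I)
f(x, t) = -\tfrac{1}{2}\,\beta(t)\,x, \qquad g(t) = \sqrt{\beta(t)}

% Ornstein-Uhlenbeck (mean-reverting): terminal law approximately Gaussian, centered near y
f(x, t) = \theta(t)\,(y - x), \qquad g(t) = \sigma(t)

% Brownian bridge: the boundary condition x_T = y holds exactly
f(x, t) = \frac{y - x}{T - t}, \qquad g(t) = \sigma
```

Read this way, the first two families are distinguished by a terminal distribution, while the bridge is distinguished by a pinned endpoint, which is the drift, terminal, and boundary split the core claim describes.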

If this is right

- Performance differences trace mainly to specific drift and diffusion terms plus boundary conditions rather than to the choice of process family.

- Schedulers and samplers can be selected independently of the core SDE family.

- No single family is superior across tasks once architectures are matched.

- Key design choices become separable and can be optimized in isolation.
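The separability claimed in the points above can be illustrated with a minimal sketch in which the sampler is written once and each family only supplies a drift and a diffusion function. This is not the ItoVision API: the function names, the scalar "pixel" state, the constant coefficients, and the Euler-Maruyama sampler are all assumptions chosen to keep the example short.

```python
import math
import random


def euler_maruyama(x0, drift, diffusion, T=1.0, n_steps=1000, rng=None):
    """Integrate dx = drift(x, t) dt + diffusion(t) dW from t=0 to t=T (scalar state)."""
    rng = rng or random.Random(0)
    dt = T / n_steps
    x = x0
    for k in range(n_steps):
        t = k * dt  # left endpoint, so T - t stays positive and the bridge drift is finite
        noise = rng.gauss(0.0, 1.0)
        x = x + drift(x, t) * dt + diffusion(t) * math.sqrt(dt) * noise
    return x


def vp_diffusion(beta=10.0):
    """Unconditional VP-type process: pulls x toward 0, terminal law roughly N(0, 1)."""
    return (lambda x, t: -0.5 * beta * x), (lambda t: math.sqrt(beta))


def ou_process(mu, theta=5.0, sigma=0.5):
    """Mean-reverting OU process: pulls x toward mu (here, a degraded pixel value)."""
    return (lambda x, t: theta * (mu - x)), (lambda t: sigma)


def brownian_bridge(y, T=1.0, sigma=0.5):
    """Brownian bridge pinned at y when t = T (a boundary condition, not a terminal law)."""
    return (lambda x, t: (y - x) / (T - t)), (lambda t: sigma)


clean, degraded = 0.8, 0.3  # hypothetical pixel intensities
families = {
    "vp": vp_diffusion(),
    "ou": ou_process(mu=degraded),
    "bridge": brownian_bridge(y=degraded),
}
for name, (f, g) in families.items():
    # Same sampler and same seed for every family; only (drift, diffusion) changes.
    print(name, round(euler_maruyama(clean, f, g, rng=random.Random(1)), 3))
```

Swapping `euler_maruyama` for another integrator would leave the three family definitions untouched, which is the sense in which the sampler is an orthogonal choice.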

Where Pith is reading between the lines

- Researchers could now systematically combine elements such as the best drift from one family with the boundary condition from another to create hybrids.

- The same SDE lens might be applied to related tasks like video restoration or medical-image denoising to test whether the same design patterns hold.

- Theoretical work could derive which terminal distributions or boundary conditions best match particular degradation models, such as blur versus noise.

Load-bearing premise

That the three families cover essentially all recent stochastic enhancement methods, and that identical architectures and training protocols isolate the stochastic-process choice without hidden implementation differences.

What would settle it

Discovery of a new stochastic enhancement method whose trajectory cannot be written as one of the three families inside the shared SDE, or a controlled re-run in which swapping only the process family reverses performance rankings in a manner not explained by the identified drift or boundary terms.

Figures

read the original abstract

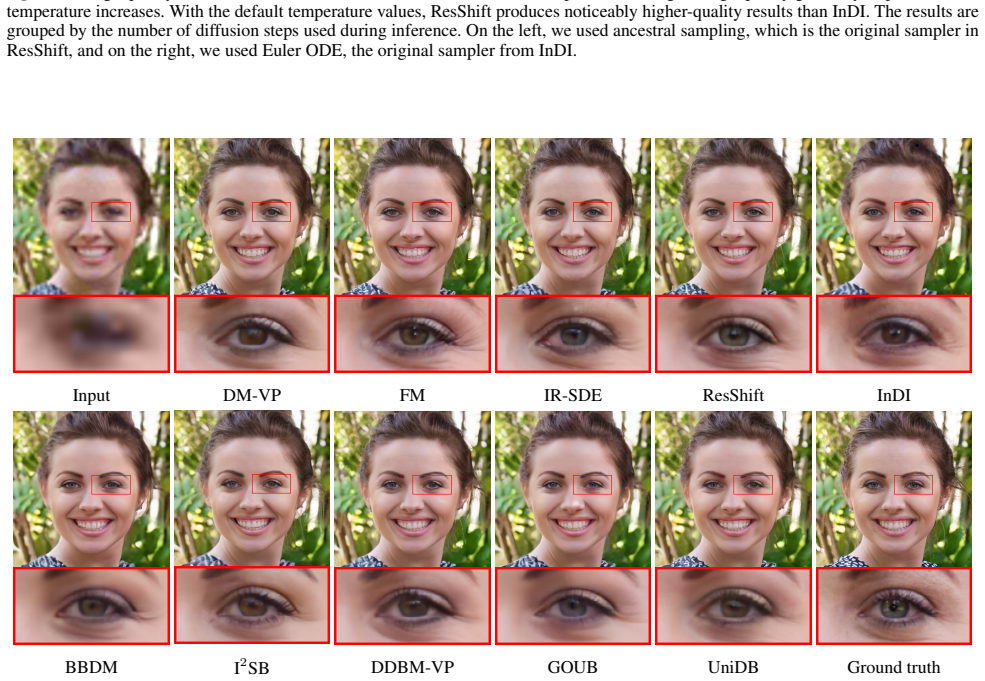

Deep stochastic processes have recently become a central paradigm for image enhancement, with many methods explicitly conditioning the stochastic trajectory on the degraded input. However, the relationship between these conditional processes and standard diffusion models remains unclear. In this work, we introduce a unified perspective on stochastic image enhancement by classifying recent methods into three families of continuous-time processes: unconditional diffusion models, Ornstein-Uhlenbeck (OU) processes, and diffusion bridges. We show that all of these approaches arise from a common stochastic differential equation (SDE) formulation. This framework makes explicit that seemingly disparate methods differ primarily in their drift and diffusion terms, terminal distributions, and boundary conditions, while schedulers and samplers constitute orthogonal design choices. Leveraging this unification, we conduct a controlled empirical study across multiple image enhancement tasks using identical architectures and training protocols. Our results reveal no consistently dominant method; instead, we identify and disentangle the specific design choices that most strongly influence performance. Finally, we release ItoVision, a modular PyTorch library that implements the unified framework and enables rapid prototyping and fair comparison of stochastic image enhancement methods.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript claims that recent deep stochastic processes for image enhancement can be unified under a single SDE framework, with unconditional diffusion models, Ornstein-Uhlenbeck processes, and diffusion bridges arising as special cases that differ primarily in their drift and diffusion coefficients, terminal distributions, and boundary conditions (while schedulers and samplers are orthogonal). It supports the unification with a controlled empirical study that trains identical architectures under fixed protocols on multiple image enhancement tasks, finding no consistently dominant family, and releases the ItoVision PyTorch library to enable fair comparisons.

Significance. If the unification is complete and the empirical controls succeed in isolating SDE effects, the work supplies a useful organizing lens for the field, shifting attention from superficial methodological differences to the load-bearing choices in drift, diffusion, and conditioning. The modular library is a concrete asset for reproducibility and rapid experimentation.

major comments (1)

- [Empirical study] Empirical study section: The claim that 'identical architectures and training protocols' isolate the stochastic process itself requires explicit verification that the conditioning mechanism on the degraded input (concatenation, cross-attention, or time-dependent injection) is implemented identically across the three families. Different families conventionally employ distinct conditioning routes; any residual mismatch would undermine attribution of performance differences to drift/diffusion/terminal choices alone.

minor comments (2)

- [Abstract] Abstract: The phrase 'recent methods' is left broad; a parenthetical list of representative papers from each family would help readers assess coverage.

- Notation: Ensure that the common SDE (presumably Eq. (X) in the unification section) is written once with all variable terms (drift, diffusion, terminal) labeled so that later specializations can be referenced by name rather than re-derived.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback on our manuscript. We address the major comment point by point below and have made revisions to strengthen the empirical study section.

- Referee: Empirical study section: The claim that 'identical architectures and training protocols' isolate the stochastic process itself requires explicit verification that the conditioning mechanism on the degraded input (concatenation, cross-attention, or time-dependent injection) is implemented identically across the three families. Different families conventionally employ distinct conditioning routes; any residual mismatch would undermine attribution of performance differences to drift/diffusion/terminal choices alone.

Authors: We thank the referee for highlighting this important point regarding the isolation of SDE effects. We agree that explicit verification of the conditioning mechanism is necessary. In the unified framework, conditioning on the degraded input is treated as orthogonal to the choice of drift, diffusion, terminal distribution, and boundary conditions. In our controlled experiments, we implemented conditioning identically across all three families by concatenating the degraded input image channel-wise with the current noisy state at each timestep before feeding it into the shared network architecture. This concatenation-based injection was chosen for compatibility with the common SDE formulation and was applied uniformly in the code base for unconditional diffusion models, Ornstein-Uhlenbeck processes, and diffusion bridges. To make this explicit, we have revised the Empirical Study section to include a dedicated paragraph describing the conditioning implementation, along with a table confirming that the same mechanism (concatenation) was used for every family and task. We believe this revision fully addresses the concern and supports attribution of results to the SDE components.

Revision: yes
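The concatenation-based conditioning described in the response can be sketched as follows. This is an illustration only: NumPy arrays stand in for PyTorch tensors, and `condition_by_concat` and `shared_network` are hypothetical names, not functions from the authors' code base.

```python
import numpy as np


def condition_by_concat(x_t, y):
    """Stack the degraded input y onto the current state x_t along the channel axis.

    Both arrays are (batch, channels, height, width); only channel counts may differ.
    """
    if x_t.shape[0] != y.shape[0] or x_t.shape[2:] != y.shape[2:]:
        raise ValueError("batch and spatial dimensions must match")
    return np.concatenate([x_t, y], axis=1)


def shared_network(inputs, t):
    """Hypothetical stand-in for the shared backbone: maps 2C input channels to C."""
    c = inputs.shape[1] // 2
    return inputs[:, :c] - inputs[:, c:]  # placeholder arithmetic, not a real model


rng = np.random.default_rng(0)
y = rng.random((2, 3, 8, 8))  # degraded RGB batch
for family in ("diffusion", "ou", "bridge"):
    # Each family would produce x_t from its own SDE; random noise is a placeholder.
    x_t = rng.standard_normal(y.shape)
    out = shared_network(condition_by_concat(x_t, y), t=0.5)
    print(family, out.shape)
```

Routing every family through one conditioning function is one way to make the "same mechanism for every family and task" claim checkable in code rather than by inspection.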

Circularity Check

No circularity: the unification rests on the standard Itô SDE form and on new controlled experiments.

The paper's core derivation classifies methods into unconditional diffusion, OU processes, and diffusion bridges, then states they arise from one SDE by differing only in drift/diffusion terms, terminal distributions, and boundary conditions. This follows directly from the general Itô SDE form in stochastic calculus (no self-derived inputs or fitted parameters renamed as predictions). The empirical study runs fresh trainings under fixed architectures and protocols rather than reusing prior constants. No self-citation chains, uniqueness theorems from the authors, or ansatz smuggling appear as load-bearing steps. The framework is externally grounded and self-contained.

Axiom & Free-Parameter Ledger

axioms (1)

- [standard math] Standard existence and uniqueness results for solutions of stochastic differential equations.
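For reference, the usual textbook sufficient conditions behind this axiom are global Lipschitz continuity and linear growth of the coefficients. The statement below is the standard one and is not taken from the paper; $K$ is a finite constant and the state-dependent diffusion $g(x, t)$ is the general case.

```latex
% Sufficient conditions on [0, T] for a unique strong solution of
% dx_t = f(x_t, t) dt + g(x_t, t) dW_t
\lvert f(x,t) - f(x',t)\rvert + \lvert g(x,t) - g(x',t)\rvert \le K\,\lvert x - x'\rvert
\lvert f(x,t)\rvert + \lvert g(x,t)\rvert \le K\,(1 + \lvert x\rvert)
```

A bridge drift of the form $(y - x)/(T - t)$ has Lipschitz constant $1/(T - t)$, which is unbounded as $t \to T$, so these conditions hold on $[0, T - \varepsilon]$ for any $\varepsilon > 0$ rather than on the closed interval.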
