Recognition: no theorem link

Testing, Credible Compilation, and Verification in the Axon Verified Compiler in Lean and Claude Code

Pith reviewed 2026-05-12 01:17 UTC · model grok-4.3

The pith

Machine-checked Lean proofs written by Claude Code certify the Axon compiler's generated code as correct.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

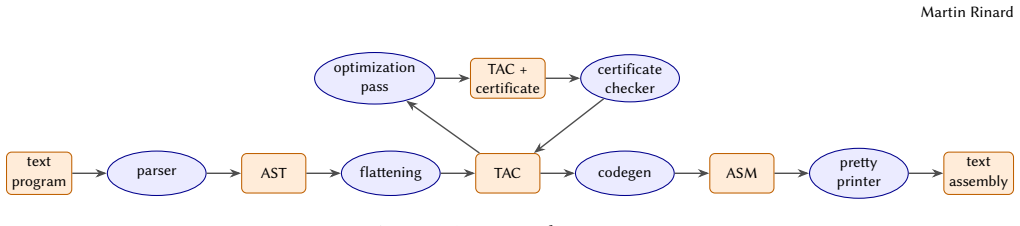

Axon is a compiler whose complete implementation and formal correctness proofs were generated in Lean by Claude Code. The proofs certify that every translation step from source program to executable preserves semantics, which removes the need to examine or audit any of the verified code. This result was obtained by running the compiler through repeated cycles of testing, translation validation, proof construction, and targeted audits during its development.

What carries the argument

Machine-checked Lean proofs that certify the correctness of the Axon compiler's code generation steps.

If this is right

- Generated compiler output can be used without manual review once the proofs are accepted.

- The same combination of testing, credible compilation, and Lean verification can be applied to other compiler projects.

- Development effort shifts from code inspection to proof maintenance and refinement.

- Audits become focused on the proof specifications rather than the generated code.

Where Pith is reading between the lines

- The approach could be tested on other AI-assisted formal-verification tasks such as verified parsers or runtime systems.

- If the proofs hold, teams might produce verified components faster than with fully manual proof writing.

- Success here suggests that AI-generated proofs could be checked against independent test suites to increase confidence.

Load-bearing premise

The Lean proofs accurately capture the intended semantics of the compiler and contain no specification errors or gaps that would let incorrect code pass verification.

What would settle it

Discovery of a program that Axon compiles to incorrect target code while the Lean proofs still accept the translation as correct, or an audit that finds a missing case in the proof specifications.

Figures

read the original abstract

This paper presents the use of testing, credible compilation/translation validation, verification, and audits in the Axon compiler. Axon comes with fully machine checked proofs that guarantee the correctness of the generated code. All code and proofs were written in Lean by Claude Code, with the correctness proofs eliminating any need to audit or examine any verified code. I present a development process for using these validation techniques, evaluate the use of this process during the development of the compiler, and discuss implications for other development efforts.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript presents the Axon verified compiler developed in Lean, with all code and proofs generated by Claude Code. It claims that the fully machine-checked proofs guarantee the correctness of the generated code and eliminate any need to audit or examine the verified code. The paper describes a development process incorporating testing, credible compilation/translation validation, verification, and audits; evaluates the use of this process during compiler development; and discusses implications for other development efforts.

Significance. If the machine-checked proofs are faithful to the intended semantics of the source and target languages and the evaluation provides concrete evidence of the process's effectiveness, this work would demonstrate a viable workflow for AI-assisted construction of verified compilers. The explicit combination of multiple assurance techniques (testing, credible compilation, and verification) and the choice of Lean for machine-checked guarantees are notable strengths that could reduce human auditing effort in formal compiler development.

major comments (2)

- [Abstract] Abstract: The central claim that the machine-checked proofs 'guarantee the correctness of the generated code' and 'eliminate any need to audit or examine any verified code' is not supported by any description of the formalized semantics, the specific properties verified (e.g., semantic preservation, type safety), or the structure of the proofs. Without this information, it is impossible to determine whether the proofs are relative to a faithful specification or whether specification errors could allow incorrect code to pass verification.

- [Evaluation section] Throughout the manuscript (evaluation of the development process): The paper states that it evaluates the use of the development process but supplies no quantitative data such as the number of tests run, translation-validation cases checked, bugs found by each technique, verification effort in person-hours, or error rates. This absence prevents assessment of whether the process is effective or scalable.

Simulated Author's Rebuttal

We thank the referee for their constructive comments on our manuscript. We address each major comment below and indicate the revisions made to strengthen the paper.

read point-by-point responses

-

Referee: [Abstract] Abstract: The central claim that the machine-checked proofs 'guarantee the correctness of the generated code' and 'eliminate any need to audit or examine any verified code' is not supported by any description of the formalized semantics, the specific properties verified (e.g., semantic preservation, type safety), or the structure of the proofs. Without this information, it is impossible to determine whether the proofs are relative to a faithful specification or whether specification errors could allow incorrect code to pass verification.

Authors: We agree that the abstract's strong claims would be better supported by explicit details on the formalization. In the revised manuscript we have updated the abstract to qualify the guarantees and added a new subsection in Section 2 that describes the source and target language semantics, the verified property of semantic preservation (including type safety for the source language), and the high-level structure of the Lean proofs (inductive relations and tactic-based verification). This addition makes the scope of the machine-checked guarantees clear while preserving the paper's focus on the development process. revision: yes

-

Referee: [Evaluation section] Throughout the manuscript (evaluation of the development process): The paper states that it evaluates the use of the development process but supplies no quantitative data such as the number of tests run, translation-validation cases checked, bugs found by each technique, verification effort in person-hours, or error rates. This absence prevents assessment of whether the process is effective or scalable.

Authors: The referee correctly notes the absence of quantitative metrics. The evaluation in the original manuscript is descriptive, recounting how testing, credible compilation, verification, and audits were interleaved during the AI-assisted development of Axon. We did not systematically record the specific counts or effort figures requested. We have partially revised the evaluation section to clarify the qualitative outcomes (successful end-to-end verification and reduced auditing burden) and to explain the rationale for a case-study rather than a controlled quantitative study. We maintain that the described process still illustrates a viable workflow. revision: partial

- Quantitative metrics (test counts, bugs found per technique, person-hours, error rates) because these were not collected or logged during development.

Circularity Check

No circularity: verification claims rest on external machine-checked artifacts

full rationale

The paper presents a development process for the Axon compiler that incorporates testing, credible compilation, and Lean-based machine-checked proofs written with Claude Code. Its central claim—that these proofs guarantee correctness and eliminate the need to audit verified code—refers to the existence of independent formal artifacts rather than any internal mathematical derivation, fitted parameter, or self-referential equation. No equations, predictions, or load-bearing self-citations appear; the argument is self-contained against the external Lean proofs and does not reduce to its own inputs by construction.

Axiom & Free-Parameter Ledger

Forward citations

Cited by 1 Pith paper

-

Quantitative Comparison of Credible Compilation and Verification In Coding Agent Compiler Development

Full verification of compiler optimizations requires roughly an order of magnitude more development effort than credible compilation when implemented by a coding agent.

Reference graph

Works this paper leans on

-

[1]

Alfred V. Aho, Monica S. Lam, Ravi Sethi, and Jeffrey D. Ullman. 2006. Compilers: Principles, Techniques, and Tools(2nd ed.). Pearson Addison- Wesley, Boston, MA, USA

work page 2006

-

[2]

Andrew W. Appel, Robert Dockins, Aquinas Hobor, Lennart Beringer, Josiah Dodds, Gordon Stewart, Sandrine Blazy, and Xavier Leroy. 2014. Program Logics for Certified Compilers. Cambridge University Press, New York, NY, USA

work page 2014

-

[3]

Andrew W. Appel and Jens Palsberg. 2002.Modern Compiler Imple- mentation in Java(2nd ed.). Cambridge University Press

work page 2002

-

[4]

John Backus. 1978. The History of Fortran I, II, and III. InProceedings of the ACM SIGPLAN History of Programming Languages Conference (HOPL). ACM, Los Angeles, CA, USA, 25–74

work page 1978

-

[5]

John W. Backus, H. Stern, I. Ziller, R. A. Hughes, R. Nutt, R. J. Beeber, Sheldon Best, R. Goldberg, Lois M. Haibt, H. L. Herrick, R. A. Nelson, D. Sayre, and P. B. Sheridan. 1957. The FORTRAN Automatic Coding System. InPapers Presented at the February 26–28, 1957, Western Joint Computer Conference: Techniques for Reliability (IRE-AIEE-ACM 1957, Western)....

work page 1957

-

[6]

Junjie Chen, Jibesh Patra, Michael Pradel, Yingfei Xiong, Hongyu Zhang, Dan Hao, and Lu Zhang. 2020. A Survey of Compiler Testing. Comput. Surveys53, 1, Article 4 (2020), 36 pages

work page 2020

-

[7]

Adam Chlipala. 2007. A Certified Type-Preserving Compiler from Lambda Calculus to Assembly Language. InProceedings of the 28th ACM SIGPLAN Conference on Programming Language Design and Im- plementation (PLDI). 54–65

work page 2007

-

[8]

Adam Chlipala. 2010. A Verified Compiler for an Impure Functional Language. InProceedings of the 37th ACM SIGPLAN-SIGACT Sympo- sium on Principles of Programming Languages (POPL 2010). 93–106

work page 2010

-

[9]

Keith D. Cooper and Linda Torczon. 2011.Engineering a Compiler (2nd ed.). Morgan Kaufmann

work page 2011

-

[10]

Patrick Cousot and Radhia Cousot. 1977. Abstract Interpretation: A Unified Lattice Model for Static Analysis of Programs by Construction or Approximation of Fixpoints. InProceedings of the 4th ACM SIGACT- SIGPLAN Symposium on Principles of Programming Languages (POPL). ACM, 238–252

work page 1977

-

[11]

Patrick Cousot and Radhia Cousot. 1979. Systematic Design of Pro- gram Analysis Frameworks. InProceedings of the 6th ACM SIGACT- SIGPLAN Symposium on Principles of Programming Languages (POPL). ACM, 269–282

work page 1979

-

[12]

Leonardo de Moura and Sebastian Ullrich. 2021. The Lean 4 Theorem Prover and Programming Language. InProceedings of the 28th Inter- national Conference on Automated Deduction (CADE) (Lecture Notes in Computer Science, Vol. 12699). Springer, 625–635

work page 2021

-

[13]

Michael J. C. Gordon and Thomas F. Melham (Eds.). 1993.Introduc- tion to HOL: A Theorem Proving Environment for Higher Order Logic. Cambridge University Press

work page 1993

-

[14]

Jeehoon Kang, Yoonseung Kim, Youngju Song, Juneyoung Lee, Sanghoon Park, Mark Dongyeon Shin, Yonghyun Kim, Sungkeun Cho, Joonwon Choi, Chung-Kil Hur, and Kwangkeun Yi. 2018. Crel- lvm: Verified Credible Compilation for LLVM. InProceedings of the 39th ACM SIGPLAN Conference on Programming Language Design and Implementation (PLDI). ACM.https://sf.snu.ac.kr/crellvm/

work page 2018

-

[15]

Gary A. Kildall. 1973. A Unified Approach to Global Program Op- timization. InProceedings of the 1st Annual ACM SIGACT-SIGPLAN Symposium on Principles of Programming Languages (POPL). ACM, 194–206

work page 1973

-

[16]

Myreen, Michael Norrish, and Scott Owens

Ramana Kumar, Magnus O. Myreen, Michael Norrish, and Scott Owens

-

[17]

InProceedings of the 41st ACM SIGPLAN-SIGACT Symposium on Principles of Programming Languages (POPL)

CakeML: A Verified Implementation of ML. InProceedings of the 41st ACM SIGPLAN-SIGACT Symposium on Principles of Programming Languages (POPL). ACM, 179–191

-

[18]

Vu Le, Chengnian Sun, and Zhendong Su. 2015. Finding Deep Com- piler Bugs via Guided Stochastic Program Mutation. InProceedings of the 2015 ACM SIGPLAN International Conference on Object-Oriented Programming, Systems, Languages, and Applications (OOPSLA). 386– 399

work page 2015

-

[19]

Xavier Leroy. 2006. Formal Certification of a Compiler Back-end, or: Programming a Compiler with a Proof Assistant. InProceedings of the 33rd ACM SIGPLAN-SIGACT Symposium on Principles of Programming Languages (POPL). 42–54

work page 2006

-

[20]

Xavier Leroy. 2009. Formal Verification of a Realistic Compiler.Com- mun. ACM52, 7 (2009), 107–115

work page 2009

-

[21]

Xavier Leroy. 2009. A Formally Verified Compiler Back-end.Journal of Automated Reasoning43, 4 (2009), 363–446

work page 2009

-

[22]

Lopes, Juneyoung Lee, Chung-Kil Hur, Zhengyang Liu, and John Regehr

Nuno P. Lopes, Juneyoung Lee, Chung-Kil Hur, Zhengyang Liu, and John Regehr. 2021. Alive2: bounded translation validation for LLVM. In PLDI ’21: 42nd ACM SIGPLAN International Conference on Programming Language Design and Implementation, Virtual Event, Canada, June 20- 25, 2021, Stephen N. Freund and Eran Yahav (Eds.). ACM, 65–79

work page 2021

-

[23]

Darko Marinov. 2000.Credible Compilation. S.M. Thesis. Mas- sachusetts Institute of Technology, Department of Electrical Engi- neering and Computer Science, Cambridge, Massachusetts. Advisor(s) Martin C. Rinard.[dspace.mit.edu](https://dspace.mit.edu/handle/ 1721.1/86621)

work page 2000

-

[24]

John McCarthy and James Painter. 1967. Correctness of a Compiler for Arithmetic Expressions. InMathematical Aspects of Computer Science, J. T. Schwartz (Ed.). Proceedings of Symposia in Applied Mathematics, Vol. 19. American Mathematical Society, Providence, Rhode Island

work page 1967

-

[25]

F. H. McMahon. 1986.The Livermore Fortran Kernels: A Computer Test of the Numerical Performance Range. Technical Report UCRL-53745. Lawrence Livermore National Laboratory, Livermore, CA. Source distributed via netlib athttps://www.netlib.org/benchmark/livermore

work page 1986

-

[26]

Robin Milner and Richard Weyhrauch. 1972. Proving Compiler Cor- rectness in a Mechanized Logic. InMachine Intelligence 7, Bernard Meltzer and Donald Michie (Eds.). Edinburgh University Press, 51–72

work page 1972

-

[27]

David Monniaux and Cyril Six. 2021. Simple, light, yet formally ver- ified, global common subexpression elimination and loop-invariant code motion. InLCTES ’21: 22nd ACM SIGPLAN/SIGBED International Conference on Languages, Compilers, and Tools for Embedded Systems, Virtual Event, Canada, 22 June, 2021, Jörg Henkel and Xu Liu (Eds.). ACM, 85–96

work page 2021

- [28]

-

[29]

Scott Owens, Michael Norrish, Ramana Kumar, Magnus O. Myreen, and Yong Kiam Tan. 2017. Verifying efficient function calls in CakeML. Proc. ACM Program. Lang.1, ICFP (2017), 18:1–18:27

work page 2017

- [30]

-

[31]

Amir Pnueli, Michael Siegel, and Eli Singerman. 1998. Translation Val- idation. InProceedings of the 4th International Conference on Tools and Algorithms for Construction and Analysis of Systems (TACAS) (Lecture Notes in Computer Science, Vol. 1384). Springer, 151–166

work page 1998

-

[32]

Martin C. Rinard. 1999.Credible Compilation. Technical Report MIT- LCS-TR-776. MIT Laboratory for Computer Science. Martin Rinard

work page 1999

-

[33]

Martin C. Rinard and Darko Marinov. 1999. Credible Compilation with Pointers. InProceedings of the FLoC Workshop on Run-Time Result Verification (RTRV)

work page 1999

-

[34]

Cyril Six, Léo Gourdin, Sylvain Boulmé, David Monniaux, Justus Fasse, and Nicolas Nardino. 2022. Formally verified superblock scheduling. InCPP ’22: 11th ACM SIGPLAN International Conference on Certified Programs and Proofs, Philadelphia, PA, USA, January 17 - 18, 2022, Andrei Popescu and Steve Zdancewic (Eds.). ACM, 40–54

work page 2022

-

[35]

Chengnian Sun, Vu Le, and Zhendong Su. 2016. Finding Compiler Bugs via Live Code Mutation. InProceedings of the 2016 ACM SIGPLAN International Conference on Object-Oriented Programming, Systems, Languages, and Applications (OOPSLA). 849–863

work page 2016

-

[36]

Myreen, Ramana Kumar, Anthony Fox, Scott Owens, and Michael Norrish

Yong Kiam Tan, Magnus O. Myreen, Ramana Kumar, Anthony Fox, Scott Owens, and Michael Norrish. 2019. The Verified CakeML Com- piler Backend.Journal of Functional Programming29 (2019), e2

work page 2019

-

[37]

The Coq Development Team. 2024. The Coq Proof Assistant, Version 8.20.https://coq.inria.fr

work page 2024

-

[38]

Jean-Baptiste Tristan and Xavier Leroy. 2009. Verified Validation of Lazy Code Motion. InProceedings of the 30th ACM SIGPLAN Conference on Programming Language Design and Implementation (PLDI). 316– 326

work page 2009

-

[39]

Krister Walfridsson. 2022. Branch/cmove and Compiler Optimizations. Blog post.https://kristerw.github.io/2022/05/24/branchless/Accessed: 2026-04-29

work page 2022

-

[40]

1993.The Formal Semantics of Programming Lan- guages: An Introduction

Glynn Winskel. 1993.The Formal Semantics of Programming Lan- guages: An Introduction. MIT Press

work page 1993

-

[41]

Xuejun Yang, Yang Chen, Eric Eide, and John Regehr. 2011. Find- ing and Understanding Bugs in C Compilers. InProceedings of the 32nd ACM SIGPLAN Conference on Programming Language Design and Implementation (PLDI). 283–294

work page 2011

-

[42]

Zuck, Amir Pnueli, Yi Fang, and Benjamin Goldberg

Lenore D. Zuck, Amir Pnueli, Yi Fang, and Benjamin Goldberg. 2002. VOC: A Translation Validator for Optimizing Compilers.Electronic Notes in Theoretical Computer Science (ENTCS)65, 2 (2002), 2–18. Pro- ceedings of COCV 2002

work page 2002

-

[43]

Zuck, Amir Pnueli, Benjamin Goldberg, Clark W

Lenore D. Zuck, Amir Pnueli, Benjamin Goldberg, Clark W. Barrett, Yi Fang, and Ying Hu. 2005. Validating Optimizing Compilers.Electronic Notes in Theoretical Computer Science (ENTCS)141, 2 (2005), 37–53. Proceedings of COCV 2004

work page 2005

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.