Categorical triple formalizes LLM agent harness design

Harness Engineering as Categorical Architecture

The (G, Know, Phi) structure maps memory, skills, and protocols to components whose guarantees are preserved by compiler identity and replay

Programming Languages

Covers programming language semantics, language features, programming approaches (such as object-oriented programming, functional programming, logic programming). Also includes material on compilers oriented towards programming languages; other material on compilers may be more appropriate in Architecture (AR). Roughly includes material in ACM Subject Classes D.1 and D.3.

Harness Engineering as Categorical Architecture

The (G, Know, Phi) structure maps memory, skills, and protocols to components whose guarantees are preserved by compiler identity and replay

Automated Amortised Analysis of Skew Heaps and Leftist Heaps (Extended Version)

Enhanced inference supports all known potential functions and confirms established bounds for skew and leftist heaps.

full image

full image

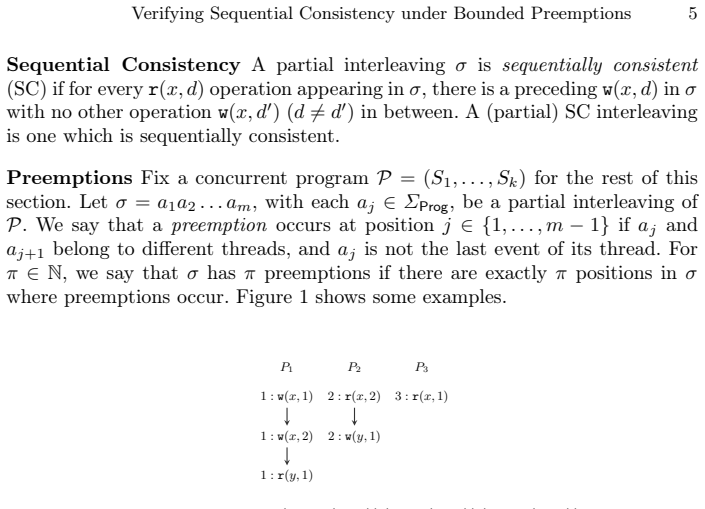

Verifying Sequential Consistency under Bounded Preemptions

The problem is polynomial-time solvable for single-writer code but NP-hard for two writers and ETH-hard for three.

full image

full image

Formal Verification of Imperative First-Class Functions in Move

Behavioral predicates and state labels let SMT check dynamic dispatch in Move without separation logic.

Combining Mechanical and Agentic Specification Inference for Move

The prover validates candidates for loop invariants and high-level properties until verification succeeds on code with loops and dynamic dis

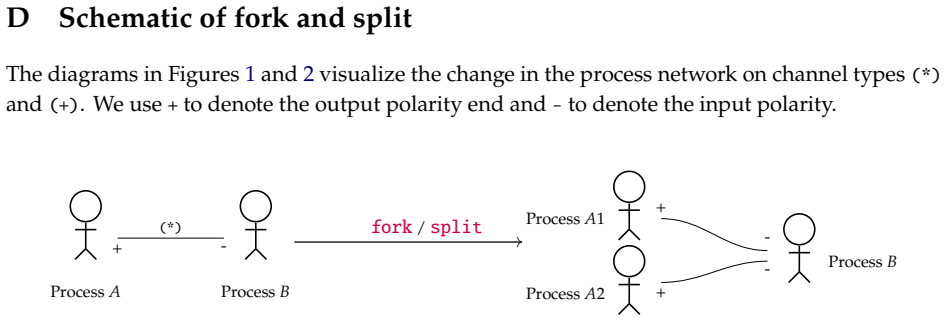

Categorical Message Passing Language (CaMPL) for programmers

Category-theory derived types keep non-recursive message-passing programs free of deadlock and livelock while allowing races and custom ch

full image

full image

Persistent Amortised Analysis, Operationally

An operational semantics reveals that restricting credits to thunks suffices for correct bounds, clarifying why the debit method works.

A coding agent comparison of compiler optimizations shows higher session counts and simpler algorithms for the verified versions.

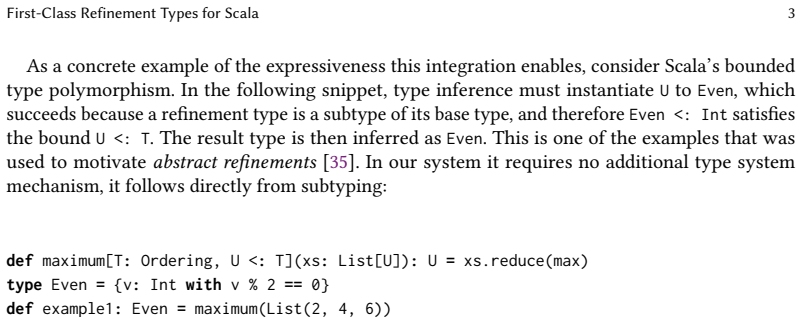

First-Class Refinement Types for Scala

Logical predicates participate in subtyping, inference, and pattern matching without separate specifications

full image

full image

A New Interaction Concept for Interactive and Autoactive Program Verification

The interface maps logical proofs back to original code and specs, letting users understand failures and locate defects without low-level re

full image

full image

Bounding Fixed Points of Non-Monotone Processes: Theory to Practice

A sound anytime algorithm tightens AFT approximations, terminates on finite lattices, and accelerates answer set programming and speculative

full image

full image

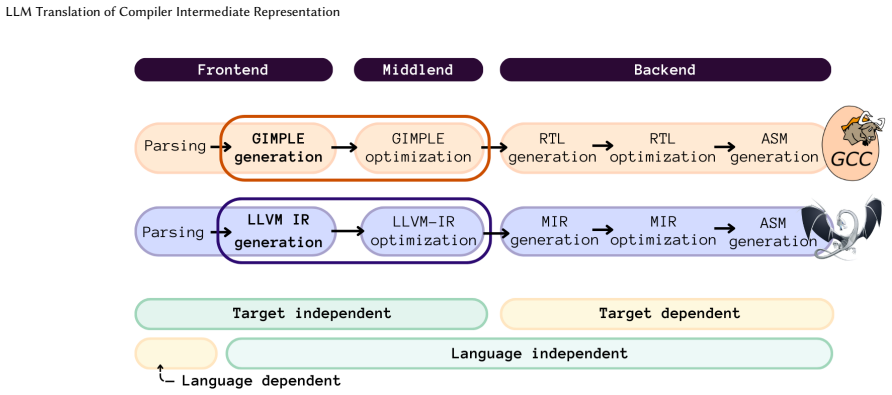

LLM Translation of Compiler Intermediate Representation

Fine-tuned on paired examples from real C code, it beats general models by up to 44 points and enables cross-toolchain reuse.

full image

full image

GPU-Accelerated Synthesis of Mixed-Boolean Arithmetic: Beyond Caching

Bottom-up design without caches scales to larger specifications and bigger expressions for deobfuscation tasks.

full image

full image

Beyond BLEU: A Semantic Evaluation Method for Code Translation

LLM decompilers pass far more runtime checks than heuristics, with correlation to string overlap near zero.

full image

full image

Interaction Tree Semantics for RISC-V: Bridging Compiler and Hardware Verification

Interaction Trees support machine-checked bisimulation proofs of equivalence and correctness, validated by passing all standard test suites.

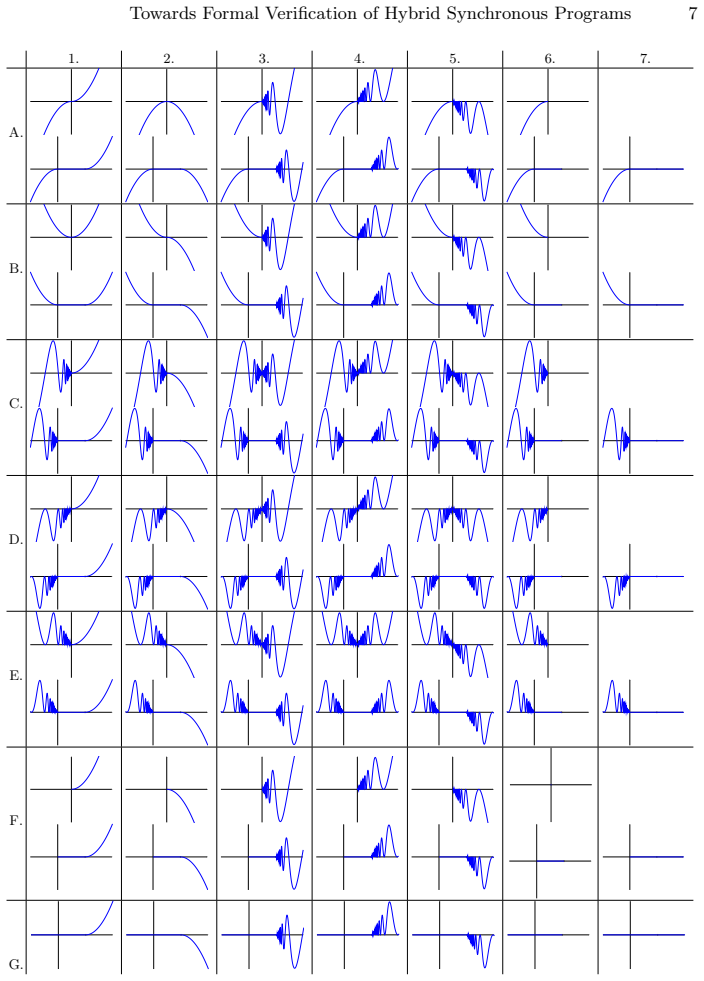

Towards Formal Verification of Hybrid Synchronous Programs with Refinement Types

Formal semantics for initial value problems and zero crossings support sound safety proofs on executable code.

full image

full image

Governed Metaprogramming for Intelligent Systems: Reclassifying Eval as a Governed Effect

Dynamic program generation in intelligent systems requires treating the move from form to execution as an inspected authority step.

Governed Metaprogramming for Intelligent Systems: Reclassifying Eval as a Governed Effect

Program forms stay first-class and pure while materialization requires inspection of capabilities and policy before execution.

Pact: A Choreographic Language for Agentic Ecosystems

Designers can solve for the decision policies that self-interested agents will follow in multi-party settings.

full image

full image

Transpiling functional neural specifications enables infinite-horizon safety proofs for discrete and continuous systems across Rocq, Is

full image

full image

Compile-Time Java Stream Fusion via mapMulti

Merges map and filter stages to remove intermediate objects without converting pipelines to loops.

full image

full image

How to benchmark: the Measure-Explain-Test-Improve loop

Measure, explain, test, and improve steps let novices create solid performance evaluations in programming language work.

Towards Definitional Interpreters for Hoare Logics

Operating on proof structure rather than code lets correctness rules guide semantics for heaps, recursion, and subtyping.

full image

full image

Machine-checked guarantees from Claude Code remove the need to inspect the generated code itself.

full image

full image

Fully machine-checked Lean proofs written by Claude Code remove any need to audit generated code

full image

full image

FlowBook: Enforcing Reproducibility in Computational Notebooks

FlowBook enforces notebook reproducibility by checking whether top-to-bottom execution from an empty state matches recorded outputs, using…

DITRON: Distributed Multi-level Tiling Compiler for Parallel Tensor Programs

DITRON's three-level hierarchy enables 6-30% kernel speedups and over 10% MFU gains in LLM training and inference without hand tuning.

SoCal: A Language for Memory-Layout Factorization of Recursive Datatypes

SoCal and Colobus turn interleaved trees into factored buffers, producing 1.46x speedup on tree benchmarks without manual rewriting.

full image

full image

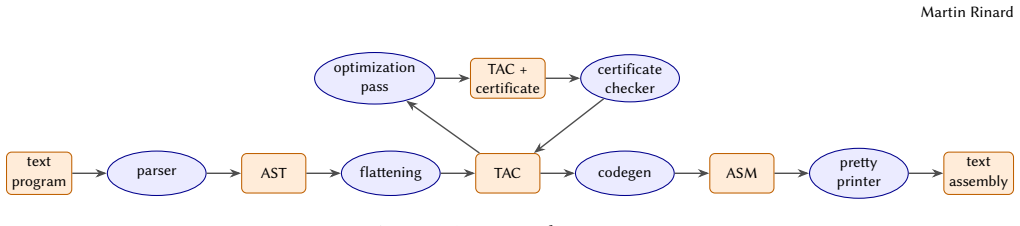

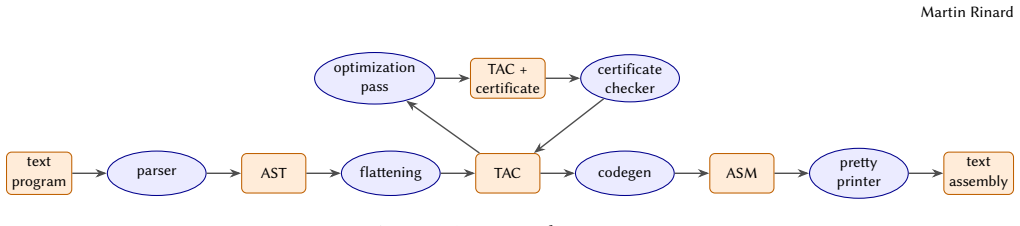

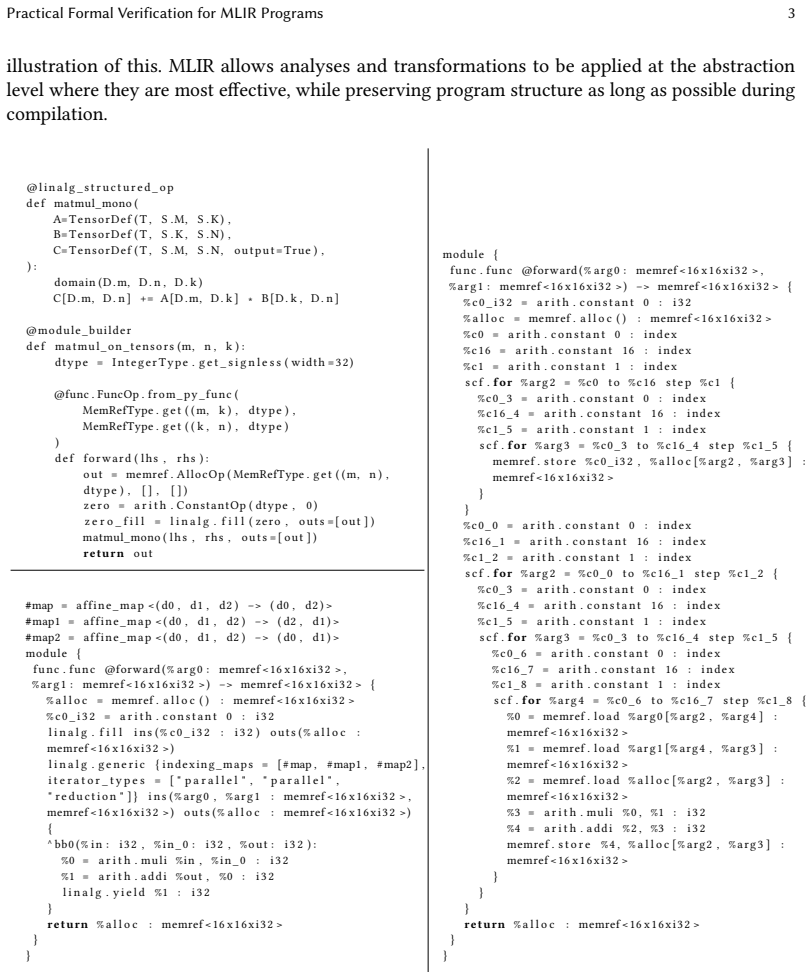

Practical Formal Verification for MLIR Programs

Hybrid interpretation verifies optimizations on toolchains and hundreds of variants without depending on implementation details

full image

full image

Structural SOGAT formulation yields correct extraction to untyped lambda calculus via presheaf gluing.

A Monadic Implementation of Functional Logic Programs

A pure monadic encoding supports memoization of non-deterministic choices and advanced features such as functional patterns to deliver the 0

full image

full image

Predicates become input-output tables; graded effects and relevance types keep support finite while handling Datalog and aggregation.

full image

full image

Hybrid Path-Sums for Hybrid Quantum Programs

A new symbolic form and rewriting rules let tools check equivalence and probabilities in programs that combine quantum and classical control

full image

full image

Compile-Time Tensor Shape Checking via Staged Shape-Dependent Types

Shape consistency conditions are checked before code generation, so successfully compiled tensor programs are guaranteed to be free of shape

full image

full image

Remote Concolic Multiverse Debugging -- Extended Version with Additional Appendices

By selecting only input values that create distinct paths during live sessions, the debugger shrinks explored states while preserving full代码

Ownership Refinement Types for Pointer Arithmetic and Nested Arrays

Allowing ownership to reference outer indices enables sound checking of programs with matrices and pointer arithmetic.

From Monolithic to Compositional: A Compositional Operational Semantics for Crystality

Local engines plus global coordinator yield simple proofs of isolation and commutativity without behavioral change.

full image

full image

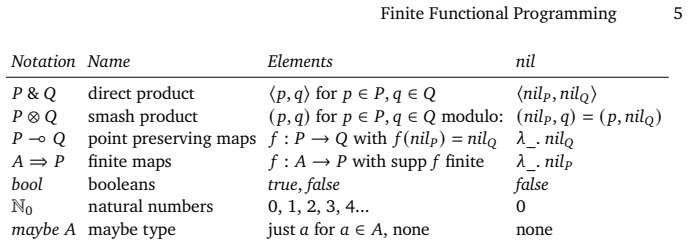

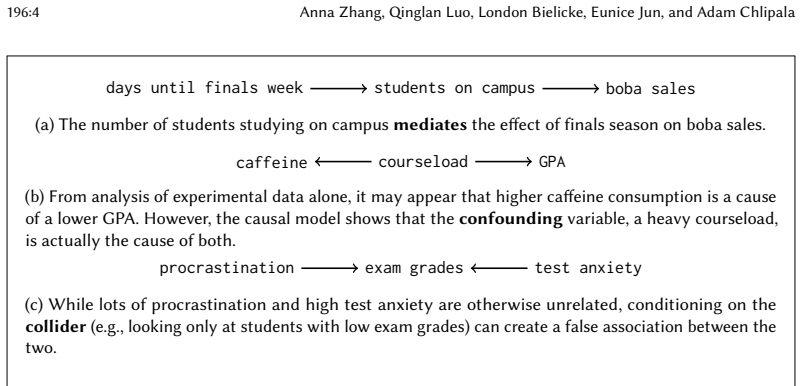

Causality and Semantic Separation

Mechanized result shows d-separation for confounders equals a security-style semantic property, without probabilities.

full image

full image

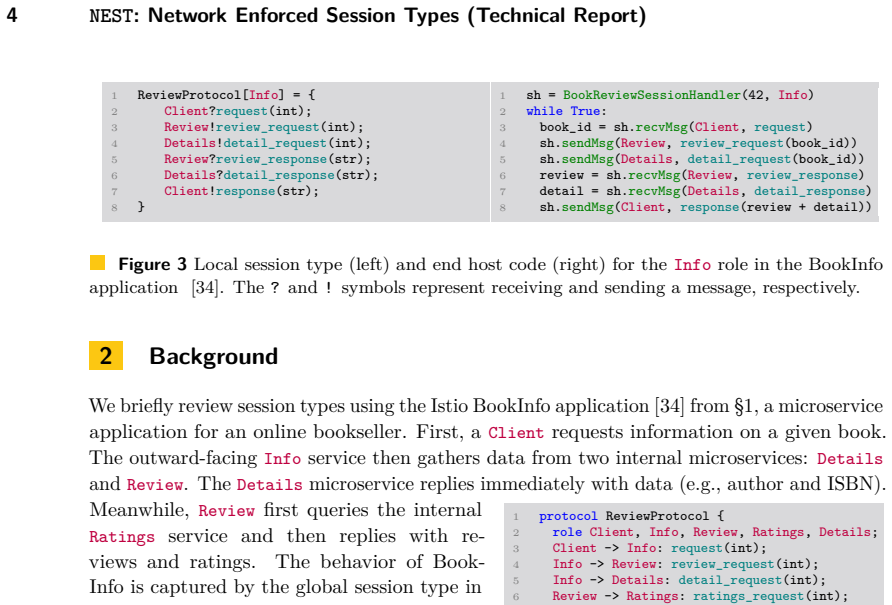

NEST: Network Enforced Session Types (Technical Report)

Algorithms translate session types into P4 programs that detect violations even with packet loss and reordering.

full image

full image

They serve as implicit linear arguments resolved by an extended GHC solver, with safety ensured by desugaring to Linear Haskell.

full image

full image

Automatic Code and Test Generation of Smart Contracts from Coordination Models

A toolchain validates high-level specs, emits code, and produces tests for dynamic decentralized workflows on blockchain.

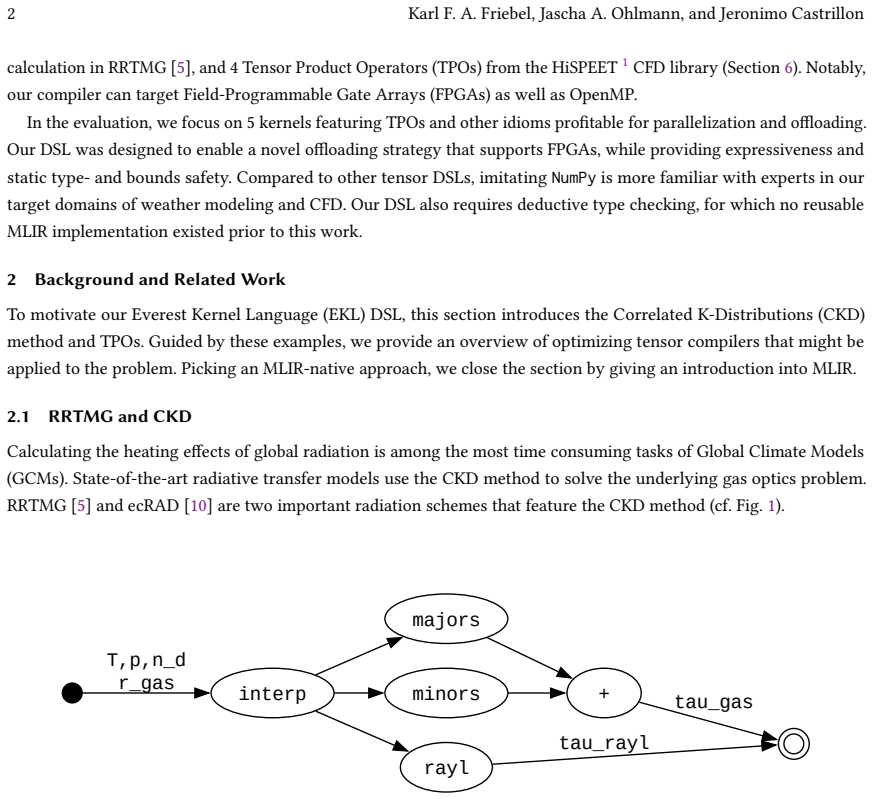

Demonstrating a Future for MLIR-native DSL Compilers on a NumPy-like Example

Dialect-agnostic type checker and parallel lowering enable native analyses and offloading for weather and CFD workloads.

full image

full image

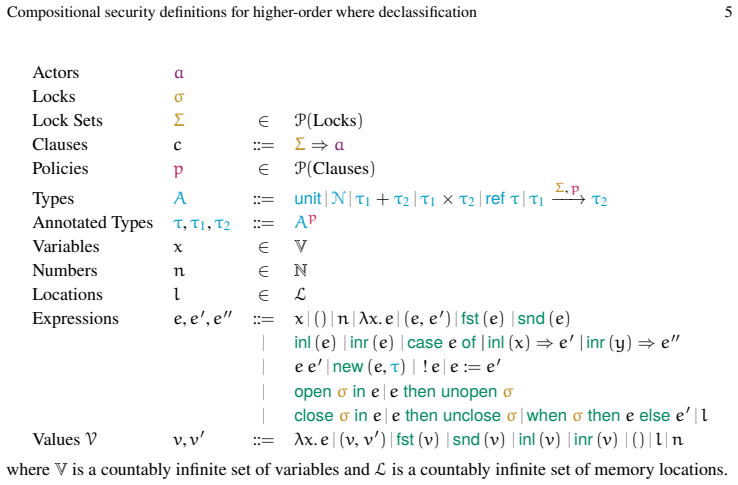

Compositional security definitions for higher-order where declassification

Halting indistinguishability checks after relevant releases produces a compositional definition stronger than prior lower-order work.

full image

full image

Implementing CPSLint: A Data Validation and Sanitisation Tool for Industrial Cyber-Physical Systems

CPSLint replaces repetitive ad-hoc scripts with reusable expressions for time-series validation and preparation in industrial systems.

full image

full image

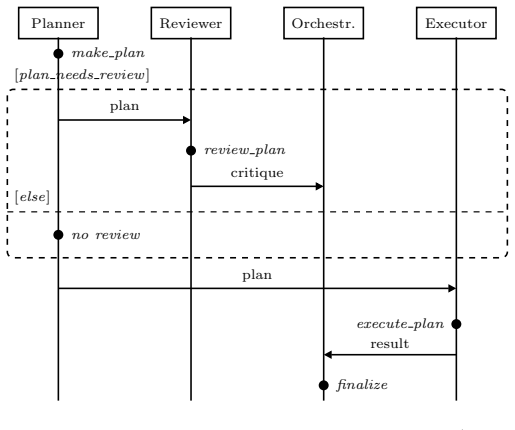

Provable Coordination for LLM Agents via Message Sequence Charts

A syntax-directed method separates coordination from unpredictable LLM actions and guarantees no deadlocks.

full image

full image

&inator: Correct, Precise C-to-Rust Interface Translation

Constraint solver yields declarations that admit semantics-preserving safe-Rust implementations

full image

full image

Partitioning Unstructured Sparse Tensor Algebra for Load-Balanced Parallel Execution

Generalizes merging techniques to multi-operand hierarchical structures and generates competitive parallel kernels for CPUs and GPUs.

full image

full image

Shift schema drift left: policy-aware compile-time contracts for typed JVM and Spark pipelines

Framework proves producer compatibility before runtime and adds checks Spark omits at the sink.

full image

full image

jMT: Testing Correctness of Java Memory Models (Extended Version)

The tool constructs per-program multi-execution semantics to check new JMM proposals against compiler behaviors and optimizations.

full image

full image

Literate execution uses provenance tracking in Fluid to let readers explore how inputs shape results through computed explanations and views

full image

full image

Synthesizing Backward Error Bounds, Backward

Shel morphisms and an automated search yield stability proofs for programs with variable reuse that manual methods could not reach.

full image

full image

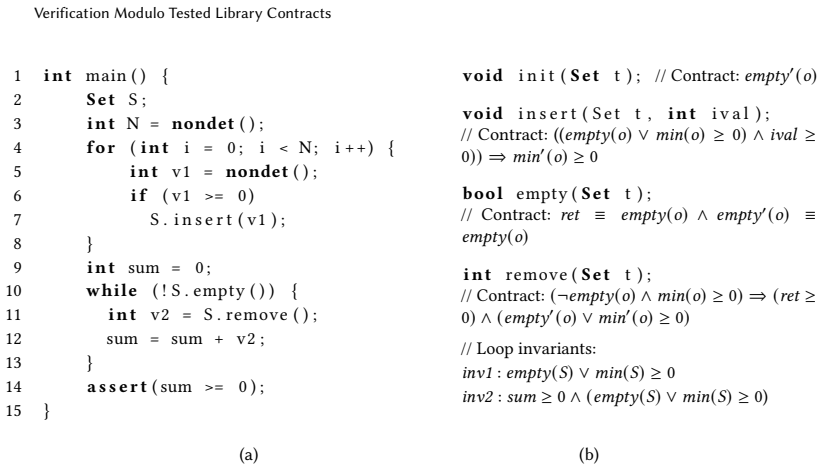

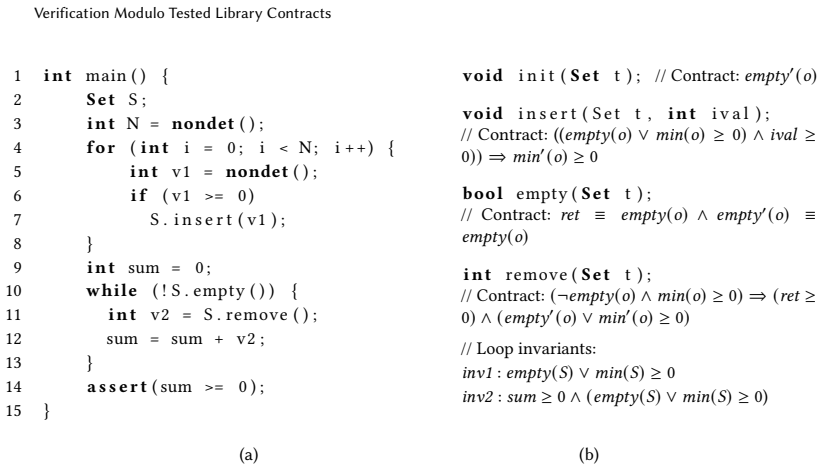

Verification Modulo Tested Library Contracts

A learning loop refines modular and contextual contracts until they prove the client correct and pass library tests.

full image

full image

Verification Modulo Tested Library Contracts

Counterexample-guided CHC learning produces adequate modular and contextual contracts checked against library implementations

full image

full image

Pure Borrow: Linear Haskell Meets Rust-Style Borrowing

A library enables safe parallel mutation inside pure functions while retaining laziness and leak freedom.

full image

full image

Prism: Symbolic Superoptimization of Tensor Programs

Prism is the first symbolic superoptimizer for tensor programs that uses sGraph for compact representation of program families, two-level…

full image

full image

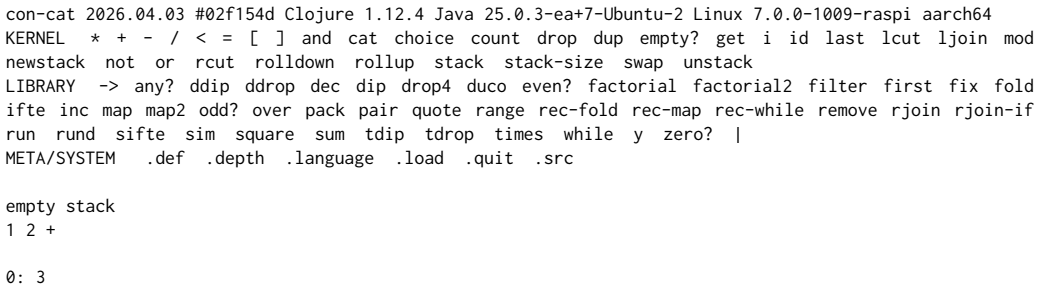

What if we have 90 minutes only to teach programming?

CON-CAT removes accidental complexity so novices reach core computing ideas through direct puzzles.

full image

full image

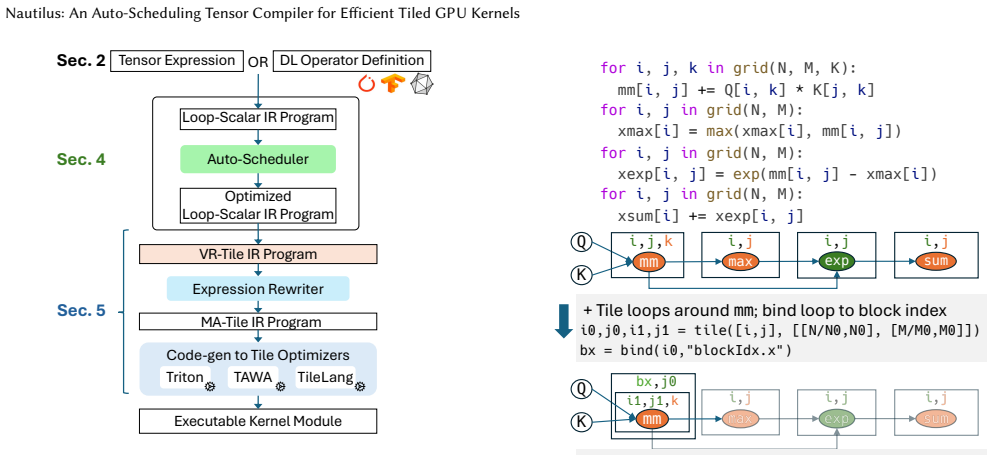

Nautilus: An Auto-Scheduling Tensor Compiler for Efficient Tiled GPU Kernels

Nautilus discovers efficient tiled GPU code automatically, delivering up to 42% higher throughput than prior compilers on modern hardware

full image

full image

Filament: Denning-Style Information Flow Control for Rust

Macros and type inference track explicit and implicit flows with low overhead and fewer restrictions than prior library approaches.

full image

full image

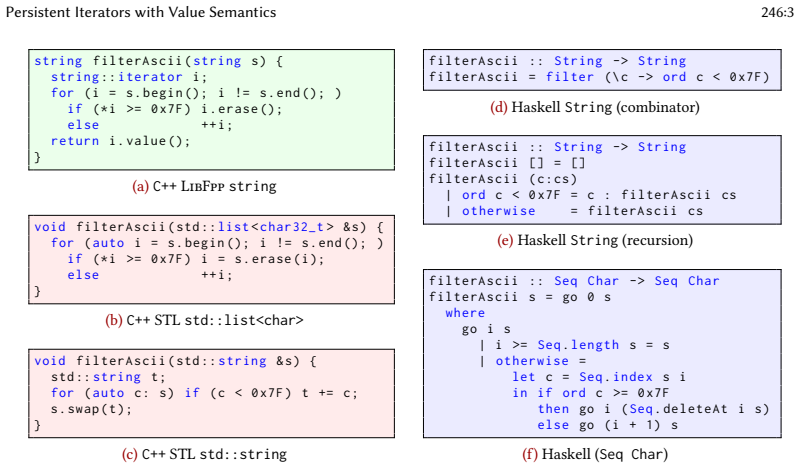

Persistent Iterators with Value Semantics

Local copies of each snapshot eliminate invalidation and aliasing while preserving ordinary iterator-based code style.

full image

full image

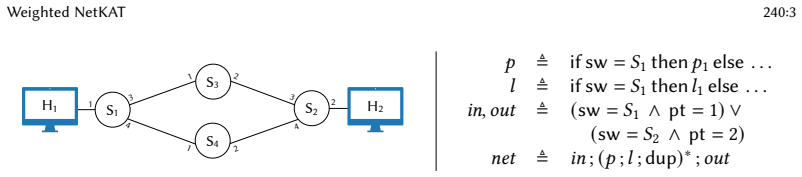

Weighted NetKAT: A Programming Language For Quantitative Network Verification

Semiring-parametric language and automata give automatic decision procedures even when programs contain unbounded iteration.

full image

full image

AI Coding Agents Need Better Compiler Remarks

Ambiguous feedback triggers hallucinations while structured analysis unlocks small models for safe refactoring.

full image

full image

On the Decidability of Verification under Release/Acquire

The result settles a seven-year open question and shows decidability returns only when both context switches and RMWs are bounded.

full image

full image

Erlang Binary and Source Code Obfuscation

Targeted transformations preserve runtime behavior while blocking standard decompilers and reverse engineering.

full image

full image

Cerisier: A Program Logic for Attestation in a Capability Machine

It supplies a universal contract that lets developers prove end-to-end security for systems mixing trusted enclaves, untrusted code, and att

full image

full image

Optimal Predicate Pushdown Synthesis

Framework produces strongest pre-filters and weakest post-filters for stateful computations, delivering 2.4× average speedup across 150 real

full image

full image

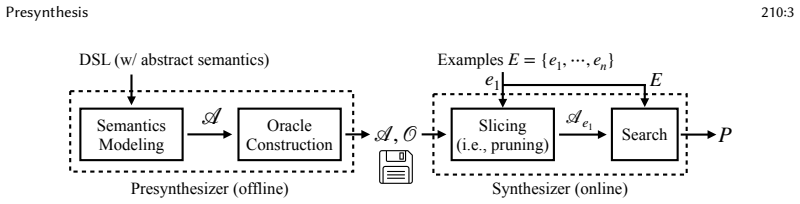

Presynthesis: Towards Scaling Up Program Synthesis with Finer-Grained Abstract Semantics

A tree automaton and oracle built once enable fast, sound pruning across SQL, string, and matrix tasks.

full image

full image

Towards a Linear-Algebraic Hypervisor

A new parallel VM maps general-purpose code to concurrent GPU runs, addressing underused hardware in synthesis and optimization.

full image

full image

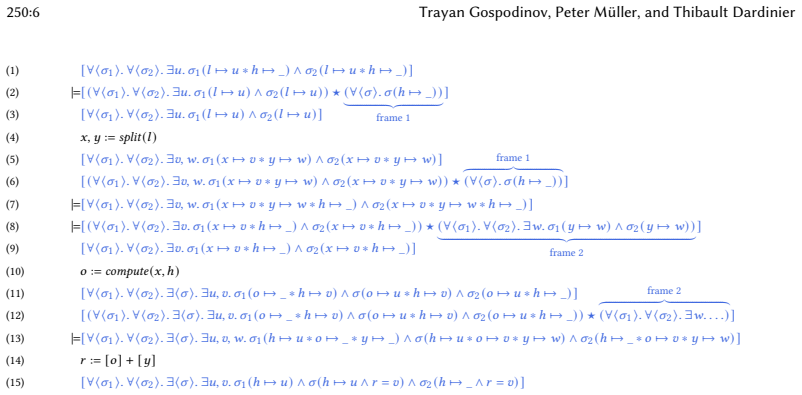

Hyper Separation Logic (extended version)

It lifts separating conjunction to sets of states so a frame rule stays sound for mixed quantifiers in imperative code.

full image

full image

Privacy budgets are treated as resources so that complex libraries with filters, caching, and interactive algorithms can be checked modularl

full image

full image

Polyregular equivalence is undecidable in higher-order types

Reduction from tiling shows no algorithm can check if two such functions match

λ_A: A Typed Lambda Calculus for LLM Agent Composition

Extends simply-typed lambda calculus with oracle calls and bounded fixpoints, proves safety, and shows 94 percent of real GitHub configs are

Streaming algorithm runs faster than cubic predecessors and adds low overhead inside TSAN.

full image

full image

Lifting from restricted models yields robust analyses for full programs that behave predictably under changes.

full image

full image

Parameterized Algorithms and Complexity for Function Merging with Branch Reordering

Two algorithms show the problem stays tractable even with unbounded nesting in one function; hardness holds for constant d1, b2, d2.

full image

full image

Emulation-Completeness of Programming Languages

Turing-completeness leaves out control flow, exceptions, timing, and metadata that real programs observe.

Denotational reasoning for asynchronous multiparty session types

The model handles non-blocking sends and message reordering while ensuring safety, deadlock-freedom and liveness.

full image

full image

Points-to Analysis Using MDE: A Multi-level Deduplication Engine for Repetitive Data and Operations

Recursive removal of over 90% redundant set operations yields 8x runtime gains that increase with program size.

Vanilla Object Orientation (VOO): A Value-Semantics Approach to Classes in Tcl

Native lists and copy-on-write replace framework code, cutting creation time and memory use while keeping the same script API.

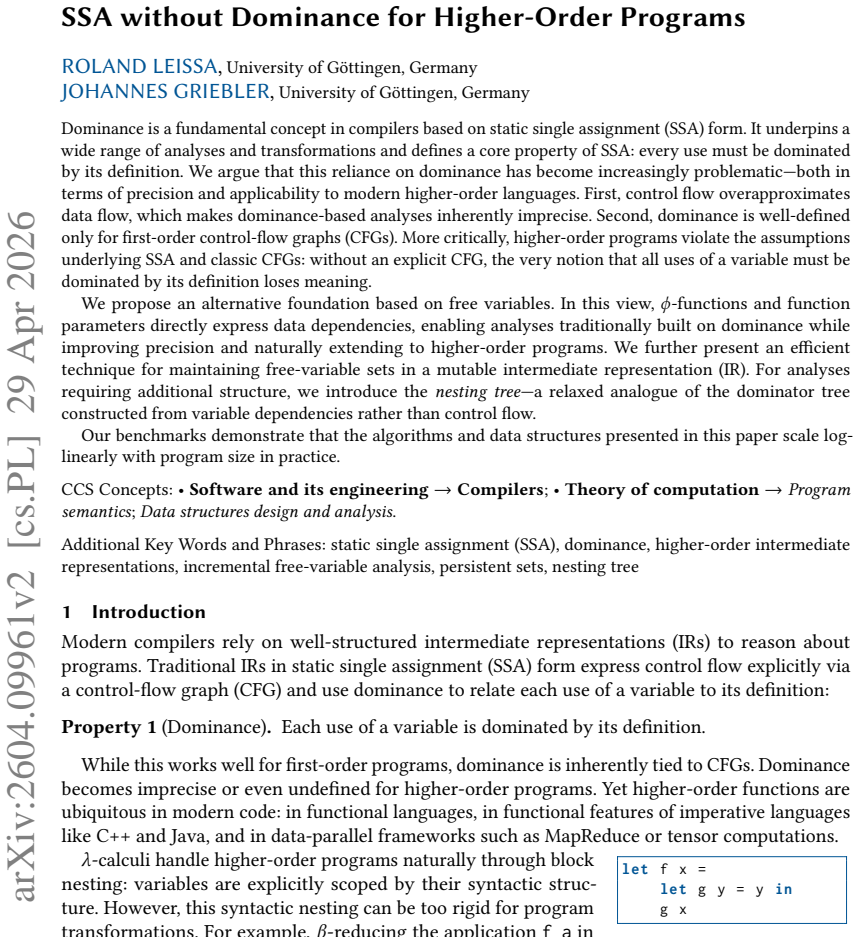

SSA without Dominance for Higher-Order Programs

Tracking dependencies directly through phi-functions and parameters improves precision and removes the need for control-flow graphs.

full image

full image

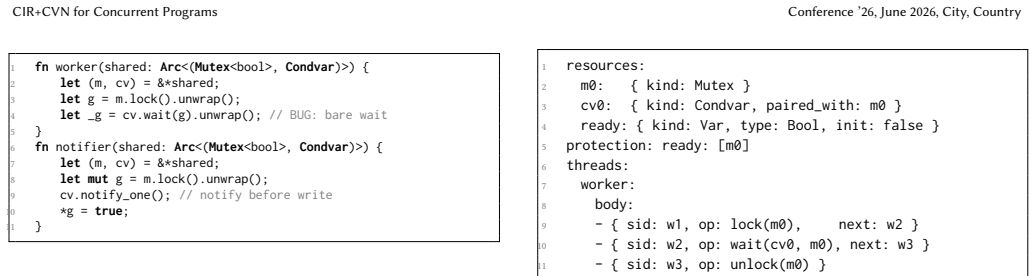

CIR+CVN: Bridging LLM Semantic Understanding and Petri-Net Verification for Concurrent Programs

Natural language requirements turn into explicit structures that enable exhaustive checking, counterexample repair, and preservation of crit

full image

full image