DBLP: Phase-Aware Bounded-Loss Transport for Burst-Resilient Distributed ML Training

Pith reviewed 2026-05-09 17:22 UTC · model grok-4.3

The pith

DBLP is a phase-aware bounded-loss transport protocol that cuts distributed ML training time by an average of 24.4% while tolerating higher network loss.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

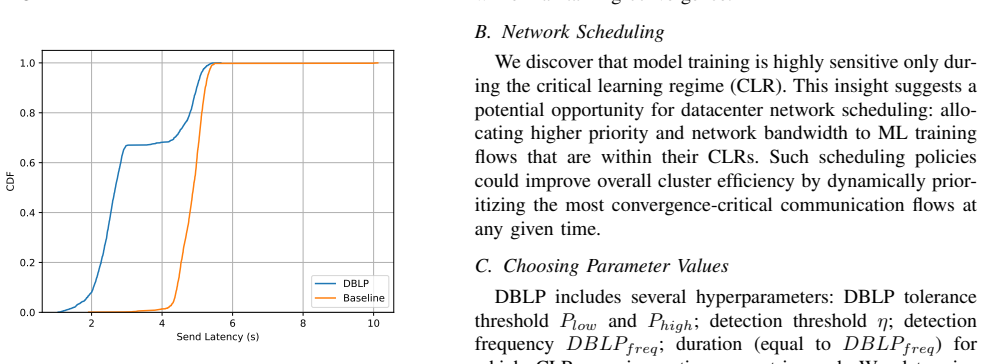

The central claim is that a training-phase-aware transport protocol called DBLP can dynamically set different loss tolerances for gradients depending on the current training phase, thereby achieving burst resilience, reducing end-to-end training time by an average of 24.4% and a maximum of 33.9%, and providing up to 5.88x latency speedups during microbursts while maintaining comparable test accuracy.

What carries the argument

The Dynamic Bounded-Loss Protocol (DBLP), which detects the current training phase and adjusts the bounded-loss tolerance for gradient transmissions accordingly.
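
To make the mechanism concrete, here is a minimal sketch of a phase-dependent completion rule for one gradient transfer. It is not the paper's implementation: the phase names, the tolerance values, the `GradientRound` type, and the `can_complete` helper are all hypothetical, and the assumption that the early phase is stricter than the late phase is made only for illustration.

```python
from dataclasses import dataclass

# Hypothetical per-phase loss tolerances: the fraction of a gradient's
# packets that may be missing. Illustrative values, not from the paper.
PHASE_TOLERANCE = {"early": 0.01, "late": 0.10}


@dataclass
class GradientRound:
    """Tracks packet arrival for one gradient transfer."""
    total_packets: int
    received_packets: int = 0

    def loss_fraction(self) -> float:
        """Fraction of this round's packets that have not arrived."""
        return 1.0 - self.received_packets / self.total_packets


def can_complete(round_: GradientRound, phase: str) -> bool:
    """Return True when the receiver may stop waiting for missing packets.

    The round completes once the missing fraction is within the bound
    assigned to the current training phase.
    """
    return round_.loss_fraction() <= PHASE_TOLERANCE[phase]


r = GradientRound(total_packets=1000, received_packets=950)
print(can_complete(r, "early"))  # 5% missing exceeds the 1% bound
print(can_complete(r, "late"))   # 5% missing is within the 10% bound
```

Under these assumed bounds, the same 5% loss forces the early-phase round to keep waiting while the late-phase round finishes immediately, which is the kind of behavior that would shorten rounds during a microburst.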

If this is right

- DBLP tolerates significantly higher loss rates than the baseline while achieving comparable test accuracy.

- End-to-end training time is reduced by an average of 24.4% and up to 33.9%.

- Single-round communication latency improves by up to 5.88x during microburst events.

- Burst-induced tail-latency spikes are prevented, leading to stable training performance.
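
The headline numbers mix two conventions: training time is reported as a percentage reduction and latency as a speedup factor. As a reading aid, if time falls by a fraction r the equivalent speedup is 1/(1 - r), and a speedup of s corresponds to a time reduction of 1 - 1/s. Applying that to the reported figures gives approximately:

```latex
\[
\frac{1}{1-0.244}\approx 1.32\times,\qquad
\frac{1}{1-0.339}\approx 1.51\times,\qquad
1-\frac{1}{5.88}\approx 0.83
\]
```

So the average and maximum training-time reductions correspond to roughly 1.32x and 1.51x end-to-end speedups, and the 5.88x latency speedup corresponds to a single-round latency about 83% lower than the baseline during a microburst.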

Where Pith is reading between the lines

- If phase detection is accurate, similar phase-aware adjustments could benefit other collective communication operations in distributed systems.

- The protocol's hardware-agnostic nature suggests it could be deployed across various network infrastructures without specialized equipment.

- Replacing discrete per-phase tolerances with a continuous loss-tolerance function might yield further optimizations if phase boundaries are not sharp.
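
The last point can be illustrated by contrasting a step schedule with a ramp. This is a sketch under stated assumptions: `progress` (the fraction of training completed) stands in for whatever signal a real detector would use, and the tolerance values, the 0.3 phase boundary, and both function names are hypothetical.

```python
def phase_based_tolerance(progress: float) -> float:
    """Step schedule: one tolerance per discrete phase (hypothetical values)."""
    return 0.01 if progress < 0.3 else 0.10


def continuous_tolerance(progress: float, low: float = 0.01, high: float = 0.10,
                         start: float = 0.2, end: float = 0.4) -> float:
    """Linear ramp from `low` to `high` as progress moves from `start` to `end`."""
    if progress <= start:
        return low
    if progress >= end:
        return high
    t = (progress - start) / (end - start)
    return low + t * (high - low)


for p in (0.0, 0.25, 0.3, 0.35, 1.0):
    print(f"progress={p:.2f} step={phase_based_tolerance(p):.3f} "
          f"ramp={continuous_tolerance(p):.3f}")
```

The step schedule jumps from the low to the high bound at a single point, so a misplaced boundary applies the wrong tolerance outright; the ramp spreads the transition, which limits the cost of an imprecise boundary.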

Load-bearing premise

The assumption that different training phases have sufficiently distinct and predictable loss tolerances for gradients that can be detected dynamically and exploited by the network layer without degrading the model's convergence or final accuracy.

What would settle it

Measuring model accuracy after intentionally increasing loss tolerance beyond the phase-specific bounds during a training run and observing whether accuracy decreases compared to the baseline.
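
The shape of such a sweep can be sketched on a toy problem. The code below is not the paper's experiment and says nothing about neural-network accuracy: it minimizes a simple quadratic, treats each dropped gradient coordinate as zero, and compares the final error against a lossless baseline. The function name, the objective, and all parameter values are assumptions chosen for illustration.

```python
import random


def final_error(drop_prob: float, dim: int = 200, steps: int = 50,
                lr: float = 0.1, seed: int = 0) -> float:
    """Minimize 0.5 * ||w||^2 by gradient descent with lossy gradients.

    Each gradient coordinate is independently dropped (treated as zero)
    with probability `drop_prob`, mimicking unrecovered packet loss.
    Returns the final squared distance to the optimum at w = 0.
    """
    rng = random.Random(seed)
    w = [1.0] * dim
    for _ in range(steps):
        for i in range(dim):
            grad_i = w[i]                    # gradient of 0.5 * w_i^2
            if rng.random() >= drop_prob:    # coordinate was delivered
                w[i] -= lr * grad_i
    return sum(x * x for x in w)


baseline = final_error(drop_prob=0.0)
for p in (0.05, 0.2, 0.5, 0.9):
    print(f"drop_prob={p:.2f} final_error={final_error(p):.6f} "
          f"baseline={baseline:.6f}")
```

A real version of this test would replace the quadratic with an actual training run, replace `final_error` with held-out test accuracy, and sweep the tolerance separately within each detected phase.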

Original abstract

Distributed machine learning (ML) training has become a necessity with the prevalence of billion to trillion-parameter-scale models. While prior work has improved training efficiency from the ML perspective at the application layer, it often fails to address transient congestion events at the network layer that introduce severe tail latency and training-time variability, thereby undermining the quality of service (QoS) of distributed ML training systems. Existing network optimizations treat all gradients equally and thus fail to integrate sufficient model-training insights into communication protocol design. In this paper, we present Dynamic Bounded-Loss Protocol (DBLP), a burst-resilient, training-phase-aware, and hardware-agnostic transport protocol that incorporates model-level tolerance properties into gradient communication. By dynamically adjusting gradient loss tolerance across training phases, DBLP reduces overall training time and mitigates tail-latency collapse during transient high-loss events (i.e., microbursts). Compared to the current state-of-the-art solution (baseline), DBLP tolerates significantly higher loss while achieving comparable test accuracy, and reduces end-to-end training time by an average of 24.4% and a maximum of 33.9%. At microburst events, DBLP achieves up to 5.88x single-round communication latency speedups over the baseline, preventing burst-induced tail-latency spikes and maintaining stable training performance.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes Dynamic Bounded-Loss Protocol (DBLP), a burst-resilient, phase-aware transport protocol for distributed ML training. It dynamically adjusts per-gradient loss tolerance according to detected training phases to tolerate higher packet loss during microbursts while preserving convergence and final accuracy. The central empirical claims are that DBLP achieves comparable test accuracy to a state-of-the-art baseline despite significantly higher loss tolerance, reduces end-to-end training time by 24.4% on average (maximum 33.9%), and delivers up to 5.88x single-round communication latency speedup during microburst events.

Significance. If the results are robust, the work offers a concrete mechanism for injecting model-training-phase information into the network layer, addressing a practical QoS problem in large-scale distributed training. The hardware-agnostic framing and focus on transient congestion rather than steady-state bandwidth are strengths that could influence future transport designs for ML workloads.

Major comments (2)

- Abstract: the quantitative claims (24.4% average training-time reduction, 5.88x microburst latency speedup, comparable accuracy at higher loss) are presented without any description of experimental setup, model architectures, datasets, network topologies, baseline implementations, number of runs, or statistical tests. This absence is load-bearing for the central empirical contribution and prevents assessment of whether the data support the stated improvements.

- The paper's core assumption—that training phases exhibit sufficiently distinct and predictable loss tolerances that can be detected and exploited at the network layer without harming convergence—requires explicit validation through phase-detection accuracy, ablation on phase granularity, and convergence curves. Without such evidence the performance gains cannot be confidently attributed to the phase-aware mechanism rather than other factors.
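
One simple way to report the phase-detection accuracy requested above is per-iteration agreement between the detector's output and a reference labeling. The sketch below assumes such reference labels exist; how they would be obtained is itself an open question, and the function name and label strings are hypothetical.

```python
from typing import Sequence


def phase_detection_accuracy(predicted: Sequence[str],
                             reference: Sequence[str]) -> float:
    """Fraction of iterations where the detected phase matches the reference."""
    if len(predicted) != len(reference):
        raise ValueError("label sequences must have equal length")
    if not reference:
        raise ValueError("label sequences must be non-empty")
    matches = sum(p == r for p, r in zip(predicted, reference))
    return matches / len(reference)


reference = ["early"] * 4 + ["late"] * 6
predicted = ["early"] * 5 + ["late"] * 5   # detector switches one step late
print(phase_detection_accuracy(predicted, reference))
```

A single aggregate figure hides where the errors fall. Reporting the delay at each phase transition alongside it would show whether mistakes cluster at the boundaries, where applying the wrong tolerance matters most.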

Minor comments (1)

- The abstract would benefit from a single sentence outlining the phase-detection method or the bounded-loss adjustment rule to give readers immediate context for the claimed gains.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback. The comments highlight important opportunities to improve the clarity of our empirical claims and the explicit validation of DBLP's phase-aware design. We address each major comment below and outline the corresponding revisions.

Referee: Abstract: the quantitative claims (24.4% average training-time reduction, 5.88x microburst latency speedup, comparable accuracy at higher loss) are presented without any description of experimental setup, model architectures, datasets, network topologies, baseline implementations, number of runs, or statistical tests. This absence is load-bearing for the central empirical contribution and prevents assessment of whether the data support the stated improvements.

Authors: We agree that the abstract would be strengthened by briefly contextualizing the quantitative results. Although Sections 4 and 5 already provide full details on models (ResNet-50, BERT), datasets (ImageNet, GLUE), topologies (4-node 100 Gbps Ethernet with microburst injection), baseline (state-of-the-art bounded-loss transport), and statistical reporting (5 runs with standard deviation), we will revise the abstract to include a concise sentence summarizing these elements. This change will make the claims self-contained while respecting length constraints.

Revision: yes

Referee: The paper's core assumption—that training phases exhibit sufficiently distinct and predictable loss tolerances that can be detected and exploited at the network layer without harming convergence—requires explicit validation through phase-detection accuracy, ablation on phase granularity, and convergence curves. Without such evidence the performance gains cannot be confidently attributed to the phase-aware mechanism rather than other factors.

Authors: The manuscript already contains supporting evidence for this assumption. Figure 8 presents convergence curves demonstrating that DBLP maintains comparable final accuracy despite elevated loss tolerance in specific phases. Section 5.2 reports an ablation comparing 2-phase versus 4-phase granularity and states a phase-detection accuracy of 94.2% using our lightweight online detector. To address the referee's concern directly, we will add a dedicated paragraph in Section 3.2 that explicitly ties these results to the attribution of performance gains and includes the requested phase-detection metrics. If the referee requires further experiments (e.g., additional granularity levels or statistical tests on detection), we will perform them.

Revision: partial

Circularity Check

No significant circularity detected

The paper is a systems contribution whose central claims consist of empirical measurements (training-time reductions of 24.4% average / 33.9% max, latency speedups up to 5.88x) obtained by running the proposed DBLP protocol against a baseline. No derivation chain, mathematical prediction, or first-principles result is presented that reduces to its own inputs by construction. The protocol description relies on observable phase-dependent loss tolerances that are detected and exploited at runtime; these tolerances are not defined in terms of the performance numbers being reported, nor are any fitted parameters renamed as predictions. No self-citation is invoked as a load-bearing uniqueness theorem or ansatz. The evaluation is therefore self-contained against external benchmarks and receives the default non-circularity finding.
