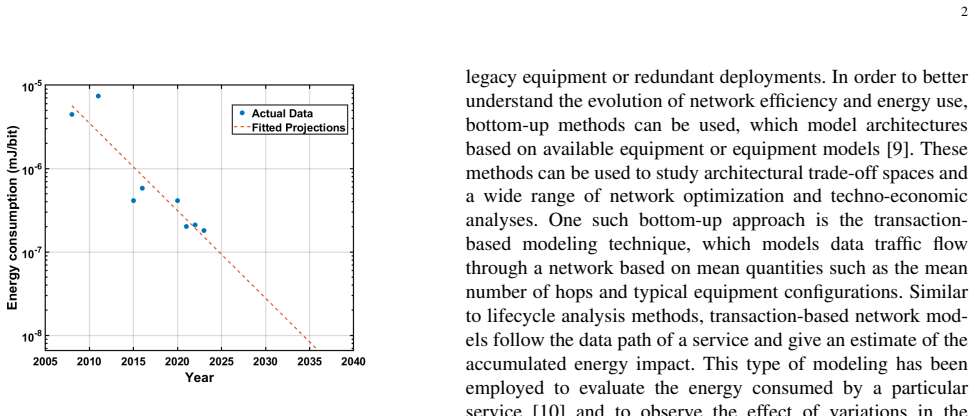

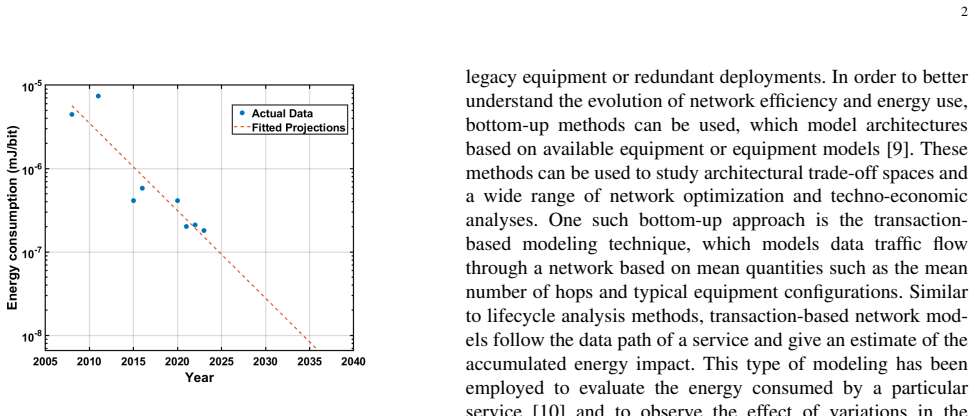

Processing energy dominates RAN consumption

Energy Consumption in Next Generation Radio Access Networks

Baseband processing location strongly affects efficiency in next-generation mobile networks.

Networking and Internet Architecture

Covers all aspects of computer communication networks, including network architecture and design, network protocols, and internetwork standards (like TCP/IP). Also includes topics, such as web caching, that are directly relevant to Internet architecture and performance. Roughly includes all of ACM Subject Class C.2 except C.2.4, which is more likely to have Distributed, Parallel, and Cluster Computing as the primary subject area.

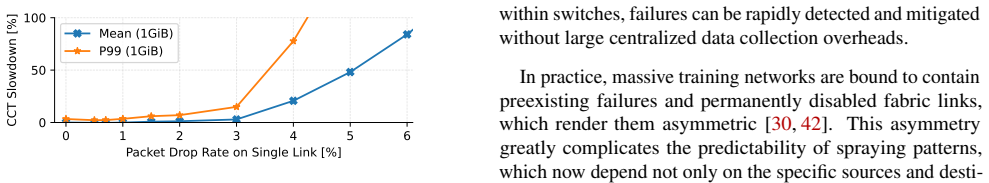

Avoiding Cross-Datacenter Collective Congestion via Disaggregated Buffering

Spillway stores packets lost during collisions at the receiving datacenter and drains them after congestion clears, requiring no host or framework

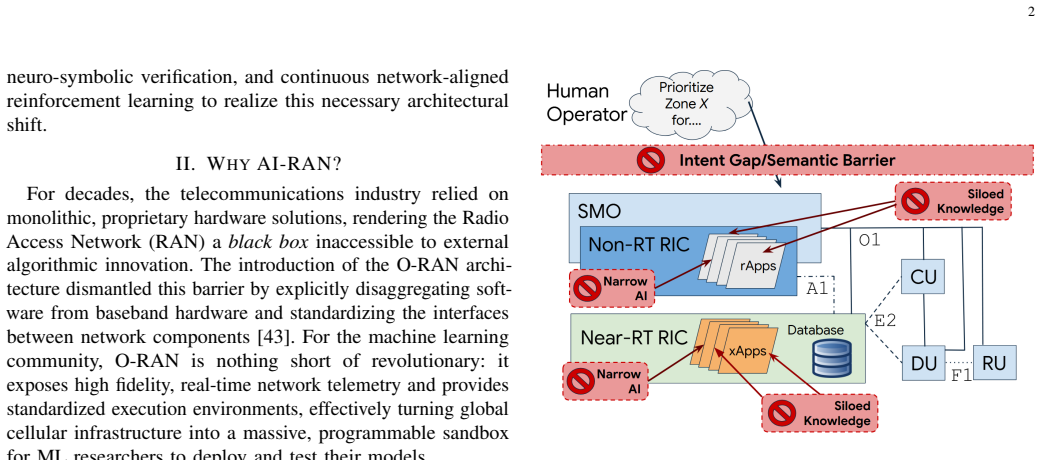

Agents Should Replace Narrow Predictive AI as the Orchestrator in 6G AI-RAN

Position paper argues that replacing narrow predictive models with large language models in the RAN controller can close the intent gap and

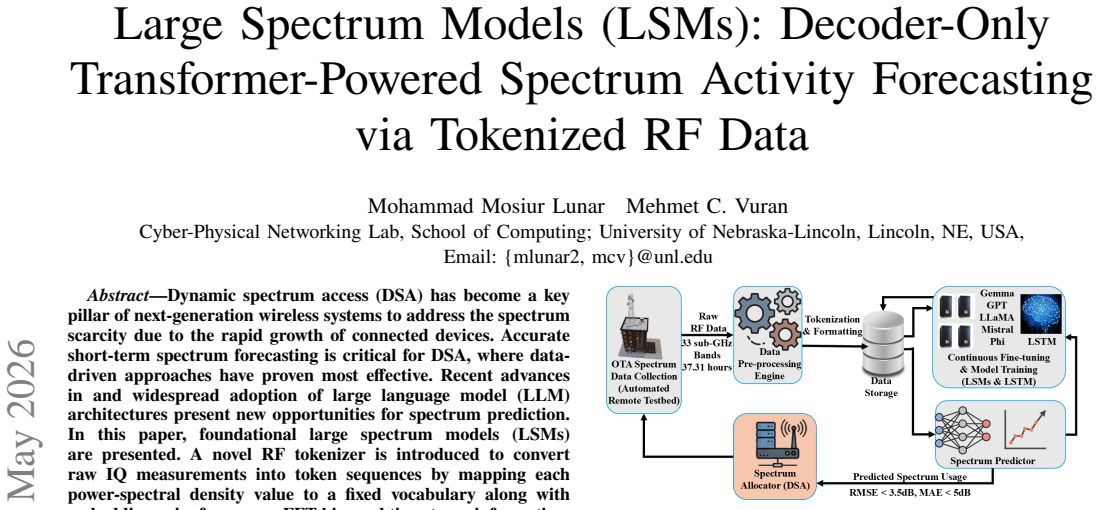

Custom tokenization of 22 TB of raw RF data lets decoder-only models generalize to new locations for dynamic spectrum access.

Without needing operator permission, new open tools enable reproducible experiments on cellular networks and messaging apps to check for privacy r

DeRAN abstracts telemetry into semantic concepts then builds readable policies for slicing and mobility on a live 5G testbed.

DeRAN extracts human-readable policies from reinforcement learning for network slicing and mobility management while preserving most rewards

Learning-Based Spectrum Cartography in Low Earth Orbit Satellite Networks: An Overview

Review shows attention-based learning adapts to orbital dynamics and data reliability for localization, mapping, and allocation.

Statistical Analysis for Energy-Efficient Satellite Edge Computing with Latency Guarantees

Parametric models of execution and communication delays let operators pick GPU speeds that hit deadlines reliably without wasting power.

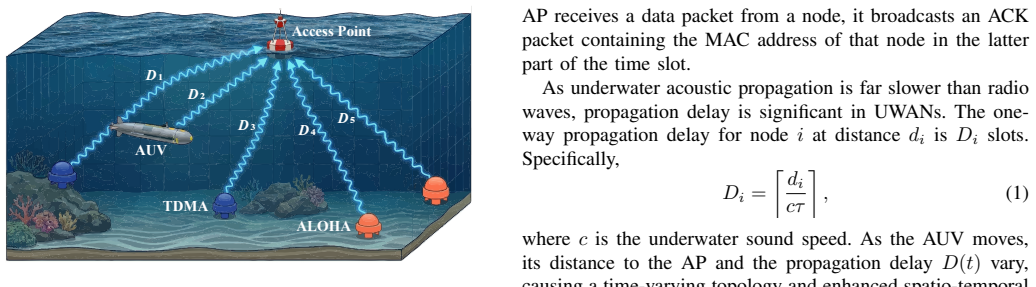

By handling observation losses and balancing rewards, EA-MAC sets node schedules without extra information exchange.

Drift-plus-penalty budgeting and entropy early exits balance speed and power in device-edge speculative decoding.

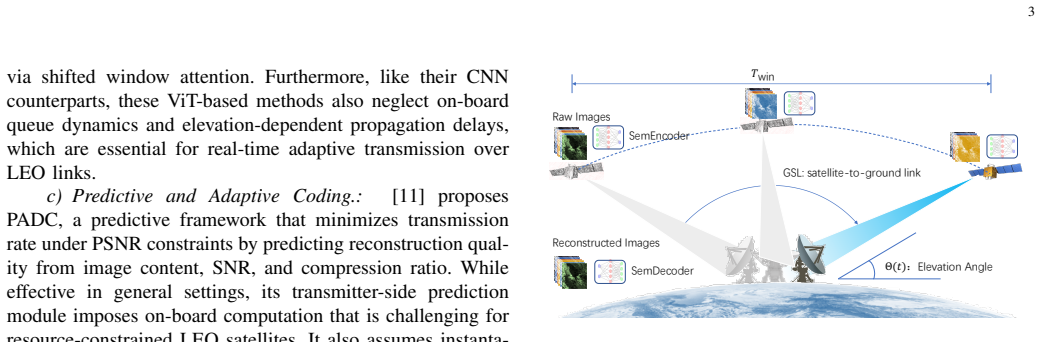

Adaptive JSCC compression uses SNR forecasts to meet quality targets inside short satellite visibility windows with zero packet loss.

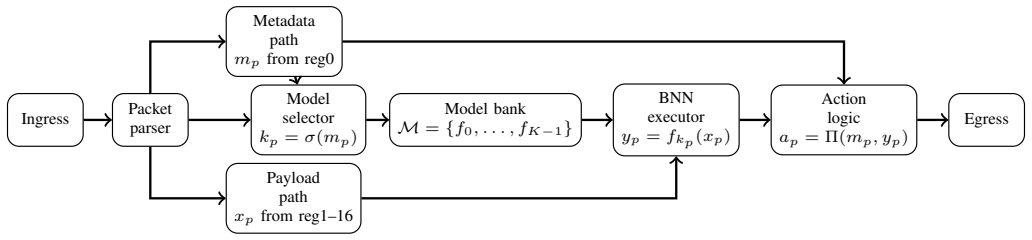

In-Network Artificial Computing Enhanced Light Model-Switching for Emergency Communications Networks

Emergency networks sustain 1.894 Mpps at 0.528 µs inference latency on commodity hardware.

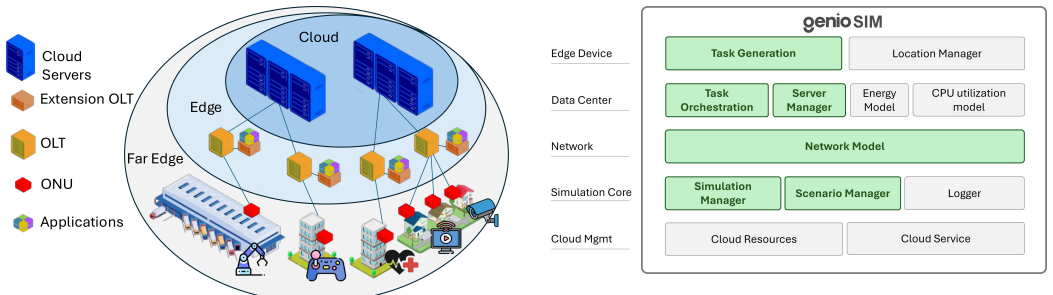

GenioSim: A Novel Simulation Platform for Edge Computing over Optical Networks

GenioSim captures realistic PON behavior in OLTs and ONTs with hybrid virtualization to test policies for capacity planning and offloading.

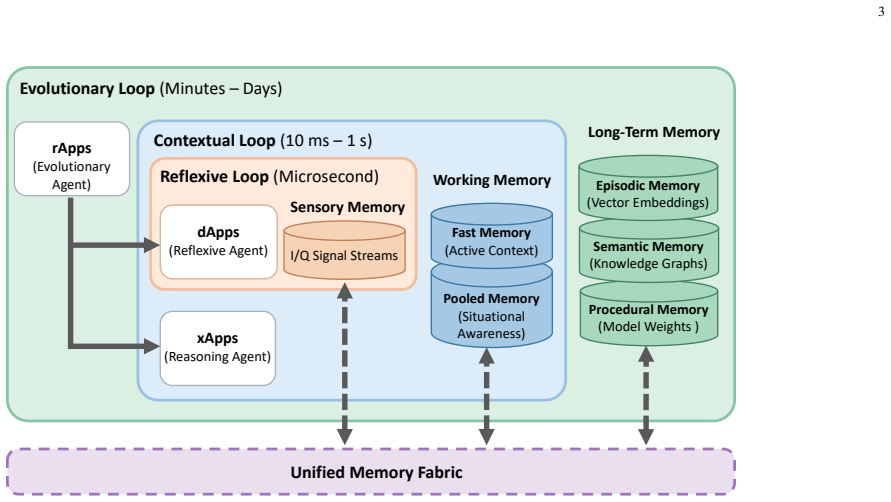

Bridging the Cognitive Gap: A Unified Memory Paradigm for 6G Agentic AI-RAN

Biological hierarchies placed on computing fabrics let agents share states across time scales without compression losses.

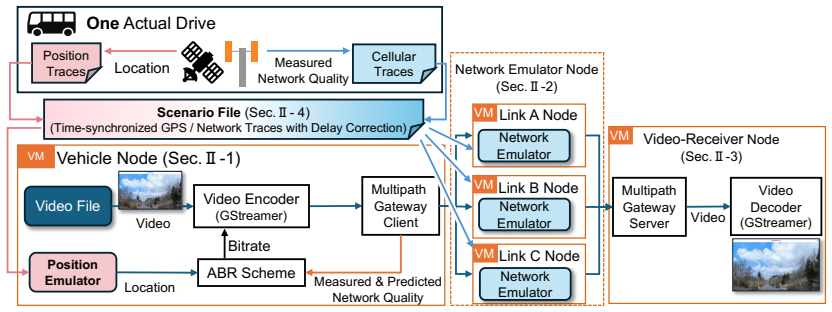

Virtual nodes tie cellular dynamics to route positions so uplink stacks can be validated without repeated road trials.

Mixed-Criticality Flow Scheduling with Low Delay and Limited Bandwidth in TSN

Aggregating frames with same endpoints and harmonic periods then splitting non-critical ones cuts bandwidth use by 12 percent while raising

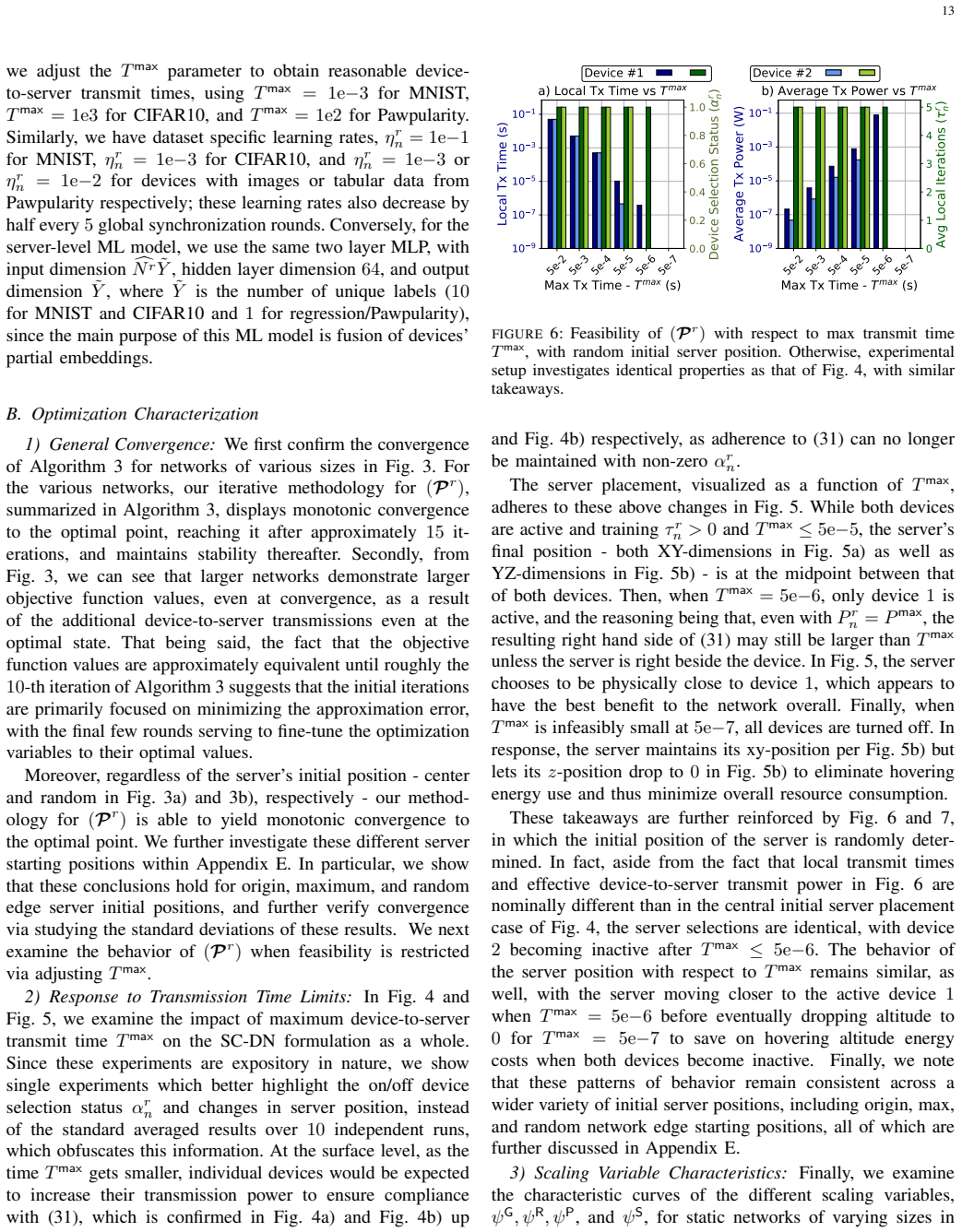

Optimizing Server Placement for Vertical Federated Learning in Dynamic Edge/Fog Networks

Proving a stationary point exists each round enables joint tuning of placement, power, frequency and iterations, improving accuracy with cut

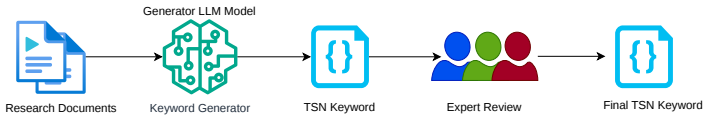

TSNBench: Benchmarking LLM Proficiency in Time-Sensitive Networking

New benchmark shows multiple-choice scores hide large errors in worst-case delay tasks for safety-critical networks

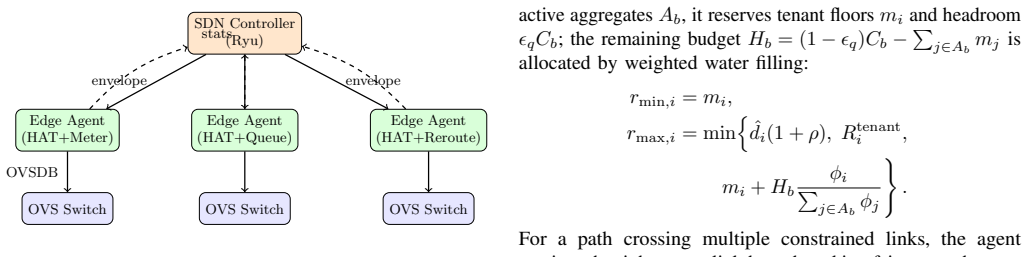

PolicyCache-SDN: Hierarchical Intra-Path Learning for Adaptive SDN Traffic Control

Central compilation of bounds enables online learning that raises link use 35% and cuts violations to under 7%

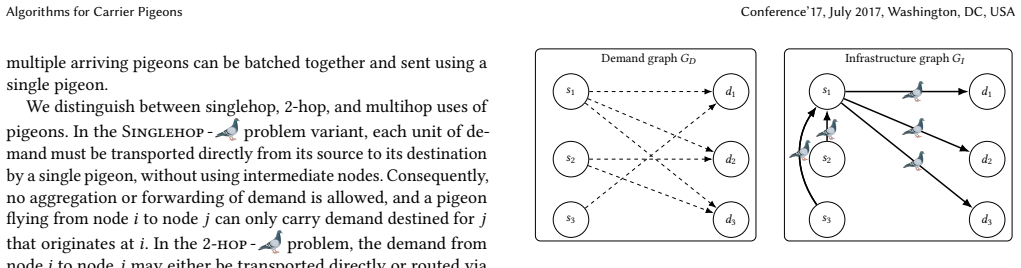

The Carrier Pigeon Internet Protocol: An Algorithmic (and Lighthearted) Perspective

Even though exact minimization for two- and multihop cases is NP-hard, demand aggregation solves it in polynomial time with a factor-two gu

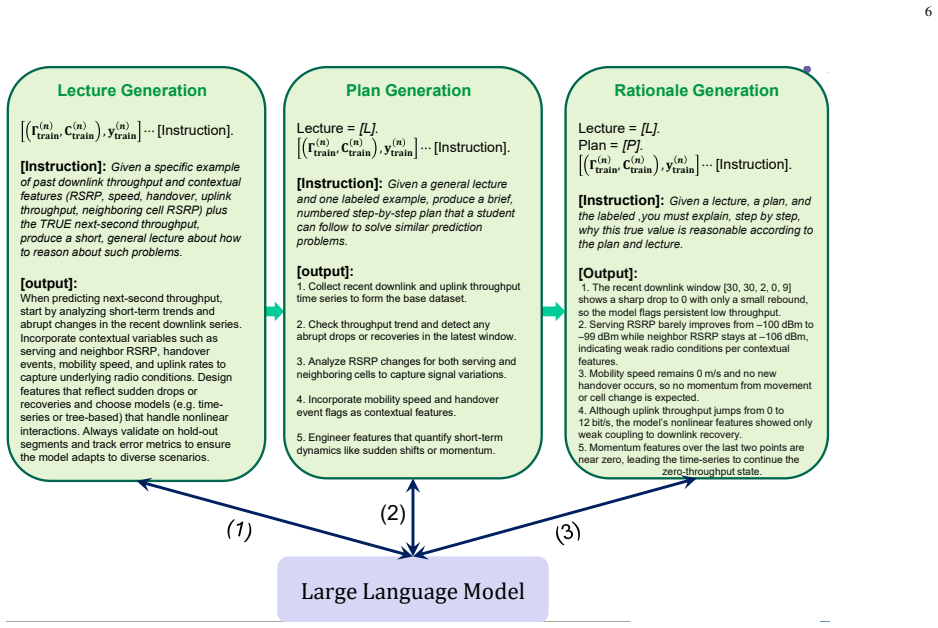

Chain-of-Thought Reasoning Enhances In-Context Learning for LLM-Based Mobile Traffic Prediction

Plan-based rationales and pattern similarity retrieval let models handle rapid fluctuations in 5G data without retraining.

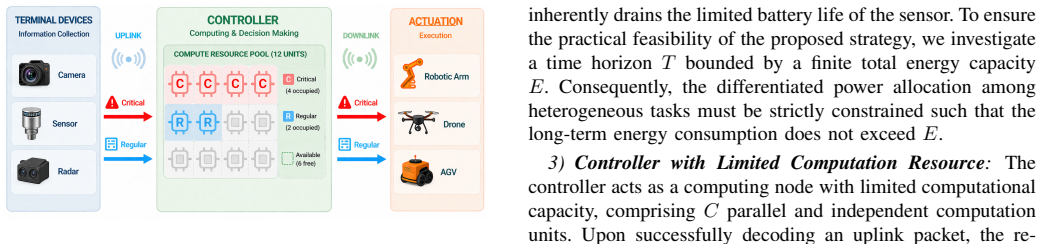

Semantics-Aware Communication: A Differentiated Allocation Perspective

Semantic categorization lets limited compute resources protect high-priority actuations while regular tasks tolerate more delay.

Locational Pricing for Generative-AI Services via Token-Flow Market Clearing

Dual variables from a network-constrained linear program show how compute capacity and bandwidth limits shape regional costs and dispatch in

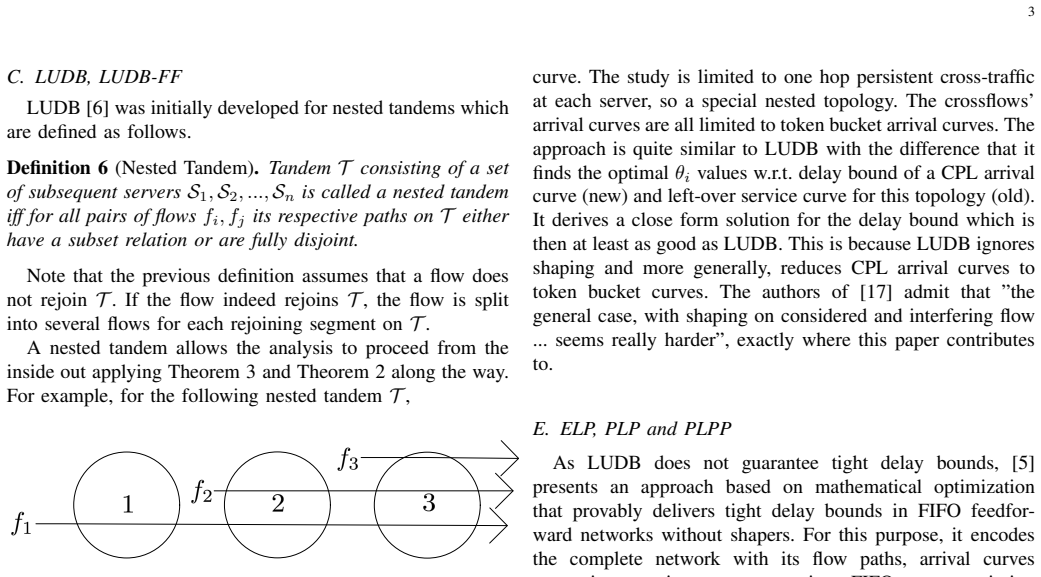

LUDB++: Enabling LUDB for the Analysis of Shaped Feedforward FIFO Networks using Network Calculus

Extending the least upper delay bound method to include shapers improves accuracy over standard LUDB and beats ELP in most of 130 test cases

Episode and step-level weighting yields faster convergence and higher completion rates than standard methods in simulated emergency missions

Proof-of-concept with Task Controller fulfills requirements, but security cuts throughput; new flexible DDI proposed.

Unconsented Sensing: A Sociotechnical Governance Framework for 6G ISAC

Current technical privacy measures underestimate legal issues from ML analysis of raw sensing data under GDPR and the EU AI Act.

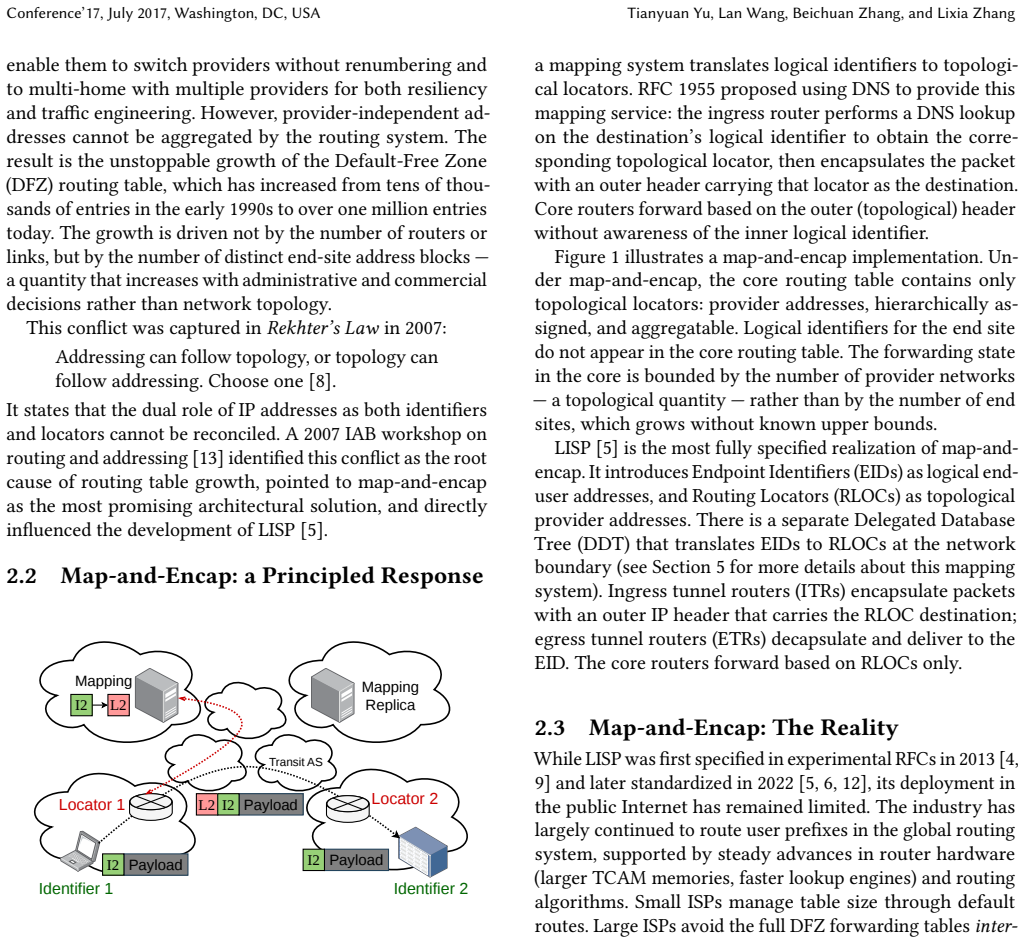

From Map-and-Encap to BIER: Observations on Network Routing Scalability

Observations from unicast and multicast history show why BGP cannot scale and what future designs need.

Toward Quantum-Safe 6G: Experimental Evaluation of Post-Quantum Cryptography Techniques

Benchmarks find computation times acceptable yet ciphertext and signature expansion reduces success rates and throughput in wireless edge 6G

CHILL-STER algorithm stabilizes learning under long delays and mobility using only a known maximum delay bound.

New adversary model shows how channel correlations enable attacks on OFDM challenge-response systems, yielding a guideline for safe use.

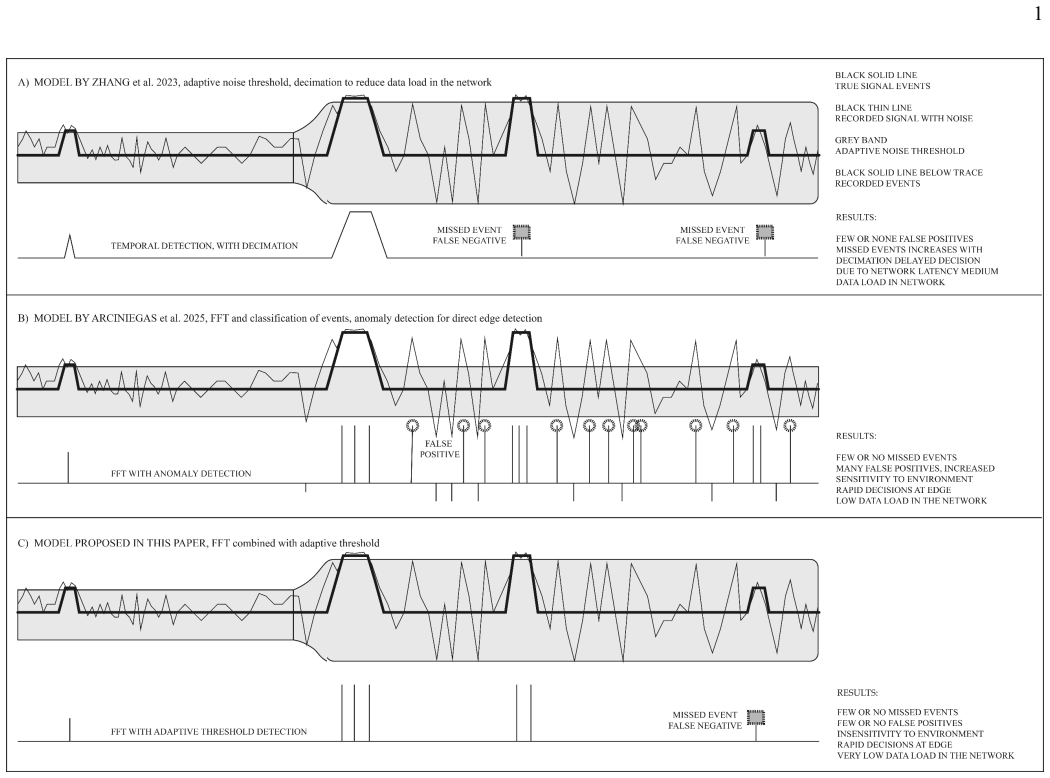

Edge Triggering in IoT Mesh Networks: A Comparative Monte Carlo Study of Seven Detection Algorithms

Simulation shows three combined defences are needed for reliable autonomous triggering under realistic noise.

Temporal Spectral Noise-Floor Adaptation for Error-Intolerant Trigger Integrity in IoT Mesh Networks

Each node tracks its own evolving noise floor to ignore wind, rain and vibration while catching real events without calibration or cloud aid

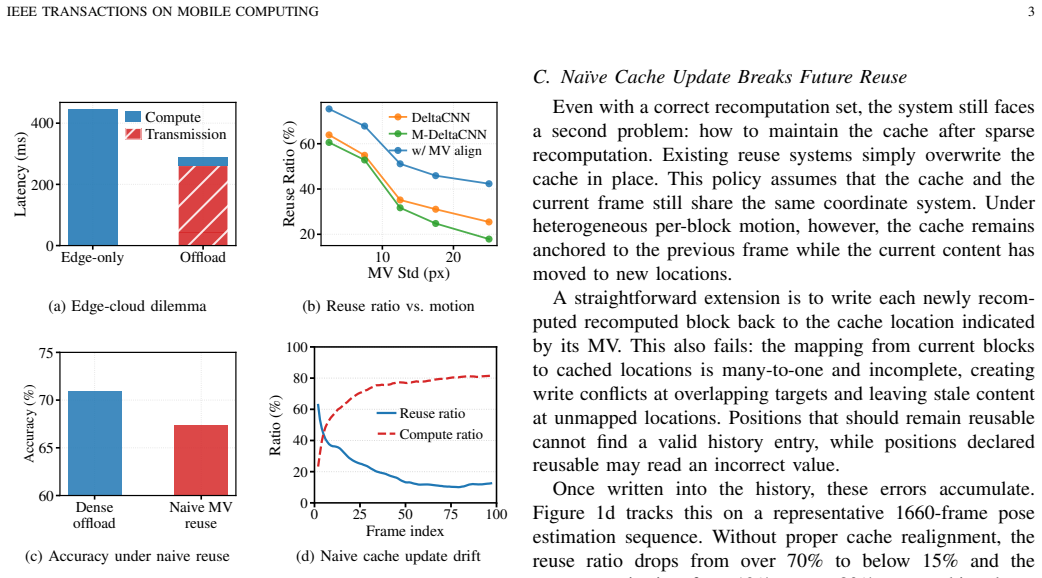

FluxShard remaps cached features by block displacement so most content stays reusable even when different parts of a mobile scene move at different

A Disaster-Aware Integrated TN-NTN System-Level Simulator for Resilient 6G Wireless Networks

It tracks throughput, packet loss, and latency when terrestrial base stations fail and users migrate to satellites or UAVs.

D2C connects with standard phones for fast emergency access, but standardized NTN delivers better performance, security and growth for true

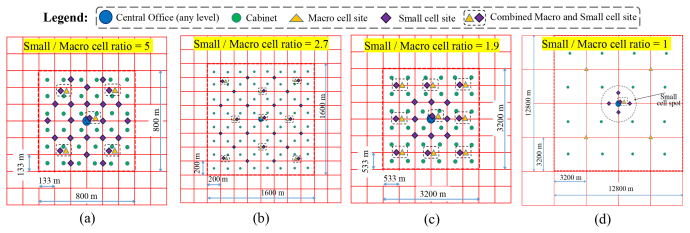

DSCM-enabled point-to-multipoint designs outperform traditional point-to-point across geotypes in simulations for 6G scaling.

SixGman: An Open-Source Planner for Fixed 6G Hierarchical Optical Access-Core Networks

SixGman evaluation on a 157-node real topology also reports 29% lower energy use and reduced latency.

Performance Characterization of dApps in Open Radio Access Networks

Tests across bare-metal and container setups identify bottlenecks that hardware offloading can fix for better real-time performance.

SILC: Lookahead Caching for Short-form Video Delivery Systems

By folding recommendation sequences and popularity overlaps into eviction decisions, SILC lowers origin fetches versus ten standard policies

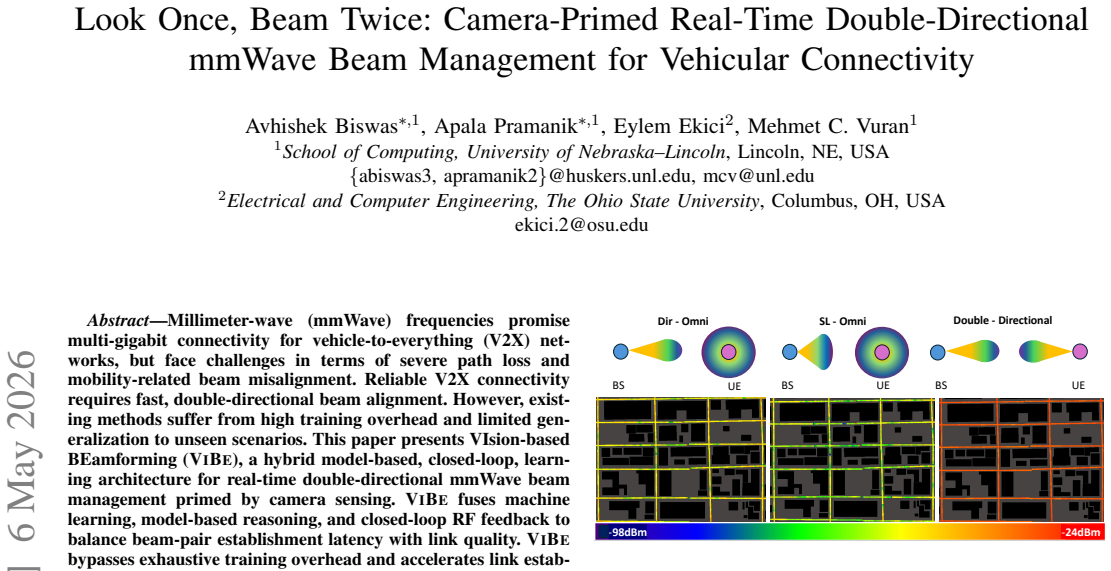

Hybrid VIBE reaches 1.1-1.4% outage in tests, beating 5G NR and pure ML for real-time V2X links

Traffic Chunk Sizing vs. Optical Switching Speed in Future All-Optical Satellite Networks

Ground station traffic assembly choices set the performance bar for onboard optical fabrics

A Separation Between Optimal Demand-Oblivious and Demand-Aware Network Throughput

This beats the known demand-oblivious limit and resolves an open question on adaptive network performance.

SADE: Symptom-Aware Diagnostic Escalation for LLM-Based Network Troubleshooting

SADE encodes Cisco layer-by-layer methods to separate evidence from hypotheses on eleven unseen scenarios.

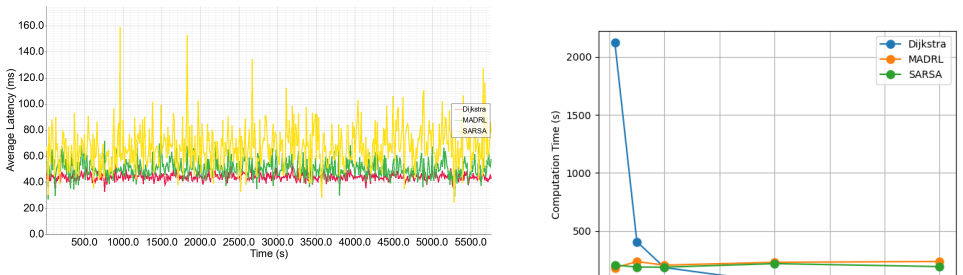

Queue-Aware and Resilient Routing in LEO Satellite Networks Using Multi-Agent Reinforcement Learning

Each satellite picks next hops locally from queue and resilience signals, sidestepping the signaling costs that grow with centralized recall

SOCP paths, DRL-LLM resources with reward decoupling, and LP offloading together cut delay and energy versus standard multi-agent RL in city

Worst-Case Discovery and Runtime Protection for RL-Based Network Controllers

It locates larger gaps than baselines and closes 79-85% of them with simple rules that leave normal performance untouched.

Resilient AI Supercomputer Networking using MRC and SRv6

New RDMA protocol plus SRv6 source routing keeps frontier-model jobs running in clusters over 100K GPUs

Surviving the Edge: Federated Learning under Networking and Resource Constraints

Tests identify exact failure thresholds for packet loss and dropouts, showing that three TCP tweaks can extend operation in constrained edge

Nested array design of extended coprime sets for DOA estimation of non-circular signals

Merging sum and difference co-arrays removes redundancy and improves accuracy over standard nested designs.

SprayCheck: Finding Gray Failures in Adaptive Routing Networks

SprayCheck spots gray failures from traffic statistics alone, letting operators reroute before ML training slows.

Cross-Slice Co-Location Risk-Aware SFC Provisioning in Multi-Slice LEO Satellite Networks

Risk-aware SFC placement also slashes avoidable migrations by 80% and delivers 23x faster decisions after the first epoch.

Dynamic hypergame perceptions let platforms and mobile units adapt proposals and acceptances over repeated interactions.

DACP: A Scientific Data Access and Collaboration Protocol

Unified IDs plus reverse supply let users discover holdings and run computations where the data already lives.

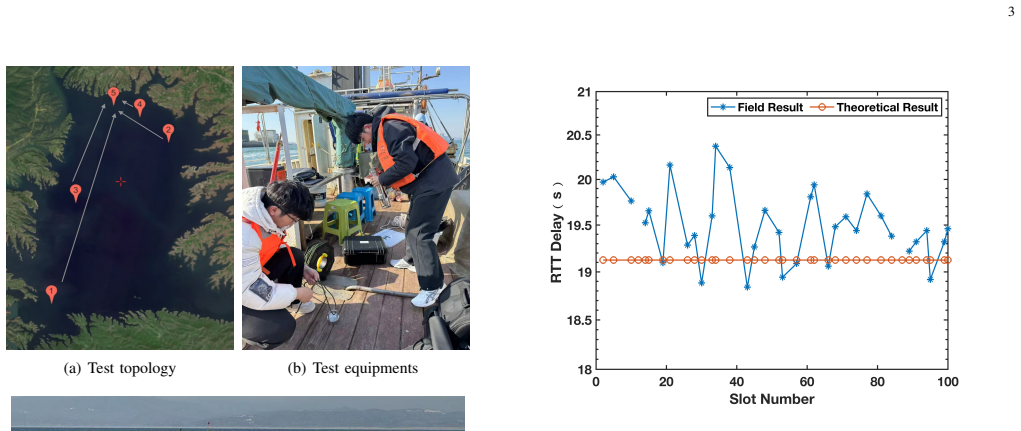

CRT: Collision-Tolerant Residence Time for Deterministic Transmission in LEO Satellite Networks

CRT compensates for varying link delays and bounds collision jitter to support time-sensitive services without global synchronization.

Joint optimization of bandwidth, compute and wireless resources via constrained MDP, attention topology and LSTM timing yields steadier low-

Grid positions and vehicle-distribution predictions enable CSI-free local adjustments that avoid service interruptions in fast networks.

Renewables Power the Orbit? Achieving Sustainable Space Edge Computing via QoS-Aware Offloading

Offloading tasks to ground data centers near clean power sources also shortens delays in Starlink-scale networks.

PERFECT: Personalized Federated Learning for CBRS Radar Detection

Local training at each sensor protects naval radar without moving raw data, matching central accuracy.

Sensitivity Analysis of Tactical Wireless Network Design Under Realistic Operational Constraints

Statistical tests on optimized tactical wireless designs show structural rules trigger thresholds at certain scales while other factors only

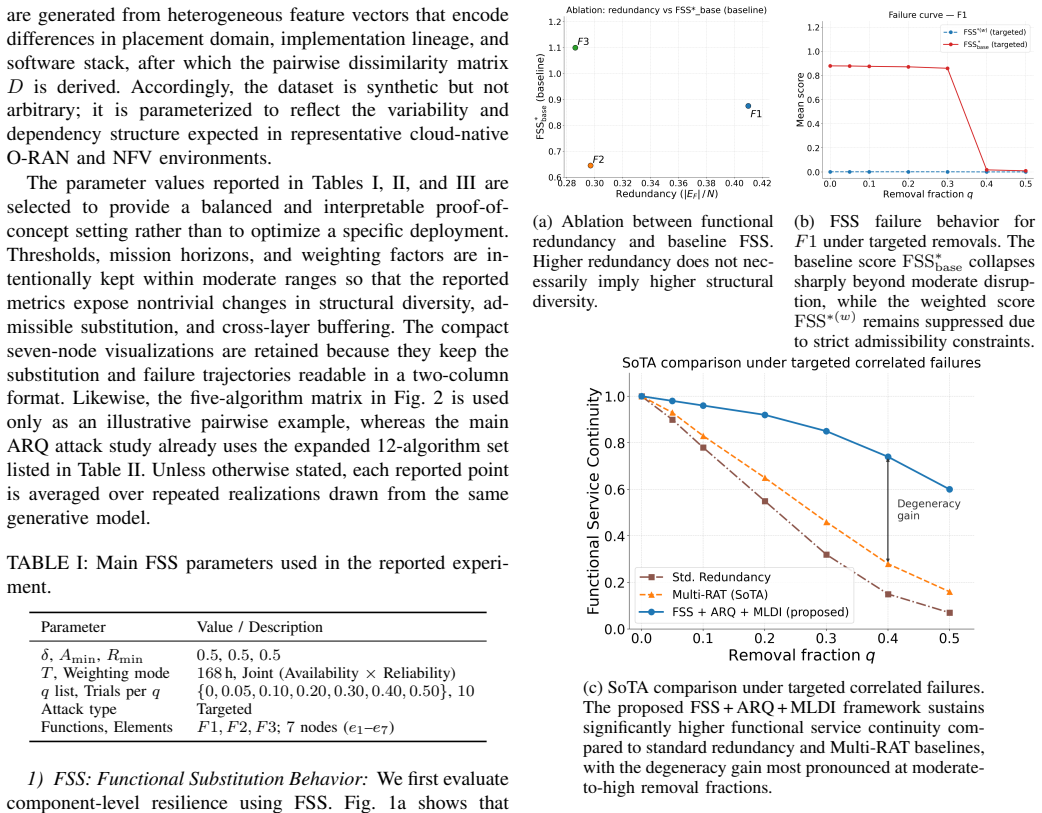

Structurally diverse alternatives provide better protection than replication against correlated failures in virtualized networks.

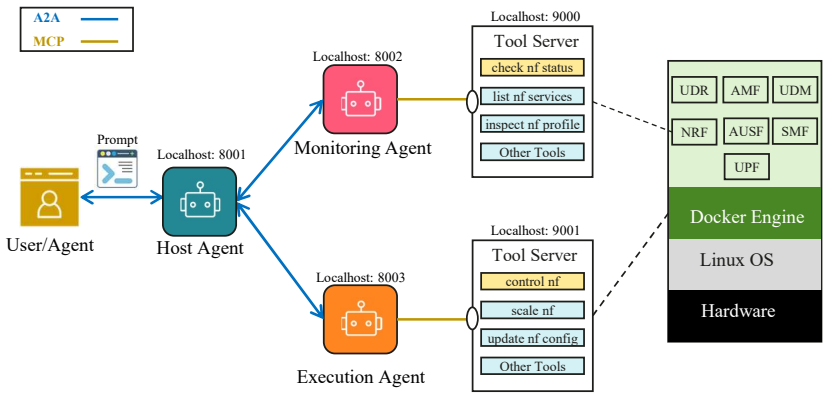

Tool Use as Action: Towards Agentic Control in Mobile Core Networks

MCP and A2A protocols support intent execution while packet flows and latencies are measured from prompt to completion.

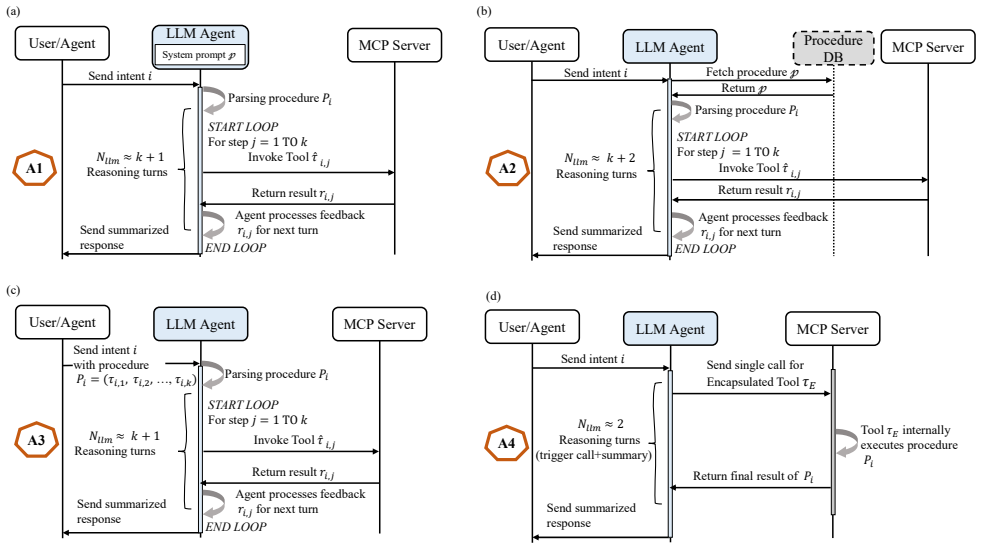

Beyond State Machines: Executing Network Procedures with Agentic Tool-Calling Sequences

UE IP allocation tests show iterative reasoning slows execution and raises errors while encapsulated tools maintain reliability over more steps.

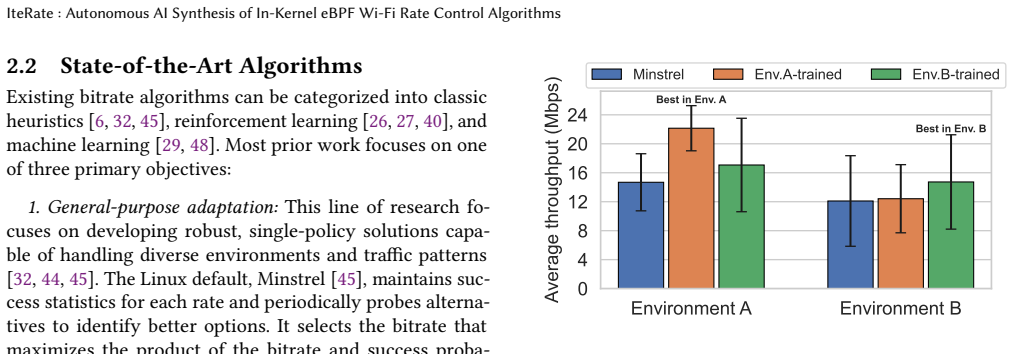

IteRate: Autonomous AI Synthesis of In-Kernel eBPF Wi-Fi Rate Control Algorithms

On a 58-node testbed the closed-loop system writes eBPF kernel code to achieve 21% faster web loads, 7% better video QoE, and 21% higher peak

Choir: Tackling RTBC Performance Impossible Triangle with 5G Collaboration

By guiding senders from the base station using radio and video patterns, it also ensures fairness for multiple streams.

Early-Stage IoT Device Identification Using Passive Network Traffic Analysis

Passive flow features yield up to 99% accuracy for 37 devices, with longer windows not improving results.

Spatial-Temporal Learning-Based Distributed Routing for Dynamic LEO Satellite Networks

Graph attention and LSTM inside a DQN let satellites choose paths from local observations alone and avoid congestion proactively.

Rethinking Traffic Matrix Completion: Estimate the Process, Not the Entries

By estimating process parameters from multiple partial frames inside stationary windows, the method beats direct entry-filling baselines, especially s

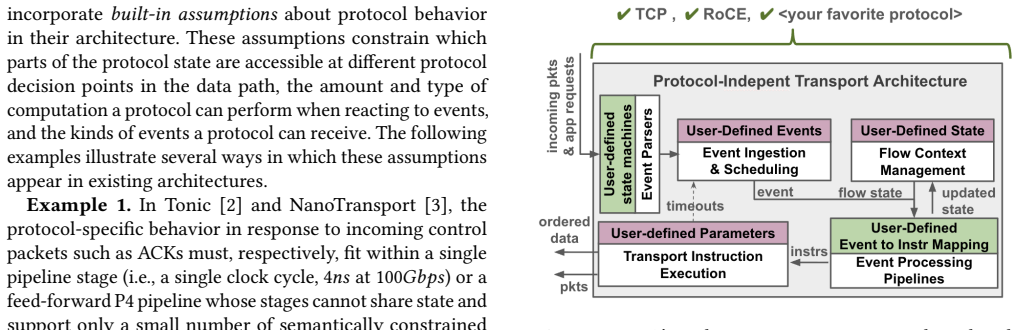

A Protocol-Independent Transport Architecture

Uniform abstraction replaces protocol-specific logic so the hardware stays programmable without losing speed.

Zero-Trust Bilateral Edge Service Trading with Deposit-Refund Regulation for Runtime Compliance

Deposit-refund rules tied to runtime outcomes improve welfare and reduce delay plus privacy costs over baselines while keeping rationality.

Forecasting-Driven Stable Successor Matching for UAV-Assisted Continuous Edge Services

LSTM forecasts of UAV failure let the system reserve a successor ahead of time, keeping missions running with only modest extra cost.

Risk-Budgeted Online Scheduling for Continuous Edge Inference over Evolving Time Horizons

Updatable user risk states and short-term forecasts balance immediate speed with long-term reliability under changing bandwidth and compute

Hierarchical Cooperative MARL for Joint Downlink PRB and Power Allocation in a 5G System

Two-stage agents trained in ray tracing beat proportional-fair scheduling on aggregate rate while Jain index falls only modestly.

Method supports up to 13% more traffic on networks of 143 nodes while keeping blocking below 0.1%.

Relay networks deliver orders-of-magnitude higher capacity than ground stations for continuous space vehicle data transfer.

Throughput Analysis and On-Board Buffer Sizing for Hybrid RF and Optical LEO Satellites

Analysis of RF and laser LEO links shows optimal scheduling cuts buffer needs and packet loss while raising data rates under weather outages

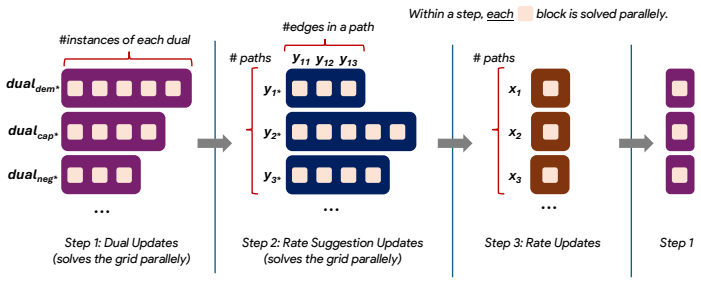

GATE: GPU-Accelerated Traffic Engineering for the WAN

Parallel decomposition lets it converge on fair allocations quickly enough for real-time adjustments in large cloud networks.

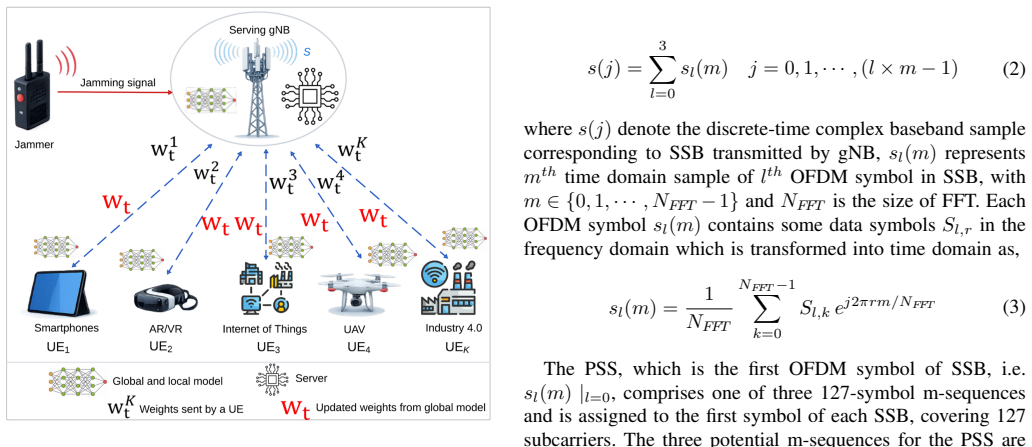

By training on local IQ samples from synchronization blocks, the system matches centralized performance without transmitting raw data.

ShieldShare routes client traffic through VPN tunnels and meters usage separately without needing root privileges.
