Recognition: unknown

From Where Things Are to What They Are For: Benchmarking Spatial-Functional Intelligence in Multimodal LLMs

Pith reviewed 2026-05-09 16:56 UTC · model grok-4.3

The pith

Multimodal LLMs struggle to connect where objects are located with what they are used for in videos.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

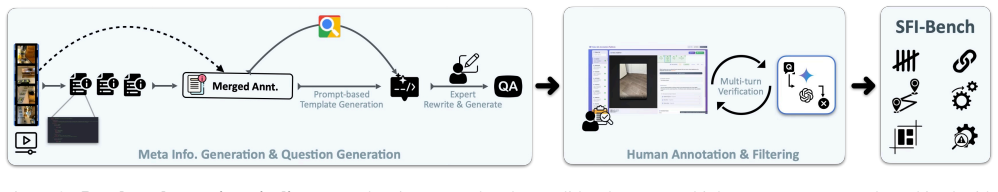

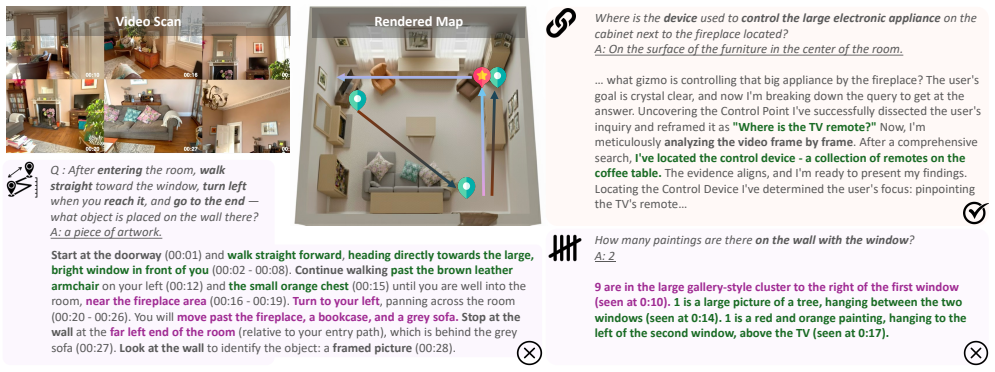

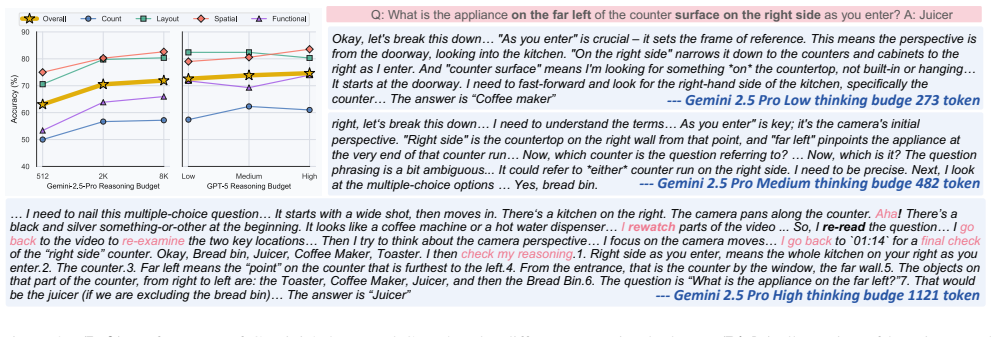

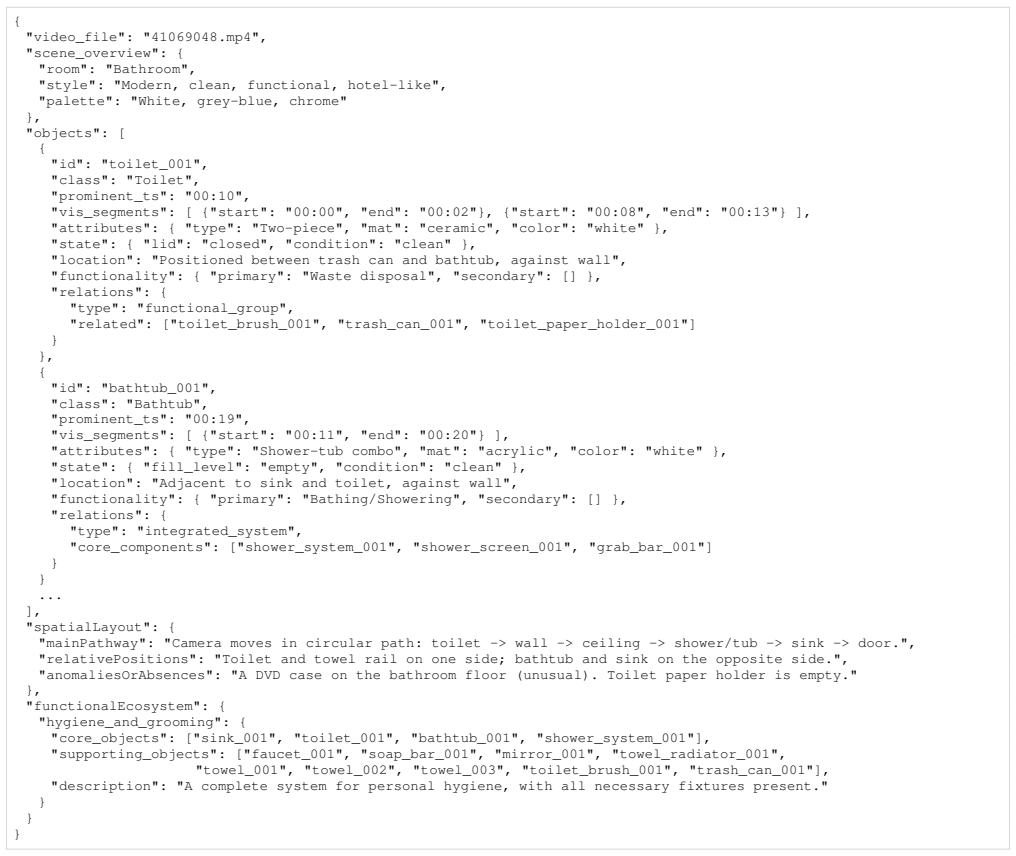

SFI-Bench is a video-based benchmark containing over 1,500 expert-annotated questions from diverse egocentric indoor scans that evaluates two dimensions of advanced reasoning: structured spatial reasoning for understanding complex layouts and forming coherent spatial representations, and functional reasoning for inferring object affordances and their context-dependent utility. The benchmark includes tasks such as conditional counting, multi-hop relational reasoning, functional pairing, and knowledge-grounded troubleshooting. Experiments show that current MLLMs consistently struggle to combine spatial memory with functional reasoning and external knowledge, which the authors identify as a key

What carries the argument

SFI-Bench, a video benchmark with expert-annotated questions that tests integration of spatial layout understanding and functional affordance inference in multimodal LLMs.

Where Pith is reading between the lines

- The performance gap may arise because current training data rarely requires models to link remembered positions with later functional uses.

- Extending the benchmark to test whether high scores predict success in physical robotic tasks would clarify its relevance for embodied agents.

- This points to a need for memory mechanisms in multimodal models that retain spatial details long enough to support purpose-based reasoning.

- The findings connect to broader challenges in creating AI that can plan actions based on object utilities rather than surface descriptions.

Load-bearing premise

The expert-annotated questions and tasks in the benchmark accurately isolate and measure spatial-functional integration without being confounded by video processing limits, language biases, or annotation subjectivity.

What would settle it

A multimodal model that achieves high accuracy across the functional reasoning and knowledge-grounded troubleshooting tasks by correctly recalling and applying spatial positions from the provided video would indicate the reported integration bottleneck has been resolved.

Figures

read the original abstract

Human-level agentic intelligence extends beyond low-level geometric perception, evolving from recognizing where things are to understanding what they are for. While existing benchmarks effectively evaluate the geometric perception capabilities of multimodal large language models (MLLMs), they fall short of probing the higher-order cognitive abilities required for grounded intelligence. To address this gap, we introduce the Spatial-Functional Intelligence Benchmark (SFI-Bench), a video-based benchmark with over 1,500 expert-annotated questions derived from diverse egocentric indoor video scans. SFI-Bench systematically evaluates two complementary dimensions of advanced reasoning: (1) Structured Spatial Reasoning, which requires understanding complex layouts and forming coherent spatial representations, and (2) Functional Reasoning, which involves inferring object affordances and their context-dependent utility. The benchmark includes tasks such as conditional counting, multi-hop relational reasoning, functional pairing, and knowledge-grounded troubleshooting, directly challenging models to integrate perception, memory, and inference. Our experiments reveal that current MLLMs consistently struggle to combine spatial memory with functional reasoning and external knowledge, highlighting a critical bottleneck in achieving grounded intelligence. SFI-Bench therefore provides a diagnostic tool for measuring progress toward more cognitively capable and truly grounded multimodal agents.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces SFI-Bench, a video-based benchmark with over 1,500 expert-annotated questions derived from egocentric indoor video scans. It evaluates multimodal LLMs on two dimensions of advanced reasoning—Structured Spatial Reasoning (complex layouts and spatial representations) and Functional Reasoning (object affordances and context-dependent utility)—via tasks including conditional counting, multi-hop relational reasoning, functional pairing, and knowledge-grounded troubleshooting. The central claim is that current MLLMs consistently struggle to combine spatial memory with functional reasoning and external knowledge, identifying a bottleneck for grounded intelligence beyond existing geometric perception benchmarks.

Significance. If the benchmark tasks validly isolate higher-order spatial-functional integration without low-level confounds, the work would be significant for the field by providing a diagnostic tool that shifts evaluation from low-level perception to cognitively grounded abilities in MLLMs. The independent, expert-annotated construction from real video scans is a clear strength, offering falsifiable predictions about model capabilities.

major comments (2)

- [Abstract] Abstract: The claim that 'our experiments reveal that current MLLMs consistently struggle to combine spatial memory with functional reasoning and external knowledge' is presented without any details on model selection, quantitative metrics, error bars, statistical tests, or baseline comparisons. This leaves the central empirical claim without visible support from the reported data or methods.

- [Benchmark construction and evaluation sections] Benchmark construction and evaluation sections: The tasks (e.g., multi-hop relational reasoning, knowledge-grounded troubleshooting) assume that performance gaps reflect deficits in spatial-functional integration. However, no controls or ablations are described to separate these from documented MLLM weaknesses in long-context video encoding, object tracking across frames, or frame sampling (e.g., no static keyframe baselines, oracle spatial graphs, or perception-only controls). This directly undermines attribution of the observed struggles to the intended higher-order bottleneck.

minor comments (2)

- [Abstract] The abstract would benefit from a brief statement of the number of models evaluated and the primary metrics used to quantify 'struggle.'

- Consider including a summary table of benchmark statistics (questions per task category, video sources, annotation process) for clarity.

Simulated Author's Rebuttal

We thank the referee for the constructive comments, which help clarify the presentation of our central claims and strengthen the attribution of results. We have revised the manuscript accordingly, updating the abstract for better support of the empirical findings and adding controls and discussion in the evaluation sections.

read point-by-point responses

-

Referee: [Abstract] Abstract: The claim that 'our experiments reveal that current MLLMs consistently struggle to combine spatial memory with functional reasoning and external knowledge' is presented without any details on model selection, quantitative metrics, error bars, statistical tests, or baseline comparisons. This leaves the central empirical claim without visible support from the reported data or methods.

Authors: We agree that the abstract would benefit from greater specificity to make the central claim more immediately supported. In the revised manuscript, we have updated the abstract to briefly reference the models evaluated (a range of state-of-the-art open-source and proprietary MLLMs), summarize key quantitative performance metrics from the experiments, and direct readers to the detailed tables, baselines, and analyses in Section 4. Full error bars, statistical tests, and comparisons remain in the main body, as they exceed abstract length limits, but the revision ensures the claim is visibly grounded in the reported data. revision: yes

-

Referee: [Benchmark construction and evaluation sections] Benchmark construction and evaluation sections: The tasks (e.g., multi-hop relational reasoning, knowledge-grounded troubleshooting) assume that performance gaps reflect deficits in spatial-functional integration. However, no controls or ablations are described to separate these from documented MLLM weaknesses in long-context video encoding, object tracking across frames, or frame sampling (e.g., no static keyframe baselines, oracle spatial graphs, or perception-only controls). This directly undermines attribution of the observed struggles to the intended higher-order bottleneck.

Authors: We acknowledge that the original manuscript did not explicitly describe ablations for low-level video factors. We have added a dedicated paragraph in the revised evaluation section (Section 4) that includes new static keyframe baseline comparisons and perception-only control tasks using simplified recognition questions. These show that low-level issues contribute modestly but that the primary deficits align with spatial-functional integration demands. We have also clarified in Section 3 how the expert-annotated, high-level question design reduces reliance on precise frame-to-frame tracking. Full oracle spatial graphs are noted as future work due to the substantial additional annotation required, but the current results and task structure support the intended attribution. revision: partial

Circularity Check

No significant circularity: empirical benchmark evaluation

full rationale

The paper introduces SFI-Bench as an independent, expert-annotated video benchmark with >1500 questions targeting spatial and functional reasoning tasks. It reports direct empirical performance of existing MLLMs on these tasks without any mathematical derivations, equations, fitted parameters, or self-referential definitions that reduce claims to inputs by construction. No load-bearing steps invoke self-citations for uniqueness theorems, ansatzes, or renamed known results. The central claim (model struggles on spatial-functional integration) is an observation from benchmark runs, not a tautological prediction. This matches the default expectation for non-circular empirical benchmark papers.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Expert annotations on video scans accurately reflect human-like functional reasoning and spatial relations without significant subjectivity or bias.

Reference graph

Works this paper leans on

-

[1]

RoboArena: Distributed real-world evaluation of generalist robot policies

Pranav Atreya, Karl Pertsch, Tony Lee, Moo Jin Kim, Arhan Jain, Artur Kuramshin, Clemens Eppner, Cyrus Neary, Ed- ward Hu, Fabio Ramos, et al. Roboarena: Distributed real- world evaluation of generalist robot policies.arXiv preprint arXiv:2506.18123, 2025. 2

-

[2]

Vismin: Visual minimal-change understanding

Rabiul Awal, Saba Ahmadi, Le Zhang, and Aishwarya Agrawal. Vismin: Visual minimal-change understanding. Advances in Neural Information Processing Systems, 37: 107795–107829, 2024. 8

2024

-

[3]

Jinze Bai, Shuai Bai, Yunfei Chu, Zeyu Cui, Kai Dang, Xiaodong Deng, Yang Fan, Wenbin Ge, Yu Han, Fei Huang, et al. Qwen technical report.arXiv preprint arXiv:2309.16609, 2023. 8

work page internal anchor Pith review Pith/arXiv arXiv 2023

-

[4]

Shuai Bai, Keqin Chen, Xuejing Liu, Jialin Wang, Wenbin Ge, Sibo Song, Kai Dang, Peng Wang, Shijie Wang, Jun Tang, et al. Qwen2. 5-vl technical report.arXiv preprint arXiv:2502.13923, 2025. 2, 8

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[5]

ARK- itscenes - a diverse real-world dataset for 3d indoor scene understanding using mobile RGB-d data

Gilad Baruch, Zhuoyuan Chen, Afshin Dehghan, Tal Dimry, Yuri Feigin, Peter Fu, Thomas Gebauer, Brandon Joffe, Daniel Kurz, Arik Schwartz, and Elad Shulman. ARK- itscenes - a diverse real-world dataset for 3d indoor scene understanding using mobile RGB-d data. InNeurIPS, 2021. 2

2021

-

[6]

$\pi_0$: A Vision-Language-Action Flow Model for General Robot Control

Kevin Black, Noah Brown, Danny Driess, Adnan Esmail, Michael Equi, Chelsea Finn, Niccolo Fusai, Lachy Groom, Karol Hausman, Brian Ichter, et al. pi0 : A vision-language- action flow model for general robot control.arXiv preprint arXiv:2410.24164, 2024. 2

work page internal anchor Pith review arXiv 2024

-

[7]

Ellis Brown, Jihan Yang, Shusheng Yang, Rob Fergus, and Saining Xie. Benchmark designers should “train on the test set” to expose exploitable non-visual shortcuts.arXiv preprint arXiv:2511.04655, 2025. 8

-

[8]

Lan- guage models are few-shot learners.Advances in neural in- formation processing systems, 33:1877–1901, 2020

Tom Brown, Benjamin Mann, Nick Ryder, Melanie Sub- biah, Jared D Kaplan, Prafulla Dhariwal, Arvind Neelakan- tan, Pranav Shyam, Girish Sastry, Amanda Askell, et al. Lan- guage models are few-shot learners.Advances in neural in- formation processing systems, 33:1877–1901, 2020. 8

1901

-

[9]

Spatialbot: Pre- cise spatial understanding with vision language models

Wenxiao Cai, Yaroslav Ponomarenko, Jianhao Yuan, Xiaoqi Li, Wankou Yang, Hao Dong, and Bo Zhao. Spatialbot: Pre- cise spatial understanding with vision language models. In ICRA, 2025. 8

2025

- [10]

-

[11]

GR-2: A Generative Video-Language-Action Model with Web-Scale Knowledge for Robot Manipulation

Chi-Lam Cheang, Guangzeng Chen, Ya Jing, Tao Kong, Hang Li, Yifeng Li, Yuxiao Liu, Hongtao Wu, Jiafeng Xu, Yichu Yang, et al. Gr-2: A generative video-language-action model with web-scale knowledge for robot manipulation. arXiv preprint arXiv:2410.06158, 2024. 2

work page internal anchor Pith review arXiv 2024

-

[12]

Spatialvlm: Endow- ing vision-language models with spatial reasoning capabili- ties

Boyuan Chen, Zhuo Xu, Sean Kirmani, Brain Ichter, Dorsa Sadigh, Leonidas Guibas, and Fei Xia. Spatialvlm: Endow- ing vision-language models with spatial reasoning capabili- ties. InCVPR, 2024. 8

2024

-

[13]

Yiteng Chen, Zhe Cao, Hongjia Ren, Chenjie Yang, Wenbo Li, Shiyi Wang, Yemin Wang, Li Zhang, Yanming Shao, Zhenjun Zhao, et al. Roborouter: Training-free pol- icy routing for robotic manipulation.arXiv preprint arXiv:2603.07892, 2026. 8

-

[14]

Internvl: Scaling up vision foundation mod- els and aligning for generic visual-linguistic tasks

Zhe Chen, Jiannan Wu, Wenhai Wang, Weijie Su, Guo Chen, Sen Xing, Muyan Zhong, Qinglong Zhang, Xizhou Zhu, Lewei Lu, et al. Internvl: Scaling up vision foundation mod- els and aligning for generic visual-linguistic tasks. InPro- ceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 24185–24198, 2024. 8

2024

-

[15]

Spatial- rgpt: Grounded spatial reasoning in vision-language mod- els.Advances in Neural Information Processing Systems, 37:135062–135093, 2024

An-Chieh Cheng, Hongxu Yin, Yang Fu, Qiushan Guo, Rui- han Yang, Jan Kautz, Xiaolong Wang, and Sifei Liu. Spatial- rgpt: Grounded spatial reasoning in vision-language mod- els.Advances in Neural Information Processing Systems, 37:135062–135093, 2024. 8

2024

-

[16]

Gheorghe Comanici, Eric Bieber, Mike Schaekermann, Ice Pasupat, Noveen Sachdeva, Inderjit Dhillon, Marcel Blis- tein, Ori Ram, Dan Zhang, Evan Rosen, et al. Gemini 2.5: Pushing the frontier with advanced reasoning, multimodality, long context, and next generation agentic capabilities.arXiv preprint arXiv:2507.06261, 2025. 2, 4, 6

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[17]

Zhehao Dong, Xiaofeng Wang, Zheng Zhu, Yirui Wang, Yang Wang, Yukun Zhou, Boyuan Wang, Chaojun Ni, Runqi Ouyang, Wenkang Qin, et al. Emma: Generalizing real-world robot manipulation via generative visual transfer. arXiv preprint arXiv:2509.22407, 2025. 8

-

[18]

Embspatial-bench: Benchmarking spatial un- derstanding for embodied tasks with large vision-language models

Mengfei Du, Binhao Wu, Zejun Li, Xuanjing Huang, and Zhongyu Wei. Embspatial-bench: Benchmarking spatial un- derstanding for embodied tasks with large vision-language models. InACL, 2024. 8

2024

-

[19]

The cognitive map in humans: spatial navi- gation and beyond.Nature neuroscience, 20(11):1504–1513,

Russell A Epstein, Eva Zita Patai, Joshua B Julian, and Hugo J Spiers. The cognitive map in humans: spatial navi- gation and beyond.Nature neuroscience, 20(11):1504–1513,

-

[20]

VLM-3R: Vision-Language Models Augmented with Instruction-Aligned 3D Reconstruction

Zhiwen Fan, Jian Zhang, Renjie Li, Junge Zhang, Runjin Chen, Hezhen Hu, Kevin Wang, Huaizhi Qu, Dilin Wang, Zhicheng Yan, et al. Vlm-3r: Vision-language models aug- mented with instruction-aligned 3d reconstruction.arXiv preprint arXiv:2505.20279, 2025. 8

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[21]

Video-mme: The first-ever comprehensive evaluation benchmark of multi-modal llms in video analysis

Chaoyou Fu, Yuhan Dai, Yongdong Luo, Lei Li, Shuhuai Ren, Renrui Zhang, Zihan Wang, Chenyu Zhou, Yunhang Shen, Mengdan Zhang, et al. Video-mme: The first-ever comprehensive evaluation benchmark of multi-modal llms in video analysis. InCVPR, 2025. 8

2025

-

[22]

Basic books, 2011

Howard Gardner.Frames of mind: The theory of multiple intelligences. Basic books, 2011. 8

2011

-

[23]

Psychology press, 2014

James J Gibson.The ecological approach to visual percep- tion: classic edition. Psychology press, 2014. 2

2014

-

[24]

DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning

Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, Ruoyu Zhang, Runxin Xu, Qihao Zhu, Shirong Ma, Peiyi Wang, Xiao Bi, et al. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning.arXiv preprint arXiv:2501.12948, 2025. 6

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[25]

Deepeyesv2: Toward agentic multimodal model

Jack Hong, Chenxiao Zhao, ChengLin Zhu, Weiheng Lu, Guohai Xu, and Xing Yu. Deepeyesv2: Toward agentic mul- timodal model.arXiv preprint arXiv:2511.05271, 2025. 2

-

[26]

Glm-4.1 v-thinking: Towards versatile multi- modal reasoning with scalable reinforcement learning.arXiv e-prints, pages arXiv–2507, 2025

Wenyi Hong, Wenmeng Yu, Xiaotao Gu, Guo Wang, Guob- ing Gan, Haomiao Tang, Jiale Cheng, Ji Qi, Junhui Ji, Li- 9 hang Pan, et al. Glm-4.1 v-thinking: Towards versatile multi- modal reasoning with scalable reinforcement learning.arXiv e-prints, pages arXiv–2507, 2025. 2

2025

-

[27]

Glm-4.1 v-thinking: Towards versatile multi- modal reasoning with scalable reinforcement learning.arXiv e-prints, pages arXiv–2507, 2025

Wenyi Hong, Wenmeng Yu, Xiaotao Gu, Guo Wang, Guob- ing Gan, Haomiao Tang, Jiale Cheng, Ji Qi, Junhui Ji, Li- hang Pan, et al. Glm-4.1 v-thinking: Towards versatile multi- modal reasoning with scalable reinforcement learning.arXiv e-prints, pages arXiv–2507, 2025. 4

2025

-

[29]

Aaron Hurst, Adam Lerer, Adam P Goucher, Adam Perel- man, Aditya Ramesh, Aidan Clark, AJ Ostrow, Akila Weli- hinda, Alan Hayes, Alec Radford, et al. Gpt-4o system card. arXiv preprint arXiv:2410.21276, 2024. 8

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[30]

OpenVLA: An Open-Source Vision-Language-Action Model

Moo Jin Kim, Karl Pertsch, Siddharth Karamcheti, Ted Xiao, Ashwin Balakrishna, Suraj Nair, Rafael Rafailov, Ethan Foster, Grace Lam, Pannag Sanketi, et al. Openvla: An open-source vision-language-action model.arXiv preprint arXiv:2406.09246, 2024. 2, 8

work page internal anchor Pith review arXiv 2024

-

[31]

Llava-next: What else influences visual instruction tun- ing beyond data?, 2024

Bo Li, Hao Zhang, Kaichen Zhang, Dong Guo, Yuanhan Zhang, Renrui Zhang, Feng Li, Ziwei Liu, and Chunyuan Li. Llava-next: What else influences visual instruction tun- ing beyond data?, 2024. 4

2024

-

[32]

LLaVA-OneVision: Easy Visual Task Transfer

Bo Li, Yuanhan Zhang, Dong Guo, Renrui Zhang, Feng Li, Hao Zhang, Kaichen Zhang, Peiyuan Zhang, Yanwei Li, Zi- wei Liu, et al. Llava-onevision: Easy visual task transfer. arXiv preprint arXiv:2408.03326, 2024. 8

work page internal anchor Pith review arXiv 2024

-

[33]

Topviewrs: Vision-language models as top-view spatial reasoners

Chengzu Li, Caiqi Zhang, Han Zhou, Nigel Collier, Anna Korhonen, and Ivan Vuli ´c. Topviewrs: Vision-language models as top-view spatial reasoners. InEMNLP, 2024. 8

2024

-

[34]

Blip-2: Bootstrapping language-image pre-training with frozen image encoders and large language models

Junnan Li, Dongxu Li, Silvio Savarese, and Steven Hoi. Blip-2: Bootstrapping language-image pre-training with frozen image encoders and large language models. InICML,

-

[35]

VideoChat: Chat-Centric Video Understanding

KunChang Li, Yinan He, Yi Wang, Yizhuo Li, Wenhai Wang, Ping Luo, Yali Wang, Limin Wang, and Yu Qiao. Videochat: Chat-centric video understanding.arXiv preprint arXiv:2305.06355, 2023. 8

work page internal anchor Pith review arXiv 2023

-

[36]

Llama-vid: An image is worth 2 tokens in large language models

Yanwei Li, Chengyao Wang, and Jiaya Jia. Llama-vid: An image is worth 2 tokens in large language models. InECCV,

-

[37]

Sti-bench: Are mllms ready for precise spatial-temporal world understanding? InICCV,

Yun Li, Yiming Zhang, Tao Lin, XiangRui Liu, Wenxiao Cai, Zheng Liu, and Bo Zhao. Sti-bench: Are mllms ready for precise spatial-temporal world understanding? InICCV,

-

[38]

Coarse correspondences boost spatial-temporal reasoning in multimodal language model

Benlin Liu, Yuhao Dong, Yiqin Wang, Zixian Ma, Yansong Tang, Luming Tang, Yongming Rao, Wei-Chiu Ma, and Ran- jay Krishna. Coarse correspondences boost spatial-temporal reasoning in multimodal language model. InCVPR, 2025. 8

2025

-

[39]

Chengwen Liu, Xiaomin Yu, Zhuoyue Chang, Zhe Huang, Shuo Zhang, Heng Lian, Kunyi Wang, Rui Xu, Sen Hu, Jianheng Hou, et al. Watching, reasoning, and searching: A video deep research benchmark on open web for agentic video reasoning.arXiv preprint arXiv:2601.06943, 2026. 8

-

[40]

Visual instruction tuning.Advances in neural information processing systems, 36:34892–34916, 2023

Haotian Liu, Chunyuan Li, Qingyang Wu, and Yong Jae Lee. Visual instruction tuning.Advances in neural information processing systems, 36:34892–34916, 2023. 8

2023

-

[41]

Spatialreasoner: Towards explicit and generalizable 3d spatial reasoning

Wufei Ma, Yu-Cheng Chou, Qihao Liu, Xingrui Wang, Celso de Melo, Jianwen Xie, and Alan Yuille. Spatialreasoner: Towards explicit and generalizable 3d spatial reasoning. In NeurIPS, 2025. 8

2025

-

[42]

Openeqa: Embodied question answering in the era of foun- dation models

Arjun Majumdar, Anurag Ajay, Xiaohan Zhang, Pranav Putta, Sriram Yenamandra, Mikael Henaff, Sneha Silwal, Paul Mcvay, Oleksandr Maksymets, Sergio Arnaud, et al. Openeqa: Embodied question answering in the era of foun- dation models. InCVPR, 2024. 8

2024

-

[43]

Egoschema: A diagnostic benchmark for very long- form video language understanding.Advances in Neural In- formation Processing Systems, 36:46212–46244, 2023

Karttikeya Mangalam, Raiymbek Akshulakov, and Jitendra Malik. Egoschema: A diagnostic benchmark for very long- form video language understanding.Advances in Neural In- formation Processing Systems, 36:46212–46244, 2023. 8

2023

-

[44]

SmolVLM: Redefining small and efficient multimodal models

Andr ´es Marafioti, Orr Zohar, Miquel Farr ´e, Merve Noyan, Elie Bakouch, Pedro Cuenca, Cyril Zakka, Loubna Ben Allal, Anton Lozhkov, Nouamane Tazi, Vaibhav Srivastav, Joshua Lochner, Hugo Larcher, Mathieu Morlon, Lewis Tun- stall, Leandro von Werra, and Thomas Wolf. Smolvlm: Redefining small and efficient multimodal models.arXiv preprint arXiv:2504.05299...

work page internal anchor Pith review arXiv 2025

-

[45]

Deepseek-r1 thoughtology: Let's think about llm reasoning

Sara Vera Marjanovi ´c, Arkil Patel, Vaibhav Adlakha, Milad Aghajohari, Parishad BehnamGhader, Mehar Bhatia, Aditi Khandelwal, Austin Kraft, Benno Krojer, Xing Han L`u, et al. Deepseek-r1 thoughtology: Let’s think about llm reasoning. arXiv preprint arXiv:2504.07128, 2025. 6

-

[46]

M., Shiee, N., Grasch, P., Jia, C., Yang, Y ., and Gan, Z

Kartik Narayan, Yang Xu, Tian Cao, Kavya Nerella, Vishal M Patel, Navid Shiee, Peter Grasch, Chao Jia, Yin- fei Yang, and Zhe Gan. Deepmmsearch-r1: Empowering multimodal llms in multimodal web search.arXiv preprint arXiv:2510.12801, 2025. 2

-

[47]

Spatial cognition.Memory and Cogni- tive Processes, 3:113–163, 2004

Nora S Newcombe. Spatial cognition.Memory and Cogni- tive Processes, 3:113–163, 2004. 8

2004

-

[48]

Chaojun Ni, Cheng Chen, Xiaofeng Wang, Zheng Zhu, Wen- zhao Zheng, Boyuan Wang, Tianrun Chen, Guosheng Zhao, Haoyun Li, Zhehao Dong, et al. Swiftvla: Unlocking spa- tiotemporal dynamics for lightweight vla models at minimal overhead.arXiv preprint arXiv:2512.00903, 2025. 8

-

[49]

Oxford university press, 1978

John O’keefe and Lynn Nadel.The hippocampus as a cog- nitive map. Oxford university press, 1978. 8

1978

-

[50]

GPT-5 System Card

OpenAI. GPT-5 System Card. Online, 2025. Accessed: October 8, 2025. 2, 4

2025

-

[51]

Spacer: Rein- forcing mllms in video spatial reasoning.arXiv preprint arXiv:2504.01805, 2025

Kun Ouyang, Yuanxin Liu, Haoning Wu, Yi Liu, Hao Zhou, Jie Zhou, Fandong Meng, and Xu Sun. Spacer: Rein- forcing mllms in video spatial reasoning.arXiv preprint arXiv:2504.01805, 2025. 8

-

[52]

Learn- ing transferable visual models from natural language super- vision

Alec Radford, Jong Wook Kim, Chris Hallacy, Aditya Ramesh, Gabriel Goh, Sandhini Agarwal, Girish Sastry, Amanda Askell, Pamela Mishkin, Jack Clark, et al. Learn- ing transferable visual models from natural language super- vision. InICML, 2021. 8

2021

-

[53]

Does spatial cognition emerge in frontier models? InICLR, 2025

Santhosh Kumar Ramakrishnan, Erik Wijmans, Philipp Kraehenbuehl, and Vladlen Koltun. Does spatial cognition emerge in frontier models? InICLR, 2025. 8 10

2025

-

[54]

Plummer, Ranjay Krishna, Kuo-Hao Zeng, and Kate Saenko

Arijit Ray, Jiafei Duan, Ellis Brown, Reuben Tan, Dina Bashkirova, Rose Hendrix, Kiana Ehsani, Aniruddha Kem- bhavi, Bryan A. Plummer, Ranjay Krishna, Kuo-Hao Zeng, and Kate Saenko. SAT: Spatial Aptitude Training for Multi- modal Language Models. InCOLM, 2025. 8

2025

-

[55]

Roger N Shepard and Lynn A Cooper.Mental images and their transformations.The MIT Press, 1986. 8

1986

-

[56]

Moviechat: From dense token to sparse memory for long video understanding

Enxin Song, Wenhao Chai, Guanhong Wang, Yucheng Zhang, Haoyang Zhou, Feiyang Wu, Haozhe Chi, Xun Guo, Tian Ye, Yanting Zhang, et al. Moviechat: From dense token to sparse memory for long video understanding. InCVPR,

-

[57]

Gemini: A Family of Highly Capable Multimodal Models

Gemini Team, Rohan Anil, Sebastian Borgeaud, Jean- Baptiste Alayrac, Jiahui Yu, Radu Soricut, Johan Schalkwyk, Andrew M Dai, Anja Hauth, Katie Millican, et al. Gemini: a family of highly capable multimodal models.arXiv preprint arXiv:2312.11805, 2023. 8

work page internal anchor Pith review Pith/arXiv arXiv 2023

-

[58]

Gigabrain-0: A world model-powered vision-language-action model.arXiv e-prints, pages arXiv– 2510, 2025

GigaBrain Team, Angen Ye, Boyuan Wang, Chaojun Ni, Guan Huang, Guosheng Zhao, Haoyun Li, Jie Li, Jiagang Zhu, Lv Feng, et al. Gigabrain-0: A world model-powered vision-language-action model.arXiv e-prints, pages arXiv– 2510, 2025. 8

2025

-

[59]

Gigaworld-0: World models as data engine to empower embodied ai,

GigaWorld Team, Angen Ye, Boyuan Wang, Chaojun Ni, Guan Huang, Guosheng Zhao, Haoyun Li, Jiagang Zhu, Kerui Li, Mengyuan Xu, et al. Gigaworld-0: World mod- els as data engine to empower embodied ai.arXiv preprint arXiv:2511.19861, 2025. 8

-

[60]

Gemini Robotics: Bringing AI into the Physical World

Gemini Robotics Team, Saminda Abeyruwan, Joshua Ainslie, Jean-Baptiste Alayrac, Montserrat Gonzalez Are- nas, Travis Armstrong, Ashwin Balakrishna, Robert Baruch, Maria Bauza, Michiel Blokzijl, et al. Gemini robotics: Bringing ai into the physical world.arXiv preprint arXiv:2503.20020, 2025. 8

work page internal anchor Pith review arXiv 2025

-

[61]

Cambrian-1: A Fully Open, Vision-Centric Exploration of Multimodal LLMs

Peter Tong, Ellis Brown, Penghao Wu, Sanghyun Woo, Adithya Jairam Vedagiri Iyer, Sai Charitha Akula, Shusheng Yang, Jihan Yang, Manoj Middepogu, Ziteng Wang, Xichen Pan, Ziteng Wang, Rob Fergus, Yann LeCun, and Saining Xie. Cambrian-1: A Fully Open, Vision-Centric Exploration of Multimodal LLMs. InNeurIPS, 2024. 8

2024

-

[62]

Eyes wide shut? exploring the visual shortcomings of multimodal llms

Shengbang Tong, Zhuang Liu, Yuexiang Zhai, Yi Ma, Yann LeCun, and Saining Xie. Eyes wide shut? exploring the visual shortcomings of multimodal llms. InCVPR, 2024. 8

2024

-

[63]

LLaMA: Open and Efficient Foundation Language Models

Hugo Touvron, Thibaut Lavril, Gautier Izacard, Xavier Martinet, Marie-Anne Lachaux, Timoth´ee Lacroix, Baptiste Rozi`ere, Naman Goyal, Eric Hambro, Faisal Azhar, et al. Llama: Open and efficient foundation language models. arXiv preprint arXiv:2302.13971, 2023. 8

work page internal anchor Pith review Pith/arXiv arXiv 2023

-

[64]

Michael Tschannen, Alexey Gritsenko, Xiao Wang, Muham- mad Ferjad Naeem, Ibrahim Alabdulmohsin, Nikhil Parthasarathy, Talfan Evans, Lucas Beyer, Ye Xia, Basil Mustafa, et al. Siglip 2: Multilingual vision-language en- coders with improved semantic understanding, localization, and dense features.arXiv preprint arXiv:2502.14786, 2025. 8

work page internal anchor Pith review arXiv 2025

-

[65]

Boyuan Wang, Xinpan Meng, Xiaofeng Wang, Zheng Zhu, Angen Ye, Yang Wang, Zhiqin Yang, Chaojun Ni, Guan Huang, and Xingang Wang. Embodiedreamer: Advancing real2sim2real transfer for policy training via embodied world modeling.arXiv preprint arXiv:2507.05198, 2025. 8

-

[66]

Qwen2-VL: Enhancing Vision-Language Model's Perception of the World at Any Resolution

Peng Wang, Shuai Bai, Sinan Tan, Shijie Wang, Zhihao Fan, Jinze Bai, Keqin Chen, Xuejing Liu, Jialin Wang, Wenbin Ge, et al. Qwen2-vl: Enhancing vision-language model’s perception of the world at any resolution.arXiv preprint arXiv:2409.12191, 2024. 8

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[67]

Multimodal chain-of-thought reasoning: A comprehensive survey.arXiv preprint arXiv:2503.12605, 2025

Yaoting Wang, Shengqiong Wu, Yuecheng Zhang, Shuicheng Yan, Ziwei Liu, Jiebo Luo, and Hao Fei. Multimodal chain-of-thought reasoning: A comprehensive survey.arXiv preprint arXiv:2503.12605, 2025. 6

-

[68]

Yi Xin, Qi Qin, Siqi Luo, Kaiwen Zhu, Juncheng Yan, Yan Tai, Jiayi Lei, Yuewen Cao, Keqi Wang, Yibin Wang, et al. Lumina-dimoo: An omni diffusion large language model for multi-modal generation and understanding.arXiv preprint arXiv:2510.06308, 2025. 8

-

[69]

Runsen Xu, Weiyao Wang, Hao Tang, Xingyu Chen, Xi- aodong Wang, Fu-Jen Chu, Dahua Lin, Matt Feiszli, and Kevin J Liang. Multi-spatialmllm: Multi-frame spatial un- derstanding with multi-modal large language models.arXiv preprint arXiv:2505.17015, 2025. 8

-

[70]

An Yang, Anfeng Li, Baosong Yang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Yu, Chang Gao, Chengen Huang, Chenxu Lv, et al. Qwen3 technical report.arXiv preprint arXiv:2505.09388, 2025. 2, 4, 8

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[71]

V-IRL: Grounding virtual intelligence in real life

Jihan Yang, Runyu Ding, Ellis Brown, Xiaojuan Qi, and Saining Xie. V-IRL: Grounding virtual intelligence in real life. InECCV, 2024. 8

2024

-

[72]

Thinking in Space: How Multi- modal Large Language Models See, Remember and Recall Spaces

Jihan Yang, Shusheng Yang, Anjali Gupta, Rilyn Han, Li Fei-Fei, and Saining Xie. Thinking in Space: How Multi- modal Large Language Models See, Remember and Recall Spaces. InCVPR, 2024. 8

2024

-

[73]

Thinking in space: How mul- timodal large language models see, remember, and recall spaces

Jihan Yang, Shusheng Yang, Anjali W Gupta, Rilyn Han, Li Fei-Fei, and Saining Xie. Thinking in space: How mul- timodal large language models see, remember, and recall spaces. InProceedings of the Computer Vision and Pattern Recognition Conference, pages 10632–10643, 2025. 2

2025

-

[74]

Visual spatial tuning.arXiv preprint arXiv:2511.05491, 2025

Rui Yang, Ziyu Zhu, Yanwei Li, Jingjia Huang, Shen Yan, Siyuan Zhou, Zhe Liu, Xiangtai Li, Shuangye Li, Wenqian Wang, Yi Lin, and Hengshuang Zhao. Visual spatial tuning. arXiv preprint arXiv:2511.05491, 2025. 8

-

[75]

MAGIC-VQA: Multimodal and grounded inference with commonsense knowledge for visual question answering

Shuo Yang, Caren Han, Siwen Luo, and Eduard Hovy. MAGIC-VQA: Multimodal and grounded inference with commonsense knowledge for visual question answering. In Findings of the Association for Computational Linguistics: ACL 2025, pages 16967–16986, Vienna, Austria, 2025. As- sociation for Computational Linguistics. 8

2025

-

[76]

Multimodal commonsense knowledge distillation for visual question an- swering (student abstract)

Shuo Yang, Siwen Luo, and Soyeon Caren Han. Multimodal commonsense knowledge distillation for visual question an- swering (student abstract). InProceedings of the AAAI con- ference on artificial intelligence, pages 29545–29547, 2025. 8

2025

-

[77]

Cambrian-s: Towards spatial supersensing in video.arXiv preprint arXiv:2511.04670, 2025

Shusheng Yang, Jihan Yang, Pinzhi Huang, Ellis Brown, Zi- hao Yang, Yue Yu, Shengbang Tong, Zihan Zheng, Yifan Xu, Muhan Wang, et al. Cambrian-s: Towards spatial supersens- ing in video.arXiv preprint arXiv:2511.04670, 2025. 8

-

[78]

Shuo Yang, Soyeon Caren Han, Xueqi Ma, Yan Li, Moham- mad Reza Ghasemi Madani, and Eduard Hovy. Evotool: 11 Self-evolving tool-use policy optimization in llm agents via blame-aware mutation and diversity-aware selection.arXiv preprint arXiv:2603.04900, 2026. 8

-

[79]

Yuncong Yang, Jiageng Liu, Zheyuan Zhang, Siyuan Zhou, Reuben Tan, Jianwei Yang, Yilun Du, and Chuang Gan. Mindjourney: Test-time scaling with world models for spa- tial reasoning.arXiv preprint arXiv:2507.12508, 2025. 8

-

[80]

Seeing from another perspective: Evaluating multi-view understanding in mllms

Chun-Hsiao Yeh, Chenyu Wang, Shengbang Tong, Ta-Ying Cheng, Ruoyu Wang, Tianzhe Chu, Yuexiang Zhai, Yubei Chen, Shenghua Gao, and Yi Ma. Seeing from another perspective: Evaluating multi-view understanding in mllms. arXiv preprint arXiv:2504.15280, 2025. 8

-

[81]

Scannet++: A high-fidelity dataset of 3d indoor scenes

Chandan Yeshwanth, Yueh-Cheng Liu, Matthias Nießner, and Angela Dai. Scannet++: A high-fidelity dataset of 3d indoor scenes. InICCV, 2023. 2

2023

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.