Recognition: 2 theorem links

· Lean TheoremQuIVer: Rethinking ANN Graph Topology via Training-Free Binary Quantization

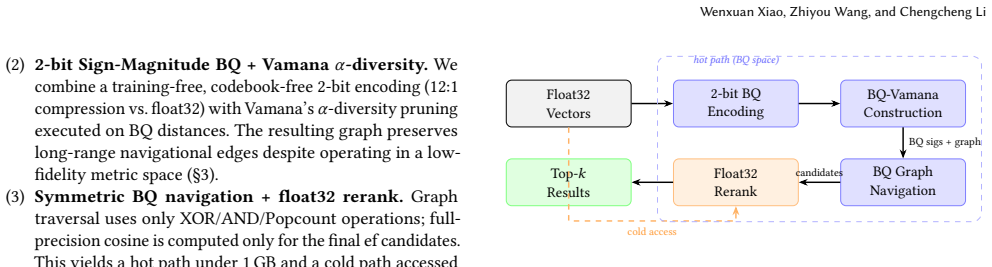

Pith reviewed 2026-05-12 01:48 UTC · model grok-4.3

The pith

QuIVer builds ANN graph indices entirely inside a 2-bit sign-magnitude binary quantization space.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

QuIVer performs edge selection, pruning, and graph navigation entirely in a 2-bit Sign-Magnitude BQ metric space by combining a memory-efficient encoding that preserves sign and magnitude strength, direct application of Vamana alpha-diversity pruning on the quantized distances, and symmetric BQ beam search that uses only bitwise operations before a small float32 rerank.

What carries the argument

The 2-bit Sign-Magnitude Binary Quantization metric space, which reduces each float32 vector to one-twelfth the storage while retaining enough relative distance information to support pruning and navigation decisions.

If this is right

- Index construction completes in 58 to 262 seconds while using under 1.3 GB of resident memory.

- Multi-threaded query throughput reaches 13K to 41K QPS at 88 percent or higher Recall@10 across tested datasets.

- At equal recall the method exceeds the throughput of DiskANN, hnswlib, and FAISS HNSW by factors of 2.5x to 4.9x on Cohere-1M.

- Performance remains stable when the beam width parameter increases, indicating that quantization noise mainly slows navigation rather than destroying graph quality.

Where Pith is reading between the lines

- The reported applicability boundary implies the technique may need separate handling or higher bit widths when data lack cosine-native structure or exhibit high intrinsic dimension.

- Because the final rerank uses only a small candidate set, the method could be combined with other post-processing filters without changing the core quantized graph.

- The fact that monotonic recall gains continue with larger ef suggests that modest increases in search effort can compensate for the information lost in two-bit encoding.

Load-bearing premise

That two-bit sign-magnitude encoding keeps enough distance ordering information for alpha-diversity pruning and beam search to produce a graph whose connectivity quality stays close to what full-precision construction would give, at least on cosine-native data with low effective dimension.

What would settle it

Measuring whether Recall@10 drops below 80 percent or construction time balloons when the same method is run on uniform random vectors or on embeddings whose effective dimensionality is known to be high.

Figures

read the original abstract

Approximate nearest neighbor (ANN) graph indices such as HNSW and Vamana construct graph topology in full-precision or high-fidelity quantized metric spaces, using binary quantization (BQ) only as a post-hoc search-time distance estimator. We ask whether BQ can build the graph itself. We present QuIVer (Quantized Index for Vector Retrieval), a training-free ANN graph index that performs edge selection, pruning, and graph navigation entirely in a 2-bit Sign-Magnitude BQ metric space. QuIVer combines: (i) a 2-bit encoding that preserves sign and magnitude strength at 1/12 the memory of float32 vectors; (ii) Vamana alpha-diversity pruning directly on BQ distances; and (iii) symmetric BQ beam search using XOR/AND/Popcount, followed by float32 reranking over a small candidate set. On six embedding datasets spanning 384--3072 dimensions, QuIVer achieves at least 88% Recall@10 at 13--41K multi-threaded QPS with 58--262-second construction and less than 1.3 GB hot memory. At matched recall on Cohere-1M, it outperforms the official DiskANN Rust implementation by 2.5--3.3x, hnswlib by 3.6--4.7x, and FAISS HNSW by 3.8--4.9x in multi-threaded throughput. Controlled experiments on additional datasets, including word vectors, CV features, uniform random vectors, multimodal CLIP embeddings, and low-rank synthetic data, delineate QuIVer's applicability boundary: high recall requires cosine-native distributions with low effective dimensionality, while monotonic recall gains with increasing ef suggest that BQ noise mainly affects navigation efficiency in the tested regimes.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces QuIVer, a training-free ANN graph index that performs edge selection, Vamana-style alpha-diversity pruning, and beam-search navigation entirely within a 2-bit sign-magnitude binary quantization (BQ) metric space (1/12 the memory of float32), followed by limited float32 reranking. It reports at least 88% Recall@10 at 13-41K multi-threaded QPS, 58-262s construction, and <1.3GB hot memory on six embedding datasets (384-3072 dims), outperforming DiskANN, hnswlib, and FAISS HNSW at matched recall on Cohere-1M; controlled experiments on word vectors, CV features, CLIP, and synthetic data delineate an applicability boundary to cosine-native low-effective-dimensionality data.

Significance. If the central empirical claims hold under tighter controls, QuIVer would demonstrate that low-resolution BQ can replace full-precision operations for graph topology construction in ANN indices, yielding substantial memory and throughput gains without training. The training-free design, symmetric XOR/AND/Popcount navigation, and explicit applicability boundary are strengths; the outperformance numbers versus production baselines on real embedding data would be of practical interest to database and retrieval systems.

major comments (2)

- [Abstract] Abstract and experimental results: the headline recall/QPS figures are obtained after float32 reranking over a small candidate set, but the manuscript provides no ablation or direct measurement isolating the quality of the BQ-constructed graph itself (e.g., Recall@10 or beam-search efficiency without reranking, or graph statistics such as average degree and connectivity relative to a full-precision Vamana baseline). This is load-bearing for the claim that 2-bit distances suffice for alpha-diversity pruning and navigation.

- [Experimental Evaluation] Experimental evaluation: while the applicability boundary to low-effective-dimensionality cosine data is noted, there is no controlled test of whether 2-bit sign-magnitude distances produce frequent ties or distance inversions that degrade pruning decisions, nor any comparison of the resulting graph topology's navigability to full-precision construction on the same data.

minor comments (2)

- The reported recall and QPS numbers lack error bars or multiple runs with different seeds, and exact dataset cardinalities, effective dimensionality statistics, and full hardware/protocol details (thread counts, CPU model, exact ef values) are not provided.

- Notation for the 2-bit sign-magnitude encoding and the precise definition of the BQ distance (XOR/AND/Popcount) could be formalized with an equation or pseudocode for reproducibility.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed review. The comments correctly identify opportunities to strengthen the isolation of binary quantization effects on graph topology, and we will revise the manuscript accordingly to address these points directly.

read point-by-point responses

-

Referee: [Abstract] Abstract and experimental results: the headline recall/QPS figures are obtained after float32 reranking over a small candidate set, but the manuscript provides no ablation or direct measurement isolating the quality of the BQ-constructed graph itself (e.g., Recall@10 or beam-search efficiency without reranking, or graph statistics such as average degree and connectivity relative to a full-precision Vamana baseline). This is load-bearing for the claim that 2-bit distances suffice for alpha-diversity pruning and navigation.

Authors: We agree that isolating the BQ-constructed graph's intrinsic quality is important for validating the core claim. While the reported results reflect practical end-to-end performance (with reranking as is standard for high-recall ANN), the manuscript does not currently include the requested direct ablations. In the revision we will add: Recall@10 and beam-search efficiency measured exclusively in the BQ space without float32 reranking; and comparative graph statistics (average degree, connectivity, and related topology measures) against a full-precision Vamana baseline on the same datasets. These will appear in the experimental evaluation section. revision: yes

-

Referee: [Experimental Evaluation] Experimental evaluation: while the applicability boundary to low-effective-dimensionality cosine data is noted, there is no controlled test of whether 2-bit sign-magnitude distances produce frequent ties or distance inversions that degrade pruning decisions, nor any comparison of the resulting graph topology's navigability to full-precision construction on the same data.

Authors: The referee accurately notes that our existing controlled experiments on applicability boundaries do not explicitly quantify ties or distance inversions, nor provide a side-by-side navigability comparison. We will add these analyses in revision: measurements of tie frequency and inversion rates between 2-bit sign-magnitude and full-precision distances; and direct comparisons of graph navigability (beam-search performance, connectivity metrics) for BQ-constructed versus full-precision graphs on identical data. This will be incorporated into the experimental section to more rigorously support the use of BQ for pruning and navigation. revision: yes

Circularity Check

No circularity; empirical method with direct baseline comparisons

full rationale

The paper introduces QuIVer as a training-free construction procedure that applies Vamana alpha-diversity pruning and beam search directly in 2-bit sign-magnitude BQ space, followed by float32 reranking. All headline performance numbers (Recall@10, QPS, construction time, memory) are obtained from controlled experiments on six embedding datasets and compared against external implementations (DiskANN, hnswlib, FAISS HNSW). No equations derive a target quantity from parameters fitted to that same quantity, no self-citation supplies a load-bearing uniqueness theorem, and no ansatz is smuggled in. The central claim that BQ distances suffice for high-quality topology is validated by external recall measurements rather than by internal redefinition or statistical forcing. The derivation chain is therefore self-contained against external benchmarks.

Axiom & Free-Parameter Ledger

free parameters (2)

- 2-bit sign-magnitude encoding

- Vamana alpha parameter

axioms (1)

- domain assumption Vamana alpha-diversity pruning remains effective when distances are replaced by 2-bit BQ distances

Lean theorems connected to this paper

-

IndisputableMonolith/Cost/FunctionalEquation.leanwashburn_uniqueness_aczel unclearQuIVer performs edge selection, pruning, and graph navigation entirely in a 2-bit Sign-Magnitude BQ metric space

-

IndisputableMonolith/Foundation/AlphaCoordinateFixation.leanalpha_pin_under_high_calibration unclearVamana alpha-diversity pruning executed directly on BQ distances

Reference graph

Works this paper leans on

- [1]

-

[2]

T. Wang and P. Isola. Understanding contrastive representation learning through alignment and uniformity on the hypersphere. InICML, pages 9929–9939, 2020

work page 2020

-

[3]

Representation Learning with Contrastive Predictive Coding

A. van den Oord, Y. Li, and O. Vinyals. Representation learning with contrastive predictive coding.arXiv preprint arXiv:1807.03748, 2018

work page internal anchor Pith review Pith/arXiv arXiv 2018

-

[4]

Y. A. Malkov and D. A. Yashunin. Efficient and robust approximate nearest neighbor search using Hierarchical Navigable Small World graphs.IEEE TPAMI, 42(4):824–836, 2020

work page 2020

-

[5]

S. J. Subramanya et al. DiskANN: Fast accurate billion-point nearest neighbor search on a single node. InNeurIPS, 2019

work page 2019

- [6]

- [7]

-

[8]

T. Ge, K. He, Q. Ke, and J. Sun. Optimized product quantization for approximate nearest neighbor search. InCVPR, pages 2946–2953, 2013

work page 2013

-

[9]

R. Guo, P. Sun, E. Lindgren, Q. Geng, D. Simcha, F. Chern, and S. Kumar. Acceler- ating large-scale inference with anisotropic vector quantization. InICML, pages 3887–3896, 2020

work page 2020

-

[10]

M. S. Charikar. Similarity estimation techniques from rounding algorithms. In STOC, pages 380–388, 2002

work page 2002

-

[11]

P. Indyk and R. Motwani. Approximate nearest neighbors: Towards removing the curse of dimensionality. InSTOC, pages 604–613, 1998

work page 1998

- [12]

-

[13]

M. X. Goemans and D. P. Williamson. Improved approximation algorithms for maximum cut and satisfiability problems using semidefinite programming.Jour- nal of the ACM, 42(6):1115–1145, 1995

work page 1995

- [14]

-

[15]

C. Fu, C. Xiang, C. Wang, and D. Cai. Fast approximate nearest neighbor search with the navigating spreading-out graph. InProc. VLDB Endowment, 12(5):461– 474, 2019

work page 2019

- [16]

-

[17]

S. N. Bernstein. On a modification of Chebyshev’s inequality and of the error formula of Laplace.Annals of Science of the Ukrainian National Academy of Sciences, 1:38–49, 1924

work page 1924

-

[18]

S. Boucheron, G. Lugosi, and P. Massart.Concentration Inequalities: A Nonasymp- totic Theory of Independence. Oxford University Press, 2013

work page 2013

-

[19]

D. A. Freedman. On tail probabilities for martingales.Annals of Probability, 3(1):100–118, 1975

work page 1975

- [20]

-

[21]

Vershynin.High-Dimensional Probability: An Introduction with Applications in Data Science

R. Vershynin.High-Dimensional Probability: An Introduction with Applications in Data Science. Cambridge University Press, 2018

work page 2018

-

[22]

J. Chen, S. Xiao, P. Zhang, K. Luo, D. Lian, and Z. Liu. BGE M3-Embedding: Multi-lingual, multi-functionality, multi-granularity text embeddings through self-knowledge distillation.arXiv preprint arXiv:2402.03216, 2024

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[23]

Wolt product embeddings dataset

Wolt Engineering. Wolt product embeddings dataset. https://huggingface.co/ datasets/Wolt/CLIP-ViT-B-32-Product-images-v1, 2024

work page 2024

- [24]

-

[25]

M. Aumüller, E. Bernhardsson, and A. Faithfull. ANN-Benchmarks: A bench- marking tool for approximate nearest neighbor algorithms.Information Systems, 87:101374, 2020

work page 2020

-

[26]

M. Aumüller and M. Ceccarello. Recent approaches and trends in approximate nearest neighbor search, with remarks on benchmarking.Data Engineering, pages 27–38, 2023

work page 2023

-

[27]

W. Li, Y. Zhang, Y. Sun, W. Wang, M. Li, W. Zhang, and X. Lin. Approximate nearest neighbor search on high dimensional data—experiments, analyses, and improvement.IEEE TKDE, 32(8):1475–1488, 2020

work page 2020

-

[28]

J. Johnson, M. Douze, and H. Jégou. Billion-scale similarity search with GPUs. IEEE Trans. Big Data, 7(3):535–547, 2021

work page 2021

- [29]

-

[30]

D. R. Karger and M. Ruhl. Finding nearest neighbors in growth-restricted metrics. InSTOC, pages 741–750, 2002

work page 2002

-

[31]

M. Wang, X. Xu, Q. Yue, and Y. Wang. A comprehensive survey and experimental comparison of graph-based approximate nearest neighbor search.Proc. VLDB Endow., 14(11):1964–1978, 2021

work page 1964

- [32]

- [33]

- [34]

-

[35]

F. André, A.-M. Kermarrec, and N. Le Scouarnec. Cache locality is not enough: High-performance nearest neighbor search with product quantization fast scan. Proc. VLDB Endow., 9(4):288–299, 2015

work page 2015

-

[36]

F. André, A.-M. Kermarrec, and N. Le Scouarnec. Accelerated nearest neighbor search with quick ADC. InICMR, pages 159–166, 2017

work page 2017

-

[37]

C. Aguerrebere, I. S. Bhati, M. Hildebrand, M. Tepper, and T. L. Willke. Similar- ity search in the blink of an eye with compressed indices.Proc. VLDB Endow., 16(11):3433–3446, 2023

work page 2023

-

[38]

B. Trent. Better Binary Quantization (BBQ) in Lucene and Elasticsearch. Elas- tic Search Labs Blog, November 2024. https://www.elastic.co/search-labs/blog/ better-binary-quantization-lucene-elasticsearch

work page 2024

-

[39]

OpenSearch Project. Vector quantization. OpenSearch Documentation, 2024. https://docs.opensearch.org/latest/vector-search/optimizing-storage/knn- vector-quantization/

work page 2024

-

[40]

N. Ailon and B. Chazelle. The fast Johnson–Lindenstrauss transform and approx- imate nearest neighbors.SIAM J. Computing, 39(1):302–322, 2009. QuIVer: Rethinking ANN Graph Topology via Training-Free Binary Quantization A Detailed Proofs and Derivations This appendix provides complete derivations for the theoretical results stated in the main text. We rest...

work page 2009

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.