A Large-Scale Observational Study on Obtaining Lightweight, Randomized Weekly Student Feedback

Pith reviewed 2026-05-08 18:13 UTC · model grok-4.3

The pith

Continued use of lightweight randomized feedback is associated with learning-rating gains of 0.045 to 0.048 points per additional term in small- and medium-enrollment courses.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

First-time HRCF use shows no measurable link to changes in average student ratings. Among small- and medium-enrollment offerings, sustained use correlates with rating gains of 0.045 to 0.048 points per additional term specifically on learning-related evaluation items. Large-enrollment courses and items measuring instructional quality or course organization exhibit no statistically significant associations.
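To give a sense of scale, the per-term figure can be extrapolated over several terms. This is only a sketch: it assumes the association is linear in the number of additional terms $k$, which the abstract does not state, and the symbols $\hat\beta$ and $\Delta(k)$ are introduced here purely for illustration.

$$\Delta(k) = \hat\beta \, k, \qquad \hat\beta \in [0.045,\ 0.048]$$

$$\Delta(3) \in [3 \times 0.045,\ 3 \times 0.048] = [0.135,\ 0.144]$$

Under that assumption, three additional terms of continued use would correspond to roughly 0.14 points on learning-related items. The rating scale itself is not given in this summary, so the practical size of that shift cannot be judged from these numbers alone.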

What carries the argument

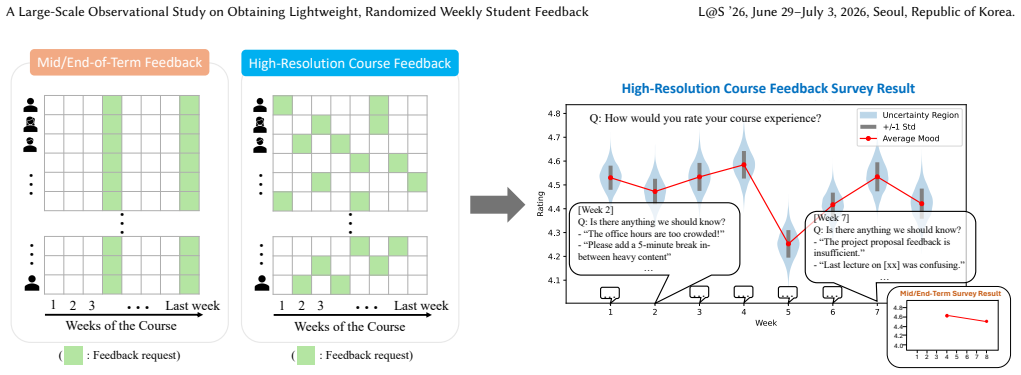

High-Resolution Course Feedback (HRCF): a lightweight mechanism that surveys each student a small number of times per term during randomly selected weeks, keeping participation high while still supplying instructors with frequent input.
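The scheduling idea can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the function names, the choice of two surveys per student, the ten-week term, and the independent per-student draw are all assumptions made for the example.

```python
import random

def assign_survey_weeks(student_ids, num_weeks, surveys_per_student, seed=None):
    """Pick, independently for each student, the weeks in which they are surveyed."""
    if surveys_per_student > num_weeks:
        raise ValueError("cannot survey a student more often than there are weeks")
    rng = random.Random(seed)
    weeks = range(1, num_weeks + 1)
    # sample() draws without replacement, so no student gets the same week twice.
    return {sid: sorted(rng.sample(weeks, surveys_per_student)) for sid in student_ids}

def respondents_by_week(schedule, num_weeks):
    """Invert the schedule: which students are asked for feedback in each week."""
    by_week = {week: [] for week in range(1, num_weeks + 1)}
    for sid, weeks in schedule.items():
        for week in weeks:
            by_week[week].append(sid)
    return by_week

# Hypothetical course: 120 students, 10-week term, 2 surveys per student.
students = [f"s{i}" for i in range(120)]
schedule = assign_survey_weeks(students, num_weeks=10, surveys_per_student=2, seed=0)
by_week = respondents_by_week(schedule, num_weeks=10)
```

With these hypothetical settings each student answers only twice, while the instructor still receives responses from about a fifth of the class in an average week, which is the frequency-versus-burden tradeoff the mechanism targets.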

If this is right

- Instructors of smaller courses may observe gradual improvements in students' reported learning experiences by maintaining HRCF across terms.

- The feedback approach does not appear to shift ratings on teaching quality or course organization.

- Large-enrollment courses show no detectable rating changes tied to HRCF adoption or duration.

- Single-term use alone does not produce measurable shifts in end-of-term evaluations.

Where Pith is reading between the lines

- If the observed association proves causal, sustained HRCF could serve as a low-effort iterative improvement loop for learning-focused aspects of smaller courses.

- The absence of effects in large classes suggests the mechanism may need scaling adjustments, such as different randomization or aggregation methods, to reach similar outcomes.

- Combining HRCF with targeted interventions on organization or instruction could test whether broader rating gains become possible.

- Replicating the analysis in non-computer-science disciplines would clarify whether the pattern generalizes beyond technical subjects.

Load-bearing premise

The regression assumes that instructors who adopt and keep using HRCF are not simultaneously changing other unmeasured factors, such as their own effort or course design, that could independently improve student ratings.

What would settle it

A randomized controlled trial that assigns instructors to use HRCF for one versus multiple consecutive terms while holding other course elements fixed, then tracks whether rating trajectories diverge on the learning items.
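The assignment step of such a trial could look like the following sketch. The arm names, the equal split, and the instructor identifiers are hypothetical; a real design would likely also stratify by enrollment size, which is omitted here.

```python
import random

def assign_arms(instructors, seed=None):
    """Randomly split instructors into a one-term arm and a multi-term arm."""
    rng = random.Random(seed)
    shuffled = list(instructors)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    # With an odd count, the extra instructor lands in the multi-term arm.
    return {"one_term": shuffled[:half], "multi_term": shuffled[half:]}

arms = assign_arms([f"instructor_{i}" for i in range(20)], seed=42)
```

Because the split is random, unmeasured traits such as effort are balanced across arms in expectation, so a divergence in learning-item ratings could be attributed to the duration of HRCF use.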

Original abstract

Conventional methods of obtaining student feedback on course experience face a fundamental tradeoff between feedback frequency and quality: as feedback requests become more frequent, participation often declines, and responses become less thoughtful over time. To obtain both timely and thoughtful feedback from students, Kim and Piech (Learning at Scale, 2023) recently proposed a simple, lightweight course feedback mechanism: surveying each student a small number of times per term during randomly selected weeks. Named High-Resolution Course Feedback (HRCF), this method has been shown to elicit feedback that instructors find helpful without imposing excessive burden on students. An important question, however, remains unanswered: is the use of this simple method associated with measurable improvements in students' actual course experiences? We study HRCF use across 103 course offerings, totaling 24,216 student enrollments, over four years from Fall 2021 through Fall 2025, spanning 42 unique computer science courses at an R1 institution. Through a regression analysis of four end-of-term student evaluation items for these courses, we find that first-time use of HRCF is not associated with a measurable change in average student ratings. However, among small- and medium-enrollment (<250 students) course offerings, continued HRCF use is associated with average rating increases of 0.045 to 0.048 points per additional term of use for learning-related items. We observe no statistically significant associations for large-enrollment (250 or more students) course offerings, nor for items measuring instructional quality and course organization. Together, these findings suggest that sustained HRCF use may support improvements in students' learning experiences, but that further design enhancements may be needed to produce measurable improvements in instructional quality and course organization.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper conducts a large-scale observational study of High-Resolution Course Feedback (HRCF) across 103 computer science course offerings involving 24,216 student enrollments over four years (Fall 2021–Fall 2025). Through regression analysis of four end-of-term student evaluation items, it reports no measurable association with first-time HRCF use. Among small- and medium-enrollment courses (<250 students), continued HRCF use is associated with average rating increases of 0.045 to 0.048 points per additional term on learning-related items. No statistically significant associations are found for large-enrollment courses or for items measuring instructional quality and course organization.

Significance. If the associations prove robust, the study supplies valuable large-scale observational evidence that sustained use of lightweight randomized feedback tools can correlate with modest improvements in students' reported learning experiences, particularly in smaller classes. The dataset size and focus on specific outcome measures represent strengths that could guide educational technology adoption and course design in computer science and related fields.

major comments (2)

- [Regression Analysis / Results] The central claim of 0.045–0.048 point gains per additional term of continued HRCF use in <250-student courses rests on a regression that conditions on observed course characteristics but treats continued adoption as conditionally exogenous. Without instructor or course fixed effects, the per-term coefficient is vulnerable to selection bias from unmeasured factors (e.g., instructor effort or concurrent redesigns) that also affect ratings. Please specify the exact model (covariates, fixed effects, clustering) and report robustness checks such as within-instructor comparisons.

- [Discussion / Interpretation] The interpretation that sustained HRCF use 'may support improvements in students' learning experiences' (abstract and discussion) assumes the observed association is attributable to the feedback mechanism. Given the purely observational design across 103 offerings and the noted weakest assumption, this causal-leaning language requires stronger qualification or supporting analyses (e.g., difference-in-differences or matching on instructor trajectories).

minor comments (1)

- [Abstract] The abstract omits all details on regression specification, covariates, or robustness checks, forcing readers to consult the full methods section to evaluate the reported associations.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback on our observational study. We agree that the regression specification and potential selection biases require clearer exposition, and that the interpretive language must be carefully qualified to reflect the correlational nature of the findings. We will make revisions to address both points, including adding model details, robustness checks, and tempered language in the abstract and discussion.

Point-by-point responses

- Referee: [Regression Analysis / Results] The central claim of 0.045–0.048 point gains per additional term of continued HRCF use in <250-student courses rests on a regression that conditions on observed course characteristics but treats continued adoption as conditionally exogenous. Without instructor or course fixed effects, the per-term coefficient is vulnerable to selection bias from unmeasured factors (e.g., instructor effort or concurrent redesigns) that also affect ratings. Please specify the exact model (covariates, fixed effects, clustering) and report robustness checks such as within-instructor comparisons.

Authors: We will revise the Methods and Results sections to fully specify the model: an OLS regression with the end-of-term evaluation item as the outcome, a binary indicator for first-time HRCF use, a count of consecutive prior terms of HRCF use (the coefficient of interest), controls for enrollment size, course level, and term fixed effects, with standard errors clustered at the course level. We did not include instructor fixed effects in the main specification because most instructors contribute only one or two offerings, which would severely reduce power and prevent identification of the continued-use effect. We acknowledge the risk of bias from time-varying unobservables and will add explicit discussion of this limitation. For robustness, we will add (1) course fixed effects for the subset of courses with repeated offerings and (2) within-instructor comparisons for instructors with multiple terms of data, reporting these results in the revision.

revision: yes

- Referee: [Discussion / Interpretation] The interpretation that sustained HRCF use 'may support improvements in students' learning experiences' (abstract and discussion) assumes the observed association is attributable to the feedback mechanism. Given the purely observational design across 103 offerings and the noted weakest assumption, this causal-leaning language requires stronger qualification or supporting analyses (e.g., difference-in-differences or matching on instructor trajectories).

Authors: We agree that the current phrasing risks implying causation. We will revise the abstract, discussion, and conclusion to state that the results show associations consistent with modest gains in learning-related ratings for sustained use in smaller courses, but that these could reflect selection or other unmeasured factors. We will remove or qualify any language suggesting the mechanism directly causes the changes and will note that stronger causal evidence would require designs such as difference-in-differences or instructor fixed effects. We will explore and report such checks in the revision where the data permit.

revision: yes
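The specification described in the first response can be written out as a single equation. This is a reading of the rebuttal's verbal description, not a formula taken from the paper; all symbol names are introduced here as assumptions, with $c$ indexing courses and $t$ indexing terms.

$$y_{ct} = \alpha + \beta_1\,\mathrm{First}_{ct} + \beta_2\,\mathrm{PriorTerms}_{ct} + \gamma_1\,\mathrm{Enroll}_{ct} + \gamma_2^{\top}\,\mathrm{Level}_{ct} + \tau_t + \varepsilon_{ct}$$

Here $y_{ct}$ is the average rating on one evaluation item, $\mathrm{First}_{ct}$ is the first-time-use indicator, $\mathrm{PriorTerms}_{ct}$ is the count of consecutive prior terms of HRCF use, $\tau_t$ is a term fixed effect, and standard errors are clustered by course $c$. The reported 0.045 to 0.048 gains would correspond to $\beta_2$ estimated on the subsample with fewer than 250 students. The robustness checks the authors promise would amount to adding a course or instructor fixed effect to the right-hand side.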

Circularity Check

No significant circularity: observational regression on new data is self-contained

Full rationale

The paper reports regression coefficients for end-of-term ratings across 103 new course offerings (24,216 enrollments) spanning 2021–2025. These coefficients (0.045–0.048 point per-term gains for learning items in <250-student courses) are obtained by fitting standard models to the collected rating data and observed course characteristics. The 2023 Kim & Piech citation is used only to define the HRCF intervention itself; it supplies neither the outcome variables nor the regression estimates analyzed here. No equation reduces a claimed result to a fitted parameter by construction, no uniqueness theorem is imported from prior self-work, and no ansatz or renaming of known patterns occurs. The derivation chain therefore remains independent of its inputs.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: Linear regression can recover unbiased associations between HRCF use and end-of-term ratings after including observed covariates.

Reference graph

Works this paper leans on

- [1] Robert D Abbott, Donald H Wulff, Jody D Nyquist, Vickie A Ropp, and Carla W Hess. 1990. Satisfaction with processes of collecting student opinions about instruction: The student perspective. Journal of Educational Psychology 82, 2 (1990), 201.

- [2] Philip C Abrami, Sylvia d’Apollonia, and Peter A Cohen. 1990. Validity of student ratings of instruction: What we know and what we do not. Journal of Educational Psychology 82, 2 (1990), 219.

- [3] Meredith JD Adams and Paul D Umbach. 2012. Nonresponse and online student evaluations of teaching: Understanding the influence of salience, fatigue, and academic environments. Research in Higher Education 53, 5 (2012), 576–591.

- [4] Linet Arthur. 2009. From performativity to professionalism: Lecturers’ responses to student feedback. Teaching in Higher Education 14, 4 (2009), 441–454.

- [5] Larry A Braskamp and John C Ory. 1994. Assessing Faculty Work: Enhancing Individual and Institutional Performance. Jossey-Bass Higher and Adult Education Series. ERIC.

- [6] Michael J Brown. 2008. Student perceptions of teaching evaluations. Journal of Instructional Psychology 35, 2 (2008), 177–182.

- [7] David Carless and David Boud. 2018. The development of student feedback literacy: Enabling uptake of feedback. Assessment & Evaluation in Higher Education 43, 8 (2018), 1315–1325.

- [8] John A Centra. 1993. Reflective Faculty Evaluation: Enhancing Teaching and Determining Faculty Effectiveness. The Jossey-Bass Higher and Adult Education Series. ERIC.

- [9] John A Centra. 2003. Will teachers receive higher student evaluations by giving higher grades and less course work? Research in Higher Education 44, 5 (2003), 495–518.

- [10] John A Centra and F Reid Creech. 1976. The relationship between student, teacher, and course characteristics and student ratings of teacher effectiveness. Project Report 76, 1 (1976).

- [11] Yining Chen and Leon B Hoshower. 1998. Assessing student motivation to participate in teaching evaluations: An application of expectancy theory. Issues in Accounting Education 13, 3 (1998), 531.

- [12] Yining Chen and Leon B Hoshower. 2003. Student evaluation of teaching effectiveness: An assessment of student perception and motivation. Assessment & Evaluation in Higher Education 28, 1 (2003), 71–88.

- [13] Peter A Cohen. 1980. Effectiveness of student-rating feedback for improving college instruction: A meta-analysis of findings. Research in Higher Education 13, 4 (1980), 321–341.

- [14] Peter A Cohen. 1981. Student ratings of instruction and student achievement: A meta-analysis of multisection validity studies. Review of Educational Research 51, 3 (1981), 281–309.

- [15] K Patricia Cross and Thomas A Angelo. 1988. Classroom Assessment Techniques. A Handbook for Faculty. (1988).

- [16] Joe Cuseo. 2007. The empirical case against large class size: Adverse effects on the teaching, learning, and retention of first-year students. The Journal of Faculty Development 21, 1 (2007), 5–21.

- [17] Miriam Rosalyn Diamond. 2004. The usefulness of structured mid-term feedback as a catalyst for change in higher education classes. Active Learning in Higher Education 5, 3 (2004), 217–231.

- [18] Laura A Driscoll and William L Goodwin. 1979. The effects of varying information about use and disposition of results on university students’ evaluations of faculty and courses. American Educational Research Journal 16, 1 (1979), 25–37.

- [19] Howard Ebmeier. 2003. How supervision influences teacher efficacy and commitment: An investigation of a path model. Journal of Curriculum and Supervision 18, 2 (2003), 110–141.

- [20] Kenneth A Feldman. 1992. College students’ views of male and female college teachers: Part I—Evidence from the social laboratory and experiments. Research in Higher Education 33 (1992), 317–375.

- [21] Jonas Flodén. 2017. The impact of student feedback on teaching in higher education. Assessment & Evaluation in Higher Education 42, 7 (2017), 1054–1068.

- [22] Pamela Gravestock and Emily Gregor-Greenleaf. 2008. Student course evaluations: Research, models and trends. Higher Education Quality Council of Ontario, Toronto.

- [23] Anthony G Greenwald and Gerald M Gillmore. 1997. Grading leniency is a removable contaminant of student ratings. American Psychologist 52, 11 (1997), 1209.

- [24] Robert M Groves, Floyd J Fowler Jr, Mick P Couper, James M Lepkowski, Eleanor Singer, and Roger Tourangeau. 2011. Survey methodology. John Wiley & Sons.

- [25] Alastair Irons and Sam Elkington. 2021. Enhancing learning through formative assessment and feedback. Routledge.

- [26] Carolin S Keutzer. 1993. Midterm evaluation of teaching provides helpful feedback to instructors. Teaching of Psychology 20, 4 (1993), 238–240.

- [27] Yunsung Kim and Chris Piech. 2023. High-resolution course feedback: Timely feedback mechanism for instructors. In Proceedings of the Tenth ACM Conference on Learning @ Scale. 81–91.

- [28] James A Kulik. 2001. Student ratings: Validity, utility, and controversy. New Directions for Institutional Research 2001, 109 (2001), 9–25.

- [29] Henrik Levinsson, August Nilsson, Katarina Mårtensson, and Stefan D Persson. 2024. Course design as a stronger predictor of student evaluation of quality and student engagement than teacher ratings. Higher Education (2024), 1–17.

- [31] Karron G Lewis. 2001. Using midsemester student feedback and responding to it. New Directions for Teaching and Learning 2001, 87 (2001), 33–44.

- [32] Herbert W Marsh. 2007. Students’ evaluations of university teaching: Dimensionality, reliability, validity, potential biases and usefulness. The Scholarship of Teaching and Learning in Higher Education: An Evidence-Based Perspective (2007), 319–383.

- [33] Herbert W Marsh and Lawrence Roche. 1993. The use of students’ evaluations and an individually structured intervention to enhance university teaching effectiveness. American Educational Research Journal 30, 1 (1993), 217–251.

- [34] Herbert W Marsh and Lawrence A Roche. 1997. Making students’ evaluations of teaching effectiveness effective: The critical issues of validity, bias, and utility. American Psychologist 52, 11 (1997), 1187.

- [35] Kathleen E McKone. 1999. Analysis of student feedback improves instructor effectiveness. Journal of Management Education 23, 4 (1999), 396–415.

- [36] Milbrey Wallin McLaughlin and R Scott Pfeifer. 1988. Teacher Evaluation: Improvement, Accountability, and Effective Learning. Teachers College Press.

- [37] James Monks and Robert M Schmidt. 2011. The Impact of Class Size on Outcomes in Higher Education. The BE Journal of Economic Analysis & Policy 11, 1 (2011).

- [38] Catherine Mulryan-Kyne. 2010. Teaching large classes at college and university level: Challenges and opportunities. Teaching in Higher Education 15, 2 (2010), 175–185.

- [39] Harry G Murray. 1997. Does evaluation of teaching lead to improvement of teaching? The International Journal for Academic Development 2, 1 (1997), 8–23.

- [40] Kasturi Narasimhan. 2001. Improving the climate of teaching sessions: the use of evaluations by students and instructors. Quality in Higher Education 7, 3 (2001), 179–190.

A Large-Scale Observational Study on Obtaining Lightweight, Randomized Weekly Student Feedback. L@S ’26, June 29–July 3, 2026, Seoul, Republic of Korea

- [41] JU Overall and Herbert W Marsh. 1979. Midterm feedback from students: Its relationship to instructional improvement and students’ cognitive and affective outcomes. Journal of Educational Psychology 71, 6 (1979), 856.

- [42] Angela R Penny. 2003. Changing the agenda for research into students’ views about university teaching: Four shortcomings of SRT research. Teaching in Higher Education 8, 3 (2003), 399–411.

- [43] Angela R Penny and Robert Coe. 2004. Effectiveness of consultation on student ratings feedback: A meta-analysis. Review of Educational Research 74, 2 (2004), 215–253.

- [44] Stephen R Porter, Michael E Whitcomb, and William H Weitzer. 2004. Multiple surveys of students and survey fatigue. New Directions for Institutional Research 2004, 121 (2004), 63–73.

- [45] William J Read, Dasaratha V Rama, and K Raghunandan. 2001. The relationship between student evaluations of teaching and faculty evaluations. Journal of Education for Business 76, 4 (2001), 189–192.

- [46] Richard Remedios and David A Lieberman. 2008. I liked your course because you taught me well: The influence of grades, workload, expectations and goals on students’ evaluations of teaching. British Educational Research Journal 34, 1 (2008), 91–115.

- [47] John TE Richardson. 2005. Instruments for obtaining student feedback: A review of the literature. Assessment & Evaluation in Higher Education 30, 4 (2005), 387–415.

- [48] Liora Pedhazur Schmelkin, Karin J Spencer, and Estelle S Gellman. 1997. Faculty perspectives on course and teacher evaluations. Research in Higher Education 38 (1997), 575–592.

- [49] Ronald D Simpson. 1995. Uses and misuses of student evaluations of teaching effectiveness. Innovative Higher Education 20, 1 (1995), 3–5.

- [50] Karin J Spencer and Liora Pedhazur Schmelkin. 2002. Student perspectives on teaching and its evaluation. Assessment & Evaluation in Higher Education 27, 5 (2002), 397–409.

- [51] Pieter Spooren, Bert Brockx, and Dimitri Mortelmans. 2013. On the validity of student evaluation of teaching: The state of the art. Review of Educational Research 83, 4 (2013), 598–642.

- [52] Marilla D Svinicki. 2001. Encouraging your students to give feedback. New Directions for Teaching and Learning 2001, 87 (2001), 17–24.

- [53] Ann Veeck, Kelley O’Reilly, Amy MacMillan, and Hongyan Yu. 2016. The use of collaborative midterm student evaluations to provide actionable results. Journal of Marketing Education 38, 3 (2016), 157–169.

- [54] Maxwell K Winchester and Tiffany M Winchester. 2012. If you build it will they come?; Exploring the student perspective of weekly student evaluations of teaching. Assessment & Evaluation in Higher Education 37, 6 (2012), 671–682.
