
Performance and Energy Benefits of MRDIMMs

Pith reviewed 2026-05-08 02:49 UTC · model grok-4.3

The pith

MRDIMMs increase memory bandwidth by 41% and provide up to 30% energy savings for memory-bound server workloads.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Multiplexed Rank DIMMs (MRDIMMs) enable higher bandwidth than conventional registered DIMMs (RDIMMs) without increasing DRAM chip frequencies. In a production server, the upgrade increases bandwidth by 41%, which delivers 27-41% higher performance for bandwidth-bound workloads and hundreds of nanoseconds lower latency for latency-sensitive ones. At equal bandwidth utilization, power consumption is comparable, but the performance gains in the extended bandwidth region outweigh the power increase, yielding up to 30% server energy savings for memory-bound workloads.
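The energy argument reduces to energy = power × time. The sketch below assumes a fixed amount of work per run and uses illustrative inputs rather than the paper's measurements. It shows how a speedup can outweigh a power increase. The helper names are hypothetical.

```python
def energy_ratio(power_ratio: float, speedup: float) -> float:
    """Return E_MRDIMM / E_RDIMM for a fixed amount of work.

    power_ratio: average server power with MRDIMMs divided by power with RDIMMs.
    speedup: RDIMM runtime divided by MRDIMM runtime (1.41 means 41% faster).
    """
    if power_ratio <= 0 or speedup <= 0:
        raise ValueError("power_ratio and speedup must be positive")
    # Energy = power * time, and runtime scales as 1 / speedup.
    return power_ratio / speedup


def max_power_ratio_for_savings(speedup: float, savings: float) -> float:
    """Largest power ratio that still yields the requested fractional energy savings."""
    return (1.0 - savings) * speedup


speedup = 1.41  # illustrative: 41% faster, matching the bandwidth uplift
for power_ratio in (0.95, 1.00, 1.10):
    saving = 1.0 - energy_ratio(power_ratio, speedup)
    print(f"power x{power_ratio:.2f} -> energy saving {saving:.1%}")
print(f"max power ratio for a 30% saving: {max_power_ratio_for_savings(speedup, 0.30):.3f}")
```

With these inputs, a 41% speedup at unchanged power saves about 29% of the energy (1 - 1/1.41). A 10% power increase still leaves a saving of about 22%.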

What carries the argument

MRDIMMs that multiplex ranks on each DIMM to raise effective bandwidth without raising DRAM frequency or chip count.
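A back-of-the-envelope view of rank multiplexing follows. The DIMM's data buffers interleave bursts from two ranks, so the host-side data rate is twice the rate each DRAM chip runs at. The speed grades, channel count and 64-bit data path are assumptions: DDR5-6400 RDIMMs versus MRDIMM-8800 built from DRAM at 4400 MT/s, with 12 channels. None of these values appears in the abstract.

```python
# Hypothetical configuration values; not the paper's measured setup.
BYTES_PER_TRANSFER = 8  # 64-bit DDR5 data path per channel, ECC bits excluded
CHANNELS = 12           # assumed memory channels per socket


def host_rate_mts(dram_rate_mts: int, ranks_multiplexed: int) -> int:
    """Host-side transfer rate when the data buffers interleave several ranks.

    Each rank runs at dram_rate_mts; the buffer forwards their bursts in
    alternation, so the host-side rate is the sum of the rank rates.
    """
    return dram_rate_mts * ranks_multiplexed


def peak_bandwidth_gbs(rate_mts: int, channels: int) -> float:
    """Theoretical peak bandwidth in GB/s (10^9 bytes/s) across all channels."""
    return rate_mts * 1e6 * BYTES_PER_TRANSFER * channels / 1e9


rdimm = peak_bandwidth_gbs(6400, CHANNELS)                     # DDR5-6400 RDIMM
mrdimm = peak_bandwidth_gbs(host_rate_mts(4400, 2), CHANNELS)  # MRDIMM-8800

print(f"RDIMM  peak: {rdimm:.1f} GB/s")
print(f"MRDIMM peak: {mrdimm:.1f} GB/s")
print(f"theoretical uplift: {mrdimm / rdimm - 1:.1%}")
```

Under these assumed speeds, the theoretical peak ratio is 37.5%. The paper's 41% is a measured figure. It can differ from the theoretical ratio because the two DIMM types need not sustain the same fraction of their peaks.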

If this is right

- Bandwidth-bound workloads see performance gains of 27-41%.

- Latency-sensitive workloads benefit from memory access time reductions of hundreds of nanoseconds.

- Server energy consumption falls by as much as 30% for memory-bound applications.

- Power draw remains similar to RDIMMs at identical bandwidth utilization levels.
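The roofline model is a standard analytic model, not one the review attributes to the paper. It offers one way to see why bandwidth-bound gains top out near the bandwidth uplift, while less memory-bound code gains less. The machine numbers below are hypothetical.

```python
def attainable_gflops(peak_gflops: float, bandwidth_gbs: float, intensity: float) -> float:
    """Roofline: performance is capped by the compute peak or by memory traffic.

    intensity is arithmetic intensity in FLOP per byte of DRAM traffic.
    """
    return min(peak_gflops, bandwidth_gbs * intensity)


def roofline_speedup(peak_gflops: float, bw_old_gbs: float, bw_new_gbs: float,
                     intensity: float) -> float:
    """Speedup predicted by the roofline when only memory bandwidth changes."""
    old = attainable_gflops(peak_gflops, bw_old_gbs, intensity)
    new = attainable_gflops(peak_gflops, bw_new_gbs, intensity)
    return new / old


# Hypothetical machine: 8000 GFLOP/s compute peak, 600 -> 846 GB/s (+41%).
PEAK, BW_OLD, BW_NEW = 8000.0, 600.0, 846.0
for ai in (0.25, 2.0, 10.0, 20.0):
    print(f"AI={ai:>5} FLOP/B  speedup={roofline_speedup(PEAK, BW_OLD, BW_NEW, ai):.3f}")
```

Kernels with low arithmetic intensity see the full 1.41× speedup. Kernels near the ridge point see a partial gain, and compute-bound kernels see none.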

Where Pith is reading between the lines

- Data-center operators could treat MRDIMMs as a straightforward upgrade path to raise throughput while lowering energy per operation.

- Memory vendors might extend rank multiplexing to future DIMM generations to scale bandwidth without proportional frequency increases.

- Testing MRDIMMs under cloud-scale mixed workloads would reveal whether the energy savings persist when compute and memory phases interact.

- System architects could model total cost of ownership improvements by combining the measured performance and energy numbers with real server utilization traces.

Load-bearing premise

The tested workloads and server configuration represent typical production environments so that the observed bandwidth, performance, and energy differences generalize beyond the measured points.

What would settle it

Running the same benchmarks on a different server model or with a broader set of production-like workloads and checking whether the 41% bandwidth increase and up to 30% energy savings still hold.

Figures

read the original abstract

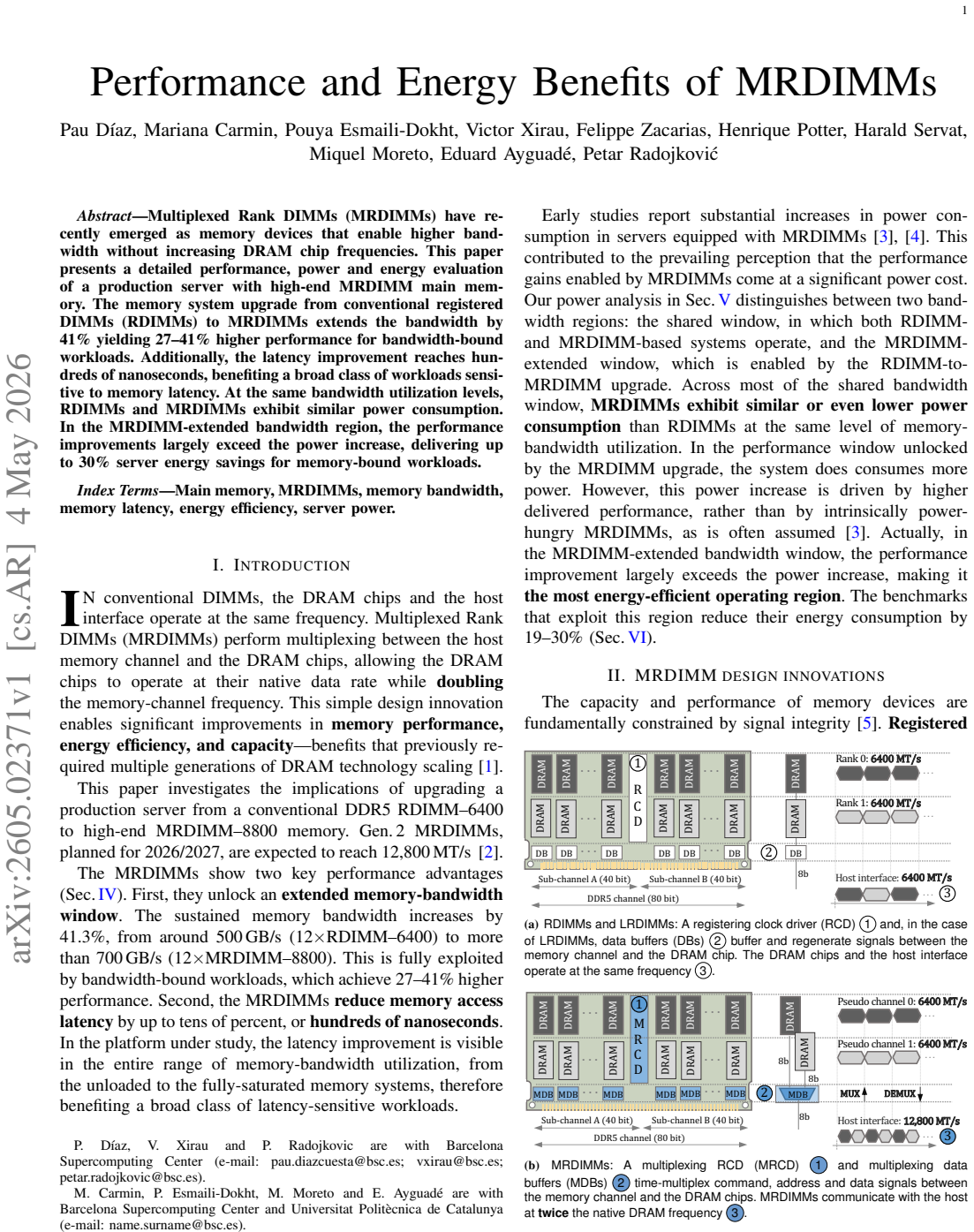

Multiplexed Rank DIMMs (MRDIMMs) have recently emerged as memory devices that enable higher bandwidth without increasing DRAM chip frequencies. This paper presents a detailed performance, power and energy evaluation of a production server with high-end MRDIMM main memory. The memory system upgrade from conventional registered DIMMs (RDIMMs) to MRDIMMs extends the bandwidth by 41%, yielding 27-41% higher performance for bandwidth-bound workloads. Additionally, the latency improvement reaches hundreds of nanoseconds, benefiting a broad class of workloads sensitive to memory latency. At the same bandwidth utilization levels, RDIMMs and MRDIMMs exhibit similar power consumption. In the MRDIMM-extended bandwidth region, the performance improvements largely exceed the power increase, delivering up to 30% server energy savings for memory-bound workloads.
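One plausible mechanism for latency gains of hundreds of nanoseconds, which the abstract does not spell out, is queueing. At a given bandwidth demand, a higher peak keeps the memory system further from saturation. The toy M/M/1 model below is an illustration only: real memory controllers do not behave as M/M/1 queues. It assumes equal idle latency for both DIMM types and uses hypothetical parameters and peaks.

```python
def loaded_latency_ns(demand_gbs: float, peak_gbs: float,
                      idle_ns: float = 120.0, service_ns: float = 20.0) -> float:
    """Toy M/M/1 estimate: idle latency plus mean queueing delay.

    rho = demand / peak; the M/M/1 mean wait is service_ns * rho / (1 - rho).
    idle_ns and service_ns are hypothetical, not measured values.
    """
    rho = demand_gbs / peak_gbs
    if not 0.0 <= rho < 1.0:
        raise ValueError("demand must be non-negative and below peak bandwidth")
    return idle_ns + service_ns * rho / (1.0 - rho)


RDIMM_PEAK, MRDIMM_PEAK = 614.4, 844.8  # hypothetical peaks in GB/s
for demand in (200.0, 400.0, 550.0, 600.0):
    r = loaded_latency_ns(demand, RDIMM_PEAK)
    m = loaded_latency_ns(demand, MRDIMM_PEAK)
    print(f"{demand:5.0f} GB/s: RDIMM {r:6.1f} ns  MRDIMM {m:6.1f} ns  gap {r - m:6.1f} ns")
```

The gap is small at light load. It grows sharply as demand approaches the lower (RDIMM) peak, which is where latency-sensitive workloads would see the largest difference.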

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper evaluates MRDIMMs versus conventional RDIMMs on a production high-end server, reporting that MRDIMMs deliver a 41% bandwidth increase that yields 27-41% higher performance on bandwidth-bound workloads, hundreds of nanoseconds of latency reduction, comparable power draw at matched utilization, and up to 30% server energy savings for memory-bound workloads because performance gains outpace any power increase in the extended bandwidth region.

Significance. If the measured gains prove robust and generalizable, the work supplies concrete empirical data on a commercially relevant memory technology that avoids raising DRAM frequencies. The use of direct server-level measurements rather than simulation or modeling is a strength, as is the joint reporting of bandwidth, performance, latency, power, and energy metrics. These results could inform memory-system design choices for bandwidth-sensitive server workloads.

major comments (3)

- [Evaluation section] The manuscript reports quantitative outcomes (41% bandwidth, 27-41% performance, 30% energy) from a single server configuration and a limited set of bandwidth-bound workloads, but provides no workload selection criteria, no miss-rate or access-granularity statistics, and no raw data or error bars. Without these, it is impossible to determine whether the cited gains are representative or sensitive to post-hoc workload choice. This directly affects the central claim that the benefits extend to production memory-bound workloads.

- [Power and energy analysis] The statement that RDIMMs and MRDIMMs exhibit similar power at equal bandwidth utilization levels does not specify how utilization is measured or normalized given MRDIMM multiplexing. Without this definition, the claim that performance improvements exceed power increases (and therefore produce 30% energy savings) rests on an unverified assumption that is load-bearing for the energy result.

- [Results presentation] No statistical significance tests, confidence intervals, or repeated-run data accompany the reported percentages. The 41% bandwidth and 27-41% performance figures are therefore presented without evidence that they exceed measurement variability, which weakens the quantitative claims.
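The first and third major comments ask for error bars from repeated runs. A minimal sketch of a 95% confidence interval for mean runtime follows. It uses tabulated Student-t critical values and purely hypothetical runtimes; the helper name is illustrative.

```python
import math
import statistics

# Two-sided 95% Student-t critical values, keyed by degrees of freedom.
T_CRIT_95 = {1: 12.706, 2: 4.303, 3: 3.182, 4: 2.776, 5: 2.571,
             6: 2.447, 7: 2.365, 8: 2.306, 9: 2.262}


def mean_ci95(samples: list[float]) -> tuple[float, float, float]:
    """Mean and 95% confidence interval from independent repeated runs."""
    n = len(samples)
    if n - 1 not in T_CRIT_95:
        raise ValueError("this sketch supports 2 to 10 runs")
    mean = statistics.mean(samples)
    half_width = T_CRIT_95[n - 1] * statistics.stdev(samples) / math.sqrt(n)
    return mean, mean - half_width, mean + half_width


# Hypothetical runtimes (seconds) of one workload, five runs per configuration.
rdimm_runs = [10.2, 10.4, 10.1, 10.3, 10.2]
mrdimm_runs = [7.4, 7.5, 7.3, 7.6, 7.4]

r_mean, r_lo, r_hi = mean_ci95(rdimm_runs)
m_mean, m_lo, m_hi = mean_ci95(mrdimm_runs)
print(f"RDIMM  {r_mean:.2f} s  95% CI [{r_lo:.2f}, {r_hi:.2f}]")
print(f"MRDIMM {m_mean:.2f} s  95% CI [{m_lo:.2f}, {m_hi:.2f}]")
print("intervals disjoint:", m_hi < r_lo)
```

Disjoint intervals are a conservative check. Overlapping intervals do not by themselves show that a difference is insignificant.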

minor comments (2)

- [Abstract and introduction] The abstract and introduction could more explicitly state the exact server model, core count, and memory configuration used, allowing readers to assess representativeness without searching the evaluation section.

- [Figures] Figure captions and axis labels should clarify whether bandwidth utilization is normalized to the MRDIMM or RDIMM peak, to avoid ambiguity when comparing the two configurations.
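The ambiguity raised in the figure comment is easy to see numerically. The same absolute bandwidth maps to different utilization values depending on which peak is the denominator. Points above the RDIMM peak exist only for MRDIMMs. The peak values below are hypothetical placeholders, not measured sustained peaks.

```python
# Hypothetical peak bandwidths in GB/s; a real plot would use measured sustained peaks.
RDIMM_PEAK_GBS = 614.4
MRDIMM_PEAK_GBS = 844.8


def utilization_views(bandwidth_gbs: float) -> tuple[float, float, str]:
    """Return utilization against each peak and the region the point falls in."""
    u_rdimm = bandwidth_gbs / RDIMM_PEAK_GBS
    u_mrdimm = bandwidth_gbs / MRDIMM_PEAK_GBS
    # Points above the RDIMM peak are reachable only with MRDIMMs.
    region = "MRDIMM-extended" if u_rdimm > 1.0 else "shared"
    return u_rdimm, u_mrdimm, region


for bw in (300.0, 500.0, 700.0):
    u_r, u_m, region = utilization_views(bw)
    print(f"{bw:5.0f} GB/s: {u_r:6.1%} of RDIMM peak, {u_m:6.1%} of MRDIMM peak ({region})")
```

A plot against absolute bandwidth shows a shared region plus an MRDIMM-only extension. A plot normalized to each configuration's own peak places both curves on the same 0-100% axis. Captions therefore need to say which convention is used.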

Simulated Author's Rebuttal

We thank the referee for the constructive feedback and for recognizing the value of our direct server-level measurements. We address each major comment point by point below and indicate where revisions will be made.

Point-by-point responses

- Referee: [Evaluation section] The manuscript reports quantitative outcomes (41% bandwidth, 27-41% performance, 30% energy) from a single server configuration and a limited set of bandwidth-bound workloads, but provides no workload selection criteria, no miss-rate or access-granularity statistics, and no raw data or error bars. Without these, it is impossible to determine whether the cited gains are representative or sensitive to post-hoc workload choice. This directly affects the central claim that the benefits extend to production memory-bound workloads.

Authors: We agree that explicit workload selection criteria and supporting statistics would improve transparency. In the revised manuscript, we will add a dedicated subsection describing the criteria used to classify workloads as bandwidth-bound (high sustained memory bandwidth utilization observed via hardware counters). We will also report available miss-rate and access-granularity statistics from our profiling runs and augment the figures with error bars derived from repeated measurements. Full raw datasets cannot be released because of confidentiality agreements with the server vendor, but the added statistics and error bars will allow readers to assess representativeness. Revision: partial.

- Referee: [Power and energy analysis] The statement that RDIMMs and MRDIMMs exhibit similar power at equal bandwidth utilization levels does not specify how utilization is measured or normalized given MRDIMM multiplexing. Without this definition, the claim that performance improvements exceed power increases (and therefore produce 30% energy savings) rests on an unverified assumption that is load-bearing for the energy result.

Authors: We acknowledge that the normalization method must be stated explicitly. The revised manuscript will define bandwidth utilization as the ratio of measured workload bandwidth to the peak sustainable bandwidth of the installed DIMM configuration. MRDIMM multiplexing is accounted for by using the extended peak bandwidth value when computing utilization for MRDIMMs. This ensures that comparisons occur at equivalent relative load points. The power and energy sections will be updated with this definition and supporting measurements. Revision: yes.

- Referee: [Results presentation] No statistical significance tests, confidence intervals, or repeated-run data accompany the reported percentages. The 41% bandwidth and 27-41% performance figures are therefore presented without evidence that they exceed measurement variability, which weakens the quantitative claims.

Authors: We agree that statistical support is necessary. The revised version will include confidence intervals calculated from multiple independent runs for all key metrics. It will also report the results of appropriate statistical tests (e.g., paired t-tests) confirming that the observed improvements exceed measurement variability at conventional significance levels. Revision: yes.
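The rebuttal mentions paired t-tests without saying what is paired. One option, sketched below under that assumption, pairs the two configurations by workload. The test runs on log speedups, so workloads with different absolute runtimes contribute comparably. The runtimes are hypothetical, and the critical-value table covers up to 10 workloads.

```python
import math
import statistics

# Two-sided 95% Student-t critical values, keyed by degrees of freedom.
T_CRIT_95 = {1: 12.706, 2: 4.303, 3: 3.182, 4: 2.776, 5: 2.571,
             6: 2.447, 7: 2.365, 8: 2.306, 9: 2.262}


def paired_t_log_ratio(rdimm_s: list[float], mrdimm_s: list[float]) -> tuple[float, int]:
    """Paired t statistic on per-workload log speedups (RDIMM time / MRDIMM time)."""
    if len(rdimm_s) != len(mrdimm_s) or len(rdimm_s) < 2:
        raise ValueError("need two equal-length lists with at least two entries")
    diffs = [math.log(r / m) for r, m in zip(rdimm_s, mrdimm_s)]
    sd = statistics.stdev(diffs)
    if sd == 0.0:
        raise ValueError("identical differences: t statistic undefined")
    t = statistics.mean(diffs) / (sd / math.sqrt(len(diffs)))
    return t, len(diffs) - 1


# Hypothetical mean runtimes (seconds) for six workloads on each configuration.
rdimm_times = [12.0, 8.5, 20.1, 5.3, 9.8, 15.2]
mrdimm_times = [9.0, 6.4, 14.5, 4.1, 7.6, 11.3]

t_stat, dof = paired_t_log_ratio(rdimm_times, mrdimm_times)
critical = T_CRIT_95[dof]
print(f"t = {t_stat:.2f} with {dof} dof; two-sided 5% critical value {critical}")
print("difference significant at the 5% level:", abs(t_stat) > critical)
```

Pairing by repeated run of the same workload would use the same function, with one entry per run instead of per workload.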

Unresolved after rebuttal

- Release of complete raw experimental datasets from the production server, which vendor confidentiality constraints prevent.

Circularity Check

No circularity: direct empirical measurements only

Full rationale

The paper reports benchmark results from a production server comparing RDIMMs and MRDIMMs. All central claims (41% bandwidth uplift, 27-41% performance gains, hundreds of ns latency reduction, up to 30% energy savings) are stated as observed outcomes from direct measurement of specific workloads and configurations. No equations, fitted models, predictions derived from parameters, or self-citation chains appear in the derivation chain. The evaluation rests on external benchmarks and does not reduce any result to its own inputs by construction.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: the selected bandwidth-bound and latency-sensitive workloads are representative of production server usage.
