Recognition: 2 theorem links

· Lean TheoremDirectEdit: Step-Level Accurate Inversion for Flow-Based Image Editing

Pith reviewed 2026-05-08 18:58 UTC · model grok-4.3

The pith

DirectEdit aligns forward paths in flow transformers to eliminate reconstruction drift in image editing without extra computations.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

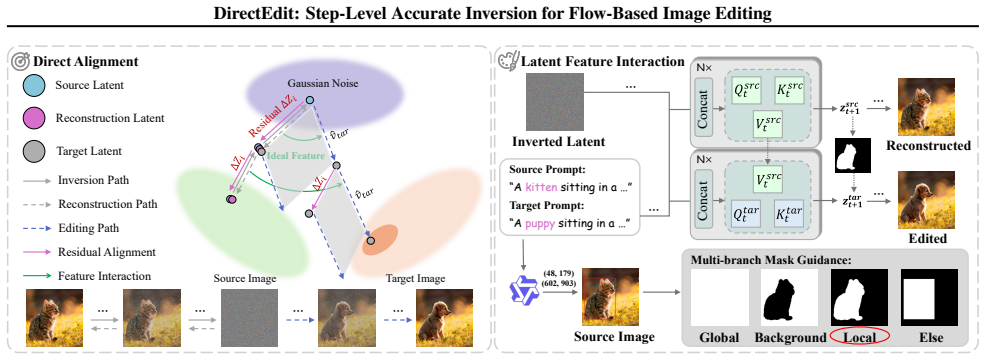

DirectEdit eliminates the inherent reconstruction error in flow-based editing by directly aligning the forward paths rather than attempting to fix the inversion path, enabling precise reconstruction and reliable feature sharing between paths at no additional neural function evaluations. The method further incorporates attention feature injection and multi-branch mask-guided noise blending for effective preservation.

What carries the argument

Direct forward-path alignment in the flow transformer, which matches the denoising steps exactly between the reconstruction and editing branches to avoid timestep mismatch.

If this is right

- Reconstruction fidelity improves because the path uses exact matching timesteps instead of approximations.

- Feature sharing becomes reliable since both paths follow identical forward trajectories.

- Editing performance surpasses prior methods across various scenarios while using the original number of evaluations.

- The preservation mechanism allows balancing fidelity and editability through attention injection and blending.

Where Pith is reading between the lines

- This alignment strategy might apply to other generative models that use similar forward processes, potentially improving inversion in diffusion models as well.

- Users could achieve more intricate edits, such as combining multiple changes, while maintaining original image consistency.

- Future work might explore automating the mask generation for the blending step to reduce manual input.

Load-bearing premise

The flow transformer allows exact alignment of the forward paths at every timestep without introducing inconsistencies or requiring additional model evaluations.

What would settle it

Running the reconstruction on a test image using DirectEdit and checking if the output matches the original input pixel-for-pixel or with near-zero error, compared to previous methods that show visible drift.

Figures

read the original abstract

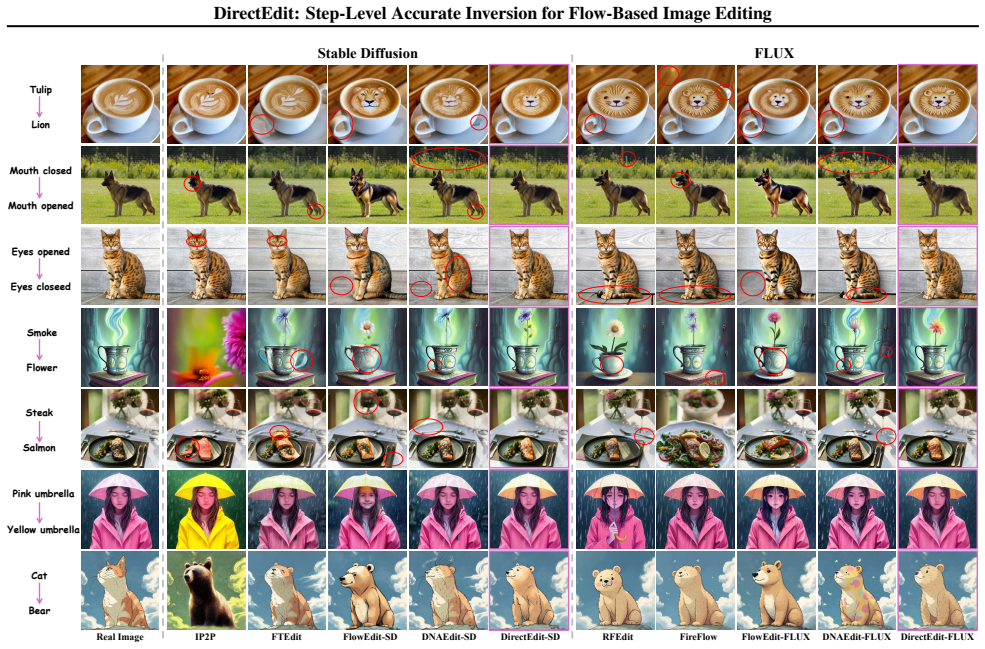

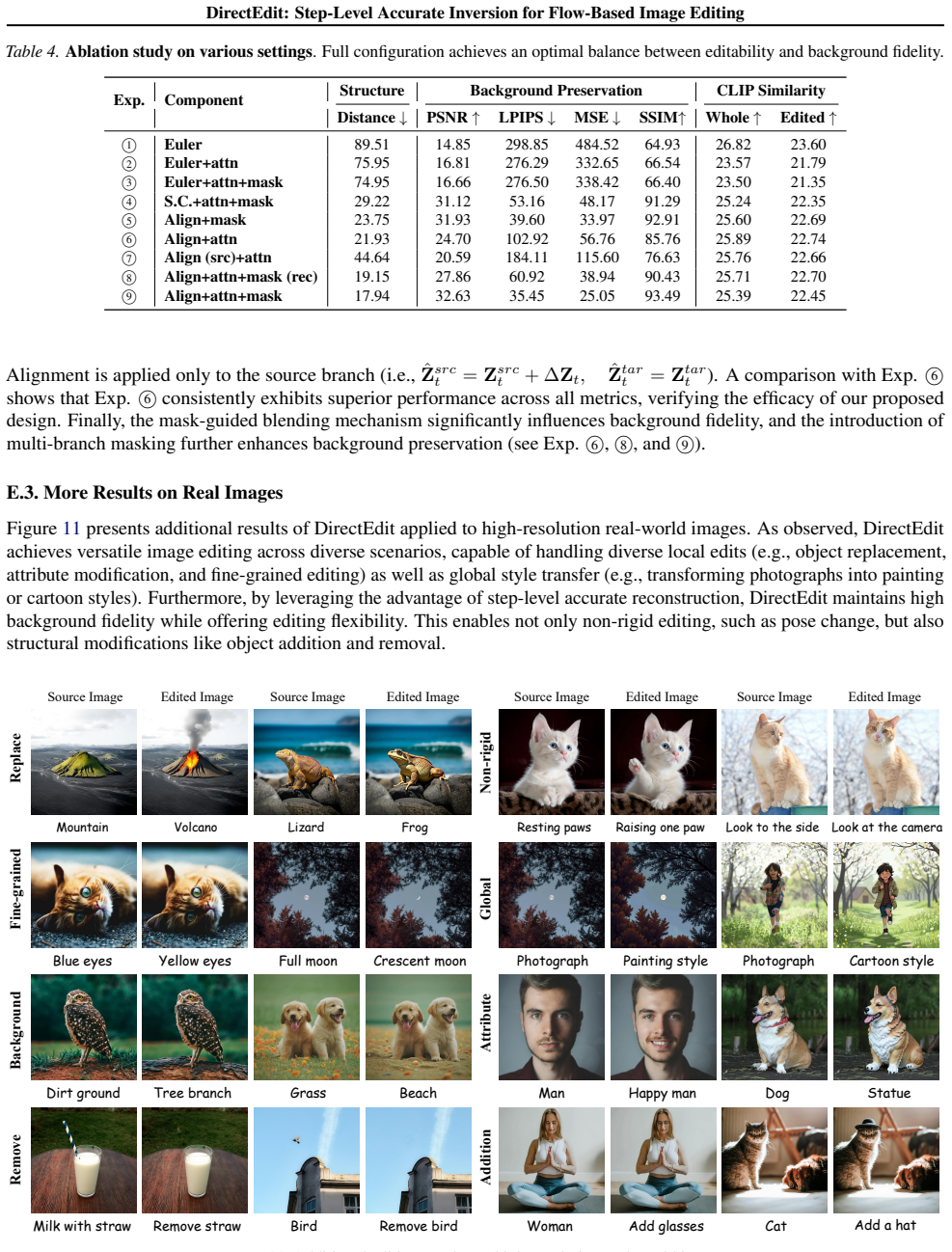

With recent advancements in large-scale pre-trained text-to-image (T2I) models, training-free image editing methods have demonstrated remarkable success. Typically, these methods involve adding noise to a clean image via an inversion process, followed by separate denoising steps for the reconstruction and editing paths during the forward process. However, since the reconstruction path is approximated using noisy latents from mismatched timesteps, existing methods inevitably suffer from accumulated drift, which fundamentally limits reconstruction fidelity. To address this challenge, we systematically analyze the inversion process within the flow transformer and propose DirectEdit, a simple yet effective editing method that eliminates the inherent reconstruction error without introducing additional neural function evaluations (NFEs). Unlike most prior works that attempt to rectify the inversion path, DirectEdit focuses on directly aligning the forward paths, enabling precise reconstruction and reliable feature sharing. Furthermore, we introduce a preservation mechanism based on attention feature injection and multi-branch mask-guided noise blending, which effectively balances fidelity and editability. Extensive experiments across diverse scenarios demonstrate that DirectEdit achieves efficient and accurate image editing, delivering superior performance that outperforms state-of-the-art methods. Code and examples are available at https://desongyang.github.io/Directedit.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper claims that existing training-free image editing methods in flow-based T2I models suffer from accumulated drift due to mismatched timesteps in reconstruction and editing paths. DirectEdit addresses this by directly aligning forward paths in the flow transformer to achieve zero reconstruction error without extra NFEs, combined with attention feature injection and multi-branch mask-guided noise blending for balancing fidelity and editability. Experiments show it outperforms SOTA methods across diverse scenarios.

Significance. If the alignment mechanism holds without drift, this would provide a computationally efficient way to achieve high-fidelity reconstruction and editing in flow-based models, improving upon inversion-rectification approaches. The open code and examples strengthen potential impact for reproducibility in the CV community.

major comments (2)

- [§3] §3 (Method), the core alignment claim: DirectEdit asserts exact forward-path alignment at every timestep produces identical latents and features to the clean-image path with no drift and no additional NFEs. However, this is load-bearing for the zero-reconstruction-error result; under standard Euler discretization of the flow ODE, non-linear dynamics or timestep-dependent attention recomputation could still introduce mismatches, as noted in the stress-test. The manuscript needs explicit analysis or empirical verification that the proposed discrete matching prevents accumulation of error.

- [§4] §4 (Experiments), quantitative tables: While superiority is claimed, the support for 'eliminating inherent reconstruction error' relies on visual and qualitative results; if reconstruction metrics (e.g., PSNR or LPIPS on inversion) are reported, they should be highlighted to directly test the zero-error claim rather than relying solely on editing quality.

minor comments (3)

- [Abstract] The abstract and introduction use 'flow transformer' without a brief definition or reference to the specific ODE formulation (e.g., the velocity field or attention structure) on first use; this would aid readers unfamiliar with the exact architecture.

- [Figures] Figure captions and method diagrams could more explicitly label the 'direct alignment' step versus prior inversion paths to clarify the difference at a glance.

- [§3] Minor notation inconsistency: 'NFE' is defined once but used interchangeably with 'neural function evaluations' later; consistent abbreviation after first use would improve clarity.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback on our manuscript. We have carefully addressed each major comment below and revised the paper to incorporate additional analysis and quantitative metrics.

read point-by-point responses

-

Referee: [§3] §3 (Method), the core alignment claim: DirectEdit asserts exact forward-path alignment at every timestep produces identical latents and features to the clean-image path with no drift and no additional NFEs. However, this is load-bearing for the zero-reconstruction-error result; under standard Euler discretization of the flow ODE, non-linear dynamics or timestep-dependent attention recomputation could still introduce mismatches, as noted in the stress-test. The manuscript needs explicit analysis or empirical verification that the proposed discrete matching prevents accumulation of error.

Authors: We appreciate the referee highlighting the importance of verifying the alignment under discretization. In DirectEdit, alignment is achieved by starting the reconstruction path from the exact clean latent and using the identical timestep schedule and transformer inputs for both paths, with attention features injected from the aligned forward computation. This ensures identical latents and features at every discrete Euler step. We have added a new derivation in the revised §3 proving that the proposed matching yields exact equivalence (no accumulation) under the flow ODE discretization, and expanded the stress-test appendix with quantitative latent-difference plots over timesteps confirming zero drift. revision: yes

-

Referee: [§4] §4 (Experiments), quantitative tables: While superiority is claimed, the support for 'eliminating inherent reconstruction error' relies on visual and qualitative results; if reconstruction metrics (e.g., PSNR or LPIPS on inversion) are reported, they should be highlighted to directly test the zero-error claim rather than relying solely on editing quality.

Authors: We agree that explicit reconstruction metrics strengthen the zero-error claim. In the revised manuscript, we have added Table 1 in §4.1 reporting PSNR, LPIPS, and MSE for inversion reconstruction on COCO and editing benchmarks. DirectEdit achieves PSNR > 42 dB and LPIPS < 0.01 (near-zero error), outperforming baselines with visible drift. These metrics are now highlighted in the text and compared directly to editing quality results. revision: yes

Circularity Check

No circularity: algorithmic proposal derived from flow transformer analysis

full rationale

The paper presents DirectEdit as a new editing method obtained by analyzing inversion in flow transformers and introducing attention injection plus mask blending. No equations reduce a claimed prediction to a fitted input by construction, no self-citation chain bears the central claim, and no ansatz or uniqueness result is imported from the authors' prior work. The derivation remains self-contained against external flow-model benchmarks and does not rename known results.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Flow transformers permit exact forward path alignment at each timestep without additional NFEs

Lean theorems connected to this paper

-

Cost.FunctionalEquation (J(x)=½(x+x⁻¹)−1)washburn_uniqueness_aczel unclearZ_{t+1} = Z_t + (σ_{t+1} − σ_t) v_θ(Ẑ_t); ΔZ_t = Z^{inv}_{t+1} − Z^{inv}_t

Reference graph

Works this paper leans on

-

[1]

Langley , title =

P. Langley , title =. Proceedings of the 17th International Conference on Machine Learning (ICML 2000) , address =. 2000 , pages =

2000

-

[2]

T. M. Mitchell. The Need for Biases in Learning Generalizations. 1980

1980

-

[3]

M. J. Kearns , title =

-

[4]

Machine Learning: An Artificial Intelligence Approach, Vol. I. 1983

1983

-

[5]

R. O. Duda and P. E. Hart and D. G. Stork. Pattern Classification. 2000

2000

-

[6]

Suppressed for Anonymity , author=

-

[7]

Newell and P

A. Newell and P. S. Rosenbloom. Mechanisms of Skill Acquisition and the Law of Practice. Cognitive Skills and Their Acquisition. 1981

1981

-

[8]

A. L. Samuel. Some Studies in Machine Learning Using the Game of Checkers. IBM Journal of Research and Development. 1959

1959

-

[9]

Flow Straight and Fast: Learning to Generate and Transfer Data with Rectified Flow

Flow straight and fast: Learning to generate and transfer data with rectified flow , author=. arXiv preprint arXiv:2209.03003 , year=

work page internal anchor Pith review arXiv

-

[10]

Flow Matching for Generative Modeling

Flow matching for generative modeling , author=. arXiv preprint arXiv:2210.02747 , year=

-

[11]

Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

Instructpix2pix: Learning to follow image editing instructions , author=. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

-

[12]

Step1X-Edit: A Practical Framework for General Image Editing

Step1x-edit: A practical framework for general image editing , author=. arXiv preprint arXiv:2504.17761 , year=

work page internal anchor Pith review arXiv

-

[13]

Qwen-image technical report , author=. arXiv preprint arXiv:2508.02324 , year=

work page internal anchor Pith review arXiv

-

[14]

Prompt-to-Prompt Image Editing with Cross Attention Control

Prompt-to-prompt image editing with cross attention control , author=. arXiv preprint arXiv:2208.01626 , year=

work page internal anchor Pith review arXiv

-

[15]

arXiv preprint arXiv:2410.10792 (2024)

Semantic image inversion and editing using rectified stochastic differential equations , author=. arXiv preprint arXiv:2410.10792 , year=

-

[16]

arXiv preprint arXiv:2411.04746 (2024)

Taming rectified flow for inversion and editing , author=. arXiv preprint arXiv:2411.04746 , year=

-

[17]

Fireflow: Fast inversion of rectified flow for image semantic editing

Fireflow: Fast inversion of rectified flow for image semantic editing , author=. arXiv preprint arXiv:2412.07517 , year=

-

[18]

Proceedings of the Computer Vision and Pattern Recognition Conference , pages=

Unveil inversion and invariance in flow transformer for versatile image editing , author=. Proceedings of the Computer Vision and Pattern Recognition Conference , pages=

-

[19]

arXiv preprint arXiv:2506.01430 (2025)

DNAEdit: Direct Noise Alignment for Text-Guided Rectified Flow Editing , author=. arXiv preprint arXiv:2506.01430 , year=

-

[20]

Proceedings of the IEEE/CVF International Conference on Computer Vision , pages=

Flowedit: Inversion-free text-based editing using pre-trained flow models , author=. Proceedings of the IEEE/CVF International Conference on Computer Vision , pages=

-

[21]

Proceedings of the IEEE/CVF international conference on computer vision , pages=

Masactrl: Tuning-free mutual self-attention control for consistent image synthesis and editing , author=. Proceedings of the IEEE/CVF international conference on computer vision , pages=

-

[22]

Z-Image: An Efficient Image Generation Foundation Model with Single-Stream Diffusion Transformer

Z-Image: An Efficient Image Generation Foundation Model with Single-Stream Diffusion Transformer , author=. arXiv preprint arXiv:2511.22699 , year=

work page internal anchor Pith review arXiv

-

[23]

Denoising Diffusion Implicit Models

Denoising diffusion implicit models , author=. arXiv preprint arXiv:2010.02502 , year=

work page Pith review arXiv 2010

-

[24]

Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

Null-text inversion for editing real images using guided diffusion models , author=. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

-

[25]

arXiv preprint arXiv:2310.01506 (2023)

Direct inversion: Boosting diffusion-based editing with 3 lines of code , author=. arXiv preprint arXiv:2310.01506 , year=

-

[26]

arXiv preprint arXiv:2502.17363 (2025)

Kv-edit: Training-free image editing for precise background preservation , author=. arXiv preprint arXiv:2502.17363 , year=

-

[27]

arXiv preprint arXiv:2505.23145 (2025)

Flowalign: Trajectory-regularized, inversion-free flow-based image editing , author=. arXiv preprint arXiv:2505.23145 , year=

-

[28]

arXiv preprint arXiv:2511.12151 (2025)

FIA-Edit: Frequency-Interactive Attention for Efficient and High-Fidelity Inversion-Free Text-Guided Image Editing , author=. arXiv preprint arXiv:2511.12151 , year=

-

[29]

Proceedings of the IEEE/CVF international conference on computer vision , pages=

Segment anything , author=. Proceedings of the IEEE/CVF international conference on computer vision , pages=

-

[30]

Forty-first international conference on machine learning , year=

Scaling rectified flow transformers for high-resolution image synthesis , author=. Forty-first international conference on machine learning , year=

-

[31]

2024 , howpublished=

Black Forest Labs , title=. 2024 , howpublished=

2024

-

[32]

Proceedings of the IEEE conference on computer vision and pattern recognition , pages=

The unreasonable effectiveness of deep features as a perceptual metric , author=. Proceedings of the IEEE conference on computer vision and pattern recognition , pages=

-

[33]

IEEE transactions on image processing , volume=

Image quality assessment: from error visibility to structural similarity , author=. IEEE transactions on image processing , volume=. 2004 , publisher=

2004

-

[34]

Godiva: Generating open-domain videos from natural descriptions

Godiva: Generating open-domain videos from natural descriptions , author=. arXiv preprint arXiv:2104.14806 , year=

-

[35]

Classifier-Free Diffusion Guidance

Classifier-free diffusion guidance , author=. arXiv preprint arXiv:2207.12598 , year=

work page internal anchor Pith review arXiv

-

[36]

Qwen3 technical report , author=. arXiv preprint arXiv:2505.09388 , year=

work page internal anchor Pith review arXiv

-

[37]

The IEEE Conference on Computer Vision and Pattern Recognition (CVPR) Workshops , month =

Agustsson, Eirikur and Timofte, Radu , title =. The IEEE Conference on Computer Vision and Pattern Recognition (CVPR) Workshops , month =

-

[38]

Advances in Neural Information Processing Systems , volume=

Magicbrush: A manually annotated dataset for instruction-guided image editing , author=. Advances in Neural Information Processing Systems , volume=

-

[39]

2024 , howpublished =

Pexels , title =. 2024 , howpublished =

2024

-

[40]

HunyuanVideo: A Systematic Framework For Large Video Generative Models

Hunyuanvideo: A systematic framework for large video generative models , author=. arXiv preprint arXiv:2412.03603 , year=

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.