
Degeneracy-Aware Functional and Algorithmic Resilience in Virtualized 6G Networks Under Correlated Failures

Pith reviewed 2026-05-08 17:30 UTC · model grok-4.3

The pith

Structural diversity, not replica counts, determines resilience in virtualized 6G networks under correlated failures

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

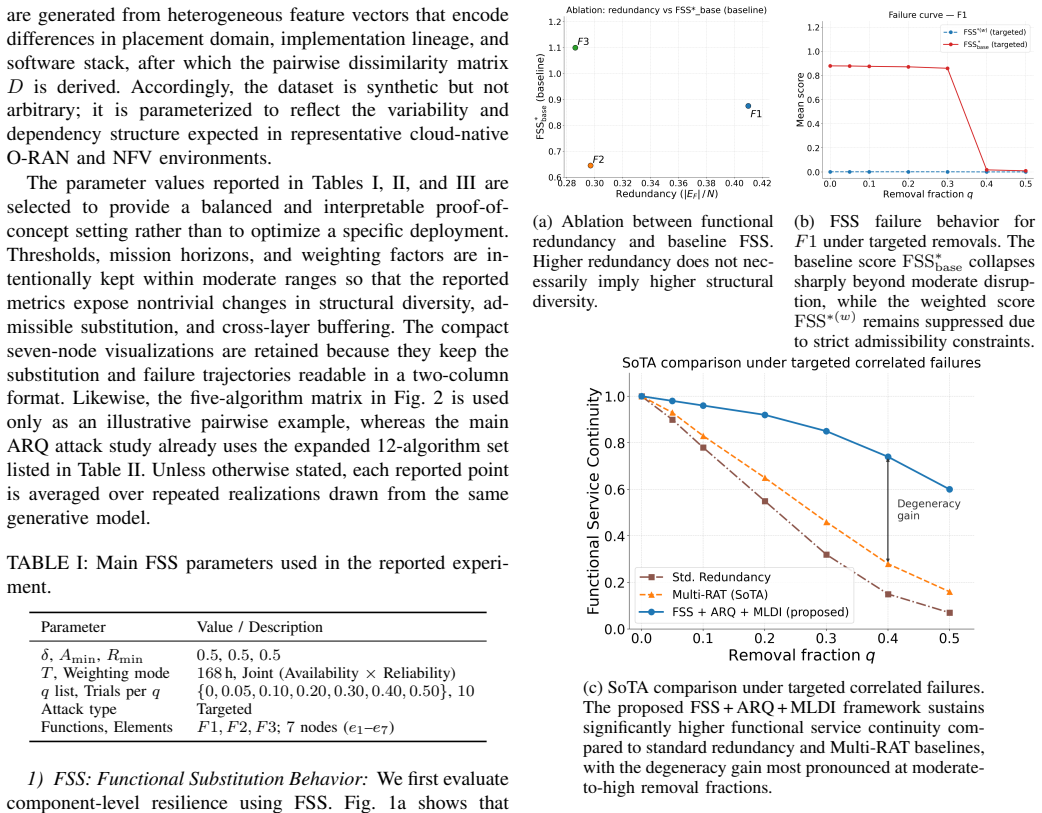

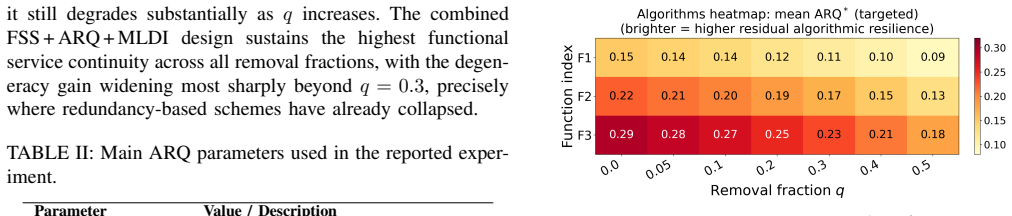

The paper adopts a degeneracy-aware perspective, in which robustness depends on the availability of structurally diverse yet functionally equivalent alternatives, and formalizes it through three metrics: the Functional Substitution Score (FSS), the Algorithmic Resilience Quotient (ARQ), and the Multi-Layer Degeneracy Index (MLDI). Targeted disruption protocols applied to synthesized data show that redundancy and robustness diverge substantially: FSS separates structural diversity from replica count, ARQ distinguishes genuine algorithmic alternatives from near-duplicate implementations, and MLDI captures cross-layer buffering that remains hidden under redundancy-only analysis.

What carries the argument

The degeneracy-aware perspective, carried by three metrics: the Functional Substitution Score (FSS), which quantifies structurally distinct substitutes for a function; the Algorithmic Resilience Quotient (ARQ), which measures diversity among algorithms that deliver comparable performance; and the Multi-Layer Degeneracy Index (MLDI), which captures how functional diversity is distributed across architectural layers.
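This reading does not reproduce the paper's formulas, so what follows is only a toy sketch of the intuition behind FSS and ARQ, not the authors' definitions. It assumes a hypothetical data model: each implementation of a function is tagged with the set of dependencies it relies on (host, software stack, controller), and each candidate algorithm carries a performance score and a code-lineage label. The helper names `distinct_substitutes` and `genuine_alternatives`, the tolerance `tol`, and all example values are illustrative.

```python
from itertools import combinations


def distinct_substitutes(deps: list[set[str]]) -> int:
    """Toy FSS-style count (hypothetical, not the paper's formula).

    Implementations that share any dependency are merged into one group
    (union-find over the "shares a dependency" graph); the result is the
    number of groups, i.e. structurally distinct substitutes.
    """
    parent = list(range(len(deps)))

    def find(i: int) -> int:
        # Path-halving find for the union-find structure.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    for i, j in combinations(range(len(deps)), 2):
        if deps[i] & deps[j]:  # at least one shared platform/stack/controller
            parent[find(i)] = find(j)
    return len({find(i) for i in range(len(deps))})


def genuine_alternatives(algos: dict[str, tuple[float, str]], tol: float = 0.05) -> int:
    """Toy ARQ-style count (hypothetical, not the paper's formula).

    Keeps algorithms whose score is within a relative tolerance `tol` of the
    best score, then counts distinct code lineages so that near-duplicate
    implementations (same lineage) collapse into one alternative.
    """
    best = max(score for score, _ in algos.values())
    return len({lineage for score, lineage in algos.values() if score >= (1 - tol) * best})


# Three replicas on one software stack vs. three fully disjoint implementations.
shared = [{"host:A", "stack:S1"}, {"host:A", "stack:S1"}, {"host:B", "stack:S1"}]
diverse = [{"host:A", "stack:S1"}, {"host:B", "stack:S2"}, {"host:C", "stack:S3"}]
print(len(shared), distinct_substitutes(shared))    # 3 1
print(len(diverse), distinct_substitutes(diverse))  # 3 3

# Scores and lineage labels are made up for illustration.
algos = {
    "sched_v1": (0.95, "lineage-X"),
    "sched_v1_fork": (0.94, "lineage-X"),  # near-duplicate of sched_v1
    "rl_sched": (0.93, "lineage-Y"),
    "greedy": (0.70, "lineage-Z"),         # not comparable in performance
}
print(len(algos), genuine_alternatives(algos))      # 4 2
```

Both replica sets have three members, but the first counts as a single substitute because every replica runs on the same stack. Grouping is transitive, which is a conservative simplification: two implementations linked only through a third are merged even though no single dependency failure removes both.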

If this is right

- Resilience evaluations for 6G must separate structural and algorithmic diversity from raw replication numbers.

- Network designs should identify and preserve cross-layer buffering to protect against correlated failures.

- Open and disaggregated 6G systems can treat degeneracy as an explicit design primitive alongside traditional redundancy.

Where Pith is reading between the lines

- The same metrics could be tested in other virtualized environments such as large-scale cloud platforms that face analogous shared-failure risks.

- Automated computation of FSS, ARQ, and MLDI during network operation might enable dynamic reconfiguration for higher robustness.

- Standards bodies could incorporate degeneracy requirements when specifying resilience targets for future generations of virtualized networks.

Load-bearing premise

The synthesized data and targeted disruption protocols sufficiently model the complexities of real 6G networks and correlated failure scenarios.

What would settle it

Applying the same targeted disruption protocols to traces or simulations drawn from an operational 6G deployment and finding no substantial divergence between simple replica counts and the values produced by FSS, ARQ, and MLDI would indicate that the degeneracy metrics add no new information.

Figures

read the original abstract

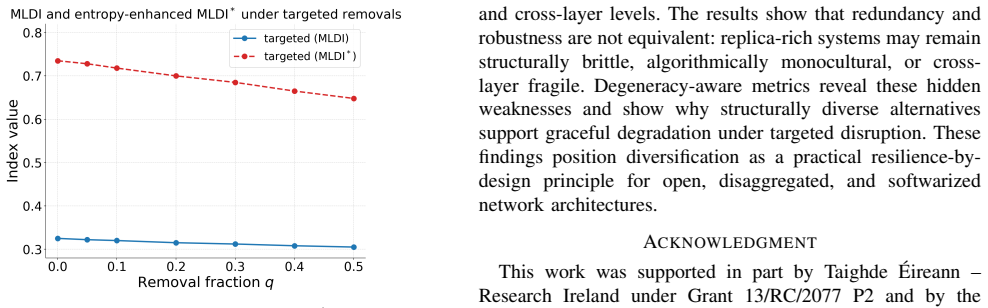

Redundancy is widely used to sustain service continuity in programmable and virtualized networks; however, replicated functions often share platforms, software stacks, and control dependencies, making them vulnerable to correlated failures. Consequently, replica counts alone may overestimate true resilience. This paper adopts a degeneracy-aware perspective, where robustness depends on the availability of structurally diverse yet functionally equivalent alternatives. We formalize this perspective through three complementary metrics: the Functional Substitution Score (FSS), which quantifies structurally distinct substitutes for a function; the Algorithmic Resilience Quotient (ARQ), which measures diversity among algorithms that remain comparable in delivered performance; and the Multi-Layer Degeneracy Index (MLDI), which captures how functional diversity is distributed across architectural layers. Using targeted disruption protocols on a synthesized data, we show that redundancy and robustness can diverge substantially. The results show that FSS separates structural diversity from replica count, ARQ distinguishes genuine algorithmic alternatives from near-duplicate implementations, and MLDI captures cross-layer buffering that remains hidden under redundancy-only analysis. These findings establish degeneracy as a practical resilience primitive for open, disaggregated, and virtualized 6G systems.
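A small worked calculation illustrates the abstract's point that replica counts alone may overestimate resilience. It rests on an assumption of ours, not the paper's: each dependency fails independently, and a replica fails if any dependency it relies on fails, so correlation between replicas enters only through shared dependencies. The hypothetical `availability` function enumerates every dependency failure state exactly, which is practical only for a handful of dependencies; the failure probabilities are made up.

```python
from itertools import product


def availability(replicas: list[set[str]], p_fail: dict[str, float]) -> float:
    """Probability that at least one replica survives (hypothetical model).

    Each dependency d fails independently with probability p_fail[d]; a
    replica fails if any dependency it uses fails. Correlation between
    replicas therefore comes only from shared dependencies. Exact
    enumeration over all 2**k dependency states.
    """
    deps = sorted(set().union(*replicas))
    total = 0.0
    for state in product((False, True), repeat=len(deps)):  # True = failed
        prob = 1.0
        failed = set()
        for d, is_failed in zip(deps, state):
            prob *= p_fail[d] if is_failed else 1.0 - p_fail[d]
            if is_failed:
                failed.add(d)
        # The function survives if some replica has no failed dependency.
        if any(not (r & failed) for r in replicas):
            total += prob
    return total


p = {d: 0.1 for d in ["host:A", "host:B", "host:C", "vm1", "vm2", "vm3"]}
shared = [{"host:A", "vm1"}, {"host:A", "vm2"}, {"host:A", "vm3"}]
diverse = [{"host:A", "vm1"}, {"host:B", "vm2"}, {"host:C", "vm3"}]
print(round(availability(shared, p), 4))   # 0.8991
print(round(availability(diverse, p), 4))  # 0.9931
```

Both configurations have three replicas, but co-locating them on host A caps availability just below the host's own availability of 0.9, whereas spreading them across three hosts raises it to about 0.993. A replica-count view cannot tell the two apart.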

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript argues that redundancy in virtualized 6G networks overestimates resilience under correlated failures because replicas often share platforms, software stacks, and control dependencies. It introduces a degeneracy-aware perspective formalized via three metrics: Functional Substitution Score (FSS) quantifying structurally distinct substitutes, Algorithmic Resilience Quotient (ARQ) measuring diversity among comparable algorithms, and Multi-Layer Degeneracy Index (MLDI) capturing cross-layer distribution of functional diversity. Using targeted disruption protocols on synthesized data, the paper claims to demonstrate substantial divergence between redundancy counts and true robustness, with the metrics revealing buffering and alternatives hidden by redundancy-only analysis.

Significance. If the central claims are substantiated with validated experiments, the work could meaningfully advance resilience engineering for disaggregated 6G systems by shifting emphasis from replica quantity to structural and algorithmic diversity. The proposed metrics offer a concrete, multi-layer framework that could inform simulation tools, control-plane design, and standards for open virtualized networks, addressing a practical gap in handling correlated failures.

major comments (2)

- Abstract: The central claim that 'redundancy and robustness can diverge substantially' and that FSS/ARQ/MLDI capture hidden aspects rests entirely on results from 'targeted disruption protocols on a synthesized data', yet the abstract (and by extension the manuscript's experimental core) provides no quantitative outcomes, baselines, error bars, or statistical tests. This leaves the divergence unquantified and the load-bearing evidence unsupported.

- Methodology and results sections: The synthesized data generator and its correlation structure are unspecified; there is no generative model, no procedure for fitting to empirical 6G failure traces, and no sensitivity analysis on parameters such as topology, correlation strength, or platform dependencies. Without these, the reported gaps between redundancy and the new metrics could be artifacts of the chosen synthesis rather than a general property of virtualized 6G networks.

minor comments (2)

- Abstract: The phrasing 'on a synthesized data' is grammatically imprecise and should read 'on synthesized data' or 'on a synthesized dataset'.

- Throughout: Ensure first-use definitions for FSS, ARQ, and MLDI, and add explicit comparisons to prior redundancy-based resilience metrics in the related-work section to clarify novelty.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed comments, which help clarify the presentation of our experimental claims and the reproducibility of the synthesized data. We address each major point below and will incorporate revisions to strengthen the manuscript.

Point-by-point responses

Referee: Abstract: The central claim that 'redundancy and robustness can diverge substantially' and that FSS/ARQ/MLDI capture hidden aspects rests entirely on results from 'targeted disruption protocols on a synthesized data', yet the abstract (and by extension the manuscript's experimental core) provides no quantitative outcomes, baselines, error bars, or statistical tests. This leaves the divergence unquantified and the load-bearing evidence unsupported.

Authors: We agree that the abstract currently summarizes the divergence qualitatively without specific numbers. The results section does contain quantitative comparisons (e.g., divergence percentages between redundancy counts and FSS/ARQ/MLDI under varying correlation strengths, with baseline redundancy-only analysis), but these are not reflected in the abstract. We will revise the abstract to include key quantitative outcomes, reference to baselines, and mention of error bars/statistical tests from the main results. This addresses the concern without altering the core claims. revision: yes

Referee: Methodology and results sections: The synthesized data generator and its correlation structure are unspecified; there is no generative model, no procedure for fitting to empirical 6G failure traces, and no sensitivity analysis on parameters such as topology, correlation strength, or platform dependencies. Without these, the reported gaps between redundancy and the new metrics could be artifacts of the chosen synthesis rather than a general property of virtualized 6G networks.

Authors: The methodology section outlines the high-level correlation structure derived from standard 6G disaggregated topologies and common failure modes (e.g., shared platform and control dependencies). However, we acknowledge that an explicit generative model, any fitting procedure (even for synthesized traces), and sensitivity analysis are not sufficiently detailed. We will add a new subsection with the full generative process, parameter ranges, and sensitivity results across topology and correlation strength to demonstrate that the observed divergence is robust rather than artifactual. revision: yes

Circularity Check

No circularity: metrics are explicitly defined constructs applied to synthetic data without reduction to inputs or self-citations.

full rationale

The paper introduces FSS, ARQ, and MLDI as newly formalized metrics that quantify distinct aspects of degeneracy (structural diversity, algorithmic alternatives, and cross-layer distribution). These are not derived from or fitted to the target resilience quantities; instead, they are applied via targeted disruption protocols to a synthesized dataset to demonstrate divergence from simple redundancy counts. No equations reduce by construction to fitted parameters, no uniqueness theorems are imported from self-citations, and no ansatz or renaming of known results is invoked. The central claims rest on empirical observation of the synthetic ensemble rather than tautological re-expression of inputs.

Axiom & Free-Parameter Ledger

