Recognition: unknown

Robust Inverse Quadratic Error Decay with Meshing and Beam Search for Random Subset Sum

Pith reviewed 2026-05-08 17:12 UTC · model grok-4.3

The pith

Beam search on an O(B/w) mesh solves random subset sum with expected error O(B/(n w squared)).

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

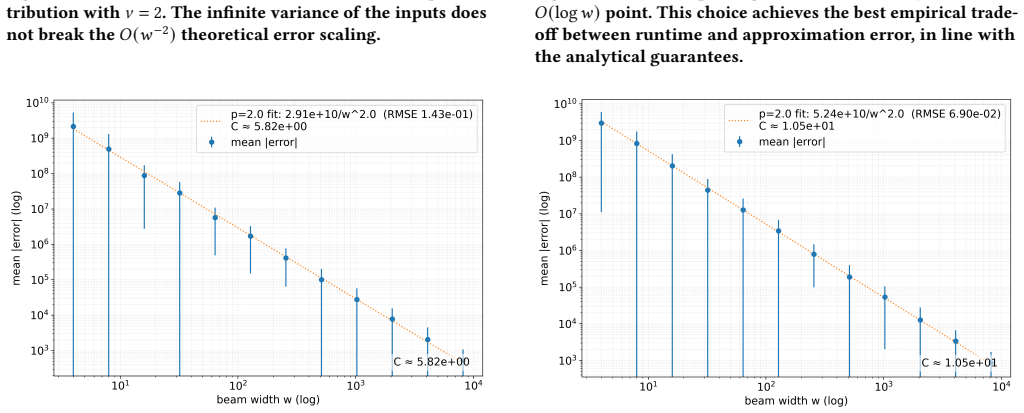

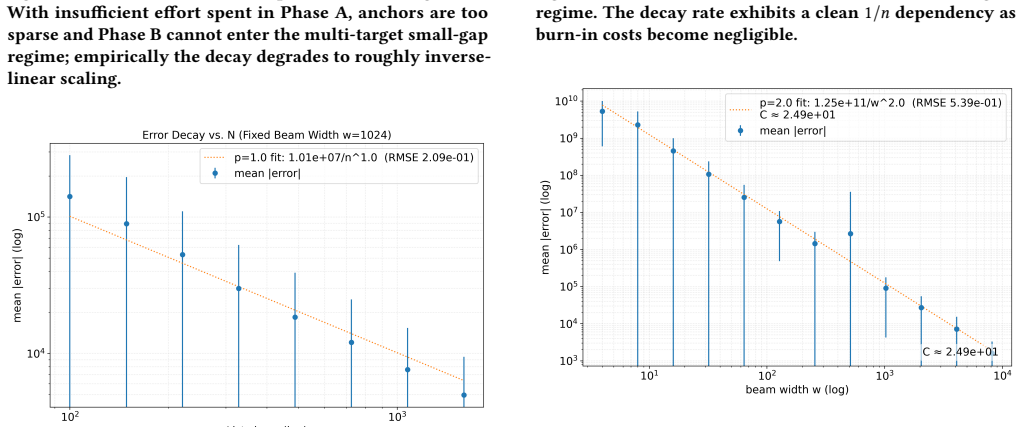

The paper first gives an algorithm that, with high probability, builds the same O(B/w) mesh of candidate sums while keeping only w elements at each step and finishing in O(w log w) time. It then defines a beam search heuristic that traverses this mesh across the full list of n numbers, maintaining w candidates at every layer and using a simple scoring rule to keep the closest sums. Under the standard mean-field assumption of equal standard deviations, the expected error of the final output shrinks as O(B/(n w squared)). The same structure extends to variants of the problem with only local changes to the scoring function.

What carries the argument

The O(B/w) mesh that is trimmed to width w at each step, combined with beam search that scores partial sums for closeness to the target.

If this is right

- The procedure supplies a concrete practical baseline for epsilon-approximations to subset sum.

- Linearithmic runtime in n and w makes larger problem sizes feasible on standard hardware.

- Only local changes to the scoring heuristic are needed to handle natural variants of the problem.

- Empirical tests across multiple input distributions confirm the method does not rely on one special case.

- Error decay improves faster with wider beams than with longer input lists alone.

Where Pith is reading between the lines

- The same meshing-plus-beam pattern could be tested on other combinatorial problems whose state spaces admit compact representations.

- If the quadratic scaling holds in practice, hardware implementations could trade beam width for accuracy more efficiently than increasing sample size n.

- Cryptographic instances often use non-uniform distributions, so direct measurement of error on such data would test how far the mean-field prediction carries.

- The trimming step that keeps only w mesh points may generalize to other dynamic-programming approximations where memory is the bottleneck.

Load-bearing premise

The standard mean-field assumption with equal standard deviation invoked to obtain the O(B/n w squared) expected error bound.

What would settle it

Run the beam-search procedure on random instances where the numbers are drawn from distributions with unequal variances and check whether the observed error still decays proportionally to 1 over n w squared.

Figures

read the original abstract

The Subset Sum Problem is a fundamental NP-complete problem in cryptography and combinatorial optimization, with many real-world applications. The Random Subset Sum Problem (RSSP) is a more applicable version of subset sum, where numbers are drawn from some i.i.d input distribution. We present an algorithm that, with probability $1-\delta$, constructs the same $O(B/w)$ mesh as Da Cunha et al. (2023), while trimming to $w$ elements throughout and running in $O(w\log w)$ time. Then, we present a novel beam search heuristic running in linearithmic time w.r.t list size $n$ and beam width $w$ using the mesh that gives an expected error of $O\!\left(\frac{B}{nw^2}\right)$ under a standard mean-field assumption with equal standard deviation, demonstrating the practical effectiveness of meshing to achieve error decay. The algorithm is empirically robust to multiple input distributions and can naturally extend to variants with simple changes to the scoring heuristic, establishing a new practical baseline for robust subset sum error decay and $\epsilon$-approximation theory.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript presents a meshing-based beam search heuristic for the Random Subset Sum Problem (RSSP). It claims an O(w log w)-time construction of an O(B/w) mesh (matching Da Cunha et al. 2023 with probability 1-δ) while trimming to beam width w, followed by a linearithmic-time beam search that yields expected error O(B/(n w^2)) under a mean-field assumption with equal standard deviations. Empirical robustness across input distributions and simple extensibility to variants are also asserted.

Significance. If the mean-field assumption holds and is independently validated, the work would supply a practical heuristic achieving inverse-quadratic error decay for RSSP together with explicit linearithmic runtime, extending prior meshing techniques. The high-probability mesh guarantee and empirical robustness to multiple distributions constitute concrete strengths that could establish a new baseline for approximation heuristics in this domain.

major comments (2)

- [Abstract] Abstract: the stated expected error O(B/nw^2) is obtained exclusively under the 'standard mean-field assumption with equal standard deviation'; no derivation, justification, or empirical check (e.g., measured per-layer error variances or test of the equal-std-dev closure for the i.i.d. inputs) is supplied, so the inverse-quadratic decay claim reduces to a quantity defined by the modeling assumption itself rather than an independent result.

- [Abstract] Abstract: the algorithm is asserted to construct the identical O(B/w) mesh as Da Cunha et al. (2023) with probability 1-δ while trimming to w elements throughout, yet no analysis is given of how the trimming step preserves the mesh guarantee or determines δ; this guarantee is load-bearing for the claimed robustness.

minor comments (2)

- [Abstract] Abstract: the runtime is described only as 'linearithmic time w.r.t list size n and beam width w' without an explicit big-O expression (e.g., O(n w log w) or similar) that would allow direct verification.

- [Abstract] Abstract: the claim of 'empirical robustness' and 'new practical baseline' is made without reference to concrete quantitative metrics, baseline comparisons, or controls that would appear in a later empirical section.

Simulated Author's Rebuttal

We thank the referee for their thorough review and valuable comments on our manuscript. We address the major comments point by point below, and we will incorporate revisions to address the concerns raised.

read point-by-point responses

-

Referee: [Abstract] Abstract: the stated expected error O(B/nw^2) is obtained exclusively under the 'standard mean-field assumption with equal standard deviation'; no derivation, justification, or empirical check (e.g., measured per-layer error variances or test of the equal-std-dev closure for the i.i.d. inputs) is supplied, so the inverse-quadratic decay claim reduces to a quantity defined by the modeling assumption itself rather than an independent result.

Authors: The referee correctly notes that the inverse-quadratic error bound is derived under the mean-field assumption. In the current manuscript, we present the bound as a consequence of this standard assumption in the literature, but we agree that a self-contained derivation and empirical validation would strengthen the claim. We will add a new subsection in the analysis section providing the step-by-step derivation of the expected error O(B/(n w^2)) from the mean-field model with equal standard deviations. Furthermore, we will include additional experiments reporting the observed per-layer error variances for i.i.d. inputs to empirically support the equal-std-dev assumption. revision: yes

-

Referee: [Abstract] Abstract: the algorithm is asserted to construct the identical O(B/w) mesh as Da Cunha et al. (2023) with probability 1-δ while trimming to w elements throughout, yet no analysis is given of how the trimming step preserves the mesh guarantee or determines δ; this guarantee is load-bearing for the claimed robustness.

Authors: We acknowledge that while the abstract states the high-probability guarantee for the mesh construction with trimming, the detailed analysis of how the trimming to w elements preserves the O(B/w) mesh property and the specific dependence of δ on the trimming is not elaborated in the provided text. This is a valid point. In the revision, we will expand the section on mesh construction to include a lemma or proposition that bounds the probability δ accounting for the trimming step, showing that the trimmed mesh still covers the range with the stated probability by relating it to the original Da Cunha et al. construction. revision: yes

Circularity Check

No significant circularity; error bound is conditional on explicit assumption.

full rationale

The paper's central theoretical claim states that the beam-search heuristic yields expected error O(B/nw²) under a 'standard mean-field assumption with equal standard deviation.' This is presented as a derived quantity conditional on the modeling assumption rather than a result that defines or is equivalent to the assumption by construction. The mesh construction is referenced to external prior work (Da Cunha et al. 2023) with no self-citation overlap or load-bearing uniqueness theorem invoked. No equations or steps reduce a prediction to a fitted input, self-definition, or ansatz smuggled via citation; the empirical robustness claims stand separately from the conditional bound. The derivation chain is therefore self-contained against the enumerated patterns.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption standard mean-field assumption with equal standard deviation for the distribution of remaining subset sums

Reference graph

Works this paper leans on

-

[1]

AND Subset Sum is Hard: Tight Conditional Lower Bounds for Scheduling, Matching, and Related Problems.SIAM J. Comput.48, 2 (2019), 539–579. doi:10.1137/16M1082018 Anja Becker, Jean-Sébastien Coron, and Antoine Joux

-

[2]

2009.A Statistical Learning Perspective of Genetic Programming

Improved Generic Algo- rithms for Hard Knapsacks. InAdvances in Cryptology – EUROCRYPT 2011 (Lecture Notes in Computer Science, Vol. 6632). Springer, 364–385. doi:10.1007/978-3-642- 20465-4_21 David Biesner, Rafet Sifa, and Christian Bauckhage

-

[3]

doi:10.36227/techrxiv.18994160.v1 Preprint

Solving Subset Sum Prob- lems using Binary Optimization with Applications in Auditing and Financial Data Analysis.TechRxiv– (2022), –. doi:10.36227/techrxiv.18994160.v1 Preprint. Xavier Bonnetain, Rémi Bricout, André Schrottenloher, and Yixin Shen

-

[4]

InAdvances in Cryptology – ASIACRYPT 2020 (Lecture Notes in Computer Science, Vol

Improved Classical and Quantum Algorithms for Subset-Sum. InAdvances in Cryptology – ASIACRYPT 2020 (Lecture Notes in Computer Science, Vol. 12492). Springer, 633–666. doi:10.1007/978-3-030-64834-3_22 Christian Borgs, Jennifer T Chayes, and Boris Pittel

-

[5]

Lin Chen, Jiayi Lian, Yuchen Mao, and Guochuan Zhang

Phase transition and finite- size scaling for the number partitioning problem.Random Structures & Algorithms 19, 3-4 (2001), 247–288. Lin Chen, Jiayi Lian, Yuchen Mao, and Guochuan Zhang. 2024a. Approximating Partition in Near-Linear Time. InProceedings of the 56th Annual ACM Symposium Edwin Chen and Christof Teuscher on Theory of Computing (STOC 2024). A...

-

[6]

Computational Complexity2, 2 (1992), 111–128

Improved Low-Density Subset Sum Algorithms. Computational Complexity2, 2 (1992), 111–128. doi:10.1007/BF01201999 Arthur Carvalho Walraven Da Cunha, Francesco d’Amore, Frédéric Giroire, Hicham Lesfari, Emanuele Natale, and Laurent Viennot

-

[7]

274), Inge Li Gørtz, Martin Farach-Colton, Simon J

(Leibniz International Proceedings in Informatics (LIPIcs), Vol. 274), Inge Li Gørtz, Martin Farach-Colton, Simon J. Puglisi, and Grzegorz Herman (Eds.). Schloss Dagstuhl — Leibniz-Zentrum für Informatik, Dagstuhl, Germany, 37:1–37:11. doi:10.4230/ LIPIcs.ESA.2023.37 Abraham D. Flaxman and Bartosz Przydatek

2023

-

[8]

InSTACS 2005 (Lecture Notes in Computer Science, Vol

Solving Medium-Density Subset Sum Problems in Expected Polynomial Time. InSTACS 2005 (Lecture Notes in Computer Science, Vol. 3404), Volker Diekert and Bruno Durand (Eds.). Springer, Berlin, Heidelberg, 305–314. doi:10.1007/978-3-540-31856-9_25 Jonathan Frankle, Gintare Karolina Dziugaite, Daniel M. Roy, and Michael Carbin

-

[9]

InProceedings of the 37th International Conference on Machine Learning (ICML)

Linear Mode Connectivity and the Lottery Ticket Hypothesis. InProceedings of the 37th International Conference on Machine Learning (ICML). Proceedings of Machine Learning Research (PMLR), Virtual Event, 3259–3269. https://proceedings. mlr.press/v119/frankle20a.html Michael R. Garey and David S. Johnson. 1979.Computers and Intractability: A Guide to the Th...

1979

-

[10]

doi:10.1007/ BFb0006603 Fred Glover

Fast approximation algorithms for knapsack type problems.System Modeling and Optimization8 (1979), 277–281. doi:10.1007/ BFb0006603 Fred Glover

1979

-

[11]

doi:10.1016/0305- 0548(86)90048-1 Fred Glover

Future paths for integer programming and links to artificial intelli- gence.Computers & Operations Research13, 5 (1986), 533–549. doi:10.1016/0305- 0548(86)90048-1 Fred Glover

-

[12]

doi:10.1287/ijoc.1.3.190 Fred Glover

Tabu Search—Part I.ORSA Journal on Computing1, 3 (1989), 190–206. doi:10.1287/ijoc.1.3.190 Fred Glover

-

[13]

doi:10.1287/ijoc.2.1.4 David E

Tabu Search—Part II.ORSA Journal on Computing2, 1 (1990), 4–32. doi:10.1287/ijoc.2.1.4 David E. Goldberg. 1989.Genetic Algorithms in Search, Optimization and Machine Learning(1st ed.). Addison-Wesley. Addison-Wesley Professional. Ellis Horowitz and Sartaj Sahni

-

[14]

ACM21, 2 (April 1974), 277–292

Computing Partitions with Applications to the Knapsack Problem.J. ACM21, 2 (April 1974), 277–292. doi:10.1145/321812.321823 Nick Howgrave-Graham and Antoine Joux

-

[15]

InAdvances in Cryptology – EUROCRYPT 2010 (Lecture Notes in Com- puter Science, Vol

New Generic Algorithms for Hard Knapsacks. InAdvances in Cryptology – EUROCRYPT 2010 (Lecture Notes in Com- puter Science, Vol. 6110). Springer, 235–256. doi:10.1007/978-3-642-13190-5_12 Liang Huang, Kai Zhao, and Mingbo Ma

-

[16]

When to Finish? Optimal Beam Search for Neural Text Generation (modulo beam size). InProceedings of the 2017 Conference on Empirical Methods in Natural Language Processing (EMNLP), Martha Palmer, Rebecca Hwa, and Sebastian Riedel (Eds.). Association for Computational Linguistics, Copenhagen, Denmark, 2134–2139. doi:10.18653/v1/D17-1227 James Kennedy and R...

-

[17]

doi:10.1109/ICNN.1995.488968 Scott Kirkpatrick, C

IEEE, 1942–1948. doi:10.1109/ICNN.1995.488968 Scott Kirkpatrick, C. D. Gelatt Jr., and M. P. Vecchi

-

[18]

Optimization by Simulated Annealing.Science220, 4598 (1983), 671–680. doi:10.1126/science.220.4598.671 Jeffrey C. Lagarias and Andrew M. Odlyzko

-

[19]

Solving Low-Density Subset Sum Problems.J. ACM32, 1 (1985), 229–246. doi:10.1145/2455.2461 Murali Krishna Madugula, Santosh Kumar Majhi, and Nibedan Panda

-

[20]

In2022 International Conference on Connected Systems & Intelligence (CSI)

An Effi- cient Arithmetic Optimization Algorithm for Solving Subset-sum Problem. In2022 International Conference on Connected Systems & Intelligence (CSI). IEEE, Piscataway, NJ, USA, 1–7. doi:10.1109/CSI54720.2022.9923996 Stephan Mertens

-

[21]

Phase transition in the number partitioning problem.Physical Review Letters81, 20 (1998),

1998

-

[22]

A Genetic Algorithm for the Subset Sum Problem.Neurocomputing61, 1–3 (2004), 453–459. doi:10.1016/j.neucom.2003. 11.025 Richard Schroeppel and Adi Shamir

-

[23]

A t=o(2n/2), s=o(2n/4) algorithm for certain np-complete problems.SIAM J

A 𝑇=𝑂( 2𝑛/2 ), 𝑆=𝑂( 2𝑛/4 ) Algorithm for Certain NP-Complete Problems.SIAM J. Comput.10, 3 (Aug. 1981), 456–464. doi:10.1137/0210033 David Wagner

-

[24]

InProceedings of the 2016 Conference on Empirical Methods in Natural Language Processing (EMNLP)

Sequence-to-Sequence Learning as Beam- Search Optimization. InProceedings of the 2016 Conference on Empirical Methods in Natural Language Processing (EMNLP). Association for Computational Linguistics, Austin, Texas, 1296–1306. doi:10.18653/v1/D16-1137 Zhihui Zhou and Zongzhang Zhang

-

[25]

Association for the Advance- ment of Artificial Intelligence (AAAI), 6744–6751. doi:10.1609/aaai.v34i04.6162 A Variance of Error Variance bound in the random-phase regime.Let 𝐷𝑡 denote the global gap at Phase B step 𝑡, and define the one-step improvement Δ𝑡 :=𝐷 𝑡 −𝐷 𝑡+1 ≥0. In the random-phase, small-gap regime 𝐷𝑡 ≪Δ=𝐵/𝑤 we have E[Δ 𝑡 |𝐷 𝑡 ]=Θ 𝑤 2 𝐵 𝐷 2 𝑡...

-

[26]

We require 𝑆𝑚 ≥ln(𝑛𝑤)

The sum of𝑚 independent Exp(1) variables exactly follows a Gamma distribution: 𝑆𝑚 = 𝑚∑︁ 𝑖=1 𝑋𝑖 ∼Γ(𝑚,1), where E[𝑆𝑚]=𝑚 and Var(𝑆𝑚)=𝑚 . We require 𝑆𝑚 ≥ln(𝑛𝑤) . Because E[𝑆𝑘𝐵 ]=𝑘 𝐵, setting 𝑘𝐵 ≈ln(𝑛𝑤) achieves the target re- duction in expectation. Applying standard Chernoff bounds for the Gamma distribution, padding the required budget by the standard devia...

2010

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.