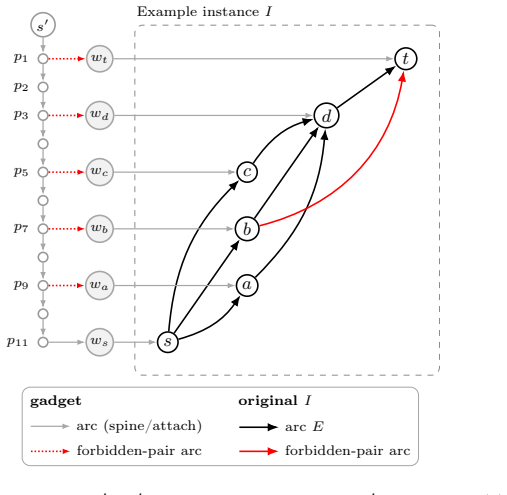

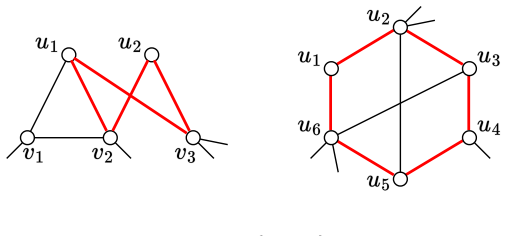

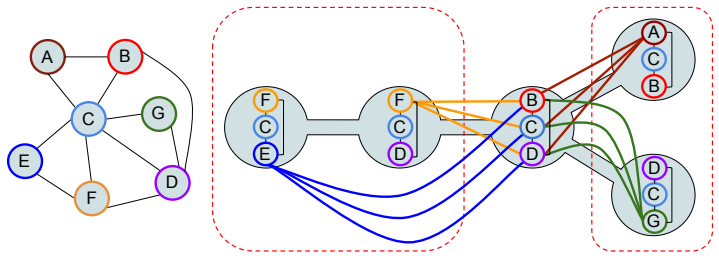

Graph doubling maps ultrabubbles to weak superbubbles in linear time

The construction lets existing directed-graph algorithms compute these nested structures on any bidirected graph without new code.

Data Structures and Algorithms

Covers data structures and analysis of algorithms. Roughly includes material in ACM Subject Classes E.1, E.2, F.2.1, and F.2.2.

The construction lets existing directed-graph algorithms compute these nested structures on any bidirected graph without new code.

Union digraph layering enables tractability even with unrestricted forbidden pairs and exponentially many paths.

full image

full image

On the LSH Distortion of Ulam and Cayley Similarities

Lower bound Omega(n^0.12) for Ulam; Cayley similarity requires Theta(n) distortion, showing when hashing works for permutation nearest-neigh

full image

full image

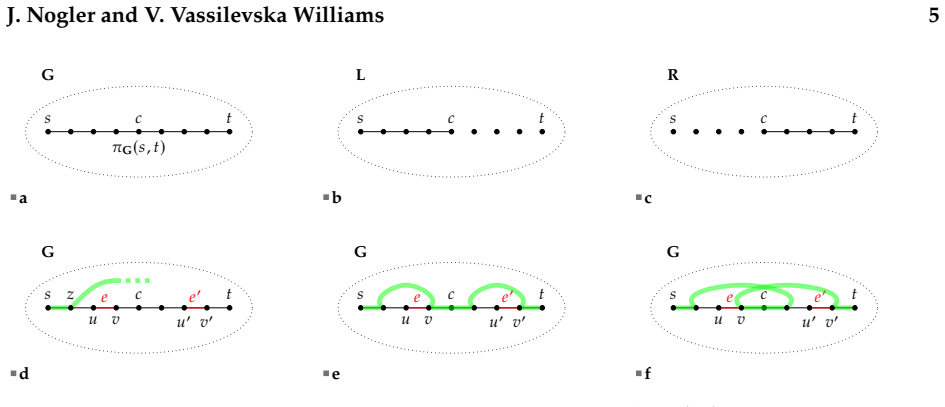

Maximizing Reachability via Shifting of Temporal Paths

The problem remains tractable for any number of shifts when only the number of paths is the parameter.

Connectivity augmentation is fixed-parameter tractable

New algorithms run in time 2 to the O of k log of k plus λ times n to the O of 1, removing the prior limit of λ at most 4.

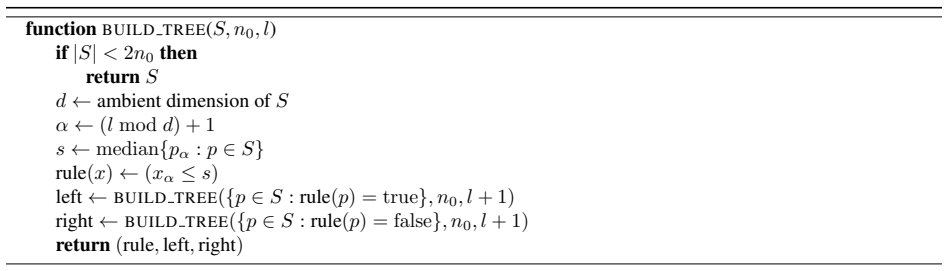

Performance bounds for nearest neighbor search with k-d trees

When dimension grows polylogarithmically with data points, defeatist search matches chance level and comprehensive search checks every cell

full image

full image

Chasing Small Sets Optimally Against Adaptive Adversaries

Closes 30-year gap and proves tightness even for randomized algorithms against adaptive adversaries

full image

full image

FPT Approximation Schemes for Min-Sum Radii and Min-Sum Diameters Clustering

Algorithms run in time exponential in k for any desired approximation factor, closing an open question on FPT approximation schemes.

full image

full image

Convex Optimization with Local Label Differential Privacy: Tight Bounds in All Privacy Regimes

New non-interactive algorithm and matching lower bound replace prior linear cost on label space size for large sample sizes n.

An Approximation Algorithm for 2-Vertex-Connectivity via Cycle-Restricted 2-Edge-Covers

Cycle-restricted edge covers replace the prior 4/3 guarantee for finding the cheapest set of edges that survives any single vertex failure.

full image

full image

A 4.509-Approximation Algorithm for Generalized Min Sum Set Cover

Improved LP analysis and new Bernoulli tail bounds reduce the ratio from 4.642 toward the hardness threshold of 4.

full image

full image

Dynamic Rank, Basis, and Matching

Algorithms achieve Õ(r^1.405) time per entry change and extend to maintaining maximum matching size in graphs.

full image

full image

Online Steiner Forest with Recourse

An algorithm keeps connectivity costs low for arriving terminal pairs while changing only O(log n) edges per demand on average.

Streaming Complexity Separations for Dense and Sparse Graphs

Graphs with about n/ε² edges require an extra logarithmic factor to output the approximate cut

full image

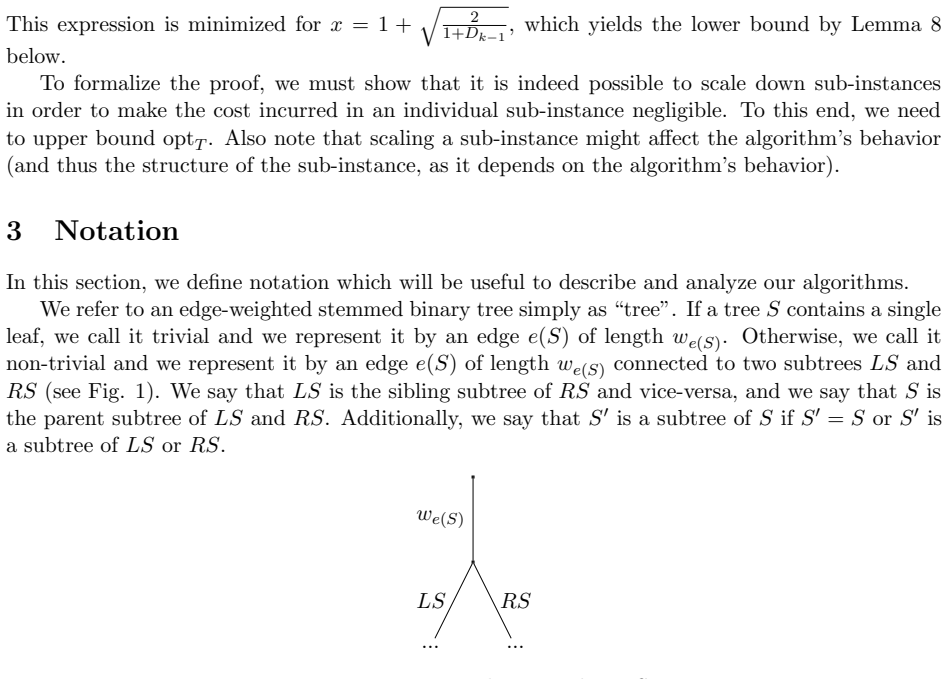

full image

Near-Linear Time Generalized Sinkhorn Algorithms for Bounded Genus Graphs

Separator decompositions and fast distance matrix multiplies let approximate geodesic optimal transport scale to large meshes.

full image

full image

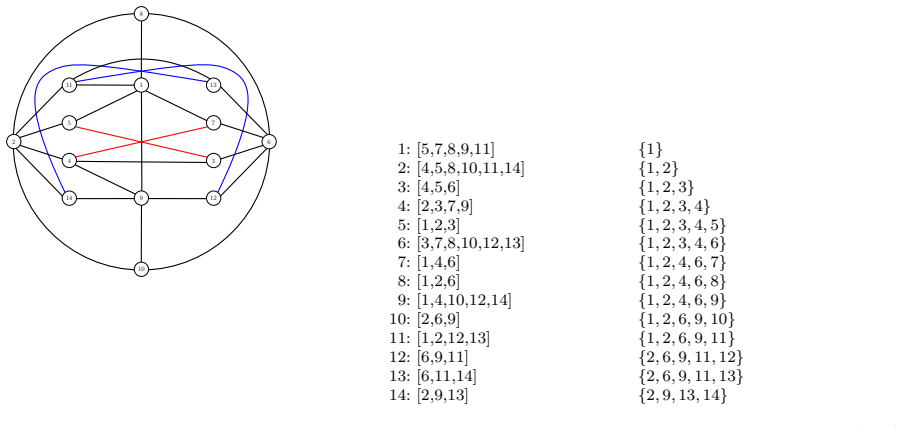

TreeWidzard: An Engine for Width-Based Dynamic Programming and Automated Theorem Proving

Given any Boolean formula of properties and an integer k, it decides whether every graph of treewidth at most k satisfies the formula.

full image

full image

State Canonization and Early Pruning in Width-Based Automated Theorem Proving

The reductions let a dynamic-programming search prove the conjecture holds for all triangle-free graphs of pathwidth at most 5 and treewidth

full image

full image

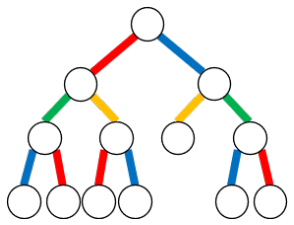

Dynamic Edge Coloring of Forests

Incremental case stays efficient while fully dynamic forests need randomization or rooting to avoid logarithmic recoloring chains.

full image

full image

A Scalable and Unified Framework to Weighted Rank Aggregation

Local one-medians on few inputs give (2-α) approximations in constant MPC rounds with sublinear local memory for Ulam and related distances.

full image

full image

Deterministically finding an element of large order in mathbb{Z}_N^*

Runs in square-root-of-D time once D exceeds exp of sqrt(2 log N log log N), improving on prior N^{1/6} threshold.

Computing Flows in Subquadratic Space

Algorithm reports approximate flow on each edge as it is read in the last pass, using Õ(n^1.5) space and sidestepping quadratic lower bounds

full image

full image

Planar maxima and hull problems force deterministic output-sensitive methods to trade off speed against memory accesses.

Equitable Colorings of Vertex-Weighted Graphs

A (1+ε) guarantee needs only (c/ε² log(1/ε))Δ colors; a factor-2 guarantee works at Δ+1 colors and both are polynomial-time computable.

Witness-Sensitive Detection of Induced Diamonds

The witness-sensitive method improves on matrix-multiplication time once the graph contains enough such subgraphs.

full image

full image

Node-Weighted Triangles: Faster and Simpler

The method removes the last superpolylogarithmic overhead that separated weighted from unweighted triangle detection.

Online Matrix Factorization, Online Private Query Release, and Online Discrepancy Minimization

New algorithm handles arriving query rows one by one while staying competitive with best offline matrix factorizations for private release.

full image

full image

Search and evacuation with a near majority of faulty agents

When n equals 2f plus 1, new coordination limits worst-case time to at most 7.437 times the distance for three agents and 4 plus 2 times the

full image

full image

Planarizing Gadgets for (k, l)-tight Graphs Do Not Exist

The usual reduction from general instances to planar ones is impossible.

full image

full image

Parameterized Local Search for Vertex Cover: When only the Search Radius is Crucial

With k fixed as a user constant, structural parameters like treewidth or h-index control the cost of finding improving swaps.

Towards Settling the Complexity of the Lettericity Problem

Coloring retrieval matches graph isomorphism complexity; symmetric lettericity equals neighborhood diversity and runs in linear time.

full image

full image

Computing bases in Hermite normal form of lattices of integer relations

When the input matrix is square the procedure returns the standard Hermite normal form using time comparable to a constant number of matrix

full image

full image

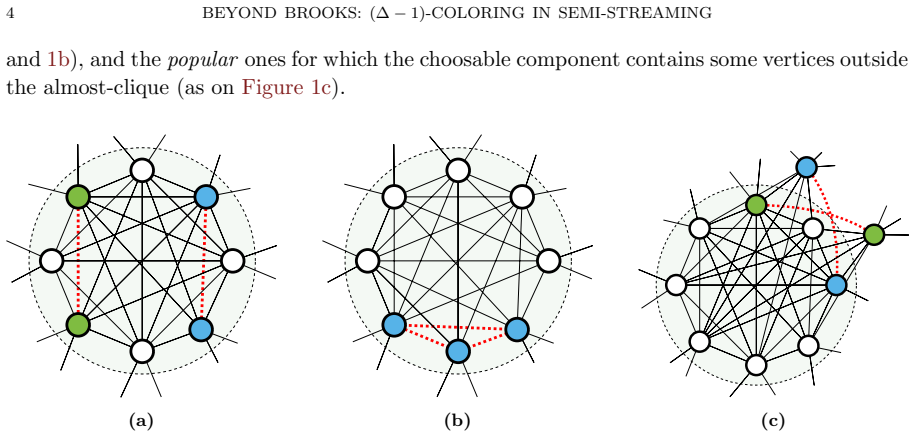

Beyond Brooks: (Delta-1)-Coloring in Semi-Streaming

Graphs with maximum degree ≥10^14 and no Δ-clique receive a proper (Δ-1)-coloring after a single pass over their edges in semi-streaming.

full image

full image

Faster Deterministic Streaming Vertex Coloring

New algorithm cuts passes from O(log Δ) to sublogarithmic while using linear memory and a linear-in-Δ palette.

Coordinated Motion Planning is FPT on Discretized Simple Polygons

Algorithm parameterized by robot count solves the problem on graphs from simple polygons, generalizing rectangular cases and advancing to pl

full image

full image

Optimal Learning-Augmented Algorithm for Online Bidding

Any algorithm reduces to a distribution over bids whose optimum satisfies delayed differential equations, achieving Pareto-optimal trade-off

full image

full image

On the Complexity of the Matching Problem of Regular Expressions with Backreferences

Hardness results rule out faster algorithms for k references while single-use cases finish in near-linear time with log factors.

EPTAS for Hard Graph Cut Problems for Dense Graphs

Weak regularity lemma plus sampling gives f(1/ε) n^O(1) time for ConstrainedMinCut, MinQuotientCut, and ProductSparsestCut.

full image

full image

Connectivity Oracle Under Vertex Failures by Shortcutting Unbreakable Decomposition

O(k^6) update independent of n, near-linear space and preprocessing when k is constant.

Deterministic Monotone Min-Plus Product and Convolution

It removes randomization from the fastest algorithms for edit distance, RNA folding, and tree edit distance without any loss in speed.

Estimating Correlation Clustering Cost in Node-Arrival Stream

C^4Approx stores only a small fraction of nodes yet matches offline pivot methods on real data sets with constant passes.

full image

full image

Equivalence of Coarse and Fine-Grained Models for Learning with Distribution Shift

A black-box reduction equates the two, yielding hardness for halfspaces but efficient algorithms with membership queries.

Modern column generation for estimating single- and multi-purchase ranked list choice models

A new solver for the subproblem handles the explosion of consumer types and delivers faster fits for both single- and multi-purchase cases.

Accelerated Relax-and-Round for Concave Coverage Problems

Replaces LP solving with gradient descent on a surrogate and uses hypersimplex rounding to obtain tight guarantees in near-linear time.

full image

full image

Polylogarithmic Approximation for Covering and Connecting Multi-Interface Networks

Randomized rounding of the LP relaxation also yields the first non-trivial O(log² m) guarantee for connectivity

full image

full image

Fast decremental tree sums in forests

Micro-macro decomposition and precomputation beat standard log n bounds when no edge insertions occur after start.

On the Parameterized Approximability of (Mergeable) Sum of Radii Clustering

Hardness rules out (1+ε) FPT approximations unless W[2]=FPT, but mergeable constraints allow an (8/3+ε) FPT approximation and extend to fair

full image

full image

Designing Capacitated Subnetworks for Shortest Path Routing

Simple heuristic of routing first then deactivating unused links stays close to the true optimum on practical instances.

Bilateral Treewidth for QBF: Where Strategies and Resolution Meet

The new parameter unifies strategy branching with resolution steps to cover more quantified formulas than prior measures.

The Pareto Frontier of Randomized Learning-Augmented Online Bidding

Upper and lower bounds on consistency coincide for robustness at or above 2.885, fully determining the tradeoff.

full image

full image

Label Correcting Algorithms for the Multiobjective Temporal Shortest Path Problem

They use a path-length bound or sufficient conditions to recover every nondominated image in discrete-time multiobjective problems.

Discrete Optimal Transport: Rapid Convergence of Simulated Annealing Algorithms

Discrete optimal transport bounds KL error by the action of the annealing path, giving polynomial rates for mean-field models.

full image

full image

Algorithmic Phase Transition for Large Independent Sets in Dense Hypergraphs

They reach the optimal ratio r to the 1 over r-1 and prove no online method can exceed it while identifying the precise maximum size.

Nearly Optimal Attention Coresets

A small subset of keys and values matches full softmax attention within epsilon for every query of norm at most rho.

A Separator for Minor-Free Graphs Beyond the Flow Barrier

The construction breaks the flow-based limit and narrows the gap to the O(h sqrt(n)) conjecture of Alon, Seymour, and Thomas.

full image

full image

A Separator for Minor-Free Graphs Beyond the Flow Barrier

The bound breaks the O(h log h sqrt n) flow barrier and moves closer to the long-standing O(h sqrt n) conjecture.

full image

full image

Faster Algorithms for Shortest Unique or Absent Substrings

Decomposing candidates by length and period and reducing to geometry beats the linear-time suffix-tree method for small alphabets.

full image

full image

Online Orthogonal Vectors Revisited

It improves two-decade-old bounds in moderate dimensions and refutes a hardness conjecture via a structure-versus-randomness split.

Robust Inverse Quadratic Error Decay with Meshing and Beam Search for Random Subset Sum

The linearithmic algorithm trims candidates to width w and reduces expected error as O(B over n w squared) under standard assumptions.

full image

full image

Submodular Ground-Set Pruning: Monotone Tightness and a Non-Monotone Separation

Non-monotone cases receive 1/2-ε containment algorithms that beat direct optimization hardness

Constructing Suffixient Arrays Revisited

Processes suffix array, LCP and reverse BWT in a single scan without extra logarithmic cost.

Faster Iterative φ Queries on the Positional BWT

A constant-overlap decomposition of haplotypes yields faster or smaller indexes for chains of predecessor lookups when haplotypes are fewer.

Nearly-Tight Bounds for Zonotope Containment and Beyond

Sampling method nearly matches Ω(√d/log d) lower bound and reaches optimal under sparsification conjecture

full image

full image

An widetilde{O} (n^{3/7}) Round Parallel Algorithm for Matroid Bases

Cuts adaptive query rounds for any matroid by analyzing multi-element dependencies in random circuits.

Optimal Hardness of Online Algorithms for Large Common Induced Subgraphs

The largest possible reaches 4 log n but online methods are provably limited to 2, shown via overlap gap property.

full image

full image

Near-linear work suffices for single-source reachability and shortest paths once edges exceed n^{1+o(1)}, improving the prior Omega(sqrt(n))

full image

full image

On Computing Total Variation Distance Between Mixtures of Product Distributions

Achieves (1±ε) multiplicative error for k-component mixtures over n dimensions in poly((nq)^k,1/ε) time

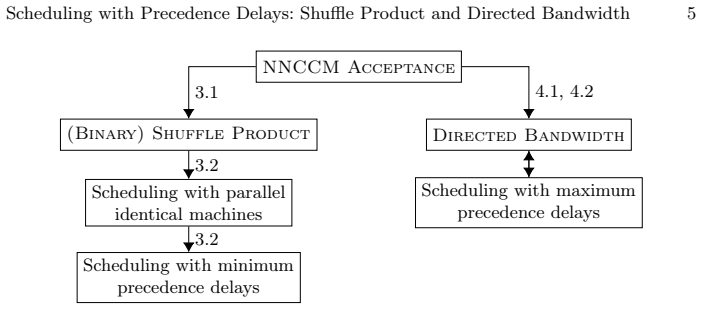

Results imply hardness for min and max delay scheduling parameterized by DAG width or delay value, and resolve trees case for bandwidth.

full image

full image

Exact and Approximate Algorithms for Polytree Learning

Beats prior O(3^n) exact bound and delivers polynomial-time approximations within factor k or 2 of optimal.

full image

full image

Counting Small Balanced (p,q)-bicliques in Signed Bipartite Graphs

In signed bipartite graphs a direct-enumeration method with early pruning beats adapted baselines on real data for small p and q.

An Optimal Algorithm for Cardinality-Constrained Diameter Partitioning

It proves a matching lower bound and computes all cardinalities together by solving a coloring problem on the maximum spanning tree.

Provable Accuracy Collapse in Embedding-Based Representations under Dimensionality Mismatch

Any representation using dimension below a fixed fraction of the ground-truth D must fail on at least 50 percent of distance comparisons.

full image

full image

For fixed k they support paths of length at least k or detours longer by k and even or odd length paths with 2 to the O of k cubed times log

A Poisson Process for Submodular Maximization

The method reaches the (1-1/e) guarantee for matroid-constrained maximization without discretization or rounding.

Four graph-based optimization problems compute the highest and lowest ranks a special group can occupy when similar items are allowed to be,

full image

full image

A Polynomial Kernel for Vertex Deletion to the Scattered Class of Proper Interval Graph and Trees

The vertex-deletion problem whose components must be proper interval graphs or trees reduces to an equivalent instance of size O(k to the 33

full image

full image

On the power of standard DFS and BFS

Single or double traversals suffice for trivially perfect, split, and proper interval graphs by checking pattern-avoiding orderings.

full image

full image

Undirected Replacement Paths: Dual Fault Reduces to Single Source

Tight weight-preserving reduction in undirected graphs gives matching algorithms and lower bounds

full image

full image

A fine-grained dichotomy for the center problem on Gromov hyperbolic graphs

Linear-time algorithm for the center problem on 1/2-hyperbolic graphs, with a conditional lower bound ruling it out for 1-hyperbolic graphs.

Randomized k-server in polynomial time

O(log k) random bits keep polynomial configurations on trees, delivering polylog ratio on arbitrary metrics.

A Unified Approach to Minimizing Symmetric Submodular Functions

A single parameter connects minimum adjacency and minimum degree orderings and produces an O(n cubed) contraction procedure.