Universal Neural Propagator: Learning Time Evolution in Many-Body Quantum Systems

Pith reviewed 2026-05-08 17:20 UTC · model grok-4.3

The pith

A single neural network learns the mapping from driving protocols to quantum time-evolution operators.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

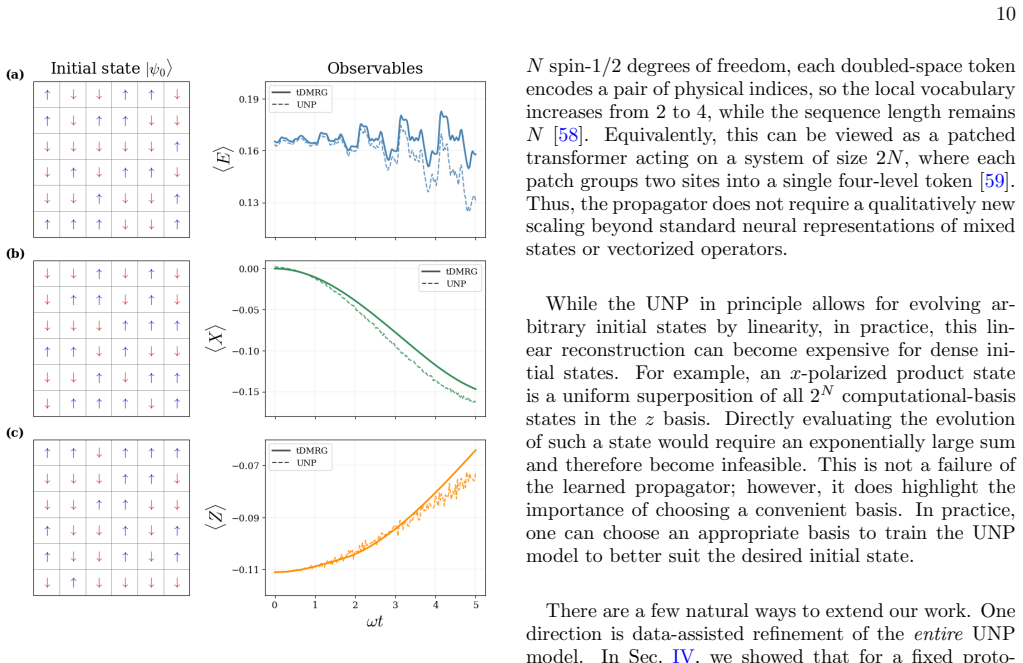

The Universal Neural Propagator is a single neural network that learns the functional mapping from driving protocols to time-evolution propagators. Once trained in a self-supervised manner, the same model simultaneously predicts dynamics across a continuous space of driving protocols and across an exponentially large Hilbert space of initial states. On the two-dimensional driven Ising model it remains accurate for product and entangled initial states, for in-distribution and out-of-distribution protocols, and for system sizes beyond the reach of exact diagonalization, while also allowing efficient fine-tuning from observable data.

What carries the argument

The Universal Neural Propagator itself, a neural network trained to output the time-evolution operator (propagator) as a direct function of the driving protocol.
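To make the object being learned concrete, here is a minimal sketch of the conventional computation the network is meant to replace: building the propagator for one driving protocol by brute force on a tiny lattice. The Hamiltonian form H(t) = -J Σ Z_i Z_j - h(t) Σ X_i, the 2x2 open-boundary lattice, the example protocol, and all function names are assumptions chosen for illustration; the paper's exact model and parameters are not given here.

```python
import numpy as np

X = np.array([[0, 1], [1, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)
I2 = np.eye(2, dtype=complex)

def site_op(op, site, n):
    """Embed a single-site operator at `site` in an n-spin Hilbert space."""
    out = np.array([[1.0 + 0j]])
    for k in range(n):
        out = np.kron(out, op if k == site else I2)
    return out

def ising_terms(lx, ly, J=1.0):
    """Return (H_zz, H_x) for an lx-by-ly lattice with open boundaries.

    Assumed form: H(t) = H_zz + h(t) * H_x, with H_zz = -J * sum ZZ over
    nearest neighbours and H_x = -sum X over sites.
    """
    n = lx * ly
    idx = lambda x, y: x * ly + y
    H_zz = np.zeros((2 ** n, 2 ** n), dtype=complex)
    for x in range(lx):
        for y in range(ly):
            if x + 1 < lx:
                H_zz -= J * site_op(Z, idx(x, y), n) @ site_op(Z, idx(x + 1, y), n)
            if y + 1 < ly:
                H_zz -= J * site_op(Z, idx(x, y), n) @ site_op(Z, idx(x, y + 1), n)
    H_x = -sum(site_op(X, i, n) for i in range(n))
    return H_zz, H_x

def exact_propagator(protocol, T, H_zz, H_x, steps=200):
    """Approximate U(T, 0) as a product of short-time exponentials.

    H(t) is frozen at the midpoint of each step; each step is exponentiated
    exactly through an eigendecomposition of the Hermitian matrix H.
    """
    dt = T / steps
    U = np.eye(H_zz.shape[0], dtype=complex)
    for k in range(steps):
        H = H_zz + protocol((k + 0.5) * dt) * H_x
        E, V = np.linalg.eigh(H)
        U_step = (V * np.exp(-1j * E * dt)) @ V.conj().T
        U = U_step @ U  # later steps act on the left
    return U

H_zz, H_x = ising_terms(2, 2)
protocol = lambda t: 1.0 + 0.5 * np.sin(2 * np.pi * t)  # hypothetical drive h(t)
U = exact_propagator(protocol, T=1.0, H_zz=H_zz, H_x=H_x)

# The same U evolves any initial state, product or entangled.
product = np.zeros(16, dtype=complex)
product[0] = 1.0
ghz = np.zeros(16, dtype=complex)
ghz[0] = ghz[-1] = 1.0 / np.sqrt(2.0)
print(np.linalg.norm(U @ product), np.linalg.norm(U @ ghz))
```

Every new protocol requires rerunning `exact_propagator`, and the matrix dimension grows as 2^n with the number of spins. That cost is what a learned protocol-to-propagator map would avoid, if the paper's claims hold.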

If this is right

- One trained model replaces repeated full simulations for every new driving protocol.

- The same model applies to any initial state without retraining or additional data.

- Simulations remain feasible at sizes where storing the full Hilbert space is impossible.

- Observable data alone can be used to adapt the model across all initial states.

- Transfer to protocols outside the original training distribution is possible without retraining from scratch.

Where Pith is reading between the lines

- The operator-learning route may reduce the cost of exploring many driving protocols in quantum control or many-body physics.

- The same idea could be tested on other time-dependent models such as driven spin chains or lattice gauge theories.

- If the mapping generalizes further, it might support rapid optimization of protocols by querying the model repeatedly rather than running separate simulations.

Load-bearing premise

A neural network can learn a functional mapping from protocols to propagators that remains accurate for protocols and system sizes never seen during training.

What would settle it

Compute exact propagators for out-of-distribution protocols on system sizes still reachable by exact diagonalization and check whether the UNP prediction error exceeds the reported accuracy threshold.
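A check of this kind could be scored as sketched below, assuming both the exact and the predicted propagator are available as dense matrices on a small system. The metric choices, the function names, and the perturbed unitary used as a stand-in for a model prediction are illustrative assumptions. The paper's own error metric and accuracy threshold are not stated here, so no threshold is hard-coded.

```python
import numpy as np

def propagator_fidelity(U_ref, U_pred):
    """Phase-insensitive overlap |Tr(U_ref^dagger U_pred)|^2 / d^2, in [0, 1]."""
    d = U_ref.shape[0]
    return abs(np.trace(U_ref.conj().T @ U_pred)) ** 2 / d ** 2

def state_infidelity(U_ref, U_pred, psi0):
    """1 - |<psi_ref|psi_pred>|^2 for one initial state psi0."""
    psi_ref = U_ref @ psi0
    psi_pred = U_pred @ psi0
    psi_pred = psi_pred / np.linalg.norm(psi_pred)  # prediction may not be unitary
    return 1.0 - abs(np.vdot(psi_ref, psi_pred)) ** 2

def random_unitary(d, rng):
    """Unitary matrix from the QR decomposition of a complex Gaussian matrix."""
    A = rng.normal(size=(d, d)) + 1j * rng.normal(size=(d, d))
    Q, _ = np.linalg.qr(A)
    return Q

rng = np.random.default_rng(0)
d = 16
U_ref = random_unitary(d, rng)  # stands in for an exact-diagonalization result

# Stand-in for a model prediction: the reference times a small unitary error.
G = rng.normal(size=(d, d)) + 1j * rng.normal(size=(d, d))
G = (G + G.conj().T) / 2  # Hermitian generator of the error
E, V = np.linalg.eigh(G)
U_pred = U_ref @ ((V * np.exp(-1j * 1e-2 * E)) @ V.conj().T)

psi0 = np.zeros(d, dtype=complex)
psi0[0] = 1.0
print(propagator_fidelity(U_ref, U_pred), state_infidelity(U_ref, U_pred, psi0))
```

The propagator-level overlap tests all initial states at once, while the state-level infidelity tests one trajectory. Reporting both for out-of-distribution protocols would separate operator-level accuracy from accuracy on a few selected states.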

Original abstract

Conventional approaches to simulating quantum many-body dynamics produce a single trajectory: if the Hamiltonian or the initial state is changed, the computation must be re-performed. Recent efforts toward foundation models have begun to address this limitation, yet existing methods transfer across either Hamiltonians or initial states, but not both. In this work, we introduce the Universal Neural Propagator (UNP), a single, unified model that learns the functional mapping from driving protocols to time-evolution propagators. Trained in an entirely self-supervised way, a single UNP model predicts dynamics across a function space of driving protocols and an exponentially large Hilbert space of initial states simultaneously. We benchmark on a two-dimensional driven Ising model and demonstrate the UNP's accuracy and transferability across product and entangled initial states, as well as for both in- and out-of-distribution driving protocols. The UNP remains accurate at system sizes beyond exact diagonalization, and can be efficiently fine-tuned across all initial states using observable data. By shifting the object of learning from quantum states to operators, this work opens a route toward transferable simulation of driven quantum matter.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces the Universal Neural Propagator (UNP), a single neural network that learns the functional mapping from driving protocols to time-evolution propagators. Trained entirely self-supervised, the model is claimed to simultaneously predict dynamics over a space of driving protocols and an exponentially large space of initial states (product and entangled). Benchmarks on the 2D driven Ising model assert accuracy and transferability for both in- and out-of-distribution protocols, with continued accuracy at system sizes beyond exact diagonalization and the option for fine-tuning on observable data.

Significance. If the generalization claims hold, the work would constitute a meaningful step toward operator-based foundation models for driven quantum many-body dynamics, potentially allowing a single trained model to replace repeated simulations for new protocols or initial states and thereby reducing computational overhead in quantum simulation.

major comments (2)

- [Abstract] Abstract and results sections: the central claim that the UNP remains accurate for out-of-distribution (OOD) protocols and Hilbert-space dimensions beyond exact diagonalization is not independently verified. The self-supervised loss provides no a priori guarantee against accumulation of approximation errors or failure to capture non-perturbative effects outside the training distribution. Without quantitative comparisons to an independent method (e.g., tensor-network evolution) on the same OOD or large instances, it is impossible to distinguish genuine operator learning from interpolation within the sampled manifold.

- [Methods] Training and evaluation details (presumably §3–4): the abstract reports accuracy and transferability yet omits full methods, training hyperparameters, error bars, and data-exclusion criteria. This absence directly undermines quantitative support for the transferability claims across protocols and system sizes.

minor comments (2)

- [Abstract] Clarify how the self-supervised loss explicitly enforces unitarity or consistency with the time-dependent Schrödinger equation; a brief derivation or pseudocode would improve reproducibility.

- The manuscript would benefit from additional citations to prior neural-network approaches for quantum dynamics and to existing work on transferable or foundation-model-style simulators.
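The consistency checks requested in the first minor comment could take the following form. This is a minimal sketch on a single-qubit toy problem, not the paper's loss: the residual definition, the finite-difference derivative, and all names are assumptions, and units with ħ = 1 are used. In a training setting the time derivative would more likely come from automatic differentiation.

```python
import numpy as np

X = np.array([[0, 1], [1, 0]], dtype=complex)
I2 = np.eye(2, dtype=complex)

def unitarity_defect(U):
    """Frobenius norm of U^dagger U - I; zero for an exactly unitary U."""
    return np.linalg.norm(U.conj().T @ U - np.eye(U.shape[0]))

def schrodinger_residual(U_of_t, H_of_t, times, dt=1e-4):
    """Mean squared Frobenius norm of i dU/dt - H(t) U(t).

    The derivative is taken with central differences; the residual vanishes
    (up to discretization error) when U(t) solves the Schrodinger equation.
    """
    res = []
    for t in times:
        dU = (U_of_t(t + dt) - U_of_t(t - dt)) / (2 * dt)
        res.append(np.linalg.norm(1j * dU - H_of_t(t) @ U_of_t(t)) ** 2)
    return float(np.mean(res))

# Toy drive: H(t) = cos(t) X, whose exact propagator is exp(-i sin(t) X).
H_of_t = lambda t: np.cos(t) * X
U_exact = lambda t: np.cos(np.sin(t)) * I2 - 1j * np.sin(np.sin(t)) * X
# A unitary but wrong candidate: rotation angle overestimated by 10%.
U_wrong = lambda t: np.cos(1.1 * np.sin(t)) * I2 - 1j * np.sin(1.1 * np.sin(t)) * X

times = np.linspace(0.0, 2.0, 21)
res_exact = schrodinger_residual(U_exact, H_of_t, times)
res_wrong = schrodinger_residual(U_wrong, H_of_t, times)
print(unitarity_defect(U_wrong(0.7)), res_exact, res_wrong)
```

The wrong candidate is exactly unitary yet has a clearly nonzero residual, which illustrates why unitarity alone does not certify the dynamics and why the referee asks for both properties to be addressed.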

Simulated Author's Rebuttal

We thank the referee for their careful reading of the manuscript and for the constructive feedback. We address each major comment below and describe the revisions we will implement.

- Referee: [Abstract] Abstract and results sections: the central claim that the UNP remains accurate for out-of-distribution (OOD) protocols and Hilbert-space dimensions beyond exact diagonalization is not independently verified. The self-supervised loss provides no a priori guarantee against accumulation of approximation errors or failure to capture non-perturbative effects outside the training distribution. Without quantitative comparisons to an independent method (e.g., tensor-network evolution) on the same OOD or large instances, it is impossible to distinguish genuine operator learning from interpolation within the sampled manifold.

  Authors: We agree that independent verification against other numerical methods is important for rigorously supporting the generalization claims, particularly for OOD protocols and system sizes beyond exact diagonalization. Our self-supervised training procedure samples a broad distribution of protocols and initial states to encourage learning of the underlying propagator rather than interpolation; however, we acknowledge that this does not constitute an a priori proof against error accumulation. In the revised manuscript we will add direct quantitative comparisons to tensor-network evolution (e.g., TEBD or MPO-based methods) for selected OOD protocols on system sizes where both approaches are feasible, including error metrics and analysis of non-perturbative regimes.

  revision: yes

- Referee: [Methods] Training and evaluation details (presumably §3–4): the abstract reports accuracy and transferability yet omits full methods, training hyperparameters, error bars, and data-exclusion criteria. This absence directly undermines quantitative support for the transferability claims across protocols and system sizes.

  Authors: We concur that complete methodological transparency is required to substantiate the reported accuracy and transferability. While some training details appear in the current Methods section and supplementary material, we will substantially expand this content in the revision. The updated manuscript will include a comprehensive table of all hyperparameters, statistical error bars obtained from multiple independent training runs, explicit definitions and quantitative criteria used to designate in-distribution versus out-of-distribution protocols, and a clear description of data-generation and exclusion procedures.

  revision: yes

Circularity Check

No circularity: empirical NN training on simulator data with reported generalization benchmarks

The paper trains a neural network via self-supervised loss on trajectories generated by conventional quantum simulators to approximate the functional mapping from driving protocols to propagators. This is standard empirical learning; the model's outputs on held-out or OOD protocols are not equivalent to the training inputs by construction. Accuracy claims for sizes beyond ED rely on fine-tuning with observable data and benchmarks, which are independent verification steps rather than reductions to the original fit. No self-definitional equations, fitted inputs renamed as predictions, or load-bearing self-citations appear in the provided abstract and description. The derivation chain remains self-contained as an ML method.

Axiom & Free-Parameter Ledger

free parameters (1)

- Neural network weights and biases

axioms (1)

- standard math: Time evolution of closed quantum systems is generated by unitary operators obtained from the time-dependent Hamiltonian.
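Written out, the axiom above is the standard textbook statement below, in units with $\hbar = 1$. The symbols $H(t)$, $U(T,0)$, $T$ and the time-ordering operator $\mathcal{T}$ are generic notation assumed here, not taken from the paper.

$$
i\,\partial_t U(t,0) = H(t)\,U(t,0), \qquad U(0,0) = \mathbb{1}, \qquad
U(T,0) = \mathcal{T}\exp\!\left(-i\int_0^T H(t)\,\mathrm{d}t\right), \qquad
U^\dagger(T,0)\,U(T,0) = \mathbb{1}.
$$

Because $|\psi(T)\rangle = U(T,0)\,|\psi(0)\rangle$ holds for every initial state, a model that outputs $U(T,0)$ for a given protocol covers all initial states at once. This is the sense in which the learning target shifts from states to operators.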

invented entities (1)

- Universal Neural Propagator (UNP): no independent evidence

