A Comparison of Massively Parallel Performance Portable Particle-in-Cell schemes for electrostatic kinetic plasma simulations

Pith reviewed 2026-05-08 15:19 UTC · model grok-4.3

The pith

The FFT solver is fastest in absolute time for electrostatic PIC simulations but is limited in applicability, while PIF offers a scalable, high-fidelity alternative

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

In absolute time the FFT solver is advantageous, but is limited in its applicability. All other field solvers in the PIC scheme are an order-of-magnitude more expensive in terms of time, but scale similarly to the FFT case in the electrostatic PIC context. The PIF scheme serves as a high fidelity alternative to standard PIC, and while it is costlier than the FFT-based PIC scheme, it shows excellent scalability on all the architectures.

What carries the argument

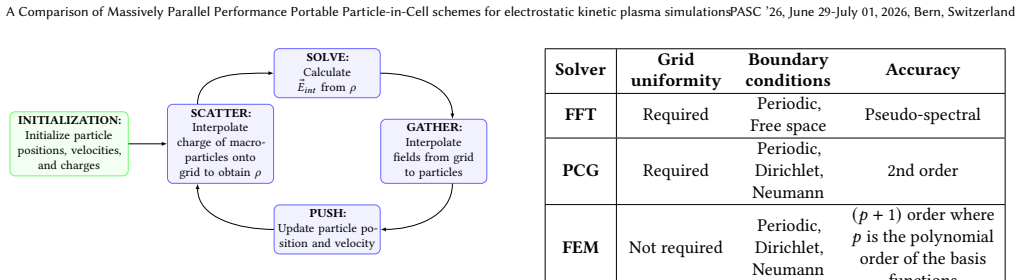

The solve phase of the PIC loop comparing FFT pseudo-spectral, matrix-free PCG, matrix-free FEM, and non-uniform-FFT-based PIF Poisson solvers inside the IPPL library on the Landau damping benchmark.
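To make the FFT pseudo-spectral step concrete, here is a minimal one-dimensional sketch of a periodic Poisson solve in Fourier space. It is not IPPL code: the function names are hypothetical, the problem is reduced to 1D, and a naive O(N^2) DFT from the standard library stands in for a real FFT library so the example runs without dependencies.

```python
import cmath
import math

def dft(values, sign):
    """Naive O(N^2) discrete Fourier transform; a stand-in for an FFT library."""
    n = len(values)
    return [
        sum(values[j] * cmath.exp(sign * 2j * math.pi * m * j / n) for j in range(n))
        for m in range(n)
    ]

def solve_poisson_periodic(rho, length):
    """Solve -phi'' = rho on a periodic 1D grid; the mean of phi is fixed to zero."""
    n = len(rho)
    rho_hat = dft(rho, sign=-1)
    phi_hat = [0j] * n  # the m = 0 mode stays zero
    for m in range(1, n):
        # Map the array index to a signed mode number (upper half = negative modes).
        mode = m if m <= n // 2 else m - n
        k = 2.0 * math.pi * mode / length
        phi_hat[m] = rho_hat[m] / (k * k)
    # Inverse transform, normalised by 1/N.
    return [value.real / n for value in dft(phi_hat, sign=+1)]

# Analytic check: rho = cos(x) on [0, 2*pi) has the solution phi = cos(x).
n_points = 32
length = 2.0 * math.pi
x = [length * j / n_points for j in range(n_points)]
rho = [math.cos(xj) for xj in x]
phi = solve_poisson_periodic(rho, length)
max_error = max(abs(p - math.cos(xj)) for p, xj in zip(phi, x))
```

The division by k^2 is the entire solve, which is why this approach is cheap. It also relies on a periodic domain and a uniform grid, which is the applicability limit the paper mentions.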

If this is right

- When the FFT solver applies, it should be selected to minimize wall-clock time per PIC step.

- Matrix-free PCG and FEM solvers deliver comparable scaling to FFT at roughly ten times the cost.

- The PIF scheme trades higher cost for high fidelity and strong scaling across GPU architectures.

- The IPPL implementations achieve performance portability on both AMD and Nvidia GPUs.

Where Pith is reading between the lines

- For problems with non-periodic boundaries where FFT cannot be used, PIF or the matrix-free solvers could substitute without large scalability penalties.

- The observed scaling of PIF suggests it may enable higher-resolution or longer-time plasma simulations on exascale machines.

- Benchmarking the same solvers on nonlinear or multi-scale plasma phenomena would test whether the reported performance ordering persists.

Load-bearing premise

The Landau damping test case with the chosen grid sizes and particle loading is representative enough to draw general conclusions about solver performance and portability for electrostatic kinetic plasma simulations.

What would settle it

Running the same solvers on a different electrostatic test problem such as two-stream instability and observing substantially different relative costs or scaling curves.

Original abstract

We compare different Poisson solvers within the context of an electrostatic Vlasov-Poisson system. These schemes are implemented as part of the IPPL (Independent Parallel Particle Layer) library (Frey et al., 2024), which provides performance portable and dimension independent building blocks for scientific simulations requiring particle-mesh methods, with Eulerian (mesh-based) and Lagrangian (particle-based) approaches. The simulation used to compare the performance and portability of the schemes is Landau damping, part of a set of mini-applications implemented to benchmark and showcase the capabilities of the IPPL library (Muralikrishnan et al., 2024). We use grid-sizes of $512^3$ and $1024^3$ with 8 particles per cell, running with different algorithms in the solve phase of the Particle-in-Cell (PIC) loop: a Fast Fourier Transform (FFT) pseudo-spectral solver, a matrix-free finite difference Preconditioned Conjugate Gradient (PCG) solver, and a matrix-free Finite Element (FEM) solver. We also compare these PIC schemes to the novel Particle-in-Fourier (PIF) scheme, which performs interpolations using non-uniform FFTs thereby avoiding a grid in the real space. We obtain results on different computing architectures, such as AMD GPUs (LUMI at CSC), and Nvidia GPUs (Alps at CSCS and JUWELS Booster at Jülich Supercomputing Center), showcasing portability. In terms of absolute time the FFT solver is advantageous, but is limited in its applicability. All other field solvers in the PIC scheme are an order-of-magnitude more expensive in terms of time, but scale similarly to the FFT case in the electrostatic PIC context. The PIF scheme serves as a high fidelity alternative to standard PIC, and while it is costlier than the FFT-based PIC scheme, it shows excellent scalability on all the architectures.
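The PIF idea of skipping the real-space grid can be sketched in one dimension as follows. Particle charges are summed directly onto Fourier modes, and the field is evaluated back at arbitrary positions from those modes. This is a direct-summation reference under stated assumptions, not the paper's implementation: a non-uniform FFT would replace both sums with a fast approximation, and the function names, the 1D reduction, and the sample particles are all hypothetical.

```python
import cmath
import math

def deposit_to_modes(positions, charges, length, max_mode):
    """Direct type-1 non-uniform DFT: rho_hat[m] = sum_p q_p * exp(-i k_m x_p)."""
    return {
        m: sum(q * cmath.exp(-1j * (2.0 * math.pi * m / length) * xp)
               for xp, q in zip(positions, charges))
        for m in range(-max_mode, max_mode + 1)
    }

def field_at(x, rho_hat, length):
    """Evaluate E = -dphi/dx at any position from the modes (direct type-2 sum)."""
    total = 0j
    for m, coeff in rho_hat.items():
        if m == 0:
            continue  # a neutralising background removes the mean charge
        k = 2.0 * math.pi * m / length
        # -phi'' = rho gives phi_hat = rho_hat / k^2, so E_hat = -i * rho_hat / k.
        total += (-1j * coeff / k) * cmath.exp(1j * k * x)
    return total.real / length

# Hypothetical off-grid particles; no mesh appears anywhere in the calculation.
positions = [0.4, 1.7, 3.1, 5.2]
charges = [1.0, -1.0, 0.5, -0.5]
length = 2.0 * math.pi
rho_hat = deposit_to_modes(positions, charges, length, max_mode=8)
e_field = [field_at(xp, rho_hat, length) for xp in positions]
```

The direct sums cost on the order of (particles x modes) operations, which is the expense a non-uniform FFT is meant to reduce.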

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript compares the performance and portability of Poisson solvers (FFT pseudo-spectral, matrix-free finite-difference PCG, matrix-free FEM) inside standard electrostatic PIC schemes and a novel Particle-in-Fourier (PIF) scheme that uses non-uniform FFTs to avoid a real-space grid. All methods are implemented via the IPPL library and benchmarked on the Landau damping problem at 512^3 and 1024^3 grids with 8 particles per cell, run on AMD (LUMI) and Nvidia (Alps, JUWELS) GPUs. The central claims are that FFT is fastest in absolute wall-clock time but limited in applicability, the other PIC solvers are roughly an order of magnitude more expensive yet exhibit comparable strong scaling, and PIF offers higher fidelity at increased cost while maintaining excellent scalability across architectures.

Significance. If the reported cost ratios and scaling behaviors prove robust, the work supplies concrete, hardware-grounded guidance for selecting field solvers in performance-portable particle-mesh codes for electrostatic plasma simulations. Direct timings on multiple GPU platforms and the use of an open library (IPPL) constitute practical strengths that could help practitioners weigh the trade-off between spectral accuracy, iterative solver overhead, and portability.

major comments (2)

- Abstract: the statements that 'All other field solvers in the PIC scheme are an order-of-magnitude more expensive' and 'scale similarly to the FFT case' are presented without tabulated timings, speed-up factors, iteration counts, or error bars, rendering the quantitative claims difficult to verify or reproduce.

- Abstract and Landau-damping benchmark description: the generalization to 'electrostatic kinetic plasma simulations' rests on a single linear, periodic, uniform-density test; in nonlinear or inhomogeneous regimes the iteration counts of PCG/FEM and the communication pattern of PIF NUFFTs can increase substantially, so the claimed order-of-magnitude cost ratio and scaling similarity lack a secure anchor for the broader class named in the title.
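The concern about iteration counts can be illustrated with a small matrix-free solver. The sketch below applies a 1D finite-difference stencil without assembling a matrix and runs plain conjugate gradients, reporting how many iterations were needed at two resolutions. It is deliberately simplified and every choice is an assumption for illustration: there is no preconditioner (so this is CG, not the paper's PCG), the domain is 1D with zero Dirichlet ends, and the right-hand side is a constant.

```python
import math

def apply_laplacian(u, h):
    """Apply -d^2/dx^2 with a 3-point stencil and zero Dirichlet ends, matrix-free."""
    n = len(u)
    out = [0.0] * n
    for i in range(n):
        left = u[i - 1] if i > 0 else 0.0
        right = u[i + 1] if i < n - 1 else 0.0
        out[i] = (2.0 * u[i] - left - right) / (h * h)
    return out

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def conjugate_gradient(rhs, h, tol=1e-10, max_iter=10_000):
    """Plain CG on the stencil operator; returns the solution and the iteration count."""
    x = [0.0] * len(rhs)
    r = list(rhs)  # residual for the zero initial guess
    p = list(r)
    rr = dot(r, r)
    rhs_norm = math.sqrt(rr)
    for iteration in range(1, max_iter + 1):
        ap = apply_laplacian(p, h)
        alpha = rr / dot(p, ap)
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * api for ri, api in zip(r, ap)]
        rr_new = dot(r, r)
        if math.sqrt(rr_new) <= tol * rhs_norm:
            return x, iteration
        p = [ri + (rr_new / rr) * pi for ri, pi in zip(r, p)]
        rr = rr_new
    return x, max_iter

def solve(n):
    """Solve -phi'' = 1 on (0, 1); the exact solution is phi = x * (1 - x) / 2."""
    h = 1.0 / (n + 1)
    grid = [(i + 1) * h for i in range(n)]
    phi, iterations = conjugate_gradient([1.0] * n, h)
    error = max(abs(p - xi * (1.0 - xi) / 2.0) for p, xi in zip(phi, grid))
    return iterations, error

coarse_iterations, coarse_error = solve(15)
fine_iterations, fine_error = solve(31)
print(f"n=15: {coarse_iterations} iterations, n=31: {fine_iterations} iterations")
```

Each iteration costs one stencil application plus a few reductions, so total solve time is roughly proportional to the iteration count. That is why the report asks for those counts to be tabulated alongside the timings.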

minor comments (1)

- A compact table listing absolute wall-clock times, strong-scaling efficiencies, and solver iteration counts for each architecture and grid size would improve readability and allow direct comparison of the reported order-of-magnitude difference.
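As a sketch of how the strong-scaling column of such a table could be derived from raw timings, the helper below computes speed-up and parallel efficiency relative to the smallest run. The function name is hypothetical and the timings are placeholders chosen for the example; they are not measurements from the paper.

```python
def strong_scaling_efficiency(timings):
    """Map {device_count: wall_clock_seconds} for a fixed problem size to
    {device_count: (speedup, efficiency)} relative to the smallest run."""
    base_devices = min(timings)
    base_time = timings[base_devices]
    table = {}
    for devices in sorted(timings):
        speedup = base_time / timings[devices]
        ideal = devices / base_devices  # perfect scaling would match this ratio
        table[devices] = (speedup, speedup / ideal)
    return table

# Placeholder timings in seconds, NOT data from the paper.
placeholder_timings = {8: 100.0, 16: 55.0, 32: 30.0}
scaling_table = strong_scaling_efficiency(placeholder_timings)
for devices, (speedup, efficiency) in scaling_table.items():
    print(f"{devices:>4} devices  speedup {speedup:5.2f}  efficiency {efficiency:6.1%}")
```

Reporting efficiency next to absolute time separates two questions the review keeps distinct: how expensive a solver is, and how well that expense shrinks as devices are added.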

Simulated Author's Rebuttal

We thank the referee for the constructive feedback and positive evaluation of the practical value of our performance-portability study. We address each major comment below, proposing targeted revisions where appropriate while defending the manuscript's scope and claims on the basis of the presented evidence.

Point-by-point responses

- Referee: Abstract: the statements that 'All other field solvers in the PIC scheme are an order-of-magnitude more expensive' and 'scale similarly to the FFT case' are presented without tabulated timings, speed-up factors, iteration counts, or error bars, rendering the quantitative claims difficult to verify or reproduce.

Authors: We agree that the abstract would benefit from greater quantitative grounding to improve verifiability. The full manuscript already reports detailed wall-clock timings, strong-scaling efficiencies, and iteration counts for the PCG and FEM solvers in the results section (Figures 3–5 and associated text for the 512³ and 1024³ cases on LUMI, Alps, and JUWELS). In the revised manuscript we will update the abstract to include representative quantitative statements (e.g., “approximately 8–12× higher wall-clock time” and “strong-scaling efficiencies above 80 % up to full machine scale”) while explicitly referencing the benchmark data and tables in the main text. This change preserves abstract length while making the claims directly traceable to the reported measurements.

Revision: yes

- Referee: Abstract and Landau-damping benchmark description: the generalization to 'electrostatic kinetic plasma simulations' rests on a single linear, periodic, uniform-density test; in nonlinear or inhomogeneous regimes the iteration counts of PCG/FEM and the communication pattern of PIF NUFFTs can increase substantially, so the claimed order-of-magnitude cost ratio and scaling similarity lack a secure anchor for the broader class named in the title.

Authors: Landau damping is a standard, widely adopted benchmark precisely because it isolates the essential kinetic and electrostatic features of the Vlasov–Poisson system while remaining computationally tractable for large-scale performance studies. The observed cost ratios and scaling behaviors arise from fundamental properties of the solvers (direct FFT versus matrix-free iterative methods) and the NUFFT communication pattern in PIF, which are implemented in the performance-portable IPPL library; these algorithmic traits are expected to govern relative performance even when iteration counts rise in more demanding regimes. Nevertheless, we acknowledge that the single-test scope limits the strength of the generalization. In the revised manuscript we will add an explicit limitations paragraph in the discussion section that (i) qualifies the claims to the tested linear periodic regime, (ii) notes that iteration counts and NUFFT overhead may increase in nonlinear or inhomogeneous cases, and (iii) recommends future benchmarks (e.g., two-stream instability or density-gradient problems) to extend the comparison. This revision anchors the title and abstract more securely without altering the reported results.

Revision: partial

Circularity Check

No circularity: performance claims rest on direct hardware timings for a fixed test case

The paper reports empirical wall-clock timings and scaling behavior obtained by executing the IPPL-based PIC and PIF codes on AMD and Nvidia GPUs for the Landau damping problem at 512^3 and 1024^3 grids with 8 ppc. No equations, fitted parameters, or predictions are derived; the central statements (FFT fastest but limited, other solvers ~10x slower yet similarly scaling, PIF high-fidelity with good scalability) are direct summaries of measured run times. Self-citations to the IPPL library (Frey et al. 2024) and mini-applications (Muralikrishnan et al. 2024) supply only the implementation framework and test definition; they do not supply the numerical results or the cost-ratio claims. Because the load-bearing content is fresh benchmark data rather than a closed derivation or self-referential fit, the analysis contains no circular steps.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: Landau damping is a representative test for electrostatic kinetic plasma behavior

Reference graph

Works this paper leans on

- [1] Andreas Adelmann, Pedro Calvo, Matthias Frey, Achim Gsell, Uldis Locans, Christof Metzger-Kraus, Nicole Neveu, Chris Rogers, Steve Russell, Suzanne Sheehy, Jochem Snuverink, and Daniel Winklehner. 2019. OPAL a Versatile Tool for Charged Particle Accelerator Simulations. arXiv:1905.06654 [physics] (May 2019). http://arxiv.org/abs/1905.06654

- [2] Andreas Adelmann, Pedro Calvo, Achim Gsell, Sriramkrishnan Muralikrishnan, Nicole Neveu, Philippe Piot, Chris Rogers, Mohsen Sadr, Jochem Snuverink, Jonathan Thompson, and Daniel Winklehner. 2025. The OPAL(X) Framework. https://opalx-project.github.io/Manual/

- [3] Jakob Ameres. 2018. Stochastic and spectral particle methods for plasma physics. Ph. D. Dissertation. Technische Universität München

- [4] Alan Ayala, Stanimire Tomov, Azzam Haidar, and Jack Dongarra. 2020. heffte: Highly efficient fft for exascale. In International Conference on Computational Science. Springer, 262–275

- [5] Alex H Barnett. 2021. Aliasing error of the $\exp(\beta\sqrt{1-z^2})$ kernel in the nonuniform fast Fourier transform. Applied and Computational Harmonic Analysis 51 (2021), 1–16

- [6] Alexander H Barnett, Jeremy Magland, and Ludvig af Klinteberg. 2019. A parallel nonuniform fast Fourier transform library based on an "exponential of semicircle" kernel. SIAM Journal on Scientific Computing 41, 5 (2019), C479–C504

- [7] C. K. Birdsall and A. B. Langdon. 2018. Plasma Physics via Computer Simulation. CRC Press, Boca Raton. https://doi.org/10.1201/9781315275048

- [8] Emily Bourne, Philippe Leleux, Katharina Kormann, Carola Kruse, Virginie Grandgirard, Yaman Güçlü, Martin J. Kühn, Ulrich Rüde, Eric Sonnendrücker, and Edoardo Zoni. 2023. Solver comparison for Poisson-like equations on tokamak geometries. J. Comput. Phys. 488 (Sept. 2023), 112249. https://doi.org/10.1016/j.jcp.2023.112249

- [9] Martin Campos Pinto, Jakob Ameres, Katharina Kormann, and Eric Sonnendrücker. 2024. On variational Fourier particle methods. Journal of Scientific Computing 101, 3 (2024), 68

- [10] H. Carter Edwards, Christian R. Trott, and Daniel Sunderland. 2014. Kokkos: Enabling manycore performance portability through polymorphic memory access patterns. J. Parallel and Distrib. Comput. 74, 12 (July 2014). https://doi.org/10.1016/j.jpdc.2014.07.003 Institution: Sandia National Lab. (SNL-NM), Albuquerque, NM (United States) Number: SAND-2013-5603J

- [11] Zane D. Crawford, O. H. Ramachandran, Scott O’Connor, John Luginsland, and B. Shanker. 2021. Higher Order Charge Conserving Electromagnetic Finite Element Particle in Cell Method. https://doi.org/10.48550/arXiv.2111.12411 arXiv:2111.12411 [physics]

- [12] Matthias Frey, Alessandro Vinciguerra, Sriramkrishnan Muralikrishnan, Sonali, vmontanaro, Mohsen, Andreas Adelmann, manuel5975p, and Felix Schurk. 2024. IPPL-framework/ippl: IPPL-3.2.0. https://doi.org/10.5281/zenodo.10878166

- [13] Amir Gholami, Dhairya Malhotra, Hari Sundar, and George Biros. 2016. FFT, FMM, or Multigrid? A comparative Study of State-Of-the-Art Poisson Solvers for Uniform and Nonuniform Grids in the Unit Cube. SIAM Journal on Scientific Computing 38, 3 (Jan. 2016), C280–C306. https://doi.org/10.1137/15M1010798 arXiv:1408.6497 [math]

- [14] R. Hiptmair. 2002. Finite elements in computational electromagnetism. Acta Numerica 11 (Jan. 2002), 237–339. https://doi.org/10.1017/S0962492902000041

- [15] R Hiptmair. 2024. Numerical Methods for (Partial) Differential Equations. (2024). Lecture Notes

- [16] R.W. Hockney and J. W Eastwood. 1988. Computer Simulation Using Particles. CRC Press

- [17] Martin Kronbichler, Dmytro Sashko, and Peter Munch. 2023. Enhancing data locality of the conjugate gradient method for high-order matrix-free finite-element implementations. The International Journal of High Performance Computing Applications 37, 2 (March 2023), 61–81. https://doi.org/10.1177/10943420221107880

- [18]

- [19] Matthew S Mitchell, Matthew T Miecnikowski, Gregory Beylkin, and Scott E Parker. 2019. Efficient Fourier basis particle simulation. J. Comput. Phys. 396 (2019), 837–847

- [20] Sriramkrishnan Muralikrishnan, Matthias Frey, Alessandro Vinciguerra, Michael Ligotino, Antoine J. Cerfon, Miroslav Stoyanov, Rahulkumar Gayatri, and Andreas Adelmann. 2024. Scaling and performance portability of the particle-in-cell scheme for plasma physics applications through mini-apps targeting exascale architectures. In Proceedings of the 2024 SIAM C...

- [21] Sriramkrishnan Muralikrishnan and Robert Speck. 2025. Error Analysis and Parallel Scaling Study of a Parareal Parallel-in-Time Integration Algorithm for Particle-in-Fourier Schemes. SIAM Journal on Scientific Computing (2025), S311–S336

- [22] A. Myers, A. Almgren, L.D. Amorim, J. Bell, L. Fedeli, L. Ge, K. Gott, D.P. Grote, M. Hogan, A. Huebl, R. Jambunathan, R. Lehe, C. Ng, M. Rowan, O. Shapoval, M. Thévenet, J.-L. Vay, H. Vincenti, E. Yang, N. Zaïm, W. Zhang, Y. Zhao, and E. Zoni. 2021. Porting WarpX to GPU-accelerated platforms. Parallel Comput. 108 (2021), 102833. https://doi.org/10.1016/j.p...

- [23] N Ohana, A Jocksch, E Lanti, TM Tran, S Brunner, C Gheller, F Hariri, and L Villard. 2016. Towards the optimization of a gyrokinetic Particle-In-Cell (PIC) code on large-scale hybrid architectures. In Journal of Physics: Conference Series, Vol. 775(1). IOP Publishing, 012010

- [24] Michael Pippig. 2016. Massively Parallel, Fast Fourier Transforms and Particle-Mesh Methods. Ph. D. Dissertation. Dissertation, Chemnitz, Technische Universität Chemnitz, 2015

- [25] Daniel Potts, Gabriele Steidl, and Manfred Tasche. 2001. Fast Fourier transforms for nonequispaced data: A tutorial. Modern Sampling Theory: Mathematics and Applications (2001), 247–270

- [26] L F Ricketson and A J Cerfon. 2016. Sparse grid techniques for particle-in-cell schemes. Plasma Physics and Controlled Fusion 59, 2 (Dec. 2016), 024002. https://doi.org/10.1088/1361-6587/59/2/024002

- [27] Bradley A Shadwick, Alexander B Stamm, and Evstati G Evstatiev. 2014. Variational formulation of macro-particle plasma simulation algorithms. Physics of Plasmas 21, 5 (2014)

- [28] Changxiao Shen, Antoine Cerfon, and Sriramkrishnan Muralikrishnan. 2024. A particle-in-Fourier method with semi-discrete energy conservation for non-periodic boundary conditions. Journal of computational physics 519 (2024), 113390

- [29] Gilbert Strang. 2006. Multigrid Methods, Mathematical Methods for Engineers II. (2006)

- [30] Xavier Sáez, Alejandro Soba, Jose M. Cela, Edilberto Sánchez, and Francisco Castejón. 2011. Particle-in-Cell Algorithms for Plasma Simulations on Heterogeneous Architectures. In 2011 19th International Euromicro Conference on Parallel, Distributed and Network-Based Processing. 385–389. https://doi.org/10.1109/PDP.2011.42
