Recognition: unknown

Low-Latency Out-of-Core ANN Search in High-Dimensional Space

Pith reviewed 2026-05-08 03:37 UTC · model grok-4.3

The pith

SkipDisk achieves 63 percent of HNSW latency at 20 percent memory footprint for out-of-core high-dimensional ANN search.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

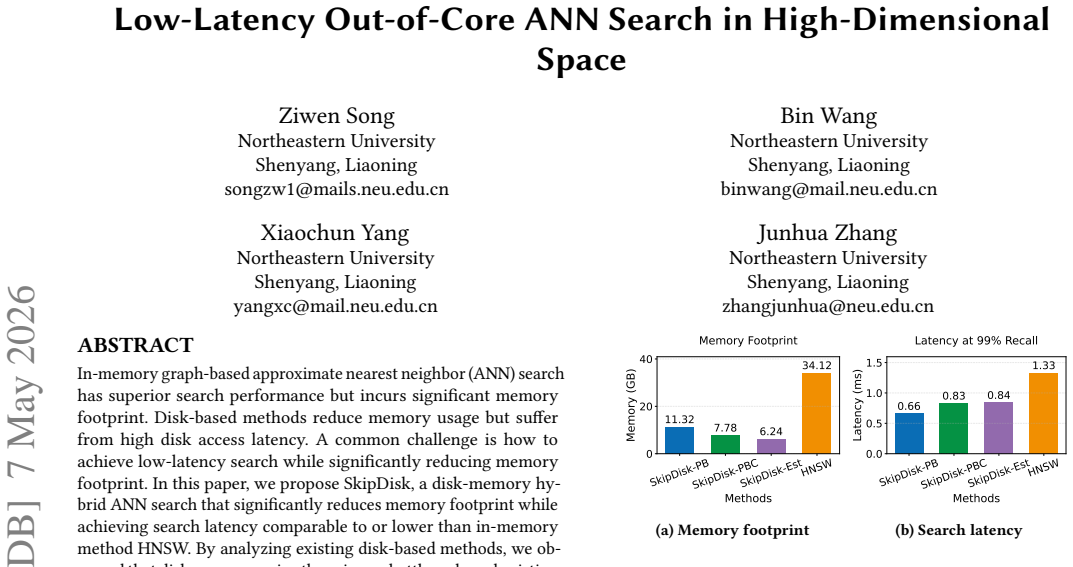

SkipDisk equips each point with its own dedicated pivot to strengthen the triangle inequality lower bound, then applies an estimation-based filter to eliminate most irrelevant disk accesses. A three-level pruning strategy keeps only informative data resident in memory, and neighbor nodes are stored in memory to enable asynchronous I/O that decouples the in-memory search from disk operations. These steps together produce search latency equal to 85 percent of HNSW at approximately 10 percent memory footprint or 63 percent of HNSW latency at around 20 percent memory footprint.

What carries the argument

Dedicated per-point pivot that tightens the triangle-inequality lower bound, paired with estimation-based filtering and three-level data pruning to control disk accesses and memory residency.

If this is right

- Disk accesses drop sharply because the tightened lower bound filters out most irrelevant points before any read occurs.

- Memory footprint falls to 10-20 percent of a pure in-memory graph while nearest-neighbor accuracy remains comparable.

- Asynchronous I/O hides remaining disk latency by letting in-memory search continue while neighbor nodes are fetched.

- The hybrid storage model scales ANN to datasets larger than available RAM without reverting to slow disk-only performance.

Where Pith is reading between the lines

- If the per-point pivot choice works across many datasets, the same bound-tightening idea could improve filtering in other out-of-core indexes such as range or k-NN variants.

- Keeping neighbor nodes in memory while pruning the rest of the graph to three levels may suggest selective caching policies for other I/O-bound database operators.

- The latency gains might increase or diminish at higher dimensions; running controlled tests that vary dimensionality would test the method's robustness.

Load-bearing premise

The per-point pivot produces a lower bound tight enough that the filter removes most irrelevant disk accesses without dropping true neighbors and the three-level pruning retains enough information to keep search quality intact.

What would settle it

On a standard high-dimensional benchmark, measure that either recall falls more than a few percent below HNSW at the claimed memory levels or the observed disk access count fails to explain the reported latency reduction.

Figures

read the original abstract

In-memory graph-based approximate nearest neighbor (ANN) search has superior search performance but incurs significant memory footprint. Disk-based methods reduce memory usage but suffer from high disk access latency. A common challenge is how to achieve low-latency search while significantly reducing memory footprint. In this paper, we propose SkipDisk, a disk-memory hybrid ANN search that significantly reduces memory footprint while achieving search latency comparable to or lower than in-memory method HNSW. By analyzing existing disk-based methods, we observed that disk access remains the primary bottleneck, and existing lower bound based filtering methods are two loose to effectively reduce disk access. Therefore, we design SkipDisk to achieve tight lower bound with low memory footprint to reduce the search latency. First, we design a dedicated pivot for each point to improve the lower bound of the triangle inequality for effective filtering. We further design an estimation-based approach based on this lower bound. Second, to reduce the memory footprint, we employ a three-level data pruning strategy to preserve informative data in memory. Third, to further reduce search latency, we design an asynchronous I/O strategy based on the decoupling of in-memory search and disk access by storing neighbor nodes in memory. Experiments show that our method achieves a latency of 85 of HNSW's latency with approximately 10 memory footprint, and a latency to 63 of HNSW's with a slightly higher memory footprint of around 20.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces SkipDisk, a disk-memory hybrid ANN search method for high-dimensional data. It uses a dedicated per-point pivot to tighten triangle-inequality lower bounds, an estimation-based filter to prune disk accesses, a three-level pruning strategy to keep memory low, and asynchronous I/O that keeps neighbor nodes in RAM. The central claim is that the resulting system delivers 85% of HNSW latency at ~10% memory footprint and 63% of HNSW latency at ~20% memory footprint.

Significance. If the latency and memory claims are substantiated with reproducible experiments, SkipDisk would address a practical bottleneck in large-scale ANN: how to keep search latency close to in-memory graph indexes while dropping the memory requirement by an order of magnitude. The per-point pivot plus estimation filter is a concrete, if incremental, idea for tightening bounds without storing full distance matrices.

major comments (3)

- [Abstract, §4] Abstract and §4 (Experiments): the headline performance numbers (85% and 63% of HNSW latency at 10% and 20% memory) are stated without any description of the datasets, their dimensionality, the recall@K target, the HNSW baseline parameters, or the hardware (SSD vs. HDD, page size, etc.). Without these, the central latency/memory trade-off cannot be evaluated or reproduced.

- [§3.1] §3.1 (Dedicated pivot): the claim that a per-point pivot yields a sufficiently tight lower bound for the estimation-based filter to remove most irrelevant disk accesses rests on an untested assumption. No measurements of bound tightness (e.g., average ratio of lower bound to true distance), filter false-negative rate, or actual disk-I/O reduction factor are reported, leaving the method vulnerable to the well-known distance-concentration problem in high dimensions.

- [§3.2] §3.2 (Three-level pruning): the strategy is described at a high level, but no analysis shows how much information is retained versus discarded at each level, nor any guarantee that true neighbors survive the pruning with high probability. This directly affects whether the 10-20% memory figures can be achieved without quality loss.

minor comments (2)

- [Abstract] Abstract contains several typographical omissions: '85 of HNSW's latency' should read '85% of HNSW's latency'; 'a latency to 63 of HNSW's' should read '63% of HNSW's latency'; '10 memory footprint' and '20 memory footprint' are missing the percent sign.

- [§3] Notation for the per-point pivot and the estimation-based filter is introduced without a clear summary table or pseudocode, making the algorithmic description harder to follow than necessary.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback, which identifies key areas where additional details and analysis will strengthen the manuscript. We address each major comment below and commit to revisions that improve clarity and substantiation of the claims without altering the core contributions.

read point-by-point responses

-

Referee: [Abstract, §4] Abstract and §4 (Experiments): the headline performance numbers (85% and 63% of HNSW latency at 10% and 20% memory) are stated without any description of the datasets, their dimensionality, the recall@K target, the HNSW baseline parameters, or the hardware (SSD vs. HDD, page size, etc.). Without these, the central latency/memory trade-off cannot be evaluated or reproduced.

Authors: We agree that these details are essential for evaluation and reproducibility. In the revised manuscript, we will expand the abstract to include a brief mention of the datasets (sizes and dimensionalities), target recall@K, and hardware context. Section 4 will be augmented with a dedicated experimental setup subsection specifying dataset characteristics, exact recall targets (e.g., recall@10), HNSW parameters (M and efConstruction values), and hardware details including SSD type, page size, and I/O configuration. This will directly enable reproduction of the reported latency/memory trade-offs. revision: yes

-

Referee: [§3.1] §3.1 (Dedicated pivot): the claim that a per-point pivot yields a sufficiently tight lower bound for the estimation-based filter to remove most irrelevant disk accesses rests on an untested assumption. No measurements of bound tightness (e.g., average ratio of lower bound to true distance), filter false-negative rate, or actual disk-I/O reduction factor are reported, leaving the method vulnerable to the well-known distance-concentration problem in high dimensions.

Authors: The original submission did not include explicit quantitative measurements of bound tightness or filter performance, which leaves the assumption insufficiently supported. While end-to-end results in §4 show effective disk-access reduction and maintained recall, we will add a new analysis subsection (or appendix) reporting the average lower-bound-to-true-distance ratio, estimation-filter false-negative rates, and measured disk-I/O reduction factors on the evaluated high-dimensional datasets. This will provide direct evidence that the dedicated pivot produces sufficiently tight bounds despite distance concentration. revision: yes

-

Referee: [§3.2] §3.2 (Three-level pruning): the strategy is described at a high level, but no analysis shows how much information is retained versus discarded at each level, nor any guarantee that true neighbors survive the pruning with high probability. This directly affects whether the 10-20% memory figures can be achieved without quality loss.

Authors: We acknowledge that the high-level description of the three-level pruning lacks supporting analysis on retention rates and neighbor survival. In the revision, we will add empirical measurements quantifying the fraction of data retained/discarded at each pruning level and the empirical survival probability of true nearest neighbors. Additional experiments will demonstrate that recall is preserved at the reported 10-20% memory footprints, and we will discuss any probabilistic guarantees derivable from the estimation filter. These additions will substantiate that quality is maintained. revision: yes

Circularity Check

No significant circularity; performance claims are experimental comparisons

full rationale

The paper introduces SkipDisk with three design elements (per-point pivots for tighter triangle-inequality bounds, estimation-based filtering, three-level pruning, and asynchronous I/O) and validates them via direct latency and memory measurements against HNSW. No equations, fitted parameters renamed as predictions, or self-citation chains appear in the provided text; the central claims reduce to empirical results rather than any self-referential derivation or ansatz smuggled through prior work. The reader's assessment of score 2.0 aligns with the absence of load-bearing self-referential steps.

Axiom & Free-Parameter Ledger

free parameters (1)

- pruning thresholds

axioms (1)

- standard math Triangle inequality holds for the distance metric used

invented entities (1)

-

Dedicated pivot per point

no independent evidence

Reference graph

Works this paper leans on

-

[1]

2026.Anserini: OpenAI-ada2 Embeddings for MS MARCO Passage.Retrieved April 17, 2026 from https://github.com/castorini/anserini/blob/master/docs/ experiments-msmarco-passage-openai-ada2.md?utm_source=chatgpt.com

2026

-

[2]

2026.argilla-warehouse/personahub-fineweb-edu-4-embeddings.Retrieved April 17, 2026 from https://huggingface.co/datasets/argilla-warehouse/personahub- fineweb-edu-4-embeddings

2026

-

[3]

Retrieved April 17, 2026 from https://github.com/axboe/ liburing

2026.axboe/liburing. Retrieved April 17, 2026 from https://github.com/axboe/ liburing

2026

-

[4]

2026.bigcode/stack-exchange-embeddings-20230914.Retrieved April 17, 2026 from https://huggingface.co/datasets/bigcode/stack-exchange-embeddings- 20230914

2026

-

[5]

2026.CohereLabs/msmarco-v2.1-embed-english-v3.Retrieved April 17, 2026 from https://huggingface.co/datasets/CohereLabs/msmarco-v2.1-embed-english-v3

2026

-

[6]

Retrieved April 17, 2026 from https://huggingface.co/

2026.Hugging Face. Retrieved April 17, 2026 from https://huggingface.co/

2026

-

[7]

Retrieved April 17, 2026 from https://en.wikipedia.org/wiki/ IEEE_754

2026.IEEE 754. Retrieved April 17, 2026 from https://en.wikipedia.org/wiki/ IEEE_754

2026

-

[8]

Alexandr Andoni and Piotr Indyk. 2008. Near-optimal hashing algorithms for approximate nearest neighbor in high dimensions.Commun. ACM51, 1 (2008), 117–122

2008

-

[9]

Alexandr Andoni and Ilya Razenshteyn. 2015. Optimal data-dependent hashing for approximate near neighbors. InProceedings of the forty-seventh annual ACM symposium on Theory of computing. 793–801

2015

-

[10]

Fabien André, Anne-Marie Kermarrec, and Nicolas Le Scouarnec. 2015. Cache locality is not enough: High-Performance Nearest Neighbor Search with Product Quantization Fast Scan.Proc. VLDB Endow.9, 4 (2015), 288–299

2015

-

[11]

Ilias Azizi, Karima Echihabi, and Themis Palpanas. 2025. Graph-Based Vector Search: An Experimental Evaluation of the State-of-the-Art.Proc. ACM Manag. Data3, 1, Article 43 (Feb. 2025), 31 pages. https://doi.org/10.1145/3709693 Ziwen Song, Bin Wang, Xiaochun Yang, and Junhua Zhang

- [12]

-

[13]

Kakade, and John Langford

Alina Beygelzimer, Sham M. Kakade, and John Langford. 2006. Cover trees for nearest neighbor. InMachine Learning, Proceedings of the Twenty-Third In- ternational Conference (ICML 2006), Pittsburgh, Pennsylvania, USA, June 25-29, 2006 (ACM International Conference Proceeding Series), William W. Cohen and Andrew W. Moore (Eds.), Vol. 148. ACM, 97–104

2006

-

[14]

Cheng Chen, Chenzhe Jin, Yunan Zhang, Sasha Podolsky, Chun Wu, Szu- Po Wang, Eric Hanson, Zhou Sun, Robert Walzer, and Jianguo Wang. 2024. SingleStore-V: An Integrated Vector Database System in SingleStore.Proc. VLDB Endow.17, 12 (2024), 3772–3785. https://doi.org/10.14778/3685800.3685805

-

[15]

Chen, Wei-Cheng Chang, Jyun-Yu Jiang, Hsiang-Fu Yu, Inderjit S

Patrick H. Chen, Wei-Cheng Chang, Jyun-Yu Jiang, Hsiang-Fu Yu, Inderjit S. Dhillon, and Cho-Jui Hsieh. 2023. FINGER: Fast Inference for Graph-based Approximate Nearest Neighbor Search. InProceedings of the ACM Web Conference 2023, WWW 2023, Austin, TX, USA, 30 April 2023 - 4 May 2023, Ying Ding, Jie Tang, Juan F. Sequeda, Lora Aroyo, Carlos Castillo, and ...

2023

-

[16]

Qi Chen, Bing Zhao, Haidong Wang, Mingqin Li, Chuanjie Liu, Zengzhong Li, Mao Yang, and Jingdong Wang. 2021. SPANN: Highly-efficient Billion-scale Approximate Nearest Neighborhood Search. InAdvances in Neural Information Processing Systems 34: Annual Conference on Neural Information Processing Sys- tems 2021, NeurIPS 2021, December 6-14, 2021, virtual, Ma...

2021

-

[17]

Weijian Chen, Haotian Liu, Yangshen Deng, Long Xiang, Liang Huang, Gezi Li, and Bo Tang. 2026. AlayaLaser: Efficient Index Layout and Search Strategy for Large-scale High-dimensional Vector Similarity Search.CoRRabs/2602.23342 (2026). https://doi.org/10.48550/ARXIV.2602.23342 arXiv:2602.23342

-

[18]

Paolo Ciaccia, Marco Patella, and Pavel Zezula. 1997. M-tree: An Efficient Ac- cess Method for Similarity Search in Metric Spaces. InVLDB’97, Proceedings of 23rd International Conference on Very Large Data Bases, August 25-29, 1997, Athens, Greece, Matthias Jarke, Michael J. Carey, Klaus R. Dittrich, Frederick H. Lochovsky, Pericles Loucopoulos, and Manfr...

1997

-

[19]

Mirrokni

Mayur Datar, Nicole Immorlica, Piotr Indyk, and Vahab S. Mirrokni. 2004. Locality-sensitive hashing scheme based on p-stable distributions. InProceedings of the 20th ACM Symposium on Computational Geometry, Brooklyn, New York, USA, June 8-11, 2004, Jack Snoeyink and Jean-Daniel Boissonnat (Eds.). ACM, 253–262

2004

-

[20]

Liwei Deng, Penghao Chen, Ximu Zeng, Tianfu Wang, Yan Zhao, and Kai Zheng. 2024. Efficient Data-aware Distance Comparison Operations for High- Dimensional Approximate Nearest Neighbor Search.Proc. VLDB Endow.18, 3 (2024), 812–821. https://doi.org/10.14778/3712221.3712244

-

[21]

Wei Dong, Moses Charikar, and Kai Li. 2011. Efficient k-nearest neighbor graph construction for generic similarity measures. InProceedings of the 20th Interna- tional Conference on World Wide Web, WWW 2011, Hyderabad, India, March 28 - April 1, 2011, Sadagopan Srinivasan, Krithi Ramamritham, Arun Kumar, M. P. Ravindra, Elisa Bertino, and Ravi Kumar (Eds.)...

2011

-

[22]

Razenshteyn, and Tal Wagner

Yihe Dong, Piotr Indyk, Ilya P. Razenshteyn, and Tal Wagner. 2020. Learning Space Partitions for Nearest Neighbor Search. In8th International Conference on Learning Representations, ICLR 2020, Addis Ababa, Ethiopia, April 26-30, 2020. OpenReview.net. https://openreview.net/forum?id=rkenmREFDr

2020

-

[23]

Wenqi Fan, Yujuan Ding, Liangbo Ning, Shijie Wang, Hengyun Li, Dawei Yin, Tat-Seng Chua, and Qing Li. 2024. A Survey on RAG Meeting LLMs: To- wards Retrieval-Augmented Large Language Models. InProceedings of the 30th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, KDD 2024, Barcelona, Spain, August 25-29, 2024, Ricardo Baeza-Yates and France...

-

[24]

Cong Fu, Changxu Wang, and Deng Cai. 2021. High dimensional similarity search with satellite system graph: Efficiency, scalability, and unindexed query compatibility.IEEE Transactions on Pattern Analysis and Machine Intelligence44, 8 (2021), 4139–4150

2021

-

[25]

Cong Fu, Chao Xiang, Changxu Wang, and Deng Cai. 2019. Fast Approximate Nearest Neighbor Search With The Navigating Spreading-out Graph.Proc. VLDB Endow.12, 5 (2019), 461–474

2019

-

[26]

Junhao Gan, Jianlin Feng, Qiong Fang, and Wilfred Ng. 2012. Locality-sensitive hashing scheme based on dynamic collision counting. InProceedings of the ACM SIGMOD International Conference on Management of Data, SIGMOD 2012, Scottsdale, AZ, USA, May 20-24, 2012, K. Selçuk Candan, Yi Chen, Richard T. Snodgrass, Luis Gravano, and Ariel Fuxman (Eds.). ACM, 541–552

2012

-

[27]

Jianyang Gao and Cheng Long. 2023. High-Dimensional Approximate Nearest Neighbor Search: with Reliable and Efficient Distance Comparison Operations. Proc. ACM Manag. Data1, 2 (2023), 137:1–137:27

2023

-

[28]

Jianyang Gao and Cheng Long. 2024. RaBitQ: Quantizing High-Dimensional Vectors with a Theoretical Error Bound for Approximate Nearest Neighbor Search.Proc. ACM Manag. Data2, 3, Article 167 (May 2024), 27 pages. https: //doi.org/10.1145/3654970

-

[29]

Tiezheng Ge, Kaiming He, Qifa Ke, and Jian Sun. 2013. Optimized Product Quantization for Approximate Nearest Neighbor Search. In2013 IEEE Conference on Computer Vision and Pattern Recognition, Portland, OR, USA, June 23-28, 2013. IEEE Computer Society, 2946–2953

2013

-

[30]

Hao Guo and Youyou Lu. 2025. Achieving Low-Latency Graph-Based Vector Search via Aligning Best-First Search Algorithm with SSD. In19th USENIX Symposium on Operating Systems Design and Implementation, OSDI 2025, Boston, MA, USA, July 7-9, 2025, Lidong Zhou and Yuanyuan Zhou (Eds.). USENIX Association, 171–186. https://www.usenix.org/conference/osdi25/prese...

2025

-

[31]

Rentong Guo, Xiaofan Luan, Long Xiang, Xiao Yan, Xiaomeng Yi, Jigao Luo, Qianya Cheng, Weizhi Xu, Jiarui Luo, Frank Liu, Zhenshan Cao, Yanliang Qiao, Ting Wang, Bo Tang, and Charles Xie. 2022. Manu: A Cloud Native Vector Database Management System.Proc. VLDB Endow.15, 12 (2022), 3548–3561

2022

-

[32]

Kiana Hajebi, Yasin Abbasi-Yadkori, Hossein Shahbazi, and Hong Zhang. 2011. Fast Approximate Nearest-Neighbor Search with k-Nearest Neighbor Graph. InIJCAI 2011, Proceedings of the 22nd International Joint Conference on Artifi- cial Intelligence, Barcelona, Catalonia, Spain, July 16-22, 2011, Toby Walsh (Ed.). IJCAI/AAAI, 1312–1317

2011

-

[33]

Ben Harwood and Tom Drummond. 2016. FANNG: Fast Approximate Nearest Neighbour Graphs. In2016 IEEE Conference on Computer Vision and Pattern Recognition, CVPR 2016, Las Vegas, NV, USA, June 27-30, 2016. IEEE Computer Society, 5713–5722. https://doi.org/10.1109/CVPR.2016.616

-

[34]

Chengying Huan, Lizheng Chen, Zhengyi Yang, Shaonan Ma, Rong Gu, Renjie Yao, Zhibin Wang, Mingxing Zhang, Fang Xi, Jie Tao, Gang Zhang, Guihai Chen, and Chen Tian. 2025. OrchANN: A Unified I/O Orchestration Framework for Skewed Out-of-Core Vector Search.CoRRabs/2512.22838 (2025). https: //doi.org/10.48550/ARXIV.2512.22838 arXiv:2512.22838

-

[35]

Qiang Huang, Jianlin Feng, Yikai Zhang, Qiong Fang, and Wilfred Ng. 2015. Query-Aware Locality-Sensitive Hashing for Approximate Nearest Neighbor Search.Proc. VLDB Endow.9, 1 (2015), 1–12

2015

-

[36]

Piotr Indyk and Rajeev Motwani. 1998. Approximate nearest neighbors: towards removing the curse of dimensionality. InProceedings of the thirtieth annual ACM symposium on Theory of computing. 604–613

1998

-

[37]

Suhas Jayaram Subramanya, Fnu Devvrit, Harsha Vardhan Simhadri, Ravishankar Krishnawamy, and Rohan Kadekodi. 2019. Diskann: Fast accurate billion-point nearest neighbor search on a single node.Advances in Neural Information Processing Systems32 (2019)

2019

-

[38]

Hervé Jégou, Romain Tavenard, Matthijs Douze, and Laurent Amsaleg. 2011. Searching in one billion vectors: Re-rank with source coding. InProceedings of the IEEE International Conference on Acoustics, Speech, and Signal Processing, ICASSP 2011, May 22-27, 2011, Prague Congress Center, Prague, Czech Republic. IEEE, 861–864

2011

-

[39]

Haodi Jiang, Hao Guo, Minhui Xie, Jiwu Shu, and Youyou Lu. 2025. High- Throughput, Cost-Effective Billion-Scale Vector Search with a Single GPU.Proc. ACM Manag. Data3, 6 (2025), 1–27. https://doi.org/10.1145/3769799

-

[40]

Yannis Kalantidis and Yannis Avrithis. 2014. Locally Optimized Product Quan- tization for Approximate Nearest Neighbor Search. In2014 IEEE Conference on Computer Vision and Pattern Recognition, CVPR 2014, Columbus, OH, USA, June 23-28, 2014. IEEE Computer Society, 2329–2336

2014

-

[41]

Dingyi Kang, Dongming Jiang, Hanshen Yang, Hang Liu, and Bingzhe Li. 2025. Scalable Disk-Based Approximate Nearest Neighbor Search with Page-Aligned Graph.CoRRabs/2509.25487 (2025). https://doi.org/10.48550/ARXIV.2509.25487 arXiv:2509.25487

-

[42]

Leonardo Kuffo, Elena Krippner, and Peter Boncz. 2025. PDX: A Data Layout for Vector Similarity Search.Proc. ACM Manag. Data3, 3, Article 196 (June 2025), 26 pages. https://doi.org/10.1145/3725333

-

[43]

Binhong Li, Xiao Yan, and Shangqi Lu. 2025. Fast-Convergent Proximity Graphs for Approximate Nearest Neighbor Search.CoRRabs/2510.05975 (2025). https: //doi.org/10.48550/ARXIV.2510.05975 arXiv:2510.05975

-

[44]

Hui Li, Shiyuan Deng, Xiao Yan, Xiangyu Zhi, and James Cheng. 2025. SAQ: Pushing the Limits of Vector Quantization through Code Adjustment and Di- mension Segmentation.Proc. ACM Manag. Data3, 6 (2025), 1–25. https: //doi.org/10.1145/3769824

-

[45]

Sen Li, Fuyu Lv, Taiwei Jin, Guli Lin, Keping Yang, Xiaoyi Zeng, Xiao-Ming Wu, and Qianli Ma. 2021. Embedding-based Product Retrieval in Taobao Search. In KDD ’21: The 27th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, Virtual Event, Singapore, August 14-18, 2021, Feida Zhu, Beng Chin Ooi, and Chunyan Miao (Eds.). ACM, 3181–3189. https://d...

-

[46]

Kejing Lu, Mineichi Kudo, Chuan Xiao, and Yoshiharu Ishikawa. 2021. HVS: Hi- erarchical Graph Structure Based on Voronoi Diagrams for Solving Approximate Nearest Neighbor Search.Proc. VLDB Endow.15, 2 (2021), 246–258

2021

-

[47]

Kejing Lu, Chuan Xiao, and Yoshiharu Ishikawa. 2024. Probabilistic Routing for Graph-Based Approximate Nearest Neighbor Search. InForty-first International Conference on Machine Learning, ICML 2024, Vienna, Austria, July 21-27, 2024 (Proceedings of Machine Learning Research), Ruslan Salakhutdinov, Zico Kolter, Katherine A. Heller, Adrian Weller, Nuria Oli...

2024

-

[48]

Yu A Malkov and Dmitry A Yashunin. 2018. Efficient and robust approximate nearest neighbor search using hierarchical navigable small world graphs.IEEE transactions on pattern analysis and machine intelligence42, 4 (2018), 824–836

2018

-

[49]

James Jie Pan, Jianguo Wang, and Guoliang Li. 2024. Survey of vector database management systems.VLDB J.33, 5 (2024), 1591–1615. https://doi.org/10.1007/ S00778-024-00864-X

2024

-

[50]

Yun Peng, Byron Choi, Tsz Nam Chan, Jianye Yang, and Jianliang Xu. 2023. Efficient Approximate Nearest Neighbor Search in Multi-dimensional Databases. Proc. ACM Manag. Data1, 1 (2023), 54:1–54:27

2023

-

[51]

Chanop Silpa-Anan and Richard I. Hartley. 2008. Optimised KD-trees for fast im- age descriptor matching. In2008 IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR 2008), 24-26 June 2008, Anchorage, Alaska, USA. IEEE Computer Society

2008

-

[52]

Yitong Song, Pengcheng Zhang, Chao Gao, Bin Yao, Kai Wang, Zongyuan Wu, and Lin Qu. 2025. TRIM: Accelerating High-Dimensional Vector Similarity Search with Enhanced Triangle-Inequality-Based Pruning.Proc. ACM Manag. Data3, 6 (2025), 1–26. https://doi.org/10.1145/3769838

-

[53]

Ziwen Song, Bin Wang, and Xiaochun Yang. 2025. Accelerating High- Dimensional ANN Search via Skipping Redundant Distance Computations.Proc. ACM Manag. Data3, 6 (2025), 1–29. https://doi.org/10.1145/3769753

- [54]

-

[55]

Yufei Tao, Ke Yi, Cheng Sheng, and Panos Kalnis. 2009. Quality and efficiency in high dimensional nearest neighbor search. InProceedings of the ACM SIGMOD International Conference on Management of Data, SIGMOD 2009, Providence, Rhode Island, USA, June 29 - July 2, 2009, Ugur Çetintemel, Stanley B. Zdonik, Donald Kossmann, and Nesime Tatbul (Eds.). ACM, 563–576

2009

-

[56]

Bing Tian, Haikun Liu, Yuhang Tang, Shihai Xiao, Zhuohui Duan, Xiaofei Liao, Hai Jin, Xuecang Zhang, Junhua Zhu, and Yu Zhang. 2025. Towards High-throughput and Low-latency Billion-scale Vector Search via CPU/GPU Collaborative Filtering and Re-ranking. In23rd USENIX Conference on File and Storage Technologies, FAST 2025, Santa Clara, CA, February 25-27, 2...

2025

-

[57]

Jingdong Wang and Shipeng Li. 2012. Query-driven iterated neighborhood graph search for large scale indexing. InProceedings of the 20th ACM Multime- dia Conference, MM ’12, Nara, Japan, October 29 - November 02, 2012, Noboru Babaguchi, Kiyoharu Aizawa, John R. Smith, Shin’ichi Satoh, Thomas Plagemann, Xian-Sheng Hua, and Rong Yan (Eds.). ACM, 179–188

2012

-

[58]

Jun Wang, Wei Liu, Sanjiv Kumar, and Shih-Fu Chang. 2016. Learning to Hash for Indexing Big Data - A Survey.Proc. IEEE104, 1 (2016), 34–57

2016

-

[59]

Jianguo Wang, Xiaomeng Yi, Rentong Guo, Hai Jin, Peng Xu, Shengjun Li, Xi- angyu Wang, Xiangzhou Guo, Chengming Li, Xiaohai Xu, Kun Yu, Yuxing Yuan, Yinghao Zou, Jiquan Long, Yudong Cai, Zhenxiang Li, Zhifeng Zhang, Yihua Mo, Jun Gu, Ruiyi Jiang, Yi Wei, and Charles Xie. 2021. Milvus: A Purpose-Built Vector Data Management System. InSIGMOD ’21: Internatio...

2021

-

[60]

Mengzhao Wang, Weizhi Xu, Xiaomeng Yi, Songlin Wu, Zhangyang Peng, Xi- angyu Ke, Yunjun Gao, Xiaoliang Xu, Rentong Guo, and Charles Xie. 2024. Starling: An I/O-Efficient Disk-Resident Graph Index Framework for High- Dimensional Vector Similarity Search on Data Segment.Proc. ACM Manag. Data2, 1 (2024), V2mod014:1–V2mod014:27

2024

-

[61]

Mengzhao Wang, Xiaoliang Xu, Qiang Yue, and Yuxiang Wang. 2021. A Com- prehensive Survey and Experimental Comparison of Graph-Based Approximate Nearest Neighbor Search.Proc. VLDB Endow.14, 11 (2021), 1964–1978

2021

-

[62]

Likang Wu, Zhi Zheng, Zhaopeng Qiu, Hao Wang, Hongchao Gu, Tingjia Shen, Chuan Qin, Chen Zhu, Hengshu Zhu, Qi Liu, Hui Xiong, and Enhong Chen

-

[63]

https://doi.org/10.1007/S11280-024-01291-2

A survey on large language models for recommendation.World Wide Web (WWW)27, 5 (2024), 60. https://doi.org/10.1007/S11280-024-01291-2

-

[64]

Jiadong Xie, Jeffrey Xu Yu, and Yingfan Liu. 2025. Graph Based K-Nearest Neighbor Search Revisited.ACM Trans. Database Syst.50, 4 (2025), 14:1–14:30. https://doi.org/10.1145/3736716

-

[65]

Yuming Xu, Hengyu Liang, Jin Li, Shuotao Xu, Qi Chen, Qianxi Zhang, Cheng Li, Ziyue Yang, Fan Yang, Yuqing Yang, Peng Cheng, and Mao Yang. 2023. SPFresh: Incremental In-Place Update for Billion-Scale Vector Search. InPro- ceedings of the 29th Symposium on Operating Systems Principles, SOSP 2023, Koblenz, Germany, October 23-26, 2023, Jason Flinn, Margo I....

- [66]

-

[67]

Mingyu Yang, Wentao Li, Jiabao Jin, Xiaoyao Zhong, Xiangyu Wang, Zhitao Shen, Wei Jia, and Wei Wang. 2025. Effective and General Distance Computation for Approximate Nearest Neighbor Search. In41st IEEE International Conference on Data Engineering, ICDE 2025, Hong Kong, May 19-23, 2025. IEEE, 1098–1110. https://doi.org/10.1109/ICDE65448.2025.00087

- [68]

-

[69]

Peiqi Yin, Xiao Yan, Qihui Zhou, Hui Li, Xiaolu Li, Lin Zhang, Meiling Wang, Xin Yao, and James Cheng. 2025. Gorgeous: Revisiting the Data Layout for Disk-Resident High-Dimensional Vector Search.CoRRabs/2508.15290 (2025). https://doi.org/10.48550/ARXIV.2508.15290 arXiv:2508.15290

-

[70]

Weichen Zhao, Yuncheng Lu, Yao Tian, Hao Zhang, Jiehui Li, Minghao Zhao, Yakun Li, and Weining Qian. 2026. Optimizing SSD-Resident Graph Indexing for High-Throughput Vector Search.CoRRabs/2602.22805 (2026). https://doi.org/ 10.48550/ARXIV.2602.22805 arXiv:2602.22805

-

[71]

Bolong Zheng, Xi Zhao, Lianggui Weng, Quoc Viet Hung Nguyen, Hang Liu, and Christian S. Jensen. 2022. PM-LSH: a fast and accurate in-memory framework for high-dimensional approximate NN and closest pair search.VLDB J.31, 6 (2022), 1339–1363. https://doi.org/10.1007/S00778-021-00680-7

-

[72]

Yuxin Zheng, Qi Guo, Anthony K. H. Tung, and Sai Wu. 2016. LazyLSH: Approx- imate Nearest Neighbor Search for Multiple Distance Functions with a Single Index. InProceedings of the 2016 International Conference on Management of Data, SIGMOD Conference 2016, San Francisco, CA, USA, June 26 - July 01, 2016, Fatma Özcan, Georgia Koutrika, and Sam Madden (Eds....

2016

-

[73]

Yifan Zhu, Lu Chen, Yunjun Gao, and Christian S. Jensen. 2022. Pivot selection algorithms in metric spaces: a survey and experimental study.VLDB J.31, 1 (2022), 23–47. https://doi.org/10.1007/S00778-021-00691-4

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.