Recognition: unknown

Multiagent Stochastic Shortest Path Problem

Pith reviewed 2026-05-08 03:49 UTC · model grok-4.3

The pith

Multiple agents in a stochastic setting can minimize the expected time until the first one reaches a target using either independent or coordinated strategies.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

In the MSSP problem, k agents strive to reach a target state while minimizing the expected time to reach the target by any agent. The problem is analyzed in autonomous and coordinated settings, with efficient strategy-synthesis algorithms designed for both. The algorithms are experimentally evaluated on instances of increasing size against natural baselines.

What carries the argument

Strategy-synthesis algorithms that compute optimal policies for minimizing the expected time to absorption by any of k agents, applied separately to autonomous Markov decision processes and coordinated stochastic games with a single absorbing target.

If this is right

- Optimal strategies exist and can be computed in polynomial time relative to the size of the underlying game when the number of agents is fixed.

- Coordination never increases the expected time and can be exploited without requiring exponential blow-up in the state space for the coordinated variant.

- The same algorithmic template applies uniformly to both autonomous and coordinated settings, allowing direct comparison of their performance on identical instances.

- Experimental scaling shows that the running time grows manageably with the number of states and agents, outperforming naive baselines.

Where Pith is reading between the lines

- The same reduction technique could be reused for variants in which agents have different speeds or failure probabilities, by adjusting only the transition probabilities.

- The complexity separation between autonomous and coordinated cases supplies a concrete benchmark for measuring the value of communication in other multi-agent planning problems.

- Because the target is absorbing, the framework directly extends to objectives that combine reachability with secondary costs such as energy consumption until absorption.

Load-bearing premise

The model consists of a finite number of states and actions together with a single absorbing target state for which expected times remain finite and well-defined.

What would settle it

A small finite-state MSSP instance for which exhaustive enumeration of all policies yields a strictly lower expected time than the value returned by the paper's synthesis algorithm.

Figures

read the original abstract

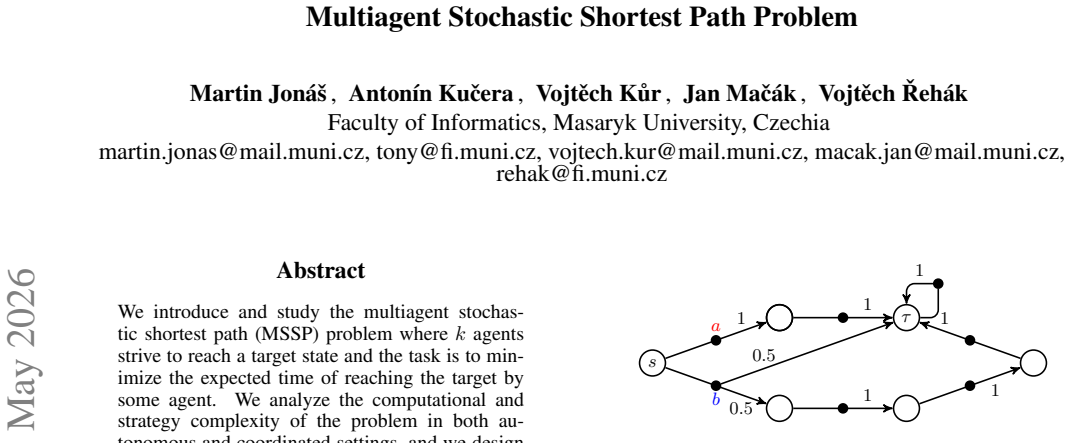

We introduce and study the multi-agent stochastic shortest path (MSSP) problem, in which $k$ agents strive to reach a target state, aiming to minimize the expected time to reach the target by any agent. We analyze the computational and strategy-complexity of the problem in both autonomous and coordinated settings, and we design efficient strategy-synthesis algorithms. The algorithms are experimentally evaluated on instances of increasing size against natural baselines.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces the multi-agent stochastic shortest path (MSSP) problem in which k agents aim to minimize the expected time until any one reaches a designated target state. It analyzes computational and strategy complexity for both autonomous and coordinated settings, presents efficient strategy-synthesis algorithms, and reports experimental results on instances of increasing size against natural baselines.

Significance. If the complexity characterizations and algorithms hold, the work extends classical stochastic shortest-path results to a multi-agent setting with a natural 'first-to-target' objective. This could support applications in cooperative robotics and distributed planning under uncertainty. The experimental evaluation against baselines is a positive element that helps ground the algorithmic claims.

minor comments (2)

- [Abstract and complexity-analysis section] The abstract states that algorithms are 'efficient' and 'experimentally evaluated,' but the main text should explicitly state the polynomial-time bounds or complexity classes achieved (e.g., in the complexity-analysis section) to make the central claims immediately verifiable.

- [Model definition] Notation for the autonomous versus coordinated settings should be introduced with a clear table or side-by-side comparison early in the model section to avoid reader confusion when the algorithms are later presented.

Simulated Author's Rebuttal

We thank the referee for the positive assessment of our manuscript on the multi-agent stochastic shortest path problem and for recommending minor revision. The provided summary correctly captures the problem definition, complexity analysis in autonomous and coordinated modes, strategy-synthesis algorithms, and experimental evaluation.

Circularity Check

No significant circularity: new problem class with standard complexity and algorithm results

full rationale

The paper defines the MSSP problem on finite-state stochastic games/MDPs with a single absorbing target, then analyzes its computational and strategy complexity in autonomous/coordinated settings and presents strategy-synthesis algorithms. No equations, parameters, or claims reduce by construction to fitted inputs, self-definitions, or unverified self-citations; the model assumptions (finiteness, well-defined expected times) are explicitly standard for SSP problems and the results are derived from them without circular reduction. This is a self-contained theoretical contribution.

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

-

[1]

L. Aceto. Action Refinement in Process Algebras

-

[2]

Learning representations by back-propagating errors , volume =

Rumelhart, David E and Hinton, Geoffrey E and Williams, Ronald J , date-added =. Learning representations by back-propagating errors , volume =. nature , number =

-

[3]

Anderson

W.J. Anderson. Continuous-Time Markov Chains: An Applications-Oriented Approach

-

[4]

Aho and J.E

A.V. Aho and J.E. Hopcroft and J.D. Ullman. The Design and Analysis of Computer Algorithms

-

[5]

Athreya and P.E

K.B. Athreya and P.E. Ney. Branching Processes

-

[6]

Bertsekas

D.P. Bertsekas. Dynamic Programming and Optimal Control

-

[7]

Billingsley

P. Billingsley. Probability and Measure

-

[8]

Br \'e maud

P. Br \'e maud. Markov chains: Gibbs fields, Monte Carlo simulation, and queues

-

[9]

Baier and J.-P

C. Baier and J.-P. Katoen. Principles of Model Checking

-

[10]

Barthe and J.-P

G. Barthe and J.-P. Katoen and A. Silva. Foundations of Probabilistic Programming

-

[11]

Barbu and N

V.L. Barbu and N. Limnios. Semi-Markov Chains and Hidden Semi- Markov Models toward Applications

-

[12]

Bini and G

D. Bini and G. Latouche and B. Meini. Numerical methods for Structured Markov Chains

-

[13]

R.V.\ Book and F. Otto. String Rewriting Systems

-

[14]

Bach and J

E. Bach and J. Shallit. Algorithmic Number Theory. Vol. 1, Efficient Algorithms

-

[15]

Baeten and W.P

J.C.M. Baeten and W.P. Weijland. Process Algebra

-

[16]

Clark and O

E.M. Clark and O. Grumberg and D.A. Peled. Model Checking

-

[17]

H.M. Choset. Principles of Robot Motion: Theory, Algorithms, and Implementation

-

[18]

K.L. Chung. Markov Chains with Stationary Transition Probabilities

-

[19]

B.F. Chellas. Modal Logic---An Introduction

-

[20]

A. Dixon. Multi-Agent Systems: Design, Synthesis and Analysis

-

[21]

Desel and J

J. Desel and J. Esparza. Free Choice P etri Nets

-

[22]

Dubhashi and A

D.P. Dubhashi and A. Panconesi. Concentration of Measure for the Analysis of Randomized Algorithms

-

[23]

W. Feller. An Introduction to Probability Theory and Its Applications, Vol. 1

-

[24]

Handbook of Markov Decision Processes

-

[25]

Filar and K

J. Filar and K. Vrieze. Competitive Markov Decision Processes

-

[26]

Grimmett and D.R

G.R. Grimmett and D.R. Stirzaker. Probability and Random Processes

-

[27]

Gr \" a del and W

E. Gr \" a del and W. Thomas and T. Wilke. Automata, Logics, and Infinite Games

-

[28]

T.E. Harris. The Theory of Branching Processes

-

[29]

C.A.R. Hoare. Communicating Sequential Processes

-

[30]

Hopcroft and J.D

J.E. Hopcroft and J.D. Ullman. Introduction to Automata Theory, Languages, and Computation

-

[31]

Isaacson and H.B

E. Isaacson and H.B. Keller. Analysis of Numerical Methods

-

[32]

Johnston and N

E. Johnston and N. Harrigan and M. Gimeno-Segovia. Programming Quantum Computers: Essential Algorithms and Code Samples

-

[33]

Kallenberg

O. Kallenberg. Foundations of Modern Probability

-

[34]

D.C. Kozen. Automata and Computability

-

[35]

R. Kress. Numerical Analysis

-

[36]

Koller and N

D. Koller and N. Friedman. Probabilistic Graphical Models

-

[37]

Kemeny and J.L

J.G. Kemeny and J.L. Snell. Finite Markov chains

-

[38]

Kemeny and J.L

J.G. Kemeny and J.L. Snell and A.W. Knapp. Denumerable Markov chains

-

[39]

Kleinberg and E

J. Kleinberg and E. Tardos. Algorithm Design

-

[40]

S.M. LaValle. Planning Algorithms

-

[41]

Latouche and V

G. Latouche and V. Ramaswami. Introduction to Matrix Analytic Methods in Stochastic Modeling

-

[42]

Puterman

M.L. Puterman. Markov Decision Processes

-

[43]

R. Milner. Communication and Concurrency

-

[44]

M.L. Minsky. Computation: Finite and Infinite Machines

-

[45]

R. Motwani. Randomized Algorithms

-

[46]

Motwani and P

R. Motwani and P. Raghavan. Randomized Algorithms

-

[47]

Manning and H

C. Manning and H. Sch \" u tze. Foundations of Statistical Natural Language Processing

-

[48]

Mitrinovic and J

D.S. Mitrinovic and J. S \'a ndor and B. Crstici. Handbook of number theory

-

[49]

Meyn and R.L

S. Meyn and R.L. Tweedie. Markov Chains and Stochastic Stability

-

[50]

M.F. Neuts. Matrix-geometric Solutions in Stochastic Models: An Algorithmic Approach

-

[51]

J.R. Norris. Markov Chains

-

[52]

McNaughton and S

R. McNaughton and S. Papert. Counter-Free Automata

-

[53]

Neyman and S

A. Neyman and S. Sorin. Stochastic Games and Applications

-

[54]

Papadimitriou

Ch. Papadimitriou. Computational Complexity

-

[55]

Peterson

J.L. Peterson. Petri Net Theory and the Modelling of Systems

-

[56]

W. Reisig. Petri Nets---An Introduction

-

[57]

R \' e dei

L. R \' e dei. The Theory of Finitely Generated Commutative Semigroups

-

[58]

Rosenthal

J.S. Rosenthal. A first look at rigorous probability theory

-

[59]

S.M. Ross. Stochastic Processes

-

[60]

Y. Shoham. Multiagent Systems

-

[61]

D.A. Schmidt. Denotational Semantics

-

[62]

Stirling

C. Stirling. Modal and Temporal Properties of Processes

-

[63]

A. Tarski. A Decision Method for Elementary Algebra and Geometry

-

[64]

M. Tambe. Security and Game Theory. Algorithms, Deployed Systems, Lessons Learned

-

[65]

D. Taubner. Finite Representations of CCS and TCSP Programs by Automata and P etri Nets

-

[66]

Toth and D

P. Toth and D. Vigo. The Vehicle Routing Problem

-

[67]

R. Webster. Convexity

-

[68]

G. Winskel. The Formal Semantics of Programming Languages

-

[69]

Williams

D. Williams. Probability with Martingales

-

[70]

Wooldridge

M. Wooldridge. Introduction to MultiAgent Systems

-

[71]

A. Church. Applications of recursive arithmetic to the problem of circuit synthesis

-

[72]

Chodil and A

M. Chodil and A. Ku c era. The Finite Satisfiability Problem for PCTL is Undecidable

-

[73]

S. Demri. Logiques pour la Sp \' e cification et V \' e rification

-

[74]

Walukiewicz

I. Walukiewicz. Private communication

-

[75]

R. Mayr. Private communication

-

[76]

Etessami and M

K. Etessami and M. Yannakakis. Algorithmic Verification of Recursive Probabilistic Systems

-

[77]

Br \' a zdil and S

T. Br \' a zdil and S. Kiefer and A. Ku c era. Efficient Analysis of Probabilistic Programs with an Unbounded Counter

-

[78]

Hardesty

L. Hardesty. Graphics in reverse: Probabilistic programming does in 50 lines of code what used to take thousands

-

[79]

Jon \' a s and A

M. Jon \' a s and A. Ku c era and V. K u r and J. Ma c \' a k. Steady-State Strategy Synthesis for Swarms of Autonomous Agents. arXiv. 2025

2025

-

[80]

T. Lamser. Algorithmic Analysis of Security Games

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.