
Adaptive Coordinate Transforms for Neural Operators

Pith reviewed 2026-05-08 03:50 UTC · model grok-4.3

The pith

Neural operators improve when they learn adaptive coordinate systems instead of using fixed grids.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

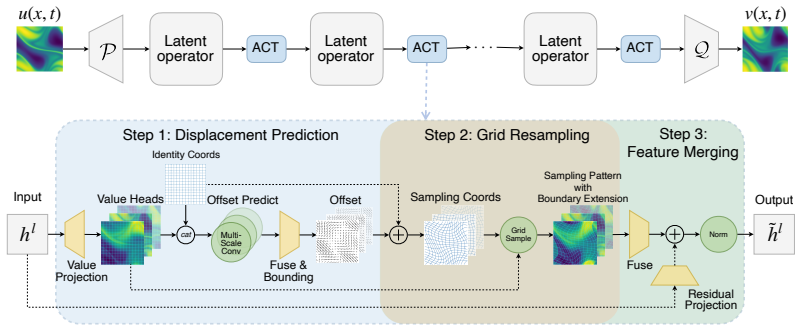

The ACT block learns a coordinate transformation from an input feature and uses differentiable sampling to express the same feature in the transformed coordinate system. By adapting the coordinate frame to the data, the block reduces spatial misalignment, lowers operator complexity, and improves the network's ability to capture sharp transitions in PDE solutions.

What carries the argument

The ACT block, a plug-and-play module that learns a coordinate transformation and applies differentiable sampling to re-represent features in the new coordinate system, thereby aligning the operator's spatial view with the data.
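A minimal NumPy sketch of the mechanism just described. It assumes, without confirmation from the paper, that the transformation is a per-pixel displacement field and that the differentiable sampler is bilinear, as in spatial-transformer-style grid sampling. The names `act_block` and `bilinear_sample`, and the tiny linear "head" standing in for a learned network, are hypothetical.

```python
import numpy as np


def bilinear_sample(f, ys, xs):
    """Bilinearly sample a 2D field f (H x W) at fractional index coordinates.

    Coordinates are clamped to the grid ("border" padding); the padding mode
    used in the paper is not stated, so this is an assumption.
    """
    H, W = f.shape
    ys = np.clip(ys, 0.0, H - 1.0)
    xs = np.clip(xs, 0.0, W - 1.0)
    y0 = np.floor(ys).astype(int)
    x0 = np.floor(xs).astype(int)
    y1 = np.minimum(y0 + 1, H - 1)
    x1 = np.minimum(x0 + 1, W - 1)
    wy, wx = ys - y0, xs - x0
    return ((1 - wy) * (1 - wx) * f[y0, x0] + (1 - wy) * wx * f[y0, x1]
            + wy * (1 - wx) * f[y1, x0] + wy * wx * f[y1, x1])


def act_block(f, w_dy, w_dx, max_shift=2.0):
    """Hypothetical ACT-style block for a single-channel feature map f.

    A per-pixel linear head (weights w_dy, w_dx of shape (3,)) maps the local
    features (f, df/dy, df/dx) to a displacement bounded by max_shift cells;
    the feature is then resampled at the displaced coordinates. A real block
    would predict the displacement with a learned convolutional network.
    """
    gy, gx = np.gradient(f)                      # derivatives along axis 0 (y) and axis 1 (x)
    feats = np.stack([f, gy, gx], axis=-1)       # shape (H, W, 3)
    dy = max_shift * np.tanh(feats @ w_dy)       # displacement in grid cells
    dx = max_shift * np.tanh(feats @ w_dx)
    H, W = f.shape
    yy, xx = np.meshgrid(np.arange(H, dtype=float), np.arange(W, dtype=float),
                         indexing="ij")
    return bilinear_sample(f, yy + dy, xx + dx)


# Usage: a sharp front along x, warped by an arbitrary (untrained) head.
y, x = np.meshgrid(np.arange(32.0), np.arange(32.0), indexing="ij")
front = np.tanh((x - 16.0) / 1.5)
warped = act_block(front, np.array([0.0, 0.0, 0.5]), np.array([0.0, 0.0, 1.0]))
```

With a zero head the block is the identity. Any nonzero displacement produces convex combinations of neighbouring values, so the output never leaves the input's range: one concrete sense in which bilinear resampling can smooth but never sharpen.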

If this is right

- Neural operators can dynamically align with evolving physical structures rather than remain locked to static grids.

- Operator mappings become simpler because the network works in a coordinate system matched to the data.

- Predictive accuracy rises consistently across different PDE problems and different base neural operator architectures.

- The same adaptation mechanism can be inserted into existing models as a general geometric prior.
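The "simpler operator mappings" point can be made concrete with linear advection, u_t + c u_x = 0, whose solution is u(x, t) = u0(x - c t). On a fixed grid the map from u0 to u(., t) is a shift that couples points a distance c t apart. In the co-moving coordinate xi = x - c t, it is the identity. The sketch below assumes the speed c is known; an ACT block would have to learn such a transform from data. All values are illustrative.

```python
import numpy as np

L, N = 1.0, 128
x = np.linspace(0.0, L, N, endpoint=False)
c, t = 0.3, 1.0                      # advection speed and time (illustrative)


def bump(s):
    """Periodic Gaussian bump centred at 0.25 on [0, L)."""
    d = (s - 0.25 + 0.5 * L) % L - 0.5 * L   # signed periodic distance to the centre
    return np.exp(-200.0 * d ** 2)


def rel_l2(a, b):
    return float(np.linalg.norm(a - b) / np.linalg.norm(b))


u0 = bump(x)
ut = bump(x - c * t)                 # exact solution of u_t + c u_x = 0

# Fixed (Eulerian) frame: the map u0 -> ut is a shift by c*t, i.e. non-local.
err_fixed = rel_l2(ut, u0)

# Co-moving frame xi = x - c t: resample ut at x + c t (linear, periodic).
ut_comoving = np.interp(x + c * t, x, ut, period=L)
err_comoving = rel_l2(ut_comoving, u0)   # only interpolation error remains
```

In the fixed frame the two snapshots barely overlap (relative error near sqrt(2)). In the co-moving frame only a small interpolation error separates the resampled field from u0.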

Where Pith is reading between the lines

- Coordinate learning may transfer to other discretizations where spatial alignment is an issue, such as graph neural operators on irregular meshes or particle methods.

- If the sampling remains artifact-free during training, hybrid schemes could combine the learned transforms with classical adaptive-mesh refinement rules.

- The method suggests testing whether similar blocks improve performance on time-dependent or multi-physics problems where structures move at different speeds.
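For the hybrid idea above, one classical rule a learned transform could be combined with, or compared against, is equidistribution from moving-mesh (r-adaptive) methods. This approach relocates nodes rather than refining them: it places them so each cell carries an equal share of a monitor function, here the arc-length monitor M = sqrt(1 + alpha * u_x^2). This is a 1D sketch; the monitor, alpha and node count are illustrative choices, not anything taken from the paper.

```python
import numpy as np


def equidistribute(x_fine, u_fine, n_nodes, alpha=1.0):
    """Place n_nodes so each cell holds an equal share of the arc-length
    monitor M = sqrt(1 + alpha * u_x**2) (1D equidistribution)."""
    ux = np.gradient(u_fine, x_fine)
    M = np.sqrt(1.0 + alpha * ux ** 2)
    # Cumulative trapezoidal integral of M; strictly increasing because M >= 1.
    cum = np.concatenate([[0.0], np.cumsum(0.5 * (M[1:] + M[:-1]) * np.diff(x_fine))])
    targets = np.linspace(0.0, cum[-1], n_nodes)
    # Invert the cumulative map: node i sits where the integral reaches targets[i].
    return np.interp(targets, cum, x_fine)


x_fine = np.linspace(0.0, 1.0, 2001)
u = np.tanh((x_fine - 0.5) / 0.01)        # sharp front at x = 0.5
nodes = equidistribute(x_fine, u, 41)
spacing = np.diff(nodes)
```

Nodes cluster inside the front and spread out where the solution is flat, which is the kind of data-aligned coordinate system the ACT block is meant to learn implicitly.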

Load-bearing premise

The learned coordinate transformation and its differentiable sampling step preserve the original physical signal without adding artifacts or distortions.
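One necessary (not sufficient) condition for this premise is that the learned map be invertible. Where the Jacobian determinant of x -> x + d(x) is non-positive, the map folds and distinct points collapse, so no resampling can preserve the signal there. Below is a sketch of that check for a 2D displacement field in grid units; whether the paper constrains or regularizes against folding is not stated here, and the function names are hypothetical.

```python
import numpy as np


def jacobian_determinant(dy, dx):
    """det J of phi(y, x) = (y + dy, x + dx) on a unit-spaced grid."""
    ddy_dy, ddy_dx = np.gradient(dy)       # derivatives along axis 0 (y), axis 1 (x)
    ddx_dy, ddx_dx = np.gradient(dx)
    return (1.0 + ddy_dy) * (1.0 + ddx_dx) - ddy_dx * ddx_dy


def fold_report(dy, dx):
    """Fraction of grid points where the map folds (det J <= 0), and min det J."""
    det = jacobian_determinant(dy, dx)
    return float(np.mean(det <= 0.0)), float(det.min())


H = W = 32
yy, xx = np.meshgrid(np.arange(H, dtype=float), np.arange(W, dtype=float), indexing="ij")
smooth = fold_report(np.zeros((H, W)), 0.3 * np.sin(2 * np.pi * xx / W))   # gentle warp
flipped = fold_report(-2.0 * yy, np.zeros((H, W)))                          # y -> -y: folds
```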

What would settle it

Apply the ACT-augmented operator to a PDE benchmark containing known sharp moving fronts; if accuracy stays the same or drops relative to the fixed-coordinate baseline, the claim fails.
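Here is a sketch of how that test might be scored. It assumes per-seed relative L2 errors on a held-out set for the ACT model and for a parameter-matched fixed-coordinate baseline. The decision rule is a hypothetical and deliberately crude criterion, not a formal significance test. The numbers in the usage lines are placeholders, not results from the paper.

```python
import numpy as np


def relative_l2(pred, target):
    return float(np.linalg.norm(pred - target) / np.linalg.norm(target))


def compare_runs(err_act, err_base):
    """Per-seed relative L2 errors for the ACT model and a parameter-matched
    fixed-coordinate baseline. The claim is treated as supported only if the
    mean gain exceeds the summed seed-to-seed standard deviations (a crude,
    hypothetical criterion, not a formal significance test)."""
    a = np.asarray(err_act, dtype=float)
    b = np.asarray(err_base, dtype=float)
    gain = b.mean() - a.mean()
    spread = a.std(ddof=1) + b.std(ddof=1)
    return {"act": (a.mean(), a.std(ddof=1)),
            "base": (b.mean(), b.std(ddof=1)),
            "supports_claim": bool(gain > spread)}


# Placeholder numbers only, not results from the paper.
clear_win = compare_runs([0.010, 0.011, 0.012], [0.020, 0.021, 0.019])
no_gain = compare_runs([0.020, 0.021, 0.019], [0.020, 0.021, 0.019])
```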

Original abstract

Neural operators have achieved promising performance on partial differential equations (PDEs), but most existing models are built on fixed Eulerian coordinates. This mismatch between evolving physical structures and static coordinates creates spatial misalignment, leading to unnecessarily non-local operator mappings and reinforcing a smoothness preference near sharp transitions. Inspired by adaptive coordinate transformations in classical PDE analysis, we propose the Adaptive Coordinate Transform (ACT) block, a plug-and-play module for data-driven geometric adaptation in neural operators. ACT blocks resolve this structural limitation by learning adaptive coordinate systems within the operator learning pipeline. Specifically, given an input feature, the ACT block learns a coordinate transformation and represents the same feature under the transformed coordinates via differentiable sampling. This operation preserves the underlying signal while changing its spatial representation, equivalent to expressing the same physical quantity in different coordinate systems. By adapting the coordinate system to the data, ACT allows the network to better track evolving structures, reduce operator complexity, and dynamically focus on critical features to improve learning. We evaluate the proposed approach across diverse PDE benchmarks and multiple neural operator architectures. Experimental results demonstrate consistent and significant improvements in predictive accuracy, indicating that learning coordinate systems provides a powerful mechanism for enhancing operator learning.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces the Adaptive Coordinate Transform (ACT) block as a plug-and-play module for neural operators. Given an input feature, the block learns a coordinate transformation and uses differentiable sampling to represent the feature in the new coordinates. This is presented as equivalent to changing coordinate systems for the same physical quantity, allowing better tracking of evolving structures in PDEs, reduced operator complexity, and improved accuracy. Experiments across diverse PDE benchmarks and multiple neural operator architectures are claimed to show consistent and significant predictive accuracy gains.

Significance. If the central claims hold after addressing sampling artifacts, the ACT block would represent a general, architecture-agnostic enhancement to neural operators by enabling learned geometric adaptation. This directly targets a known limitation of fixed Eulerian grids in operator learning and could improve performance on PDEs with moving features or sharp fronts, building on classical adaptive coordinate ideas in a data-driven way.

major comments (2)

- [Method: ACT block and differentiable sampling] The statement that the sampling 'preserves the underlying signal' and is exactly 'equivalent to expressing the same physical quantity in different coordinate systems' holds only for band-limited fields. Bilinear (or similar low-order) interpolation necessarily damps high wavenumbers, which could act as low-pass filtering and confound accuracy gains on PDEs with shocks or discontinuities. No quantitative interpolation-error analysis or ablation against a matched-parameter fixed-grid baseline is provided to isolate the contribution of coordinate adaptation.

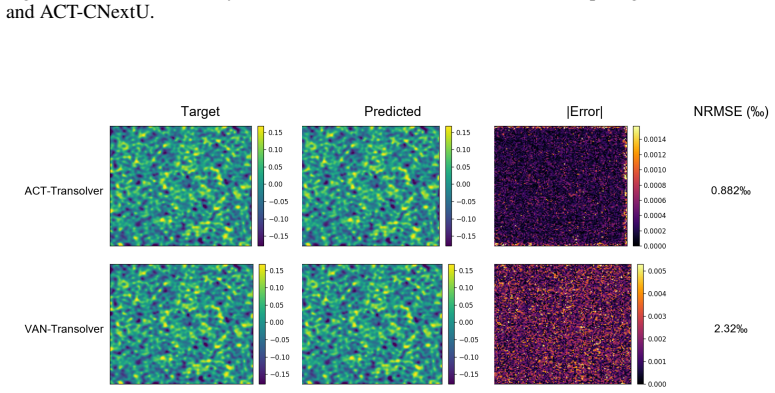

- [Experiments] The abstract and results claim 'consistent and significant improvements' without reporting specific error metrics, baselines, error bars, or ablation tables (e.g., ACT vs. a non-adaptive version with identical parameter count). This prevents verification of the magnitude of the gains and of whether they arise from the proposed mechanism rather than from implicit smoothing.
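The wavenumber damping raised in the first comment can be quantified. Resampling a cosine cos(k j) half a cell over with linear interpolation averages two neighbours. Since cos(k j) + cos(k (j + 1)) = 2 cos(k/2) cos(k (j + 1/2)), the amplitude is multiplied by cos(k/2). That factor is close to 1 for smooth modes and vanishes as k approaches the Nyquist wavenumber pi. The sketch below checks this numerically on a periodic grid; bilinear sampling applies the same factor separately along each axis. Grid size and mode numbers are illustrative.

```python
import numpy as np

N = 64
j = np.arange(N, dtype=float)


def half_cell_gain(m):
    """Amplitude kept when cos(k j), k = 2*pi*m/N, is resampled at j + 0.5 by
    periodic linear interpolation (m must not equal N/2, where the exact
    half-cell values are all zero)."""
    k = 2 * np.pi * m / N                          # wavenumber in radians per cell
    f = np.cos(k * j)
    resampled = np.interp(j + 0.5, j, f, period=N)
    exact = np.cos(k * (j + 0.5))
    return float(resampled @ exact / (exact @ exact))


gains = {m: half_cell_gain(m) for m in (1, 8, 16, 24)}   # compare with cos(pi*m/N)
```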

minor comments (2)

- [Abstract] The abstract would benefit from including one or two key quantitative results (e.g., relative error reductions on specific benchmarks) to substantiate the 'significant improvements' claim.

- [Method] Clarify the precise interpolation kernel and any anti-aliasing steps used in the differentiable sampling operation.
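Kernel choice matters here because different kernels damp differently. As an illustration only (the kernel the paper uses is not stated here), the sketch compares two kernels at the worst-case half-cell offset: the tent kernel underlying bilinear sampling and Keys' cubic convolution kernel with a = -0.5. For a mode four cells long, the cubic kernel keeps about 0.88 of the amplitude versus about 0.71 for linear.

```python
import numpy as np


def linear_kernel(s):
    """1D factor of bilinear interpolation (the tent function)."""
    return np.maximum(0.0, 1.0 - np.abs(s))


def keys_kernel(s, a=-0.5):
    """Keys cubic convolution kernel; a = -0.5 is the common choice."""
    s = np.abs(s)
    inner = (a + 2) * s**3 - (a + 3) * s**2 + 1
    outer = a * s**3 - 5 * a * s**2 + 8 * a * s - 4 * a
    return np.where(s <= 1, inner, np.where(s < 2, outer, 0.0))


def half_shift_gain(kernel, k):
    """Response to the mode exp(i k j) when sampling at j + 0.5, relative to
    the exact value exp(i k (j + 0.5)); k is in radians per grid cell."""
    taps = np.array([-1.0, 0.0, 1.0, 2.0])       # grid offsets j-1 .. j+2
    w = kernel(0.5 - taps)                        # kernel weight of each tap
    return float(np.real(np.sum(w * np.exp(1j * k * (taps - 0.5)))))


k = np.pi / 2                                     # a mode 4 cells long
g_linear = half_shift_gain(linear_kernel, k)      # cos(pi/4), about 0.707
g_cubic = half_shift_gain(keys_kernel, k)         # about 0.884
```

Neither kernel is an anti-aliasing step. Where the learned map compresses the grid, sampling points are spaced more widely than the data, and high wavenumbers alias unless the feature is low-pass filtered first.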

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback. We address each major comment below and have revised the manuscript to incorporate the suggested clarifications and additional analyses.

Point-by-point responses

Referee: Method section (ACT block and differentiable sampling): The statement that the sampling 'preserves the underlying signal' and is exactly 'equivalent to expressing the same physical quantity in different coordinate systems' holds only for band-limited fields. Bilinear (or equivalent) interpolation necessarily damps high wavenumbers, which could introduce low-pass filtering that confounds accuracy gains on PDEs with shocks or discontinuities. No quantitative interpolation error analysis or ablation against a matched-parameter fixed-grid baseline is provided to isolate the contribution of coordinate adaptation.

Authors: We agree that the equivalence holds only approximately for discretized fields and that bilinear sampling introduces high-wavenumber damping, which is a legitimate concern for problems with shocks or discontinuities. In the revised manuscript we have updated the ACT block description to explicitly note that the operation approximates a coordinate change within the given grid resolution. We have added a quantitative interpolation-error study on both band-limited and discontinuous synthetic fields, together with an ablation that compares ACT-augmented operators against non-adaptive versions that use an identical parameter budget, thereby isolating the contribution of the learned coordinate adaptation. revision: yes

Referee: Experimental results: The abstract and results claim 'consistent and significant improvements' without reporting specific error metrics, baselines, error bars, or ablation tables (e.g., ACT vs. non-adaptive version with identical parameter count). This prevents verification of the magnitude of gains and whether they arise from the proposed mechanism rather than implicit smoothing.

Authors: We acknowledge that the original abstract and summary statements lacked concrete numerical detail. The revised manuscript now includes specific relative-error reductions in the abstract, reports error bars from multiple random seeds, and adds an explicit ablation table that compares each ACT-enhanced architecture against its non-adaptive counterpart with matched parameter count across all benchmarks. These additions enable direct verification that the observed gains are attributable to coordinate adaptation rather than any incidental smoothing. revision: yes
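An interpolation-error study of the kind described in the first response could start from a round trip: shift a field by half a cell and back with linear interpolation, which an ideal sampler would undo exactly. This sketch uses a band-limited cosine and a periodic step. The fields, grid size and shift are illustrative assumptions, not the paper's setup.

```python
import numpy as np

N = 128
j = np.arange(N, dtype=float)


def round_trip(f, s=0.5):
    """Resample f at j + s, then resample the result at j - s (periodic linear
    interpolation). With an ideal sampler the pair of shifts would return f."""
    g = np.interp(j + s, j, f, period=N)
    return np.interp(j - s, j, g, period=N)


def rel_l2(a, b):
    return float(np.linalg.norm(a - b) / np.linalg.norm(b))


smooth = np.cos(2 * np.pi * 2 * j / N)            # band-limited: 2 periods on the grid
step = (j < N // 2).astype(float)                 # discontinuous: two jumps (periodic)

err_smooth = rel_l2(round_trip(smooth), smooth)   # 1 - cos(pi/64)**2, about 2.4e-3
err_step = rel_l2(round_trip(step), step)         # 0.0625: four points off by 0.25
```

The smooth field loses about 0.24 % of its amplitude. The step's error is concentrated at the jumps and is roughly 26 times larger in relative L2 terms, which is the pattern the referee's concern about discontinuities predicts.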

Circularity Check

No circularity in the ACT block derivation or claims

The paper introduces the ACT block as a learned module that applies a data-driven coordinate transformation followed by differentiable sampling to re-represent input features. This construction is not self-definitional: the transformation parameters are optimized end-to-end rather than being fitted to a target quantity and then re-used as a 'prediction.' No load-bearing step reduces to a self-citation chain, an imported uniqueness theorem, or an ansatz smuggled from prior author work. The asserted equivalence to 'expressing the same physical quantity in different coordinate systems' is presented as a conceptual motivation, not as a mathematical identity that forces the experimental outcome. All performance claims rest on external benchmark comparisons rather than internal re-labeling of fitted quantities. The derivation chain therefore remains self-contained and non-circular.
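End-to-end optimization of the transformation parameters depends on the sampled value being differentiable with respect to the sampling position. For linear interpolation that derivative is simply the slope of the cell being sampled, as this 1D sketch shows. The single learnable displacement `theta`, the data and the learning rate are hypothetical.

```python
import numpy as np


def sample_1d(f, pos):
    """Linear interpolation of f (unit grid spacing) at a fractional position
    pos, assumed to lie in [0, len(f) - 1]."""
    i = int(np.clip(np.floor(pos), 0, len(f) - 2))
    w = pos - i
    return (1.0 - w) * f[i] + w * f[i + 1]


def dsample_dpos(f, pos):
    """Derivative of sample_1d with respect to pos: the slope of the cell
    containing pos (piecewise constant, undefined exactly at grid nodes)."""
    i = int(np.clip(np.floor(pos), 0, len(f) - 2))
    return f[i + 1] - f[i]


f = np.array([0.0, 1.0, 4.0, 9.0, 16.0])
x0, target = 2.0, 6.5            # hypothetical query point and target value
theta, lr = 0.0, 0.01            # theta: a single learnable displacement
for _ in range(100):
    pred = sample_1d(f, x0 + theta)
    # Chain rule: d/dtheta (pred - target)^2 = 2 (pred - target) * dsample/dpos
    theta -= lr * 2.0 * (pred - target) * dsample_dpos(f, x0 + theta)
```

Because the gradient only sees the two neighbouring samples, the displacement is steered by local slopes alone and carries no information about structure more than one cell away.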

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: a coordinate transformation learned from data can be applied via differentiable sampling while exactly preserving the underlying physical signal.

invented entities (1)

- ACT block: no independent evidence.
