
LearnMate²: Design and Evaluation of an LLM-powered Personalized and Adaptive Support System for Online Learning

Pith reviewed 2026-05-08 07:11 UTC · model grok-4.3

The pith

An LLM system for online courses creates custom study plans and real-time help that raise learning gains and user satisfaction.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

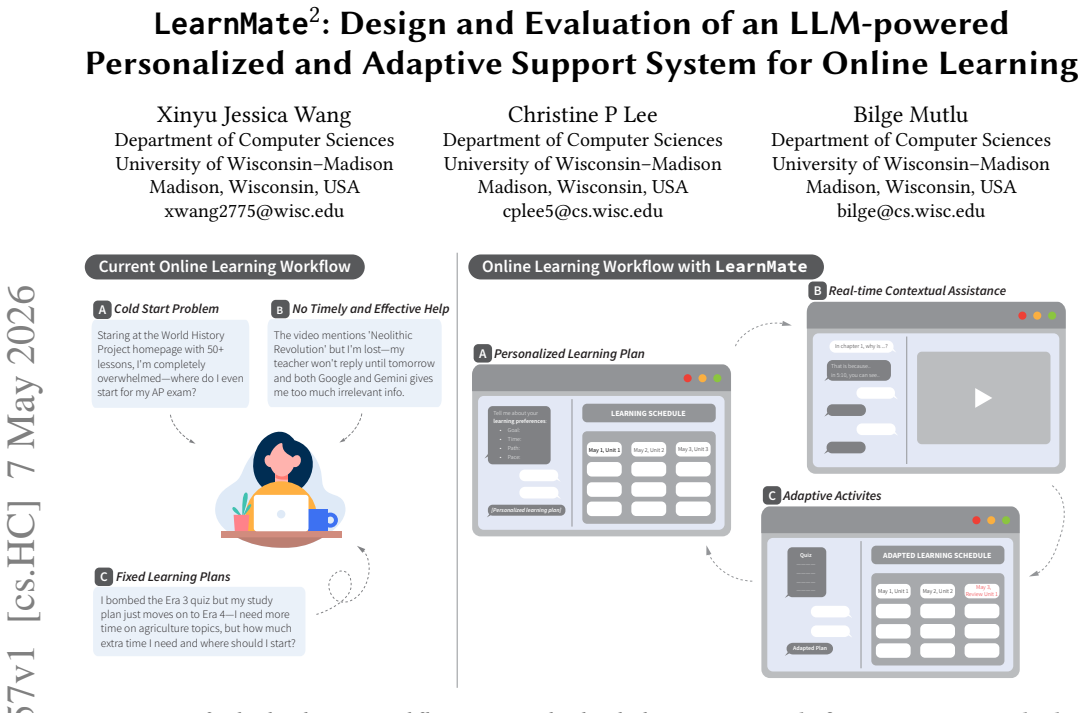

LearnMate² advances AI pedagogy by improving both learning outcomes and user experience compared with existing online learning and support tools. The authors arrive at it by iteratively designing features that combine personalized study plans, real-time contextual assistance, and adaptive learning activities powered by large language models. Their evaluations report advantages over a baseline that pairs an established online learning platform with standalone LLM support.

What carries the argument

LearnMate², the integrated LLM-powered system that generates personalized study plans, supplies real-time contextual assistance, and adapts activities based on user progress.

Load-bearing premise

The results from two small studies (n = 24 and n = 16) with short, specific tasks will generalize to larger, more varied populations using the system over longer periods in real courses.

What would settle it

A follow-up study with hundreds of diverse students across multiple courses and several weeks of use that finds no improvement, or lower learning outcomes and satisfaction, for LearnMate² compared with control groups.


Original abstract

Personalization is crucial for effective learning, yet online learning, designed for widespread availability and open access, lacks personalized guidance. Recent advancements in large language models (LLMs) offer opportunities to bridge this gap. We explore how LLM-driven tools may be designed to support personalized and adaptive learning and examine how they shape user experience and learning outcomes. We iteratively designed LearnMate² to support online learning by providing personalized study plans, real-time contextual assistance, and adaptive learning activities. A preliminary study (n = 24) assessed the effectiveness and usability of LearnMate² and informed refinements in our system, which we then evaluated (n = 16) against a combination of a state-of-the-art online learning platform and an LLM for learning support. Results indicate that LearnMate² advances AI pedagogy by improving both learning outcomes and user experience compared to existing online learning and support tools. This work advances our understanding of the design space of personalized, AI-driven educational tools and their potential impact on user experience.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript presents LearnMate², an LLM-powered system for online learning that provides personalized study plans, real-time contextual assistance, and adaptive activities. It describes an iterative design process informed by a preliminary usability study (n=24) and reports results from a subsequent comparative evaluation (n=16) against a state-of-the-art online learning platform combined with a separate LLM support tool, claiming superior learning outcomes and user experience.

Significance. If substantiated, the work would contribute to AI-enhanced education by showing the value of an integrated LLM approach for personalization over modular baselines. The iterative, user-centered design process is a clear strength, as is the attempt to evaluate both performance metrics and experiential outcomes in a comparative setting. The paper advances understanding of the design space for such tools.

major comments (2)

- [Comparative Evaluation] The headline claim of improved learning outcomes rests on the n=16 comparative study. The manuscript provides no details on the specific outcome measures (e.g., pre/post-test scores, task completion accuracy), statistical tests performed, p-values, effect sizes, or power analysis. Without these, the reported positive results cannot be assessed for reliability given the small sample and known noise in learning-gain data.

- [Comparative Evaluation] No information is given on controls for individual variance, prior knowledge, or task selection to mitigate ceiling effects. The baseline condition (state-of-the-art platform + LLM) is also underspecified, preventing clear attribution of any gains to the integrated design of LearnMate² rather than implementation differences.
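To make the power concern concrete, here is a rough a priori power calculation. It is a sketch under stated assumptions, not an analysis from the paper. It assumes a two-sided, two-sample (between-subjects) comparison at α = 0.05 with a target power of 0.8, and an even 8/8 split of the n = 16 participants, which the review does not state. It also uses the normal approximation, which slightly understates the required sample size compared with an exact t-based calculation. The function names are illustrative, and a within-subjects design would change the calculation.

```python
from math import ceil, sqrt
from statistics import NormalDist

Z = NormalDist()  # standard normal distribution


def n_per_group(d: float, alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate participants per group needed to detect effect size d
    (Cohen's d) in a two-sided, two-sample comparison (normal approximation)."""
    z_alpha = Z.inv_cdf(1 - alpha / 2)  # critical value for the two-sided test
    z_beta = Z.inv_cdf(power)           # quantile matching the target power
    return ceil(2 * (z_alpha + z_beta) ** 2 / d ** 2)


def approx_power(n: int, d: float, alpha: float = 0.05) -> float:
    """Approximate power of a two-sided, two-sample test with n per group."""
    z_alpha = Z.inv_cdf(1 - alpha / 2)
    delta = d * sqrt(n / 2)  # expected standardized test statistic
    # Probability of rejecting in either tail when the true effect is d.
    return Z.cdf(delta - z_alpha) + Z.cdf(-delta - z_alpha)


if __name__ == "__main__":
    # Hypothetical scenario: 8 participants per condition.
    print(f"power with 8 per group, d = 0.8: {approx_power(8, 0.8):.2f}")
    for d in (0.5, 0.8):
        print(f"n per group for d = {d}: {n_per_group(d)}")
```

Under these assumptions, even a large effect (d = 0.8) yields only about 36% power with 8 participants per group. Roughly 25 per group are needed to reach 80% power, and a medium effect (d = 0.5) needs about 63 per group.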

minor comments (1)

- [Abstract] The abstract would be more informative if it briefly noted the sample sizes and the direction of the observed improvements.

Simulated Author's Rebuttal

We thank the referee for their constructive feedback, which identifies key areas where additional detail will improve the transparency and interpretability of our comparative evaluation. We address each comment below and will revise the manuscript to incorporate the requested information and clarifications.

Point-by-point responses

- Referee: The headline claim of improved learning outcomes rests on the n=16 comparative study. The manuscript provides no details on the specific outcome measures (e.g., pre/post-test scores, task completion accuracy), statistical tests performed, p-values, effect sizes, or power analysis. Without these, the reported positive results cannot be assessed for reliability given the small sample and known noise in learning-gain data.

Authors: We agree that the current manuscript omits critical statistical details necessary for assessing result reliability. In the revised version, we will expand the evaluation section to explicitly describe the outcome measures (pre/post-test scores on standardized quizzes covering core concepts plus task completion accuracy), the statistical tests applied (independent t-tests with Welch correction for between-group comparisons and paired tests for within-group gains), exact p-values, effect sizes (Cohen's d), and a dedicated limitations paragraph addressing the small sample size, absence of a priori power analysis, and implications for generalizability. These additions will enable readers to evaluate the findings appropriately. revision: yes

- Referee: No information is given on controls for individual variance, prior knowledge, or task selection to mitigate ceiling effects. The baseline condition (state-of-the-art platform + LLM) is also underspecified, preventing clear attribution of any gains to the integrated design of LearnMate² rather than implementation differences.

Authors: We acknowledge that greater detail on experimental controls and the baseline is required to support causal attribution. The revised manuscript will specify: random assignment of participants to conditions, a pre-study knowledge quiz used to verify group balance and control for prior knowledge variance, and task selection criteria (pilot-tested items spanning easy-to-difficult levels to reduce ceiling effects). For the baseline, we will name the state-of-the-art platform, describe the separate LLM tool configuration (including prompt templates), and contrast it with LearnMate²'s integrated design features (e.g., context-aware assistance embedded in the learning flow versus external modular tools). This will clarify the source of observed differences. revision: yes
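The analyses promised in the rebuttal are standard. The sketch below shows one way to compute them with the Python standard library, as an illustration rather than the authors' analysis code. It covers Welch's t-test for between-group comparisons, a paired t-test for within-group gains, Cohen's d, and a seeded random assignment to conditions. It assumes two independent groups of scores (a between-subjects design, as random assignment implies) and uses the pooled-SD form of Cohen's d, although other variants exist. The helper names are hypothetical. The functions return test statistics and degrees of freedom only; `scipy.stats.ttest_ind(a, b, equal_var=False)` and `scipy.stats.ttest_rel(pre, post)` give p-values for the same Welch and paired tests.

```python
import math
import random
from statistics import mean, stdev


def welch_t(a: list[float], b: list[float]) -> tuple[float, float]:
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom
    for two independent groups with possibly unequal variances."""
    se2_a = stdev(a) ** 2 / len(a)  # squared standard error of group a's mean
    se2_b = stdev(b) ** 2 / len(b)  # squared standard error of group b's mean
    t = (mean(a) - mean(b)) / math.sqrt(se2_a + se2_b)
    df = (se2_a + se2_b) ** 2 / (
        se2_a ** 2 / (len(a) - 1) + se2_b ** 2 / (len(b) - 1)
    )
    return t, df


def cohens_d(a: list[float], b: list[float]) -> float:
    """Cohen's d: mean difference divided by the pooled sample standard deviation."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * stdev(a) ** 2 + (nb - 1) * stdev(b) ** 2) / (na + nb - 2)
    return (mean(a) - mean(b)) / math.sqrt(pooled_var)


def paired_t(pre: list[float], post: list[float]) -> tuple[float, int]:
    """Paired t statistic for within-group gains (post - pre); df = n - 1."""
    gains = [after - before for before, after in zip(pre, post)]
    return mean(gains) / (stdev(gains) / math.sqrt(len(gains))), len(gains) - 1


def assign_conditions(participants, seed: int = 0):
    """Randomly split participants into two conditions of equal size
    (the second group gets the extra participant when the count is odd)."""
    rng = random.Random(seed)  # seeded so the assignment is reproducible
    shuffled = list(participants)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]
```

The same `welch_t` call can be applied to the two groups' pre-study quiz scores as a rough balance check. A non-significant difference on that quiz does not by itself establish that the groups are equivalent in prior knowledge.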

Circularity Check

No significant circularity: empirical design and evaluation study

Full rationale

The paper describes an iterative design process for an LLM-powered learning tool followed by two user studies (preliminary n=24, comparative n=16) that measure usability, learning outcomes, and user experience against baselines. No equations, fitted parameters, predictions derived from inputs, or self-citation chains appear in the provided text. Central claims rest on observed participant data and direct comparisons rather than any self-referential reduction or renaming of results. The work is therefore self-contained as an empirical HCI contribution with no load-bearing circular steps.


