Recognition: 3 theorem links

· Lean TheoremThe Causally Emergent Alignment Hypothesis: Causal Emergence Aligns with and Predicts Final Reward in Reinforcement Learning Agents

Pith reviewed 2026-05-11 00:54 UTC · model grok-4.3

The pith

Successful RL agents show causal emergence in their latent representations that predicts final reward early in training.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Our results suggested a Causally Emergent Alignment Hypothesis: successful agents exhibited causal emergence that was consistently predictive of final reward early in training and whose representational dynamics aligned with reward improvement in most tasks. This idea suggests that causal emergence may be a previously undisclosed axis of reorganization of neural representations in RL agents, with the potential to establish causal relationships and interventions that will lead to better RL agents. Our work also highlights the alignment between causal emergence and learning as another way biological and artificial creatures compare.

What carries the argument

The ΦID measure of causal emergence, applied to the latent-space activations of neural-network RL agents over their training lifetime, which quantifies the unique predictive power of the agent's internal state on future events.

If this is right

- Causal emergence can serve as an early indicator of whether an RL agent will ultimately succeed at its task.

- Changes in causal emergence during training align with and may help explain gains in reward performance.

- Interventions that increase or maintain causal emergence in representations could improve learning outcomes in RL.

- The same measure reveals a shared pattern between artificial agents and biological systems that increase causal emergence after learning.

Where Pith is reading between the lines

- Monitoring causal emergence during training might enable early detection of failing runs without waiting for final reward.

- If the alignment holds more generally, training procedures could be designed to explicitly promote causal emergence in latent states.

- The hypothesis opens the possibility of comparing representational reorganization across biological and artificial agents using the same quantitative measure.

Load-bearing premise

The ΦID calculation on latent activations truly measures causal emergence rather than some other correlated property, and the observed links to reward are not produced by the specific environments, algorithms, or analysis methods chosen.

What would settle it

In a replication using new RL environments or algorithms, causal emergence measured via ΦID on latent states shows no early correlation with final reward or fails to track reward gains during training.

Figures

read the original abstract

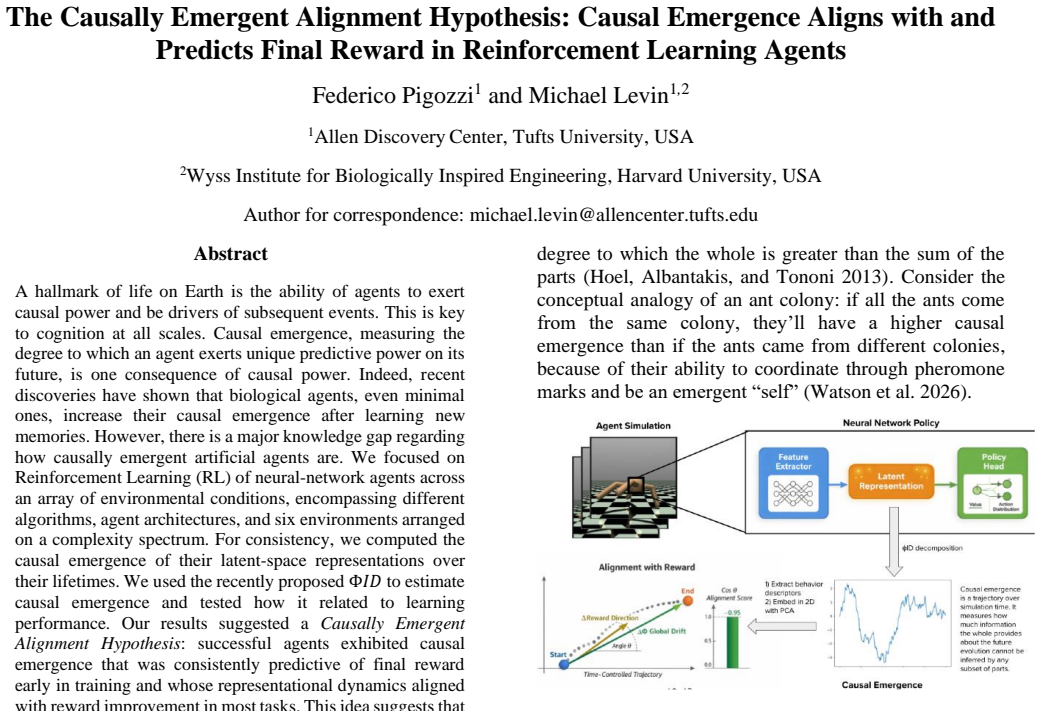

A hallmark of life on Earth is the ability of agents to exert causal power and be drivers of subsequent events. This is key to cognition at all scales. Causal emergence, measuring the degree to which an agent exerts unique predictive power on its future, is one consequence of causal power. Indeed, recent discoveries have shown that biological agents, even minimal ones, increase their causal emergence after learning new memories. However, there is a major knowledge gap regarding how causally emergent artificial agents are. We focused on Reinforcement Learning (RL) of neural-network agents across an array of environmental conditions, encompassing different algorithms, agent architectures, and six environments arranged on a complexity spectrum. For consistency, we computed the causal emergence of their latent-space representations over their lifetimes. We used the recently proposed {\Phi}ID to estimate causal emergence and tested how it related to learning performance. Our results suggested a Causally Emergent Alignment Hypothesis: successful agents exhibited causal emergence that was consistently predictive of final reward early in training and whose representational dynamics aligned with reward improvement in most tasks. This idea suggests that causal emergence may be a previously undisclosed axis of reorganization of neural representations in RL agents, with the potential to establish causal relationships and interventions that will lead to better RL agents. Our work also highlights the alignment between causal emergence and learning as another way biological and artificial creatures compare.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces the Causally Emergent Alignment Hypothesis, asserting that across multiple RL algorithms, neural architectures, and six environments of varying complexity, successful agents exhibit causal emergence (quantified via ΦID on latent-space activations) that is predictive of final reward early in training and whose dynamics align with reward improvement over the course of learning. The work computes ΦID consistently on latent representations throughout agent lifetimes and links these measures to performance outcomes, suggesting causal emergence as a reorganization axis in RL representations with potential for better agent design and biological parallels.

Significance. If the central empirical correlations hold under rigorous validation of the measure, the hypothesis would identify a novel, previously unexamined dimension of representational change in RL that tracks learning success, offering a potential bridge between artificial and biological agents and opening avenues for causal interventions during training. The breadth of conditions tested (algorithms, architectures, environments) strengthens the claim if methodological concerns are addressed.

major comments (3)

- [Methods] Methods section: The application of ΦID to high-dimensional continuous latent activations from neural networks is not accompanied by explicit validation or justification of any required discretization, binning, or approximation steps. Since ΦID was formulated for discrete, low-dimensional systems with explicit causal graphs, the absence of such checks means the reported causal emergence values may not accurately capture unique predictive power over future states, directly undermining the hypothesis.

- [Results] Results section (and abstract claims): The manuscript reports that ΦID values are 'consistently predictive' of final reward and 'aligned with reward improvement in most tasks' across conditions, yet provides no statistical tests, error bars, confidence intervals, or details on handling of data exclusions and multiple comparisons. This omission prevents assessment of whether the observed relationships are robust or could arise from shared sensitivity to training progress.

- [Results/Discussion] §4 (or equivalent results/discussion): The central claim treats early-training ΦID as predictive of final reward, but without controls for confounding factors such as environment statistics or general training dynamics, it remains unclear whether the correlations reflect genuine causal-emergence alignment rather than artifacts of the chosen post-hoc analysis or environments.

minor comments (1)

- [Abstract] The abstract and introduction could more clearly distinguish correlation from the stronger language of 'predictive' and 'aligns with' to avoid overstatement pending statistical confirmation.

Simulated Author's Rebuttal

We thank the referee for their constructive and detailed feedback, which has helped us identify areas to strengthen the manuscript. We address each major comment below with specific plans for revision where feasible.

read point-by-point responses

-

Referee: [Methods] Methods section: The application of ΦID to high-dimensional continuous latent activations from neural networks is not accompanied by explicit validation or justification of any required discretization, binning, or approximation steps. Since ΦID was formulated for discrete, low-dimensional systems with explicit causal graphs, the absence of such checks means the reported causal emergence values may not accurately capture unique predictive power over future states, directly undermining the hypothesis.

Authors: We agree that the discretization procedure requires explicit justification and validation. In the original work, latent activations were first reduced via PCA to the top 10 components and then discretized into 8 equal-frequency bins per component to enable ΦID computation on the resulting discrete variables. We will expand the Methods section with a dedicated subsection detailing this pipeline, citing prior applications of information-theoretic measures to neural activations, and add supplementary robustness checks varying bin count (4–16) and PCA dimensionality to confirm that the reported correlations with reward remain stable. revision: yes

-

Referee: [Results] Results section (and abstract claims): The manuscript reports that ΦID values are 'consistently predictive' of final reward and 'aligned with reward improvement in most tasks' across conditions, yet provides no statistical tests, error bars, confidence intervals, or details on handling of data exclusions and multiple comparisons. This omission prevents assessment of whether the observed relationships are robust or could arise from shared sensitivity to training progress.

Authors: We acknowledge the need for greater statistical transparency. The revised Results section and associated figures will report Pearson correlation coefficients with Bonferroni-corrected p-values across the tested environments and algorithms, standard-error bars computed over five independent random seeds per condition, and 95% confidence intervals for the early-training ΦID–final-reward relationships. We will also clarify that all training runs were retained except for the small fraction (<5%) that failed to produce any learning progress, and that alignment was quantified via Spearman rank correlations between the ΦID and reward time series. revision: yes

-

Referee: [Results/Discussion] §4 (or equivalent results/discussion): The central claim treats early-training ΦID as predictive of final reward, but without controls for confounding factors such as environment statistics or general training dynamics, it remains unclear whether the correlations reflect genuine causal-emergence alignment rather than artifacts of the chosen post-hoc analysis or environments.

Authors: This concern is well-taken. We will add several control analyses to the revised Results: partial correlations that hold training epoch and mean episode length constant, direct comparisons of ΦID’s predictive power against simpler statistics such as activation variance and state–action mutual information, and environment-normalized ΦID scores. While the breadth of six environments and multiple algorithms already provides some protection against environment-specific artifacts, we will explicitly discuss residual confounding as a limitation in the Discussion and propose targeted causal-intervention experiments for future work. revision: partial

Circularity Check

Empirical correlations between ΦID and reward exhibit no circular derivation

full rationale

The paper reports observational results: ΦID computed on latent activations of RL agents is correlated with final reward and its dynamics align with reward improvement across environments. No equations define the target quantity in terms of itself, no parameter is fitted to a subset and then relabeled as a prediction, and the central hypothesis is not justified solely by self-citation. The ΦID measure is imported from prior work as an external tool; the load-bearing content is the new empirical patterns across algorithms and tasks, which remain independently falsifiable. This is the expected non-circular outcome for an empirical study.

Axiom & Free-Parameter Ledger

Lean theorems connected to this paper

-

IndisputableMonolith/Cost/FunctionalEquation.leanwashburn_uniqueness_aczel unclear?

unclearRelation between the paper passage and the cited Recognition theorem.

We used the recently proposed ΦID to estimate causal emergence... decomposed this capacity as the sum of two terms: Downward causation... Synergy

-

IndisputableMonolith/Foundation/ArithmeticFromLogic.leanembed_injective unclear?

unclearRelation between the paper passage and the cited Recognition theorem.

Gaussian Information Theory... copula-based Gaussianization... minimum-information bipartition

-

IndisputableMonolith/Foundation/BranchSelection.leanbranch_selection unclear?

unclearRelation between the paper passage and the cited Recognition theorem.

global reward alignment... cosine similarity between w and the global direction

What do these tags mean?

- matches

- The paper's claim is directly supported by a theorem in the formal canon.

- supports

- The theorem supports part of the paper's argument, but the paper may add assumptions or extra steps.

- extends

- The paper goes beyond the formal theorem; the theorem is a base layer rather than the whole result.

- uses

- The paper appears to rely on the theorem as machinery.

- contradicts

- The paper's claim conflicts with a theorem or certificate in the canon.

- unclear

- Pith found a possible connection, but the passage is too broad, indirect, or ambiguous to say the theorem truly supports the claim.

Reference graph

Works this paper leans on

-

[1]

Fields, C., A

'AI -driven automated discovery tools reveal diverse behavioral competencies of biological networks', Elife, 13. Fields, C., A. Goldstein, and L. Sandved -Smith. 2024. 'Making the Thermodynamic Cost of Active Inference Explicit', Entropy, 26. Gao, H. C., T. R. Xu, T. R. Zhang, Y. Q. Guo, C. J. Zhao, J. S. Ren, Y. Z. Jiang, S. Q. Guo, and F. Chen. 2025. 'C...

2024

-

[2]

Levin, M

'The information theory of individuality', Theory in Biosciences, 139: 209-23. Levin, M. 2019. 'The Computational Boundary of a "Self": Developmental Bioe lectricity Drives Multicellularity and Scale-Free Cognition', Frontiers in Psychology, 10. Levin, M. 2022. 'Technological Approach to Mind Everywhere: An Experimentally -Grounded Framework for Understan...

2019

-

[3]

Rosas, F

'Quantifying high -order interdependencies via multivariate extensions of the mutual information', Physical Review E, 100. Rosas, F. E., P. A. M. Mediano, H. J. Jensen, A. K. Seth, A. B. Barrett, R. L. Carhart -Harris, and D. Bor. 2020. 'Reconciling emergences: An information -theoretic approach to identify ca usal emergence in multivariate data', Plos Co...

2020

-

[4]

Vernon, D., R

'The topology of synergy: Linking topological and information -theoretic approaches to higher -order interactions in complex systems', Plos Computational Biology, 21. Vernon, D., R. Lowe, S. Thill, and T. Ziemke. 2015. 'Embodied cognition and circular causality: on the role of constitutive autonomy in the reciprocal coupling of perception and action', Fro...

2015

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.