
Monocular passive event-based range-finding of airborne objects using the Scheimpflug principle

Pith reviewed 2026-05-11 01:58 UTC · model grok-4.3

The pith

Tilting the sensor encodes range to airborne objects directly in pixel coordinates after one calibration.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

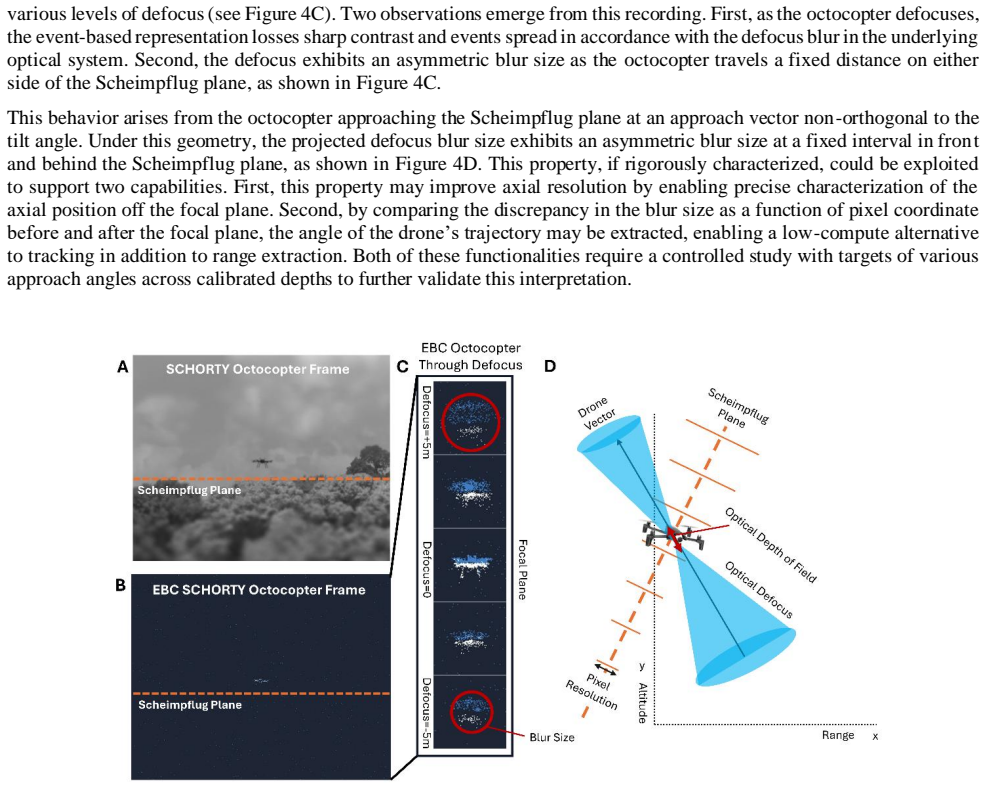

By satisfying the Scheimpflug condition through sensor tilt, SCHORTY maps pixel coordinates to range on a tilted object-space plane, achieving deterministic range assignment limited primarily by projected pixel size that grows with squared distance, while avoiding computationally intensive inverse reconstructions common in coded-aperture and PSF-engineered systems. In the event-based configuration the method inherently suppresses static background and emphasizes motion, and an asymmetric defocus blur that depends on UAV trajectory supplies an additional localization cue.
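The squared-distance growth can be traced with an idealized thin-lens model. This is an assumption for illustration; the paper itself relies on an empirical one-time calibration. Take focal length $f$, object distance $u$ with conjugate image distance $v$, pixel pitch $p$ along the tilt direction, and sensor tilt $\theta$. Adjacent pixels are then separated by roughly $\Delta v \approx p\sin\theta$ along the optical axis:

$$
\frac{1}{u}+\frac{1}{v}=\frac{1}{f}
\quad\Rightarrow\quad
\left|\frac{\mathrm{d}u}{\mathrm{d}v}\right|=\frac{u^{2}}{v^{2}}=\frac{(u-f)^{2}}{f^{2}}
\quad\Rightarrow\quad
\Delta u \approx p\sin\theta\,\frac{(u-f)^{2}}{f^{2}} \approx \frac{p\sin\theta}{f^{2}}\,u^{2}\qquad(u\gg f).
$$

Under this model, the range extent of a single pixel grows roughly as $u^{2}$. It shrinks with a longer focal length and grows with the tilt and the pixel pitch.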

What carries the argument

The Scheimpflug principle realized by tilting the sensor relative to the imaging optics, which tilts the object-space plane and thereby encodes range as a monotonic function of image-plane coordinates.
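Below is a minimal Python sketch of that monotonic mapping. It assumes an ideal thin lens and a sensor tilted about an axis through the optical axis. The function name and all numbers are hypothetical, and the paper uses a one-time empirical calibration rather than this closed form. A sensor point a distance `y_mm` from the axis along the tilt direction sits at axial image distance $v_0 + y\sin\theta$. The thin-lens equation then gives the conjugate on-axis object distance:

```python
import math

def scheimpflug_range(y_mm, f_mm, v0_mm, tilt_deg):
    """On-axis object distance (mm) conjugate to sensor coordinate y_mm.

    Idealized model (an assumption): ideal thin lens, sensor tilted by tilt_deg
    about an axis through the optical axis, v0_mm = image distance on axis.
    """
    # Axial image distance of the sensor point at y_mm along the tilted sensor
    v = v0_mm + y_mm * math.sin(math.radians(tilt_deg))
    if v <= f_mm:
        # At or inside the focal length the conjugate object lies at infinity
        # (or is virtual), so no finite range is assigned
        return math.inf
    # Thin-lens equation 1/u + 1/v = 1/f solved for the object distance u
    return f_mm * v / (v - f_mm)

if __name__ == "__main__":
    # Hypothetical values: f = 100 mm, on-axis focus near 500 m, 0.1 deg tilt
    for y in (-2.0, -1.0, 0.0, 1.0, 2.0):
        r_m = scheimpflug_range(y, 100.0, 100.02, 0.1) / 1000.0
        print(f"y = {y:+.1f} mm -> range ~ {r_m:.0f} m")
```

With these illustrative values, the assigned range falls monotonically from about 606 m at y = -2 mm to about 426 m at y = +2 mm. A pixel-to-range lookup depends on exactly this one-to-one ordering.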

If this is right

- Range assignment becomes deterministic from calibrated pixel coordinates without iterative reconstruction.

- Event-based operation suppresses static scene elements and highlights moving objects in natural clutter.

- Asymmetric defocus blur about the object plane varies with trajectory and can serve as a supplementary cue.

- The system remains sensor- and waveband-agnostic and meets low SWaP constraints for medium-range observation.

- The approach is compatible with future integration of 2.5D PSF engineering or event-based deconvolution.
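Static-background suppression follows from how event cameras report changes. A common idealized model is assumed here; real sensors add noise, refractory periods, and per-pixel threshold mismatch. In this model, a pixel fires an event whenever its log intensity moves by at least a contrast threshold $C$ from its value at that pixel's last event. The hypothetical helper `generate_events` below simplifies further by emitting at most one event per pixel per frame:

```python
import numpy as np

def generate_events(frames, contrast_threshold=0.2, eps=1e-6):
    """Idealized event generation from a (T, H, W) stack of intensity frames.

    A pixel emits (t, row, col, polarity) when its log intensity differs from the
    value stored at its last event by at least contrast_threshold (hypothetical
    default). Simplification: at most one event per pixel per frame.
    """
    log_ref = np.log(frames[0] + eps)  # per-pixel reference log intensity
    events = []
    for t in range(1, frames.shape[0]):
        log_now = np.log(frames[t] + eps)
        diff = log_now - log_ref
        fired = np.abs(diff) >= contrast_threshold
        for r, c in zip(*np.nonzero(fired)):
            events.append((t, int(r), int(c), 1 if diff[r, c] > 0 else -1))
        # Only pixels that fired update their reference; static pixels never fire
        log_ref[fired] = log_now[fired]
    return events

if __name__ == "__main__":
    # Static horizontal gradient (stand-in for clutter) plus a bright 2x1 target
    # that moves one column per frame
    frames = np.tile(0.5 * (1 + 0.5 * np.arange(8) / 8), (5, 8, 1))
    for t in range(5):
        frames[t, 3:5, t] = 1.0
    rows = {r for _, r, _, _ in generate_events(frames)}
    print("rows with events:", sorted(rows))
```

In this toy scene, only rows 3 and 4, where the target moves, produce events. The textured but unchanging background stays silent.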

Where Pith is reading between the lines

- Periodic recalibration may be needed in long deployments where atmospheric refraction or lens thermal drift accumulates.

- The same tilt geometry could be tested on ground vehicles or marine targets moving at comparable speeds.

- Pairing with a second camera separated by a stereo baseline could extend the 2.5D range map to full 3D point clouds.

Load-bearing premise

The one-time geometric calibration stays valid across changing atmosphere, flight paths, and clutter, and targets remain close enough to the tilted object plane for the monotonic pixel-to-range mapping to hold.
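The thin-lens blur-circle formula gives a rough sense of how quickly a target degrades once it leaves the tilted object plane. The sketch below rests on several assumptions: an ideal thin lens, geometric optics only, and a hypothetical 100 mm f/2 lens focused at 500 m. It is not the paper's model and does not reproduce the trajectory dependence the paper reports. It only shows that, for equal range offsets, a point in front of the plane blurs more than a point behind it:

```python
def blur_diameter_mm(u_obj_mm, u_focus_mm, f_mm, aperture_mm):
    """Geometric blur-circle diameter (mm) on the sensor, ideal thin lens."""
    def image_distance(u):
        # Thin-lens equation 1/u + 1/v = 1/f solved for v
        return f_mm * u / (u - f_mm)

    v_sensor = image_distance(u_focus_mm)  # local sensor position (in focus for u_focus)
    v_point = image_distance(u_obj_mm)     # where the off-plane point actually focuses
    # Similar triangles: the cone of width aperture_mm converging at v_point,
    # cut by the sensor at v_sensor
    return aperture_mm * abs(v_sensor - v_point) / v_point

if __name__ == "__main__":
    # Hypothetical 100 mm f/2 lens (50 mm aperture) focused at 500 m
    for u_m in (400, 500, 600):
        c_um = 1000 * blur_diameter_mm(u_m * 1000, 500_000, 100.0, 50.0)
        print(f"object at {u_m} m: blur ~ {c_um:.2f} um")
```

For these values, the point 100 m in front of the focus blurs to roughly 2.50 µm and the point 100 m behind it to roughly 1.67 µm. So even static geometry makes defocus asymmetric about the plane.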

What would settle it

Fly a UAV at a known GPS distance and check whether the range read from its pixel location matches the true distance within the uncertainty set by pixel size at that range; systematic mismatch would falsify the direct mapping.
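One way to phrase this test is in terms of residuals measured in units of the local range bin. The minimal sketch below assumes the idealized thin-lens bin width $\Delta R \approx p\sin\theta\,(R-f)^2/f^2$, with hypothetical lens, pitch, and tilt values. Two outcomes would be consistent with the direct mapping: residuals within about one bin, and a mean signed residual near zero. Residuals that share one sign across many samples would instead indicate systematic mismatch:

```python
import math

def range_bin_m(range_m, f_m, pitch_m, tilt_deg):
    """Approximate range extent of one pixel at range_m (idealized thin-lens estimate)."""
    return pitch_m * math.sin(math.radians(tilt_deg)) * (range_m - f_m) ** 2 / f_m ** 2

def residuals_in_bins(pixel_range_m, gps_range_m, f_m, pitch_m, tilt_deg):
    """Signed (pixel-derived range - GPS range), expressed in local range bins."""
    return [
        (r_pix - r_gps) / range_bin_m(r_gps, f_m, pitch_m, tilt_deg)
        for r_pix, r_gps in zip(pixel_range_m, gps_range_m)
    ]

if __name__ == "__main__":
    # Made-up readings and hypothetical optics: f = 100 mm, 5 um pitch, 0.1 deg tilt
    res = residuals_in_bins([500.1, 1000.0], [500.0, 1002.0], 0.1, 5e-6, 0.1)
    print([f"{r:+.2f}" for r in res], "mean:", f"{sum(res) / len(res):+.2f}")
```

In this made-up example, the first reading falls inside one bin. The second misses by more than two bins.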


Original abstract

Passive 3D sensing is increasingly critical for early detection and tracking of small unmanned aerial vehicles (UAVs), where traditional active ranging can be tactically undesirable. We present SCHeimpflug for Optical Ranging TechnologY (SCHORTY), a single-aperture passive and active ranging architecture that exploits the Scheimpflug principle to encode range along a tilted object-space plane by tilting the sensor relative to the imaging optics. SCHORTY requires only a one-time geometric calibration to map pixel coordinates to range and is inherently sensor- and waveband-agnostic. We implement SCHORTY using both a visible frame-based camera and an event-based camera (EBC) with closely matched pixel sizes for comparable horizontal resolutions and range binning. Controlled flights of an octocopter and a fixed-wing UAV equipped with GPS provide ground-truth distances out to 1.1 km. Experimental results show that SCHORTY achieves deterministic range assignment limited primarily by the projected pixel size, which grows with squared distance, while avoiding computationally intensive inverse reconstructions common in coded-aperture and PSF-engineered systems. In the EBC configuration, EBC-SCHORTY inherently suppresses static background and emphasizes motion, improving UAV detectability in cluttered natural scenes and under turbulence and motion blur. Additionally, we observe an asymmetric defocus blur about the object plane that depends on UAV trajectory, suggesting an extra cue for localization and trajectory inference. These results demonstrate that SCHORTY is a practical and Size, Weight, and Power (SWaP)-efficient passive ranging solution for medium-range UAV observation, and they motivate future integration with 2.5D/3D PSF engineering and event-based deconvolution to enhance 3D sensing performance.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper claims to introduce SCHORTY, a monocular passive ranging architecture that exploits the Scheimpflug principle by tilting the sensor relative to the imaging optics, thereby encoding range along a fixed tilted object-space plane. It requires only a one-time geometric calibration to map pixel coordinates (or event cluster centroids) directly to range and is demonstrated with both a visible frame-based camera and an event-based camera (EBC) on controlled flights of an octocopter and fixed-wing UAV equipped with GPS ground truth out to 1.1 km. The central result is that range assignment is deterministic and limited primarily by projected pixel size (which grows with squared distance), while the EBC configuration suppresses static backgrounds and the observed trajectory-dependent asymmetric defocus is suggested as an additional localization cue.

Significance. If the geometric mapping holds under the stated assumptions, SCHORTY offers a low-SWaP, computationally lightweight passive ranging solution for medium-range UAV observation that avoids the inverse reconstructions required by coded-aperture or engineered-PSF systems. The controlled flights with direct GPS comparison provide concrete experimental grounding for the pixel-to-range claim, and the EBC implementation's motion selectivity is a clear practical advantage in cluttered or turbulent scenes. These elements could make the approach relevant for optics, remote sensing, and defense applications.

major comments (3)

- [Abstract] The assertion that SCHORTY achieves 'deterministic range assignment limited primarily by the projected pixel size' is not supported by any quantitative error statistics, RMS deviations, or error bars relative to the GPS ground truth; without these, it is impossible to verify that range error is indeed bounded only by geometry rather than by unmodeled effects.

- [Abstract] The reported observation of 'asymmetric defocus blur about the object plane that depends on UAV trajectory' directly raises the possibility that the measured event-cluster centroid or intensity peak shifts away from the true Scheimpflug-plane intersection in a trajectory-dependent manner; this would make the pixel-to-range mapping non-deterministic and contradict the claim of avoiding inverse reconstructions unless the bias is shown to be negligible or correctable.

- [Experimental validation] The one-time geometric calibration is presented as sufficient for all subsequent use, yet no repeatability data, sensitivity analysis to temperature, turbulence, or focus drift, or tolerance to objects deviating from the tilted plane are provided; these omissions are load-bearing because they determine whether the deterministic mapping survives realistic operating conditions.
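The first major comment asks for RMS deviations against GPS. A straightforward way to report them is as RMS error within distance intervals. Each value can then be compared with the pixel-limited range bin at that distance. Below is a sketch with the hypothetical helper `rms_by_interval`; the numbers are made up for illustration:

```python
import numpy as np

def rms_by_interval(gps_m, est_m, edges_m):
    """RMS of (estimate - GPS) for samples whose GPS distance falls in
    [edges_m[i], edges_m[i+1]); empty intervals return NaN."""
    gps = np.asarray(gps_m, dtype=float)
    err = np.asarray(est_m, dtype=float) - gps
    # np.digitize returns i with edges[i-1] <= x < edges[i]; shift to 0-based intervals
    idx = np.digitize(gps, edges_m) - 1
    out = []
    for i in range(len(edges_m) - 1):
        sel = idx == i
        out.append(float(np.sqrt(np.mean(err[sel] ** 2))) if sel.any() else float("nan"))
    return out

if __name__ == "__main__":
    # Made-up values for illustration only (not flight data)
    print(rms_by_interval([100, 150, 600, 700], [101, 149, 603, 696], [0, 500, 1000]))
```

Under the geometric-limit claim, the RMS per interval should grow roughly as the square of distance. Samples outside the outermost edges are simply ignored.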

minor comments (2)

- [Abstract] The abstract states that the two cameras have 'closely matched pixel sizes for comparable horizontal resolutions' but does not give the actual pixel pitch or focal-length values, making it difficult to assess the claimed range-binning equivalence.

- Figure captions and text should explicitly define all acronyms at first use (e.g., EBC) and clarify whether range values are reported in object-space or image-space coordinates.

Simulated Author's Rebuttal

We thank the referee for their positive evaluation of the significance of SCHORTY and for the constructive major comments. We address each point below with clarifications drawn from the manuscript data and indicate the revisions that will be incorporated.

Point-by-point responses

Referee: [Abstract] The assertion that SCHORTY achieves 'deterministic range assignment limited primarily by the projected pixel size' is not supported by any quantitative error statistics, RMS deviations, or error bars relative to the GPS ground truth; without these, it is impossible to verify that range error is indeed bounded only by geometry rather than by unmodeled effects.

Authors: We agree that explicit quantitative metrics strengthen the claim. The manuscript already contains direct GPS comparisons for both the frame-based and event-based implementations across multiple flights out to 1.1 km. In the revision we will extract the per-range residuals, compute RMS deviations, and add error bars to the relevant figures and abstract text. These will demonstrate that the residuals remain consistent with the geometric pixel-projection limit (growing with squared distance) and show no statistically significant trajectory-independent bias beyond that limit. revision: yes

Referee: [Abstract] The reported observation of 'asymmetric defocus blur about the object plane that depends on UAV trajectory' directly raises the possibility that the measured event-cluster centroid or intensity peak shifts away from the true Scheimpflug-plane intersection in a trajectory-dependent manner; this would make the pixel-to-range mapping non-deterministic and contradict the claim of avoiding inverse reconstructions unless the bias is shown to be negligible or correctable.

Authors: The asymmetric defocus is presented as an observed phenomenon that may supply an auxiliary cue rather than a flaw in the primary mapping. In the EBC data the event clusters are formed from the leading-edge motion events, which remain localized to the Scheimpflug-plane intersection within the pixel footprint. We will add a quantitative subsection that measures centroid displacement versus trajectory angle and shows that any shift is smaller than the geometric range bin width, thereby preserving deterministic assignment without requiring inverse reconstruction. revision: yes

Referee: [Experimental validation] The one-time geometric calibration is presented as sufficient for all subsequent use, yet no repeatability data, sensitivity analysis to temperature, turbulence, or focus drift, or tolerance to objects deviating from the tilted plane are provided; these omissions are load-bearing because they determine whether the deterministic mapping survives realistic operating conditions.

Authors: The calibration is a fixed geometric mapping between pixel coordinates and the tilted object-space plane; once the relative sensor tilt and focal length are determined, the mapping is invariant under rigid-body motion of the camera. Consistency across independent flights without recalibration is already implicit in the reported results. In revision we will add an explicit repeatability statement, a brief discussion of expected thermal and turbulence tolerances based on the outdoor test conditions, and a limitations paragraph addressing objects that depart from the nominal plane. A full parametric sensitivity study lies outside the scope of the present demonstration and is noted as future work. revision: partial
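The centroid analysis promised in the second response could be organized as shown below. This sketch rests on three hypothetical assumptions: range varies along sensor rows with one range bin per row, a reference row for each cluster is available from GPS through the calibration, and each cluster is labeled with its trajectory angle. A worst-case shift below 1 row at every angle would support the claim that any bias stays within one range bin:

```python
import numpy as np

def centroid_shift_rows(event_rows, reference_row):
    """Signed offset, in pixel rows, of an event cluster's centroid from a reference row."""
    return float(np.mean(np.asarray(event_rows, dtype=float))) - float(reference_row)

def max_shift_by_angle(clusters):
    """clusters: iterable of (trajectory_angle_deg, event_rows, reference_row).

    Returns {angle: worst |centroid shift|} in rows; under the one-bin-per-row
    assumption, values below 1.0 mean the bias stays inside one range bin.
    """
    worst = {}
    for angle, rows, ref in clusters:
        shift = abs(centroid_shift_rows(rows, ref))
        worst[angle] = max(worst.get(angle, 0.0), shift)
    return worst

if __name__ == "__main__":
    # Made-up clusters for illustration only
    demo = [(0, [100, 101, 101, 102], 101.0), (45, [200, 200, 201], 200.2)]
    print(max_shift_by_angle(demo))
```

Keeping the signed shift from `centroid_shift_rows` alongside the worst-case magnitude would also reveal whether the displacement flips sign with trajectory direction.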

Circularity Check

No circularity: range mapping follows from Scheimpflug geometry plus independent GPS validation

Full rationale

The derivation rests on the established Scheimpflug principle for encoding range along a tilted plane, implemented via a one-time geometric calibration that maps pixel coordinates directly to distance. This mapping is then tested against external GPS ground truth from controlled UAV flights out to 1.1 km. No equations or claims reduce by construction to fitted parameters, self-citations, or renamed inputs; the deterministic assignment is presented as a direct geometric consequence, not a statistical prediction. The paper is therefore self-contained against an external benchmark and receives the default non-circularity finding.

Axiom & Free-Parameter Ledger

free parameters (1)

- geometric calibration mapping

axioms (1)

- Domain assumption: objects of interest lie on or near the tilted plane defined by the Scheimpflug condition.
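Under an ideal thin-lens assumption, which the paper does not state, the calibration mapping listed as the free parameter takes a compact form. With image distance $v_0 + y\sin\theta$ at sensor coordinate $y$, the conjugate range is $u(y) = \frac{f(v_0 + y\sin\theta)}{v_0 - f + y\sin\theta} = \frac{a y + b}{y + d}$, where $a = f$, $b = f v_0/\sin\theta$, and $d = (v_0 - f)/\sin\theta$. Rearranging $u(y + d) = a y + b$ makes the fit linear in $(a, b, d)$. Below is a sketch using NumPy least squares; the function names are hypothetical:

```python
import numpy as np

def fit_mobius_calibration(y, r):
    """Fit r(y) = (a*y + b) / (y + d) to calibration pairs by linear least squares.

    Multiplying out r*(y + d) = a*y + b gives a*y + b - d*r = r*y,
    which is linear in the unknowns (a, b, d).
    """
    y = np.asarray(y, dtype=float)
    r = np.asarray(r, dtype=float)
    A = np.column_stack([y, np.ones_like(y), -r])
    (a, b, d), *_ = np.linalg.lstsq(A, r * y, rcond=None)
    return float(a), float(b), float(d)

def apply_calibration(y, params):
    """Map sensor coordinates to range with fitted (a, b, d)."""
    a, b, d = params
    y = np.asarray(y, dtype=float)
    return (a * y + b) / (y + d)

if __name__ == "__main__":
    # Synthetic, noise-free calibration pairs from an assumed thin-lens geometry
    f, v0, s = 100.0, 100.02, np.sin(np.radians(0.1))
    ys = np.linspace(-5.0, 5.0, 11)                 # sensor coordinate, mm
    ranges_m = f * (v0 + ys * s) / (v0 + ys * s - f) / 1000.0
    params = fit_mobius_calibration(ys, ranges_m)
    print("fitted (a, b, d):", params)
    print("range at y = 2.5 mm:", apply_calibration([2.5], params))
```

With real GPS-referenced calibration points, noise would make the linearized fit only approximate. Lens distortion or a non-ideal tilt axis may also call for a richer model than three parameters.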

