TRACE: Transport Alignment Conformal Prediction via Diffusion and Flow Matching Models

Pith reviewed 2026-05-11 01:10 UTC · model grok-4.3

The pith

Averaging errors along transport trajectories produces scalar scores for valid conformal prediction in diffusion and flow matching models.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

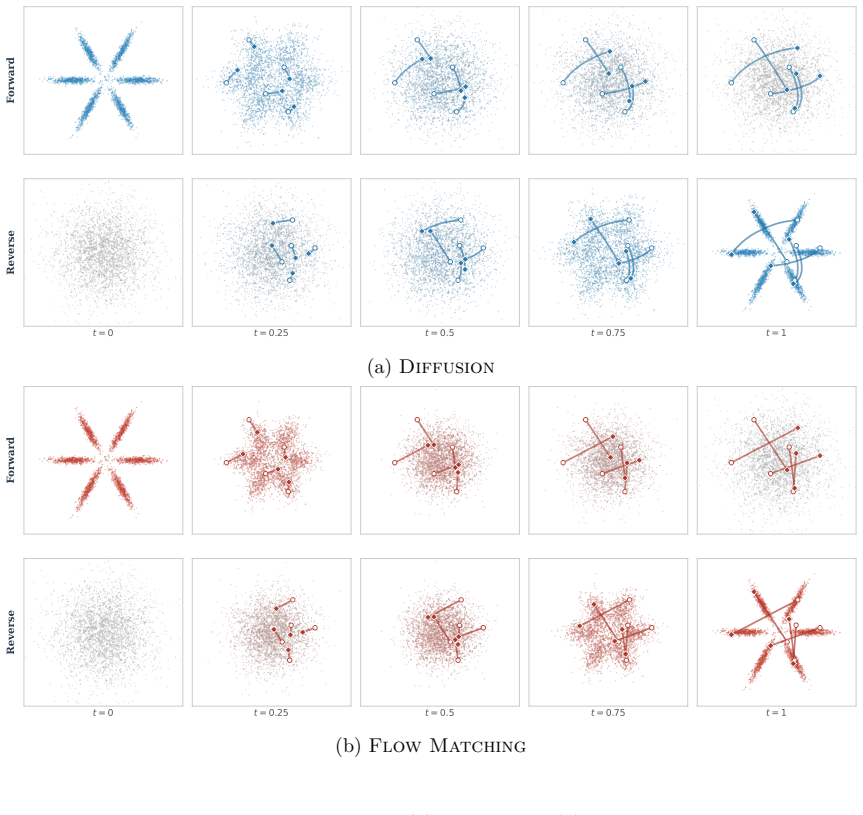

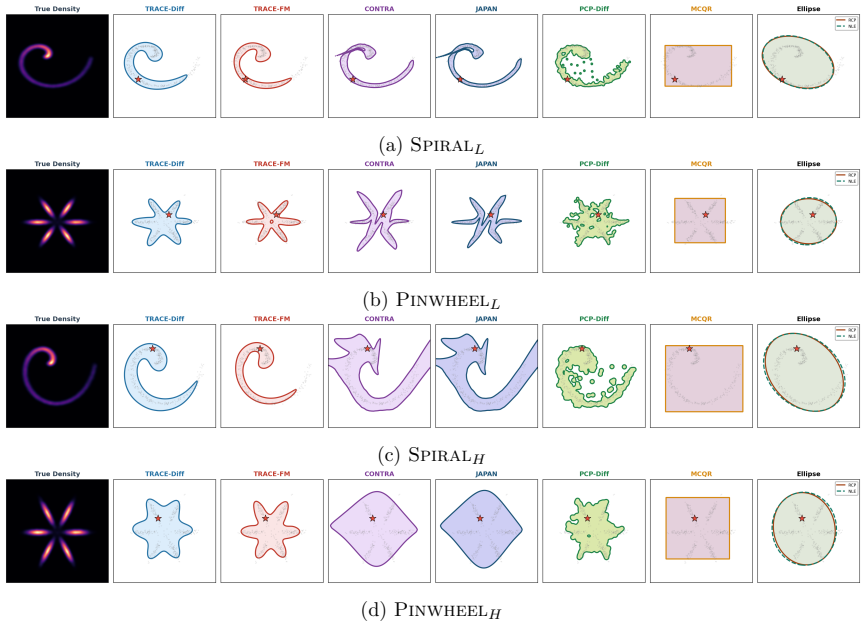

TRACE defines nonconformity through transport alignment by averaging denoising or velocity-matching errors along stochastic transport trajectories in diffusion and flow matching models. The resulting scalar scores are calibrated with split conformal prediction to obtain valid marginal coverage under exchangeability, without explicit likelihood evaluation or additional geometric assumptions on the conditional distribution. The paper analyzes the statistical properties of the scores, including their behavior under a limited computational budget, and its experiments show that the induced prediction regions adapt to multimodal and non-convex supports.

What carries the argument

A transport-alignment nonconformity score, formed by averaging denoising or velocity-matching errors collected along stochastic trajectories of the generative dynamics.
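As a concrete illustration, the Python sketch below computes a velocity-matching score of this kind. It is not the paper's implementation: it assumes the linear (rectified-flow) interpolation x_t = (1 - t) z + t y with z ~ N(0, I), times drawn uniformly on [0, 1), and an unweighted squared error. The hypothetical `mixture_velocity` stands in for a trained conditional field v_θ(x_t, t | x) at one fixed covariate; it is the exact velocity for an equal-weight Gaussian mixture, so the effect of multimodality can be seen without training anything.

```python
from functools import partial

import numpy as np


def mixture_velocity(x_t, t, means, s):
    """Exact E[y - z | x_t] for x_t = (1 - t) z + t y, z ~ N(0, I),
    y ~ equal-weight mixture of N(m_k, s^2 I). Hypothetical stand-in for a trained model."""
    t = t[:, None]                                          # (B, 1)
    var = (1.0 - t) ** 2 + (t * s) ** 2                     # Var(x_t | k), per coordinate
    c = (t * s**2 - (1.0 - t)) / var                        # Cov(y - z, x_t | k) / Var(x_t | k)
    diff = x_t[:, None, :] - t[:, :, None] * means[None]    # x_t - t m_k, shape (B, K, d)
    logits = -np.sum(diff**2, axis=2) / (2.0 * var)         # log N(x_t; t m_k, var I) up to a constant
    w = np.exp(logits - logits.max(axis=1, keepdims=True))
    w /= w.sum(axis=1, keepdims=True)                       # posterior mode weights P(k | x_t)
    comp = means[None] + c[:, :, None] * diff               # E[y - z | x_t, k]
    return np.sum(w[:, :, None] * comp, axis=1)             # (B, d)


def transport_alignment_score(y, velocity, num_draws=4096, rng=None):
    """Average squared velocity-matching error over random points on random trajectories to y."""
    rng = np.random.default_rng(rng)
    y = np.atleast_1d(np.asarray(y, dtype=float))
    t = rng.uniform(0.0, 1.0, size=num_draws)               # times along the path
    z = rng.standard_normal((num_draws, y.size))            # noise end (t = 0) of each trajectory
    x_t = (1.0 - t)[:, None] * z + t[:, None] * y[None, :]  # trajectory points that end at y
    target = y[None, :] - z                                 # velocity of each straight trajectory
    sq_err = np.sum((velocity(x_t, t) - target) ** 2, axis=1)
    return float(sq_err.mean())


means = np.array([[-2.0], [2.0]])                           # two modes in one dimension
velocity = partial(mixture_velocity, means=means, s=0.3)
for y in (-2.0, 0.0, 2.0, 5.0):
    print(y, round(transport_alignment_score([y], velocity, rng=0), 3))
```

With modes at ±2, candidates at the modes should receive noticeably lower scores than the gap at 0 or the far point at 5. Lower means better aligned with the transport, so thresholding these scores can produce a region made of separate pieces around each mode.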

If this is right

- The method supplies finite-sample marginal coverage for multi-dimensional outputs from generative models.

- Prediction regions adapt automatically to multimodal and non-convex conditional distributions.

- Score quality trades off against computational budget through the number of trajectory steps.

- The same score construction applies uniformly to both diffusion and flow-matching formulations.
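The budget trade-off can be checked numerically. In the sketch below (same hypothetical assumptions: linear interpolation path, uniform times, and an exact Gaussian velocity `gaussian_velocity` in place of a trained network), B is the number of (t, z) draws per candidate; the score is re-estimated 200 times per budget and the spread of the estimates is reported. For an average of B independent draws with finite variance, the standard deviation should shrink like 1/√B, so std·√B should stay roughly constant.

```python
from functools import partial

import numpy as np


def gaussian_velocity(x_t, t, m, s):
    """Exact E[y - z | x_t] for x_t = (1 - t) z + t y, z ~ N(0, I), y ~ N(m, s^2 I).
    Hypothetical stand-in for a trained velocity network."""
    t = t[:, None]
    c = (t * s**2 - (1.0 - t)) / ((1.0 - t) ** 2 + (t * s) ** 2)
    return m + c * (x_t - t * m)


def mc_score(y, velocity, num_draws, rng):
    """Monte Carlo estimate of the transport-alignment score using B = num_draws (t, z) pairs."""
    t = rng.uniform(0.0, 1.0, size=num_draws)
    z = rng.standard_normal((num_draws, y.size))
    x_t = (1.0 - t)[:, None] * z + t[:, None] * y
    return float(np.mean(np.sum((velocity(x_t, t) - (y - z)) ** 2, axis=1)))


rng = np.random.default_rng(0)
m, y = np.array([1.0, -1.0]), np.array([1.5, 0.0])
velocity = partial(gaussian_velocity, m=m, s=0.5)
spread = {}
for B in (64, 256, 1024, 4096):
    estimates = [mc_score(y, velocity, B, rng) for _ in range(200)]  # 200 independent replicates
    spread[B] = float(np.std(estimates))
    print(f"B={B:5d}  std={spread[B]:.4f}  std*sqrt(B)={spread[B] * np.sqrt(B):.3f}")
```

This measures only the Monte Carlo variability of the score; any bias from a coarse time grid or an imperfect velocity model is a separate question.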

Where Pith is reading between the lines

- The same trajectory-averaging idea could be tested on other generative processes that admit a denoising or velocity field, such as score-based models outside the diffusion family.

- Because the scores remain scalar, they could be combined with existing conformal techniques for conditional or adaptive coverage without further modification.

- If trajectory length is treated as a tunable hyperparameter, one could study whether optimal length depends on the degree of multimodality in the target distribution.

Load-bearing premise

Averaging denoising or velocity-matching errors along stochastic transport trajectories produces nonconformity scores whose calibration yields valid coverage without additional geometric assumptions or likelihood evaluation.

What would settle it

Empirical coverage falling below the nominal level on an exchangeable collection of samples drawn from a known multimodal conditional distribution when the trajectory length used for score computation is held fixed.
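A minimal harness for this check, written as a hypothetical illustration rather than the paper's experiment: calibration and test points are drawn exchangeably from a known two-mode Gaussian mixture (covariate held fixed), each point is scored with the same fixed Monte Carlo budget using a velocity-matching score under the linear-path assumption, and the split-conformal threshold is the ⌈(n + 1)(1 - α)⌉-th smallest calibration score. Average coverage clearly below 1 - α across repetitions would be the failure described above.

```python
import numpy as np

MEANS, S = np.array([[-2.0], [2.0]]), 0.3       # known two-mode target (hypothetical)


def mixture_velocity(x_t, t):
    """Exact velocity of the linear path toward the equal-weight Gaussian mixture above."""
    t = t[:, None]
    var = (1.0 - t) ** 2 + (t * S) ** 2
    c = (t * S**2 - (1.0 - t)) / var
    diff = x_t[:, None, :] - t[:, :, None] * MEANS[None]
    logits = -np.sum(diff**2, axis=2) / (2.0 * var)
    w = np.exp(logits - logits.max(axis=1, keepdims=True))
    w /= w.sum(axis=1, keepdims=True)
    return np.sum(w[:, :, None] * (MEANS[None] + c[:, :, None] * diff), axis=1)


def scores(ys, num_draws, rng):
    """Transport-alignment scores for a batch ys of shape (n, d) with a fixed budget."""
    n, d = ys.shape
    t = rng.uniform(0.0, 1.0, size=(n, num_draws))           # fresh, independent draws per point
    z = rng.standard_normal((n, num_draws, d))
    x_t = (1.0 - t)[..., None] * z + t[..., None] * ys[:, None, :]
    v = mixture_velocity(x_t.reshape(-1, d), t.reshape(-1)).reshape(n, num_draws, d)
    return np.mean(np.sum((v - (ys[:, None, :] - z)) ** 2, axis=2), axis=1)


def sample_target(n, rng):
    k = rng.integers(0, len(MEANS), size=n)                   # pick a mode uniformly
    return MEANS[k] + S * rng.standard_normal((n, MEANS.shape[1]))


def split_conformal_coverage(alpha=0.1, n_cal=500, n_test=500, num_draws=64, reps=40, seed=0):
    rng = np.random.default_rng(seed)
    covs = []
    for _ in range(reps):
        cal = scores(sample_target(n_cal, rng), num_draws, rng)
        test = scores(sample_target(n_test, rng), num_draws, rng)
        k = int(np.ceil((n_cal + 1) * (1 - alpha)))           # rank of the conformal quantile
        q_hat = np.sort(cal)[k - 1]                            # k-th smallest calibration score
        covs.append(np.mean(test <= q_hat))                    # y is covered iff its score <= q_hat
    return float(np.mean(covs))


print(split_conformal_coverage())
```

Because each point gets its own independent noise draws, the scores stay exchangeable; a small budget should therefore mainly affect region size and shape rather than marginal validity.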

Original abstract

Constructing valid and informative conformal prediction regions for multi-dimensional outputs remains a fundamental challenge. While conformal prediction provides finite-sample, distribution-free coverage guarantees, its practical performance critically depends on the choice of nonconformity score. Existing approaches often rely on restrictive geometric assumptions or require explicit likelihood evaluation and invertible transformations, limiting their applicability in complex generative settings. In this work, we introduce TRACE (TRansport Alignment Conformal Estimation), a conformal prediction framework that defines nonconformity through transport alignment in diffusion and flow matching models. Rather than evaluating likelihoods, we measure how well a candidate output aligns with the learned generative dynamics by averaging denoising or velocity-matching errors along stochastic transport trajectories. The resulting transport-based scores are scalar-valued and can be calibrated using split conformal prediction, yielding valid marginal coverage under exchangeability. We further analyze the statistical properties of the proposed scores and their sensitivity to computational budget. Experiments on synthetic and real datasets demonstrate valid coverage and show that the resulting regions adapt naturally to multimodal and non-convex conditional distributions.
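For the diffusion formulation, the analogous quantity is a denoising error. The sketch below assumes a DDPM-style forward process x_t = √ᾱ_t y + √(1 - ᾱ_t) ε with the linear β schedule of Ho et al. (2020) and a uniformly sampled step index; the paper's weighting across noise levels may differ. `gaussian_eps_predictor` is a hypothetical stand-in for a trained ε-network and is exact for a Gaussian target.

```python
from functools import partial

import numpy as np

T = 1000
betas = np.linspace(1e-4, 0.02, T)            # linear schedule as in DDPM (Ho et al., 2020)
alpha_bar = np.cumprod(1.0 - betas)           # cumulative products alpha_bar_t for t = 1..T


def gaussian_eps_predictor(x_t, ab, m, s):
    """Exact E[eps | x_t] when y ~ N(m, s^2 I); stands in for eps_theta(x_t, t | x)."""
    ab = ab[:, None]
    return np.sqrt(1.0 - ab) / (ab * s**2 + 1.0 - ab) * (x_t - np.sqrt(ab) * m)


def denoising_score(y, eps_model, num_draws=2048, rng=None):
    """Average squared denoising error of y over randomly chosen forward-noising steps."""
    rng = np.random.default_rng(rng)
    y = np.atleast_1d(np.asarray(y, dtype=float))
    ab = alpha_bar[rng.integers(0, T, size=num_draws)]                   # random noise levels
    eps = rng.standard_normal((num_draws, y.size))
    x_t = np.sqrt(ab)[:, None] * y + np.sqrt(1.0 - ab)[:, None] * eps   # forward-noised copies of y
    return float(np.mean(np.sum((eps_model(x_t, ab) - eps) ** 2, axis=1)))


m = np.array([0.0, 1.0])
eps_model = partial(gaussian_eps_predictor, m=m, s=0.5)
print(denoising_score(m, eps_model, rng=0), denoising_score(m + 3.0, eps_model, rng=0))
```

A candidate at the center of the target should score lower than one shifted far from it, mirroring the velocity-matching case.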

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces TRACE, a conformal prediction framework for multi-dimensional outputs that defines nonconformity scores via transport alignment in diffusion and flow matching models. Scores are computed as averages of denoising or velocity-matching errors along stochastic transport trajectories from a fixed generative model; these scalar scores are then calibrated via split conformal prediction to obtain valid marginal coverage under exchangeability. The work further analyzes statistical properties of the scores (including sensitivity to computational budget) and reports experiments on synthetic and real datasets demonstrating adaptation to multimodal and non-convex conditionals.

Significance. If the central claim holds, the contribution is a practical, likelihood-free route to valid conformal regions in complex generative settings that avoids restrictive geometric assumptions or invertible maps. The approach reuses pre-trained diffusion/flow models to produce adaptive scores whose validity follows from the standard split-CP rank argument (conditional on the training data used to fit the generative model), which is a clean and useful observation.

minor comments (4)

- [Abstract] The abstract asserts valid marginal coverage and statistical analysis but provides no derivation sketch or reference to the precise exchangeability conditioning (training data vs. calibration/test points); a one-paragraph outline in §2 or §3 would clarify that the guarantee is the usual one and does not require extra geometric assumptions.

- [Method] The description of score computation (averaging along trajectories) is clear at a high level but lacks an explicit algorithmic box or pseudocode showing how the number of trajectory samples and denoising steps enter the final nonconformity value; this affects reproducibility of the reported efficiency results.

- [Experiments] Experiments are said to demonstrate valid coverage and adaptation to multimodal distributions, yet the abstract and summary give no details on the number of Monte Carlo repetitions, the exact coverage deviation observed, or the baseline methods; adding a table with empirical coverage and region sizes (interval lengths or volumes, with standard errors) would strengthen the efficiency claims.

- Notation for the transport-based score (e.g., how the averaging operator is denoted and whether it is conditional on the candidate point) should be introduced once and used consistently; occasional shifts between denoising-error and velocity-matching formulations are not always sign-posted.
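An algorithm box of the kind the comment asks for could look like the following. This is a hypothetical sketch, not the authors' procedure: it assumes the linear interpolation path, evaluates each of B = `num_traj` noise draws at K = `num_steps` midpoint times, and averages the B·K squared velocity errors, so both budgets enter the score only through that average. `calibrate` and `in_region` show how the resulting scalar is thresholded.

```python
import numpy as np


def trace_like_score(y, velocity, num_traj=32, num_steps=16, rng=None):
    """Hypothetical algorithm box with B = num_traj noise draws and K = num_steps grid times:
    score = (1 / (B K)) * sum_b sum_k || velocity(x_{t_k}^{(b)}, t_k) - (y - z_b) ||^2."""
    rng = np.random.default_rng(rng)
    y = np.atleast_1d(np.asarray(y, dtype=float))
    t_grid = (np.arange(num_steps) + 0.5) / num_steps         # K midpoint times in (0, 1)
    z = rng.standard_normal((num_traj, y.size))                # B trajectory start points (noise)
    t = np.repeat(t_grid, num_traj)                            # (K*B,): each time repeated B times
    zz = np.tile(z, (num_steps, 1))                            # (K*B, d): every trajectory at every time
    x_t = (1.0 - t)[:, None] * zz + t[:, None] * y             # points on the B linear trajectories
    err = np.sum((velocity(x_t, t) - (y - zz)) ** 2, axis=1)   # one error per (step, trajectory)
    return float(err.mean())


def calibrate(cal_scores, alpha):
    """Split-conformal threshold: the ceil((n + 1)(1 - alpha))-th smallest calibration score."""
    n = len(cal_scores)
    k = int(np.ceil((n + 1) * (1.0 - alpha)))
    return np.inf if k > n else float(np.sort(cal_scores)[k - 1])


def in_region(y, q_hat, velocity, **score_kwargs):
    """A candidate y is in the prediction region iff its score does not exceed q_hat."""
    return trace_like_score(y, velocity, **score_kwargs) <= q_hat


# Usage with an exact 1-D Gaussian velocity (target N(m, s^2)) standing in for a trained model.
m, s = np.array([0.0]), 0.5


def velocity(x, t):
    c = (t * s**2 - (1.0 - t)) / ((1.0 - t) ** 2 + (t * s) ** 2)
    return m + c[:, None] * (x - t[:, None] * m)


rng = np.random.default_rng(1)
cal = np.array([trace_like_score(m + s * rng.standard_normal(1), velocity, rng=rng) for _ in range(200)])
q_hat = calibrate(cal, alpha=0.1)
print(q_hat, in_region(m, q_hat, velocity, rng=rng), in_region(m + 4.0, q_hat, velocity, rng=rng))
```

Returning an infinite threshold when k exceeds n is the usual conservative convention for very small calibration sets.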

Simulated Author's Rebuttal

We thank the referee for the positive assessment of our work and the recommendation for minor revision. The provided summary correctly identifies the core idea of TRACE: defining nonconformity scores via averaged denoising or velocity errors along stochastic transport paths in pre-trained diffusion and flow-matching models, followed by standard split conformal calibration to obtain marginal coverage guarantees. We appreciate the recognition that this yields a practical, likelihood-free approach without requiring invertible maps or restrictive geometric assumptions.

Circularity Check

No significant circularity

full rationale

The paper defines a nonconformity score by averaging denoising or velocity-matching errors along trajectories from a pre-trained diffusion or flow-matching model, then applies standard split conformal prediction to these scalar scores. The marginal coverage guarantee is obtained directly from the classical exchangeability-based rank argument of split CP and does not depend on the internal construction of the score or on any fitted parameter that is later renamed as a prediction. No self-definitional steps, fitted-input predictions, or load-bearing self-citations appear in the derivation chain; the statistical validity remains independent of the generative-model details.
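For reference, the standard split-conformal argument that this rationale relies on can be written out as follows. It is the textbook derivation, stated under the assumption that the generative model is fit on data separate from the n calibration points and the test point, so that the score s is fixed when the scores are computed. By exchangeability, S_{n+1} is equally likely to occupy any of the n + 1 rank positions. If the TRACE score is estimated by Monte Carlo with draws that are independent across points, the scores remain exchangeable and the same argument applies.

```latex
% Split-conformal rank argument (standard result; s fixed given the training data)
\begin{aligned}
&S_i = s(X_i, Y_i), \quad i = 1, \dots, n+1, \qquad
 k = \lceil (n+1)(1-\alpha) \rceil \le n, \qquad
 \hat q = S_{(k)} \text{ ($k$-th smallest of } S_1, \dots, S_n), \\
&\widehat C(x) = \{\, y : s(x, y) \le \hat q \,\}, \\
&\mathbb{P}\big(Y_{n+1} \in \widehat C(X_{n+1})\big)
 = \mathbb{P}\big(S_{n+1} \le \hat q\big)
 \ge \frac{k}{n+1} \ge 1 - \alpha, \\
&\text{and if ties occur with probability zero:} \quad
 \mathbb{P}\big(Y_{n+1} \in \widehat C(X_{n+1})\big) = \frac{k}{n+1} < 1 - \alpha + \frac{1}{n+1}.
\end{aligned}
```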

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: data points are exchangeable, so that split conformal prediction yields marginal coverage guarantees.

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean · washburn_uniqueness_aczel (relation: unclear) · "averaging denoising or velocity-matching errors along stochastic transport trajectories... scalar-valued and can be calibrated using split conformal prediction"

- IndisputableMonolith/Foundation/AlphaCoordinateFixation.lean · J_uniquely_calibrated_via_higher_derivative (relation: unclear) · "TRACE scores... Monte Carlo approximation error decays at rate O(1/sqrt(B))"

