On a stochastic column-block Bregman method for nonlinear systems

Pith reviewed 2026-05-11 01:52 UTC · model grok-4.3

The pith

A stochastic column-block nonlinear Bregman method computes sparse solutions to nonlinear systems.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The proposed stochastic column-block nonlinear Bregman method efficiently computes sparse solutions to nonlinear systems. Under certain assumptions, the method converges and an upper bound on its convergence rate is derived.

What carries the argument

The stochastic column-block nonlinear Bregman method, which applies Bregman iterations stochastically over selected column blocks of the nonlinear operator to enforce sparsity.
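
The mechanism named above can be illustrated with a short sketch. This is not the paper's algorithm, only a minimal illustration under stated assumptions: the Bregman function is taken to be φ(x) = λ‖x‖₁ + ½‖x‖₂² (so the primal iterate is a soft-thresholded copy of the dual iterate), column blocks are sampled uniformly, and the step size follows a Kaczmarz-style ratio. The names `scb_bregman_sketch`, `soft_threshold`, `lam`, and `delta` are hypothetical and do not come from the paper.

```python
import random


def soft_threshold(z, lam):
    """Componentwise soft thresholding: shrink each entry toward zero by lam."""
    return [max(v - lam, 0.0) + min(v + lam, 0.0) for v in z]


def scb_bregman_sketch(f, jac, n, blocks, lam=0.0, delta=1.0, iters=100, seed=0):
    """Sketch of a stochastic column-block Bregman-type iteration.

    f(x)   -> residual vector of length m (list of floats)
    jac(x) -> m-by-n Jacobian as a list of rows
    blocks -> list of column-index lists that partition range(n)
    All parameter names and the step-size rule are assumptions.
    """
    rng = random.Random(seed)
    z = [0.0] * n                  # dual iterate
    x = soft_threshold(z, lam)     # primal iterate (sparse when lam > 0)
    for _ in range(iters):
        block = rng.choice(blocks)  # uniform block sampling (assumption)
        r = f(x)
        # For clarity the full Jacobian is formed; a practical code would
        # evaluate only the columns in `block`.
        J = jac(x)
        m = len(r)
        # g = J[:, block]^T r  (gradient of 0.5*||f||^2 restricted to the block)
        g = [sum(J[i][j] * r[i] for i in range(m)) for j in block]
        # Jg = J[:, block] g
        Jg = [sum(J[i][j] * gj for j, gj in zip(block, g)) for i in range(m)]
        denom = sum(v * v for v in Jg)
        if denom == 0.0:
            continue               # nothing to update for this block
        t = delta * sum(v * v for v in g) / denom
        for j, gj in zip(block, g):
            z[j] -= t * gj         # update only the sampled coordinates
        x = soft_threshold(z, lam)
    return x
```

Only the coordinates in the sampled block change in each iteration, which is where the per-iteration saving of a column-block scheme would come from. Setting `lam` to a positive value makes the thresholding step zero out small entries, which is how this sketch promotes sparsity.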

Load-bearing premise

The convergence analysis and rate bound depend on assumptions about the nonlinear operator and the Bregman function that the abstract leaves unspecified; the result applies only where those assumptions hold in practice.

What would settle it

Apply the method to a nonlinear system known to violate the assumptions and observe whether convergence fails or the observed convergence is slower than the derived bound allows.
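
One way to run such a check is to compare an empirical contraction factor against a theoretical one. The sketch below assumes the bound takes the form of a per-iteration linear rate `rho_bound` in (0, 1); that form, and the helper names `empirical_rate` and `violates_bound`, are assumptions for illustration and are not taken from the paper. For a stochastic method, the error sequence would normally be averaged over several runs before it is passed in.

```python
def empirical_rate(errors):
    """Geometric-mean per-iteration contraction factor of an error sequence."""
    if len(errors) < 2 or min(errors) <= 0.0:
        raise ValueError("need at least two strictly positive error values")
    steps = len(errors) - 1
    return (errors[-1] / errors[0]) ** (1.0 / steps)


def violates_bound(errors, rho_bound, tol=1e-12):
    """True if the observed contraction is worse (larger) than rho_bound."""
    return empirical_rate(errors) > rho_bound + tol
```

A factor close to or above 1 indicates stagnation or divergence; a factor above the hypothetical `rho_bound` indicates convergence slower than the bound would permit.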

Original abstract

Sparse solution problems play an important role in both signal processing and image restoration. In this paper, we propose a stochastic column-block nonlinear Bregman method for efficiently computing sparse solutions to nonlinear systems. Under certain assumptions, we analyze the convergence of the proposed method and derive an upper bound for its convergence rate. Numerical experiments, including an image recovery problem, are presented to illustrate the efficiency of the proposed method.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes a stochastic column-block nonlinear Bregman method for computing sparse solutions to nonlinear systems. It claims to establish convergence of the iteration and derive an upper bound on the convergence rate under certain (unspecified in the abstract) assumptions on the nonlinear operator, Bregman function, and block selection, and supports the claims with numerical experiments including an image recovery application.

Significance. If the convergence analysis holds under verifiable conditions that are satisfied by the target applications, the stochastic block variant could provide a computationally efficient extension of Bregman methods to large-scale nonlinear sparse recovery problems in signal processing and imaging. The work builds on standard optimization theory without introducing free parameters or circular definitions.

major comments (2)

- [§4 (Convergence Analysis)] The convergence analysis and rate bound (abstract and §4) rest on 'certain assumptions' regarding the nonlinear operator F, the Bregman function, and the stochastic block selection probabilities. These assumptions are not explicitly enumerated or motivated in the abstract, and no verification is provided that they hold for the image-recovery operator used in the experiments. This makes the rate bound's applicability to the advertised problems impossible to assess from the given material.

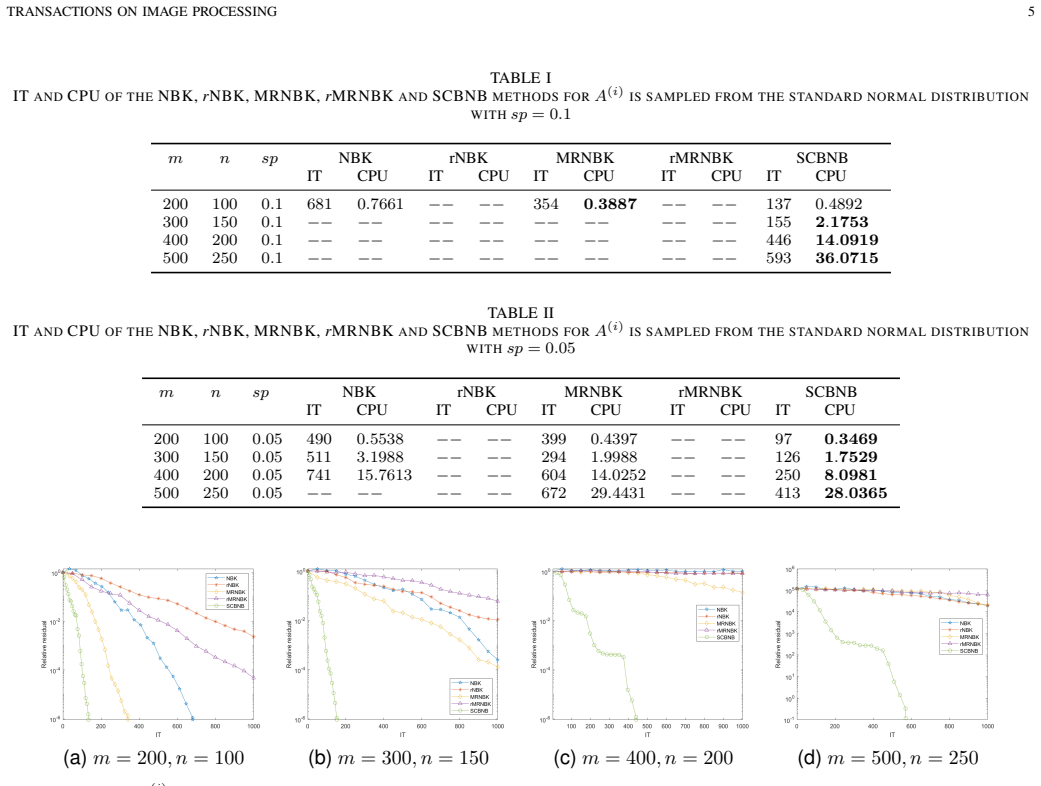

- [§5 (Numerical Experiments)] Table 1 and the image-recovery experiment (presumably §5) report efficiency but do not include quantitative error metrics, baseline comparisons, or checks against the monotonicity/restricted strong convexity conditions required by the analysis. Without these, the numerical results do not corroborate the central convergence claim.

minor comments (1)

- [§3] Notation for the block selection probabilities and the stochastic update rule should be introduced with a clear definition before the convergence theorem.

Simulated Author's Rebuttal

We thank the referee for the detailed and constructive comments. We provide point-by-point responses to the major comments below and will revise the manuscript accordingly to improve clarity and strengthen the connection between theory and experiments.

- Referee: [§4 (Convergence Analysis)] The convergence analysis and rate bound (abstract and §4) rest on 'certain assumptions' regarding the nonlinear operator F, the Bregman function, and the stochastic block selection probabilities. These assumptions are not explicitly enumerated or motivated in the abstract, and no verification is provided that they hold for the image-recovery operator used in the experiments. This makes the rate bound's applicability to the advertised problems impossible to assess from the given material.

  Authors: We agree that explicitly listing the assumptions in the abstract would improve accessibility. In the revised manuscript, we will modify the abstract to enumerate the key assumptions on F, the Bregman function, and the block selection probabilities, along with brief motivation. For the image-recovery operator, while the original submission did not include explicit verification, these assumptions are standard and hold for the nonlinear operators in imaging applications as per existing literature on sparse recovery. We will add a paragraph in Section 5 discussing the satisfaction of these conditions for the specific problem, thereby clarifying the applicability of the rate bound.

  Revision: yes

- Referee: [§5 (Numerical Experiments)] Table 1 and the image-recovery experiment (presumably §5) report efficiency but do not include quantitative error metrics, baseline comparisons, or checks against the monotonicity/restricted strong convexity conditions required by the analysis. Without these, the numerical results do not corroborate the central convergence claim.

  Authors: We acknowledge the value of quantitative metrics and comparisons for corroborating the theoretical results. In the revision, we will enhance the numerical experiments by including quantitative error metrics (e.g., relative reconstruction error), comparisons with baseline methods such as the standard nonlinear Bregman iteration and other stochastic variants, and numerical checks or discussions verifying the monotonicity and restricted strong convexity conditions where feasible. This will better demonstrate the practical convergence and efficiency of the proposed method.

  Revision: yes

Circularity Check

No significant circularity; derivation is self-contained from standard theory.

The paper proposes a stochastic column-block nonlinear Bregman iteration and derives a convergence rate bound under stated assumptions on the nonlinear operator and Bregman function. No step reduces by construction to a fitted parameter, self-referential definition, or load-bearing self-citation chain; the analysis is presented as a forward application of existing Bregman and stochastic optimization results without renaming known patterns or smuggling ansatzes via prior self-work. The unspecified assumptions are external inputs rather than internal tautologies.

Reference graph

Works this paper leans on

-

[1]

Stable image reconstruction using total variation minimization,

D. Needell and R. A. Ward, “Stable image reconstruction using total variation minimization,”SIAM J. Imaging Sci., vol. 6, pp. 1035–1058, 2012

work page 2012

-

[2]

Image super-resolution using deep convolutional networks,

C. Dong, C. C. Loy, K. He, and X. Tang, “Image super-resolution using deep convolutional networks,”IEEE TPAMI, vol. 38, pp. 295–307, 2016

work page 2016

-

[3]

arXiv preprint arXiv:1907.04840 , year=

T. Dettmers and L. Zettlemoyer, “Sparse networks from scratch: faster training without losing performance,” 2019, arXiv preprint arXiv:1907.04840

-

[4]

Nonlinear Kaczmarz algorithms and their convergence,

Q. Wang, W. Li, and W. Bao, “Nonlinear Kaczmarz algorithms and their convergence,”J. Comput. Appl. Math., vol. 399, no. 113720, 2021

work page 2021

-

[5]

On maximum residual nonlinear Kaczmarz-type algorithms for large nonlinear systems of equations,

J. Zhang, Y . Wang, and J. Zhao, “On maximum residual nonlinear Kaczmarz-type algorithms for large nonlinear systems of equations,” J. Comput. Appl. Math., vol. 452, no. 115065, 2023

work page 2023

-

[6]

Y . Lv, L. Xing, W. Bao, W. Li, and Z. Guo, “A class of pseudoinverse- free greedy block nonlinear Kaczmarz methods for nonlinear systems of equations,”Netw. Heterog. Media., vol. 19, pp. 305–323, 2024

work page 2024

-

[7]

A residual-based weighted nonlinear Kaczmarz method for solving nonlinear systems of equations,

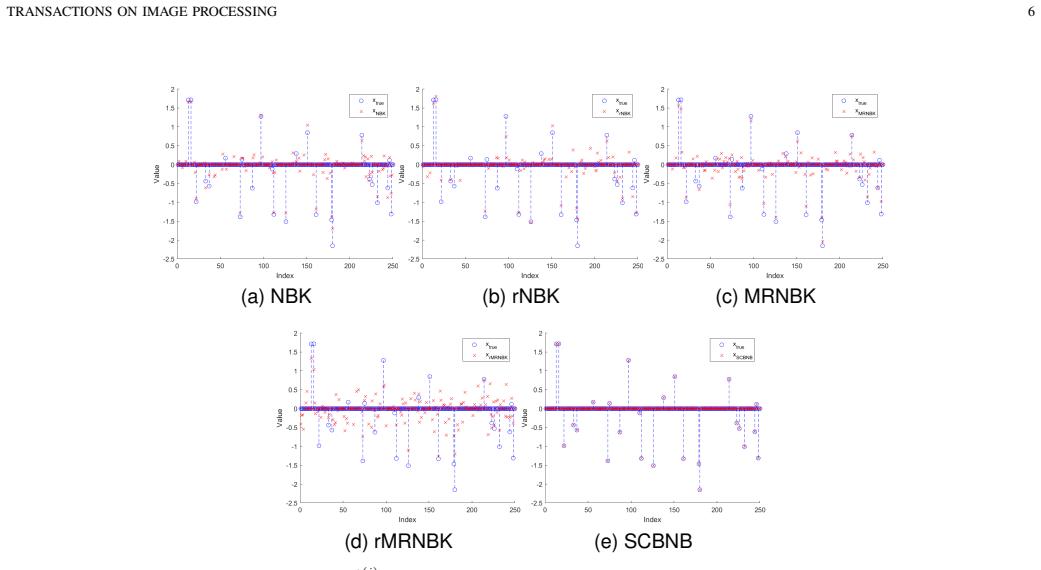

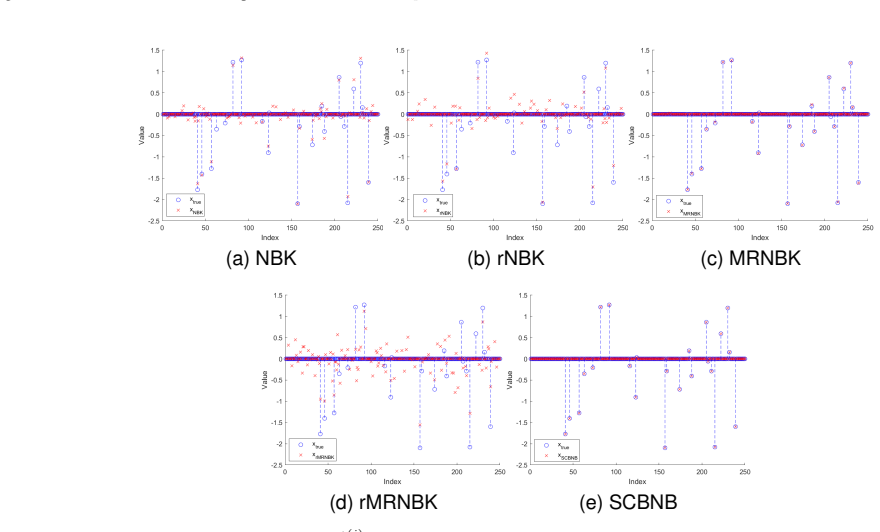

Y . Ye and J. Yin, “A residual-based weighted nonlinear Kaczmarz method for solving nonlinear systems of equations,”Comput. Appl. Math., vol. 43, no. 276, 2024. TRANSACTIONS ON IMAGE PROCESSING 6 (a) NBK (b) rNBK (c) MRNBK (d) rMRNBK (e) SCBNB Fig. 3. The original signal and recovered signal ofA (i) is sampled from the standard normal distribution withm= ...

work page 2024

-

[8]

On stochastic block methods for solving nonlinear equations,

W. Bao, Z. Guo, L. Xing, and W. Li, “On stochastic block methods for solving nonlinear equations,”Numer. Algorithms, 2025

work page 2025

-

[9]

Bregman iterative al- gorithms forl 1-minimization with applications to compressed sensing,

W. Yin, S. Osher, D. Goldfarb, and J. Darbon, “Bregman iterative al- gorithms forl 1-minimization with applications to compressed sensing,” SIAM Journal on Imaging Sciences, vol. 1, no. 1, pp. 143–168, 2008

work page 2008

-

[10]

The linearized Bregman method via split feasibility problems: Analysis and generalizations,

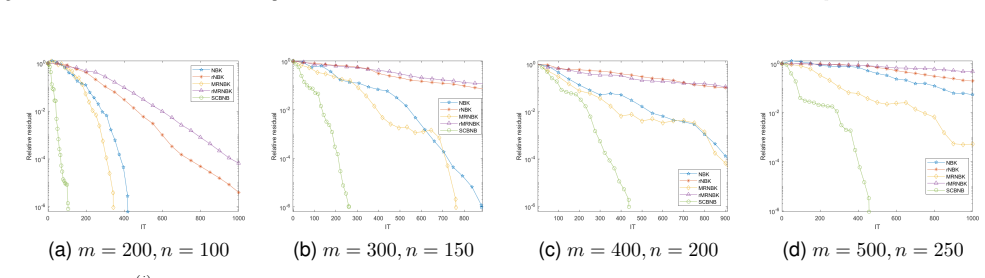

D. A. Lorenz, F. Sch ¨opfer, and S. Wenger, “The linearized Bregman method via split feasibility problems: Analysis and generalizations,” SIAM J. Imaging Sci., vol. 7, pp. 1237–1262, 2014. TRANSACTIONS ON IMAGE PROCESSING 7 TABLE III ITANDCPUOF THENBK,rNBK, MRNBK,rMRNBKANDSCBNBMETHODS FORA (i) IS A RANDOM PARTIALDCTMATRIX WITHsp= 0.1 m n spNBK rNBK MRNBK ...

-

[11]

A sparse Kacz- marz solver and a linearized bregman method for online compressed sensing,

D. A. Lorenz, S. Wenger, F. Sch ¨opfer, and M. Magnor, “A sparse Kacz- marz solver and a linearized bregman method for online compressed sensing,”IEEE ICIP, pp. 1347–1351, 2014

work page 2014

-

[12]

Linear convergence of the randomized sparse Kaczmarz method,

F. Sch ¨opfer and D. A. Lorenz, “Linear convergence of the randomized sparse Kaczmarz method,”Math. Program., vol. 173, pp. 509–536, 2019

work page 2019

-

[13]

A weighted randomized sparse Kaczmarz method for solving linear systems,

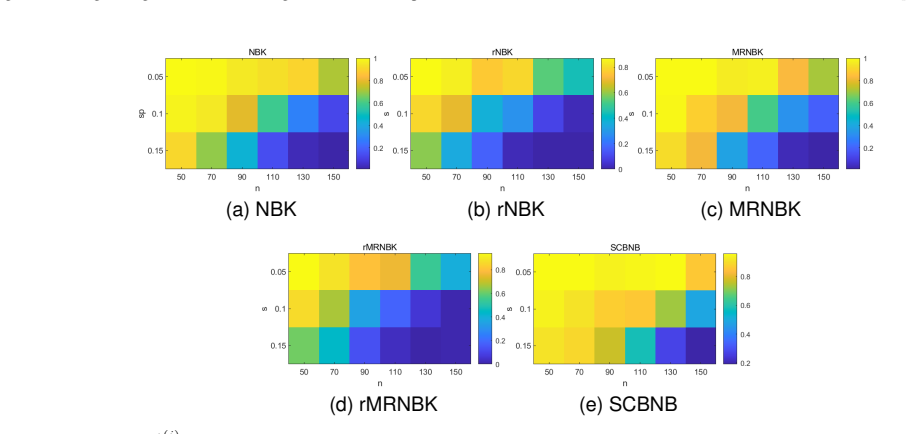

L. Zhang, Z. Yuan, H. Wang, and H. Zhang, “A weighted randomized sparse Kaczmarz method for solving linear systems,”Computational and Applied Mathematics, vol. 41, 2022. TRANSACTIONS ON IMAGE PROCESSING 8 (a) NBK (b) rNBK (c) MRNBK (d) rMRNBK (e) SCBNB Fig. 8. Success rate ofA (i) which is a random partial DCT matrix withm= 100,n= 50 : 20 : 150andsp= 0.05...

work page 2022

-

[14]

Adaptively sketched Bregman projection methods for linear systems,

Z. Yuan, L. Zhang, H. Wang, and H. Zhang, “Adaptively sketched Bregman projection methods for linear systems,”Inverse Probl., vol. 38, 2022

work page 2022

-

[15]

Faster randomized block sparse Kaczmarz by averaging,

L. N. Tondji, M. Winkler, and D. A. Lorenz, “Faster randomized block sparse Kaczmarz by averaging,”Numer. Algor., vol. 93, pp. 1417–1451, 2023

work page 2023

-

[16]

A greedy average block sparse kaczmarz method for sparse solutions of linear systems,

A. Xiao and j. Yin, “A greedy average block sparse kaczmarz method for sparse solutions of linear systems,”Appl. Math. Lett., vol. 153, 2024

work page 2024

-

[17]

A fast block sparse Kaczmarz algorithm for sparse signal recovery,

Y .-Q. Niu and B. Zheng, “A fast block sparse Kaczmarz algorithm for sparse signal recovery,”Signal Process., vol. 227, 2025

work page 2025

-

[18]

A Bregman–Kaczmarz method for nonlinear systems of equations,

D. Gower, R.and Lorenz and M. Winkler, “A Bregman–Kaczmarz method for nonlinear systems of equations,”Comput. Optim. Appl., vol. 87, p. 1059–1098, 2024

work page 2024

-

[19]

On stochastic mirror descent: convergence analysis and adaptive variants methods,

R. D’Orazio, N. Loizou, I. Hadj Laradji, and I. Mitliagkas, “On stochastic mirror descent: convergence analysis and adaptive variants methods,”Computer Science

-

[20]

Iterative regularization methods for nonlinear ill-posed problems,

B. Kaltenbacher, A. Neubauer, and O. Scherzer, “Iterative regularization methods for nonlinear ill-posed problems,” 2008

work page 2008

-

[21]

Greedy randomized sampling nonlinear Kaczmarz methods,

Y . Zhang, H. Li, and L. Tang, “Greedy randomized sampling nonlinear Kaczmarz methods,”Calcolo, vol. 61, no. 25, 2024

work page 2024

-

[22]

A stochastic column-block gradient descent method for solving nonlinear systems of equations,

N. Jiang, W. Bao, L. Xing, and W. Li, “A stochastic column-block gradient descent method for solving nonlinear systems of equations,” Appl. Math. Lett

-

[23]

A fast block sparse kaczmarz algorithm for sparse signal recovery,

Y . Q. Niu and B. Zheng, “A fast block sparse kaczmarz algorithm for sparse signal recovery,”Signal Processing. APPENDIX PROOF OFTHEOREM1 From Lemma 1, we have D x∗ k+1 φ (xk+1,ˆx) ≤D x∗ k φ (xk,ˆx) +⟨x∗ k+1 −x ∗ k, xk −ˆx⟩+ 1 2γ∥x∗ k+1 −x ∗ k∥22 =D x∗ k φ (xk,ˆx) +⟨−δ γ∥∇f:,ξk(xk)Tf(xk)∥22 ∥∇f:,ξk(xk)∇f:,ξk(xk)Tf(xk)∥22 I:,ξk∇f:,ξk(xk)Tf(xk), xk −ˆx⟩ + 1...
