
Self-normalized tests for multistep conditional predictive ability

Pith reviewed 2026-05-11 01:44 UTC · model grok-4.3

The pith

Self-normalized CUSUM functionals yield pivotal tests for multistep forecast comparisons without covariance estimation.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Normalizing the sample mean of the transformed loss differential by adjusted-range functionals of its scalar CUSUM process (or by matrix functionals for vector versions) produces test statistics whose null limiting distributions are free of unknown parameters, while the tests are consistent against alternatives that exhibit conditional predictive ability.
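Below is a minimal Python sketch of the scalar statistic. It assumes the normalizer is the range of the raw partial-sum (CUSUM) process S_k = d_1 + ... + d_k, with S_0 = 0. That choice reproduces the Theorem 3.1 limit quoted further down this page. The paper's adjusted-range normalizer may still demean, trim, or weight the CUSUM differently, and the function name sn_range_stat is hypothetical. What the sketch shows is that the unknown long-run scale cancels between the numerator and the normalizer.

```python
from itertools import accumulate


def sn_range_stat(d):
    """Hypothetical scalar statistic S_n^2 / (max_k S_k - min_k S_k)^2.

    d holds the (transformed) loss differentials d_1, ..., d_n. The partial sums
    include S_0 = 0, so |S_n| never exceeds the range and the value lies in [0, 1].
    """
    partial_sums = [0.0] + list(accumulate(d))
    s_n = partial_sums[-1]
    cusum_range = max(partial_sums) - min(partial_sums)
    if cusum_range == 0.0:
        raise ValueError("degenerate series: the CUSUM range is zero")
    # (sqrt(n) * mean)^2 / (range / sqrt(n))^2 simplifies to S_n^2 / range^2,
    # so the unknown long-run variance never has to be estimated.
    return s_n ** 2 / cusum_range ** 2


d = [0.3, -1.2, 0.8, 0.5, -0.4, 1.1, -0.9, 0.2]
q = sn_range_stat(d)
q_scaled = sn_range_stat([5.0 * x for x in d])  # same data in different units
print(q, q_scaled)
```

Both calls return 0.04 up to floating-point rounding, because S_8 = 0.4 and the CUSUM range is 2.0. Multiplying every loss by 5 scales both quantities by 5, so the ratio does not move.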

What carries the argument

The adjusted-range normalizer (scalar case) and the matrix normalizer (vector case). Both are functionals of the CUSUM process of the loss differential, and they replace direct long-run covariance estimation.

If this is right

- The user does not have to choose a bandwidth, kernel, or truncation parameter.

- Null critical values are obtained from known distributions of CUSUM functionals and require no further estimation.

- Finite-sample size distortions are reduced relative to HAC-based competitors in Monte Carlo designs.

- Power is retained against conditional predictability alternatives.

- The same construction applies to both scalar and vector loss differentials.
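Because the null limit is pivotal, its critical values can be tabulated once by simulation. The sketch below approximates draws of B(1)^2 / (sup B(r) - inf B(r))^2, the Theorem 3.1 form quoted on this page, using Gaussian random walks. The helpers simulate_null_limit and critical_value are hypothetical. The output is a Monte Carlo approximation, not the paper's published critical values.

```python
import random
from itertools import accumulate


def simulate_null_limit(reps=2000, steps=500, seed=1):
    """Sorted draws approximating B(1)^2 / (sup B(r) - inf B(r))^2.

    Each Brownian path is approximated by a Gaussian random walk with `steps`
    increments; the ratio is scale-free, so the increments need no rescaling.
    """
    rng = random.Random(seed)
    draws = []
    for _ in range(reps):
        path = [0.0] + list(accumulate(rng.gauss(0.0, 1.0) for _ in range(steps)))
        draws.append(path[-1] ** 2 / (max(path) - min(path)) ** 2)
    return sorted(draws)


def critical_value(sorted_draws, alpha=0.05):
    """Empirical upper-alpha quantile: reject when the statistic exceeds it."""
    idx = min(len(sorted_draws) - 1, int((1.0 - alpha) * len(sorted_draws)))
    return sorted_draws[idx]


draws = simulate_null_limit()
for alpha in (0.10, 0.05, 0.01):
    print(alpha, critical_value(draws, alpha))
```

Increasing reps reduces Monte Carlo error, and increasing steps reduces discretization error. The limit variable is bounded by 1, so every critical value lies in [0, 1].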

Where Pith is reading between the lines

- The approach could be ported to other time-series tests where long-run variance estimation is the dominant practical obstacle.

- Direct application to real macroeconomic or financial forecast series would reveal whether conditional predictability is more common than HAC tests suggest.

- Extensions to nonlinear or asymmetric loss functions remain open but would follow the same normalization logic.

Load-bearing premise

The loss differential process obeys weak dependence and moment bounds that make its CUSUM functionals converge to pivotal Brownian-motion limits.

What would settle it

Generate finite samples under the null with dependence strong enough to violate the regularity conditions and observe whether empirical rejection rates stay close to the nominal level.
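The proposed experiment can be sketched in a few lines. The design is hypothetical and rests on three choices:

- a mean-zero AR(1) loss differential;
- the raw-CUSUM range statistic, which is an assumption about the exact normalizer;
- a critical value simulated from i.i.d. samples.

Setting phi = 1.0 gives a unit-root loss differential, which violates the weak-dependence premise outright. Values of phi near 1 probe the boundary. No results are claimed here; the quantity of interest is how far the rejection rate drifts from the nominal 5%.

```python
import random
from itertools import accumulate


def sn_range_stat(d):
    """S_n^2 / (CUSUM range)^2, with S_0 = 0 included in the range."""
    s = [0.0] + list(accumulate(d))
    return s[-1] ** 2 / (max(s) - min(s)) ** 2


def ar1_null(n, phi, rng, burn_in=100):
    """Hypothetical mean-zero AR(1) loss differential d_t = phi * d_{t-1} + e_t."""
    d, prev = [], 0.0
    for _ in range(n + burn_in):
        prev = phi * prev + rng.gauss(0.0, 1.0)
        d.append(prev)
    return d[burn_in:]  # drop the burn-in draws


def null_quantile(alpha=0.05, n=300, reps=1000, seed=1):
    """Approximate upper-alpha critical value from i.i.d. (phi = 0) samples."""
    rng = random.Random(seed)
    draws = sorted(sn_range_stat(ar1_null(n, 0.0, rng)) for _ in range(reps))
    return draws[min(reps - 1, int((1.0 - alpha) * reps))]


def empirical_size(phi, crit, n=200, reps=500, seed=2):
    """Share of null samples whose statistic exceeds the critical value."""
    rng = random.Random(seed)
    hits = sum(sn_range_stat(ar1_null(n, phi, rng)) > crit for _ in range(reps))
    return hits / reps


crit = null_quantile()
for phi in (0.0, 0.5, 0.9, 1.0):
    print(phi, empirical_size(phi, crit))
```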


Original abstract

This paper proposes self-normalized tests for multistep conditional predictive ability in forecast comparison. By normalizing the sample mean of the transformed loss differential using functionals of its cumulative sum (CUSUM) process, specifically an adjusted-range normalizer for scalars and a matrix normalizer for vectors, our approach avoids direct estimation of the long-run covariance matrix. Consequently, it eliminates the need for the ad hoc bandwidth, kernel, and lag-truncation choices required by traditional methods. We establish the asymptotic theory for these statistics, deriving pivotal null limiting distributions and proving test consistency. Monte Carlo simulations show that the proposed tests effectively mitigate the finite-sample size distortions associated with traditional heteroskedasticity and autocorrelation consistent (HAC) methods, while retaining strong empirical power against conditional predictability alternatives.
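For contrast, here is the kind of tuning the abstract refers to. The sketch computes a Diebold-Mariano-type t-statistic whose variance is a Newey-West (Bartlett-kernel) long-run variance estimate with a user-chosen lag truncation. The function names and the toy series are illustrative only. This unconditional t-statistic is also simpler than the HAC-based conditional tests the paper uses as competitors.

```python
import math


def newey_west_lrv(d, bandwidth):
    """Bartlett-kernel (Newey-West) long-run variance with lag truncation `bandwidth`."""
    n = len(d)
    mean = sum(d) / n
    u = [x - mean for x in d]

    def gamma(j):
        # Sample autocovariance at lag j, divided by n (not n - j).
        return sum(u[t] * u[t - j] for t in range(j, n)) / n

    lrv = gamma(0)
    for j in range(1, bandwidth + 1):
        lrv += 2.0 * (1.0 - j / (bandwidth + 1)) * gamma(j)  # Bartlett weights
    return lrv


def hac_t_stat(d, bandwidth):
    """Diebold-Mariano-type t-statistic: sqrt(n) * mean / sqrt(long-run variance)."""
    n = len(d)
    return math.sqrt(n) * (sum(d) / n) / math.sqrt(newey_west_lrv(d, bandwidth))


d = [0.5, -0.2, 0.9, 0.4, -0.1, 0.7, 0.3, -0.6, 0.8, 0.2]
for bandwidth in (0, 1, 2, 4):
    print(bandwidth, newey_west_lrv(d, bandwidth), hac_t_stat(d, bandwidth))
```

On this toy series the long-run variance estimate falls from about 0.205 at bandwidth 0 to about 0.039 at bandwidth 2. The t-statistic therefore more than doubles purely because of the truncation choice, and this is the sensitivity that self-normalization sidesteps.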

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

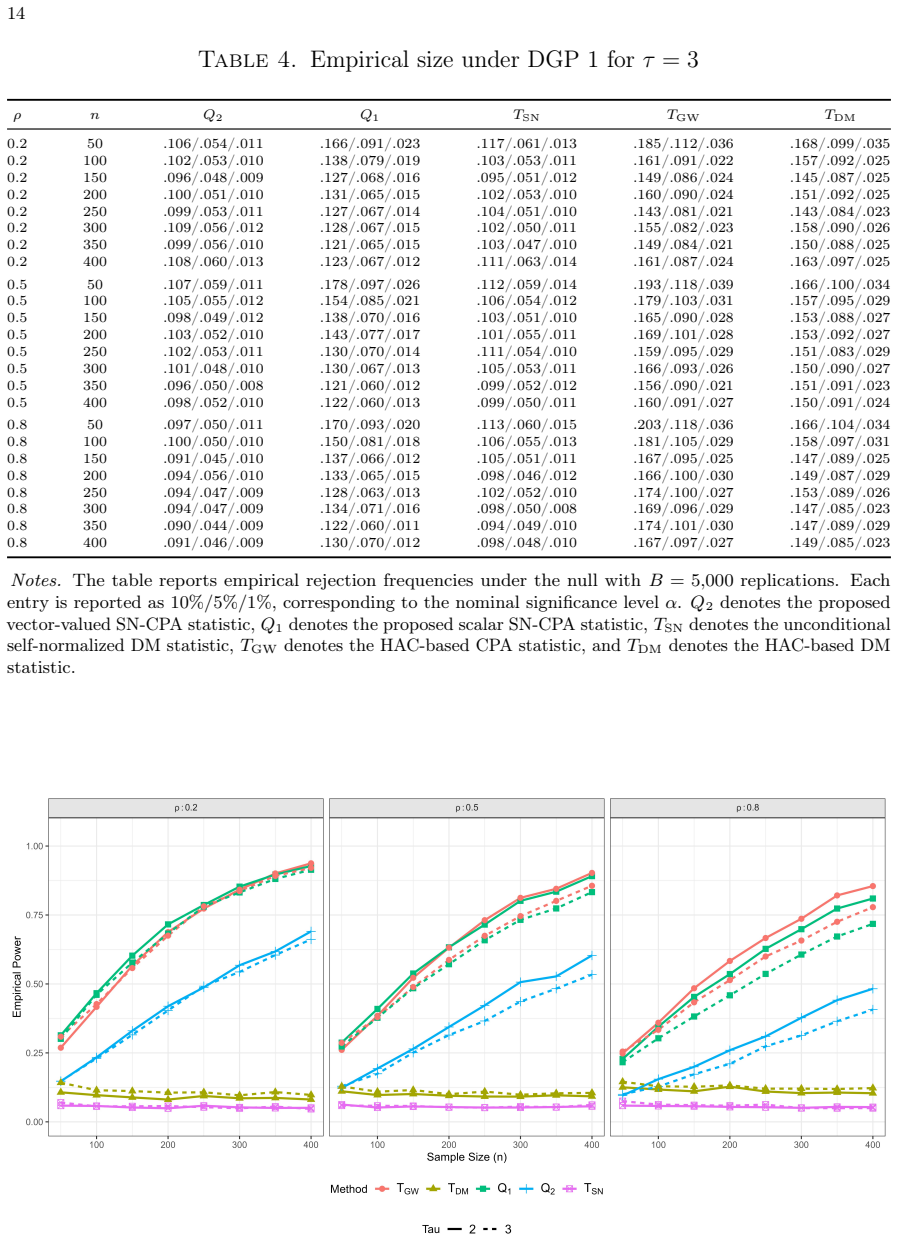

Summary. The paper proposes self-normalized tests for multistep conditional predictive ability in forecast comparison. By normalizing the sample mean of the transformed loss differential using functionals of its cumulative sum (CUSUM) process—specifically an adjusted-range normalizer for scalars and a matrix normalizer for vectors—the approach avoids direct estimation of the long-run covariance matrix and the associated ad hoc bandwidth, kernel, and lag-truncation choices. The authors derive pivotal null limiting distributions under weak dependence and moment conditions, prove consistency against conditional predictability alternatives, and present Monte Carlo evidence that the tests reduce finite-sample size distortions relative to traditional HAC methods while retaining power.

Significance. If the derivations hold, the contribution is significant for time-series forecast evaluation: it supplies a practical, tuning-parameter-free alternative to HAC-based tests for conditional predictive ability, which is a common setting in econometrics where long-run variance estimation is sensitive to bandwidth choice. The CUSUM-based self-normalization and the explicit asymptotic theory (pivotal limits plus consistency) are strengths; the simulation design, if reproducible, further supports the finite-sample claim.

minor comments (2)

- [Abstract / Introduction] The regularity conditions (weak dependence, moment bounds) invoked for the functional central limit theorem and CUSUM convergence are stated in the full manuscript but could be summarized more explicitly in the abstract or introduction to make the scope of the pivotal limits immediately clear to readers.

- [Monte Carlo simulations] In the Monte Carlo section, the exact design of the data-generating processes, the choice of multistep horizons, and the number of replications should be reported with sufficient detail (including seed or code availability) to allow exact replication of the reported size and power results.

Simulated Author's Rebuttal

We thank the referee for the positive and constructive report, which accurately captures the paper's contribution and recommends minor revision. The referee's summary of the self-normalized CUSUM approach, its avoidance of HAC estimation, pivotal asymptotics, and improved finite-sample properties aligns with our manuscript. No specific major comments are provided in the report.

Circularity Check

No significant circularity in the derivation chain

full rationale

The paper's central derivation applies standard functional central limit theorems to the CUSUM process of the transformed loss differential to obtain pivotal limiting distributions for the adjusted-range and matrix self-normalizers. These limits follow from general weak-dependence and moment conditions that are independent of the specific test statistics and do not reduce to the inputs by construction. No self-definitional steps, fitted parameters renamed as predictions, load-bearing self-citations, or ansatz smuggling appear in the abstract or described methodology. The avoidance of long-run covariance estimation is achieved through the CUSUM functionals themselves, which is a methodological choice justified by external convergence theory rather than tautology. The argument is self-contained against standard probability benchmarks.
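The derivation below sketches why the long-run scale drops out. It rests on two assumptions:

- the functional central limit theorem named in the axiom ledger holds, with long-run standard deviation sigma > 0;
- the scalar statistic is the squared partial sum divided by the squared range of the raw CUSUM.

The second assumption reproduces the Theorem 3.1 limit quoted on this page, but the paper's treatment of the horizon τ, and of any trimming, may differ. The map f ↦ f(1)^2 / (sup f - inf f)^2 is continuous at every path with positive range, and Brownian paths have positive range almost surely. The continuous mapping theorem therefore gives:

```latex
S_k = \sum_{t=1}^{k} d_t, \quad S_0 = 0, \qquad
n^{-1/2} S_{\lfloor nr \rfloor} \Rightarrow \sigma B(r), \quad r \in [0,1],
\\[4pt]
\frac{S_n^2}{\bigl(\max_{0 \le k \le n} S_k - \min_{0 \le k \le n} S_k\bigr)^2}
= \frac{\bigl(n^{-1/2} S_n\bigr)^2}
       {\bigl(\max_{k} n^{-1/2} S_k - \min_{k} n^{-1/2} S_k\bigr)^2}
\Rightarrow \frac{\sigma^2 B(1)^2}{\sigma^2 \bigl(\sup_{r} B(r) - \inf_{r} B(r)\bigr)^2}
= \frac{B(1)^2}{\bigl(\sup_{r} B(r) - \inf_{r} B(r)\bigr)^2}.
```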

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: the transformed loss differential process satisfies weak dependence and moment conditions sufficient for a functional central limit theorem to hold for its partial-sum process.
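The "transformed loss differential" is not defined on this page. A common construction, in the spirit of Giacomini and White's test functions, interacts the τ-step loss differential with instruments h_t that are known at the forecast origin. The sketch below assumes h_t = (1, dL_t). That instrument choice is illustrative, and the function name is hypothetical.

```python
def transformed_loss_differential(loss_a, loss_b, tau):
    """Hypothetical Z_t = h_t * dL_{t+tau} with test function h_t = (1, dL_t).

    loss_a[t] and loss_b[t] are the losses of the two tau-step forecasts that
    are evaluated at time t; h_t uses only information available at time t.
    """
    if tau < 1:
        raise ValueError("tau must be a positive forecast horizon")
    dl = [a - b for a, b in zip(loss_a, loss_b)]
    z = []
    for t in range(len(dl) - tau):
        h_t = (1.0, dl[t])                        # instruments dated t
        z.append([h * dl[t + tau] for h in h_t])  # one q = 2 vector per origin
    return z


print(transformed_loss_differential([1, 2, 3, 4, 5], [0, 0, 0, 0, 0], tau=2))
```

The first component is the plain loss differential. The second interacts it with the lagged differential, which lets a test pick up predictability that is conditional on past relative performance. With tau > 1, the forecast windows of consecutive Z_t overlap, so Z_t is typically serially correlated even under the null. That serial correlation is why the weak-dependence assumption and a long-run covariance (or a self-normalizer) are needed.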

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean: washburn_uniqueness_aczel (relevance unclear). Linked passage: "By normalizing the sample mean of the transformed loss differential using functionals of its cumulative sum (CUSUM) process, specifically an adjusted-range normalizer for scalars and a matrix normalizer for vectors, our approach avoids direct estimation of the long-run covariance matrix."

- IndisputableMonolith/Foundation/AlexanderDuality.lean: alexander_duality_circle_linking (relevance unclear). Linked passage: Theorem 3.1. ... $Q^{h}_{m,n,\tau} \Rightarrow B(1)^2 / \left(\sup_{r} B(r) - \inf_{r} B(r)\right)^2$

