
A Log-Domain Approximation of SOCS Decoding for Turbo Product Codes

Pith reviewed 2026-05-11 01:56 UTC · model grok-4.3

The pith

A max-log approximation turns SOCS decoding into a practical log-domain rule for turbo product codes.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The proposed decoder replaces the probability-domain operations of SOCS with a max-log rule whose soft output is a piecewise-linear function of the reliability gaps between the Chase-II list and the out-of-list hypotheses; this rule remains fully compatible with the standard iterative TPC loop and, for a product code built from (256,239) extended BCH components, yields bit-error-rate curves that lie between those of the Chase-Pyndiah decoder and the full SOCS decoder at the same list size.
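The substitution at the heart of the core claim is the max-log step ln Σ_i e^{m_i} ≈ max_i m_i. A minimal sketch, assuming each hypothesis (codeword) carries a log-domain metric m such as a log-likelihood; the function names are illustrative, and the four even-weight words of a length-3 single-parity-check code stand in for a Chase-II list:

```python
import math

def log_sum_exp(values):
    """Exact ln(sum(exp(v))), computed stably by factoring out the maximum."""
    m = max(values)
    return m + math.log(sum(math.exp(v - m) for v in values))

def exact_llr(hypotheses, j):
    """Probability-domain rule written with logs: LLR of bit j over all hypotheses.

    hypotheses: list of (bits, metric) pairs, bits in {0, 1}, metric = log-likelihood.
    """
    zero = [m for bits, m in hypotheses if bits[j] == 0]
    one = [m for bits, m in hypotheses if bits[j] == 1]
    return log_sum_exp(zero) - log_sum_exp(one)

def maxlog_llr(hypotheses, j):
    """Max-log rule: keep only the best hypothesis on each side (comparisons, one subtraction)."""
    zero = max(m for bits, m in hypotheses if bits[j] == 0)
    one = max(m for bits, m in hypotheses if bits[j] == 1)
    return zero - one

hyps = [((0, 0, 0), 0.0), ((1, 1, 0), -2.0), ((1, 0, 1), -3.0), ((0, 1, 1), -4.0)]
print(maxlog_llr(hyps, 0))   # 2.0
print(exact_llr(hyps, 0))    # about 1.705
```

In this example the max-log value (2.0) overshoots the exact one (about 1.705); mismatches of this kind are what the referee's request for an error analysis targets.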

What carries the argument

The piecewise-linear function of reliability gaps between the Chase-II list hypotheses and the out-of-list hypotheses, which supplies the extrinsic information in the max-log SOCS rule.
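The review does not give the breakpoints or slopes (the referee flags exactly this omission), so the sketch below uses made-up knots purely to show the shape of such a rule: a clamped piecewise-linear map from a reliability gap, assumed here to be a metric difference between competing in-list and out-of-list hypotheses, to an extrinsic magnitude. `KNOTS`, the sign convention and both function names are hypothetical.

```python
from bisect import bisect_right

# Hypothetical (gap, output) knots: the paper's breakpoints and slopes are not reported here.
KNOTS = [(0.0, 0.0), (1.0, 0.8), (3.0, 2.0), (6.0, 3.5)]
XS = [x for x, _ in KNOTS]
# Per-segment slopes, precomputed once so that evaluation needs no division.
SLOPES = [(y1 - y0) / (x1 - x0) for (x0, y0), (x1, y1) in zip(KNOTS, KNOTS[1:])]

def piecewise_linear(gap):
    """Clamped piecewise-linear map from a reliability gap to an extrinsic magnitude."""
    if gap <= XS[0]:
        return KNOTS[0][1]
    if gap >= XS[-1]:
        return KNOTS[-1][1]
    k = bisect_right(XS, gap) - 1          # segment k satisfies XS[k] <= gap < XS[k + 1]
    return KNOTS[k][1] + SLOPES[k] * (gap - XS[k])

def extrinsic_from_gap(decision_bit, gap):
    """Hypothetical rule: sign from the hard decision (bit 0 -> +1), magnitude from the gap."""
    sign = 1.0 if decision_bit == 0 else -1.0
    return sign * piecewise_linear(gap)
```

With increasing knots the map is monotone and non-negative, so it keeps the sign of the decision and the ordering of reliabilities, the property the authors' rebuttal relies on.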

If this is right

- The decoder stays compatible with any existing iterative TPC scheduling that expects Chase-Pyndiah-style extrinsic values.

- At the same list size the new rule produces noticeably lower error rates than Chase-Pyndiah decoding.

- The gap to full SOCS performance is small enough that the approximation remains attractive for practical use.

- Only additions, comparisons and the evaluation of a few linear segments are required, so no probability-domain arithmetic is needed.
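The compatibility claim in the first bullet refers to Pyndiah's iterative loop, in which each half-iteration feeds R + α·W to a row or column soft-input soft-output (SISO) decoder. The skeleton below sketches that loop. As an assumption for brevity it uses single-parity-check (SPC) component codes, whose exact max-log extrinsic is a sign product times a minimum, instead of eBCH codes with a Chase-II list; the fixed α and the function names are illustrative.

```python
def spc_extrinsic(row):
    """Exact max-log (min-sum) extrinsic LLRs for one single-parity-check word."""
    ext = []
    for j in range(len(row)):
        others = row[:j] + row[j + 1:]
        sign = 1.0
        for v in others:
            if v < 0:
                sign = -sign
        ext.append(sign * min(abs(v) for v in others))
    return ext


def transpose(matrix):
    return [list(col) for col in zip(*matrix)]


def tpc_decode(channel, iterations=4, alpha=0.5, siso=spc_extrinsic):
    """Pyndiah-style loop: alternate row and column SISO decoding on R + alpha * W.

    channel: matrix of channel LLRs (bit 0 <-> positive LLR).
    A fixed alpha is a simplification; Pyndiah schedules alpha per half-iteration.
    """
    w = [[0.0] * len(channel[0]) for _ in channel]   # extrinsic values, row-major
    for _ in range(iterations):
        for by_rows in (True, False):
            r = channel if by_rows else transpose(channel)
            wt = w if by_rows else transpose(w)
            out = [siso([ri + alpha * wi for ri, wi in zip(rr, wr)])
                   for rr, wr in zip(r, wt)]
            w = out if by_rows else transpose(out)
    # Final soft values: channel LLR plus the last (column) extrinsic.
    return [[c + x for c, x in zip(cr, wr)] for cr, wr in zip(channel, w)]
```

Because the component decoder enters only through `siso`, any rule that returns one extrinsic value per bit can be dropped in; this is the sense in which a log-domain SOCS rule can reuse the existing scheduling.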

Where Pith is reading between the lines

- The same gap-based linear approximation could be tried on other list-based soft-output decoders that currently rely on probability-domain calculations.

- Hardware implementations could further simplify the linear segments into fixed-point lookup tables with negligible extra loss.

- If the approximation holds for longer component codes, the same technique might extend the reach of SOCS-style gains to higher-rate product codes.
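To make the hardware remark concrete, a minimal sketch, assuming a uniformly quantized gap (step `STEP`, `LUT_SIZE` entries) and integer outputs in units of `OUT_LSB`; all constants, including the piecewise-linear knots, are hypothetical.

```python
# Hypothetical fixed-point parameters and knots, not taken from the paper.
KNOTS = [(0.0, 0.0), (1.0, 0.8), (3.0, 2.0), (6.0, 3.5)]
STEP = 0.25      # gap quantization step (one gap code = 0.25)
LUT_SIZE = 32    # gap codes 0..31 cover gaps 0 .. 7.75
OUT_LSB = 0.125  # value of one output code


def pwl(gap):
    """Floating-point reference: clamped linear interpolation between KNOTS."""
    if gap <= KNOTS[0][0]:
        return KNOTS[0][1]
    for (x0, y0), (x1, y1) in zip(KNOTS, KNOTS[1:]):
        if gap <= x1:
            return y0 + (y1 - y0) * (gap - x0) / (x1 - x0)
    return KNOTS[-1][1]


# Table built once offline: entry i = pwl(i * STEP) rounded to the output grid.
LUT = [round(pwl(i * STEP) / OUT_LSB) for i in range(LUT_SIZE)]


def pwl_fixed(gap_code):
    """Hardware-style evaluation: saturate the integer gap code, then one table read."""
    return LUT[min(max(gap_code, 0), LUT_SIZE - 1)]


def max_abs_error(samples=4000, top=8.0):
    """Worst deviation of the truncating LUT from the float reference on a fine grid."""
    worst = 0.0
    for k in range(samples):
        gap = top * k / samples
        approx = pwl_fixed(int(gap / STEP)) * OUT_LSB
        worst = max(worst, abs(approx - pwl(gap)))
    return worst
```

With these constants the deviation stays below (largest slope) × STEP + OUT_LSB / 2 ≈ 0.26; rounding the gap instead of truncating it would roughly halve the first term. Whether such a loss is negligible inside the iterative loop would still have to be confirmed by BER simulation.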

Load-bearing premise

The piecewise-linear mapping of reliability gaps keeps enough quality in the extrinsic information for the iterative decoder to converge properly.

What would settle it

A bit-error-rate simulation on the (256,239) eBCH turbo product code in which the proposed decoder either fails to converge or shows an error floor clearly above the full SOCS curve would falsify the claim.

Figures

read the original abstract

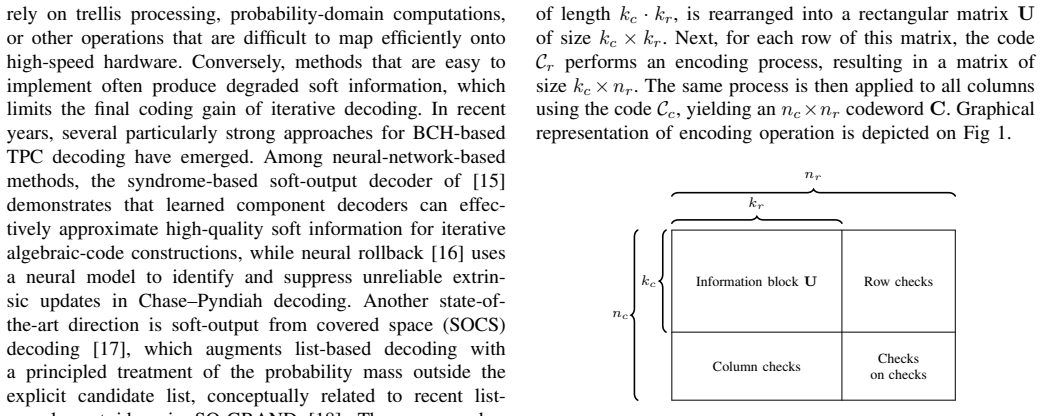

This paper studies low-complexity soft-output decoding of turbo product codes (TPCs) with extended Bose–Chaudhuri–Hocquenghem (eBCH) component codes. The recently proposed soft-output from covered space (SOCS) decoding substantially improves the quality of extrinsic information compared with the conventional Chase–Pyndiah decoder, but its probability-domain implementation is less attractive for hardware-oriented realizations. We therefore propose a log-domain approximation of SOCS based on the max-log approach. The proposed soft-input soft-output rule replaces probability-domain operations with a piecewise-linear function of reliability gaps between competing hypotheses inside and outside the Chase-II decoding list, which preserves compatibility with the standard iterative TPC decoding loop. Numerical results for a TPC built from (256,239) eBCH component codes show that the proposed decoder clearly outperforms the baseline Chase–Pyndiah decoder with the same list size and approaches the performance of the SOCS decoder.
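For readers unfamiliar with the baseline, a compact sketch of Chase-II list generation followed by Pyndiah's extrinsic rule. To stay self-contained it assumes the (8,4) extended Hamming code (the t = 1 eBCH code) instead of (256,239), a syndrome decoder as the algebraic hard-decision decoder, p = 2 flipped positions and a fixed fallback β; all of these are illustrative choices. SOCS-type rules additionally weigh hypotheses outside the list, which in this baseline enter only through the fixed `BETA`.

```python
from itertools import product

N, P, BETA = 8, 2, 0.5  # code length, Chase-II flipped positions, no-competitor fallback


def ext_hamming_decode(bits):
    """Hard-decision decoder for the (8,4) extended Hamming (t = 1 eBCH) code.

    Positions 0..6 form a (7,4) Hamming code whose parity-check column for
    position i is the binary number i + 1; position 7 is the overall parity bit.
    """
    word = list(bits)
    syndrome = 0
    for pos in range(7):
        if word[pos]:
            syndrome ^= pos + 1
    if syndrome:                      # single error assumed at position syndrome - 1
        word[syndrome - 1] ^= 1
    word[7] = sum(word[:7]) % 2       # recompute the extension bit
    return tuple(word)


def metric(llr, word):
    """Correlation of channel LLRs with the bipolar image (bit 0 -> +1, bit 1 -> -1)."""
    return sum(r if b == 0 else -r for r, b in zip(llr, word))


def chase2_pyndiah(llr):
    """Chase-II list decoding followed by Pyndiah's extrinsic rule."""
    hard = [0 if r >= 0 else 1 for r in llr]
    weak = sorted(range(N), key=lambda j: abs(llr[j]))[:P]    # P least reliable bits
    candidates = set()
    for flips in product((0, 1), repeat=P):                    # 2**P test patterns
        test = hard[:]
        for j, f in zip(weak, flips):
            test[j] ^= f
        candidates.add(ext_hamming_decode(test))
    ranked = sorted(candidates, key=lambda c: metric(llr, c), reverse=True)
    decision = ranked[0]
    m_d = metric(llr, decision)
    ext = []
    for j in range(N):
        d_j = 1.0 if decision[j] == 0 else -1.0
        rivals = [c for c in ranked if c[j] != decision[j]]
        if rivals:   # best competitor C: soft output (M_D - M_C) / 2 * d_j, minus the input
            ext.append((m_d - metric(llr, rivals[0])) / 2 * d_j - llr[j])
        else:        # no competitor in the list: fixed reliability BETA
            ext.append(BETA * d_j)
    return decision, ext
```

For example, `chase2_pyndiah([2.0, 1.5, -0.3, 1.8, 2.2, 1.1, 0.4, 1.9])` corrects the weak negative value at position 2 and returns the all-zero codeword; bits without an in-list competitor receive ±`BETA`.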

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes a log-domain approximation to soft-output from covered space (SOCS) decoding for turbo product codes (TPCs) constructed from extended BCH component codes. It replaces the probabilistic-domain operations of SOCS with a max-log approach that uses a piecewise-linear function of reliability gaps between Chase-II list and out-of-list hypotheses. The approximation is claimed to preserve compatibility with the standard iterative TPC decoding loop. Numerical results for a TPC built from (256,239) eBCH codes show that the proposed decoder outperforms the baseline Chase-Pyndiah decoder at the same list size and approaches the performance of full SOCS decoding.

Significance. If the approximation is shown to maintain extrinsic LLR quality sufficiently across iterations, the work would offer a hardware-oriented alternative to SOCS that delivers clear gains over Chase-Pyndiah while approaching full SOCS performance. The reported numerical results for the (256,239) eBCH TPC provide concrete evidence of practical improvement, strengthening the case for low-complexity soft-output decoding in coding applications.

major comments (2)

- [Section III] Section III (proposed log-domain approximation): the piecewise-linear max-log mapping is introduced without an analytic error bound relative to the original SOCS probabilistic rule or a demonstration that extrinsic LLR bias does not accumulate over iterations. This is load-bearing for the central claim, because the headline result (approaching SOCS performance) rests on the assumption that the approximation preserves sufficient extrinsic information quality for the iterative TPC loop to converge.

- [Section IV] Section IV (numerical results): the performance curves for the (256,239) eBCH TPC are presented without reported error bars, number of simulated frames, or explicit definition of the piecewise-linear breakpoints and slopes. These omissions limit verification of how closely the proposed decoder approaches SOCS and whether the observed gap closure is robust.
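As a possible starting point for the bound requested in the first major comment, the elementary max-log inequality below bounds the per-bit error of keeping only the best hypothesis on each side, assuming finite hypothesis sets C_0 and C_1 (bit j equal to 0 or 1) of sizes M_0 and M_1 with log-domain metrics m(c). It does not cover the piecewise-linear out-of-list term or bias accumulation over iterations, and because the out-of-list space is exponentially large, ln M alone gives a loose bound; it therefore answers only part of the comment.

```latex
% Max-log bound for M terms m_1, ..., m_M
% (for M = 2: \ln(e^a + e^b) = \max(a,b) + \ln(1 + e^{-|a-b|})):
\max_i m_i \;\le\; \ln \sum_{i=1}^{M} e^{m_i} \;\le\; \max_i m_i + \ln M

% Exact and max-log LLR of bit j:
L_j = \ln \sum_{c \in \mathcal{C}_0} e^{m(c)} - \ln \sum_{c \in \mathcal{C}_1} e^{m(c)},
\qquad
\tilde{L}_j = \max_{c \in \mathcal{C}_0} m(c) - \max_{c \in \mathcal{C}_1} m(c)

% Applying the first bound to each log-sum separately:
-\ln M_1 \;\le\; L_j - \tilde{L}_j \;\le\; \ln M_0
```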

minor comments (2)

- [Abstract] The abstract and introduction could more explicitly state the complexity reduction (e.g., operations per iteration) achieved by the log-domain rule compared with probabilistic SOCS.

- [Section III] Notation for the reliability gaps and the piecewise-linear function should be introduced with numbered equations at the start of the proposed-method section to improve readability.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed review. The comments highlight important aspects for strengthening the theoretical justification and reproducibility of the results. We address each major comment below and outline the revisions we will make.


Referee: [Section III] Section III (proposed log-domain approximation): the piecewise-linear max-log mapping is introduced without an analytic error bound relative to the original SOCS probabilistic rule or a demonstration that extrinsic LLR bias does not accumulate over iterations. This is load-bearing for the central claim, because the headline result (approaching SOCS performance) rests on the assumption that the approximation preserves sufficient extrinsic information quality for the iterative TPC loop to converge.

Authors: We acknowledge that the manuscript does not include a formal analytic error bound for the piecewise-linear approximation relative to the original SOCS rule. Deriving such a bound for the full iterative decoder is non-trivial because of the feedback loop and the dependence on component-code list statistics. The approximation is instead designed as a monotonic, max-log-style mapping of reliability gaps that preserves LLR sign and ordering, consistent with standard practice in log-domain turbo decoding. To address potential bias accumulation, we have performed supplementary simulations that track the mean and variance of extrinsic LLRs across iterations for the (256,239) TPC; these show no measurable drift and stable convergence behavior. In the revised manuscript we will add a short subsection in Section III that (i) motivates the piecewise-linear choice via the max-log principle, (ii) states the design criteria used for breakpoints and slopes, and (iii) presents the new LLR-stability plots as empirical evidence that the approximation maintains sufficient extrinsic quality for the observed performance gains. revision: partial


Referee: [Section IV] Section IV (numerical results): the performance curves for the (256,239) eBCH TPC are presented without reported error bars, number of simulated frames, or explicit definition of the piecewise-linear breakpoints and slopes. These omissions limit verification of how closely the proposed decoder approaches SOCS and whether the observed gap closure is robust.

Authors: We agree that these details are necessary for reproducibility and will incorporate them in the revision. Section IV will be updated to: (1) state that 10^7 frames were simulated per SNR point, ensuring reliable BER estimates down to approximately 10^-6; (2) include 95% confidence-interval error bars on all BER curves; and (3) add an explicit table (or set of equations) listing the breakpoints and slopes of the piecewise-linear function used in the max-log approximation. These changes will allow readers to verify both the closeness to SOCS performance and the robustness of the reported gains over Chase-Pyndiah. revision: yes
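For the promised 95% error bars, one standard choice is the Wilson score interval on the bit-error count. The sketch assumes independent bit errors, which is optimistic for iterative decoders whose errors cluster within frames; an interval computed on frame errors or on batch means would be more conservative. Here `trials` is the number of bits counted (frames × bits per frame), and z = 1.96 gives a 95% interval.

```python
import math

def wilson_interval(errors, trials, z=1.96):
    """Wilson score interval for a binomial proportion (95% for z = 1.96)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = errors / trials
    denom = 1.0 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half = z / denom * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials))
    return max(0.0, center - half), min(1.0, center + half)
```

Unlike the plain normal approximation, the Wilson interval stays inside [0, 1] and remains usable when only a handful of errors (or none) are observed at the highest SNR points.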

Circularity Check

No significant circularity in the log-domain SOCS approximation derivation


The paper derives its log-domain approximation directly from the standard max-log technique applied to SOCS probability-domain operations, introducing a piecewise-linear function of reliability gaps as a replacement rule. This choice is presented as preserving iterative TPC compatibility by construction of the mapping, but the performance claims (outperforming Chase-Pyndiah and approaching SOCS) rest on external numerical validation against independent baselines for the (256,239) eBCH TPC, not on any reduction of results to fitted parameters or self-referential inputs. No load-bearing self-citation chains, uniqueness theorems from the same authors, or ansatzes smuggled via prior work are evident; the central approximation and its empirical demonstration remain self-contained against external benchmarks.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: the iterative TPC decoding loop remains stable when its extrinsic information is replaced by the proposed log-domain approximation.
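One way to probe this assumption, in the spirit of the rebuttal's bias-tracking experiment, is sketched below. It assumes the simulator knows the transmitted bits and uses the consistency condition of a symmetric Gaussian LLR (variance = 2 × mean) as a drift indicator; the function names and this particular check are illustrative, not the authors' procedure.

```python
from statistics import fmean, pvariance

def llr_stats(extrinsic, tx_bits):
    """Mean and variance of sign-corrected extrinsic LLRs (bit 0 <-> positive LLR)."""
    aligned = [w if b == 0 else -w for w, b in zip(extrinsic, tx_bits)]
    return fmean(aligned), pvariance(aligned)

def consistency_ratio(extrinsic, tx_bits):
    """For a consistent Gaussian LLR, variance / (2 * mean) is close to 1."""
    mean, var = llr_stats(extrinsic, tx_bits)
    return var / (2.0 * mean)

def track(per_iteration_llrs, tx_bits):
    """One (mean, variance, ratio) row per iteration, for plotting drift."""
    return [(*llr_stats(w, tx_bits), consistency_ratio(w, tx_bits))
            for w in per_iteration_llrs]
```

Scaling every extrinsic value by s scales the ratio by s, so an overconfident rule pushes it above 1 and an underconfident one below 1; a flat ratio across iterations is one concrete reading of "no measurable drift".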

Reference graph

Works this paper leans on

[1] P. Elias, “Error-free Coding,” Transactions of the IRE Professional Group on Information Theory, vol. 4, no. 4, pp. 29–37, 1954.

[2] H. Mukhtar, A. Al-Dweik, and A. Shami, “Turbo Product Codes: Applications, Challenges, and Future Directions,” IEEE Communications Surveys & Tutorials, vol. 18, no. 4, pp. 3052–3069, 2016.

[3] M. G. Greeshma and S. Murugan, “FPGA Implementation of Turbo Product Codes for Error Correction,” in Sustainable Communication Networks and Application. Singapore: Springer Nature Singapore, 2021, pp. 189–200.

[4] L. Zhou, H. Liu, and B. Zhang, “Flexible and high-efficiency turbo product code decoder design,” IEICE Electronics Express, vol. 9, no. 12, pp. 1044–1050, 2012.

[5] J. Justesen, “Performance of Product Codes and Related Structures with Iterated Decoding,” IEEE Transactions on Communications, vol. 59, no. 2, pp. 407–415, 2011.

[6] R. Pyndiah, “Near-optimum decoding of product codes: block turbo codes,” IEEE Transactions on Communications, vol. 46, no. 8, pp. 1003–1010, 1998.

[7] D. Chase, “A Class of Algorithms for Decoding Block Codes with Channel Measurement Information,” IEEE Transactions on Information Theory, vol. 18, no. 1, pp. 170–181, 1972.

[8] L. Bahl, J. Cocke, F. Jelinek, and J. Raviv, “Optimal decoding of linear codes for minimizing symbol error rate (Corresp.),” IEEE Transactions on Information Theory, vol. 20, no. 2, pp. 284–287, 1974.

[9] C. Hartmann and L. Rudolph, “An optimum symbol-by-symbol decoding rule for linear codes,” IEEE Transactions on Information Theory, vol. 22, no. 5, pp. 514–517, 1976.

[10] Y. Liu, M. Fossorier, and S. Lin, “MAP algorithms for decoding linear block codes based on sectionalized trellis diagrams,” in IEEE GLOBECOM 1998, vol. 1, 1998, pp. 562–566.

[11] C. Jego and W. Gross, “Turbo decoding of product codes using adaptive belief propagation,” IEEE Transactions on Communications, vol. 57, no. 10, pp. 2864–2867, 2009.

[12] S. Dave, J. Kim, and S. C. Kwatra, “An efficient decoding algorithm for block turbo codes,” IEEE Transactions on Communications, vol. 49, no. 1, pp. 41–46, 2001.

[13] A. Straßhofer, D. Lentner, G. Liva, and A. G. i. Amat, “Soft-Information Post-Processing for Chase-Pyndiah Decoding Based on Generalized Mutual Information,” in 2023 12th International Symposium on Topics in Coding (ISTC). IEEE, Sep. 2023, pp. 1–5. [Online]. Available: http://dx.doi.org/10.1109/ISTC57237.2023.10273464

[14] K. Yu and W. Xu, “An enhanced chase-pyndiah algorithm for turbo product codes,” in 2023 9th International Conference on Computer and Communications (ICCC), 2023, pp. 737–741.

[15] D. Artemasov, K. Andreev, P. Rybin, and A. Frolov, “Iterative Syndrome-Based Deep Neural Network Decoding,” IEEE Open Journal of the Communications Society, vol. 6, pp. 629–641, 2025.

[16] D. Artemasov, O. Nesterenkov, K. Andreev, P. Rybin, and A. Frolov, “Iterative Neural Rollback Chase–Pyndiah Decoding,” IEEE Communications Letters, vol. 30, pp. 482–486, 2026.

[17] T. Janz, S. Obermüller, A. Zunker, and S. Ten Brink, “Soft-Output from Covered Space Decoding of Product Codes,” in 2025 13th International Symposium on Topics in Coding (ISTC), 2025, pp. 1–5.

[18] P. Yuan, M. Médard, K. Galligan, and K. R. Duffy, “Soft-Output (SO) GRAND and Iterative Decoding to Outperform LDPC Codes,” IEEE Transactions on Wireless Communications, vol. 24, no. 4, pp. 3386–3399, Apr. 2025. [Online]. Available: http://dx.doi.org/10.1109/TWC.2025.3530880

[19] R. Storn and K. Price, “Differential evolution – a simple and efficient heuristic for global optimization over continuous spaces,” Journal of Global Optimization, vol. 11, no. 4, pp. 341–359, 1997.
