This paper derives an improved lower bound of order sqrt(n log log n) on the support size…

The capacity-achieving input distribution for the binomial channel must have support size at least order sqrt(n log log n).

Information Theory

Covers theoretical and experimental aspects of information theory and coding. Includes material in ACM Subject Class E.4 and intersects with H.1.1.

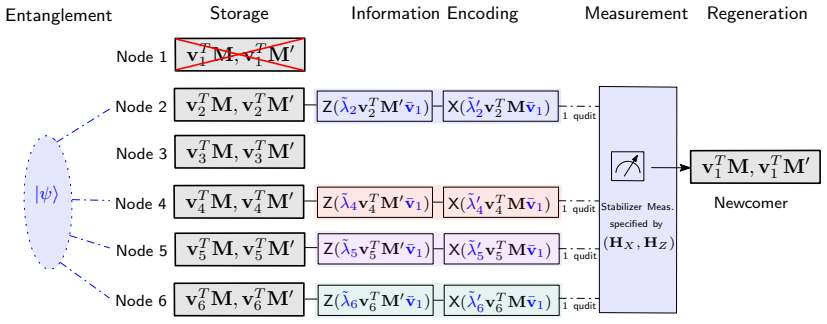

Simultaneously Minimizing Storage and Bandwidth Under Exact Repair With Quantum Entanglement

The minimal alpha and d beta_q point known for functional repair holds under exact repair when d is at least 2k-2.

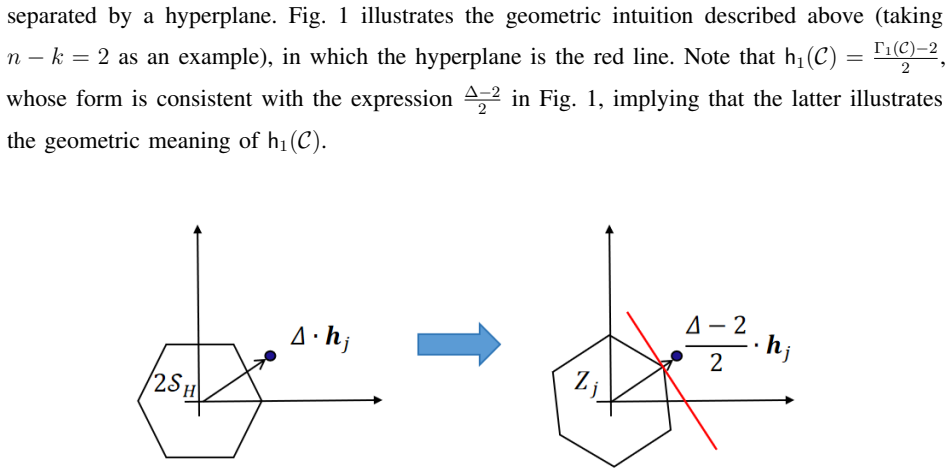

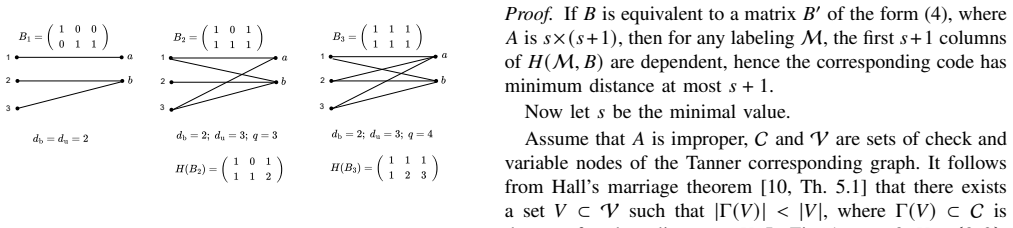

Angle Between Two Vectors over Finite Fields and an Application to Projective Unique Decoding

angle_H(u, C) below d/2 guarantees a single closest direction in the code up to scaling, after the function descends to a true metric on the

Secure (Multiple) Key-Cast over Networks: Multiple Eavesdropping Nodes

This rate is achievable and optimal for key-cast when sources reach all nodes via d vertex-disjoint paths despite ℓ node observers.

Single neural network enables reliable decoding and low packet loss in uncoordinated high-traffic control networks.

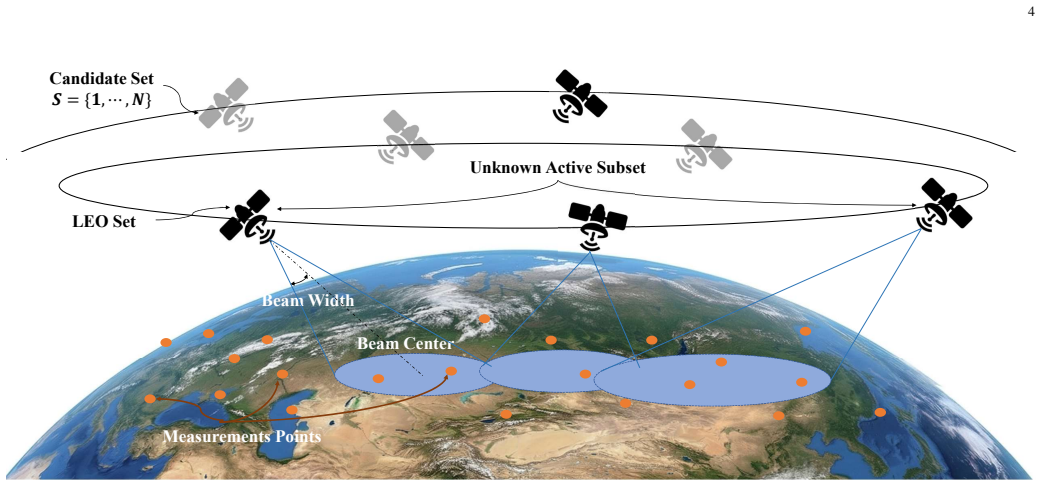

Capacity Scalability of LEO Constellations With Dynamic Link Failures

Under a Markov model of ISL states, scalability rises to an optimum then falls toward zero as satellite count grows.

A framework for constructing non-GRS MDS-NMDS codes from deep holes and its application

Codes with covering radius n-k yield new length-n+1 non-GRS families while preserving MDS/NMDS distance and avoiding GRS equivalence.

On the Hamming Distance and LCD Properties of Binary Polycyclic Codes and Their Duals

The codes are reversible and LCD for certain exponents, producing optimal binary linear codes at larger lengths.

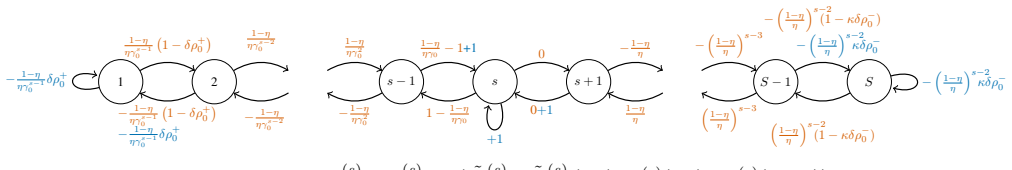

On Capacity and Delay of Wireless Networks with Node Failures

Even with the same number of active nodes, a failing network has lower capacity than a failure-free one and needs extra redundancy to match.

Memory Constrained Adversarial Hypothesis Testing

Upper and lower bounds on asymptotic minimax error share the same exponential dependence on the number of states and coincide for some cases

CR²: Cost-Aware Risk-Controlled Routing for Wireless Device-Edge LLM Inference

Margin gate and risk calibration cut wireless deployment costs by up to 16.9% at matched accuracy.

Strengthened inequalities recover Ky Fan's as a special case and are strictly stronger than classical versions for non-diagonal positive-def

When the shift constant delta is a unit, every ideal in the quotient ring is generated explicitly, with full lists, torsion, and sizes for n

Maximum Entropy of Sums of Independent Ternary Random Variables

The maximum occurs when n-1 variables are uniform on {0,2} and the last one's middle probability is set by the difference of two binomial-1/

Sequences are encoded by their minimum polar-compressed length plus the compressed bits in a prefix-free scheme that needs no source model.

Empirical coordination in the finite blocklength regime: an achievability result (Extended version)

Method of types produces both precise and second-order rate expressions for agents to match target action distributions with short blocks.

Schur Products of Constacyclic Codes via the Constacyclic Discrete Fourier Transform

Pattern polynomials and additive combinatorics yield dimension formulas for arbitrary constacyclic code products.

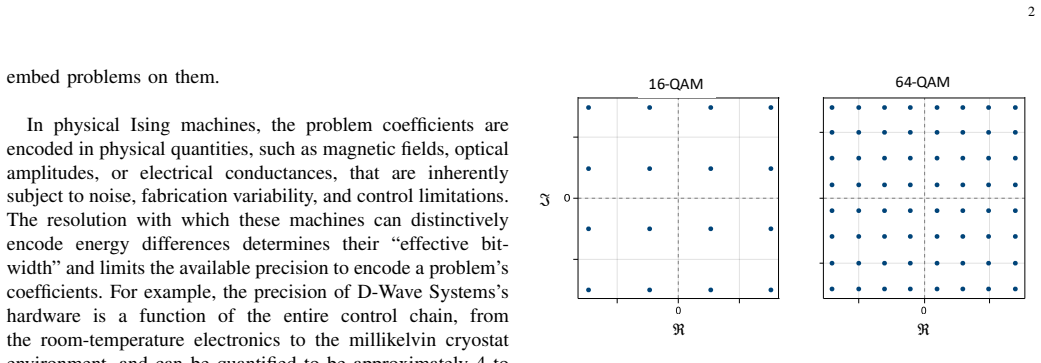

Performance of QUBO-Formulated MIMO Detection Under Hardware Precision Constraints

Derived distributions and sufficient-precision bounds let non-uniform bit allocation preserve error rates across antenna counts and up to 2

The Entropy of Floating-Point Numbers

An approximation links it to the continuous distribution and shows the entropy changes little when the variable is rescaled.

Recent Advances in Spatially Coupled Codes: Overview and Outlook

Review covers recent constructions, their properties, and design methods that make them attractive for communications and storage.

Hagiwara codes from quantum Reed-Solomon codes gain a practical algorithm for composite errors.

Beyond Polynomials: Optimal Locally Recoverable Codes from Good Rational Functions

Galois counting of split places in function fields yields infinite families with improved locality and distance parameters.

Optimal Codes with Positive Griesmer Defects, Related Optimal and Almost Optimal LRC Codes

New constructions yield codes not equivalent to known classes; some reach the CM bound exactly as locality-two LRCs.

RankGuardPolar Private Public Finite Length Polar Codes with Rank-Certified Leakage

A linear extractor isolates leaked combinations, enabling safe publication over shared binary erasure channels.

Parameter Estimation of Mutual Information Maximized Channels

Two algorithms enforce mutual-information optimality to jointly estimate both the unknown channel and its capacity-achieving input from y-0n

Sensor Design for Accuracy-Bounded Estimation via Maximum-Entropy Likelihood Synthesis

The method inverts classical sensor design by constructing the measurement model directly from an error budget and the dynamical prior.

Random Access Expectation in DNA Storage and Fountain Codes

Fully symmetric codes give normalized expectation at least 0.7854 with 0.7869 achievable under peeling in the large-blocklength limit.

Private Information Retrieval With Arbitrary Privacy Requirements for Graph-Based Storage

Neighborhood ranges let retrieval privacy vary continuously from local to full, while capacity is bounded or solved exactly.

Local Private Information Retrieval: A New Privacy Perspective for Graph-Based Replicated Systems

Requiring privacy only from servers that store the message yields exact rates for cycles and odd paths plus better bounds for other graphs.

Scalable Mamba-Based Message-Passing Neural Decoder for Error-Correcting Codes

The decoder gains 0.45 dB over prior neural methods on a 1056-bit LDPC code while using 1.5 times less memory, with savings increasing for 1

Sparse Signal Recovery using Log-Sum Regularization and Adaptive Smoothing

Adaptive smoothing keeps the proximal operator continuous so that AMP and state evolution accurately predict the reconstruction error.

Weight distributions of cosets of weight 2 of the generalized doubly extended Reed-Solomon codes

in generalized doubly extended Reed-Solomon codes, giving the explicit distribution when the gcd condition holds

List-Decodable Folded Quantum Hermitian Codes

They achieve list-decodability with comparable lengths but over smaller alphabets than Reed-Solomon codes.

Optimal Repair Bandwidth and Repair I/O of (n,n-2,2) MDS Array Codes

The minimum cost equals the maximum of two explicit bounds for all admissible lengths over any finite field.

Syndrome Adaptive Gain Control for Min-Sum Decoding of Quantum LDPC Codes

Scaling factor is adjusted from the fraction of unsatisfied stabilizers, removing the need for per-code offline tuning while keeping min-sum

Misspecified Universal Learning

Minimax regret analysis with log-loss extends to cases where the true data process lies outside the hypothesis class, unifying modes and tasks.

Low-Cost GNSS Anti-Jamming Through 2-Bit Phase Shift Beamforming with Machine Learning

Optimized QPSK weights hold 20.8 dB-Hz C/N0 while unprotected receivers drop to 9.3 dB-Hz.

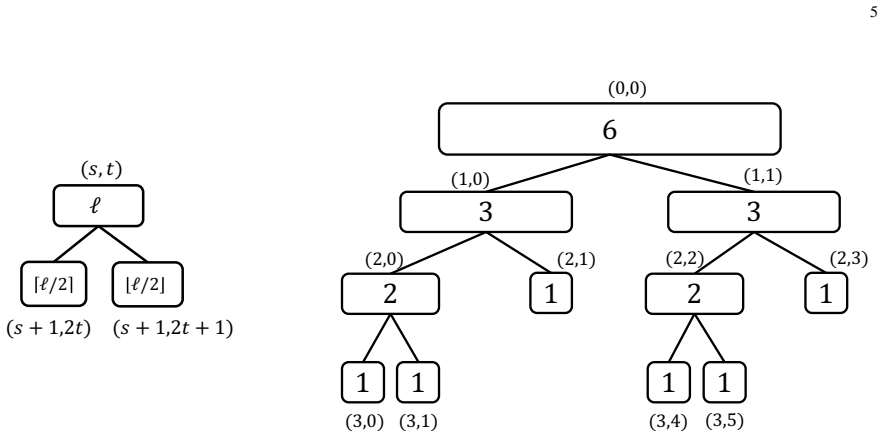

A Fast Hierarchical Splitting Approach for Non-Adaptive Learning of Random Hypergraphs

The scheme uses O(m log n) queries to recover 3-uniform edges but reduces decoding from cubic in n to polynomial in m for random instances.

Survey-Free Radio Map Construction via HMM-Based Coarse-to-Fine Inference

In unidirectional corridors the coarse-to-fine method delivers 8.96 dB MAE and 3.33 m localization without surveys.

Annotation-Free Indoor Radio Mapping via Physics-Informed Trajectory Inference

Physics-informed Bayesian model uses PADP continuity to reach 0.88 m localization error without labels or IMUs.

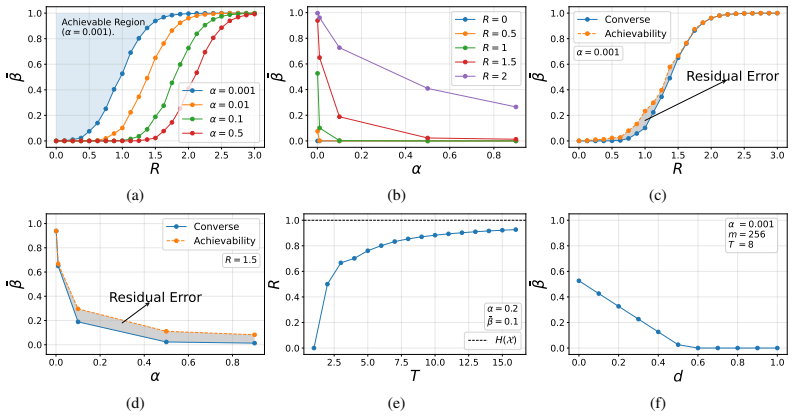

Rényi Rate-Distortion-Perception-Privacy Tradeoff under Indirect Observation

In Gaussian indirect observations standard metrics force a tradeoff with utility; a residual-only definition decouples them and yields exact

Closed-Form Gaussian Estimators for Multi-Source Partial Information Decomposition

Log-determinant formulas on covariance blocks give explicit redundancy, unique information, and synergy terms for any number of sources.

A Global Coding Scheme for OFDM over Finite Fields

Algebraic subcarriers via Galois transform create partial-geometry codes decoded with parallel binary algorithms at linear cost.

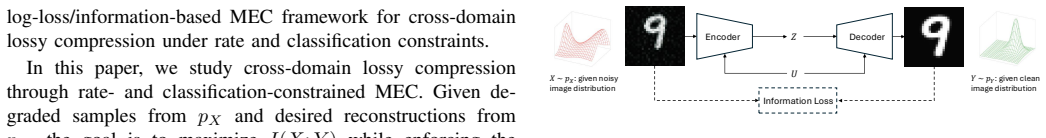

Cross-Domain Lossy Compression via Constrained Minimum Entropy Coupling

Rate-constrained minimum entropy coupling removes intermediate representations while preserving classification performance in image tasks.

Learning from Acceptance: Cumulative Regret in the Game of Coding

Repeated observations of acceptance outcomes let the data collector approach optimal performance without knowing the trade-off in advance.

Recovery Algorithms for Linear Batch Codes

A systematic study establishes order relations among algorithm types and extends graph-based constructions to arbitrary bipartite graphs.

Geometry of Rényi Entropy on the Majorization Lattice

It is also supermodular for α=0 and α≥1 after relating comonotone and independent couplings of marginal distributions.

Sparse Discrete Laplace and Gaussian Mechanisms under Local Differential Privacy

Smallest viable support cardinality achieves target privacy with least distortion in Laplace and Gaussian channels.

Universal Feature Selection with Noisy Observations and Weak Symmetry Conditions

The SVD-based procedure still attains near-optimal error exponents when symmetry deviations and observation noise stay small.

Secure and Private Structured-Subset Retrieval: Fundamental Limits and Achievable Schemes

The bound holds with shared-randomness ratio exactly D over N-1 and one scheme works for every demand family.

Secure and Private Structured-Subset Retrieval: Fundamental Limits and Achievable Schemes

Shared randomness ratio D/(N-1) and subpacketization (N-1)/gcd(D,N-1) hold uniformly across families.
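
The two expressions quoted in this summary can be evaluated with a minimal sketch like the one below. It assumes only that `D` and `N` are positive integers with `N >= 2`; what they denote in the paper is not stated here. The function names are hypothetical and chosen for illustration.

```python
from fractions import Fraction
from math import gcd


def shared_randomness_ratio(D: int, N: int) -> Fraction:
    """Return D/(N-1) as an exact fraction (assumes D >= 1, N >= 2)."""
    if D < 1 or N < 2:
        raise ValueError("expected D >= 1 and N >= 2")
    return Fraction(D, N - 1)


def subpacketization(D: int, N: int) -> int:
    """Return (N-1)/gcd(D, N-1), which is always an integer."""
    if D < 1 or N < 2:
        raise ValueError("expected D >= 1 and N >= 2")
    return (N - 1) // gcd(D, N - 1)


if __name__ == "__main__":
    # Illustrative values only; they are not taken from the paper.
    D, N = 4, 7
    print(shared_randomness_ratio(D, N))  # 2/3
    print(subpacketization(D, N))         # 3
```

As an arithmetic observation, the subpacketization expression equals the denominator of D/(N-1) written in lowest terms.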

Covert Capacity of Degraded Broadcast Channels

The computable capacity region shows time-sharing is suboptimal and superposition achieves higher rates while avoiding warden detection.

Error-Correcting Weakly Constrained Codes: Constructions and Achievable Rates

Expurgation then produces positive-rate codes with linear distance, and concatenation enables efficient encoding and decoding.

Tight Lower Bounds on The Single-Error Detection Threshold for Analog Error-Correcting Codes

This settles the open question on minimal height profiles for single-error detection when redundancy equals two and shows the general bound

Fundamental Trade-Offs in Multi-Bit Watermarking of Stochastic Processes

Information-theoretic analysis gives scheme-agnostic limits on false alarms, errors, distortion and rate for stochastic data.

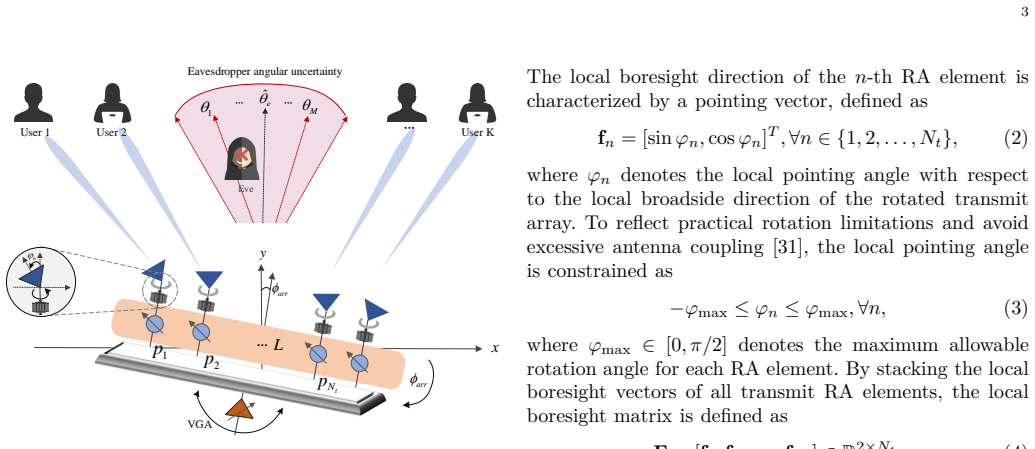

Array and element rotations plus CRB uncertainty modeling outperform fixed antennas by directing gains to users and suppressing leakage to e

On Codes with Support-Constrained Parity Checks

The distance is achieved over large fields, but generalized Reed-Solomon subcodes fail for some masks like the K6,6 incidence structure.

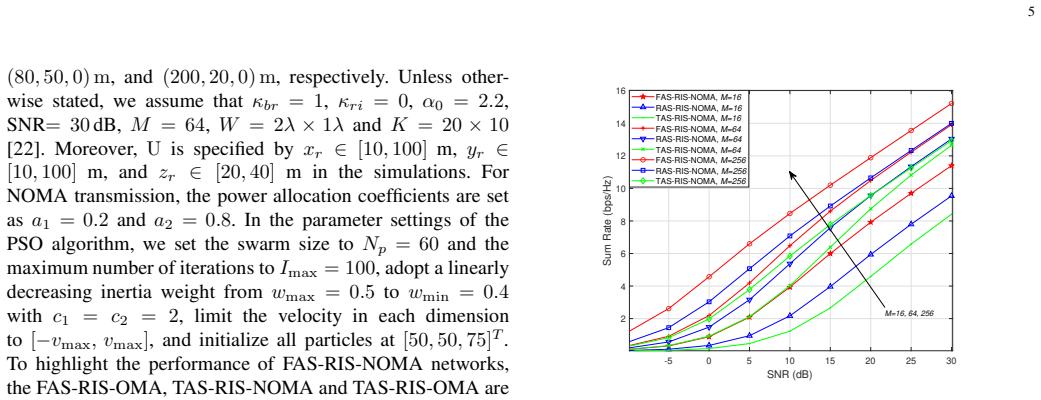

Fluid Antennas Assisted RIS-NOMA Communication Networks

Simulations show gains versus fixed antennas and orthogonal access, with further improvement from more RIS elements or larger fluid antennas

Non-binary LDPC codes for Data Storage

Lifted constructions from binary matrices reach the maximum possible minimum distance for erasure correction.

On Reducing Decoding Complexity of Successive-Cancellation List Flip Decoding of Polar Codes

PSCLF adds 0.1 dB gain over SCLF and matches plain SCL latency at low FER through early termination and restarts.

Chase-like Decoding: Test Pattern Design and Performance Analysis

A covering algorithm selects patterns that capture likely errors, outperforming Chase-II sets at the same complexity for high-rate codes.

Chase-like Decoding: Test Pattern Design and Performance Analysis

New test-pattern sets cover likely errors more effectively than Chase-II patterns for high-rate BCH codes.

Semantic Smoothing for Language Models via Distribution Estimation and Embeddings

Interpolation blends empirical counts with embedding-derived neighbors, cutting worst-case risk and test perplexity on standard estimators.

Probabilistic Ising machines reach maximum-likelihood accuracy with 100 iterations and outperform standard MMSE methods for future 6G arrays

Probabilistic physics-inspired computing matches ideal performance for massive 6G wireless systems in 100 steps while beating current MMSE.

It identifies active satellites and builds accurate continuous RSS fields, aiding interference handling in dense LEO networks.

Kolmogorov-Nagumo Mean Frameworks for Conditional Entropy

The new averaging framework represents Augustin-Csiszár conditional entropy in cases where standard η-averaging cannot.

Kolmogorov-Nagumo Mean Frameworks for Conditional Entropy

Kolmogorov-Nagumo means let generalized g-conditional entropies include alpha-parameterized measures missed by prior eta-averaging.

Kolmogorov-Nagumo Mean Frameworks for Conditional Entropy

Kolmogorov-Nagumo based generalized g-conditional entropies include Augustin-Csiszár cases for alpha in (0,1) or (1, infinity).

A Syndrome-Space Approach to Proximity Gaps and Correlated Agreement for Random Linear Codes

Direct proofs reach radius 1-R-ε for large alphabets and near-capacity bounds for constant alphabets without list decoding.

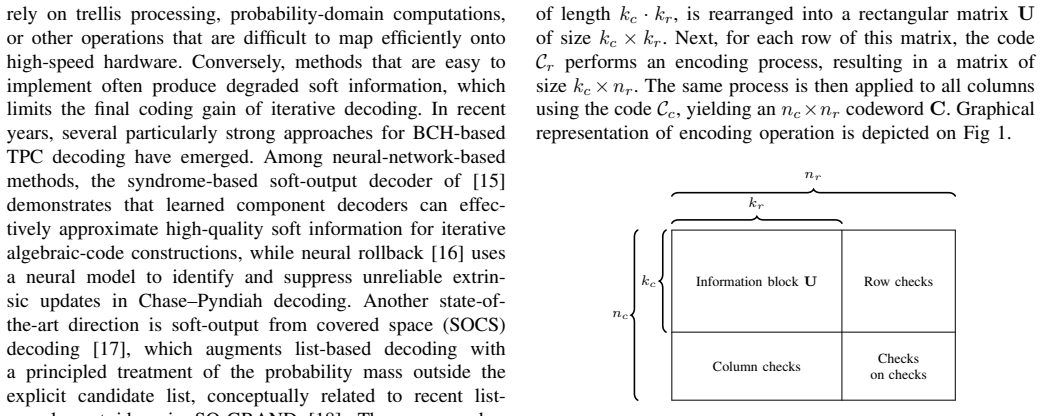

A Log-Domain Approximation of SOCS Decoding for Turbo Product Codes

A piecewise-linear function of reliability gaps replaces probability arithmetic while staying compatible with standard iterative decoding.

UMVUE-Type Estimators under Bregman Losses

One direction recovers classical unbiased estimation; the other supports new minimum-variance estimators through dual-space conditioning.

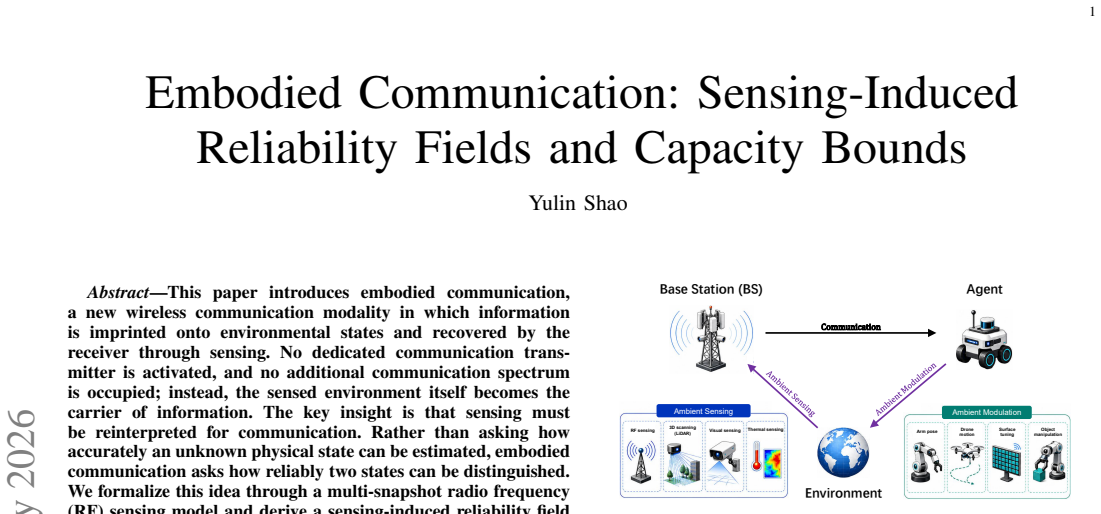

Embodied Communication: Sensing-Induced Reliability Fields and Capacity Bounds

Multi-snapshot sensing produces a reliability field that turns state distinction into a geometric codebook problem with an optimal finite-

How Big Should a Wireless Foundation Model Be?

Physical constraints on propagation degrees of freedom make further scaling ineffective once data is sufficient.

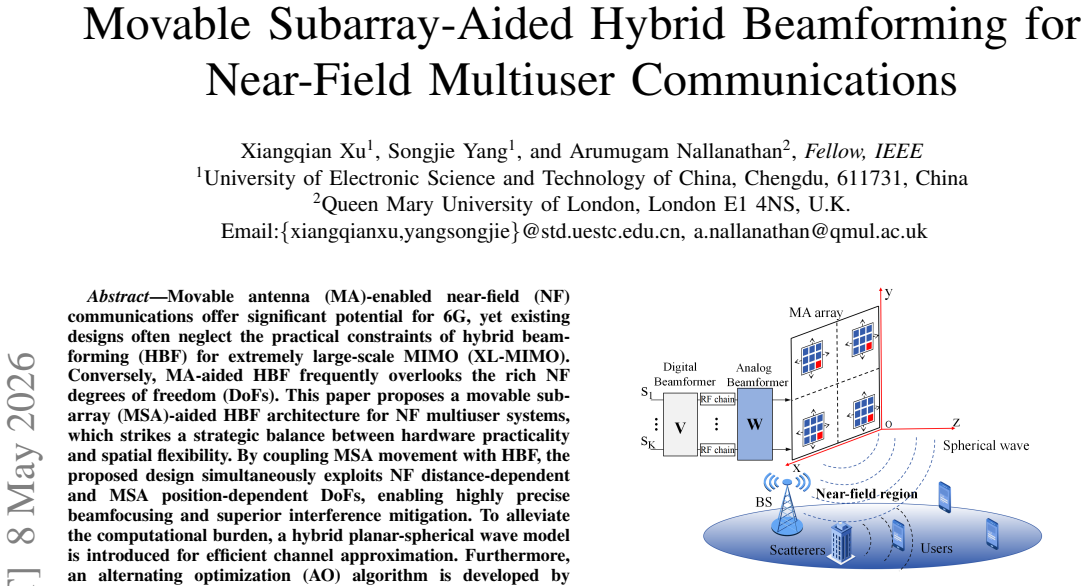

Movable Subarray-Aided Hybrid Beamforming for Near-Field Multiuser Communications

Coupling subarray movement with hybrid beamforming adds position-dependent freedom for sharper focusing and less interference.

Sub-Gaussian Concentration and Entropic Normality of the Maximum Likelihood Estimator

Score assumptions produce tail bounds, moment convergence, and vanishing relative entropy to the Gaussian, with smoothing removable when the

Hybrid Multiport Receivers for Slow Fluid Antenna Multiple Access

Hybrid multiport receiver cuts computation by over 60 percent in slow multiuser FAMA while keeping selection benefits.

The strong converse shows that the threshold for reliable and secure communication remains fixed for any chosen tolerances, with universal c

Generalized Skew Multivariate Goppa Codes

A new parity check matrix establishes the link and provides bounds on dimension and minimum distance for the multivariate case.

Generalized Skew Multivariate Goppa Codes

New parity check matrix yields bounds on dimension and minimum distance for the multivariate versions.