Recognition: 2 theorem links · Lean Theorem

Evaluating Design Conformance Through Trace Comparison

Pith reviewed 2026-05-11 03:19 UTC · model grok-4.3

The pith

Comparing distributed traces to design traces produces a quantitative conformance metric for system implementations.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Distributed traces produced by instrumented applications are evaluated for conformance by comparison to design traces. The resulting conformance percentage is a quantitative metric that can be tracked over time to determine how closely a concrete implementation corresponds to the key attributes of the expected design model.

What carries the argument

Trace comparison for conformance checking, which aligns sequences of events from implementation traces with those from design traces to compute a similarity score.
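The alignment idea can be illustrated with a minimal sketch. It assumes each trace has been reduced to an ordered list of span names and uses a longest-common-subsequence alignment as a stand-in; the paper's actual alignment procedure is not specified on this page, and the span names and the `alignment_score` helper below are hypothetical.

```python
from typing import Sequence


def lcs_length(design: Sequence[str], observed: Sequence[str]) -> int:
    """Length of the longest common subsequence of two event sequences."""
    # prev[j] is the LCS length of the design prefix seen so far and observed[:j].
    prev = [0] * (len(observed) + 1)
    for d in design:
        curr = [0]
        for j, o in enumerate(observed, start=1):
            if d == o:
                curr.append(prev[j - 1] + 1)
            else:
                curr.append(max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def alignment_score(design: Sequence[str], observed: Sequence[str]) -> float:
    """Percentage of design events that appear, in order, in the observed trace."""
    if not design:
        return 100.0
    return 100.0 * lcs_length(design, observed) / len(design)


# Hypothetical span names, chosen only for illustration.
design_events = ["gateway.receive", "auth.verify", "orders.create", "db.insert"]
observed_events = ["gateway.receive", "orders.create", "cache.lookup", "db.insert"]

score = alignment_score(design_events, observed_events)
print(f"{score:.1f}% of design events aligned")  # prints "75.0% of design events aligned"
```

Here the observed trace skips `auth.verify`, so three of the four design events align. An order-sensitive alignment like this penalizes reordering as well as omission, which may or may not match the design attributes a team cares about.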

If this is right

- The conformance percentage can be tracked across time or releases to identify increasing divergence from the design.

- Design adherence becomes an objective, trackable property instead of a subjective judgment.

- Specific points of behavioral deviation can be isolated through the trace alignment process.

- Implementation changes can be assessed for their effect on overall design fidelity.
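Tracking the metric across releases could look like the following sketch. The release labels, scores, and the 5-point threshold are invented for illustration, and `find_drift` is a hypothetical helper rather than anything the paper defines.

```python
from typing import List, Tuple


def find_drift(history: List[Tuple[str, float]], max_drop: float = 5.0) -> List[str]:
    """Return releases whose score fell more than max_drop points below the previous release."""
    flagged = []
    for (_, prev_score), (release, score) in zip(history, history[1:]):
        if prev_score - score > max_drop:
            flagged.append(release)
    return flagged


# Hypothetical conformance percentages per release.
history = [("v1.0", 98.0), ("v1.1", 97.0), ("v1.2", 88.5), ("v1.3", 88.0)]

print(find_drift(history))               # prints ['v1.2']
print(history[0][1] - history[-1][1])    # cumulative drop since the first release: 10.0
```

Comparing consecutive releases isolates the change that caused a sharp drop, while the cumulative difference catches slow divergence that never crosses the per-release threshold.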

Where Pith is reading between the lines

- Regular monitoring of the metric could support automated checks in development workflows to catch drift early.

- The need for design traces may encourage teams to maintain more explicit and up-to-date design representations.

- The method could be applied beyond distributed systems in any setting where comparable execution traces exist.

Load-bearing premise

Design traces can be feasibly generated or represented in a form that allows meaningful automated comparison with implementation traces.

What would settle it

A controlled test where the implementation is altered to violate one specific design attribute, after which the conformance percentage is checked to see if it drops in a way that reflects the introduced mismatch.
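A sketch of such a controlled test follows. It assumes the required/disallowed span split quoted in the theorem-link section of this page, and it assumes a simple scoring rule (rules satisfied divided by total rules) because the paper's exact formula is not given here. All span names are hypothetical.

```python
from typing import Iterable, Set


def conformance_percentage(observed: Iterable[str], required: Set[str], disallowed: Set[str]) -> float:
    """Share of design rules satisfied: each required span present, each disallowed span absent."""
    seen = set(observed)
    total = len(required) + len(disallowed)
    if total == 0:
        return 100.0
    satisfied = len(required & seen) + len(disallowed - seen)
    return 100.0 * satisfied / total


# Assumed design rules for a hypothetical order-placement flow.
required = {"gateway.receive", "auth.verify", "orders.create"}
disallowed = {"orders.direct_db_write"}

baseline = ["gateway.receive", "auth.verify", "orders.create"]
# Mutation: the altered build skips authentication, violating exactly one rule.
mutated = ["gateway.receive", "orders.create"]

before = conformance_percentage(baseline, required, disallowed)
after = conformance_percentage(mutated, required, disallowed)
print(before, after)  # prints "100.0 75.0"
```

Under this assumed rule, one violated rule out of four lowers the score by exactly 25 points, so the size of the drop reflects the introduced mismatch. A metric that stayed at 100% after the mutation would fail the test.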

Figures

read the original abstract

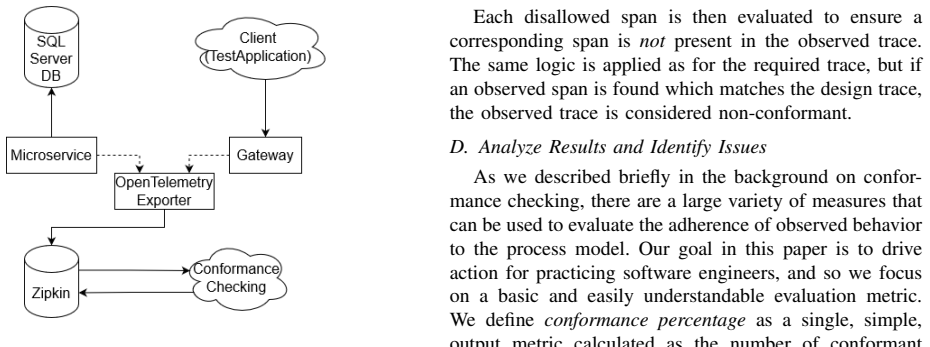

The design of a system and its implementation are two tasks often carried out by different individuals on a development team, and can occur weeks or months apart. This creates a potential for divergence between real behavior and the designed model that an implementation is intended to match. Particularly as time passes and individuals who were present for the original conception of the design leave, a system can lose coherence and drift from intended design principles. Even with a robust system design, more is needed to ensure that the key implementation details match the design and that adherence to a particular strategy is not lost over time. This paper proposes an approach to address that concern for distributed systems using conformance checking, a methodology borrowed from process mining. Distributed traces produced by instrumented applications are evaluated for conformance by comparison to design traces. The resulting conformance percentage is a quantitative metric that can be tracked over time to determine how closely a concrete implementation corresponds to the key attributes of the expected design model. This analysis is done using the dominant industry standard, OpenTelemetry, and so should apply to a wide range of distributed systems.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes an approach to monitor design-implementation alignment in distributed systems by applying conformance checking from process mining: traces produced by OpenTelemetry-instrumented implementations are compared against 'design traces' to yield a quantitative conformance percentage that can be tracked over time.

Significance. If the comparison mechanism can be made concrete, the work would supply a practical, standards-based metric for detecting design drift in evolving distributed systems, extending process-mining conformance techniques to the OpenTelemetry ecosystem that already sees wide industrial use.

major comments (1)

- [Abstract] The central claim requires that design traces can be generated or extracted from design artifacts and aligned with OpenTelemetry spans to compute a conformance percentage, yet the manuscript supplies no generation procedure, no representation format, and no mapping rules between the two trace types; without these, the quantitative metric cannot be computed.

minor comments (1)

- [Abstract] The abstract refers to 'key attributes of the expected design model' without indicating how those attributes are identified or encoded for comparison.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback. The comment correctly identifies a gap in the current manuscript regarding the concrete mechanisms for producing and aligning design traces. We address this below and will revise the paper accordingly.

Point-by-point responses

- Referee: [Abstract] The central claim requires that design traces can be generated or extracted from design artifacts and aligned with OpenTelemetry spans to compute a conformance percentage, yet the manuscript supplies no generation procedure, no representation format, and no mapping rules between the two trace types; without these, the quantitative metric cannot be computed.

  Authors: We agree that the manuscript, as currently written, presents the high-level approach and the resulting conformance metric but does not supply an explicit generation procedure, a concrete representation format for design traces, or detailed mapping rules to OpenTelemetry spans. The abstract and introduction describe the intended use of design traces derived from design artifacts, yet the body of the paper focuses primarily on the comparison step once such traces exist. To make the quantitative metric fully operational, the revised manuscript will add a dedicated section (provisionally titled “Generating and Representing Design Traces”) that (1) outlines a systematic procedure for extracting design traces from standard artifacts such as UML sequence diagrams or BPMN models, (2) defines a lightweight JSON representation that mirrors the structure of OpenTelemetry spans (including span name, attributes, and temporal ordering), and (3) specifies deterministic alignment and mapping rules that enable direct computation of the conformance percentage. These additions will be illustrated with a small running example so that readers can reproduce the metric from design artifacts and observed traces.

  Revision: yes
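To make the promised JSON representation concrete, here is one possible shape, offered as a sketch only. The field names (`trace`, `spans`, `order`, `name`, `attributes`) and the matching rule in `span_matches` are assumptions, not the authors' format, and observed spans are simplified to plain dictionaries rather than real OpenTelemetry span objects. The attribute keys follow OpenTelemetry semantic-convention naming but are illustrative.

```python
import json
from typing import Any, Dict

# Hypothetical design-trace document; the schema is an assumption for illustration.
DESIGN_TRACE_JSON = """
{
  "trace": "checkout",
  "spans": [
    {"order": 1, "name": "POST /checkout", "attributes": {"http.request.method": "POST"}},
    {"order": 2, "name": "charge-card", "attributes": {"rpc.system": "grpc"}}
  ]
}
"""


def span_matches(design_span: Dict[str, Any], observed_span: Dict[str, Any]) -> bool:
    """A design span matches when names are equal and every design attribute appears unchanged."""
    if design_span["name"] != observed_span["name"]:
        return False
    observed_attrs = observed_span.get("attributes", {})
    return all(observed_attrs.get(k) == v for k, v in design_span["attributes"].items())


design = json.loads(DESIGN_TRACE_JSON)
# Temporal ordering is carried by the assumed "order" field.
design_spans = sorted(design["spans"], key=lambda s: s["order"])

observed_span = {
    "name": "POST /checkout",
    "attributes": {"http.request.method": "POST", "http.response.status_code": 200},
}
print(span_matches(design_spans[0], observed_span))  # prints True
```

Treating design attributes as a required subset lets the implementation emit extra attributes without lowering conformance, which keeps the design trace limited to the attributes the design actually constrains.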

Circularity Check

No circularity: methodological proposal with no equations, fits, or self-referential derivations

Full rationale

The paper proposes a high-level conformance-checking method that borrows process-mining ideas and applies them to OpenTelemetry traces versus design traces. No equations, fitted parameters, predictions, or uniqueness theorems appear. No self-citations are load-bearing. The central claim is a suggestion for future tooling rather than a derivation that reduces to its own inputs by construction. The absence of any quantitative model or self-referential step keeps the circularity score at zero.

Axiom & Free-Parameter Ledger

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean · washburn_uniqueness_aczel · unclear

  Relation between the paper passage and the cited Recognition theorem: unclear.

  Paper passage: Distributed traces produced by instrumented applications are evaluated for conformance by comparison to design traces. The resulting conformance percentage is a quantitative metric...

- IndisputableMonolith/Foundation/RealityFromDistinction.lean · reality_from_one_distinction · unclear

  Relation between the paper passage and the cited Recognition theorem: unclear.

  Paper passage: Spans in design traces are split into two collections, the collection of required spans that must be present, and the collection of disallowed spans that must be absent.

What do these tags mean?

- matches: The paper's claim is directly supported by a theorem in the formal canon.

- supports: The theorem supports part of the paper's argument, but the paper may add assumptions or extra steps.

- extends: The paper goes beyond the formal theorem; the theorem is a base layer rather than the whole result.

- uses: The paper appears to rely on the theorem as machinery.

- contradicts: The paper's claim conflicts with a theorem or certificate in the canon.

- unclear: Pith found a possible connection, but the passage is too broad, indirect, or ambiguous to say the theorem truly supports the claim.

