Nine LLM audits on prompts found 51 defects and converged to zero

Expanded-scope rounds in a 7,150-line multi-agent system caught issues single-file checks missed.

Software Engineering

Covers design tools, software metrics, testing and debugging, programming environments, etc. Roughly includes material in all of ACM Subject Class D.2, except that D.2.4 (program verification) should probably have Logics in Computer Science as the primary subject area.

Shared-buffer modal design unifies editing, execution, and debugging in one lightweight terminal tool.

Characterizing the Failure Modes of LLMs in Resolving Real-World GitHub Issues

Strategy formulation and logic synthesis cause the highest error rates while localization succeeds more often across tested models.

Uncertainty Quantification for LLM-based Code Generation

A method using hypothesis testing guarantees correct solutions in partial code outputs while cutting removals by 24.5%.

ReproBreak: A Dataset of Reproducible Web Locator Breaks

Mining 359 repositories and validating changes in top projects yields examples of breaks from structural UI edits.

CIDR: A Large-Scale Industrial Source Code Dataset for Software Engineering Research

CIDR gives researchers 373 million lines of real production code from 12 companies for model training and quality studies.

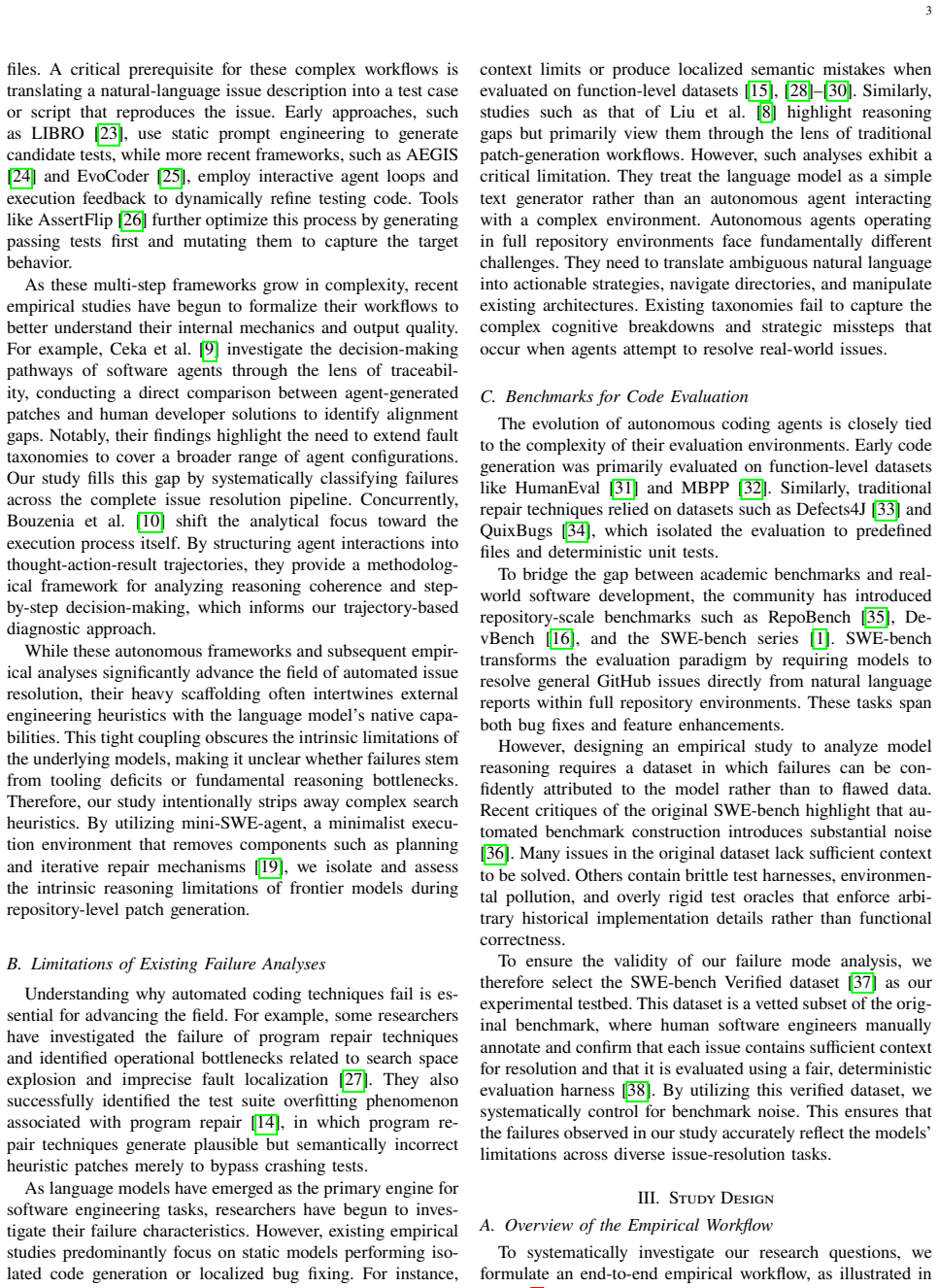

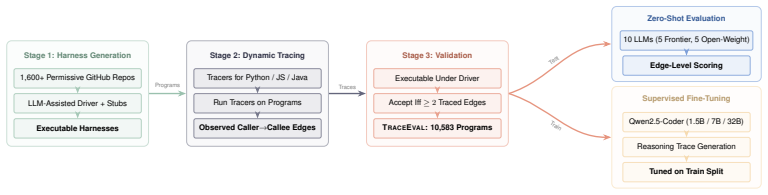

It's Not the Size: Harness Design Determines Operational Stability in Small Language Models

Pipeline wrappers lift task rates to 0.952 while raw prompts trigger format collapse and lower scores in 2-3B models.

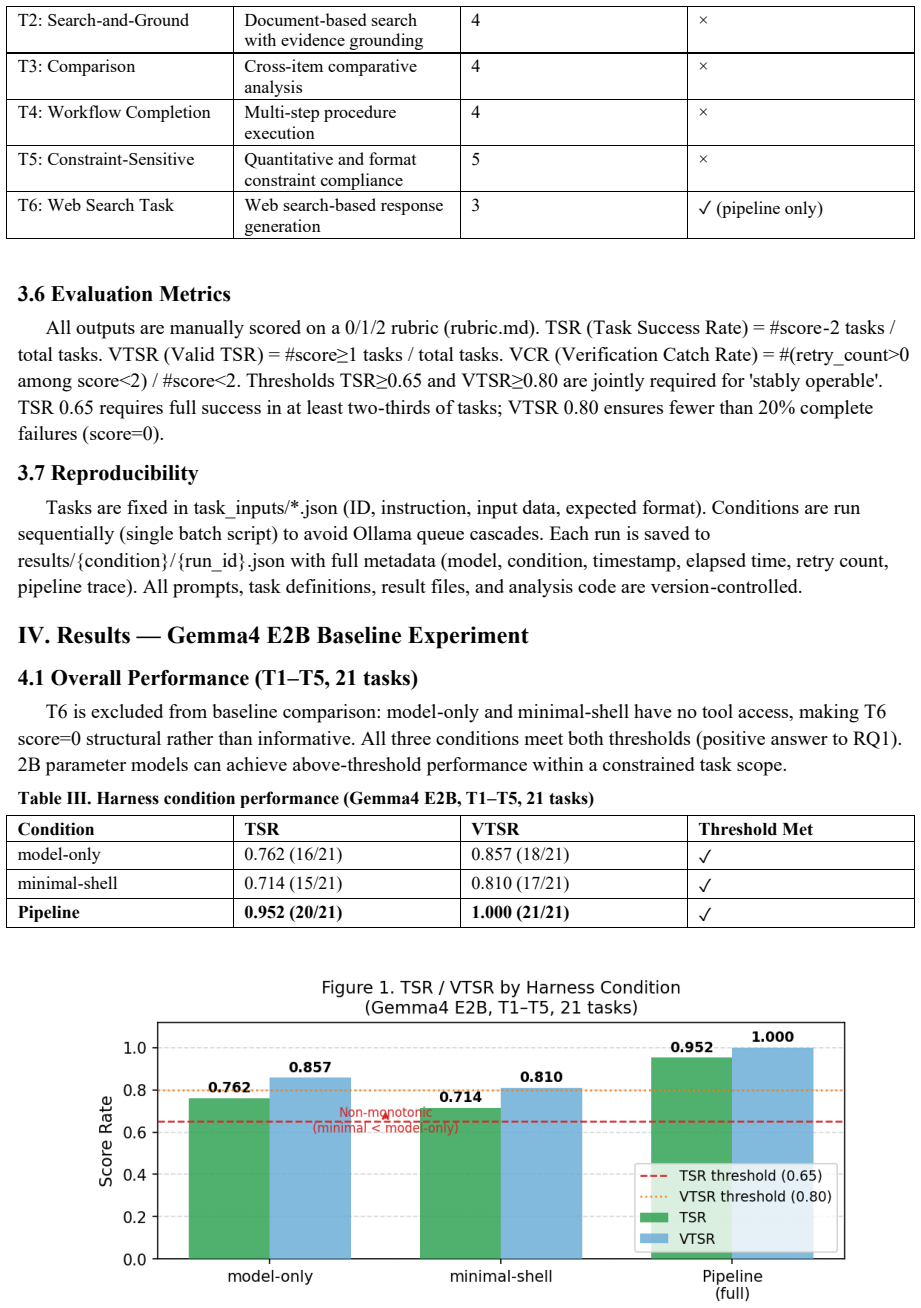

HM-Req: A Framework for Embedding Values within CPS Human Monitoring Requirements

Controlled natural language plus dashboard flags value clashes early during requirements work for systems that watch people.

Pilot classifies Decision Event Schema properties and finds one universal gap plus four regime-specific gaps in reconstructing what agents,

StepCodeReasoner: Aligning Code Reasoning with Stepwise Execution Traces via Reinforcement Learning

Explicitly modeling each execution state reduces inconsistent reasoning and boosts overall code performance.

The Death Spiral of Open Source Projects: A Post-Mortem Analysis of Pull Request Workflow Dynamics

Analysis of 1.3 million pull requests shows friction and negativity grow with age but do not cause failure; innovation and ecosystem value (

An Extensive Replication Study of the ABLoTS Approach for Bug Localization

An incorrect cut-off date let later bug reports enter training, inflating ABLoTS performance on the original Java projects.

Constraint graphs from PyPI data and selective LLM use cut median time from 151 s to 24 s and LLM calls by 11x versus PLLM on HG2.9K.

A Research Agenda on Agents and Software Engineering: Outcomes from the Rio A2SE Seminar

Experts map short- and long-term directions across governance, architecture, quality, and sustainability to align efforts on agentic AI.

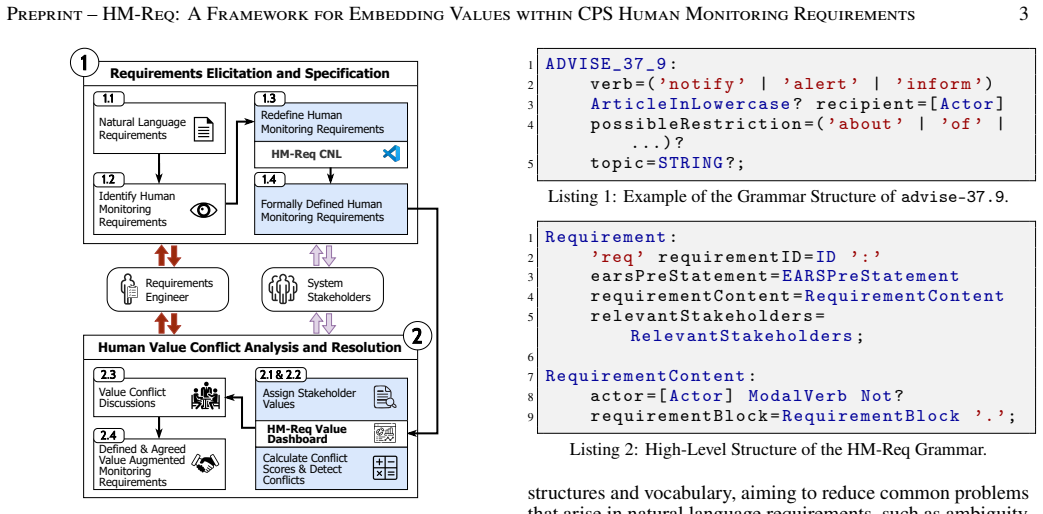

Decaf: Improving Neural Decompilation with Automatic Feedback and Search

Search guided by compilation errors corrects semantic mistakes in AI outputs from optimized binaries while preserving source similarity.

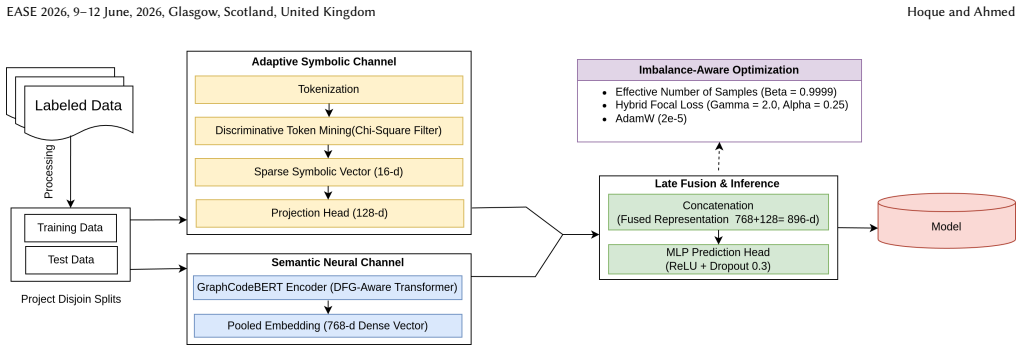

NeuroFlake: A Neuro-Symbolic LLM Framework for Flaky Test Classification

Neuro-symbolic injection halves the performance drop on perturbed tests versus pure neural baselines.

Natural Language based Specification and Verification

Preliminary results suggest this route can catch issues in LLM-written programs without rigid formal languages.

SHIA: A Direct SysML-Hardware Interface Architecture for Model-Centric Verification

Logic gate test shows correct bidirectional messages and zero output discrepancy, keeping the original model as the active reference.

Using Logs to support Programming Education

Granular interaction data enables analysis of comprehension, errors, and exercise timing in programming classes.

CppPerf: An Automated Pipeline and Dataset for Performance-Improving C++ Commits

Testing shows automated tools fix only 13.5 percent of these multi-file changes from mature projects.

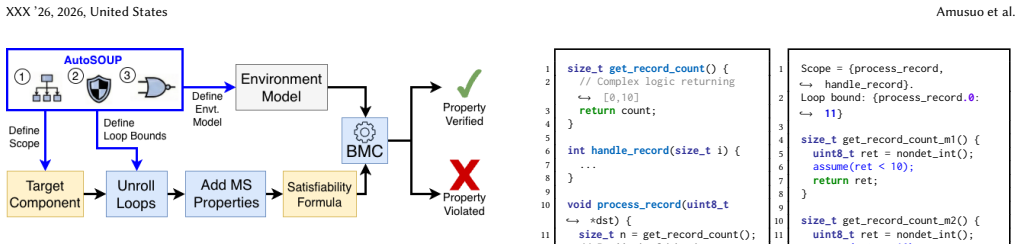

AutoSOUP: Safety-Oriented Unit Proof Generation for Component-level Memory-Safety Verification

The system encodes scope, loop bounds, and environment models automatically so formal proofs of memory safety no longer require manual setup

ChatGPT: Friend or Foe When Comprehending and Changing Unfamiliar Code

Lab comparison shows every detailed step appears with or without AI, yet the causes of getting stuck and the recovery paths differ between the two groups.

On Problems of Implicit Context Compression for Software Engineering Agents

In-Context Autoencoder succeeds on single-shot code tasks yet breaks down when agents must plan and edit across multiple steps.

CrackMeBench: Binary Reverse Engineering for Agents

CrackMeBench uses educational tasks in a sandbox to score how well models recover keys and logic from compiled programs.

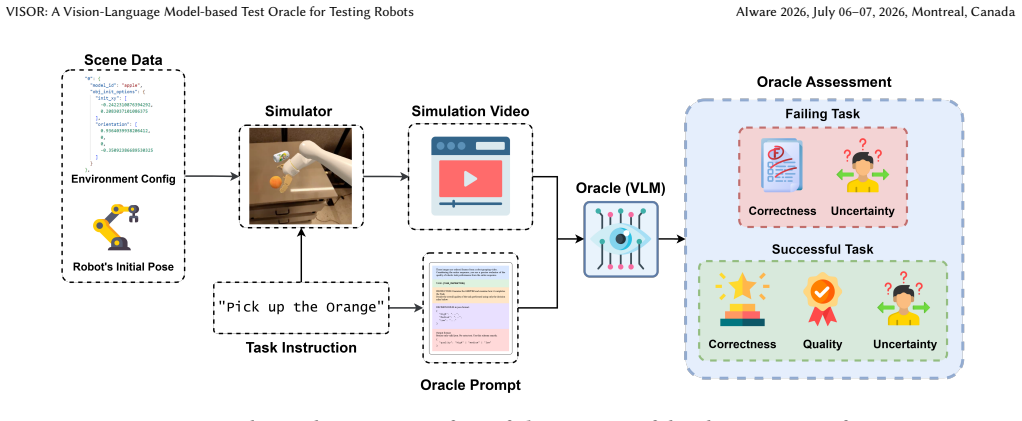

VISOR: A Vision-Language Model-based Test Oracle for Testing Robot

Scores task correctness and quality from videos while reporting its own uncertainty, tested on over 1,000 robot videos.

DREAMS: Modelling Support for Research into Engineering and Artistic Design

Preliminary tests with four users show faster revisions, fewer edge crossings, and quicker evidence retrieval than manual drawing of causal

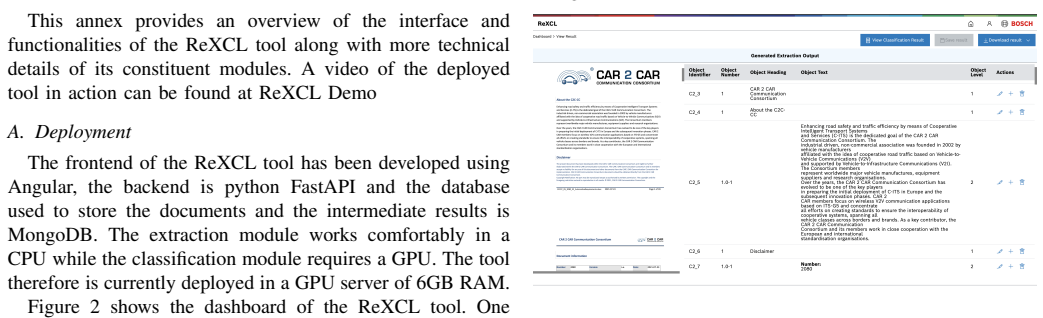

Read, Extract, Classify: A Tool for Smarter Requirements Engineering

It combines heuristics and adaptive model fine-tuning to schematize semi-structured documents with better efficiency and accuracy.

MARGIN: Margin-Aware Regularized Geometry for Imbalanced Vulnerability Detection

MARGIN aligns embedding distributions with Voronoi cells via von Mises-Fisher concentration to stabilize decision boundaries on skewed data.

Factorial test of four variables shows only session progress and task type produce reliable differences in compliance.

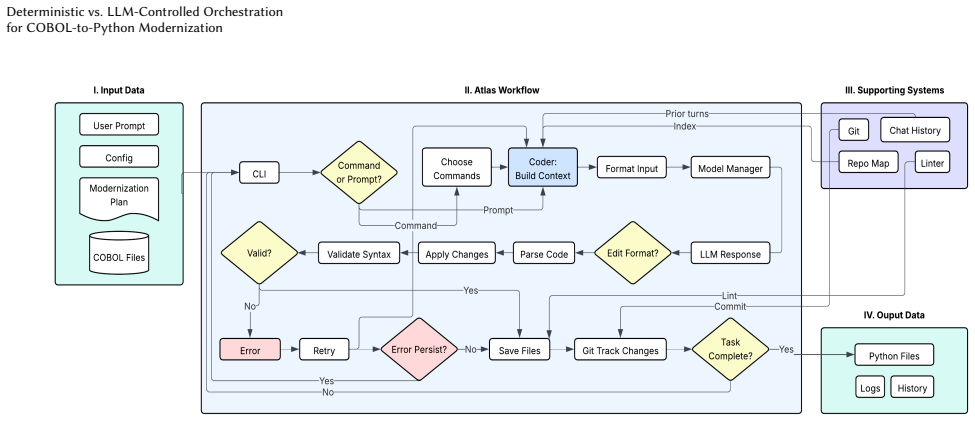

Deterministic vs. LLM-Controlled Orchestration for COBOL-to-Python Modernization

In COBOL-to-Python tests, fixed execution policies deliver stable results and cut token use without losing translation quality.

Evaluating Tool Cloning in Agentic-AI Ecosystems

Audit of 100k tools across 8,861 repositories shows hidden duplication inflates diversity counts and biases benchmarks.
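
The summary notes that hidden duplication inflates diversity counts. As a minimal sketch of why, assuming each tool is described by a hypothetical dict with `name`, `description`, and `parameters` fields (this schema and the normalization rules are illustrative assumptions, not the audit's method), hashing a canonical form of each definition shows how many raw entries collapse into one distinct tool:

```python
import hashlib
import json
import re
from collections import Counter


def normalize_tool(tool: dict) -> str:
    """Build a canonical string for a tool definition.

    Hypothetical normalization: lowercase the name, collapse whitespace in
    the description, and serialize the parameter schema with sorted keys,
    so cosmetic edits (casing, spacing, key order) do not count as new tools.
    """
    name = tool.get("name", "").strip().lower()
    description = re.sub(r"\s+", " ", tool.get("description", "")).strip().lower()
    params = json.dumps(tool.get("parameters", {}), sort_keys=True)
    return f"{name}|{description}|{params}"


def clone_report(tools: list) -> dict:
    """Compare the raw tool count with the number of distinct canonical forms."""
    digests = Counter(
        hashlib.sha256(normalize_tool(t).encode("utf-8")).hexdigest() for t in tools
    )
    distinct = len(digests)
    return {
        "raw": len(tools),
        "distinct": distinct,
        "cloned_copies": len(tools) - distinct,
        # How much a naive count overstates diversity (1.0 means no clones).
        "inflation": len(tools) / distinct if distinct else 0.0,
    }


tools = [
    {"name": "read_file", "description": "Read a file.", "parameters": {"path": "string"}},
    {"name": "Read_File", "description": "Read  a file.", "parameters": {"path": "string"}},
    {"name": "web_search", "description": "Search the web.", "parameters": {"q": "string"}},
]
print(clone_report(tools))
# {'raw': 3, 'distinct': 2, 'cloned_copies': 1, 'inflation': 1.5}
```

Exact hashing only catches copies that are identical after normalization; renamed or paraphrased clones would need a fuzzier similarity measure, so the clone count from a sketch like this is best read as a lower bound.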

An Executable Benchmarking Suite for Tool-Using Agents

Clean-baseline and live-stressed evaluations pick different variants under the same workload in a WebArena Verified study.

Thematic analysis of literature and discourse finds lowered code costs but rising demands on specification, verification, and governance.

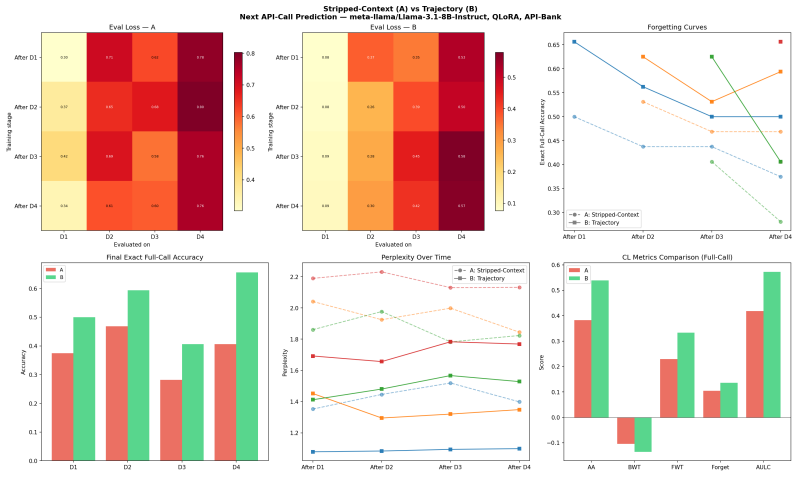

Trajectory Supervision for Continual Tool-Use Learning in LLMs

Retaining full API histories during sequential domain training improves next-call prediction over stripped final-call training in a pilot on

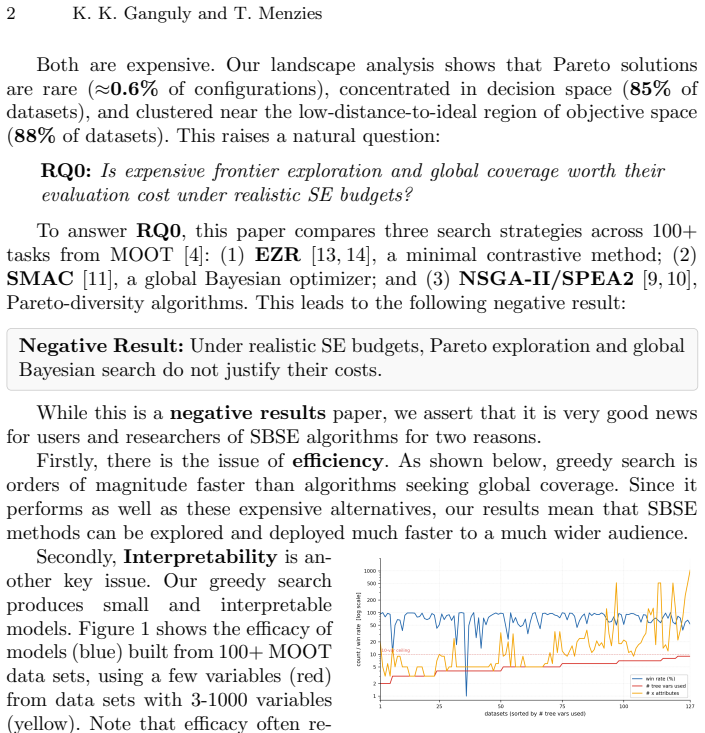

Greedy focus on compact optimal islands runs 1000x faster than full exploration under limited budgets.

ConCovUp: Effective Agent-Based Test Driver Generation for Concurrency Testing

Multi-agent LLM framework with static analysis and backward tracing generates drivers that hit more shared memory pairs in C/C++ code.

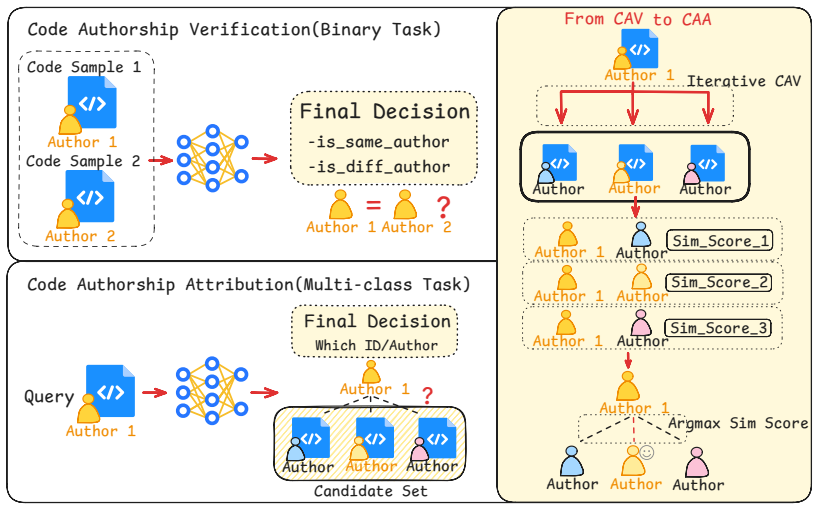

MACAA: Belief-Revision Multi-Agent Reasoning for Open-World Code Authorship Verification

Coordinator reconciles layout, syntax and pattern evidence from four agents to handle unknown authors and mixed languages.

MACAA: Belief-Revision Multi-Agent Reasoning for Open-World Code Authorship Verification

Coordinator integrates layout, lexical, syntactic and pattern signals from four experts to reach 89 percent F1 same-language and 80 percent

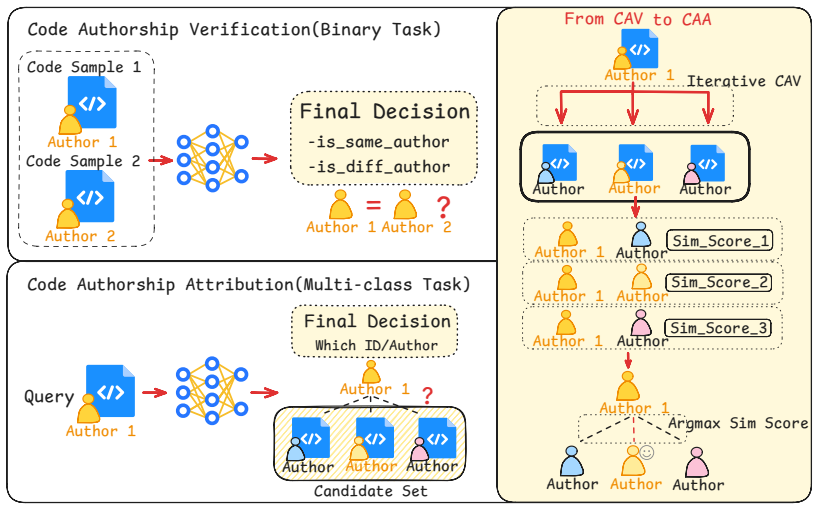

Analysis of 126 stakeholders finds governance mechanisms offer the best trade-off under budget limits for software engineering classes.

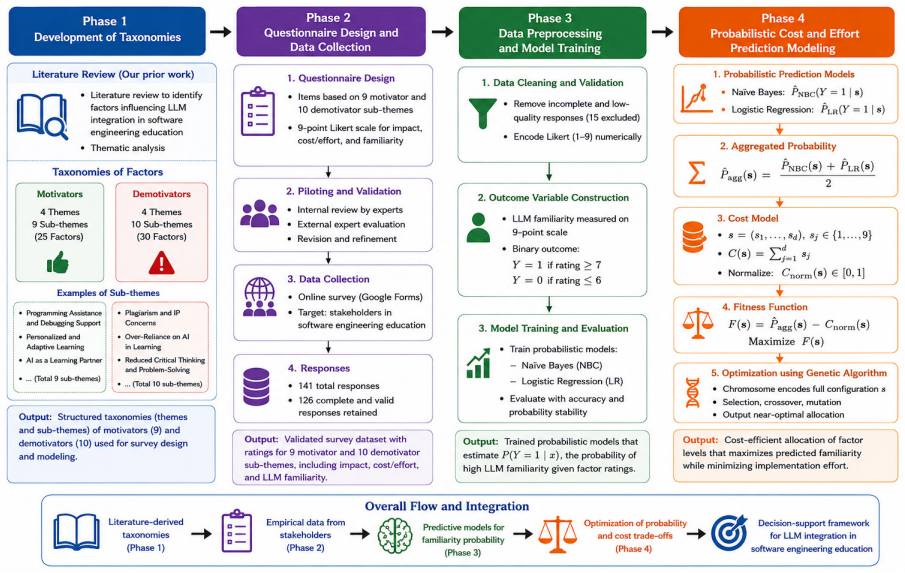

An Execution-Verified Multi-Language Benchmark for Code Semantic Reasoning

TraceEval mechanically confirms every call edge in 10k real programs, letting researchers measure how well models recover runtime structure.

Generating Complex Code Analyzers from Natural Language Questions

The system refines queries iteratively with retrieval and self-tests, letting programmers finish analysis tasks 31 percent faster on large code

Evaluating LLM-Generated Code: A Benchmark and Developer Study

Three-fold method shows developer input reveals production readiness gaps in code from GPT-4.1, DeepSeek-V3 and Claude Opus 4.

ParityFuzz mutates contracts and compares normalized outputs across environments to expose differences that affect migration and security.

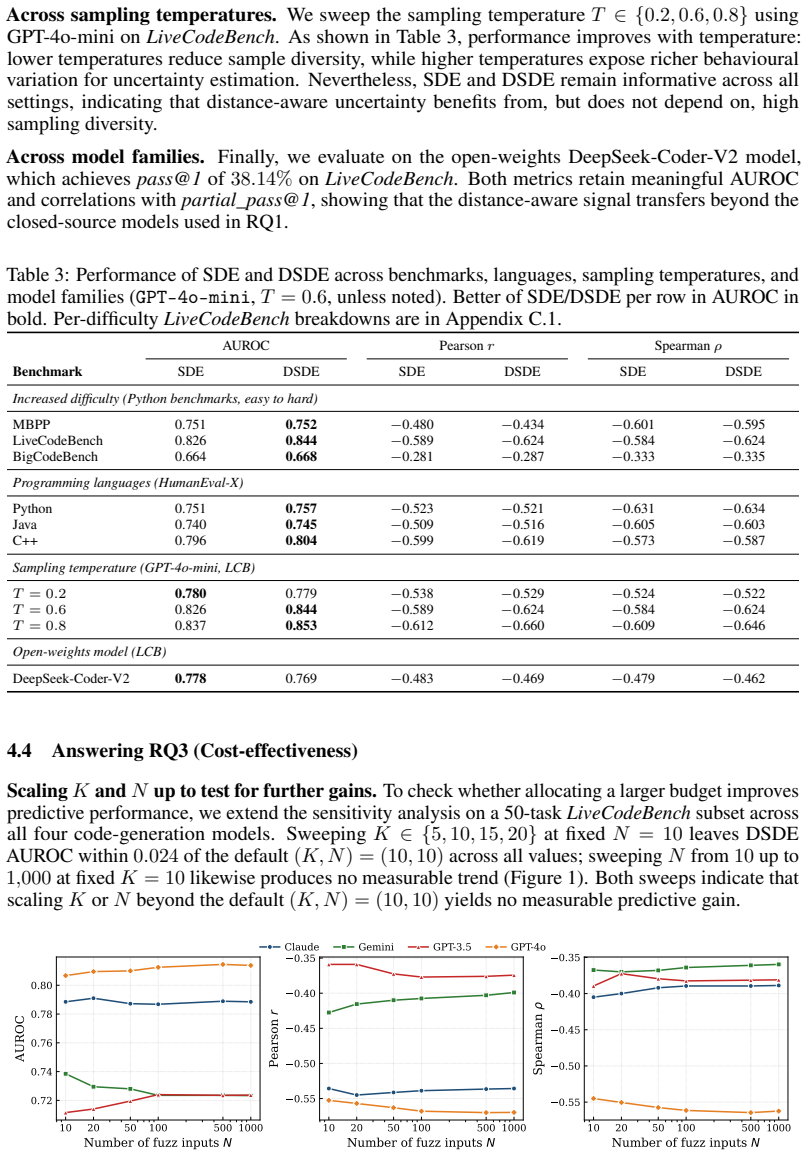

Using Semantic Distance to Estimate Uncertainty in LLM-Based Code Generation

Measuring behavioral severity in sampled programs gives stronger correctness signals and halves runtime across models and languages.

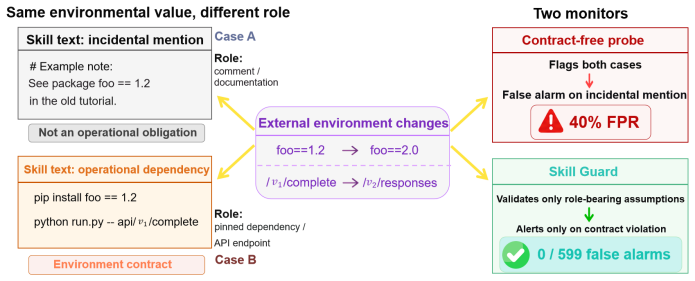

Skill Drift Is Contract Violation: Proactive Maintenance for LLM Agent Skill Libraries

Extracting role-bearing assumptions from skill documents cuts false alarms to zero and raises repair success from 10% to 78%.

Debugging the Debuggers: Failure-Anchored Structured Recovery for Software Engineering Agents

PROBE raises diagnosis accuracy to 65% and recovery to 22% on unresolved cases without changing agent policy or tools.

A Learning Method for Symbolic Systems Using Large Language Models

The method converts LLM reasoning into reusable symbolic code, raising automated success rates on real verification projects without runtime

Semantic Voting: Execution-Grounded Consensus for LLM Code Generation

When no oracle exists, running candidates on generated inputs selects better programs than majority vote on the source text.
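
The contrast in this summary, execution-based agreement versus majority vote over source text, can be illustrated with a minimal sketch. It assumes candidates are plain Python callables and that test inputs are already available (input generation is out of scope here); clustering by identical output signatures is a simple stand-in, not necessarily the paper's consensus rule:

```python
from collections import Counter, defaultdict
from typing import Callable, Sequence


def semantic_vote(candidates: Sequence[Callable], inputs: Sequence) -> int:
    """Return the index of a candidate from the largest behavioral cluster.

    Each candidate runs on every input; its outputs (or the type of any
    exception raised) form a behavioral signature. Candidates with identical
    signatures are treated as equivalent, and the largest cluster wins.
    Ties go to the cluster whose first member appears earliest.
    """
    clusters = defaultdict(list)
    for idx, fn in enumerate(candidates):
        signature = []
        for x in inputs:
            try:
                signature.append(("ok", repr(fn(x))))
            except Exception as exc:  # a crash is part of the observed behavior
                signature.append(("error", type(exc).__name__))
        clusters[tuple(signature)].append(idx)
    best = max(clusters.values(), key=lambda members: (len(members), -members[0]))
    return best[0]


# Five candidate "absolute value" programs; the buggy one appears twice verbatim.
sources = [
    "lambda x: x",
    "lambda x: x",
    "lambda x: abs(x)",
    "lambda x: x if x >= 0 else -x",
    "lambda x: max(x, -x)",
]
text_winner = Counter(sources).most_common(1)[0][0]
# eval is used only to turn the demo strings into functions.
semantic_winner = sources[semantic_vote([eval(s) for s in sources], [-3, 0, 5])]
print("text majority:", text_winner)      # lambda x: x
print("semantic vote:", semantic_winner)  # lambda x: abs(x)
```

Here text majority picks the duplicated buggy program, while the three textually different but equivalent implementations agree on every input and win the semantic vote.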

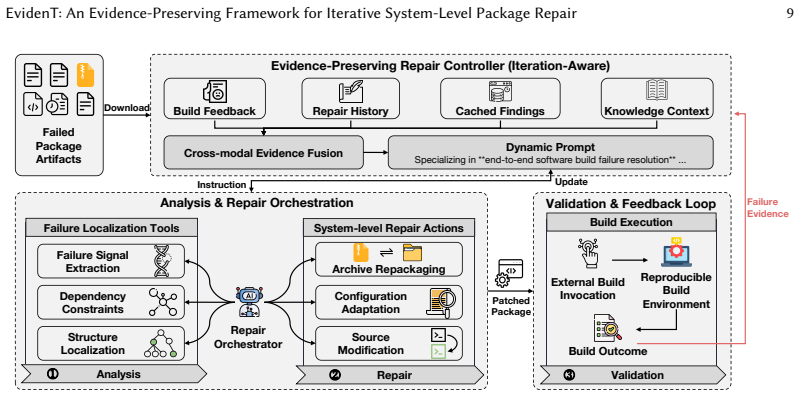

EvidenT: An Evidence-Preserving Framework for Iterative System-Level Package Repair

By retaining all repair history and using external build feedback, it doubles the success rate of agentic baselines.

VeriContest: A Competitive-Programming Benchmark for Verifiable Code Generation

Benchmark of 946 problems shows specification and proof writing as the central barriers to verifiable AI-generated software.

A Dataset of Agentic AI Coding Tool Configurations

From 4,738 open repositories, it tracks how developers guide tools like Claude Code and Cursor with context files and rules.

On an AI-only network their discussions stress security, trust and workflows but rarely include code artifacts or error reproductions that

Collaborator or Assistant? How AI Coding Agents Partition Work Across Pull Request Lifecycles

Study of 29,585 cases shows collaborator tools let agents drive work while approval stays human across all tools.

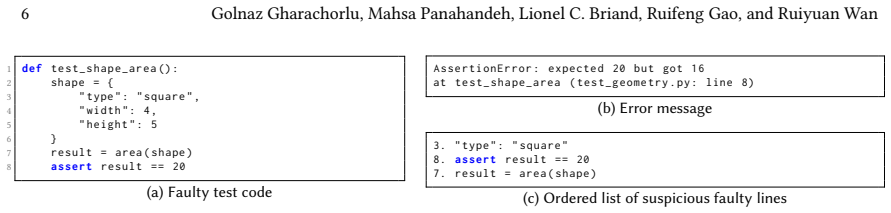

Similar Pattern Annotation via Retrieval Knowledge for LLM-Based Test Code Fault Localization

SPARK pulls matching CI cases to mark likely buggy lines, raising accuracy on multi-fault tests without added cost.

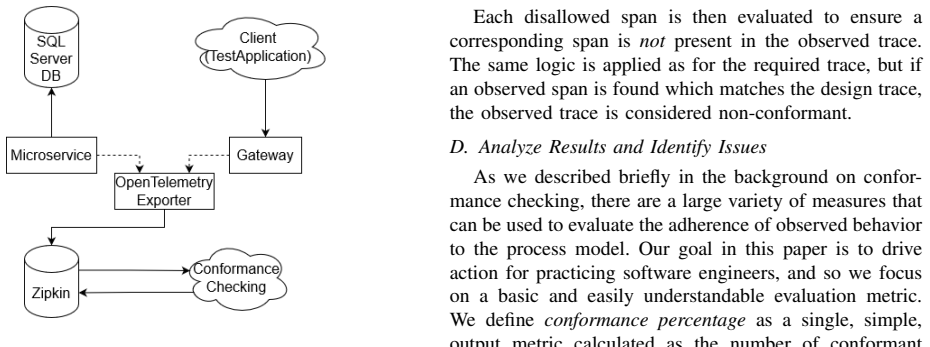

Evaluating Design Conformance Through Trace Comparison

Distributed system traces from execution can be aligned with design traces to give a percentage that tracks how well code stays true to its

Mazocarta: A Seeded Procedural Deckbuilder for Instrumented Game Development

Mazocarta keeps interactive sessions, simulations, and automated checks on a single deterministic core to avoid drift.

Unsafe by Flow: Uncovering Bidirectional Data-Flow Risks in MCP Ecosystem

Protocol-specific static analysis reveals recurring data-flow risks missed by existing tools across 15k real-world repositories.

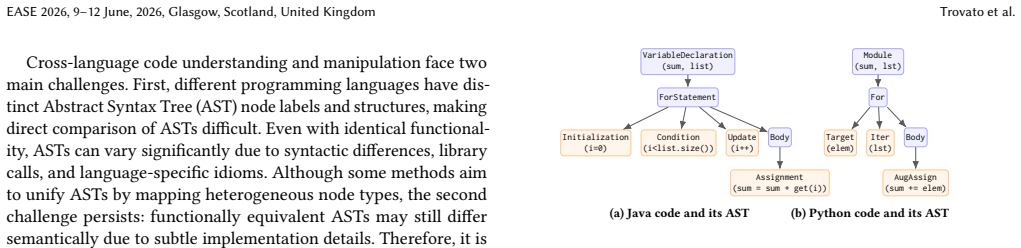

Functionally equivalent snippets from different languages are placed near each other in vector space, raising clone-detection F1 to 99.93%

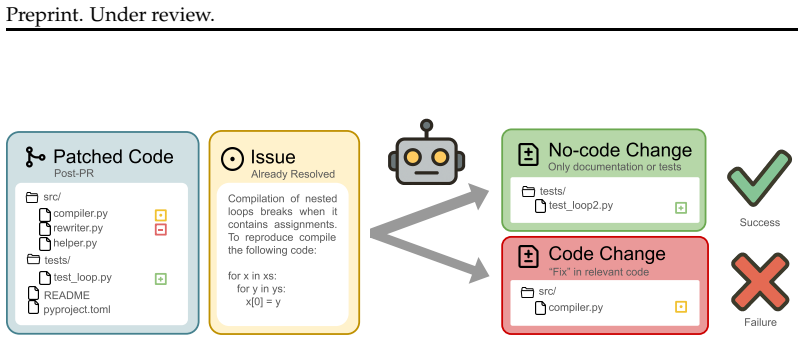

Coding Agents Don't Know When to Act

FixedBench tests show models default to changes even when none are needed, highlighting an action bias in current training.

Lifting semantics into knowledge graphs improves CVE coverage and cuts false positives on real ICS hardware from multiple vendors.

SARC: A Governance-by-Architecture Framework for Agentic AI Systems

Runtime checks cut soft overages by 89.5% versus policy-as-code and extend to multi-agent trace trees.

The AI-Native Large-Scale Agile Software Development Manifesto

Six principles aim to replace meetings and documents with parallel, intent-driven, AI-orchestrated processes.

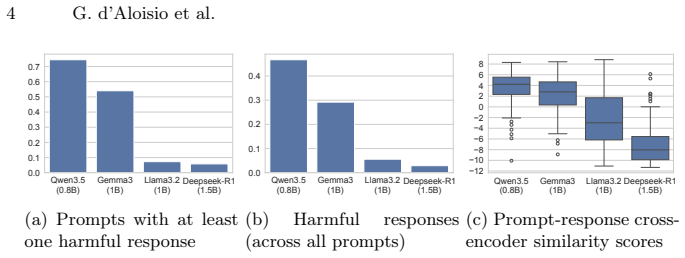

SafeTune: Search-based Harmfulness Minimisation for Large Language Models

Hyperparameter and prompt search on Qwen3.5 0.8B yields large drops in harm and gains in relevance.

Characterizing and Mitigating False-Positive Bug Reports in the Linux Kernel

Study of 2,006 reports finds false positives consume effort comparable to real bugs, concentrated in file systems and drivers.

Do not copy and paste! Rewriting strategies for code retrieval

Full transcription of queries and snippets adds up to 0.51 NDCG@10; corpus-only changes degrade results in 62% of cases; entropy shift flags

System Test Generation for Virtual Reality Applications using Scenario Models

UltraInstinctVR generates and runs system tests on ten open-source applications, beating prior automated tools in coverage and unique bug fl

Can LLMs Solve Science or Just Write Code? Evaluating Quantum Solver Generation

Stronger models still produce numerically inaccurate outputs instead of crashes, limiting direct use for science.

Can LLMs Solve Science or Just Write Code? Evaluating Quantum Solver Generation

Tests across five problem families show execution errors drop as models improve, yet numerical inaccuracies and extra compute remain.

MASPrism: Lightweight Failure Attribution for Multi-Agent Systems Using Prefill-Stage Signals

MASPrism ranks sources of failure in long traces by extracting negative log-likelihood and attention weights before any output is generated.

Ten-run evaluation shows 0.034 kappa gain only for Claude Haiku; all models over-predict negative feedback while Gemini shows highest var

Boosting Automatic Java-to-Cangjie Translation with Multi-Stage LLM Training and Error Repair

Syntactic datasets and iterative repair overcome scarce parallel examples for better semantic alignment.

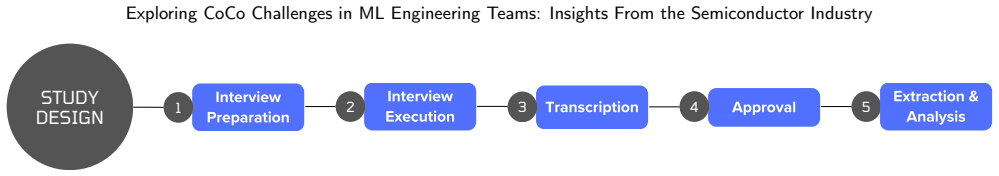

Exploring CoCo Challenges in ML Engineering Teams: Insights From the Semiconductor Industry

Interviews in one global company reveal 16 collaboration issues amplified by hardware constraints, with unclear responsibilities as the top issue.

Low-code and no-code with BESSER to create and deploy smart web applications

BESSER models smart applications at a high level and generates runnable code, avoiding the restrictions of commercial platforms.

Execution Envelopes: A Shared Admission Contract for Backend AI Execution Requests

An execution envelope records who asked for what and what was granted, letting shared behavior attach without rebuilding per service.

RepoZero: Can LLMs Generate a Code Repository from Scratch?

New benchmark turns generation into reproduction from API specs and shows current models fall short of full software development demands.

From Assistance to Agency: Rethinking Autonomy and Control in CI/CD Pipelines

Current systems limit agents to localized data-plane actions and rely on external governance for safety, creating an urgent need for control

Computer Use at the Edge of the Statistical Precipice

Blind execution of recorded actions equals the source agent's pass@k rate on static deterministic tests.
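
For reference when reading the pass@k comparison: the summary does not state which estimator the study uses, but a widely used unbiased one (introduced alongside the HumanEval benchmark) samples n attempts per task, counts the c that pass, and computes 1 - C(n-c, k) / C(n, k). A minimal sketch, with parameter names chosen here for illustration:

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate: 1 - C(n - c, k) / C(n, k).

    n: attempts sampled for one task
    c: attempts among them that passed
    k: attempt budget being scored
    """
    if not (0 <= c <= n and 1 <= k <= n):
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        return 1.0  # every subset of k attempts contains at least one pass
    return 1.0 - comb(n - c, k) / comb(n, k)


print(pass_at_k(10, 3, 1))  # about 0.3
print(pass_at_k(10, 3, 5))  # about 0.9167
```

A benchmark-level score is the mean of this value over tasks; with k = 1 it reduces to the plain pass rate c / n.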

SmellBench: Evaluating LLM Agents on Architectural Code Smell Repair

On scikit-learn, the best agent resolves 47.7 percent, while the most aggressive adds 140 new smells.

SmellBench: Evaluating LLM Agents on Architectural Code Smell Repair

A benchmark on a major Python library shows agents spot false alarms accurately but repair attempts often create new design problems across

Guidelines for Cultivating a Sense of Belonging to Reduce Developer Burnout

Guidelines synthesized from prior research on belongingness characteristics and factors to help reduce developer burnout in software…
