
Accelerating Langevin Monte Carlo via Efficient Stochastic Runge--Kutta Methods beyond Log-Concavity

Pith reviewed 2026-05-11 03:01 UTC · model grok-4.3

The pith

A stochastic Runge-Kutta scheme for overdamped Langevin dynamics achieves a uniform-in-time W2 error bound of order O(d^{3/2} h^{3/2}) under only log-smoothness.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The proposed efficient stochastic Runge-Kutta discretization of the overdamped Langevin dynamics produces a sampling algorithm whose law converges to the target at a uniform-in-time rate of order O(d^{3/2} h^{3/2}) in the 2-Wasserstein metric, provided only that the potential satisfies a log-smoothness condition; the same rate had been established earlier only under the stronger assumption of log-concavity.
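For orientation, the objects in this claim can be written out as follows. This is a sketch under the usual convention that the target is $\pi \propto e^{-V}$ on $\mathbb{R}^d$ and that the overdamped Langevin dynamics carries a $\sqrt{2}$ noise coefficient; the paper may normalize differently, and the symbols $\pi$, $V$, $W_t$, $Y_k$ and $\Gamma(\mu,\nu)$ (the set of couplings of $\mu$ and $\nu$) are notation assumed here rather than taken from the source.

$$
\mathrm{d}X_t = -\nabla V(X_t)\,\mathrm{d}t + \sqrt{2}\,\mathrm{d}W_t,
\qquad
\mathcal{W}_2(\mu,\nu) = \Big( \inf_{\gamma \in \Gamma(\mu,\nu)} \int |x-y|^2 \,\mathrm{d}\gamma(x,y) \Big)^{1/2}.
$$

One reading of the uniform-in-time claim, with $Y_k$ denoting the $k$-th iterate of the scheme at step size $h$ and $C$ a constant independent of $k$, $d$ and $h$, is schematically

$$
\sup_{k \ge 0}\, \mathcal{W}_2\big(\mathrm{Law}(Y_k),\, \mathrm{Law}(X_{kh})\big) \le C\, d^{3/2} h^{3/2}.
$$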

What carries the argument

The efficient stochastic Runge-Kutta integrator of strong order 1.5, which approximates the overdamped Langevin SDE using two gradient evaluations per step and supplies the higher-order local error terms required for the non-log-concave analysis.
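As a point of comparison, the first-order baseline that higher-order schemes aim to improve on can be sketched in a few lines. This is the plain Euler-Maruyama (unadjusted Langevin) step with one gradient evaluation per iteration, not the paper's two-evaluation Runge-Kutta scheme, whose coefficients are not given on this page. The function names `lmc_step` and `run_lmc`, the $\sqrt{2h}$ noise scaling, and the standard Gaussian test target are assumptions made for illustration.

```python
import math
import random


def lmc_step(x, grad_v, h, rng):
    """One Euler-Maruyama step: x - h * grad_v(x) + sqrt(2h) * xi, xi ~ N(0, I)."""
    g = grad_v(x)  # the single gradient evaluation of this step
    scale = math.sqrt(2.0 * h)
    return [xi - h * gi + scale * rng.gauss(0.0, 1.0) for xi, gi in zip(x, g)]


def run_lmc(x0, grad_v, h, n_steps, seed=0):
    """Iterate lmc_step n_steps times from x0 with a seeded generator."""
    rng = random.Random(seed)
    x = list(x0)
    for _ in range(n_steps):
        x = lmc_step(x, grad_v, h, rng)
    return x


if __name__ == "__main__":
    # Illustrative target: standard Gaussian, V(x) = |x|^2 / 2, so grad V(x) = x.
    print(run_lmc([3.0, -3.0], lambda x: list(x), h=0.05, n_steps=1000))
```

A higher-order integrator would replace the body of `lmc_step` while keeping the same interface, which makes per-step gradient cost easy to compare across schemes.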

Load-bearing premise

The potential V (the negative logarithm of the target density) has a gradient that is globally Lipschitz, with a constant bounded uniformly over the whole space.
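Written out, and assuming the target is $\pi \propto e^{-V}$ with $L$ denoting the Lipschitz constant (both symbols are notation assumed here), the premise is the standard gradient-Lipschitz condition:

$$
|\nabla V(x) - \nabla V(y)| \le L\,|x-y| \quad \text{for all } x, y \in \mathbb{R}^d.
$$

For twice-differentiable $V$ this is equivalent to $-L\,I \preceq \nabla^2 V(x) \preceq L\,I$, so the Hessian may have negative eigenvalues. Log-concavity would additionally require $\nabla^2 V(x) \succeq 0$, which is the assumption the paper claims to remove.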

What would settle it

A numerical computation of the W2 distance after a fixed number of steps on a family of non-log-concave Gaussian-mixture targets with growing dimension d would refute the claimed scaling if the observed exponent on d exceeds 3/2, since the claim is an upper bound.
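The bookkeeping for such a test can be sketched as follows. This is a minimal illustration under stated assumptions: `w2_empirical_1d` uses the sorted (quantile) coupling, which gives the W2 distance between two equal-size empirical samples in one dimension only, so it would apply to a one-dimensional marginal rather than the full d-dimensional law; `fit_exponent` is an ordinary least-squares slope on log-log data. Both function names are hypothetical, and the example data are synthetic, not results from the paper.

```python
import math


def w2_empirical_1d(a, b):
    """W2 between two equal-size 1D samples via the sorted (quantile) coupling."""
    if len(a) != len(b) or not a:
        raise ValueError("samples must be non-empty and of equal size")
    sa, sb = sorted(a), sorted(b)
    return math.sqrt(sum((u - v) ** 2 for u, v in zip(sa, sb)) / len(sa))


def fit_exponent(dims, errors):
    """Least-squares slope of log(error) against log(d)."""
    xs = [math.log(d) for d in dims]
    ys = [math.log(e) for e in errors]
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return sxy / sxx


if __name__ == "__main__":
    # Synthetic errors that scale exactly like d^{3/2}; real runs would
    # supply measured W2 estimates at a fixed step size h instead.
    dims = [2, 4, 8, 16, 32]
    errors = [0.01 * d ** 1.5 for d in dims]
    print(fit_exponent(dims, errors))
```

A fitted slope persistently above 3/2 at fixed h would count against the bound; a smaller slope would be consistent with it.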

Figures

read the original abstract

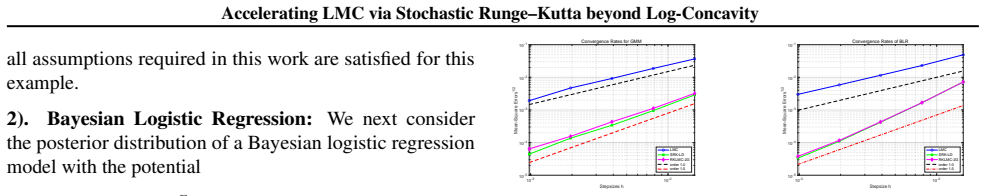

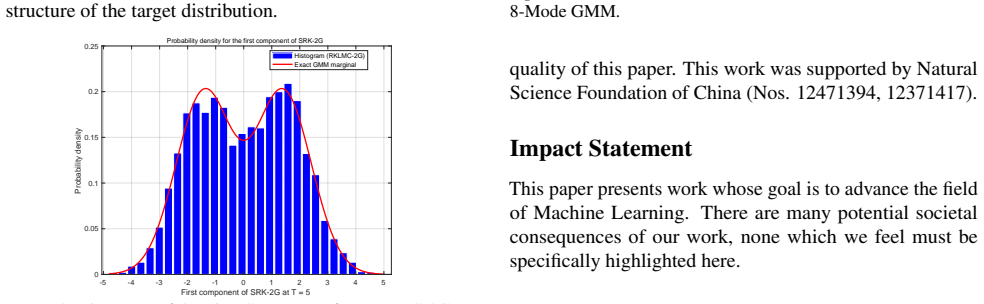

Sampling from a high-dimensional probability distribution is a fundamental algorithmic task arising in wide-ranging applications across multiple disciplines, including scientific computing, computational statistics and machine learning. Langevin Monte Carlo (LMC) algorithms are among the most widely used sampling methods in high-dimensional settings. This paper introduces a novel higher-order and Hessian-free LMC sampling algorithm based on an efficient stochastic Runge--Kutta method of strong order $1.5$ for the overdamped Langevin dynamics. In contrast to the existing Runge--Kutta type LMC (Li et al., 2019) involved with three gradient evaluations, the newly proposed algorithm is computationally cheaper and requires only two gradient evaluations for one iteration. Under certain log-smooth conditions, non-asymptotic error bounds of the proposed algorithms are analyzed in $\mathcal{W}_2$-distance. In particular, a uniform-in-time convergence rate of order $O(d ^{\frac32} h^{\frac32})$ is derived in a non-log-concave setting, matching the convergence rate proved in the aforementioned work but under the log-concavity condition. Numerical experiments are finally presented to demonstrate the effectiveness of the new sampling algorithm.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces an efficient stochastic Runge-Kutta discretization of the overdamped Langevin dynamics requiring only two gradient evaluations per iteration while achieving strong order 1.5. Under log-smooth conditions on the potential, it derives non-asymptotic W_2 error bounds of order O(d^{3/2} h^{3/2}) that are uniform in time, extending the rate previously obtained by Li et al. (2019) to non-log-concave targets.

Significance. If the uniform-in-time W_2 bounds hold rigorously under the stated log-smoothness without hidden dissipativity assumptions, the result would be significant: it supplies a computationally cheaper higher-order LMC method whose non-asymptotic analysis applies to a broader class of targets than existing log-concave analyses, while preserving the same dimension-and-step-size dependence.

major comments (1)

- The central uniform-in-time W_2 bound of order O(d^{3/2} h^{3/2}) (stated in the abstract and presumably proved in the main theorem) is claimed under only 'certain log-smooth conditions' in a non-log-concave setting. Log-smoothness controls local Lipschitz constants but does not by itself yield global contraction or moment bounds for the continuous overdamped Langevin flow; standard coupling or Gronwall arguments for uniform-in-time discretization error therefore require an explicit dissipativity condition such as ⟨∇V(x), x⟩ ≥ a|x|^2 − b. The manuscript must either add this assumption explicitly or provide a new contraction argument that closes without it; otherwise the claimed rate cannot be verified from the given hypotheses.
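A worked example may help show that the dissipativity condition is compatible with non-log-concave, log-smooth targets. The target below, a symmetric two-component Gaussian mixture with means $\pm m$ and identity covariance, is chosen here for illustration and is not taken from the manuscript.

$$
\pi(x) \propto e^{-|x-m|^2/2} + e^{-|x+m|^2/2}
\;\Longrightarrow\;
V(x) = \tfrac12 |x|^2 - \log\cosh\langle m, x\rangle + \text{const},
\qquad
\nabla V(x) = x - m \tanh\langle m, x\rangle .
$$

Since $t \tanh t \le |t|$ and $|m||x| \le \tfrac12 |x|^2 + \tfrac12 |m|^2$,

$$
\langle \nabla V(x), x\rangle = |x|^2 - \langle m, x\rangle \tanh\langle m, x\rangle
\ge |x|^2 - |m|\,|x|
\ge \tfrac12 |x|^2 - \tfrac12 |m|^2 ,
$$

so the condition holds with $a = \tfrac12$ and $b = \tfrac12 |m|^2$. The Hessian is $\nabla^2 V(x) = I - m m^{\top} \operatorname{sech}^2\langle m, x\rangle$, whose eigenvalues lie in $[\,1 - |m|^2,\, 1\,]$. The gradient is therefore globally Lipschitz with constant at most $\max(1, |m|^2 - 1)$, while for $|m| > 1$ the Hessian at $x = 0$ has the negative eigenvalue $1 - |m|^2$, so $V$ is not convex.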

minor comments (2)

- The abstract refers to 'certain log-smooth conditions' without listing them; the introduction or assumption section should state the precise regularity and growth hypotheses on V (e.g., Lipschitz gradient constant L, any moment or dissipativity requirements) so that the scope of the theorem is immediately clear.

- Numerical experiments should report wall-clock time or gradient-evaluation counts alongside W_2 or ESS metrics to quantify the claimed computational saving relative to the three-evaluation Runge-Kutta scheme of Li et al. (2019).
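One lightweight way to report gradient-evaluation counts is to wrap the gradient oracle in a counter, so that any sampler built on it is measured the same way. The sketch below is illustrative only: `CountingGradient` is a hypothetical helper, and the loop merely stands in for a scheme that calls the gradient twice per step.

```python
class CountingGradient:
    """Wrap a gradient function and count how many times it is called."""

    def __init__(self, grad_fn):
        self._grad_fn = grad_fn
        self.calls = 0

    def __call__(self, x):
        self.calls += 1
        return self._grad_fn(x)


if __name__ == "__main__":
    grad = CountingGradient(lambda x: list(x))  # grad V(x) = x as a stand-in
    for _ in range(5):
        grad([1.0, 2.0])  # first evaluation of a hypothetical two-stage step
        grad([0.5, 1.0])  # second evaluation of the same step
    print(grad.calls)  # 10
```

Reporting error against `calls` rather than against iteration count puts two-evaluation and three-evaluation schemes on a common cost axis.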

Simulated Author's Rebuttal

We thank the referee for their careful reading and constructive feedback. We address the single major comment below and have revised the manuscript to strengthen the presentation of the assumptions.


- Referee: The central uniform-in-time W_2 bound of order O(d^{3/2} h^{3/2}) (stated in the abstract and presumably proved in the main theorem) is claimed under only 'certain log-smooth conditions' in a non-log-concave setting. Log-smoothness controls local Lipschitz constants but does not by itself yield global contraction or moment bounds for the continuous overdamped Langevin flow; standard coupling or Gronwall arguments for uniform-in-time discretization error therefore require an explicit dissipativity condition such as ⟨∇V(x), x⟩ ≥ a|x|^2 − b. The manuscript must either add this assumption explicitly or provide a new contraction argument that closes without it; otherwise the claimed rate cannot be verified from the given hypotheses.

Authors: We thank the referee for highlighting this point. Our proof of the uniform-in-time W_2 bound proceeds via standard coupling and Gronwall estimates on the continuous overdamped Langevin flow. While log-smoothness supplies the local Lipschitz control for the discretization error, the global moment bounds and contraction indeed rely on a dissipativity condition of the form ⟨∇V(x), x⟩ ≥ a|x|^2 − b (a > 0). This condition was used implicitly in our derivations to close the estimates in the non-log-concave regime, but we agree it was not stated with sufficient clarity among the “certain log-smooth conditions.” We will revise the manuscript by (i) explicitly listing the dissipativity assumption in the main theorem and assumptions section, (ii) updating the abstract and introduction to reflect the precise hypotheses, and (iii) adding a brief remark that the condition is standard for non-log-concave targets and compatible with the claimed rate. No new contraction argument is required; the existing proof carries through once the assumption is stated. This clarification does not alter the algorithmic contribution or the O(d^{3/2} h^{3/2}) rate.

Revision: yes

Circularity Check

No circularity: convergence rate derived from discretization analysis under explicit assumptions


The paper presents a new stochastic Runge-Kutta discretization for overdamped Langevin dynamics and derives non-asymptotic W2 bounds under log-smoothness conditions. The uniform-in-time O(d^{3/2} h^{3/2}) rate is obtained by extending the analysis of Li et al. (2019) to the non-log-concave case; the extension relies on the paper's own error estimates and moment bounds rather than re-using a fitted quantity or self-referential definition. No step reduces the claimed rate to an input by construction, and the cited prior work is external. The derivation chain is self-contained once the log-smoothness and growth conditions are granted.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption: The target distribution satisfies log-smooth conditions

Reference graph

Works this paper leans on

- [1] P. Langley. Proceedings of the 17th International Conference on Machine Learning (ICML 2000). 2000.
- [2] T. M. Mitchell. The Need for Biases in Learning Generalizations. 1980.
- [3] M. J. Kearns.
- [4] Machine Learning: An Artificial Intelligence Approach, Vol. I. 1983.
- [5] R. O. Duda, P. E. Hart, and D. G. Stork. Pattern Classification. 2000.
- [6] Suppressed for Anonymity.
- [7] A. Newell and P. S. Rosenbloom. Mechanisms of Skill Acquisition and the Law of Practice. Cognitive Skills and Their Acquisition. 1981.
- [8] A. L. Samuel. Some Studies in Machine Learning Using the Game of Checkers. IBM Journal of Research and Development. 1959.
- [9] Louis Grenioux, Maxence Noble, and Gabri. International Conference on Machine Learning. 2024.
- [10] Ariel Neufeld and Ying Zhang.
- [14] Lei Li, Chen Wang, and Mengchao Wang.
- [15] Jason M. Altschuler and Sinho Chewi. ArXiv.
- [16] Xiaojie Wang and Bin Yang.
- [17] Murat A. Erdogdu and Rasa Hosseinzadeh. 2021.
- [19] Christophe Andrieu, Nando De Freitas, Arnaud Doucet, and Michael I. Jordan. 2003.
- [20] Simon L. Cotter, Gareth O. Roberts, Andrew M. Stuart, and David White. 2013.
- [21] Xiaojie Wang and Bin Yang. arXiv:2509.25630.
- [23] R. SIAM Journal on Numerical Analysis. 2010.
- [24] Yang Song, Jascha Sohl-Dickstein, Diederik P. Kingma, Abhishek Kumar, Stefano Ermon, and Ben Poole.
- [25] W. K. Hastings.
- [26] Nicholas Metropolis, Arianna W. Rosenbluth, Marshall N. Rosenbluth, Augusta H. Teller, and Edward Teller. 1953.
- [27] Siddhartha Chib and Edward Greenberg. 1995.
- [28] Feng-Yu Wang. The Annals of Probability. 2011.
- [29] Felix Otto and C. Villani. Journal of Functional Analysis. 2000.
- [32] Alireza Mousavi-Hosseini, Tyler K. Farghly, Ye He, Krishna Balasubramanian, and Murat A. Erdogdu. 2023.
- [33] Bin Yang and Xiaojie Wang.
- [36] Przyby. Applied Numerical Mathematics. 2014.
- [37] Arnulf Jentzen and Andreas Neuenkirch. 2009.
- [39] Thomas Daun. Journal of Complexity. 2011. https://doi.org/10.1016/j.jco.2010.07.002
- [40] Gilbert Stengle. Numer. Math. 1995. doi:10.1007/s002110050113
- [41] Gilbert Stengle. Appl. Math. Lett. 1990. doi:10.1016/0893-9659(90)90040-I
- [43] R. Infinite Dimensional Analysis, Quantum Probability and Related Topics. 2010.
- [46] Sinho Chewi, Murat A. Erdogdu, Mufan Li, Ruoqi Shen, and Matthew S. Zhang. 2024.
- [47] Ruoqi Shen and Yin Tat Lee.
- [48] Lu Yu, Avetik Karagulyan, and Arnak Dalalyan.
- [49] Gareth O. Roberts and Richard L. Tweedie.
- [50] Christian P. Robert and George Casella. 1999.
- [52] Pan Xu, Jinghui Chen, Difan Zou, and Quanquan Gu.
- [55] Ariel Neufeld and Ying Zhang. arXiv:2405.05679.
- [56] Ariel Neufeld, Ying Zhang, and others. 2025.
- [57] Mateusz B. Majka and Mijatovi. Annals of Applied Probability. 2020.
- [58] Xiang Li, Feng-Yu Wang, and Lihu Xu.
- [59] Pag. The Annals of Applied Probability. 2023.
- [60] Wenlong Mou, Nicolas Flammarion, Martin J. Wainwright, and Peter L. Bartlett. Bernoulli.
- [61] Ruilin Li, Hongyuan Zha, and Molei Tao.
- [62] Xiang Cheng, Niladri S. Chatterji, Yasin Abbasi-Yadkori, Peter L. Bartlett, and Michael I. Jordan. arXiv preprint arXiv:1805.01648.
- [63] Radford Neal.
- [65] Sinho Chewi, Chen Lu, Kwangjun Ahn, Xiang Cheng, Thibaut Le Gouic, and Philippe Rigollet. 2021.
- [66] Alain Durmus and Éric Moulines. Ann. Appl. Probab. 2017.
- [67] Sham Machandranath Kakade.
- [70] Yang Song and Stefano Ermon.
- [71] Sotirios Sabanis and Ying Zhang.
- [72] Xuechen Li, Yi Wu, Lester Mackey, and Murat A. Erdogdu.
- [73] Arnak S. Dalalyan and Avetik Karagulyan. 2019.
- [75] Sqrt(d) Dimension Dependence of Langevin Monte Carlo.
- [76] Alain Durmus and Éric Moulines.
- [77] Alain Durmus, Szymon Majewski, and B. Miasojedow. Journal of Machine Learning Research.
- [78] Xiang Cheng and Peter Bartlett. 2018.
