Recognition: 2 theorem links

Bias Correction for Semiparametric Regression Models

Pith reviewed 2026-05-12 01:12 UTC · model grok-4.3

The pith

A simulation-based method corrects finite-sample bias in semiparametric regression models without inflating variance.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

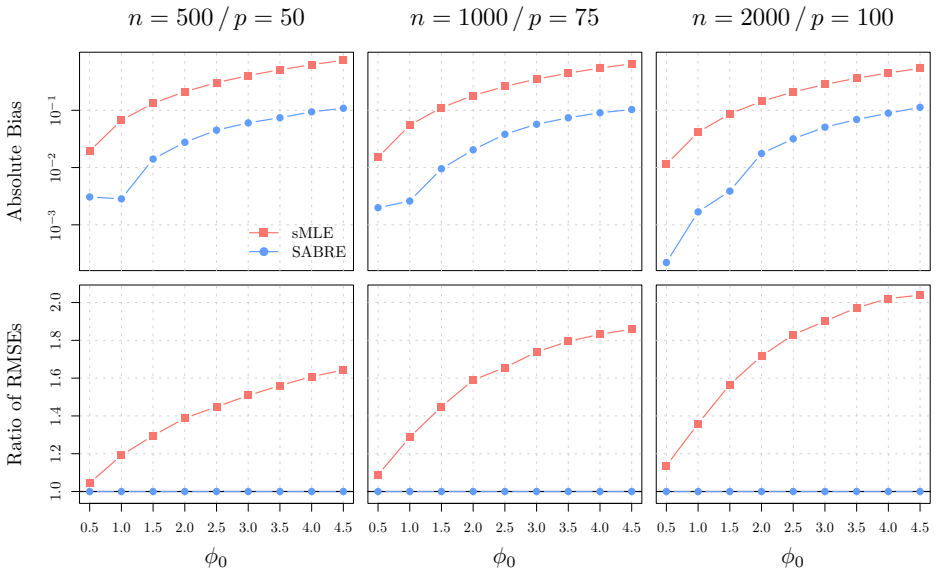

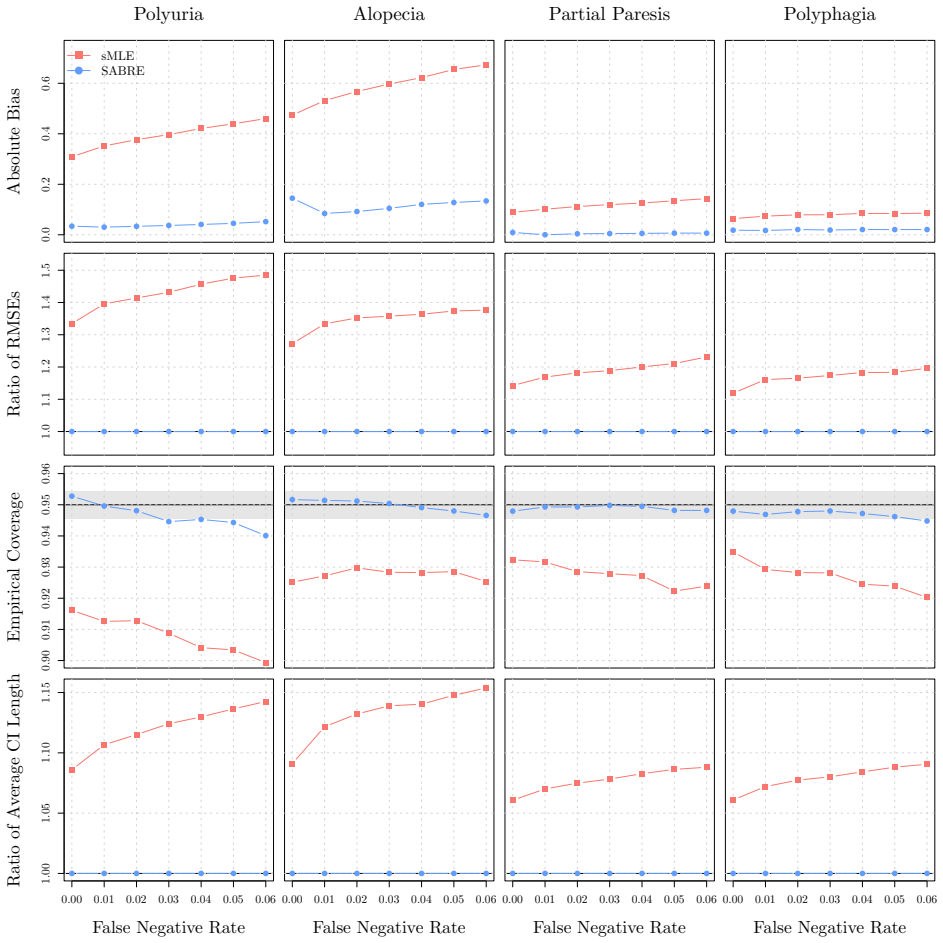

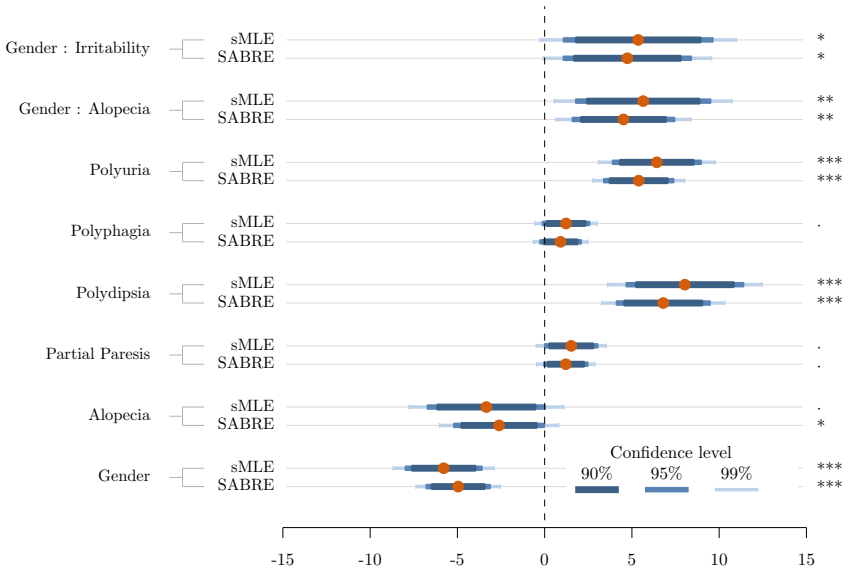

SABRE is a simulation-based bias correction framework for the model class f{Y | x^T β + m(z), φ}, in which the dimension p of β may diverge. For the subclass of generalized partially linear models (GPLMs), the paper establishes asymptotic properties showing that SABRE reduces the bias of β and φ without inflating variance; the underlying principle may be adapted more generally.
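For reference, a GPLM with canonical link can be written in the standard exponential-family form below. This is the textbook parameterisation (taking the dispersion function a(φ) = φ for simplicity), assumed here rather than copied from the paper, whose notation may differ:

$$
f(y \mid \theta, \phi) = \exp\left\{ \frac{y\theta - b(\theta)}{\phi} + c(y, \phi) \right\},
\qquad \theta = \eta = \mathbf{x}^{\rm T}\boldsymbol{\beta} + m(z),
$$

$$
E(Y \mid \mathbf{x}, z) = b'(\eta), \qquad \operatorname{Var}(Y \mid \mathbf{x}, z) = \phi\, b''(\eta).
$$

With b(θ) = θ²/2 this reduces to the Gaussian partially linear model Y = x^T β + m(z) + ε with ε ~ N(0, φ).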

What carries the argument

SABRE, a simulation-based bias correction procedure that estimates the finite-sample bias by simulating responses under the fitted model and subtracts the estimated bias from the initial estimator.
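A minimal sketch of the simulate-and-subtract idea, under loudly simplified assumptions: a Gaussian linear model with one covariate (a GPLM with identity link and m ≡ 0), and the dispersion MLE φ̂ = RSS/n, whose expectation is φ(n − 2)/n. The function names (`fit_linear`, `simulate`, `bias_corrected_phi`) are hypothetical; this is not the paper's SABRE implementation, which must also handle a diverging-dimensional β and a nonparametric m(·).

```python
import random
import statistics


def fit_linear(x, y):
    """Least-squares fit of y = b0 + b1*x; returns (b0, b1, phi_hat) with phi_hat = RSS/n."""
    n = len(x)
    xbar = sum(x) / n
    ybar = sum(y) / n
    sxx = sum((xi - xbar) ** 2 for xi in x)
    sxy = sum((xi - xbar) * (yi - ybar) for xi, yi in zip(x, y))
    b1 = sxy / sxx
    b0 = ybar - b1 * xbar
    rss = sum((yi - b0 - b1 * xi) ** 2 for xi, yi in zip(x, y))
    return b0, b1, rss / n  # Gaussian MLE of phi; its mean is phi * (n - 2) / n


def simulate(x, b0, b1, phi, rng):
    """Draw responses from the fitted Gaussian model y = b0 + b1*x + N(0, phi)."""
    sd = phi ** 0.5
    return [b0 + b1 * xi + rng.gauss(0.0, sd) for xi in x]


def bias_corrected_phi(x, y, n_sim=500, seed=0):
    """One-step simulation-based bias correction of the naive dispersion estimate."""
    rng = random.Random(seed)
    b0, b1, phi_hat = fit_linear(x, y)
    # Refit the model on n_sim datasets simulated under the fitted parameters.
    sims = [fit_linear(x, simulate(x, b0, b1, phi_hat, rng))[2] for _ in range(n_sim)]
    bias_hat = statistics.fmean(sims) - phi_hat  # Monte Carlo bias estimate at phi_hat
    return phi_hat, phi_hat - bias_hat


# Usage on one made-up dataset (true b0 = 1, b1 = 2, phi = 1, n = 12).
rng = random.Random(1)
x = [i / 10 for i in range(12)]
y = [1.0 + 2.0 * xi + rng.gauss(0.0, 1.0) for xi in x]
naive, corrected = bias_corrected_phi(x, y)
print(f"naive phi_hat = {naive:.3f}, corrected = {corrected:.3f}")
```

In this toy case the simulated bias is about −2φ̂/n, so the corrected value is roughly φ̂(1 + 2/n), whose expectation is φ(1 − 4/n²): the O(1/n) bias is removed and an O(1/n²) remainder is left.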

Where Pith is reading between the lines

- The simulation principle could extend to semiparametric models outside the generalized partially linear subclass if the simulation step is suitably modified for the nonparametric component.

- Corrected estimators may support more reliable inference in applied domains such as biostatistics where both coefficients and dispersion carry scientific meaning.

- Performance under mild misspecification of the nonparametric function remains an open question that could be tested with targeted simulations.

Load-bearing premise

The data-generating process belongs to the subclass of generalized partially linear models and the simulation step accurately reproduces the finite-sample bias distribution under the true unknown smooth function.

What would settle it

In repeated Monte Carlo simulations drawn from a generalized partially linear model with known true parameters, the SABRE-corrected estimator for β or φ shows no bias reduction or exhibits higher variance than the uncorrected estimator.
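The falsification check above can be prototyped on a toy model before moving to GPLMs. The sketch below assumes a Gaussian linear model with known φ = 1 as a stand-in for a GPLM (all names hypothetical); it repeats the fit over many simulated datasets and reports the Monte Carlo bias and variance of the naive estimate φ̂ = RSS/n and of its one-step simulate-and-subtract correction.

```python
import random
import statistics


def fit_phi(x, y):
    """Least-squares fit of y = b0 + b1*x; returns (b0, b1, RSS/n)."""
    n = len(x)
    xbar = sum(x) / n
    ybar = sum(y) / n
    sxx = sum((xi - xbar) ** 2 for xi in x)
    b1 = sum((xi - xbar) * (yi - ybar) for xi, yi in zip(x, y)) / sxx
    b0 = ybar - b1 * xbar
    rss = sum((yi - b0 - b1 * xi) ** 2 for xi, yi in zip(x, y))
    return b0, b1, rss / n


def draw(x, b0, b1, phi, rng):
    """Simulate y = b0 + b1*x + N(0, phi)."""
    sd = phi ** 0.5
    return [b0 + b1 * xi + rng.gauss(0.0, sd) for xi in x]


def one_step_corrected(x, y, n_sim, rng):
    """Return (naive RSS/n, simulate-and-subtract corrected estimate)."""
    b0, b1, phi_hat = fit_phi(x, y)
    sims = [fit_phi(x, draw(x, b0, b1, phi_hat, rng))[2] for _ in range(n_sim)]
    # phi_hat - (mean(sims) - phi_hat) simplifies to 2*phi_hat - mean(sims).
    return phi_hat, 2.0 * phi_hat - statistics.fmean(sims)


def monte_carlo_check(n=12, phi_true=1.0, reps=300, n_sim=80, seed=2024):
    """Monte Carlo bias and variance of both estimators under a known true phi."""
    rng = random.Random(seed)
    x = [i / n for i in range(n)]
    naive, corrected = [], []
    for _ in range(reps):
        y = draw(x, 1.0, 2.0, phi_true, rng)  # true b0 = 1, b1 = 2 (arbitrary choices)
        a, c = one_step_corrected(x, y, n_sim, rng)
        naive.append(a)
        corrected.append(c)
    return {
        name: {"bias": statistics.fmean(v) - phi_true, "var": statistics.variance(v)}
        for name, v in (("naive", naive), ("corrected", corrected))
    }


results = monte_carlo_check()
for name, summary in results.items():
    print(f"{name:>9}: bias = {summary['bias']:+.3f}, variance = {summary['var']:.3f}")
```

In this toy the correction acts roughly as a rescaling by 1 + 2/n, so at n = 12 the corrected estimator's Monte Carlo variance is expected to come out somewhat larger than the naive one; that excess vanishes as n grows, so a variance comparison of this kind has to be read against the asymptotic order at which "no variance inflation" is claimed.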


Original abstract

We consider a broad class of semiparametric regression models in which the conditional distribution of the response takes the form $f\{Y \mid \mathbf{x}^{\rm T}\boldsymbol{\beta}+m(z), \phi\}$, which is known up to a parametric component $\boldsymbol{\beta}$ of diverging dimension $p$, a smooth function $m(\cdot)$, and a dispersion parameter $\phi$. Existing semiparametric literature on such models has primarily focused on semiparametric efficiency for $\boldsymbol{\beta}$, typically treating $\phi$ and $m(\cdot)$ as nuisances and largely ignoring their finite-sample bias. However, the finite-sample bias of standard estimators can be substantial (especially when $p$ is large relative to $n$ and/or dispersion is high) and can seriously undermine inference for $\boldsymbol{\beta}$. Moreover, $\phi$ is often of direct scientific interest and requires accurate estimation. To address this gap, we propose SABRE, a simulation-based bias correction framework for this broad semiparametric model class. We establish asymptotic properties of SABRE for the subclass of generalized partially linear models, where bias reduction for $\boldsymbol{\beta}$ and $\phi$ can be achieved without inflating variance, and we outline how the underlying principle may be adapted more generally. Comprehensive simulation studies and a real-data application on early-stage diabetes demonstrate the empirical effectiveness of SABRE in reducing bias and improving inference.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes SABRE, a simulation-based bias correction framework for the broad class of semiparametric regression models with conditional distribution f{Y | x^T β + m(z), φ}, where β has diverging dimension p. Asymptotic properties establishing bias reduction for both β and φ without variance inflation are derived for the generalized partially linear models (GPLM) subclass (canonical link and fully specified variance structure up to φ), while an adaptation principle is outlined for the general case. The approach is illustrated via comprehensive simulations and a real-data application to early-stage diabetes.

Significance. If the asymptotic claims hold, the work addresses an understudied practical issue in semiparametric inference: substantial finite-sample bias in estimators for β (especially when p/n is not small) and for the scientifically relevant dispersion φ. The demonstration that bias correction can be achieved while preserving the semiparametric efficiency bound for β in the GPLM case, together with the provision of simulation studies and empirical validation, represents a useful contribution to the literature on bias correction in models with nonparametric components.

major comments (2)

- Abstract and the statement of main results: the central claim that SABRE achieves asymptotic bias reduction for β (diverging dimension) and φ while preserving the semiparametric efficiency bound is formally established only for the GPLM subclass. For the broader semiparametric class the paper provides only an outline of the adaptation principle; no limiting distribution is derived and no bound is given on the additional covariance that may arise when the simulation step employs a plug-in estimator of the unknown m(·). This gap is load-bearing for the paper's framing as a method for the broad model class.

- Description of the SABRE procedure (simulation step): the bias estimate is generated by simulating responses from the fitted model that itself contains the plug-in estimate of m(·). The argument that this step reproduces the finite-sample bias distribution without inflating asymptotic variance is shown only under the GPLM assumptions; for the general case the paper does not bound the covariance between the original estimator and the simulated correction term, leaving open the possibility that the correction introduces a non-negligible variance contribution.
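The covariance at issue enters through a generic identity (not taken from the paper). Writing θ = (β^T, φ)^T, θ̂ for the initial estimator and b̂ for the simulated bias estimate in the simulate-and-subtract form:

$$
\tilde{\boldsymbol{\theta}} = \hat{\boldsymbol{\theta}} - \hat{\mathbf{b}}, \qquad
\operatorname{Var}(\tilde{\boldsymbol{\theta}}) = \operatorname{Var}(\hat{\boldsymbol{\theta}}) + \operatorname{Var}(\hat{\mathbf{b}}) - \operatorname{Cov}(\hat{\boldsymbol{\theta}}, \hat{\mathbf{b}}) - \operatorname{Cov}(\hat{\mathbf{b}}, \hat{\boldsymbol{\theta}}).
$$

Absence of first-order variance inflation amounts to the last three terms being asymptotically negligible relative to Var(θ̂); when b̂ is computed from data simulated with a plug-in m̂(·), the cross-covariance is the term that remains unbounded outside the GPLM case.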

minor comments (2)

- Abstract: the phrase 'without inflating variance' should be qualified by the GPLM restriction already stated later in the abstract, to avoid any ambiguity for readers who stop at the opening sentence.

- Notation: the distinction between the true m(·) and its estimator should be made explicit when describing the simulation step, as the current wording can be misread as treating m as known.
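To make the notation point concrete, the sketch below keeps the plug-in estimate m̂(·) separate from the unknown m(·) in the simulation step. It assumes a Gaussian GPLM with identity link and uses a Nadaraya-Watson smoother of partial residuals as a stand-in for the nonparametric fit; the paper's own estimator of m(·) may differ (for example, a spline), and the function names are hypothetical.

```python
import math
import random


def nw_smoother(z_obs, r_obs, bandwidth):
    """Hypothetical stand-in for the nonparametric fit: Nadaraya-Watson estimate of E[r | z]."""
    def m_hat(z0):
        w = [math.exp(-0.5 * ((zi - z0) / bandwidth) ** 2) for zi in z_obs]
        return sum(wi * ri for wi, ri in zip(w, r_obs)) / sum(w)
    return m_hat


def simulate_responses(x_rows, z, beta_hat, m_hat, phi_hat, rng):
    """Simulation step for a Gaussian, identity-link GPLM.

    Draws y* = x^T beta_hat + m_hat(z) + N(0, phi_hat). Every ingredient is an
    estimate; the true m(.) is unknown and is never used here.
    """
    sd = math.sqrt(phi_hat)
    y_star = []
    for xi, zi in zip(x_rows, z):
        eta_hat = sum(xj * bj for xj, bj in zip(xi, beta_hat)) + m_hat(zi)
        y_star.append(rng.gauss(eta_hat, sd))
    return y_star


# Usage with made-up inputs: partial residuals r = y - x^T beta_hat feed the smoother.
x_rows = [[1.0, 0.5], [0.2, -1.0], [0.0, 2.0]]
z = [0.1, 0.5, 0.9]
beta_hat = [1.5, -0.5]
partial_residuals = [0.3, 0.3, 0.3]
m_hat = nw_smoother(z, partial_residuals, bandwidth=0.2)
y_star = simulate_responses(x_rows, z, beta_hat, m_hat, phi_hat=0.25, rng=random.Random(0))
print(y_star)
```

Passing m̂ in as an explicit argument makes it visible that any error in the nonparametric fit propagates into every simulated dataset, which is the channel the major comments on the simulation step are concerned with.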

Simulated Author's Rebuttal

We thank the referee for the detailed and constructive report. The comments correctly identify that our asymptotic theory is fully developed only for the GPLM subclass, while the broader semiparametric class receives an outline of the adaptation principle. We will revise the abstract, introduction, and relevant sections to make this scope explicit and to discuss the covariance issue in the general case. Point-by-point responses follow.


Referee: Abstract and the statement of main results: the central claim that SABRE achieves asymptotic bias reduction for β (diverging dimension) and φ while preserving the semiparametric efficiency bound is formally established only for the GPLM subclass. For the broader semiparametric class the paper provides only an outline of the adaptation principle; no limiting distribution is derived and no bound is given on the additional covariance that may arise when the simulation step employs a plug-in estimator of the unknown m(·). This gap is load-bearing for the paper's framing as a method for the broad model class.

Authors: We agree that the formal limiting distribution and the proof that the semiparametric efficiency bound is preserved (i.e., no asymptotic variance inflation) are established only under the GPLM assumptions (canonical link and fully specified variance up to φ). For the general model class we supply only an outline of how the simulation-based correction can be adapted. In the revised manuscript we will modify the abstract and the opening paragraphs of the introduction to state explicitly that the detailed asymptotic bias-reduction and efficiency results apply to the GPLM subclass, while the general case is addressed via the outlined adaptation principle. We will also add a short paragraph in Section 3 noting that a full covariance bound for the plug-in estimator of m(·) remains open for future work. These changes will align the framing with the actual theorems without diminishing the practical utility demonstrated in the simulations and data example. revision: yes


Referee: Description of the SABRE procedure (simulation step): the bias estimate is generated by simulating responses from the fitted model that itself contains the plug-in estimate of m(·). The argument that this step reproduces the finite-sample bias distribution without inflating asymptotic variance is shown only under the GPLM assumptions; for the general case the paper does not bound the covariance between the original estimator and the simulated correction term, leaving open the possibility that the correction introduces a non-negligible variance contribution.

Authors: The referee is correct that the rigorous argument showing the simulated correction term is asymptotically uncorrelated with the original estimator (hence no variance inflation) relies on the GPLM structure. In the general setting the simulation uses a plug-in estimator of m(·), and we have not derived a bound on the resulting covariance. In the revision we will expand the description of the SABRE algorithm (currently Section 2.2) to include an explicit caveat that variance preservation is proven only for GPLM and that, in the general case, the additional covariance term is not yet bounded. We will also insert a brief remark in the discussion section indicating that controlling this term may require additional smoothness or rate conditions on the nonparametric estimator. This clarification will prevent readers from over-generalizing the variance result. revision: yes

Circularity Check

No circularity: SABRE bias correction and asymptotics are methodologically independent of inputs


The paper defines SABRE as a simulation-based procedure that generates bias estimates from the fitted semiparametric model and then derives its asymptotic bias-reduction and variance properties for the generalized partially linear model subclass. This construction does not reduce the target estimator or its limiting distribution to a fitted parameter by definition, nor does it rename a known result, smuggle an ansatz via self-citation, or invoke a uniqueness theorem from the authors' prior work. The simulation step is an explicit algorithmic component whose finite-sample behavior is analyzed under stated regularity conditions rather than assumed to match the target by construction. The broader model class receives only an outline without formal claims, so no load-bearing circular step appears. The derivation chain remains self-contained against external benchmarks.

Axiom & Free-Parameter Ledger

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean · washburn_uniqueness_aczel · unclear · "We establish asymptotic properties of SABRE for the subclass of generalized partially linear models, where bias reduction for β and φ can be achieved without inflating variance."

- IndisputableMonolith/Foundation/RealityFromDistinction.lean · reality_from_one_distinction · unclear · "SABRE is defined by matching this initial estimator to its simulation-based expectation under the same approximating model."
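The matching formulation quoted above can be solved by a fixed-point iteration in the style of the iterative bootstrap: update the parameter by the gap between the initial estimate and its simulated expectation, reusing the same random draws at every step. The sketch below does this for the dispersion in a toy Gaussian linear model; it illustrates the matching principle under that assumption, is not the paper's SABRE algorithm, and its names are hypothetical.

```python
import random


def fit_linear(x, y):
    """Least-squares fit of y = b0 + b1*x; returns (b0, b1, RSS/n)."""
    n = len(x)
    xbar = sum(x) / n
    ybar = sum(y) / n
    sxx = sum((xi - xbar) ** 2 for xi in x)
    b1 = sum((xi - xbar) * (yi - ybar) for xi, yi in zip(x, y)) / sxx
    b0 = ybar - b1 * xbar
    rss = sum((yi - b0 - b1 * xi) ** 2 for xi, yi in zip(x, y))
    return b0, b1, rss / n


def iterative_bootstrap_phi(x, y, n_sim=500, max_iter=200, tol=1e-10, seed=0):
    """Find phi whose simulated mean of RSS/n matches the observed RSS/n."""
    rng = random.Random(seed)
    n = len(x)
    b0, b1, pi_hat = fit_linear(x, y)  # initial (naive) estimator on the observed data
    # Common random numbers: the same N(0, 1) draws are reused at every iteration,
    # so the simulated expectation is a deterministic function of phi.
    draws = [[rng.gauss(0.0, 1.0) for _ in range(n)] for _ in range(n_sim)]

    def simulated_expectation(phi):
        sd = phi ** 0.5
        total = 0.0
        for e in draws:
            y_sim = [b0 + b1 * xi + sd * ei for xi, ei in zip(x, e)]
            total += fit_linear(x, y_sim)[2]
        return total / n_sim

    phi = pi_hat  # start the fixed-point iteration at the naive estimate
    for _ in range(max_iter):
        step = pi_hat - simulated_expectation(phi)
        phi += step  # move phi until its simulated expectation matches pi_hat
        if abs(step) < tol * pi_hat:
            break
    return pi_hat, phi


# Usage on one made-up dataset (true b0 = 1, b1 = 2, phi = 1, n = 12).
rng = random.Random(1)
x = [i / 10 for i in range(12)]
y = [1.0 + 2.0 * xi + rng.gauss(0.0, 1.0) for xi in x]
naive, matched = iterative_bootstrap_phi(x, y)
print(f"naive phi_hat = {naive:.3f}, matched = {matched:.3f}")
```

Because RSS scales linearly in φ here, the simulated expectation is c·φ with c ≈ (n − 2)/n, so the iteration converges to φ̂/c ≈ φ̂·n/(n − 2), whereas a single simulate-and-subtract step gives roughly φ̂(1 + 2/n).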

