Prediction-Powered Linear Regression: A Balance Between Interpretation and Prediction

Pith reviewed 2026-05-12 01:41 UTC · model grok-4.3

The pith

The Prediction-powered Unified Model Averaging framework combines linear regression with machine learning to achieve both interpretability and optimal prediction.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

We propose the Prediction-powered Unified Model Averaging (PUMA) framework to combine linear regression and machine learning methods, achieving a balance between interpretation and prediction. Unlike existing prediction-powered inference work, PUMA is the first to jointly address uncertainty arising from model misspecification, power-tuning selection, and the choice of machine learning algorithms by using model averaging. Theoretically, we establish the asymptotic prediction optimality of the proposed method both in-sample and out-of-sample under mild conditions, along with estimation consistency. Extensive simulations and a real-world application further demonstrate the empirical advantages of the proposed method.

What carries the argument

The Prediction-powered Unified Model Averaging (PUMA) framework, which performs model averaging over linear regression and ML predictors to balance interpretability and predictive power while handling multiple sources of uncertainty.
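To make the averaging idea concrete, here is a minimal sketch of combining one linear predictor with one ML predictor through a single weight. It is not the PUMA procedure, whose details are not given on this page. The simulated data, the one-covariate least-squares fit, the k-nearest-neighbour stand-in for "an ML predictor", the hold-out split, and all function names are assumptions chosen for illustration, and the code is standard-library Python only.

```python
import math
import random

def fit_line(xs, ys):
    """Ordinary least squares for y = a + b*x with a single covariate."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    b = sxy / sxx
    a = my - b * mx
    return lambda x: a + b * x

def fit_knn(xs, ys, k=5):
    """k-nearest-neighbour regressor (hypothetical stand-in for any ML method)."""
    pairs = list(zip(xs, ys))
    def predict(x):
        nearest = sorted(pairs, key=lambda p: abs(p[0] - x))[:k]
        return sum(y for _, y in nearest) / len(nearest)
    return predict

def mse(y, pred):
    return sum((a - b) ** 2 for a, b in zip(y, pred)) / len(y)

def averaging_weight(y, f_lin, f_ml):
    """Weight w in [0, 1] on the linear predictor that minimises
    sum_i (y_i - w*f_lin_i - (1 - w)*f_ml_i)^2 (closed form, then clipped)."""
    d = [a - b for a, b in zip(f_lin, f_ml)]
    denom = sum(v * v for v in d)
    if denom == 0.0:
        return 0.5  # the two predictors coincide, so the weight is irrelevant
    num = sum((yi - bi) * di for yi, bi, di in zip(y, f_ml, d))
    return min(1.0, max(0.0, num / denom))

# Simulated data in which the linear model is misspecified (assumed design).
rng = random.Random(0)
x = [rng.uniform(-2.0, 2.0) for _ in range(300)]
y = [xi + math.sin(3.0 * xi) + rng.gauss(0.0, 0.3) for xi in x]
x_tr, y_tr = x[:200], y[:200]   # used to fit both candidates
x_va, y_va = x[200:], y[200:]   # used to choose the weight

lin = fit_line(x_tr, y_tr)
knn = fit_knn(x_tr, y_tr)
p_lin = [lin(v) for v in x_va]
p_knn = [knn(v) for v in x_va]

w = averaging_weight(y_va, p_lin, p_knn)
p_avg = [w * a + (1.0 - w) * b for a, b in zip(p_lin, p_knn)]

print("weight on linear predictor:", round(w, 3))
print("hold-out MSE (linear, kNN, averaged):",
      mse(y_va, p_lin), mse(y_va, p_knn), mse(y_va, p_avg))
```

On the data used to choose the weight, the averaged predictor cannot do worse than either candidate, because the weights 0 and 1 are both feasible. Whether that advantage carries over to fresh data is exactly what the paper's optimality theory is meant to address.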

If this is right

- Provides interpretable linear coefficients alongside high predictive accuracy from ML.

- Enables effective use of unlabeled data in economic studies where outcomes are hard to observe.

- Maintains optimality guarantees even when the linear model is misspecified or the ML algorithm is arbitrary.

- Delivers consistent estimation of the linear regression parameters.

- Shows empirical advantages over separate linear or ML approaches in simulations and real economic applications.

Where Pith is reading between the lines

- If the mild conditions are satisfied in typical economic datasets, PUMA could serve as a practical default for analysts who need both explanation and accuracy.

- The framework might be extended to generalized linear models or other interpretable bases while preserving the same averaging logic.

- Finite-sample behavior and robustness to particular ML failures remain open questions that could be tested directly on economic data.

Load-bearing premise

The asymptotic optimality and consistency results rely on unspecified mild conditions that must accommodate model misspecification, data-driven power-tuning selection, and arbitrary machine learning algorithms.
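For orientation, asymptotic optimality in the model-averaging literature usually takes a ratio form like the one below. The notation ($L_n$, $\hat{w}$, $\mathcal{W}$, $M$) is assumed here for illustration; the paper's own statement and conditions may differ.

```latex
\frac{L_n(\hat{w})}{\inf_{w \in \mathcal{W}} L_n(w)} \xrightarrow{\;p\;} 1
\quad \text{as } n \to \infty,
\qquad
\mathcal{W} = \Bigl\{ w \in [0,1]^M : \sum_{m=1}^{M} w_m = 1 \Bigr\}
```

Here $L_n(w)$ stands for the prediction loss of the averaged predictor with weights $w$, $\hat{w}$ for the data-driven weights, and $M$ for the number of candidate predictors. Read this way, the premise is that the unspecified conditions keep the ratio converging to one even though $\hat{w}$ is chosen from the data and some candidates may be badly behaved.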

What would settle it

A dataset or simulation in which the PUMA estimator fails to achieve lower prediction error than either pure linear regression or pure ML under explicit model misspecification.
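One way to operationalise this check is to track the ratio of the averaged predictor's error to the error of the better baseline. The sketch below assumes squared-error loss and takes three prediction vectors as given; the function names and the toy numbers are hypothetical. Since the paper's claim is asymptotic, a single ratio above one would not refute it. What would count is a ratio that stays above one as the sample size grows.

```python
def mse(y, pred):
    """Mean squared prediction error."""
    return sum((a - b) ** 2 for a, b in zip(y, pred)) / len(y)

def risk_ratio(y, pred_linear, pred_ml, pred_averaged):
    """Averaged-predictor MSE divided by the MSE of the better baseline.

    Values that stay above 1 across growing test sets would be evidence
    against the claimed advantage; values at or below 1 are consistent with it.
    """
    best_baseline = min(mse(y, pred_linear), mse(y, pred_ml))
    averaged = mse(y, pred_averaged)
    if best_baseline == 0.0:
        return 1.0 if averaged == 0.0 else float("inf")
    return averaged / best_baseline

# Toy numbers (not from the paper): each baseline has MSE 0.25.
y = [1.0, 2.0, 3.0, 4.0]
pred_linear = [1.5, 2.5, 2.5, 3.5]
pred_ml = [1.0, 2.0, 3.0, 5.0]
pred_averaged = [0.5 * a + 0.5 * b for a, b in zip(pred_linear, pred_ml)]

ratio = risk_ratio(y, pred_linear, pred_ml, pred_averaged)
print(ratio)  # 0.25
```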

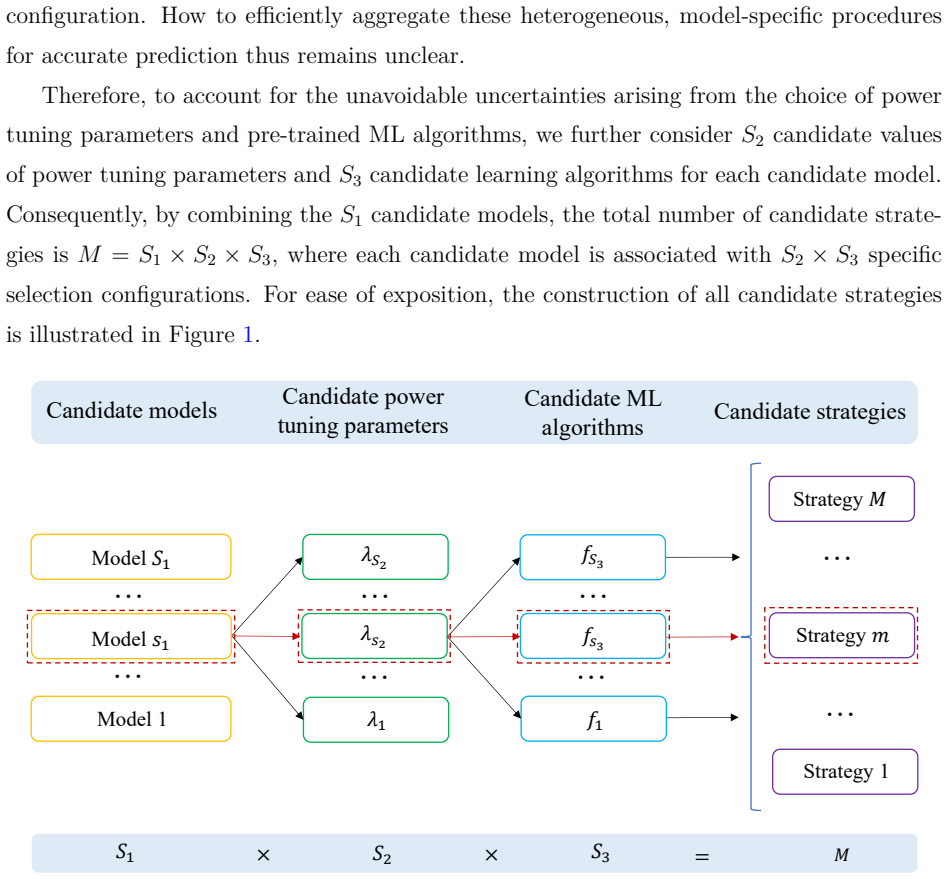

Figures

Original abstract

Unlabeled data are increasingly prevalent in contemporary economic studies, yet their effective use for improving prediction remains challenging because the outcomes are often costly or even infeasible to observe. Machine learning methods can help label these data and achieve high predictive accuracy, but they often lack interpretability. In this paper, we propose a Prediction-powered Unified Model Averaging (PUMA) framework to combine linear regression and machine learning methods, achieving a balance between interpretation and prediction. Unlike existing works on prediction powered inference, our approach is the first to jointly address uncertainty arising from model misspecification, power-tuning selection, and the choice of machine learning algorithms by using model averaging. Theoretically, we establish the asymptotic prediction optimality of the proposed method both in-sample and out-of-sample under mild conditions, along with estimation consistency. Extensive simulations and a real-world application further demonstrate the empirical advantages of the proposed method.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes the Prediction-powered Unified Model Averaging (PUMA) framework, which uses model averaging to combine linear regression with machine learning predictors. This is intended to balance interpretability and predictive accuracy when leveraging unlabeled data in economic applications. The central theoretical claims are asymptotic in-sample and out-of-sample prediction optimality plus estimation consistency, all under unspecified mild conditions; these are supported by simulations and one real-data example.

Significance. If the optimality and consistency results can be rigorously established, the work would contribute a unified approach to prediction-powered inference that simultaneously handles model misspecification, data-driven tuning, and ML algorithm selection. Such a method could be useful in econometrics and statistics where both interpretability and accuracy matter.

major comments (1)

- [Abstract] Abstract: the claim of asymptotic prediction optimality (both in-sample and out-of-sample) and estimation consistency under 'mild conditions' is load-bearing for the paper's contribution. These conditions are not stated explicitly, so it is impossible to verify whether they accommodate data-dependent model-averaging weights (chosen to minimize estimated risk) together with arbitrary ML algorithms whose convergence rates or biases may be uncontrolled. If the proof instead fixes tuning parameters or assumes a single consistent ML estimator, the advertised generality does not extend to the implemented procedure.

Simulated Author's Rebuttal

We thank the referee for the detailed and constructive feedback on our manuscript. We address the major comment regarding the explicit statement of conditions for our asymptotic results below, and we clarify how the framework accommodates the implemented procedure.

Point-by-point responses

- Referee: [Abstract] Abstract: the claim of asymptotic prediction optimality (both in-sample and out-of-sample) and estimation consistency under 'mild conditions' is load-bearing for the paper's contribution. These conditions are not stated explicitly, so it is impossible to verify whether they accommodate data-dependent model-averaging weights (chosen to minimize estimated risk) together with arbitrary ML algorithms whose convergence rates or biases may be uncontrolled. If the proof instead fixes tuning parameters or assumes a single consistent ML estimator, the advertised generality does not extend to the implemented procedure.

  Authors: We thank the referee for this important observation. The mild conditions are stated explicitly in Theorem 1 (asymptotic in-sample prediction optimality), Theorem 2 (asymptotic out-of-sample prediction optimality), and Theorem 3 (estimation consistency) in Section 3, along with the supporting Assumptions 1–4 in the same section. These assumptions are designed to accommodate data-dependent model-averaging weights: Assumption 3 requires only that the weights minimize a cross-validated estimate of the risk (which is data-dependent by construction) and that the candidate set of linear and ML predictors is finite. The proofs in the appendix (particularly the derivations leading to the oracle inequality) do not fix tuning parameters or require consistency of any individual ML estimator. Instead, they rely on a uniform integrability condition on the ML predictions (Assumption 2) and allow arbitrary convergence rates or biases for individual ML methods, with the averaging step ensuring the overall procedure achieves the claimed optimality. We agree that the abstract would benefit from a brief summary of these conditions and will revise it accordingly in the next version.

  Revision: yes
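The rebuttal describes weights that minimise a cross-validated risk estimate over a finite candidate set. A minimal sketch of that kind of procedure follows; it is not the authors' implementation. The three candidate learners (`fit_slope`, `fit_mean`, `fit_1nn`), the squared-error loss, the interleaved fold assignment, the simulated data, and the Frank-Wolfe solver for the simplex-constrained problem are all assumptions made for illustration, using only the Python standard library.

```python
import math
import random

def fit_slope(xs, ys):
    """Least squares through the origin: the 'linear' candidate."""
    b = sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)
    return lambda x: b * x

def fit_mean(xs, ys):
    """Constant predictor: a deliberately weak candidate."""
    m = sum(ys) / len(ys)
    return lambda x: m

def fit_1nn(xs, ys):
    """1-nearest-neighbour predictor: a flexible 'ML' candidate."""
    pairs = list(zip(xs, ys))
    return lambda x: min(pairs, key=lambda p: abs(p[0] - x))[1]

def out_of_fold(x, y, fitters, K=5):
    """P[i][m] = prediction for observation i from candidate m,
    fitted without the fold that contains i."""
    n = len(x)
    P = [[0.0] * len(fitters) for _ in range(n)]
    for k in range(K):
        hold = set(range(k, n, K))  # interleaved folds
        x_tr = [x[i] for i in range(n) if i not in hold]
        y_tr = [y[i] for i in range(n) if i not in hold]
        for m, fit in enumerate(fitters):
            f = fit(x_tr, y_tr)
            for i in hold:
                P[i][m] = f(x[i])
    return P

def cv_risk(y, P, w):
    """Cross-validated mean squared error of the weighted combination."""
    return sum((yi - sum(wm * pm for wm, pm in zip(w, row))) ** 2
               for yi, row in zip(y, P)) / len(y)

def simplex_weights(y, P, iters=200):
    """Frank-Wolfe with exact line search for
    min_w cv_risk(y, P, w) subject to w >= 0 and sum(w) = 1."""
    M = len(P[0])
    vertices = [[1.0 if j == m else 0.0 for j in range(M)] for m in range(M)]
    w = min(vertices, key=lambda v: cv_risk(y, P, v))  # best single candidate
    for _ in range(iters):
        fitted = [sum(wm * pm for wm, pm in zip(w, row)) for row in P]
        resid = [yi - fi for yi, fi in zip(y, fitted)]
        grad = [-2.0 * sum(r * row[m] for r, row in zip(resid, P))
                for m in range(M)]
        m_star = min(range(M), key=grad.__getitem__)
        delta = [row[m_star] - fi for row, fi in zip(P, fitted)]
        denom = sum(v * v for v in delta)
        if denom == 0.0:
            break
        step = min(1.0, max(0.0, sum(r * v for r, v in zip(resid, delta)) / denom))
        if step == 0.0:
            break  # no descent direction towards any vertex: optimum reached
        w = [(1.0 - step) * wm + (step if m == m_star else 0.0)
             for m, wm in enumerate(w)]
    return w

rng = random.Random(1)
x = [rng.uniform(-2.0, 2.0) for _ in range(100)]
y = [1.5 * xi + math.sin(3.0 * xi) + rng.gauss(0.0, 0.3) for xi in x]

P = out_of_fold(x, y, [fit_slope, fit_mean, fit_1nn], K=5)
w = simplex_weights(y, P)
print("weights (slope, mean, 1-NN):", [round(v, 3) for v in w])
print("CV risk of averaged predictor:", cv_risk(y, P, w))
```

Because the search starts from the best single candidate and each step uses an exact line search, the cross-validated risk of the returned weights is never above that of any individual candidate. A poorly behaved candidate simply receives little or no weight, which is the intuition behind not requiring every ML method to be consistent.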

Circularity Check

No circularity identified; theoretical claims remain independent of inputs.

Full rationale

The provided abstract and context describe a new PUMA model-averaging procedure whose asymptotic optimality and consistency are asserted under unspecified mild conditions. No equations, self-citations, or derivation steps are quoted that reduce the optimality result to a fitted quantity, a self-defined weight, or a prior result by the same authors. The central claim therefore does not collapse by construction to its own inputs; any evaluation of the mild conditions would require the full proof, which is not shown to be circular here.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption: mild conditions suffice for asymptotic prediction optimality and estimation consistency

invented entities (1)

- PUMA framework: no independent evidence

