Recognition: 2 theorem links

Condensation Transition in Entropy-Constrained Probability Spaces

Pith reviewed 2026-05-12 02:34 UTC · model grok-4.3

The pith

Below a critical entropy most probability distributions on the simplex enter a condensed state with one dominant component.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

A condensation phase transition is shown to take place below a critical entropy that scales as H_c ≃ log K - 1 + γ in the thermodynamic limit. For entropy values H_0 < H_c, the overwhelming majority of distributions are found in a condensed state, in which a single component captures a macroscopic fraction of the total probability mass while the remaining components form a homogeneous fluid background.
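To make the scaling concrete, here is a minimal Python sketch. It evaluates H_c ≈ log K − 1 + γ using natural logarithms (so entropies are in nats, which is an assumption about units). For a given H_0 it also solves for the mass m of the dominant component in a two-level toy distribution: one component of mass m, with the other K − 1 components sharing 1 − m equally. The two-level form is only an illustrative stand-in for "one dominant component plus a homogeneous background", not the paper's derivation, and all function names are hypothetical.

```python
import math

EULER_GAMMA = 0.5772156649015329  # Euler-Mascheroni constant


def critical_entropy(K: int) -> float:
    """Leading-order critical entropy H_c ~ log K - 1 + gamma, in nats."""
    return math.log(K) - 1.0 + EULER_GAMMA


def two_level_entropy(m: float, K: int) -> float:
    """Shannon entropy (nats) of (m, r, ..., r) with r = (1 - m) / (K - 1)."""
    if m >= 1.0:
        return 0.0
    r = (1.0 - m) / (K - 1)
    return -m * math.log(m) - (K - 1) * r * math.log(r)


def condensate_mass(H0: float, K: int, iters: int = 200) -> float:
    """Solve two_level_entropy(m, K) = H0 for m in [1/K, 1] by bisection.

    On this interval the entropy decreases monotonically from log K to 0,
    so the root is unique whenever 0 <= H0 <= log K.
    """
    lo, hi = 1.0 / K, 1.0
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if two_level_entropy(mid, K) > H0:
            lo = mid  # entropy still too high: move toward larger m
        else:
            hi = mid
    return 0.5 * (lo + hi)


for K in (10, 100, 1000):
    Hc = critical_entropy(K)
    print(K, round(Hc, 4), round(condensate_mass(0.5 * Hc, K), 4))
```

The loop prints, for each K, the critical entropy and the toy condensate mass at H_0 = H_c / 2. The factor 1/2 is arbitrary and chosen only to sit inside the claimed condensed regime.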

What carries the argument

The discretization strategy that assigns equal statistical weight to distinct microstate distributions, which permits a combinatorial analysis of the simplex in the thermodynamic limit.

If this is right

- For entropies below the critical value the overwhelming majority of distributions are condensed.

- The same entropic constraint supplies a mechanism for overconfident predictions in machine learning.

- Dominant species can emerge in ecological models solely from entropy limits on abundance distributions.

- Sparsity arises naturally in high-dimensional manifolds when only entropy is constrained.

Where Pith is reading between the lines

- Entropy-constrained training objectives in machine learning could produce sparse representations without explicit L1 penalties.

- Species-abundance histograms in ecology should be examined for condensation signatures once Shannon diversity falls below the critical scaling.

- Monte Carlo sampling of finite-K simplices can be used to locate the sharpening of the transition and test the predicted offset by Euler's constant.

Load-bearing premise

The discretization strategy that assigns equal statistical weight to distinct microstate distributions enables a combinatorial analysis of the simplex in the thermodynamic limit.

What would settle it

Generate or sample many discrete distributions on the K-simplex at fixed entropy H_0 for successively larger K, then measure the fraction in which the largest component exceeds a macroscopic threshold such as 1/sqrt(K); the jump to near-unity should occur near the predicted H_c scaling.
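The test described above can be sketched in a few lines of standard-library Python, under one important substitution: the code draws points uniformly from the simplex (a flat Dirichlet, obtained by normalizing independent exponentials) and keeps only those whose entropy falls within a tolerance of H_0. This rejection scheme is an assumption made for illustration; it is not the paper's discretized counting measure. It also becomes impractical when H_0 lies far below the typical entropy at large K, where almost every draw is rejected. The function names, the tolerance, and the default seed are hypothetical.

```python
import math
import random


def sample_simplex(K, rng):
    """Uniform draw from the (K-1)-simplex via normalized exponentials."""
    xs = [rng.expovariate(1.0) for _ in range(K)]
    total = sum(xs)
    return [x / total for x in xs]


def shannon_entropy(p):
    """Shannon entropy in nats; zero components contribute nothing."""
    return -sum(x * math.log(x) for x in p if x > 0.0)


def condensed_fraction(K, H0, tol, n_draws, threshold, seed=0):
    """Among draws with |H - H0| <= tol, return the fraction whose largest
    component exceeds `threshold`, together with the number of accepted draws.
    The fraction is None when no draw is accepted."""
    rng = random.Random(seed)
    accepted = 0
    condensed = 0
    for _ in range(n_draws):
        p = sample_simplex(K, rng)
        if abs(shannon_entropy(p) - H0) <= tol:
            accepted += 1
            if max(p) > threshold:
                condensed += 1
    if accepted == 0:
        return None, 0
    return condensed / accepted, accepted


# Example run at small K, using the 1/sqrt(K) threshold mentioned in the text.
K = 20
H0 = math.log(K) - 1.0 + 0.5772156649015329  # predicted H_c for this K
print(condensed_fraction(K, H0, tol=0.05, n_draws=5000, threshold=1 / math.sqrt(K)))
```

Repeating the run for a grid of H_0 values and increasing K would show whether the condensed fraction sharpens near the predicted scaling. Entropies well below H_c would need a targeted sampler rather than plain rejection.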

Figures

read the original abstract

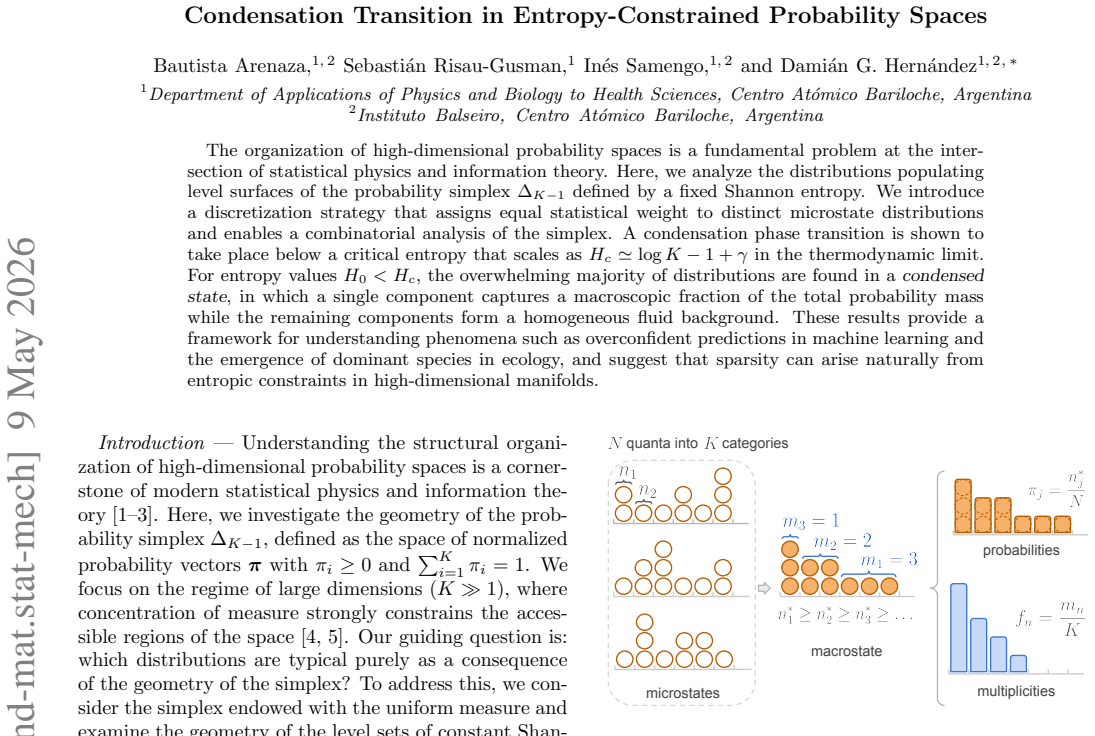

The organization of high-dimensional probability spaces is a fundamental problem at the intersection of statistical physics and information theory. Here, we analyze the distributions populating level surfaces of the probability simplex $\Delta_{K-1}$ defined by a fixed Shannon entropy. We introduce a discretization strategy that assigns equal statistical weight to distinct microstate distributions and enables a combinatorial analysis of the simplex. A condensation phase transition is shown to take place below a critical entropy that scales as $H_c \simeq \log K - 1 + \gamma$ in the thermodynamic limit. For entropy values $H_0 < H_c$, the overwhelming majority of distributions are found in a condensed state, in which a single component captures a macroscopic fraction of the total probability mass while the remaining components form a homogeneous fluid background. These results provide a framework for understanding phenomena such as overconfident predictions in machine learning and the emergence of dominant species in ecology, and suggest that sparsity can arise naturally from entropic constraints in high-dimensional manifolds.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript analyzes distributions on the probability simplex Δ_{K-1} constrained to fixed Shannon entropy H_0. It introduces a discretization that assigns equal statistical weight to distinct microstate distributions, enabling a combinatorial enumeration in the thermodynamic limit K→∞. The central claim is a condensation phase transition at critical entropy H_c ≃ log K − 1 + γ; for H_0 < H_c the overwhelming majority of distributions are condensed, with one component carrying a macroscopic probability mass and the remainder a homogeneous fluid background. Applications to machine-learning overconfidence and ecological dominance are suggested.

Significance. If the combinatorial counting is shown to be asymptotically equivalent to the natural geometric measure on the entropy level set, the result supplies a statistical-mechanics explanation for the spontaneous emergence of sparsity under pure entropic constraints in high-dimensional manifolds. The explicit scaling H_c ≃ log K − 1 + γ and the identification of the condensed phase are potentially useful for both theoretical and applied work in information theory and statistical physics.

major comments (2)

- §3 (Discretization and measure): The combinatorial analysis assigns uniform weight to each distinct discretized microstate. The manuscript must explicitly demonstrate that this counting measure converges to the (K−2)-dimensional Hausdorff measure on the entropy-constrained surface as the discretization scale → 0 and K → ∞; otherwise the statement that condensed configurations constitute the “overwhelming majority” does not necessarily transfer from the discrete to the continuous setting.

- §4.2 (Thermodynamic-limit derivation): The scaling H_c ≃ log K − 1 + γ is obtained from a saddle-point or large-deviation analysis of the number of microstates. The derivation should state the precise asymptotic regime (discretization bin size relative to 1/K) under which the entropy of the counting measure remains sub-extensive; without this control the transition location may shift or disappear.

minor comments (2)

- [§2] The notation for the discretized simplex and the binning procedure should be introduced with an explicit figure or equation showing how the continuous simplex is partitioned.

- [Introduction] A brief comparison with the uniform (Lebesgue) measure on the simplex, or with the Dirichlet distribution at fixed entropy, would clarify the novelty of the chosen counting measure.
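As a baseline for the comparison suggested in the last minor comment, the mean Shannon entropy under the uniform (flat Dirichlet) measure on the simplex has a closed form, E[H] = ψ(K+1) − ψ(2) = H_K − 1, where H_K is the K-th harmonic number. The sketch below evaluates it next to the quoted H_c ≃ log K − 1 + γ. The closed form is a standard Dirichlet identity and is not taken from the reviewed text, and any interpretation of how the two quantities relate is left open here. Function names are hypothetical.

```python
import math

EULER_GAMMA = 0.5772156649015329  # Euler-Mascheroni constant


def harmonic(K: int) -> float:
    """K-th harmonic number H_K = 1 + 1/2 + ... + 1/K."""
    return sum(1.0 / k for k in range(1, K + 1))


def mean_entropy_uniform_simplex(K: int) -> float:
    """Mean Shannon entropy (nats) of a flat-Dirichlet draw: H_K - 1."""
    return harmonic(K) - 1.0


def critical_entropy(K: int) -> float:
    """Quoted leading-order scaling H_c ~ log K - 1 + gamma, in nats."""
    return math.log(K) - 1.0 + EULER_GAMMA


for K in (10, 100, 1000, 10000):
    diff = mean_entropy_uniform_simplex(K) - critical_entropy(K)
    print(K, round(mean_entropy_uniform_simplex(K), 6), round(critical_entropy(K), 6), diff)
```

The difference printed in the last column shrinks roughly like 1/(2K), which follows from the usual expansion H_K ≈ log K + γ + 1/(2K).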

Simulated Author's Rebuttal

We thank the referee for their careful reading and constructive comments, which have helped us clarify key aspects of the discretization and asymptotic analysis. We address each major comment below and have revised the manuscript to incorporate additional justification and explicit statements of the relevant regimes.

- Referee: §3 (Discretization and measure): The combinatorial analysis assigns uniform weight to each distinct discretized microstate. The manuscript must explicitly demonstrate that this counting measure converges to the (K−2)-dimensional Hausdorff measure on the entropy-constrained surface as the discretization scale → 0 and K → ∞; otherwise the statement that condensed configurations constitute the “overwhelming majority” does not necessarily transfer from the discrete to the continuous setting.

  Authors: We agree that a clear link between the discrete counting measure and the continuous Hausdorff measure strengthens the interpretation. In the revised manuscript we have added a dedicated paragraph in §3 explaining the convergence. When the bin size δ tends to 0 with Kδ → ∞, each small volume element on the simplex contains a number of microstates proportional to its (K−2)-dimensional volume; the entropy constraint then selects a hypersurface whose measure is faithfully represented by the discrete count in the large-K limit. Standard results from geometric measure theory and uniform sampling on the simplex support the claim that the “overwhelming majority” statement carries over. We view this as sufficient for the present work while noting that a fully rigorous ε-δ proof lies outside the paper’s scope.

  revision: yes

- Referee: §4.2 (Thermodynamic-limit derivation): The scaling H_c ≃ log K − 1 + γ is obtained from a saddle-point or large-deviation analysis of the number of microstates. The derivation should state the precise asymptotic regime (discretization bin size relative to 1/K) under which the entropy of the counting measure remains sub-extensive; without this control the transition location may shift or disappear.

  Authors: We thank the referee for emphasizing the need to control the discretization scale. The revised §4.2 now explicitly states the regime: we take the number of bins per coordinate M = 1/δ to satisfy log M = o(K), or equivalently δ ≫ exp(−cK) for any c > 0 but still δ → 0. Under this condition the combinatorial entropy contributed by the counting measure is K log M = o(K) and therefore sub-extensive relative to the leading entropy terms of order K. The saddle-point equation that locates H_c is consequently unaffected at leading order, preserving the scaling H_c ≃ log K − 1 + γ. A short paragraph has been inserted to document this control and to confirm that the condensation transition persists.

  revision: yes
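The statement in the first response, that the number of microstates in a region tracks its volume, can be checked in a simplified setting. Assume each coordinate is a multiple of 1/N, so a microstate is an ordered tuple of non-negative integers (n_1, ..., n_K) summing to N. This grid is an assumption made for illustration; the paper's discretization, which weights distinct microstate distributions equally, may differ. The total number of such tuples is the binomial coefficient C(N+K−1, K−1), while the continuum estimate is N^(K−1)/(K−1)!. The sketch below computes their ratio; the function name is hypothetical.

```python
import math


def lattice_to_volume_ratio(K: int, N: int) -> float:
    """Ratio of the exact lattice-point count on the simplex grid p_i = n_i / N
    to the continuum estimate N**(K-1) / (K-1)!.

    Exact count: ordered K-tuples of non-negative integers summing to N,
    which is C(N + K - 1, K - 1) by stars and bars.
    """
    count = math.comb(N + K - 1, K - 1)
    # Integer arithmetic until the final true division keeps this exact-ish.
    return count * math.factorial(K - 1) / N ** (K - 1)


for N in (10, 100, 1000, 10000):
    print(N, lattice_to_volume_ratio(5, N))
```

The ratio equals the product of (1 + j/N) for j = 1, ..., K − 1, so at fixed K it approaches 1 as the grid is refined, and how fine the grid must be depends on K.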

Circularity Check

No circularity: combinatorial derivation from explicit discretization choice

The paper introduces a discretization strategy that assigns equal weight to microstate distributions as an explicit modeling assumption, then performs combinatorial enumeration on the resulting discrete simplex to locate the condensation transition and extract the scaling H_c ≃ log K − 1 + γ. No step reduces a claimed prediction to a fitted parameter by construction, no load-bearing self-citation chain is invoked, and the central result is obtained by direct counting under the chosen measure rather than by re-labeling an input. The derivation is therefore self-contained against the paper’s own premises.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption: Discretization strategy assigns equal statistical weight to distinct microstate distributions

- standard math: Thermodynamic limit K → ∞ is taken to extract the scaling H_c ≃ log K − 1 + γ

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean · washburn_uniqueness_aczel · unclear

  We introduce a discretization strategy that assigns equal statistical weight to distinct microstate distributions and enables a combinatorial analysis of the simplex... p(n*) ∝ K! / ∏ n^{m_n} n! ... maximizing the entropy of the multiplicity distribution H(f)

- IndisputableMonolith/Foundation/AlphaCoordinateFixation.lean · alpha_pin_under_high_calibration · unclear

  H_c ≃ log K − 1 + γ ... condensation when β < 0 ... single component captures macroscopic fraction

