Using Semantic Distance to Estimate Uncertainty in LLM-Based Code Generation

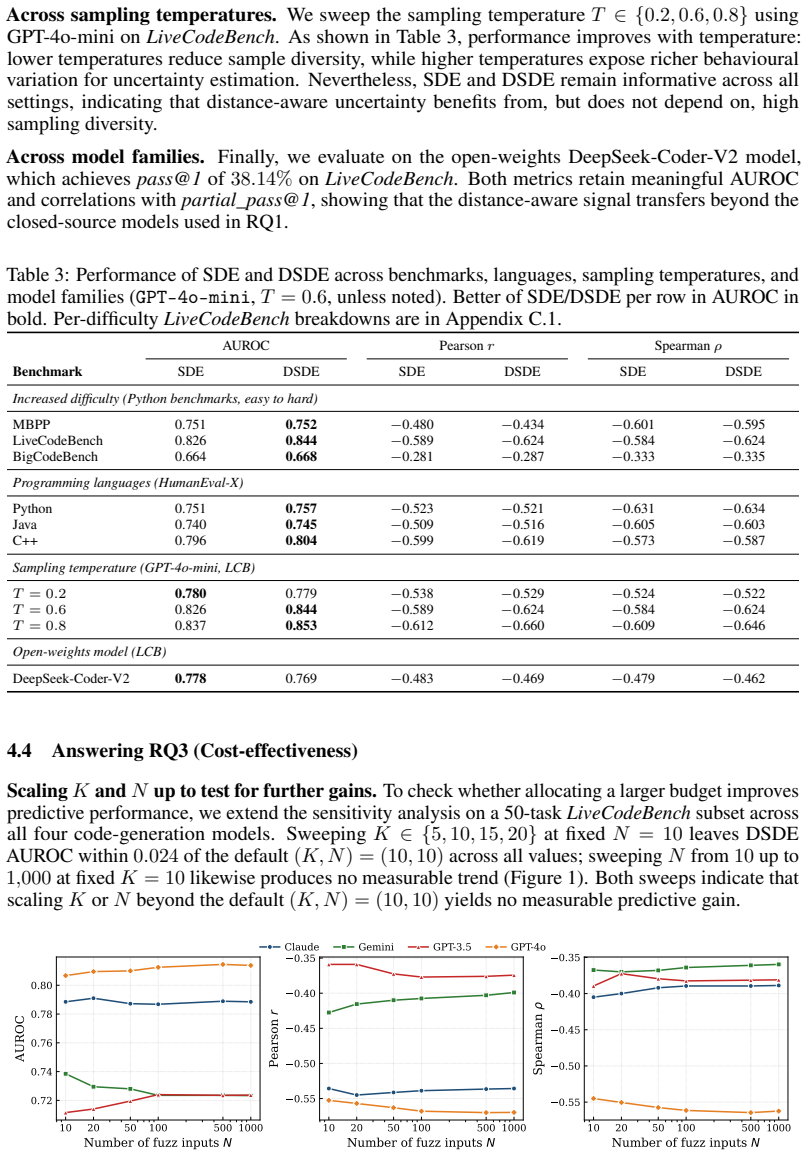

Pith reviewed 2026-05-12 02:03 UTC · model grok-4.3

The pith

Semantic distance between execution behaviors of sampled programs estimates uncertainty in LLM code generation more accurately than binary disagreement measures.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The authors argue that measuring semantic distances in the execution behaviors of multiple sampled programs yields uncertainty estimates that correlate more strongly with actual correctness than existing sample-based baselines, and that this approach is robust and efficient across diverse models and languages.

What carries the argument

Semantic distance-aware uncertainty estimation: rather than only counting how often sampled programs disagree, it measures how severely their execution behaviors differ.
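As a rough illustration of this idea (a minimal sketch, not the paper's implementation), sampled programs can be grouped by the outputs they produce on a shared set of inputs, and the distance between two groups can be taken as the fraction of inputs on which their outputs differ. The helper names `behaviour`, `cluster`, and `distance`, the toy programs, and the mismatch-fraction distance are all assumptions made for this example; the paper's own distance definition is not given on this page.

```python
from collections import defaultdict

def behaviour(program, inputs):
    """Record what a program does on each input (output or error type)."""
    trace = []
    for x in inputs:
        try:
            trace.append(repr(program(x)))
        except Exception as exc:  # a crash is also a behaviour
            trace.append("error:" + type(exc).__name__)
    return tuple(trace)

def cluster(programs, inputs):
    """Group programs with identical behaviour; return signatures and shares."""
    counts = defaultdict(int)
    for prog in programs:
        counts[behaviour(prog, inputs)] += 1
    signatures = list(counts)  # insertion order: first-seen behaviour first
    shares = [counts[s] / len(programs) for s in signatures]
    return signatures, shares

def distance(sig_a, sig_b):
    """Assumed distance: fraction of inputs on which two behaviours differ."""
    return sum(a != b for a, b in zip(sig_a, sig_b)) / len(sig_a)

# Five hypothetical samples for the task "return the absolute value of x".
samples = [
    lambda x: abs(x),
    lambda x: abs(x),
    lambda x: x if x > 0 else -x,  # same behaviour as abs
    lambda x: x,                   # wrong only for negative inputs
    lambda x: 0,                   # wrong almost everywhere
]
inputs = [-2, -1, 0, 1, 2]

signatures, shares = cluster(samples, inputs)
print(shares)                                   # [0.6, 0.2, 0.2]
print(distance(signatures[0], signatures[1]))   # 0.4
print(distance(signatures[0], signatures[2]))   # 0.8
```

A binary disagreement measure would treat both minority clusters as equally different from the majority, whereas the distance separates the near miss (0.4) from the badly wrong sample (0.8).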

Load-bearing premise

The assumption that semantic distances derived from execution behaviors on the selected benchmarks capture the real-world importance of behavioral differences and extend reliably to untested fuzzing and sampling conditions.

What would settle it

A demonstration that semantic distance metrics fail to outperform disagreement-based baselines on correctness prediction when applied to a new benchmark suite or under significantly altered fuzzing parameters.

Original abstract

LLMs show strong performance in code generation, but their outputs lack correctness guarantees. Sample-based uncertainty estimators address this by generating multiple candidate programs and measuring their disagreement. However, existing estimators make different design choices about how behaviours are identified, aggregated, referenced and compared, making them difficult to assess. We therefore first introduce a taxonomy that disentangles these choices and reveals a missing design point: semantic distance-aware uncertainty estimation, which measures not only whether sampled programs disagree, but how severely their execution behaviours differ. Across LiveCodeBench, MBPP, HumanEval-X and BigCodeBench, spanning Python, Java and C++, our metrics provide strong proxies for correctness, and consistently outperform state-of-the-art sample-based baselines across both closed-source models (GPT-3.5-Turbo, GPT-4o-mini, Gemini-2.5-Flash-Lite, Claude Opus 4.5) and an open-source model (DeepSeek-Coder-V2). The method is practical: it requires neither model internals nor LLM-as-judge calls, remains robust across models, languages, sampling temperatures and fuzzing settings, and reduces runtime by approximately 48-79% relative to existing baselines.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces a taxonomy of design choices in sample-based uncertainty estimation for LLM code generation (how behaviors are identified, aggregated, referenced, and compared) and fills a gap with semantic distance-aware metrics that quantify not just disagreement but the severity of differences in execution behaviors across sampled programs. It evaluates these metrics empirically on LiveCodeBench, MBPP, HumanEval-X, and BigCodeBench (Python, Java, C++) using multiple models (GPT-3.5-Turbo, GPT-4o-mini, Gemini-2.5-Flash-Lite, Claude Opus 4.5, DeepSeek-Coder-V2), claiming they serve as strong proxies for correctness, consistently outperform state-of-the-art sample-based baselines, require no model internals or LLM-as-judge calls, remain robust across temperatures and fuzzing settings, and reduce runtime by 48-79%.

Significance. If the results hold, the work offers a practical, efficient, model-agnostic approach to uncertainty estimation for code generation that could improve reliability of LLM-based tools without added inference costs. The taxonomy clarifies the design space and the semantic-distance approach provides a new point that measures behavioral severity rather than binary disagreement, with potential to reduce false positives in correctness proxies.

major comments (2)

- [Evaluation methodology] Evaluation methodology (implicit in results sections): Semantic distance is computed from execution traces on the same benchmark fuzzers and test suites (HumanEval, MBPP, etc.) used to derive correctness labels. This risks overestimating proxy strength, as two programs may agree on the limited exercised inputs (yielding low distance) yet diverge on untested inputs where one is incorrect; the reported outperformance and 'strong proxy' claims are measured exactly against these labels. The robustness claims across fuzzing settings do not address generalization to held-out or real-world inputs.

- [Results] Results presentation: The abstract and results claim consistent outperformance and 'strong proxies' across models and benchmarks, but without reported statistical significance tests, effect sizes, or variance across runs, it is difficult to determine whether gains exceed noise or are benchmark-specific. Exact definitions of behavior aggregation, reference choice, and distance computation (e.g., how traces are compared) are needed to reproduce and verify the taxonomy's missing design point.

minor comments (2)

- [Abstract] Abstract: The runtime reduction is stated as 'approximately 48-79%'; reporting per-benchmark or per-model values and the exact baseline comparison would improve precision and allow readers to assess practical impact.

- [Taxonomy] Taxonomy section: The taxonomy disentangles choices but could benefit from a table or diagram explicitly mapping existing baselines to the four axes (identification, aggregation, reference, comparison) to make the 'missing design point' visually clear.

Simulated Author's Rebuttal

We thank the referee for their constructive feedback. We address each major comment below, indicating planned revisions where appropriate while defending the core contributions of the work.

Point-by-point responses

Referee: [Evaluation methodology] Evaluation methodology (implicit in results sections): Semantic distance is computed from execution traces on the same benchmark fuzzers and test suites (HumanEval, MBPP, etc.) used to derive correctness labels. This risks overestimating proxy strength, as two programs may agree on the limited exercised inputs (yielding low distance) yet diverge on untested inputs where one is incorrect; the reported outperformance and 'strong proxy' claims are measured exactly against these labels. The robustness claims across fuzzing settings do not address generalization to held-out or real-world inputs.

Authors: We acknowledge the potential for overestimation when semantic distance and correctness labels are derived from the same test suites, which is a common challenge in code generation benchmarks. Our use of fuzzers generates substantially more diverse inputs than the original test cases to approximate behavioral semantics, and the consistent gains across four benchmarks with differing test characteristics (LiveCodeBench, MBPP, HumanEval-X, BigCodeBench) provide evidence that the approach captures meaningful differences. Robustness across fuzzing settings (varying input counts) further suggests the metric is not brittle to exact test coverage. However, we agree this does not fully address generalization to truly held-out or real-world inputs. We will add an explicit limitations subsection discussing this gap and its implications for proxy strength.

Revision: partial
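The referee's concern in this exchange can be made concrete with a small sketch. The two functions and the input sets below are hypothetical and chosen only for illustration: both programs agree on every exercised input, so any distance computed from those inputs is zero, yet they diverge on inputs the test set never reaches.

```python
def is_leap_correct(year):
    """Gregorian rule: divisible by 4, except centuries not divisible by 400."""
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)

def is_leap_naive(year):
    """Plausible but incorrect sample: ignores the century rule."""
    return year % 4 == 0

def disagreement_rate(f, g, inputs):
    """Fraction of inputs on which two programs return different results."""
    return sum(f(x) != g(x) for x in inputs) / len(inputs)

exercised = [2004, 2008, 2011, 2024]  # hypothetical inputs the tests cover
held_out = [1900, 2100]               # hypothetical inputs they do not cover

print(disagreement_rate(is_leap_correct, is_leap_naive, exercised))  # 0.0
print(disagreement_rate(is_leap_correct, is_leap_naive, held_out))   # 1.0
```

If correctness labels come from the same exercised inputs, both programs are also labelled correct, so the estimator and the label share the same blind spot.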

Referee: [Results] Results presentation: The abstract and results claim consistent outperformance and 'strong proxies' across models and benchmarks, but without reported statistical significance tests, effect sizes, or variance across runs, it is difficult to determine whether gains exceed noise or are benchmark-specific. Exact definitions of behavior aggregation, reference choice, and distance computation (e.g., how traces are compared) are needed to reproduce and verify the taxonomy's missing design point.

Authors: We agree that statistical tests, effect sizes, and variance reporting would strengthen the presentation. In revision we will include standard deviations over multiple runs (different random seeds) and apply paired statistical tests (e.g., Wilcoxon signed-rank) with p-values and effect sizes to quantify whether improvements exceed noise. On definitions, Sections 3 and 4 already detail the taxonomy (behavior identification via execution traces, aggregation functions, reference selection, and semantic distance computation on normalized traces). We will expand these with formal equations, pseudocode for trace comparison and distance calculation, and explicit parameter settings to ensure full reproducibility.

Revision: yes
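For readers unfamiliar with the paired test mentioned in this response, the following is a minimal pure-Python sketch of an exact two-sided Wilcoxon signed-rank test for small samples. The function name `signed_rank_test` and the score lists are assumptions for illustration; the numbers are invented and are not results from the paper. A real analysis would more likely use an established statistics library.

```python
from itertools import product

def signed_rank_test(a, b):
    """Exact two-sided Wilcoxon signed-rank test; returns (W_plus, p_value).

    Enumerates all 2^n sign patterns, so it is only practical for small n.
    """
    diffs = [x - y for x, y in zip(a, b) if x != y]  # zero differences dropped
    n = len(diffs)
    if n == 0:
        return 0.0, 1.0
    # Rank absolute differences; tied values share their average rank.
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        average = (i + j) / 2 + 1  # mean of the 1-based ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = average
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    mean = sum(ranks) / 2
    observed = abs(w_plus - mean)
    # Under the null hypothesis every sign pattern is equally likely.
    extreme = 0
    for signs in product((0, 1), repeat=n):
        w = sum(r for r, s in zip(ranks, signs) if s)
        if abs(w - mean) >= observed - 1e-12:
            extreme += 1
    return w_plus, extreme / 2 ** n

# Invented per-benchmark scores for a new metric and a baseline.
new_metric = [0.81, 0.77, 0.85, 0.79, 0.83]
baseline = [0.78, 0.72, 0.84, 0.75, 0.77]
w_plus, p_value = signed_rank_test(new_metric, baseline)
print(w_plus, p_value)  # 15.0 0.0625
```

With only five pairs, even a clean sweep cannot reach p < 0.05 in a two-sided test, which is one reason reporting variance over seeds and effect sizes alongside p-values matters.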

Circularity Check

No significant circularity in empirical evaluation

Full rationale

The paper defines a taxonomy of uncertainty estimation design choices and proposes semantic-distance metrics as an additional point in that space. Its strongest claims rest on direct empirical comparisons of these metrics against sample-based baselines across fixed benchmarks (LiveCodeBench, MBPP, HumanEval-X, BigCodeBench) and multiple LLMs, using correctness labels derived from the same test suites. No equations, self-definitional reductions, fitted-parameter predictions, or load-bearing self-citations appear in the provided text; the reported outperformance numbers are measured against external labels rather than being forced by the metric definitions themselves. The evaluation is therefore self-contained against the chosen benchmarks.

Axiom & Free-Parameter Ledger

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean, theorem washburn_uniqueness_aczel (tag: unclear)

  Relation between the paper passage and the cited Recognition theorem: unclear.

  Paper passage: We define two metrics... $\mathrm{SDE} = \sum_{i,j} p_i \, p_j \, d_{ij}$ (Rao quadratic entropy); $\mathrm{DSDE} = \sum_{i \neq c^*} p_i \, d_{c^*,i}$ (top-anchored).
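The two formulas in the quoted passage can be sketched in a few lines of Python. Here `p` holds the empirical probability of each behaviour cluster and `d` is a symmetric matrix of pairwise distances. Reading c* as the most probable cluster is an assumption based on the "top-anchored" label, and all numbers are made up for illustration.

```python
def sde(p, d):
    """Rao quadratic entropy: expected distance between two random samples."""
    n = len(p)
    return sum(p[i] * p[j] * d[i][j] for i in range(n) for j in range(n))

def dsde(p, d):
    """Top-anchored variant: expected distance from the top cluster c*.

    Assumption: c* is the cluster with the highest probability.
    """
    top = max(range(len(p)), key=lambda i: p[i])
    return sum(p[i] * d[top][i] for i in range(len(p)) if i != top)

# Hypothetical cluster probabilities and pairwise semantic distances.
p = [0.5, 0.3, 0.2]
d = [
    [0.0, 0.2, 1.0],
    [0.2, 0.0, 0.6],
    [1.0, 0.6, 0.0],
]
# Binary disagreement as a special case: every distinct pair has distance 1.
binary = [[0.0 if i == j else 1.0 for j in range(3)] for i in range(3)]

print(round(sde(p, d), 3))       # 0.332
print(round(dsde(p, d), 3))      # 0.26
print(round(sde(p, binary), 3))  # 0.62
```

With the binary matrix, Rao quadratic entropy reduces to 1 minus the sum of squared probabilities (the Gini-Simpson index), so the gap between 0.62 and 0.332 reflects exactly the information a distance-aware measure adds over counting disagreements.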

What do these tags mean?

- matches: The paper's claim is directly supported by a theorem in the formal canon.
- supports: The theorem supports part of the paper's argument, but the paper may add assumptions or extra steps.
- extends: The paper goes beyond the formal theorem; the theorem is a base layer rather than the whole result.
- uses: The paper appears to rely on the theorem as machinery.
- contradicts: The paper's claim conflicts with a theorem or certificate in the canon.
- unclear: Pith found a possible connection, but the passage is too broad, indirect, or ambiguous to say the theorem truly supports the claim.

