
Local Legendre Frame Approximation from Equispaced Data

Pith reviewed 2026-05-12 02:40 UTC · model grok-4.3

The pith

The local Legendre frame method reconstructs functions stably from equispaced data by regularizing local polynomial expansions on subintervals.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The method partitions the domain, applies a common Legendre basis on mapped subintervals, and regularizes the local coefficient computation via truncated SVD of the equispaced sampling operator. This construction admits an efficient offline-online implementation and satisfies a quasi-optimal approximation bound, with practical success on smooth, oscillatory, and piecewise smooth test functions.
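The construction described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the names `tsvd_pinv`, `llf_fit`, and `llf_eval`, the relative tolerance `tol`, and the sizes `K`, `m`, `n` are all hypothetical, and the paper's exact frame construction and node layout are not reproduced on this page.

```python
import numpy as np
from numpy.polynomial import legendre as leg


def tsvd_pinv(A, tol):
    """Pseudoinverse of A keeping only singular values above tol * sigma_max."""
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    keep = s > tol * s[0]
    return (Vt[keep].T / s[keep]) @ U[:, keep].T


def llf_fit(f, a, b, K, m, n, tol=1e-12):
    """Fit n Legendre coefficients on each of K equal subintervals of [a, b]."""
    t = np.linspace(-1.0, 1.0, m)          # equispaced reference nodes
    A = leg.legvander(t, n - 1)            # shared m-by-n sampling matrix
    P = tsvd_pinv(A, tol)                  # offline step: computed once
    edges = np.linspace(a, b, K + 1)
    # Online step: map the reference nodes into each subinterval and sample f.
    x = edges[:-1, None] + 0.5 * (t + 1.0) * np.diff(edges)[:, None]
    coeffs = f(x) @ P.T                    # row k holds subinterval k's coefficients
    return edges, coeffs


def llf_eval(edges, coeffs, x):
    """Evaluate the piecewise Legendre reconstruction at the points x."""
    x = np.atleast_1d(np.asarray(x, dtype=float))
    k = np.clip(np.searchsorted(edges, x, side="right") - 1, 0, len(edges) - 2)
    t = 2.0 * (x - edges[k]) / (edges[k + 1] - edges[k]) - 1.0
    V = leg.legvander(t, coeffs.shape[1] - 1)
    return np.sum(V * coeffs[k], axis=1)


edges, coeffs = llf_fit(np.sin, 0.0, 2.0 * np.pi, K=8, m=21, n=10)
xs = np.linspace(0.0, 2.0 * np.pi, 1001)
max_err = np.max(np.abs(llf_eval(edges, coeffs, xs) - np.sin(xs)))
print(f"max error: {max_err:.2e}")
```

The truncated pseudoinverse `P` depends only on `m`, `n`, and `tol`, so it can be precomputed and reused for every subinterval and every target function, which is the offline-online split the claim refers to.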

What carries the argument

Local Legendre expansions on subintervals, regularized by truncated singular value decomposition of the shared equispaced sampling matrix.
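In symbols, the regularized local solve can be written as follows. The notation is an assumption of this note rather than the paper's: $A$ is the shared $m \times n$ sampling matrix built from Legendre polynomials $P_q$ at equispaced reference nodes $t_p$, $f_k$ is the data vector on subinterval $k$, and $\varepsilon$ is the truncation threshold.

```latex
A_{pq} = P_q(t_p), \qquad t_p = -1 + \frac{2p}{m-1}, \qquad p = 0,\dots,m-1, \quad q = 0,\dots,n-1,

A = U \Sigma V^{\top} = \sum_{i=1}^{\min(m,n)} \sigma_i \, u_i v_i^{\top}, \qquad
c_k^{\varepsilon} = \sum_{\sigma_i > \varepsilon} \frac{u_i^{\top} f_k}{\sigma_i} \, v_i .
```

Only the data vector $f_k$ changes from one subinterval to the next; the singular triplets $(\sigma_i, u_i, v_i)$ are shared.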

Load-bearing premise

The function varies smoothly enough within each subinterval that its local Legendre expansion converges rapidly before regularization is applied.

What would settle it

Numerical tests on a function with rapid oscillations confined to one subinterval, where the approximation error fails to decrease as predicted by the quasi-optimal bound despite suitable truncation.
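A harness for this kind of experiment might look like the sketch below. It only sets up the test function and measures the local errors; it does not evaluate the paper's bound, whose explicit form is not given on this page. The test function, the sizes `K`, `m`, `n`, and the tolerance are hypothetical choices, and the oscillation is concentrated in one subinterval by a narrow Gaussian envelope rather than strictly confined to it.

```python
import numpy as np
from numpy.polynomial import legendre as leg

K, m, n = 10, 21, 10                       # illustrative sizes, not the paper's
edges = np.linspace(0.0, 1.0, K + 1)
t = np.linspace(-1.0, 1.0, m)              # equispaced reference nodes
A = leg.legvander(t, n - 1)                # shared local sampling matrix


def f(x):
    """Smooth background plus a fast oscillation concentrated in [0.4, 0.5]."""
    bump = np.exp(-((x - 0.45) / 0.015) ** 2)
    return np.sin(2.0 * np.pi * x) + bump * np.sin(80.0 * np.pi * x)


def to_physical(k, ref):
    """Map reference points in [-1, 1] to subinterval k."""
    return edges[k] + 0.5 * (ref + 1.0) * (edges[k + 1] - edges[k])


t_fine = np.linspace(-1.0, 1.0, 401)
V_fine = leg.legvander(t_fine, n - 1)
errors = []
for k in range(K):
    # lstsq drops singular values below rcond * sigma_max (a TSVD-type solve).
    c, *_ = np.linalg.lstsq(A, f(to_physical(k, t)), rcond=1e-12)
    errors.append(np.max(np.abs(V_fine @ c - f(to_physical(k, t_fine)))))

worst = int(np.argmax(errors))
print(f"largest local error on subinterval {worst}: {errors[worst]:.1e}")
```

Repeating the loop for increasing `m` and `n` and comparing the error on the oscillatory subinterval with the predicted rate is the comparison the paragraph above calls for.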

Figures

read the original abstract

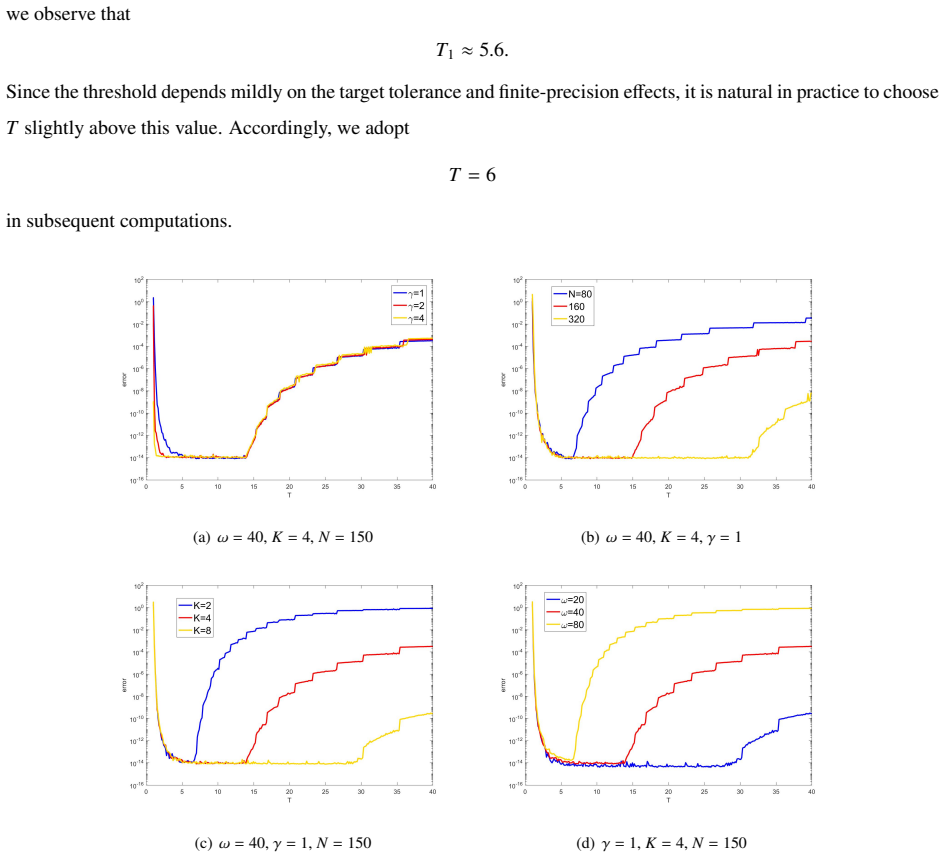

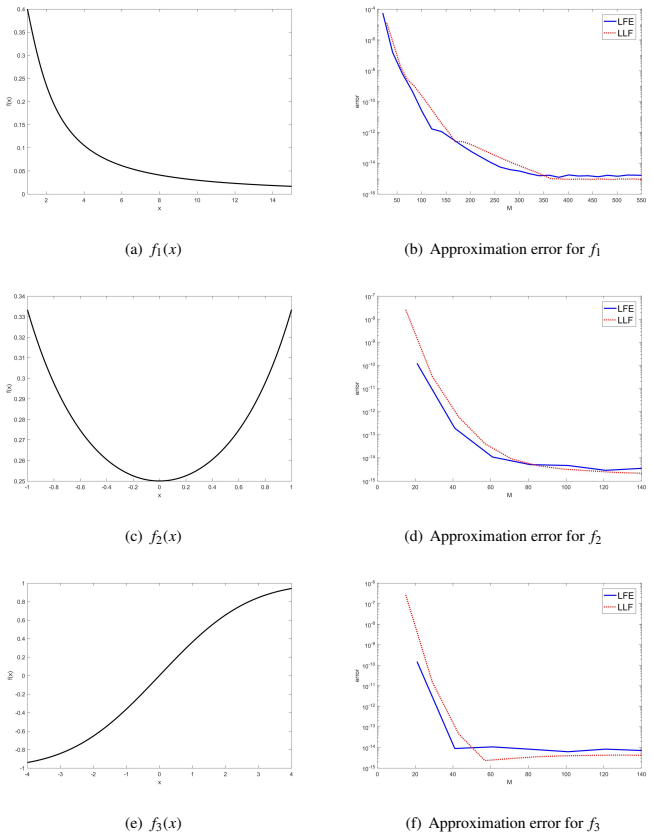

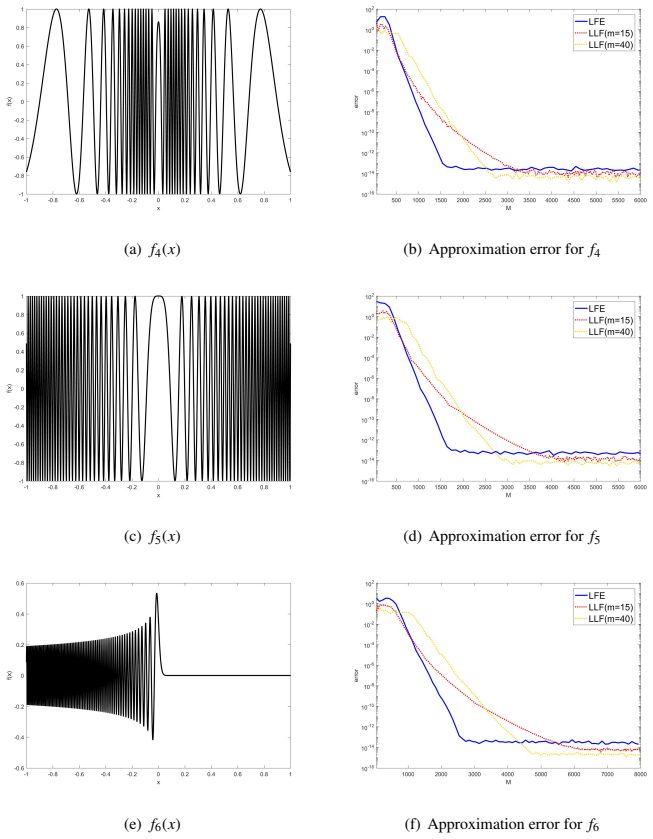

We propose a local Legendre frame (LLF) method for function approximation from equispaced data on a finite interval. Motivated by the difficulty of stable high-order polynomial approximation at equispaced points, especially in the presence of the Runge phenomenon, the method partitions the interval into subintervals, maps each subinterval to a common reference interval, and computes local coefficients by a truncated singular value decomposition (TSVD) regularization. Since all subintervals share the same local sampling matrix, the method admits a natural offline--online implementation. We establish a quasi-optimal estimate for the regularized reconstruction and discuss practical parameter selection. Numerical results show that LLF attains high accuracy for relatively smooth and moderately oscillatory functions, while it remains applicable to highly oscillatory functions, although comparable accuracy generally requires more sampling points. For continuous piecewise smooth functions with derivative singularities, the method also provides an effective detect--localize--correct strategy based on one-sided coefficient-energy indicators. These results indicate that LLF provides a stable and flexible local approximation framework for equispaced data.
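The detection step can be illustrated with a simplified stand-in. The page does not define the paper's one-sided coefficient-energy indicators, so the sketch below uses a hypothetical two-sided indicator instead: the share of each subinterval's coefficient energy carried by its highest few Legendre modes. The names `local_coeffs` and `tail_energy`, the kink location, and all sizes are assumptions made for illustration.

```python
import numpy as np
from numpy.polynomial import legendre as leg


def local_coeffs(f, a, b, K, m, n, tol=1e-12):
    """Local Legendre coefficients on K equal subintervals, one row each."""
    t = np.linspace(-1.0, 1.0, m)
    A = leg.legvander(t, n - 1)
    edges = np.linspace(a, b, K + 1)
    x = edges[:-1, None] + 0.5 * (t + 1.0) * np.diff(edges)[:, None]
    # lstsq discards singular values below tol * sigma_max (a TSVD-type solve).
    C, *_ = np.linalg.lstsq(A, f(x).T, rcond=tol)
    return edges, C.T


def tail_energy(coeffs, n_tail=3):
    """Hypothetical indicator: share of energy in the top n_tail modes."""
    energy = coeffs ** 2
    return energy[:, -n_tail:].sum(axis=1) / energy.sum(axis=1)


kink = 0.33
edges, coeffs = local_coeffs(lambda x: np.abs(x - kink), -1.0, 1.0, K=10, m=21, n=10)
indicator = tail_energy(coeffs)
flagged = int(np.argmax(indicator))
print(f"flagged subinterval: [{edges[flagged]:.1f}, {edges[flagged + 1]:.1f}]")
```

On subintervals where the target is smooth the high modes carry almost no energy, while a derivative singularity slows the coefficient decay and raises the indicator. Localizing the singularity within the flagged subinterval and correcting the fit there are separate steps not sketched here.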

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes the local Legendre frame (LLF) method for stable high-order approximation of functions from equispaced data on a finite interval. The approach partitions the domain into subintervals, affinely maps each to a reference interval, and recovers local Legendre coefficients via truncated SVD (TSVD) regularization of the shared local sampling matrix. The manuscript claims to establish a quasi-optimal error estimate for the regularized reconstruction, discusses practical selection of the truncation parameter and subinterval length, and presents numerical results showing high accuracy for smooth and moderately oscillatory functions together with a detect-localize-correct procedure for continuous piecewise-smooth targets that uses one-sided coefficient-energy indicators.

Significance. If the quasi-optimal bound holds and the adaptive partitioning analysis is completed, LLF supplies a practical, stable framework for local polynomial approximation that circumvents the Runge phenomenon while admitting an efficient offline-online implementation because all subintervals share the same sampling matrix. The numerical evidence for oscillatory and piecewise-smooth cases is a concrete strength, and the method's flexibility for equispaced data addresses a long-standing practical need.

major comments (2)

- [Analysis of the detect-localize-correct procedure] The quasi-optimal error estimate is derived under the assumption of a fixed uniform partition into equal-length subintervals (ensuring identical local sampling matrices after mapping). The detect-localize-correct strategy for piecewise-smooth functions, however, selects or refines subintervals on the basis of data-dependent one-sided coefficient-energy indicators computed from the same TSVD coefficients; no separate analysis is supplied showing that the quasi-optimality constant remains controlled when the partition itself becomes data-dependent. Mis-localization of a singularity can therefore introduce an additional consistency error not bounded by the fixed-partition theory.

- [Parameter selection section] Practical parameter selection for the TSVD truncation rank and subinterval length is discussed, yet the text does not provide an explicit, a-priori rule that guarantees the truncation balances accuracy and stability without post-hoc tuning that depends on unknown features of the target function. This leaves the robustness claim for general (unknown) targets incompletely supported.

minor comments (2)

- [Abstract and introduction] The abstract states that a quasi-optimal estimate is established, but the explicit form of the constant or the precise statement of the bound is not restated in the introduction or conclusion for quick reference.

- [Preliminaries] Notation for the local sampling matrix and the TSVD truncation index should be introduced once with a clear forward reference to the error analysis.

Simulated Author's Rebuttal

We thank the referee for the careful reading and constructive feedback on our manuscript. The comments highlight important distinctions between the fixed-partition theory and the practical adaptive strategy, as well as the challenges of parameter selection. We address each major comment below and indicate the revisions we will make.


- Referee: The quasi-optimal error estimate is derived under the assumption of a fixed uniform partition into equal-length subintervals (ensuring identical local sampling matrices after mapping). The detect-localize-correct strategy for piecewise-smooth functions, however, selects or refines subintervals on the basis of data-dependent one-sided coefficient-energy indicators computed from the same TSVD coefficients; no separate analysis is supplied showing that the quasi-optimality constant remains controlled when the partition itself becomes data-dependent. Mis-localization of a singularity can therefore introduce an additional consistency error not bounded by the fixed-partition theory.

  Authors: We agree that the quasi-optimal error estimate (Theorem 3.2) is proved under the assumption of a fixed, uniform partition that guarantees identical local sampling matrices. The detect-localize-correct procedure is presented as a practical, numerically validated heuristic for continuous piecewise-smooth targets, relying on one-sided coefficient-energy indicators to identify and correct subintervals near singularities. We do not assert that the same quasi-optimality constant applies when the partition becomes data-dependent; any mis-localization indeed introduces an additional consistency error outside the scope of the fixed-partition analysis. In the revised manuscript we will insert a clarifying paragraph in Section 4.3 explicitly stating that the error analysis for data-dependent partitions is left for future work, while retaining the numerical evidence demonstrating the procedure's effectiveness on the tested examples.

  Revision: yes

- Referee: Practical parameter selection for the TSVD truncation rank and subinterval length is discussed, yet the text does not provide an explicit, a-priori rule that guarantees the truncation balances accuracy and stability without post-hoc tuning that depends on unknown features of the target function. This leaves the robustness claim for general (unknown) targets incompletely supported.

  Authors: Section 3.3 discusses parameter choice by linking the truncation rank to the decay of singular values of the local sampling matrix and to the subinterval length that keeps the effective condition number moderate, drawing on the quasi-optimal error bound. We acknowledge, however, that the section stops short of a fully explicit a-priori formula that would select both parameters without any reference to features of the unknown target. In the revision we will augment this section with sharper, bound-derived heuristics (e.g., choosing the truncation index so that the retained singular values exceed an a-priori noise or oscillation threshold estimated from the data) and will add a short numerical robustness study across a broader class of test functions. These additions will make the practical guidance more concrete while recognizing that some dependence on the target remains unavoidable for optimal performance.

  Revision: partial
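The threshold-based heuristic mentioned in the response can be made concrete with a small sketch. It assumes a relative threshold (a multiple of the largest singular value), which is a choice of this note and not a rule taken from the paper; the function name `truncation_rank` and the sizes are likewise hypothetical.

```python
import numpy as np
from numpy.polynomial import legendre as leg


def truncation_rank(m, n, threshold):
    """Count singular values of the m-by-n local matrix above threshold * sigma_max."""
    t = np.linspace(-1.0, 1.0, m)          # equispaced reference nodes
    s = np.linalg.svd(leg.legvander(t, n - 1), compute_uv=False)
    return int(np.sum(s > threshold * s[0])), s


m = 41
for n in (10, 20, 30, 40):
    rank, s = truncation_rank(m, n, threshold=1e-10)
    print(f"n={n:2d}  cond={s[0] / s[-1]:.1e}  retained={rank}")
```

Because the singular values depend only on `m` and `n`, this part of the selection is a-priori. The remaining dependence on the target enters through how the threshold is tied to the noise level or to the oscillation of the data, which is the part the referee's comment is aimed at.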

Circularity Check

No significant circularity; derivation relies on standard TSVD analysis


The paper's central quasi-optimal error bound is derived for the TSVD-regularized local Legendre reconstruction on fixed uniform partitions, where every subinterval shares the same sampling matrix after affine mapping. The bound rests on standard singular-value truncation arguments and does not reduce, through the paper's own equations, to a fitted parameter or input quantity. The detect-localize-correct procedure for piecewise-smooth targets is presented as an empirical strategy based on one-sided coefficient-energy indicators, without any claim that the fixed-partition quasi-optimality constant automatically carries over to data-dependent partitions. No self-definitional steps, fitted-input predictions, load-bearing self-citations, or ansatz smuggling appear in the derivation chain. The method is self-contained against external benchmarks for the fixed-partition case.

Axiom & Free-Parameter Ledger

free parameters (2)

- TSVD truncation parameter

- subinterval length

axioms (2)

- [standard math] Legendre polynomials form an orthogonal basis on the reference interval

- [domain assumption] The local sampling matrix is the same for all subintervals after mapping
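Both ledger entries can be checked numerically. The sketch below is an illustration with hypothetical sizes and a hypothetical helper name (`sampling_matrix_on`): it verifies Legendre orthogonality on [-1, 1] by Gauss-Legendre quadrature, then confirms that two equal-length subintervals with the same number of equispaced nodes yield the same matrix after the affine map.

```python
import numpy as np
from numpy.polynomial import legendre as leg

# Axiom 1: the integral of P_i * P_j over [-1, 1] is 2 / (2j + 1) if i == j, else 0.
n = 8
x, w = leg.leggauss(n)                     # exact for polynomial degree <= 2n - 1
V = leg.legvander(x, n - 1)
gram = V.T @ (w[:, None] * V)
expected = np.diag(2.0 / (2.0 * np.arange(n) + 1.0))
print(np.allclose(gram, expected))


# Axiom 2: equal-length subintervals share one sampling matrix after mapping.
def sampling_matrix_on(left, right, m, n):
    """Legendre sampling matrix for m equispaced points on [left, right]."""
    pts = np.linspace(left, right, m)
    t = 2.0 * (pts - left) / (right - left) - 1.0   # affine map to [-1, 1]
    return leg.legvander(t, n - 1)


A1 = sampling_matrix_on(0.0, 0.25, m=21, n=8)
A2 = sampling_matrix_on(0.75, 1.0, m=21, n=8)
print(np.allclose(A1, A2))
```

The second check depends on every subinterval holding the same number of equispaced nodes. If the partition is refined adaptively, the node count per subinterval has to be kept fixed for the shared-matrix assumption to survive.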

