An NPDo Approach for Principal Joint SVD-type Block Diagonalization

Pith reviewed 2026-05-12 02:59 UTC · model grok-4.3

The pith

An NPDo approach with Gauss-Seidel updating globally converges to a stationary point for principal joint SVD-type block diagonalization.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The NPDo approach combined with Gauss-Seidel-type updating is globally convergent to a stationary point while the objective increases monotonically.

What carries the argument

The NPDo approach for maximizing common dominant block-diagonal parts, implemented via Gauss-Seidel-type iterative updates.
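A minimal NumPy sketch of what such a Gauss-Seidel-type iteration can look like. It assumes (this is not quoted from the paper) that each half-step replaces one factor by the orthonormal polar factor of its partial gradient: first U by the polar factor of H1(U,V) = Σ_ℓ B_ℓ V D_ℓ^H with D_ℓ = BDiag_τ(U^H B_ℓ V), then V by the polar factor of H2(U,V) = Σ_ℓ B_ℓ^H U D_ℓ recomputed with the new U. The helper names `bdiag`, `objective`, `polar_factor`, `npdo_gauss_seidel`, the block-size tuple `tau`, and the stopping rule are hypothetical; the paper's exact update, initialization, and termination may differ.

```python
import numpy as np


def bdiag(M, tau):
    """BDiag_tau(M): keep the diagonal blocks of sizes tau = (t_1, ..., t_p), zero the rest."""
    D = np.zeros_like(M)
    i = 0
    for t in tau:
        D[i:i + t, i:i + t] = M[i:i + t, i:i + t]
        i += t
    return D


def objective(U, V, Bs, tau):
    """f(U, V) = sum_l ||BDiag_tau(U^H B_l V)||_F^2."""
    return sum(np.linalg.norm(bdiag(U.conj().T @ B @ V, tau)) ** 2 for B in Bs)


def polar_factor(H):
    """Orthonormal polar factor W Z^H of H = W S Z^H (thin SVD)."""
    W, _, Zh = np.linalg.svd(H, full_matrices=False)
    return W @ Zh


def npdo_gauss_seidel(Bs, tau, U, V, maxit=200, tol=1e-12):
    """Alternate polar-factor updates of U and V; return U, V and the objective history."""
    history = [objective(U, V, Bs, tau)]
    for _ in range(maxit):
        # U half-step, V fixed: H1 = sum_l B_l V D_l^H with D_l = BDiag(U^H B_l V)
        H1 = sum(B @ V @ bdiag(U.conj().T @ B @ V, tau).conj().T for B in Bs)
        U = polar_factor(H1)
        # V half-step uses the *new* U (Gauss-Seidel): H2 = sum_l B_l^H U D_l
        H2 = sum(B.conj().T @ U @ bdiag(U.conj().T @ B @ V, tau) for B in Bs)
        V = polar_factor(H2)
        history.append(objective(U, V, Bs, tau))
        # Stop once a full sweep no longer gains (relative) objective value
        if history[-1] - history[-2] <= tol * max(1.0, history[-1]):
            break
    return U, V, history


# Usage on a random instance: three 8x6 matrices, blocks of sizes 2, 1, 1 (k = 4)
rng = np.random.default_rng(0)
m, n, tau = 8, 6, (2, 1, 1)
k = sum(tau)
Bs = [rng.standard_normal((m, n)) for _ in range(3)]
U0, _ = np.linalg.qr(rng.standard_normal((m, k)))
V0, _ = np.linalg.qr(rng.standard_normal((n, k)))
U, V, hist = npdo_gauss_seidel(Bs, tau, U0, V0)
print(f"f: {hist[0]:.4f} -> {hist[-1]:.4f} after {len(hist) - 1} sweeps")
```

Using the polar factor keeps U and V on their Stiefel manifolds at every step, so no separate retraction or re-orthonormalization is needed.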

If this is right

- The objective function never decreases from one update step to the next.

- The sequence of iterates converges to a stationary point from any initial point.

- The extracted blocks optimally capture part of the total mass of the given matrices.

- For one-by-one blocks the method yields a dominant partial joint SVD.
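For 1-by-1 blocks, BDiag keeps only the diagonal. A small NumPy check of what "dominant" means in the simplest case, assuming a single real matrix B (L = 1; sizes and seed are arbitrary choices of ours): because ||BDiag(M)||_F ≤ ||M||_F and the singular values of U^H B V cannot exceed those of B (interlacing for compressions), the objective is at most σ_1² + ... + σ_k², and taking the k leading left and right singular vectors attains that value.

```python
import numpy as np

rng = np.random.default_rng(1)
m, n, k = 7, 5, 3
B = rng.standard_normal((m, n))


def diag_objective(U, V, B):
    """1-by-1 blocks, single matrix: sum_i |u_i^H B v_i|^2."""
    return float(np.sum(np.abs(np.diag(U.conj().T @ B @ V)) ** 2))


W, s, Zh = np.linalg.svd(B, full_matrices=False)
bound = float(np.sum(s[:k] ** 2))  # sigma_1^2 + ... + sigma_k^2

# The leading singular vector pairs attain the bound ...
f_svd = diag_objective(W[:, :k], Zh[:k].conj().T, B)

# ... and no random pair with orthonormal columns exceeds it.
f_rand = []
for _ in range(1000):
    U, _ = np.linalg.qr(rng.standard_normal((m, k)))
    V, _ = np.linalg.qr(rng.standard_normal((n, k)))
    f_rand.append(diag_objective(U, V, B))
```

With several matrices B_ℓ no single SVD is available, which is why an iterative scheme is needed to find a common dominant partial joint SVD.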

Where Pith is reading between the lines

- The monotonicity property may extend to related optimization problems in matrix decomposition.

- Applications in data analysis could benefit from this guaranteed convergence behavior.

- Further work might explore acceleration techniques while preserving the convergence guarantees.

Load-bearing premise

The principal joint SVD-type block diagonalization problem is formulated such that the NPDo approach applies and Gauss-Seidel updates produce monotonic objective growth.

What would settle it

Finding an instance where the combined NPDo and Gauss-Seidel procedure produces a non-monotonic objective sequence, or whose iterates fail to approach a stationary point, would falsify the result.
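A minimal falsification harness in the spirit of this criterion. It assumes the polar-factor Gauss-Seidel update (U ← polar factor of Σ_ℓ B_ℓ V D_ℓ^H, then V ← polar factor of Σ_ℓ B_ℓ^H U D_ℓ, with D_ℓ = BDiag_τ(U^H B_ℓ V)); this update rule, the helper names, and the random complex test instances are our assumptions, not the paper's experiments. It records the largest relative drop of the objective after any half-step.

```python
import numpy as np


def bdiag(M, tau):
    """Keep the diagonal blocks of sizes tau, zero the rest."""
    D = np.zeros_like(M)
    i = 0
    for t in tau:
        D[i:i + t, i:i + t] = M[i:i + t, i:i + t]
        i += t
    return D


def f(U, V, Bs, tau):
    """Objective sum_l ||BDiag_tau(U^H B_l V)||_F^2."""
    return sum(np.linalg.norm(bdiag(U.conj().T @ B @ V, tau)) ** 2 for B in Bs)


def polar(H):
    """Orthonormal polar factor of H via its thin SVD."""
    W, _, Zh = np.linalg.svd(H, full_matrices=False)
    return W @ Zh


def worst_drop(seed, m=9, n=7, tau=(3, 2, 1), L=4, sweeps=50):
    """Largest relative decrease of f after any half-step on one random complex instance."""
    rng = np.random.default_rng(seed)
    k = sum(tau)

    def crandn(*shape):
        return rng.standard_normal(shape) + 1j * rng.standard_normal(shape)

    Bs = [crandn(m, n) for _ in range(L)]
    U, _ = np.linalg.qr(crandn(m, k))
    V, _ = np.linalg.qr(crandn(n, k))
    vals = [f(U, V, Bs, tau)]
    for _ in range(sweeps):
        U = polar(sum(B @ V @ bdiag(U.conj().T @ B @ V, tau).conj().T for B in Bs))
        vals.append(f(U, V, Bs, tau))  # after the U half-step
        V = polar(sum(B.conj().T @ U @ bdiag(U.conj().T @ B @ V, tau) for B in Bs))
        vals.append(f(U, V, Bs, tau))  # after the V half-step
    return max(0.0, max((a - b) / max(1.0, a) for a, b in zip(vals, vals[1:])))


drops = [worst_drop(seed) for seed in range(20)]
print(f"largest relative decrease over {len(drops)} instances: {max(drops):.1e}")
```

Under this assumed update, each half-step maximizes a linear lower bound of a function that is convex in the updated factor, so the recorded drop should stay at rounding level; a clearly positive value would be the kind of counterexample described above.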

Figures

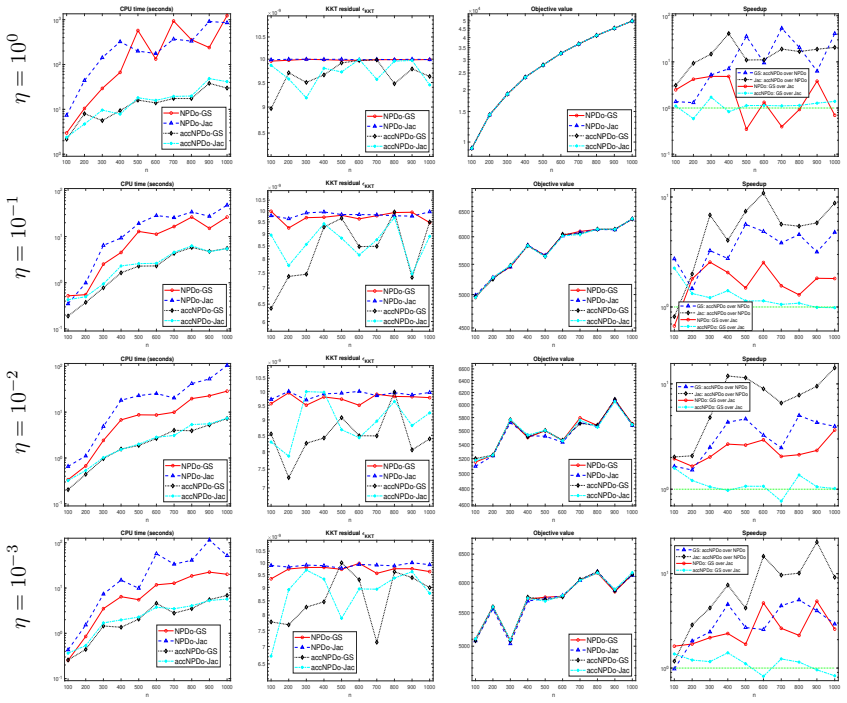

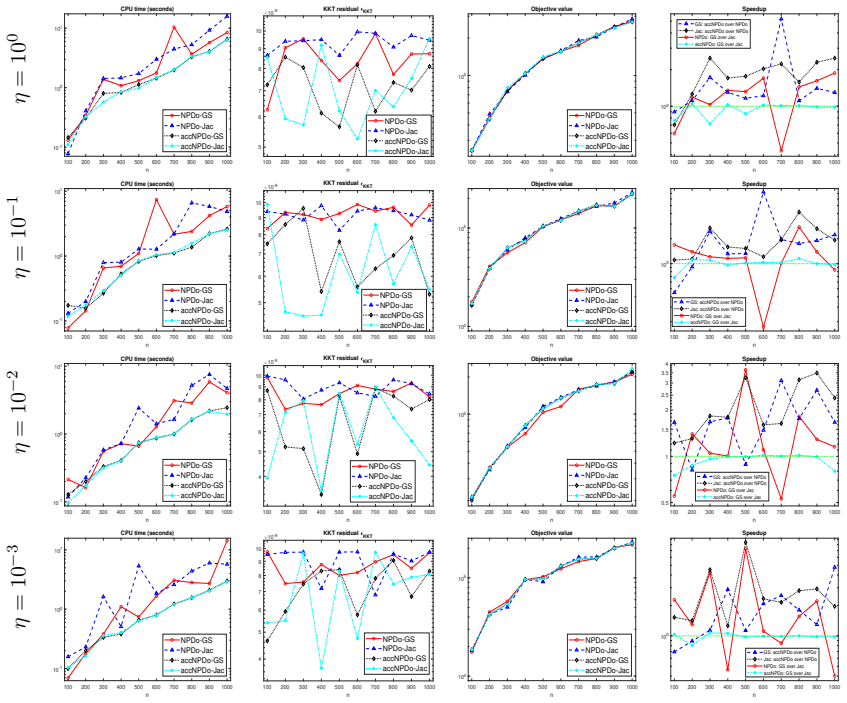

Abstract

This paper is concerned with partial joint SVD-type block diagonalization of several matrices, so that the extracted diagonal blocks collectively account for an optimal portion of the total mass of all the given matrices. For that reason, the problem is also referred to as Principal Joint SVD-type Block Diagonalization. When every block is 1-by-1, it reduces to finding a dominant partial joint SVD of the matrices of interest. An NPDo approach is proposed for collectively maximizing the common dominant block-diagonal parts. It is shown that the NPDo approach combined with Gauss-Seidel-type updating is globally convergent to a stationary point while the objective increases monotonically. Numerical experiments are presented to illustrate the efficiency of the NPDo approach.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. This paper formulates the principal joint SVD-type block diagonalization problem as a constrained optimization task over Stiefel manifolds (matrices U and V with orthonormal columns) to maximize the collective dominant block-diagonal mass across several given matrices. It proposes an NPDo approach combined with Gauss-Seidel-type block coordinate updates. The central theoretical contribution is a proof that the iterates are globally convergent to a stationary point with a monotonically non-decreasing objective sequence, relying on compactness of the feasible set, continuity of the objective, and exact solution of each subproblem. Numerical experiments illustrate the method's efficiency.

Significance. If the convergence argument holds, the work supplies a reliable, monotonic iterative solver for a specialized joint matrix decomposition task with potential uses in multivariate analysis and signal processing. Credit is due for grounding the global convergence claim in standard compactness and exact-subproblem arguments rather than heuristic stopping criteria.

minor comments (3)

- [§2] The precise mathematical statement of the objective (the sum, over all given matrices, of the squared Frobenius norms of the extracted diagonal blocks) and the role of the block-size parameters could be stated more explicitly at the outset, to clarify the transition from the 1-by-1 case to general blocks.

- [§4] Convergence proof: While the reliance on compactness and monotonicity is standard, explicitly invoking a theorem guaranteeing that limit points are stationary (e.g., a specific result on block-coordinate methods) would strengthen the argument.

- [Numerical experiments] The experiments would benefit from a direct comparison against at least one existing joint diagonalization algorithm (e.g., via relative objective values or iteration counts) to quantify the practical advantage of the NPDo scheme.
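To make the §2 comment concrete, here is a small NumPy illustration (our own, with a hypothetical helper `bdiag` and block-size tuple `tau`) of how the block sizes enter the objective through BDiag_τ. With all blocks 1-by-1 it reduces to the diagonal, and merging adjacent blocks can only increase the captured mass ||BDiag_τ(M)||_F², up to the whole ||M||_F² for a single block.

```python
import numpy as np


def bdiag(M, tau):
    """BDiag_tau(M): keep the diagonal blocks of sizes tau = (t_1, ..., t_p), zero the rest."""
    if M.shape != (sum(tau), sum(tau)):
        raise ValueError("tau must partition the order of the square matrix M")
    D = np.zeros_like(M)
    i = 0
    for t in tau:
        D[i:i + t, i:i + t] = M[i:i + t, i:i + t]
        i += t
    return D


M = np.arange(16.0).reshape(4, 4)  # any 4x4 matrix, i.e. k = 4
mass = {tau: float(np.sum(np.abs(bdiag(M, tau)) ** 2)) for tau in [(4,), (2, 2), (1, 1, 1, 1)]}
for tau, val in mass.items():
    print(tau, val)
# (4,) 1240.0
# (2, 2) 684.0
# (1, 1, 1, 1) 350.0
```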

Simulated Author's Rebuttal

We thank the referee for the positive and accurate summary of our manuscript on the NPDo approach for principal joint SVD-type block diagonalization, and for recognizing the significance of the global convergence result. We appreciate the recommendation for minor revision. Since the report raises only minor comments and no major objections, there are no substantive points for us to contest.

Circularity Check

No significant circularity in the derivation chain

The paper formulates principal joint SVD-type block diagonalization as a constrained optimization problem on the Stiefel manifold and applies an NPDo scheme with Gauss-Seidel block updates. The claimed global convergence to a stationary point with monotonic objective increase follows from standard arguments: compactness of the feasible set, continuity of the objective, and exact optimality of each subproblem. No step reduces by construction to a self-definition, a fitted parameter renamed as a prediction, or a load-bearing self-citation chain; the conclusion is not equivalent to its premises by definition.
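One standard argument for the monotonicity part, written out under the assumption that each half-step replaces U by the orthonormal polar factor of H_1(U,V), taken here as half the partial gradient in the real inner product Re tr(X^H Y) (the paper's precise update and proof may differ). With V fixed:

```latex
\begin{aligned}
g(U) &:= \sum_{\ell} \bigl\|\mathrm{BDiag}_{\tau_k}(U^{\mathrm H} B_\ell V)\bigr\|_F^2
  \quad (V \text{ fixed}),\qquad
  D_\ell := \mathrm{BDiag}_{\tau_k}(U^{\mathrm H} B_\ell V),\\
\nabla g(U) &= 2H_1(U,V),\qquad H_1(U,V) := \sum_{\ell} B_\ell V D_\ell^{\mathrm H},\\
g(\tilde U) &\ge g(U) + 2\,\mathrm{Re}\,\mathrm{tr}\bigl(H_1^{\mathrm H}(\tilde U - U)\bigr)
  \qquad (g \text{ is a convex quadratic in } U),\\
\mathrm{Re}\,\mathrm{tr}(H_1^{\mathrm H}\tilde U) &= \|H_1\|_{\mathrm{tr}}
  \ge \mathrm{Re}\,\mathrm{tr}(H_1^{\mathrm H}U)
  \qquad (\tilde U := \text{orthonormal polar factor of } H_1),\\
\Longrightarrow\quad g(\tilde U) &\ge g(U).
\end{aligned}
```

The same argument with H_2(U,V) = Σ_ℓ B_ℓ^H U D_ℓ covers the V half-step, giving f(U_{j+1}, V_{j+1}) ≥ f(U_{j+1}, V_j) ≥ f(U_j, V_j).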

Lean theorems connected to this paper

- IndisputableMonolith/Foundation/RealityFromDistinction.lean · reality_from_one_distinction · (unclear) · "An NPDo approach is proposed for maximizing the common dominant block-diagonal parts collectively. It is shown that the NPDo approach combined with Gauss-Seidel-type updating is globally convergent to a stationary point while the objective increases monotonically."

- IndisputableMonolith/Cost/FunctionalEquation.lean · washburn_uniqueness_aczel · (unclear) · $\max_{U,V} \sum_\ell \|\mathrm{BDiag}_{\tau_k}(U^{\mathrm H} B_\ell V)\|_F^2$ with KKT conditions $H_1(U,V) = U\Lambda_1$, $H_2(U,V) = V\Lambda_2$
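A small NumPy check of these KKT conditions. It assumes H_1 = Σ_ℓ B_ℓ V D_ℓ^H and H_2 = Σ_ℓ B_ℓ^H U D_ℓ with D_ℓ = BDiag_τ(U^H B_ℓ V), our reading of the partial gradients of the objective (the paper may scale them differently, which does not change the conditions). At a KKT point, Λ_1 = U^H H_1 and Λ_2 = V^H H_2 are Hermitian and H_1 = UΛ_1, H_2 = VΛ_2. For a single matrix with 1-by-1 blocks, the leading singular vectors give a known stationary point, which makes a deterministic test case; a generic orthonormal pair does not satisfy the conditions. The helper `kkt_residual` is hypothetical.

```python
import numpy as np


def bdiag(M, tau):
    """Keep the diagonal blocks of sizes tau, zero the rest."""
    D = np.zeros_like(M)
    i = 0
    for t in tau:
        D[i:i + t, i:i + t] = M[i:i + t, i:i + t]
        i += t
    return D


def kkt_residual(U, V, Bs, tau):
    """Relative violation of H1 = U Lambda1, H2 = V Lambda2 with Hermitian Lambda1, Lambda2."""
    Ds = [bdiag(U.conj().T @ B @ V, tau) for B in Bs]
    H1 = sum(B @ V @ D.conj().T for B, D in zip(Bs, Ds))
    H2 = sum(B.conj().T @ U @ D for B, D in zip(Bs, Ds))
    res = 0.0
    for X, H in ((U, H1), (V, H2)):
        Lam = X.conj().T @ H  # multiplier estimate Lambda = X^H H
        res = max(res,
                  np.linalg.norm(H - X @ Lam) / max(1.0, np.linalg.norm(H)),
                  np.linalg.norm(Lam - Lam.conj().T) / max(1.0, np.linalg.norm(Lam)))
    return res


rng = np.random.default_rng(2)
m, n, k = 6, 5, 3
B = rng.standard_normal((m, n))
W, s, Zh = np.linalg.svd(B, full_matrices=False)
tau = (1,) * k
r_svd = kkt_residual(W[:, :k], Zh[:k].conj().T, [B], tau)  # leading singular vectors
U0, _ = np.linalg.qr(rng.standard_normal((m, k)))
V0, _ = np.linalg.qr(rng.standard_normal((n, k)))
r_rand = kkt_residual(U0, V0, [B], tau)  # generic orthonormal pair
print(f"KKT residual: SVD pair {r_svd:.1e}, random pair {r_rand:.1e}")
```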
