Recognition: no theorem link

OpenIIR: An Open Simulation Platform for Information Retrieval Research

Pith reviewed 2026-05-12 02:28 UTC · model grok-4.3

The pith

OpenIIR supplies a shared simulation core and pluggable scenario types so researchers can run and compare reproducible multi-agent IR experiments driven by LLM personas.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

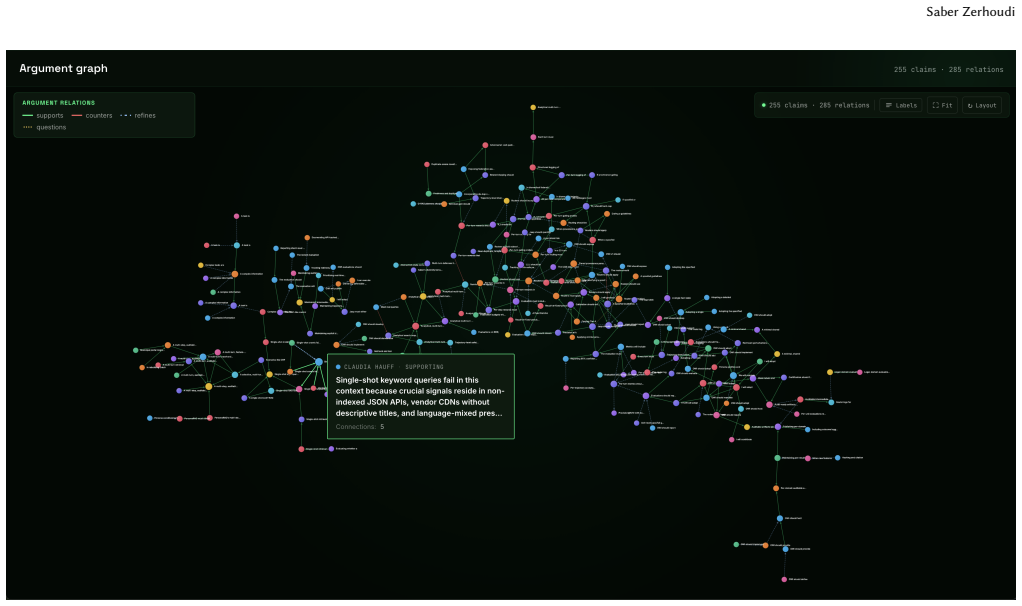

OpenIIR runs hundreds of LLM-driven personas as parameterised, reproducible IR research experiments. Researchers configure agents across four kinds of multi-agent study (deliberative panels, social platforms, curated recommender feeds, and evolutionary co-evolution between content producers and credibility detectors) under many priors, rounds, and constraints. Persona budgets, retrieval policies, ranker choices, intervention timings, and mutation rates are declared up front, and the same study can be re-run under different settings to compare outcomes side by side. Every run produces structured outputs (argument graphs, exposure logs, fitness traces, transcripts) that a downstream evaluator

What carries the argument

The shared core of agent runtime, world-model store, retrieval primitives, claim extractor and persona ontology together with the type interface that lets new scenario types be plugged in as short modules.

If this is right

- The same study configuration can be re-executed under changed retrieval policies or rankers to produce directly comparable structured outputs.

- New scenario types are implemented as 200-400 line plug-ins that reuse the shared core without rewriting agent runtime or data stores.

- Reference runs already exist for Panel, Social-Media, Curated-Feed and Multi-Generational types, providing immediate starting points for further experiments.

- Six modular extensions are sketched that map directly onto open IR research questions such as intervention timing and credibility detection.

- All outputs are structured so that external evaluators can consume argument graphs, fitness traces and transcripts without additional parsing.

Where Pith is reading between the lines

- The platform could let researchers test the effect of different retrieval policies on information flow at a scale that would be impractical with live users.

- Because parameters are declared upfront, the same experiment can serve as a controlled testbed for comparing multiple rankers or intervention strategies.

- If the generated transcripts and fitness traces prove stable across repeated runs, they could become a lightweight benchmark for multi-agent IR behaviors.

- The modular design invites extensions that mix LLM personas with recorded human traces to check how well simulations match observed behavior.

Load-bearing premise

That the outputs generated by LLM-driven personas under the chosen priors and constraints will advance real-world IR research questions instead of mainly echoing training data or prompt choices.

What would settle it

A reference run of one of the four released types whose generated argument graphs or exposure logs show no measurable difference from those produced by a simple random baseline or from data collected in an equivalent human-subject IR study.

Figures

read the original abstract

OpenIIR runs hundreds of LLM-driven personas as parameterised, reproducible IR research experiments. Researchers configure agents across four kinds of multi-agent study (deliberative panels, social platforms, curated recommender feeds, and evolutionary co-evolution between content producers and credibility detectors) under many priors, rounds, and constraints. Persona budgets, retrieval policies, ranker choices, intervention timings, and mutation rates are declared up front, and the same study can be re-run under different settings to compare outcomes side by side. Every run produces structured outputs (argument graphs, exposure logs, fitness traces, transcripts) that a downstream evaluator can consume directly, and a new study is a 200--400 line plug-in over a shared core (agent runtime, world-model store, retrieval primitives, claim extractor, persona ontology). The contributions are: (i) the shared core; (ii) a type interface for pluggable scenarios; (iii) four released types with reference runs (Panel, Social-Media, Curated-Feed, Multi-Generational); and (iv) six modular extensions sketched against open IR research questions.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces OpenIIR, an open simulation platform for IR research that runs hundreds of LLM-driven personas in parameterized, reproducible multi-agent experiments. Researchers configure agents across four study types (deliberative panels, social platforms, curated recommender feeds, and evolutionary co-evolution) with declared priors, rounds, constraints, and policies. The platform provides a shared core (agent runtime, world-model store, retrieval primitives, claim extractor, persona ontology), a type interface for pluggable scenarios, four released types with reference runs, and sketches for six modular extensions targeting open IR questions. Every run yields structured outputs (argument graphs, exposure logs, fitness traces, transcripts) consumable by downstream evaluators, with new studies implemented as 200-400 line plug-ins.

Significance. If the modularity, reproducibility, and extensibility claims hold, OpenIIR could meaningfully advance IR research by offering a standardized, open framework for systematic multi-agent simulations involving LLMs. The release of a shared core, reference runs, and structured outputs would support community-driven comparisons of retrieval policies, rankers, and interventions, particularly in emerging areas like credibility detection and evolutionary content dynamics. The low barrier to new study types (200-400 lines) and emphasis on upfront parameter declaration are concrete strengths that align with reproducibility needs in simulation-based IR work.

major comments (2)

- [Abstract] Abstract: The manuscript claims that the platform enables side-by-side comparison of outcomes under different settings and that LLM-driven personas will surface stable phenomena advancing real-world IR questions, yet no empirical validation, error analysis, baseline comparisons, or sample results from the reference runs are provided. This is load-bearing for the central utility claim, as the platform's value rests on whether outputs are non-artifactual rather than reflections of LLM priors or prompt choices.

- [Contributions] Contributions list: The four released types (Panel, Social-Media, Curated-Feed, Multi-Generational) are presented with reference runs, but the description contains no quantitative assessment of run stability, sensitivity to hyperparameters, or comparison against existing IR simulation tools. Without such evidence, the assertion that these types meaningfully address open IR research questions cannot be evaluated.

minor comments (2)

- The 200-400 line estimate for new studies is useful, but the manuscript should specify the implementation language, key dependencies, and installation instructions to support immediate adoption and reproducibility.

- A high-level architecture diagram or table summarizing the shared core components and type interface would improve clarity for readers evaluating the modularity claims.

Simulated Author's Rebuttal

We thank the referee for their constructive feedback and for recognizing the potential of OpenIIR to support standardized multi-agent IR simulations. We address the major comments below, clarifying the manuscript's scope as a platform description while committing to targeted revisions that strengthen the presentation of reference outputs without overstating empirical claims.

read point-by-point responses

-

Referee: [Abstract] Abstract: The manuscript claims that the platform enables side-by-side comparison of outcomes under different settings and that LLM-driven personas will surface stable phenomena advancing real-world IR questions, yet no empirical validation, error analysis, baseline comparisons, or sample results from the reference runs are provided. This is load-bearing for the central utility claim, as the platform's value rests on whether outputs are non-artifactual rather than reflections of LLM priors or prompt choices.

Authors: We agree that the absence of sample results and basic validation leaves the utility claims under-supported. The manuscript's core contribution is the platform architecture (shared core, type interface, and four reference implementations) that makes side-by-side comparisons possible through upfront parameter declaration and reproducible runs; it does not claim to have already demonstrated stable real-world phenomena. The reference runs are released in the repository precisely so that such analyses can be performed. In revision we will add a new subsection presenting illustrative outputs (argument graphs, exposure logs, fitness traces) from the four reference runs, together with simple descriptive statistics (e.g., run-to-run variance under fixed seeds) and a brief discussion of prompt-sensitivity checks that users can replicate. This addition will illustrate the structured data format without asserting that the current runs prove non-artifactual behavior. revision: partial

-

Referee: [Contributions] Contributions list: The four released types (Panel, Social-Media, Curated-Feed, Multi-Generational) are presented with reference runs, but the description contains no quantitative assessment of run stability, sensitivity to hyperparameters, or comparison against existing IR simulation tools. Without such evidence, the assertion that these types meaningfully address open IR research questions cannot be evaluated.

Authors: The manuscript positions the four types as reference implementations that demonstrate the pluggable scenario interface, not as fully evaluated IR studies. Quantitative stability or hyperparameter sensitivity analyses are research questions the platform is designed to support rather than questions answered within this platform paper. We will revise the contributions and evaluation sections to include (i) basic stability metrics across repeated reference runs and (ii) a concise comparison table situating OpenIIR against prior single-agent or non-LLM simulation frameworks in IR. These additions will be limited to descriptive statistics and architectural contrasts, preserving the paper's focus on the open platform itself. revision: yes

Circularity Check

No significant circularity

full rationale

The paper describes an extensible simulation platform (shared core, type interface for pluggable scenarios, four scenario types with reference runs, and modular extensions) rather than any derivation chain, first-principles result, or set of predictions. No equations, fitted parameters, or self-referential reductions appear in the provided text; the work is a configurable tool whose outputs are structured logs and graphs for downstream use. Self-citations, if present, are not load-bearing for any claimed result because no mathematical claim is being justified. The platform is offered as an open implementation, not as a validated empirical finding that reduces to its own inputs.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption LLM agents under explicit priors and constraints produce outputs that are useful proxies for human IR behavior

invented entities (1)

-

Deliberative panels, social platforms, curated recommender feeds, and evolutionary co-evolution study types

no independent evidence

Reference graph

Works this paper leans on

-

[1]

Leif Azzopardi, Timo Breuer, Björn Engelmann, Christin Kreutz, Sean MacA- vaney, David Maxwell, Andrew Parry, Adam Roegiest, Xi Wang, and Saber Zerhoudi. 2024. SimIIR 3: A framework for the simulation of interactive and con- versational information retrieval. InProceedings of the 2024 Annual International ACM SIGIR Conference on Research and Development i...

work page 2024

-

[2]

Leif Azzopardi, Charles LA Clarke, Claudia Hauff, Yubin Kim, Zhaochun Ren, Adam Roegiest, Johanne Trippas, and Saber Zerhoudi. 2026. The Third Search Futures Workshop at ECIR’26. InEuropean Conference on Information Retrieval. Springer, 177–183

work page 2026

-

[3]

Leif Azzopardi, Charles LA Clarke, Paul Kantor, Bhaskar Mitra, Johanne R Trip- pas, Zhaochun Ren, Mohammad Aliannejadi, Negar Arabzadeh, Raman Chan- drasekar, Maarten De Rijke, et al. 2024. Report on the search futures workshop at ECIR 2024. InACM SIGIR Forum, Vol. 58. ACM New York, NY, USA, 1–41

work page 2024

-

[4]

Eytan Bakshy, Solomon Messing, and Lada A Adamic. 2015. Exposure to ide- ologically diverse news and opinion on Facebook.Science348, 6239 (2015), 1130–1132

work page 2015

-

[5]

Krisztian Balog, Nolwenn Bernard, Saber Zerhoudi, and ChengXiang Zhai. 2025. Theory and Toolkits for User Simulation in the Era of Generative AI: User Modeling, Synthetic Data Generation, and System Evaluation. InProceedings of the 48th International ACM SIGIR Conference on Research and Development in Information Retrieval. 4138–4141. Saber Zerhoudi

work page 2025

-

[6]

Charles LA Clarke, Paul Kantor, Adam Roegiest, Johanne R Trippas, Zhaochun Ren, Maria Sofia Bucarelli, Xiao Fu, Yixing Fan, Michael Granitzer, David Graus, et al. 2025. Report on the 2nd Search Futures Workshop at ECIR 2025. InACM SIGIR Forum, Vol. 59. ACM New York, NY, USA, 1–28

work page 2025

-

[7]

Charles LA Clarke, Maria Maistro, Mark D Smucker, and Guido Zuccon. 2020. Overview of the TREC 2020 Health Misinformation Track.. InTREC

work page 2020

-

[8]

Guglielmo Faggioli, Laura Dietz, Charles LA Clarke, Gianluca Demartini, Matthias Hagen, Claudia Hauff, Noriko Kando, Evangelos Kanoulas, Martin Potthast, Benno Stein, et al. 2023. Perspectives on large language models for relevance judgment. InProceedings of the 2023 ACM SIGIR international conference on theory of information retrieval. 39–50

work page 2023

-

[9]

Michele Garetto, Alessandro Cornacchia, Franco Galante, Emilio Leonardi, Alessandro Nordio, and Alberto Tarable. 2025. Information Retrieval in the Age of Generative AI: The RGB Model. InProceedings of the 48th International ACM SIGIR Conference on Research and Development in Information Retrieval. 602–612

work page 2025

- [10]

-

[11]

Joon Sung Park, Joseph O’Brien, Carrie Jun Cai, Meredith Ringel Morris, Percy Liang, and Michael S Bernstein. 2023. Generative agents: Interactive simulacra of human behavior. InProceedings of the 36th annual acm symposium on user interface software and technology. 1–22

work page 2023

-

[12]

Andrew Parry, Maik Fröbe, Harrisen Scells, Ferdinand Schlatt, Guglielmo Fag- gioli, Saber Zerhoudi, Sean MacAvaney, and Eugene Yang. 2025. Variations in relevance judgments and the shelf life of test collections. InProceedings of the 48th International ACM SIGIR Conference on Research and Development in Information Retrieval. 3387–3397

work page 2025

-

[13]

Philipp Schaer, Christin Katharina Kreutz, Krisztian Balog, Timo Breuer, Andreas Kruff, Mohammad Aliannejadi, Christine Bauer, Nolwenn Bernard, Nicola Ferro, Marcel Gohsen, et al. 2025. Report on the Second Workshop on Simulations for Information Access (Sim4IA 2025) at SIGIR 2025. 59, 2 (2025), 1–15

work page 2025

-

[14]

Ilia Shumailov, Zakhar Shumaylov, Yiren Zhao, Nicolas Papernot, Ross Anderson, and Yarin Gal. 2024. AI models collapse when trained on recursively generated data.Nature631, 8022 (2024), 755–759

work page 2024

-

[15]

Alexander Sasha Vezhnevets, John P Agapiou, Avia Aharon, Ron Ziv, Jayd Matyas, Edgar A Duéñez-Guzmán, William A Cunningham, Simon Osindero, Danny Karmon, and Joel Z Leibo. 2023. Generative agent-based modeling with actions grounded in physical, social, or digital space using Concordia.arXiv preprint arXiv:2312.03664(2023)

-

[16]

Soroush Vosoughi, Deb Roy, and Sinan Aral. 2018. The spread of true and false news online.science359, 6380 (2018), 1146–1151

work page 2018

-

[17]

An Yang, Anfeng Li, Baosong Yang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Yu, Chang Gao, Chengen Huang, Chenxu Lv, et al. 2025. Qwen3 technical report.arXiv preprint arXiv:2505.09388(2025)

work page internal anchor Pith review Pith/arXiv arXiv 2025

- [18]

-

[19]

Saber Zerhoudi and Michael Granitzer. 2024. Cognitive-Aware User Search Behavior Simulation. InProceedings of the 24th ACM/IEEE Joint Conference on Digital Libraries. 1–12

work page 2024

-

[20]

Saber Zerhoudi and Michael Granitzer. 2024. Generative Agents Navigating Digital Libraries. InInternational Conference on Asian Digital Libraries. Springer, 171–188

work page 2024

- [21]

- [22]

-

[23]

Saber Zerhoudi, Sebastian Günther, Kim Plassmeier, Timo Borst, Christin Seifert, Matthias Hagen, and Michael Granitzer. 2022. The simiir 2.0 framework: User types, markov model-based interaction simulation, and advanced query genera- tion. InProceedings of the 31st ACM International Conference on Information & Knowledge Management. 4661–4666

work page 2022

-

[24]

Saber Zerhoudi, Adam Roegiest, and Johanne R Trippas. 2026. Simulation of Interactive Information Retrieval: A Guided Tour. InProceedings of the 2026 Conference on Human Information Interaction and Retrieval. 434–436

work page 2026

-

[25]

Erhan Zhang, Xingzhu Wang, Peiyuan Gong, Yankai Lin, and Jiaxin Mao. 2024. Usimagent: Large language models for simulating search users. InProceedings of the 47th International ACM SIGIR Conference on Research and Development in Information Retrieval. 2687–2692

work page 2024

-

[26]

Xuhui Zhou, Hao Zhu, Leena Mathur, Ruohong Zhang, Haofei Yu, Zhengyang Qi, Louis-Philippe Morency, Yonatan Bisk, Daniel Fried, Graham Neubig, et al

-

[27]

InInternational Conference on Learning Representations, Vol

Sotopia: Interactive evaluation for social intelligence in language agents. InInternational Conference on Learning Representations, Vol. 2024. 40975–41019

work page 2024

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.