FrameTwin: Curve-Anchored Gaussian Alignment from Sparse Views for Adaptive Wireframe 3D Printing

Pith reviewed 2026-05-12 01:51 UTC · model grok-4.3

The pith

FrameTwin aligns partially printed wireframes to a curve-anchored Gaussian digital twin using sparse-view images.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

FrameTwin estimates a neural deformation field that aligns the partially printed target model with the deformed structure observed during fabrication; the optimized curve-Gaussian representation then serves as a digital twin of the evolving wireframe. Unlike general Gaussian-splatting approaches, the method constrains kernel placement along parametric curves, which substantially reduces the ambiguity in sparse-view observations of thin structures, and the deformation-field alignment enforces global consistency across all struts.

What carries the argument

Gaussian kernels anchored to parametric curves, which provide a geometry-aware encoding for estimating the neural deformation field via differentiable rendering.
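The anchoring idea can be illustrated with a minimal sketch. It assumes each strut is a cubic Bezier curve and that kernels sit at evenly spaced curve parameters with their long axis along the tangent; the paper's actual curve type, spacing, and kernel parameters are not given here, so `bezier_point`, `bezier_tangent`, `anchor_gaussians`, and the `sigma_*` values are all hypothetical names and choices.

```python
import math

def bezier_point(ctrl, t):
    """Evaluate a cubic Bezier curve (4 control points in 3D) at parameter t."""
    weights = [(1 - t) ** 3, 3 * (1 - t) ** 2 * t, 3 * (1 - t) * t ** 2, t ** 3]
    return tuple(sum(w * p[i] for w, p in zip(weights, ctrl)) for i in range(3))

def bezier_tangent(ctrl, t):
    """Unit tangent of the same curve at t (assumes a non-degenerate curve)."""
    weights = [3 * (1 - t) ** 2, 6 * (1 - t) * t, 3 * t ** 2]
    diffs = [tuple(ctrl[k + 1][i] - ctrl[k][i] for i in range(3)) for k in range(3)]
    v = [sum(w * d[i] for w, d in zip(weights, diffs)) for i in range(3)]
    norm = math.sqrt(sum(c * c for c in v))
    return tuple(c / norm for c in v)

def anchor_gaussians(ctrl, count, sigma_along, sigma_across):
    """Place `count` kernels on the curve; only the curve parameter t locates them."""
    kernels = []
    for k in range(count):
        t = (k + 0.5) / count  # assumption: uniform spacing in parameter space
        kernels.append({
            "t": t,
            "mean": bezier_point(ctrl, t),    # kernel centre lies on the curve
            "axis": bezier_tangent(ctrl, t),  # long axis follows the strut
            "sigma_along": sigma_along,
            "sigma_across": sigma_across,
        })
    return kernels

# A straight strut along x, written as a cubic Bezier curve.
strut = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0), (3.0, 0.0, 0.0)]
kernels = anchor_gaussians(strut, count=3, sigma_along=0.5, sigma_across=0.01)
```

Because every kernel is a function of the control points, moving a control point moves all kernels on that strut together, which is the sense in which the encoding is geometry-aware.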

If this is right

- The estimated deformation field can blend the distorted printed geometry with the remaining unprinted geometry to update future printing trajectories.

- The shared deformation field enforces global consistency across all struts of the wireframe.

- Deformation in robotized wireframe 3D printing systems can be robustly captured and compensated.

- Ambiguity for thin structures is substantially reduced compared with unconstrained Gaussian splatting.
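The first consequence above, blending printed and unprinted geometry, can be sketched as follows. The paper's blending rule is not described in this review, so the exponential falloff with distance from the printed region, the function name `blend_unprinted`, and the `falloff` length scale are assumptions made purely for illustration.

```python
import math

def blend_unprinted(nodes, printed, displacement, falloff):
    """Shift target nodes by the estimated displacement.

    nodes:        dict of node id -> (x, y, z) in the target model
    printed:      set of node ids that are already fabricated
    displacement: callable p -> (dx, dy, dz), standing in for the deformation field
    falloff:      assumed length scale over which the correction fades out
    """
    printed_pts = [nodes[i] for i in printed]
    updated = {}
    for nid, p in nodes.items():
        d = displacement(p)
        if nid in printed:
            weight = 1.0  # printed nodes follow the observed deformation
        else:
            dist = min(math.dist(p, q) for q in printed_pts)
            weight = math.exp(-dist / falloff)  # assumption: exponential decay
        updated[nid] = tuple(c + weight * dc for c, dc in zip(p, d))
    return updated

# Three nodes stacked along z; the lower two are printed and sag by 0.1 in x.
nodes = {0: (0.0, 0.0, 0.0), 1: (0.0, 0.0, 1.0), 2: (0.0, 0.0, 2.0)}
updated = blend_unprinted(nodes, printed={0, 1},
                          displacement=lambda p: (0.1, 0.0, 0.0), falloff=1.0)
```

In this toy case the unprinted node at z = 2 is one unit from the nearest printed node, so it receives the displacement scaled by exp(-1).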

Where Pith is reading between the lines

- The approach could allow deformation monitoring of other slender manufactured objects with a minimal number of cameras.

- It could integrate with parametric CAD models for automatic correction during fabrication.

- The compact curve representation may lower computational demands enough to support real-time adaptive printing.

Load-bearing premise

Anchoring Gaussians strictly to parametric curves sufficiently removes ambiguity in sparse-view observations of thin structures while keeping the deformation field globally consistent without needing extra regularization or more views.
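The kind of ambiguity this premise has to overcome can be shown with a small pinhole-camera sketch. The camera model, the `project` helper, and the numbers are illustrative assumptions, not taken from the paper: a node that slides along the viewing ray of one camera projects to the same pixel in that view, and only a second, offset view tells the two positions apart.

```python
def project(point, camera_x=0.0, focal=1.0):
    """Pinhole projection for a camera at (camera_x, 0, 0) looking down +z."""
    x, y, z = point
    return (focal * (x - camera_x) / z, focal * y / z)

def close(a, b, tol=1e-9):
    """Component-wise comparison of two image points."""
    return all(abs(u - v) <= tol for u, v in zip(a, b))

node = (0.1, 0.2, 2.0)
# Hypothetical deformation: slide the node along the ray through camera 1.
slid = tuple(1.5 * c for c in node)

same_in_view_1 = close(project(node), project(slid))
same_in_view_2 = close(project(node, camera_x=0.5), project(slid, camera_x=0.5))
```

With few views, whole families of strut deformations can remain nearly indistinguishable in this way, which is why the curve constraint is asked to carry so much of the argument.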

What would settle it

Apply the method to a wireframe print whose large deformations have been measured independently, estimate the deformation field from sparse views, and check whether the field predicts the actual geometry accurately or instead produces incorrect trajectory updates.
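A minimal sketch of how such a check could be scored, assuming node positions predicted by the deformation field and independently measured positions are available in the same coordinate frame and the same order. The function name and the sample coordinates are hypothetical; no measurements from the paper are used.

```python
import math

def deformation_errors(predicted, measured):
    """Return (RMSE, maximum) of per-node Euclidean distances."""
    dists = [math.dist(p, m) for p, m in zip(predicted, measured)]
    rmse = math.sqrt(sum(d * d for d in dists) / len(dists))
    return rmse, max(dists)

# Made-up positions in metres, for illustration only.
predicted = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
measured = [(0.0, 0.0, 0.003), (1.0, 0.004, 0.0)]
rmse, worst = deformation_errors(predicted, measured)
```

Reporting both values is a deliberate choice: a low RMSE can hide a single badly predicted node, and one misplaced node is enough to send a printing trajectory into the wrong place.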

Figures

read the original abstract

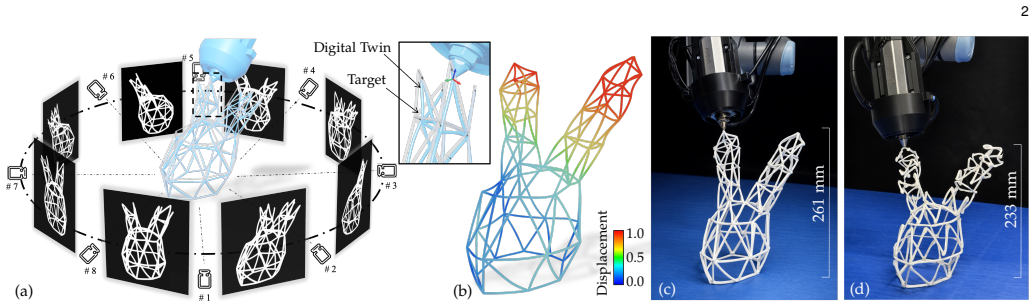

We present FrameTwin, a curve-anchored Gaussian alignment framework that uses sparse-view images to close the control loop for adaptive wireframe 3D printing. Our key idea is to capture the deformation of thin wireframe structures from sparse-view images using Gaussian kernels anchored to parametric curves, yielding a compact and geometry-aware encoding that explicitly captures strut topology. Driven by a differentiable rendering pipeline, FrameTwin estimates a neural deformation field that aligns the partially printed target model with the deformed structure observed during fabrication, where the optimized curve-Gaussian representation serves as a digital twin of the evolving wireframe. Unlike general Gaussian-splatting approaches, our formulation constrains kernel placement along parametric curves, substantially reducing the ambiguity inherent in sparse-view observations of thin structures. The resultant deformation-field alignment enforces global consistency across all struts. By using the estimated deformation field to blend the distorted printed geometry with the remaining unprinted geometry, FrameTwin enables adaptive updates to future printing trajectories. We demonstrate that FrameTwin can robustly capture and compensate for deformation in wireframe models fabricated using a robotized 3D printing system.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper presents FrameTwin, a curve-anchored Gaussian alignment method that uses sparse-view images of partially printed wireframe structures to estimate a neural deformation field. Gaussians are constrained to lie along parametric curves to encode strut topology compactly; a differentiable renderer optimizes the shared deformation field so that the resulting curve-Gaussian representation acts as a digital twin, allowing the system to blend observed distortions with remaining print paths and adapt future trajectories in a robotized 3D printer.

Significance. If the central alignment claim holds, the work would offer a practical advance for closed-loop control in robotic wireframe printing, where thin, topologically connected struts deform under gravity and fabrication forces. The curve-anchored representation reduces the degrees of freedom relative to free Gaussians, which is a targeted adaptation of recent 3D Gaussian splatting techniques to the domain of sparse-view, topology-aware reconstruction.
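The reduction in degrees of freedom can be made concrete with a back-of-the-envelope count. The free-Gaussian figure uses the standard 3D Gaussian splatting geometry parameters (position, scale, rotation quaternion); the curve-anchored figure assumes one cubic Bezier curve per strut with a single shared radius, which is an illustrative choice rather than the paper's stated parameterization.

```python
def free_gaussian_dof(num_gaussians):
    """Geometry parameters when every kernel is free: 3 position + 3 scale + 4 quaternion."""
    return num_gaussians * (3 + 3 + 4)

def curve_anchored_dof(control_points=4, shared_radius=True):
    """Geometry parameters for one strut when kernels are derived from the curve.

    Assumption: kernel positions and orientations follow from the control
    points, and all kernels on the strut share one cross-section radius.
    """
    return control_points * 3 + (1 if shared_radius else 0)

per_strut_free = free_gaussian_dof(50)     # 50 kernels on one strut
per_strut_anchored = curve_anchored_dof()  # same strut, curve-anchored
```

Under these assumptions a strut covered by 50 kernels drops from 500 geometric unknowns to 13, and the anchored count does not grow when more kernels are sampled along the curve.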

major comments (2)

- [Abstract] The central claim that constraining kernels to parametric curves 'substantially reduc[es] the ambiguity inherent in sparse-view observations of thin structures' and that the resulting deformation-field alignment 'enforces global consistency across all struts' is load-bearing for the entire contribution. No regularization terms, inter-strut coupling losses, or topology-preservation constraints are described that would guarantee uniqueness of the recovered deformation (e.g., distinguishing between different bending or twist modes that project identically from a few views).

- [Abstract] The paper asserts that FrameTwin 'can robustly capture and compensate for deformation' and enables 'adaptive updates to future printing trajectories,' yet provides no quantitative error metrics, ablation studies on view count or curve parameterization, or comparisons against baselines. Without such evidence the robustness claim cannot be evaluated.

Simulated Author's Rebuttal

We thank the referee for the constructive comments on our manuscript. We address each major comment below and indicate the revisions we will incorporate.

- Referee: [Abstract] The central claim that constraining kernels to parametric curves 'substantially reduc[es] the ambiguity inherent in sparse-view observations of thin structures' and that the resulting deformation-field alignment 'enforces global consistency across all struts' is load-bearing for the entire contribution. No regularization terms, inter-strut coupling losses, or topology-preservation constraints are described that would guarantee uniqueness of the recovered deformation (e.g., distinguishing between different bending or twist modes that project identically from a few views).

  Authors: The topology preservation is achieved by anchoring the Gaussian kernels to the parametric curves that define the wireframe struts, which is described in the method section as the core of our curve-anchored representation. This constraint reduces the degrees of freedom and encodes the strut topology compactly. The global consistency is enforced by the shared neural deformation field that is optimized jointly for all struts using the differentiable rendering pipeline from the sparse views. While we do not introduce additional explicit inter-strut coupling losses beyond the shared field and rendering loss, the formulation inherently couples the deformations. We agree that the abstract could be clarified to better highlight these aspects and reference the relevant sections. We will revise the abstract accordingly and expand the discussion in the method section on how the curve constraint and shared field help mitigate ambiguity in deformation recovery.

  Revision: partial

- Referee: [Abstract] The paper asserts that FrameTwin 'can robustly capture and compensate for deformation' and enables 'adaptive updates to future printing trajectories,' yet provides no quantitative error metrics, ablation studies on view count or curve parameterization, or comparisons against baselines. Without such evidence the robustness claim cannot be evaluated.

  Authors: We agree with the referee that the robustness claim in the abstract requires stronger quantitative backing. While the manuscript presents experimental demonstrations of FrameTwin in a robotic 3D printing setup, we will revise the experiments section to include quantitative error metrics for deformation capture, ablation studies on the number of input views and curve parameterization choices, and comparisons against relevant baselines such as standard Gaussian splatting or other deformation estimation methods. The abstract will be updated to reference these results.

  Revision: yes
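The coupling argument in the first response, that a single shared field ties the struts together, can be illustrated with a toy example. The tiny tanh network, its hand-picked weights, and the helper name `make_field` are assumptions for illustration; the paper's network architecture is not described here.

```python
import math

def make_field(w1, b1, w2, b2):
    """Return f(p) = p + W2 * tanh(W1 * p + b1) + b2, a small continuous deformation."""
    def field(p):
        hidden = [math.tanh(sum(w * c for w, c in zip(row, p)) + b)
                  for row, b in zip(w1, b1)]
        offset = [sum(w * h for w, h in zip(row, hidden)) + b
                  for row, b in zip(w2, b2)]
        return tuple(c + o for c, o in zip(p, offset))
    return field

# Hand-picked weights (hypothetical): 3 inputs -> 2 hidden units -> 3 offsets.
field = make_field(
    w1=[[0.5, 0.0, 0.2], [0.0, 0.3, -0.4]], b1=[0.0, 0.1],
    w2=[[0.05, 0.0], [0.0, 0.02], [-0.03, 0.01]], b2=[0.0, 0.0, 0.0],
)

# Two struts that meet at the node (1, 0, 0).
strut_a = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
strut_b = [(1.0, 0.0, 0.0), (1.0, 0.0, 1.0)]
deformed_a = [field(p) for p in strut_a]
deformed_b = [field(p) for p in strut_b]
junction_stays_connected = deformed_a[-1] == deformed_b[0]
```

Because both struts are mapped by the same function, the shared node lands in one place and the wireframe stays connected. This shows connectivity only; it does not by itself show that the recovered field is unique, which is the referee's concern.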

Circularity Check

No significant circularity in derivation chain

The paper describes a forward optimization pipeline: curve-anchored Gaussians are placed along parametric curves, a neural deformation field is estimated via differentiable rendering from sparse views, and the resulting field is used to update printing trajectories. No equations, fitted parameters, or self-citations are presented that reduce any claimed prediction or alignment result to its own inputs by construction. The central claim of global consistency via curve anchoring is presented as an empirical outcome of the joint optimization rather than a definitional or self-referential step. The derivation is self-contained and builds on independently established techniques such as differentiable rendering and Gaussian splatting.
