PolicyCache-SDN: Hierarchical Intra-Path Learning for Adaptive SDN Traffic Control

Pith reviewed 2026-05-12 04:09 UTC · model grok-4.3

The pith

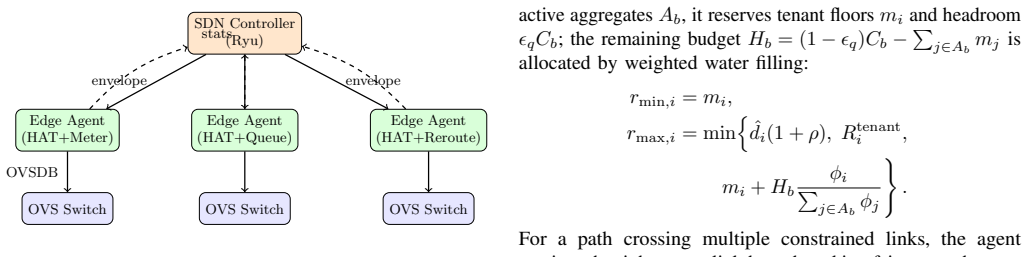

PolicyCache-SDN compiles network intent into per-path bounds so edge agents can safely adapt traffic control online.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The central claim is that by using policy envelopes to bound per-path actions, the system allows local online learning that improves average core link utilization by 35.5% over Static ECMP and 18.3% over Centralized TE, while reducing elephant flow P99 FCT by 34.3% and SLA violations from 18.2% to 6.8% with minimal resource use.
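The SLA figure in the claim is an absolute drop in a rate, so it helps to see both the percentage-point and the relative reduction. The short calculation below uses only the two numbers quoted above; the variable names are illustrative.

```python
# SLA violation rate before and after, in percent, as quoted in the claim.
before, after = 18.2, 6.8

absolute_drop = before - after          # percentage points
relative_drop = absolute_drop / before  # fraction of the original rate

print(f"{absolute_drop:.1f} percentage points")
print(f"{relative_drop:.1%} relative reduction")
```

The abstract does not state whether the utilization and FCT gains are relative or absolute, so the same distinction cannot be worked out for them from this text alone.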

What carries the argument

The policy envelope, a compiled set of bounds on per-path actions derived from network-wide intent that restricts local decisions to maintain safety and auditability.
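One way to picture the envelope is as a small record of per-path limits plus a projection step that forces any locally proposed action back inside them. The sketch below is an assumption-laden illustration, not the paper's data model: the field names (`min_rate_mbps`, `max_rate_mbps`, `max_reroute_share`) and the `enforce` helper are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PolicyEnvelope:
    """Hypothetical per-path bounds compiled by the controller."""
    path_id: str
    min_rate_mbps: float      # lowest meter rate the agent may set
    max_rate_mbps: float      # highest meter rate the agent may set
    max_reroute_share: float  # largest traffic fraction the agent may move off the path


@dataclass(frozen=True)
class Action:
    """Hypothetical local decision proposed by an edge agent."""
    rate_mbps: float
    reroute_share: float


def clamp(value: float, low: float, high: float) -> float:
    return max(low, min(high, value))


def enforce(envelope: PolicyEnvelope, proposed: Action) -> tuple[Action, bool]:
    """Project a proposed action into the envelope and report whether it changed."""
    safe = Action(
        rate_mbps=clamp(proposed.rate_mbps, envelope.min_rate_mbps, envelope.max_rate_mbps),
        reroute_share=clamp(proposed.reroute_share, 0.0, envelope.max_reroute_share),
    )
    return safe, safe != proposed


envelope = PolicyEnvelope("p1", min_rate_mbps=100.0, max_rate_mbps=800.0, max_reroute_share=0.25)
safe, was_clipped = enforce(envelope, Action(rate_mbps=950.0, reroute_share=0.10))
print(safe, was_clipped)
```

Returning the clipped flag alongside the action gives a natural hook for the audit trail the summary mentions, since every clipped proposal can be logged.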

If this is right

- Core link utilization increases by 35.5% compared to static ECMP routing.

- Elephant flow 99th percentile flow completion time decreases by 34.3% relative to end-host congestion control.

- SLA violations are reduced from 18.2% to 6.8%.

- Each edge agent requires less than 2% CPU and 12 MB memory.

Where Pith is reading between the lines

- Such hierarchical bounds could be applied to other domains requiring safe delegation of control, like autonomous vehicle fleets or distributed computing resources.

- The auditability of local actions might facilitate compliance in networks with strict regulatory requirements.

- Dynamic adjustment of envelopes based on observed shifts could further enhance robustness without full recentralization.

Load-bearing premise

That the compiled policy envelopes provide bounds tight enough for effective local learning yet loose enough to handle traffic variations without causing inconsistencies or new problems.

What would settle it

A scenario with traffic distribution shifts that push demands outside the envelope bounds, where one would check if local adaptations lead to increased congestion, higher violations, or require manual intervention.
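A first measurement for such a scenario could be the share of intervals in which demand on a path exceeds the envelope's ceiling. The sketch below assumes a single upper bound per path and uses made-up demand traces; neither comes from the paper.

```python
def out_of_envelope_fraction(demands_mbps, upper_bound_mbps):
    """Fraction of measurement intervals whose demand exceeds the envelope ceiling."""
    if not demands_mbps:
        return 0.0
    exceeded = sum(1 for d in demands_mbps if d > upper_bound_mbps)
    return exceeded / len(demands_mbps)


# Hypothetical per-interval demand on one path, before and after a traffic shift.
baseline = [300, 420, 510, 480, 390]
shifted = [700, 860, 910, 790, 880]
upper_bound = 800  # assumed envelope ceiling for this path, in Mbps

print(out_of_envelope_fraction(baseline, upper_bound))
print(out_of_envelope_fraction(shifted, upper_bound))
```

Tracking this fraction next to congestion and SLA metrics would show whether degraded performance coincides with demand leaving the envelope.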

Original abstract

Software defined networks offer global visibility, yet centralized control loops are too slow for transient congestion and bursty traffic dynamics. Existing learned traffic control schemes often rely on offline training, making them fragile under distribution shifts. We present PolicyCache-SDN, a hierarchical SDN traffic control framework that enables local online adaptation under centralized policy control. Its key abstraction is a policy envelope: the controller compiles network wide intent into bounded per path action spaces, while edge agents learn and execute metering, queueing, and rerouting decisions only within those bounds. Policy envelopes also make local actions auditable and reversible when they affect shared bottlenecks. Evaluation on a 1,024 host software SDN testbed shows that PolicyCache-SDN improves average core link utilization by 35.5% over Static ECMP and 18.3% over Centralized TE. It reduces elephant flow P99 FCT by 34.3% over end host congestion control, lowers SLA violations from 18.2% to 6.8%, and uses less than 2% CPU and 12 MB memory per edge agent. The source code is available in an anonymized repository at https://anonymous.4open.science/r/JCC2026-PolicyCache-SDN/.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes PolicyCache-SDN, a hierarchical SDN traffic control framework. A centralized controller compiles network-wide intent into policy envelopes that define bounded per-path action spaces; edge agents then perform online learning for metering, queueing, and rerouting decisions strictly inside those bounds. The envelopes are intended to keep local actions auditable and reversible. On a 1,024-host software SDN testbed the system is reported to raise average core link utilization by 35.5% over Static ECMP and 18.3% over Centralized TE, to cut elephant-flow P99 FCT by 34.3% relative to end-host congestion control, to lower SLA violations from 18.2% to 6.8%, and to use less than 2% CPU and 12 MB memory per edge agent. Source code is provided in an anonymized repository.

Significance. If the policy-envelope mechanism proves robust, the work would offer a practical middle ground between slow centralized TE and fragile offline-learned controllers, directly addressing transient congestion in SDNs. The public code release is a clear strength that supports reproducibility and follow-on research.

major comments (2)

- [Abstract and Evaluation section] The headline gains (35.5% utilization, 34.3% FCT, 18.2% to 6.8% SLA) rest on the unverified assumption that policy envelopes can be compiled tightly enough for safety yet loosely enough for adaptation. No formal definition of the compilation procedure, no ablation on bound tightness, and no experiments that inject traffic-matrix shifts or link failures while checking envelope violations are presented.

- [Evaluation section] The reported deltas are given without specification of the edge-agent learning algorithm, the precise implementation of the Centralized TE and end-host baselines, or any statistical tests, making it impossible to judge whether the numbers support the central claim of safe hierarchical adaptation.

minor comments (2)

- [Abstract] The abstract refers to a 'software SDN testbed' but does not name the emulation platform or topology generator.

- A short related-work subsection contrasting PolicyCache-SDN with prior hierarchical SDN and safe-RL approaches would improve context.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback, which identifies key areas where additional rigor and detail will strengthen the presentation of PolicyCache-SDN. We address each major comment below and commit to revisions that directly respond to the concerns raised.

Point-by-point responses

- Referee: [Abstract and Evaluation section] The headline gains (35.5% utilization, 34.3% FCT, 18.2% to 6.8% SLA) rest on the unverified assumption that policy envelopes can be compiled tightly enough for safety yet loosely enough for adaptation. No formal definition of the compilation procedure, no ablation on bound tightness, and no experiments that inject traffic-matrix shifts or link failures while checking envelope violations are presented.

  Authors: We agree that the manuscript would be strengthened by a formal definition of the policy-envelope compilation procedure and by explicit validation of the safety-adaptation tradeoff. The current text presents the high-level mechanism and reports aggregate results on the 1,024-host testbed, but does not include a mathematical formulation of the bound computation or targeted ablation and failure-injection experiments. In the revision we will add a dedicated subsection that formally defines the compilation algorithm (including the per-path action-space bounds derived from network-wide intent) and will include (i) an ablation varying envelope tightness and (ii) new experiments that inject traffic-matrix shifts and link failures while logging envelope-violation counts. These additions will make the safety claims verifiable without altering the reported headline numbers.

  Revision: yes

- Referee: [Evaluation section] The reported deltas are given without specification of the edge-agent learning algorithm, the precise implementation of the Centralized TE and end-host baselines, or any statistical tests, making it impossible to judge whether the numbers support the central claim of safe hierarchical adaptation.

  Authors: We acknowledge that the Evaluation section omits explicit specification of the edge-agent learning algorithm, the exact baseline implementations, and statistical analysis. The manuscript states that edge agents perform online learning inside the envelopes and compares against Static ECMP, Centralized TE, and end-host congestion control, but does not provide the required algorithmic or statistical detail. In the revision we will expand the Evaluation section to describe the constrained online learning method used by the agents, supply precise references or pseudocode for the Centralized TE and end-host baselines, and report standard deviations together with appropriate statistical tests for all performance deltas. These clarifications will allow readers to assess the support for safe hierarchical adaptation.

  Revision: yes
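The statistical reporting promised in the second response could take a form like the sketch below: a standard deviation across runs plus a percentile bootstrap interval for the relative change in means. The run values are invented for illustration and are not results from the paper; the function name and the choice of bootstrap are assumptions, since the rebuttal does not say which tests will be used.

```python
import random
import statistics


def bootstrap_ci(baseline, treatment, n_resamples=2000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for (mean(treatment) - mean(baseline)) / mean(baseline)."""
    rng = random.Random(seed)
    changes = []
    for _ in range(n_resamples):
        # Resample each group with replacement, keeping the original sample size.
        b = [rng.choice(baseline) for _ in baseline]
        t = [rng.choice(treatment) for _ in treatment]
        changes.append((statistics.mean(t) - statistics.mean(b)) / statistics.mean(b))
    changes.sort()
    low = changes[int((alpha / 2) * n_resamples)]
    high = changes[int((1 - alpha / 2) * n_resamples) - 1]
    return low, high


# Made-up per-run utilization fractions; NOT data from the paper.
baseline_runs = [0.50, 0.52, 0.49, 0.53, 0.51]
treatment_runs = [0.60, 0.62, 0.59, 0.63, 0.61]

base_mean = statistics.mean(baseline_runs)
point = (statistics.mean(treatment_runs) - base_mean) / base_mean
low, high = bootstrap_ci(baseline_runs, treatment_runs)

print(f"treatment mean ± sd: {statistics.mean(treatment_runs):.3f} ± {statistics.stdev(treatment_runs):.3f}")
print(f"relative change: {point:.1%} (95% CI {low:.1%} to {high:.1%})")
```

An interval that excludes zero would indicate that a reported delta is unlikely to be run-to-run noise, which is the question the referee raises.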

Circularity Check

No circularity; performance claims rest on empirical testbed results

Full rationale

The provided manuscript text (abstract and description) contains no equations, derivations, or parameter-fitting steps. Central claims concern measured improvements in link utilization, FCT, and SLA violations on a 1,024-host SDN testbed versus baselines (Static ECMP, Centralized TE, end-host congestion control). No self-definitional relations, fitted inputs renamed as predictions, or load-bearing self-citations appear. Policy envelopes are described as a compilation abstraction enabling local learning, but the text presents this as an engineering design evaluated empirically rather than derived from prior self-referential results. This matches the reader's assessment that the claims are not quantities defined in terms of fitted parameters. No load-bearing step reduces to its own inputs by construction.

Axiom & Free-Parameter Ledger

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation, washburn_uniqueness_aczel (tag: unclear)

  Relation between the paper passage and the cited Recognition theorem: unclear.

  Paper passage: Its key abstraction is a policy envelope: the controller compiles network wide intent into bounded per path action spaces, while edge agents learn and execute metering, queueing, and rerouting decisions only within those bounds.

- IndisputableMonolith/Foundation/ArithmeticFromLogic, LogicNat (tag: unclear)

  Relation between the paper passage and the cited Recognition theorem: unclear.

  Paper passage: We implement PolicyCache-SDN with Ryu, Open vSwitch, and gRPC... Hoeffding Adaptive Tree (HAT)

What do these tags mean?

- matches: The paper's claim is directly supported by a theorem in the formal canon.

- supports: The theorem supports part of the paper's argument, but the paper may add assumptions or extra steps.

- extends: The paper goes beyond the formal theorem; the theorem is a base layer rather than the whole result.

- uses: The paper appears to rely on the theorem as machinery.

- contradicts: The paper's claim conflicts with a theorem or certificate in the canon.

- unclear: Pith found a possible connection, but the passage is too broad, indirect, or ambiguous to say the theorem truly supports the claim.

