
Emergent Communication for Co-constructed Emotion Between Embodied Agents via Collective Predictive Coding

Pith reviewed 2026-05-12 02:16 UTC · model grok-4.3

The pith

Embodied agents develop aligned emotion categories through communication even when their internal bodily signals differ systematically.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

When two agents equipped with collective predictive coding exchange symbols via the Metropolis-Hastings Naming Game, their independently learned emotion categories become measurably more aligned, clearer, and mutually agreed upon than in non-communicative or non-selective baselines; the effect concentrates at the symbolic layer and remains robust even under systematic divergence in interoceptive dynamics, with each agent exhibiting distinct category-specific reshaping patterns.
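The page does not say how "clarity" is scored. The reference graph lists Davies and Bouldin's cluster separation measure [34]. One plausible reading, offered here as an assumption rather than as the paper's definition, is a Davies-Bouldin index over each agent's category assignments, where lower values mean tighter and better-separated categories. Below is a minimal pure-Python sketch; the function name is illustrative.

```python
import math
from collections import defaultdict

def davies_bouldin(points, labels):
    """Davies-Bouldin index: for each cluster, take the worst ratio
    (S_i + S_j) / M_ij over the other clusters, then average. Lower is better."""
    groups = defaultdict(list)
    for p, lab in zip(points, labels):
        groups[lab].append(p)
    keys = sorted(groups)
    if len(keys) < 2:
        raise ValueError("need at least two clusters")
    # Centroid of each cluster (coordinate-wise mean).
    cents = {k: [sum(c) / len(groups[k]) for c in zip(*groups[k])] for k in keys}
    # S_i: mean Euclidean distance from the cluster's members to its centroid.
    scatter = {k: sum(math.dist(p, cents[k]) for p in groups[k]) / len(groups[k]) for k in keys}
    # M_ij is the distance between centroids i and j.
    worst = [
        max((scatter[i] + scatter[j]) / math.dist(cents[i], cents[j]) for j in keys if j != i)
        for i in keys
    ]
    return sum(worst) / len(worst)
```

Take two clusters whose centroids are 10 apart and whose within-cluster scatter is 0.5 each. Their index is (0.5 + 0.5) / 10 = 0.1. If the scatter widens to 3, the index rises to 0.6.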

What carries the argument

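The Metropolis-Hastings Naming Game (MHNG) runs within the Collective Predictive Coding (CPC) framework. One agent proposes a symbolic label for a jointly observed input. The other accepts or rejects that label with a Metropolis-Hastings test against its own multimodal beliefs. Through this exchange, the pair collectively reduces prediction error across their inputs.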
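Here is a toy sketch of a single MHNG turn. It follows the acceptance rule described for the naming game in the MHNG literature [24]. The speaker samples a sign from its own posterior. The listener accepts it with probability min(1, p(z_L | w_new) / p(z_L | w_L)), computed only from its own model. Several parts are hypothetical simplifications: the 1-D Gaussian categories, the uniform sign prior, and the names ToyAgent and mh_naming_step. The paper's agents are presumably richer, since the cited MHNG work uses deep generative models.

```python
import math
import random

def gauss_pdf(x, mu, sigma):
    """Density of a 1-D Gaussian N(mu, sigma^2) at x."""
    return math.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * math.sqrt(2 * math.pi))

class ToyAgent:
    """Hypothetical agent: sign w in {0, ..., K-1} indexes a 1-D Gaussian category
    (mean mus[w], shared sigma) over the agent's own latent z."""

    def __init__(self, mus, sigma=1.0):
        self.mus = list(mus)
        self.sigma = sigma

    def likelihood(self, z, w):
        return gauss_pdf(z, self.mus[w], self.sigma)

    def sign_posterior(self, z):
        # Uniform prior over signs, so the posterior is the normalised likelihood.
        lik = [self.likelihood(z, w) for w in range(len(self.mus))]
        total = sum(lik)
        return [p / total for p in lik]

def mh_naming_step(speaker, listener, z_speaker, z_listener, w_listener, rng):
    """One MHNG turn. The speaker samples a proposal from its own posterior; the
    listener accepts it with probability min(1, p(z_L | w_new) / p(z_L | w_L)),
    using only its own model. Returns (sign the listener keeps, accepted?)."""
    post = speaker.sign_posterior(z_speaker)
    w_new = rng.choices(range(len(post)), weights=post)[0]
    ratio = listener.likelihood(z_listener, w_new) / listener.likelihood(z_listener, w_listener)
    if rng.random() < min(1.0, ratio):
        return w_new, True
    return w_listener, False
```

The listener scores the proposal with its own likelihood. As a result, neither agent ever reads the other's latent z, and only the discrete sign passes between them. This is consistent with alignment appearing at the symbolic layer without the perceptual latents having to converge.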

If this is right

- Shared emotional categories can form at the level of discrete symbols without requiring identical perceptual or interoceptive representations.

- Interoceptive heterogeneity between agents does not block but instead shapes the emergence of common emotion categories through communication.

- The alignment effect is localized to the symbolic interface rather than propagating back into each agent's lower-level perceptual latent space.

- Predictive-coding agents can extend their internal models to social domains by treating other agents' signals as additional prediction targets.
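Representational similarity analysis (Kriegeskorte et al. [33]) offers one way to probe the localization claim. This use is assumed here for illustration and is not taken from the paper. First, build each agent's dissimilarity matrix over the same stimuli. Then correlate the two matrices with Spearman's rho. Suppose symbolic agreement rises under MHNG while this latent-space correlation does not. That pattern would fit alignment being confined to the symbolic interface. The sketch uses Euclidean distance and tie-aware ranks, and the function names are hypothetical.

```python
import math

def rdm(latents):
    """Upper triangle of the representational dissimilarity matrix (Euclidean)."""
    n = len(latents)
    return [math.dist(latents[i], latents[j]) for i in range(n) for j in range(i + 1, n)]

def average_ranks(xs):
    """1-based ranks with ties given their average rank, as Spearman's rho requires."""
    order = sorted(range(len(xs)), key=lambda i: xs[i])
    ranks = [0.0] * len(xs)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and xs[order[j + 1]] == xs[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks

def pearson(a, b):
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    var_a = sum((x - ma) ** 2 for x in a)
    var_b = sum((y - mb) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)

def rsa_score(latents_a, latents_b):
    """Spearman correlation between two agents' RDMs on the same stimuli, in the same order."""
    return pearson(average_ranks(rdm(latents_a)), average_ranks(rdm(latents_b)))
```

RSA compares dissimilarity matrices rather than raw coordinates. The two agents' latent spaces may therefore have different dimensionality.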

Where Pith is reading between the lines

- The same architecture could be tested with more than two agents or with continuous rather than discrete emotion categories to check whether the alignment mechanism scales.

- If the symbolic layer remains the primary site of alignment, then interventions that alter only the naming-game rules should be sufficient to change shared emotional meaning without retraining perceptual encoders.

- The observed category-specific reshaping patterns suggest that each agent may retain private emotional nuance while still coordinating on public labels, a pattern worth checking against human psychological data on emotion granularity.

- Extending the model to include explicit reward for successful joint action after labeling could reveal whether communicative alignment improves downstream coordination performance.

Load-bearing premise

The chosen simulated visual, auditory, and interoceptive inputs plus the simple naming-game protocol are sufficient to stand in for the biological and social processes that construct shared emotion in humans.

What would settle it

An experiment in which MHNG communication produces no statistically significant gain in inter-agent category alignment or clarity relative to the non-communicative baseline.
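The reference graph cites Hubert and Arabie on comparing partitions [30] and Cohen's kappa [31]. This suggests, though the page does not confirm it, that category alignment is scored with the adjusted Rand index (ARI) and inter-agent agreement with kappa. The falsification test above then amounts to comparing these scores between MHNG and no-communication runs. A stdlib-only sketch, with illustrative function names:

```python
from collections import Counter
from math import comb

def cohens_kappa(a, b):
    """Cohen's kappa: chance-corrected agreement between two label sequences."""
    n = len(a)
    p_obs = sum(x == y for x, y in zip(a, b)) / n
    ca, cb = Counter(a), Counter(b)
    p_exp = sum(ca[k] * cb[k] for k in ca) / (n * n)
    return (p_obs - p_exp) / (1 - p_exp)

def adjusted_rand_index(a, b):
    """Hubert-Arabie adjusted Rand index between two partitions of the same items."""
    n = len(a)
    sum_ij = sum(comb(c, 2) for c in Counter(zip(a, b)).values())
    sum_a = sum(comb(c, 2) for c in Counter(a).values())
    sum_b = sum(comb(c, 2) for c in Counter(b).values())
    expected = sum_a * sum_b / comb(n, 2)
    max_index = (sum_a + sum_b) / 2
    return (sum_ij - expected) / (max_index - expected)
```

The two metrics answer different questions. ARI ignores label identity: two agents that carve the data identically but use swapped signs score 1.0. Kappa scores the same pair -1.0, because they never use the same sign for the same item. Kappa is therefore the stricter test of a shared vocabulary.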

Original abstract

According to the theory of constructed emotion, the brain actively forms emotion categories by integrating multimodal bodily signals, and constructs emotional experiences by using these categories to predict and interpret sensory inputs. While research has advanced in modeling individual emotion construction, the social process of co-construction (how a shared understanding of emotions emerges between individuals) remains computationally underexplored. This study investigates this process by modeling emergent communication between two embodied agents using the Metropolis-Hastings Naming Game (MHNG), grounded in the Collective Predictive Coding (CPC) framework. Our experiments, using visual, auditory, and simulated interoceptive inputs, yield two main findings. First, MHNG-based communication significantly improves the alignment, clarity, and inter-agent agreement of the learned emotion categories compared to non-communicative and non-selective baselines, with the alignment effect concentrated at the symbolic layer rather than the perceptual latent representation. Second, even when the two agents have systematically divergent interoceptive dynamics, communication still produces robust categorical alignment, with distinct, category-specific reshaping patterns of each agent's emotion categories. This is consistent with the constructed-emotion view that interoceptive heterogeneity is constitutive of, rather than an obstacle to, shared emotional meaning. These findings provide computational support for the co-constructionist view of emotion and extend the CPC framework from physical to socially grounded domains.
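The page does not state how the simulated interoceptive signals are generated. The partial Table III that appears further down this page, in the reference graph, lists three parameters per emotion for V and A (presumably valence and arousal): a mean µ, a spread σ, and a rate θ. Those names suggest, as an assumption, a mean-reverting Ornstein-Uhlenbeck process per dimension. The sketch below uses the exact OU transition and treats σ as the stationary standard deviation. Both of those choices are hypothetical, as are the function names and the theta_scale knob for giving a second agent divergent dynamics. Only the two rows recoverable from the table are included.

```python
import math
import random

# Rows recovered from the partial Table III: (mu_V, mu_A, sigma_V, sigma_A, theta_V, theta_A).
# The remaining rows are truncated in the source and are deliberately not filled in.
CORE_AFFECT = {
    "Neutral": (0.00, 0.00, 0.090, 0.090, 1.5, 1.5),
    "Calm": (0.80, -0.50, 0.135, 0.180, 2.1, 1.8),
}

def ou_step(x, mu, sigma, theta, dt, rng):
    """Exact OU transition over dt, treating sigma as the stationary std (assumption)."""
    decay = math.exp(-theta * dt)
    return mu + (x - mu) * decay + sigma * math.sqrt(1.0 - decay ** 2) * rng.gauss(0.0, 1.0)

def simulate_core_affect(emotion, steps=200, dt=0.05, theta_scale=1.0, seed=0):
    """Simulate a (valence, arousal) trajectory starting at (0, 0).
    theta_scale is a hypothetical knob that speeds up or slows down both dimensions."""
    mu_v, mu_a, s_v, s_a, th_v, th_a = CORE_AFFECT[emotion]
    rng = random.Random(seed)
    v, a = 0.0, 0.0
    traj = []
    for _ in range(steps):
        v = ou_step(v, mu_v, s_v, th_v * theta_scale, dt, rng)
        a = ou_step(a, mu_a, s_a, th_a * theta_scale, dt, rng)
        traj.append((v, a))
    return traj
```

Under this parameterization, scaling θ changes how quickly each agent's core affect relaxes toward the category mean. It does not move the mean or the stationary spread. This is one simple way to give two agents systematically different interoceptive dynamics for the same emotion.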

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

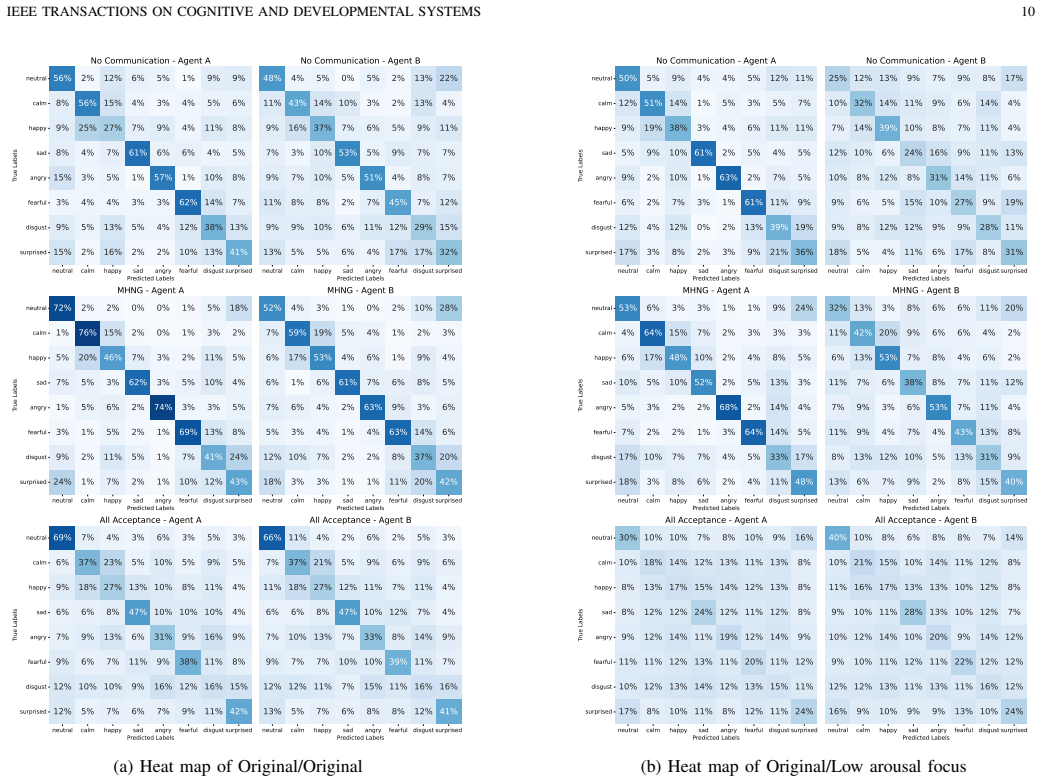

Summary. The manuscript models co-constructed emotion between two embodied agents by combining Collective Predictive Coding (CPC) with the Metropolis-Hastings Naming Game (MHNG) for emergent communication. Using simulated visual, auditory, and interoceptive inputs, the experiments compare MHNG-based communication against non-communicative and non-selective baselines. The central claims are that MHNG communication produces significantly higher alignment, clarity, and inter-agent agreement of learned emotion categories (with the effect localized to the symbolic layer rather than perceptual latents), and that this alignment remains robust even when the agents have systematically divergent interoceptive dynamics, accompanied by distinct category-specific reshaping of each agent's categories.
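The summary does not say how each agent fuses its visual, auditory, and interoceptive streams. The reference graph cites three approaches: multimodal VAEs built on products of experts (Wu and Goodman [27]), mixtures of experts (Shi et al. [28]), and a generalized multimodal ELBO (Sutter et al. [29]). Which of these the paper uses is not stated here. As an illustration only, this sketch shows product-of-Gaussian-experts fusion as in the MVAE, assuming diagonal covariances and a standard-normal prior expert. The function name is hypothetical.

```python
import math

def product_of_experts(mus, logvars, include_prior=True):
    """Fuse diagonal-Gaussian posteriors q(z | x_m), one per modality, into one Gaussian.
    Precisions add; the fused mean is the precision-weighted average of the expert means.
    include_prior adds an N(0, I) expert, as in the MVAE of Wu and Goodman."""
    dim = len(mus[0])
    fused_mu, fused_logvar = [], []
    for d in range(dim):
        precisions = [math.exp(-lv[d]) for lv in logvars]
        weighted = [p * mu[d] for p, mu in zip(precisions, mus)]
        if include_prior:
            precisions.append(1.0)  # prior expert N(0, 1): precision 1, mean 0
            weighted.append(0.0)
        total = sum(precisions)
        fused_mu.append(sum(weighted) / total)
        fused_logvar.append(-math.log(total))
    return fused_mu, fused_logvar
```

Because precisions add, a confident modality (small variance) dominates the fused mean. A missing modality can simply be left out of the product.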

Significance. If the reported simulation outcomes hold under replication, the work supplies a concrete computational demonstration that shared emotional categories can emerge through selective communication despite individual differences in bodily signals, thereby furnishing support for the co-constructionist account of emotion. It usefully extends the CPC framework into a multi-agent social setting and employs controlled baseline comparisons plus a heterogeneity robustness check. These elements constitute genuine strengths for a modeling paper in multi-agent systems and affective computation.

minor comments (4)

- [Abstract] The statements that MHNG communication "significantly improves" alignment and produces "robust categorical alignment" are not backed by any quantitative values (effect sizes, number of runs, statistical tests). The abstract also gives no exact definitions of the alignment and clarity metrics.

- [Section 3] Model: the manuscript should state the following with explicit equations or pseudocode, so that the reported category-reshaping patterns can be reproduced: the precise parameterization of the interoceptive, visual, and auditory input streams; the architecture of the CPC encoders and decoders; and the MHNG update rules.

- [Section 4] Experiments and associated figures: the results tables and plots do not report variance across random seeds or confidence intervals. Adding these would strengthen the claim that the alignment effect is concentrated at the symbolic layer.

- [Section 3.2] The manuscript would benefit from an explicit statement of the loss functions and optimization details used for the CPC component, as these choices directly affect the learned latent representations against which communication is compared.
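The third comment asks for variance across seeds or confidence intervals. One way to report these, suggested here and not taken from the paper, has three steps. First, run MHNG and the no-communication baseline under the same seeds. Second, take the per-seed difference in an agreement score such as kappa. Third, attach a percentile bootstrap interval to the mean difference. The function name and defaults below are illustrative.

```python
import random
import statistics

def bootstrap_ci(diffs, n_boot=10_000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for the mean of paired per-seed differences
    (for example, kappa under MHNG minus kappa without communication)."""
    rng = random.Random(seed)
    n = len(diffs)
    # Resample the seeds with replacement and record each resample's mean.
    means = sorted(statistics.fmean(rng.choices(diffs, k=n)) for _ in range(n_boot))
    lo = means[int((alpha / 2) * n_boot)]
    hi = means[int((1 - alpha / 2) * n_boot) - 1]
    return statistics.fmean(diffs), (lo, hi)
```

With only a handful of seeds, a percentile bootstrap tends to be too narrow. Listing the per-seed values alongside the interval is a useful complement.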

Simulated Author's Rebuttal

We thank the referee for their positive assessment of the manuscript, accurate summary of our approach and findings, and recommendation for minor revision. We appreciate the recognition that the work provides computational support for the co-constructionist account of emotion and extends the CPC framework to a multi-agent setting. We will prepare a revised version that addresses the minor comments.

Circularity Check

No significant circularity; results from controlled simulation comparisons


The paper's central claims rest on empirical outcomes from agent simulations that compare MHNG+CPC communication against non-communicative and non-selective baselines within the same experimental loop. These comparisons are not forced by construction because the alignment metrics (category agreement, clarity) are measured post-training on held-out or divergent interoceptive conditions. The frameworks (CPC, MHNG) are imported as established tools rather than derived internally, and no load-bearing step reduces a prediction to a fitted parameter or self-citation chain. The derivation chain is therefore self-contained against the paper's own benchmarks.

Axiom & Free-Parameter Ledger

axioms (2)

- Domain assumption: The brain actively forms emotion categories by integrating multimodal bodily signals and uses these categories to predict and interpret sensory inputs (theory of constructed emotion).

- Domain assumption: Collective Predictive Coding provides a suitable computational substrate for modeling both individual emotion construction and inter-agent communication.

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean, theorem washburn_uniqueness_aczel (relation: unclear). Linked claim: "MHNG-based communication significantly improves the alignment, clarity, and inter-agent agreement of the learned emotion categories... even when the two agents have systematically divergent interoceptive dynamics"

- IndisputableMonolith/Foundation/RealityFromDistinction.lean, theorem reality_from_one_distinction (relation: unclear). Linked claim: "Collective Predictive Coding (CPC) framework... symbol emergence as decentralized Bayesian inference"

Reference graph

Works this paper leans on

- [1] R. S. Lazarus, Emotion and Adaptation. Oxford University Press, 1991.
- [2] K. R. Scherer, “What are emotions? And how can they be measured?” Social Science Information, vol. 44, no. 4, pp. 695–729, 2005.
- [3] A. R. Damasio, The Feeling of What Happens: Body and Emotion in the Making of Consciousness. Harcourt Brace, 1999.
- [4] J. A. Russell, “A circumplex model of affect,” Journal of Personality and Social Psychology, vol. 39, no. 6, p. 1161, 1980.
- [5] B. Mesquita and N. H. Frijda, “Cultural variations in emotions: A review,” Psychological Bulletin, vol. 112, no. 2, pp. 179–204, 1992.
- [6] S. Kitayama and H. R. Markus, Emotion and Culture: Empirical Studies of Mutual Influence. American Psychological Association, 1994.
- [7] L. F. Barrett, How Emotions Are Made: The Secret Life of the Brain. Pan Macmillan, 2017.
- [8] M. Gendron and L. F. Barrett, “Emotion perception as conceptual synchrony,” Emotion Review, vol. 10, no. 2, pp. 101–110, 2018.
- [9] A. R. Damasio, Descartes’ Error: Emotion, Reason, and the Human Brain. G. P. Putnam’s Sons, 1994.
- [10] P. Ekman, “An argument for basic emotions,” Cognition and Emotion, vol. 6, no. 3-4, pp. 169–200, 1992.
- [11] K. A. Lindquist, T. D. Wager, H. Kober, E. Bliss-Moreau, and L. F. Barrett, “The brain basis of emotion: A meta-analytic review,” Behavioral and Brain Sciences, vol. 35, no. 3, pp. 121–143, 2012.
- [12] L. F. Barrett, “Are emotions natural kinds?” Perspectives on Psychological Science, vol. 1, no. 1, pp. 28–58, 2006.
- [13] J. A. Russell, “Is there universal recognition of emotion from facial expression? A review of the cross-cultural studies,” Psychological Bulletin, vol. 115, no. 1, p. 102, 1994.
- [14] M. Gendron, D. Roberson, J. M. van der Vyver, and L. F. Barrett, “Perceptions of emotion from facial expressions are not culturally universal: Evidence from a remote culture,” Emotion, vol. 14, no. 2, p. 251, 2014.
- [15] K. Friston, T. FitzGerald, F. Rigoli, P. Schwartenbeck, and G. Pezzulo, “Active inference: A process theory,” Neural Computation, vol. 29, no. 1, pp. 1–49, 2017.
- [16] A. K. Seth and K. J. Friston, “Active interoceptive inference and the emotional brain,” Philosophical Transactions of the Royal Society B: Biological Sciences, vol. 371, no. 1708, p. 20160007, 2016.
- [17] T. Horii, Y. Nagai, and M. Asada, “Modeling development of multimodal emotion perception guided by tactile dominance and perceptual improvement,” IEEE Transactions on Cognitive and Developmental Systems, vol. 10, no. 3, pp. 762–775, 2018.
- [18] C. Hieida, T. Horii, and T. Nagai, “Deep emotion: A computational model of emotion using deep neural networks,” 2018. [Online]. Available: https://arxiv.org/abs/1808.08447
- [19] T. Taniguchi et al., “Symbol emergence in robotics: A survey,” Advanced Robotics, vol. 30, no. 11-12, pp. 706–728, 2016.
- [20] Y. Hagiwara, H. Kobayashi, A. Taniguchi, and T. Taniguchi, “Symbol emergence as an interpersonal multimodal categorization,” Frontiers in Robotics and AI, vol. 6, p. 134, 2019.
- [21] T. Taniguchi, “Collective predictive coding hypothesis: Symbol emergence as decentralized Bayesian inference,” Frontiers in Robotics and AI, vol. 11, p. 1353870, 2024.
- [22] N. L. Hoang, T. Taniguchi, Y. Hagiwara, and A. Taniguchi, “Emergent communication of multimodal deep generative models based on Metropolis-Hastings naming game,” Frontiers in Robotics and AI, vol. 10, 2024.
- [23] A. K. Seth, “Interoceptive inference, emotion, and the embodied self,” Trends in Cognitive Sciences, vol. 17, no. 11, pp. 565–573, 2013.
- [24] T. Taniguchi, Y. Yoshida, Y. Matsui, N. Le Hoang, A. Taniguchi, and Y. Hagiwara, “Emergent communication through Metropolis-Hastings naming game with deep generative models,” Advanced Robotics, vol. 37, no. 19, pp. 1266–1282, 2023.
- [25] Y. Hagiwara, K. Furukawa, A. Taniguchi, and T. Taniguchi, “Multiagent multimodal categorization for symbol emergence: Emergent communication via interpersonal cross-modal inference,” Advanced Robotics, vol. 36, no. 5-6, pp. 239–260, 2022.
- [26] K. Sakurai, H. Uenoyama, A. Taniguchi, and T. Taniguchi, “Mh-mug: Collaborative music generation game between AI agents towards emergent musical creativity,” IEEE Access, 2026.
- [27] M. Wu and N. Goodman, “Multimodal generative models for scalable weakly-supervised learning,” Advances in Neural Information Processing Systems, vol. 31, 2018.
- [28] Y. Shi, B. Paige, P. Torr et al., “Variational mixture-of-experts autoencoders for multi-modal deep generative models,” Advances in Neural Information Processing Systems, vol. 32, 2019.
- [29] T. M. Sutter, I. Daunhawer, and J. E. Vogt, “Generalized multimodal ELBO,” arXiv preprint arXiv:2105.02470, 2021.
- [30] L. Hubert and P. Arabie, “Comparing partitions,” Journal of Classification, vol. 2, pp. 193–218, 1985.
- [31] J. Cohen, “A coefficient of agreement for nominal scales,” Educational and Psychological Measurement, vol. 20, no. 1, pp. 37–46, 1960.
- [32] L. van der Maaten and G. Hinton, “Visualizing data using t-SNE,” Journal of Machine Learning Research, vol. 9, no. 11, 2008.
- [33] N. Kriegeskorte, M. Mur, and P. A. Bandettini, “Representational similarity analysis: Connecting the branches of systems neuroscience,” Frontiers in Systems Neuroscience, vol. 2, p. 249, 2008.
- [34] D. L. Davies and D. W. Bouldin, “A cluster separation measure,” IEEE Transactions on Pattern Analysis and Machine Intelligence, no. 2, pp. 224–227, 1979.
- [35] S. R. Livingstone and F. A. Russo, “The Ryerson Audio-Visual Database of Emotional Speech and Song (RAVDESS): A dynamic, multimodal set of facial and vocal expressions in North American English,” PLoS ONE, vol. 13, no. 5, p. e0196391, 2018.
- [36] T. Baltrušaitis, A. Zadeh, Y. C. Lim, and L.-P. Morency, “OpenFace 2.0: Facial behavior analysis toolkit,” in 13th IEEE International Conference on Automatic Face & Gesture Recognition, 2018.
- [37] Y. Terasawa, H. Fukushima, and S. Umeda, “How does interoceptive awareness interact with the subjective experience of emotion? An fMRI study,” Human Brain Mapping, vol. 34, no. 3, pp. 598–612, 2013.
- [38] R. Brewer, R. Cook, and G. Bird, “Alexithymia: A general deficit of interoception,” Royal Society Open Science, vol. 3, no. 10, p. 150664, 2016.
- [39] J. Murphy, R. Brewer, C. Catmur, and G. Bird, “Interoception and psychopathology: A developmental neuroscience perspective,” Developmental Cognitive Neuroscience, vol. 23, pp. 45–56, 2017.
- [40] W. V. O. Quine, Word and Object. MIT Press, 1960.
- [41] M. Asada, “Towards artificial empathy,” International Journal of Social Robotics, vol. 7, no. 1, pp. 19–33, 2015.
- [42] M. Asada, “Modeling early vocal development through infant–caregiver interaction,” IEEE Transactions on Cognitive and Developmental Systems, vol. 8, no. 2, pp. 128–138, 2016.
- [43] M. G. Calvo and L. Nummenmaa, “Perceptual and affective mechanisms in facial expression recognition: An integrative review,” Cognition and Emotion, vol. 30, no. 6, pp. 1081–1106, 2016.

TABLE III of the paper (IEEE Transactions on Cognitive and Developmental Systems, p. 13; partial, remaining rows are cut off in the source): The parameters of each emotion used to generate core affect

| Emotion | µV | µA | σV | σA | θV | θA |
|---|---|---|---|---|---|---|
| Neutral | 0.00 | 0.00 | 0.090 | 0.090 | 1.5 | 1.5 |
| Calm | 0.80 | -0.50 | 0.135 | 0.180 | 2.1 | 1.8 |
| Hap… | (truncated) | | | | | |
